The 2026 Startup QA Floor: Why Codebase-First Agents are the New Standard

In 2026, startups are fundamentally reimagining QA through agentic, codebase-first approaches. Traditional testing, where humans write brittle scripts after features ship, has become a competitive disadvantage. This article details the three-agent architecture (Planner, Automator, Maintainer) that leading startups now deploy as their quality floor. By integrating deeply with source code rather than testing from the outside as a black box, these agents achieve 100% autonomous test coverage that self-heals and evolves with your codebase, eliminating the bottleneck between AI-accelerated development and safe deployment.

The startup landscape of 2026 has undergone a fundamental phase shift. Two years ago, the primary bottleneck for software delivery was the production of code. Today, in an era where AI coding agents ship features at a velocity that far exceeds human review capacity, the bottleneck has migrated. The crisis is no longer about writing the code, but about ensuring that the code being shipped does not silently dismantle the integrity of the entire system.

Startups that rely on legacy quality assurance models, whether manual testing or fragile, script-based automation, are hitting a structural wall. The traditional "And then" approach to testing (build a feature, and then write a test, and then fix the test when it breaks) has become an existential liability. It is too slow, too brittle, and too disconnected from the underlying reality of the codebase.

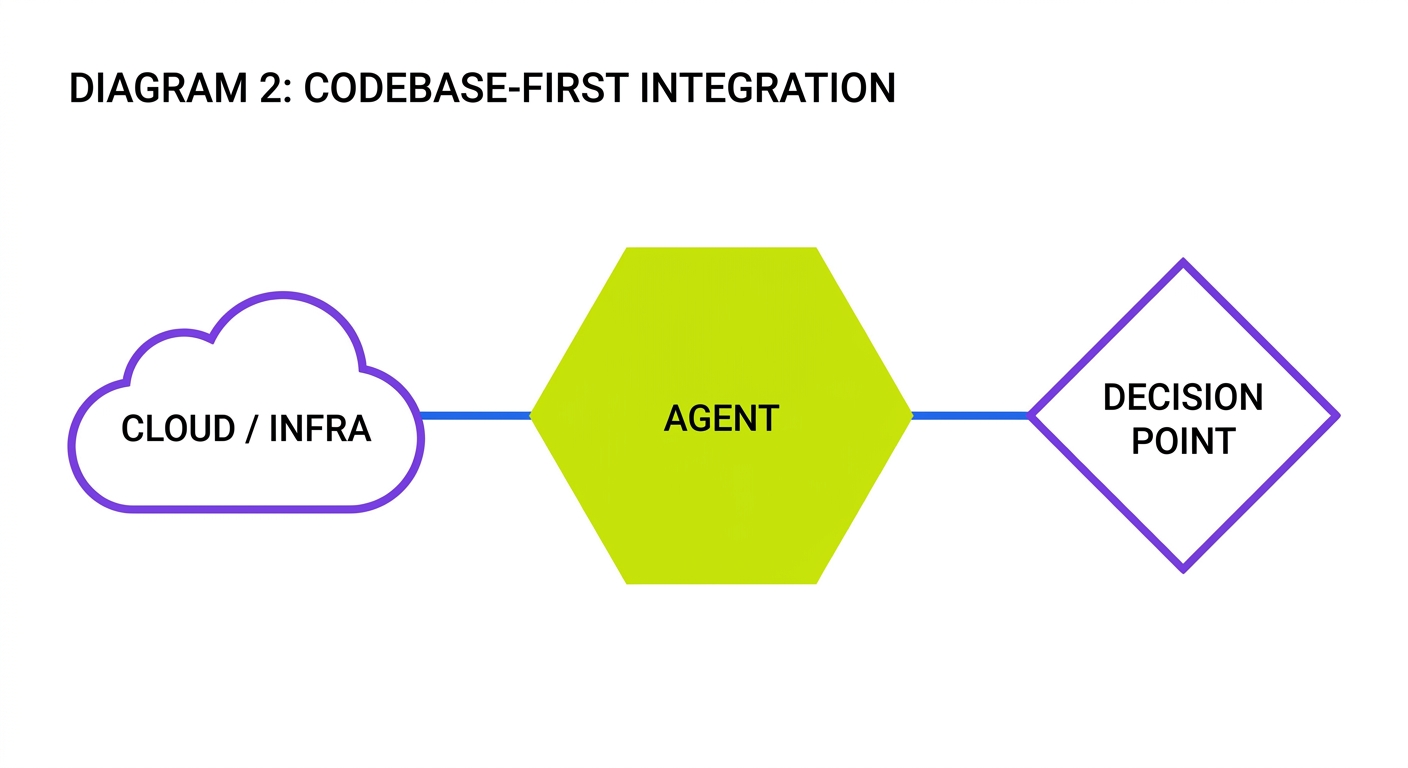

The new standard is the Codebase-First Agentic QA Floor. This is not a suite of tests. It is a persistent layer of intelligence that lives inside the repository, understands the intent of the engineers, and maintains a self-healing quality net that scales alongside the feature set.

The Three Pillars of Agentic Quality

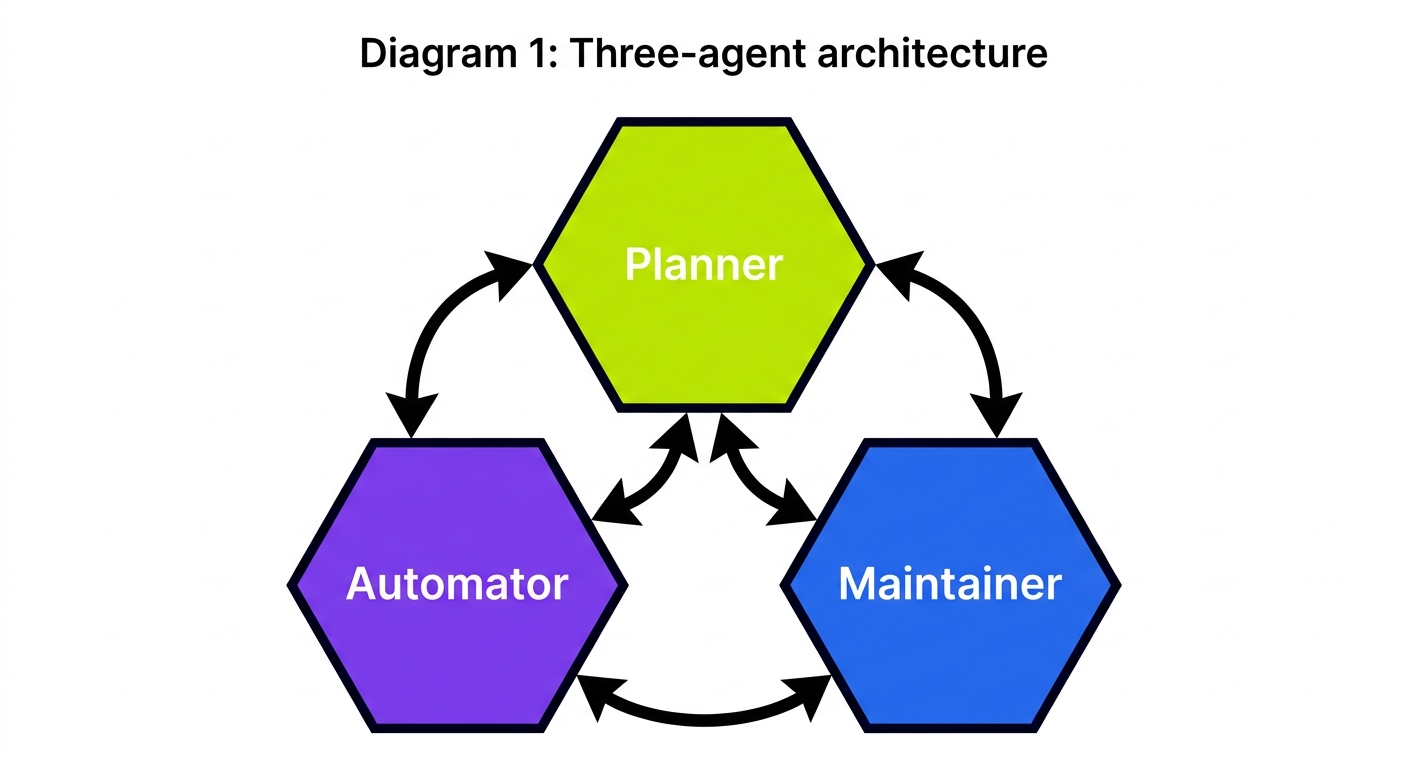

To achieve a resilient quality floor, Autonoma has pioneered a three-agent architecture. This approach moves away from the concept of a "test suite" and toward a "living quality system."

The Planner Agent is the brain of the operation. It does not wait for a human to define a test case. Instead, it continuously reads the codebase, analyzing every pull request to understand how the application's surface area has evolved. By comparing the new code against the existing architecture, the Planner identifies where the application's behavior has shifted and determines which new behaviors require validation.

The Planner's power comes from its comprehensive understanding of the entire codebase. When a new endpoint is added to a backend service, the Planner doesn't just see the API definition. It analyzes the database models the endpoint touches, the business logic it implements, and even the frontend components that will consume it. This holistic view enables the Planner to generate test scenarios that cover not just the happy path but also edge cases that even experienced QA engineers might miss.

```javascript
// Example Planner Agent output analyzing a PR
const plannerAnalysis = {
  newSurface: [
    {
      type: "API_ENDPOINT",
      path: "/api/projects/:id/collaborators",
      method: "POST",
      impactedModels: ["Project", "User", "Collaboration"],
      securityConcerns: ["Authentication", "Authorization"],
      testScenarios: [
        "Adding collaborator to own project",
        "Adding collaborator to project with no access",
        "Adding non-existent user as collaborator",
        "Adding collaborator when at maximum team size"
      ]
    }
  ],
  modifiedBehavior: [
    {
      component: "ProjectSettingsPage",
      change: "UI layout restructured, but same functionality",
      regressionRisk: "LOW",
      recommendedAction: "Update selectors in existing tests"
    }
  ]
};
```

But a plan is only as good as its execution. This is where the Automator Agent comes in. Unlike traditional QA engineers who spend hours fighting with CSS selectors and brittle Playwright scripts, the Automator executes the state changes required to validate a plan. It sets up the environment, seeds the database with the exact state required for the scenario, and performs the interactions at the protocol level.

The Automator's sophistication is in how it interacts with the system. Traditional test frameworks operate at the UI or API level, making them vulnerable to surface-level changes. The Automator works at the protocol level, understanding the underlying data flows between components. When testing a feature that requires a specific database state, it doesn't rely on complex seeding scripts:

```javascript
// Example of Automator Agent generating database setup code
const setupDatabase = async (testContext) => {
  // Analyze schema to understand relationships
  const schema = await codebaseReader.getDatabaseSchema();

  // Generate minimal viable database state for test
  const requiredState = {
    projects: [{
      id: "proj_test123",
      name: "Test Project",
      ownerId: "user_alice",
      maxCollaborators: 5,
      currentCollaborators: 4 // Setting up edge case
    }],
    users: [
      { id: "user_alice", email: "alice@example.com" },
      { id: "user_bob", email: "bob@example.com" }
    ],
    // No collaborations yet - test will create one
  };

  // Direct database manipulation rather than API calls
  await testContext.db.transaction(async (tx) => {
    for (const [table, records] of Object.entries(requiredState)) {
      for (const record of records) {
        await tx[table].upsert(record);
      }
    }
  });
};
```

Therefore, even when the UI changes or the underlying API shifts, the Maintainer Agent ensures the floor remains solid. If a test fails because of a non-breaking change (like a renamed button or a modified CSS class), the Maintainer performs a self-healing operation. It reads the logs, understands the failure context, and updates the quality logic autonomously. The human engineer is only paged when the Maintainer identifies a genuine regression in business logic.

The Maintainer's intelligence lies in distinguishing between cosmetic changes and actual regressions. When a test fails, the Maintainer analyzes both the error and the recent code changes to determine the root cause:

```javascript
// Example of Maintainer Agent analyzing a test failure
async function analyzeTestFailure(testId, errorLog, recentChanges) {
  // Extract error context
  const errorType = extractErrorType(errorLog);

  if (errorType === "SELECTOR_NOT_FOUND") {
    // Analyze the DOM changes in recent PRs
    const domChanges = await findRelevantDOMChanges(recentChanges);

    if (domChanges.length > 0) {
      // Selector needs updating, not a real regression
      const updatedSelector = generateNewSelector(errorLog, domChanges);

      await updateTestCode(testId, {
        type: "SELECTOR_UPDATE",
        oldSelector: extractFailedSelector(errorLog),
        newSelector: updatedSelector,
        confidence: 0.94,
        reasoning: "Component structure changed but functionality preserved"
      });

      return {
        actionTaken: "SELF_HEALED",
        notifyEngineers: false
      };
    }
  }

  // If we get here, it's a genuine regression
  return {
    actionTaken: "ESCALATED",
    notifyEngineers: true,
    impactAssessment: await assessBusinessImpact(errorLog, recentChanges)
  };
}
```

Why Codebase-First Matters

Legacy testing tools are "black box." They look at the application from the outside, clicking buttons and hoping for the best. This is why they are so brittle. A minor UI change can break a hundred tests, leading to "red CI fatigue" where teams begin ignoring failures.

A codebase-first approach is fundamentally different. The agents have full access to the source code, the database schema, and the infrastructure definitions. They understand why a change happened, not just that it happened.

When a frontend engineer renames a component or restructures a page, traditional tests break because they're looking for elements that no longer exist. Codebase-first agents see these changes in the pull request, understand the structural modifications, and automatically update their testing strategies before the tests even run. This proactive adaptation eliminates the brittle nature of traditional testing approaches.
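One way to picture this proactive adaptation is a pass that reads renames out of a pull request and rewrites any test step that still points at the old name. The sketch below is only an illustration under stated assumptions, not a description of how any particular agent is built: the `renames` map, the test-step shape, the `data-testid` style identifiers, and the `applyRenamesToTests` helper are all hypothetical.

```javascript
// Hypothetical sketch: rewrite test selectors from renames found in a PR.
// Every data shape and name below is an assumption for illustration.

// Renames extracted from a pull request: old test id -> new test id.
const renames = new Map([
  ["save-button", "save-project-button"],
  ["settings-panel", "project-settings-panel"],
]);

// Existing test steps that reference elements by test id.
const testSteps = [
  { test: "edits project name", action: "click", testId: "save-button" },
  { test: "edits project name", action: "expectVisible", testId: "toast" },
  { test: "opens settings", action: "expectVisible", testId: "settings-panel" },
];

// Returns updated steps plus a log of what changed, without mutating input.
function applyRenamesToTests(steps, renameMap) {
  const changes = [];
  const updated = steps.map((step) => {
    const replacement = renameMap.get(step.testId);
    if (replacement === undefined) return step; // untouched by this PR
    changes.push({ test: step.test, from: step.testId, to: replacement });
    return { ...step, testId: replacement };
  });
  return { updated, changes };
}

const { updated, changes } = applyRenamesToTests(testSteps, renames);
console.log(changes);
```

With the sample data, two steps are rewritten and the `toast` step is left alone. Returning a change log alongside the updated steps keeps the rewrite auditable, which matters if engineers are expected to trust edits they did not make.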

The codebase-first architecture provides several crucial advantages:

First, it enables deterministic testing environments. By understanding the data model and service interactions from the source code, agents can create precise, minimal test states for each scenario. Traditional approaches often rely on massive, generic seed datasets that both slow down tests and hide edge cases.

Second, it dramatically improves test coverage identification. Legacy tools can tell you which lines of code were executed during tests, but they can't tell you which behaviors remain unverified. Codebase-first agents analyze the logic paths to identify gaps in coverage that matter functionally, not just syntactically.

Third, it creates a self-documenting quality system. When test requirements are derived directly from code, they automatically stay in sync with the implementation. New team members no longer need to decipher mysterious test suites. The agents can explain why each test exists and what business logic it verifies.
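The second advantage above, separating code that was merely executed from behavior that was actually verified, can be sketched in a few lines. This is a toy model under loud assumptions: the behavior list is written by hand here, whereas the article's agents would derive it from the code, and the identifiers and the `findCoverageGaps` helper are hypothetical.

```javascript
// Hypothetical sketch: find behaviors that no test asserts on.
// The behavior list is hand-written; names and shapes are assumptions.

const behaviors = [
  { id: "add-collaborator/owner-succeeds", executed: true },
  { id: "add-collaborator/no-access-rejected", executed: true },
  { id: "add-collaborator/unknown-user-rejected", executed: false },
  { id: "add-collaborator/team-full-rejected", executed: true },
];

// Behavior ids that at least one test makes an assertion about.
const assertedBehaviors = new Set([
  "add-collaborator/owner-succeeds",
  "add-collaborator/team-full-rejected",
]);

function findCoverageGaps(allBehaviors, asserted) {
  return allBehaviors
    .filter((b) => !asserted.has(b.id))
    .map((b) => ({
      id: b.id,
      // Executed-but-unasserted paths are the ones line coverage hides.
      kind: b.executed ? "executed-but-unverified" : "never-executed",
    }));
}

const gaps = findCoverageGaps(behaviors, assertedBehaviors);
console.log(gaps);
```

In this sample, the "no access" path shows up as executed but unverified: a line-coverage report would count it as covered even though nothing checks its outcome.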

For a startup in 2026, this level of integration is the difference between shipping with confidence and shipping with fear. When the agents understand the code, they can automatically handle database state setup. There is no more fighting with complex SQL seed scripts or waiting for a staging environment to refresh. The Automator Agent prepares the exact state needed for every test run in milliseconds.

The Protocol-Level Testing Revolution

Traditional testing operates at two primary levels: UI testing (clicking on elements) and API testing (making HTTP calls). Both approaches are fundamentally limited because they interact with the system at its boundaries rather than understanding its internal workings.

Protocol-level testing represents the next evolution. Rather than interacting with components through their public interfaces, protocol testing works directly with the underlying communication mechanisms between system parts.

Consider a typical e-commerce checkout flow. A UI test might simulate a user adding items to a cart, entering shipping information, and completing payment. An API test would make HTTP requests to the relevant endpoints. But both approaches are vulnerable to interface changes that don't affect the underlying business logic.

Protocol-level testing, enabled by codebase-first agents, works differently:

```javascript
// Example of protocol-level testing for checkout
const testCheckoutProtocol = async () => {
  // Agent analyzes codebase to identify communication patterns
  const protocolMap = await codebaseAnalyzer.mapServiceInteractions({
    startingPoint: "CheckoutService",
    depth: 3
  });

  // Direct manipulation of the protocol messages
  await testContext.interceptProtocol({
    source: "CartService",
    destination: "CheckoutService",
    message: { type: "CART_FINALIZED", items: [{ id: "prod123", quantity: 2 }] }
  });

  // Verify that the correct sequence of messages is triggered
  const paymentRequest = await testContext.captureNextProtocolMessage({
    source: "CheckoutService",
    destination: "PaymentService"
  });

  // 2 * 99.99, assuming prod123 is priced at 99.99 in the test catalog
  assert.equal(paymentRequest.totalAmount, 199.98);

  // Simulate successful payment response at protocol level
  await testContext.sendProtocolMessage({
    source: "PaymentService",
    destination: "CheckoutService",
    message: {
      type: "PAYMENT_PROCESSED",
      transactionId: "tx_test123",
      status: "SUCCESS"
    }
  });

  // Verify order creation protocol message
  const orderCreation = await testContext.captureNextProtocolMessage({
    source: "CheckoutService",
    destination: "OrderService"
  });

  // One line item (prod123), regardless of its quantity
  assert.equal(orderCreation.items.length, 1);
};
```

This protocol-level approach makes tests vastly more resilient. If the API changes from REST to GraphQL, or the UI is completely redesigned, the protocol tests remain valid because they're testing the essential communication between services rather than the specific implementation details.
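The checkout example leans on helpers the article never defines. To make the idea concrete, here is a minimal in-memory stand-in that runs on its own. Everything in it is an assumption for illustration: the `MessageBus` class, its `register`, `send`, and `captureNext` methods, the `PAYMENT_REQUESTED` message type, and the price table are hypothetical and do not correspond to any real library.

```javascript
// Hypothetical sketch of an in-memory message bus for protocol-level tests.
// MessageBus, its methods, and the price table are assumptions, not a real API.

class MessageBus {
  constructor() {
    this.handlers = new Map(); // destination -> handler function
    this.log = [];             // every message sent, in order
  }

  register(destination, handler) {
    this.handlers.set(destination, handler);
  }

  send(source, destination, message) {
    this.log.push({ source, destination, message });
    const handler = this.handlers.get(destination);
    if (handler) handler(message, this);
  }

  // Removes and returns the oldest logged message on the given route.
  captureNext(source, destination) {
    const index = this.log.findIndex(
      (m) => m.source === source && m.destination === destination
    );
    if (index === -1) return undefined;
    return this.log.splice(index, 1)[0].message;
  }
}

const pricesInCents = { prod123: 9999 }; // integer cents avoid float drift

const bus = new MessageBus();
bus.register("CheckoutService", (message, b) => {
  if (message.type !== "CART_FINALIZED") return;
  const totalCents = message.items.reduce(
    (sum, item) => sum + pricesInCents[item.id] * item.quantity,
    0
  );
  b.send("CheckoutService", "PaymentService", {
    type: "PAYMENT_REQUESTED",
    totalCents,
  });
});

// Inject a message as if CartService had sent it, then inspect the reaction.
bus.send("CartService", "CheckoutService", {
  type: "CART_FINALIZED",
  items: [{ id: "prod123", quantity: 2 }],
});

const paymentRequest = bus.captureNext("CheckoutService", "PaymentService");
console.log(paymentRequest);
```

The test never touches an HTTP route or a DOM element: it injects one message and asserts on the message that comes out, which is why a transport or UI rewrite would leave it untouched. Here the captured request carries a total of 19998 cents for two units of `prod123`.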

Moving Beyond the "And Then" Trap

Most engineering teams still fall into the "And Then" narrative. They build a feature, and then they realize they need to test it, and then they find out the test environment is down, and then they decide to ship anyway because deadlines loom and the market waits for no one.

This sequential approach to quality inevitably positions testing as an afterthought. When quality is an afterthought, it becomes a bottleneck. Engineers view it as a tax they must pay rather than a force multiplier for development velocity.

The 2026 standard is built on the "But, Therefore" structure. We want to ship at the speed of thought, but our human review capacity is fixed. Therefore, we must deploy an agentic layer that performs the review for us. We want a rich, stateful application, but managing test data is a nightmare. Therefore, we use Automator agents that handle DB state setup automatically as part of the execution flow.

We want to integrate third-party services like payment processors and authentication providers, but mocking these services in tests has been a constant source of flakiness. Therefore, our agents analyze the API contracts of these services directly from their SDK code and generate perfect protocol-level mocks that mirror real behavior.

We want our test suite to remain reliable as our application evolves, but traditional test suites break with almost every significant refactoring. Therefore, our Maintainer agent continuously monitors for structural changes in the codebase and proactively updates tests before they even have a chance to fail.
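The contract-derived mocks described above for third-party services can be pictured as a mock that refuses any request the contract would not allow. The sketch below is a simplified assumption, not a real SDK integration: the contract format, the `charge` operation, and the `createContractMock` helper are hypothetical, and the contract is written by hand rather than derived from SDK code.

```javascript
// Hypothetical sketch: a mock that enforces a hand-written contract.
// The contract format and all names are assumptions for illustration.

const paymentContract = {
  charge: {
    required: { amountCents: "number", currency: "string" },
    respond: (req) => ({ status: "SUCCESS", chargedCents: req.amountCents }),
  },
};

function createContractMock(contract) {
  const calls = [];
  const mock = { calls };
  for (const [operation, spec] of Object.entries(contract)) {
    mock[operation] = (request) => {
      // Reject requests that violate the contract instead of accepting anything.
      for (const [field, type] of Object.entries(spec.required)) {
        if (typeof request[field] !== type) {
          throw new TypeError(`${operation}: "${field}" must be a ${type}`);
        }
      }
      calls.push({ operation, request });
      return spec.respond(request);
    };
  }
  return mock;
}

const paymentMock = createContractMock(paymentContract);
const receipt = paymentMock.charge({ amountCents: 19998, currency: "USD" });
console.log(receipt);
```

A permissive mock that accepts any input is a common source of tests that pass while production fails. Validating each request against the contract moves that failure into the test run.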

This shift in narrative transforms QA from a "gate" that slows down the team into a "floor" that supports it. When the floor is solid, you can run faster. When the floor is codebase-first, it never slips.

Database State: The Hidden Testing Challenge

One of the most significant barriers to effective testing has always been database state management. Traditional approaches typically follow one of three flawed patterns:

- Massive seed scripts that try to cover all possible test scenarios

- Complex setup code at the beginning of each test that's difficult to maintain

- Shared test databases that lead to flaky tests due to state leakage

The codebase-first agents solve this problem through intelligent state analysis. By understanding the database schema and the specific requirements of each test from the code itself, the Automator agent can generate minimal viable database states on demand.

```javascript
// Example of intelligent database state generation
const generateDatabaseState = async (testScenario) => {
  // Analyze the code paths involved in this test scenario
  const involvedPaths = await codePathAnalyzer.traceFunctionality(testScenario.feature);

  // Identify database models accessed in these paths
  const requiredModels = await databaseAccessAnalyzer.identifyRequiredModels(involvedPaths);

  // Analyze model relationships to determine minimal viable state
  const relationshipGraph = await schemaAnalyzer.buildRelationshipGraph(requiredModels);

  // Generate minimal set of records needed for the test
  return stateGenerator.createMinimalState({
    models: requiredModels,
    relationships: relationshipGraph,
    constraints: testScenario.constraints,
    edgeCases: testScenario.edgeCases
  });
};
```

This approach creates perfectly tailored database states for each test, ensuring that:

- Tests are fast because they only create the exact records needed

- Tests are isolated because each runs with its own clean state

- Tests are comprehensive because the agent can easily generate edge cases

- Tests are maintainable because database changes are automatically incorporated
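The relationship-graph step is the part of this flow that is easiest to make concrete. Given the foreign keys between tables, a depth-first walk yields both the minimal set of tables a scenario needs and a safe insert order. The sketch below assumes a hand-written schema that loosely mirrors the collaborator example; the `foreignKeys` map and the `planInsertOrder` helper are hypothetical.

```javascript
// Hypothetical sketch: pick the minimal set of tables a test needs and
// order them so parents are inserted before children.
// The schema below is an assumption that mirrors the collaborator example.

// table -> tables it references through foreign keys
const foreignKeys = {
  users: [],
  projects: ["users"],
  collaborations: ["projects", "users"],
  invoices: ["users"], // unrelated to the scenario, should be skipped
};

function planInsertOrder(targetTables, fkGraph) {
  const order = [];
  const done = new Set();
  const inProgress = new Set();

  function visit(table) {
    if (done.has(table)) return;
    if (inProgress.has(table)) {
      throw new Error(`Circular foreign key involving "${table}"`);
    }
    inProgress.add(table);
    for (const parent of fkGraph[table] ?? []) visit(parent);
    inProgress.delete(table);
    done.add(table);
    order.push(table); // parents were pushed first (post-order)
  }

  targetTables.forEach(visit);
  return order;
}

const insertOrder = planInsertOrder(["collaborations"], foreignKeys);
console.log(insertOrder);
```

For a scenario that only needs `collaborations`, the plan is `users`, then `projects`, then `collaborations`, and `invoices` is never created. Circular references are reported rather than silently looped over, since they usually need a deferred constraint or a two-step insert.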

For startups, the implications are profound. Development and testing can proceed at full speed without the traditional overhead of database management. CI pipelines run in parallel without state conflicts. And test environments become disposable resources that can be created and destroyed in seconds rather than carefully maintained shared infrastructure.

The Economic Reality of Agentic QA

The traditional cost of quality was linear: more features required more tests, which required more QA engineers. At a certain point, the cost of maintaining the tests started to consume more than 50% of the engineering budget.

In the agentic model, the cost curve is decoupled. The agents scale with the codebase. Whether you have ten features or a thousand, the Planner, Automator, and Maintainer agents provide the same level of coverage. The marginal cost of testing a new feature drops to near zero.

This economic shift has profound implications for startup resource allocation. Companies no longer need to choose between velocity and quality. The three-agent architecture allows a small engineering team to maintain both high velocity and high quality, even as the codebase grows exponentially.

Early-stage startups have embraced this model most aggressively. With limited engineering headcount, they can now build and ship with the quality safeguards previously available only to large enterprises with dedicated QA teams. But unlike those enterprise teams, the agentic approach doesn't slow them down.

Growth-stage startups have seen the most dramatic transformation. Previously, their rapid feature development inevitably led to quality problems as test suites failed to keep pace. The codebase-first approach has allowed them to maintain startup speed even as they scale past critical complexity thresholds.

For startups, this is the ultimate competitive advantage. You can maintain a lean team of 2026-era "vibe coders" who focus on product innovation, while the Autonoma floor ensures the integrity of the SaaS remains absolute.

Implementing the Agentic Quality Floor

Transitioning from traditional testing to an agentic quality floor requires a mindset shift first and foremost. The key steps involve:

First, granting your agents full codebase access. This means structuring your repository to be machine-readable, with consistent patterns and clear architectural boundaries. The Planner agent needs to understand not just what your code does, but why it's structured the way it is.

Second, embracing protocol-level testing. This requires defining the communication boundaries between your services or modules explicitly, rather than relying on implementation details. The most successful implementations maintain a protocol registry that documents how different parts of the system interact.

Third, accepting agent autonomy for test maintenance. Engineers must be willing to let the Maintainer agent update tests without human approval for non-critical changes. This autonomy is what makes the system scalable, but it requires trust in the agent's judgment.

Fourth, shifting quality left in the development process. Rather than thinking about testing after implementation, teams need to define behavior expectations alongside feature development. The Planner agent can then use these expectations as guidance for test generation.
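The third step, letting the Maintainer act without approval on non-critical changes, usually comes down to an explicit policy. A minimal sketch of such a gate follows. The change types, the 0.9 threshold, and the `decide` helper are assumptions chosen for illustration; a real team would tune them to its own risk tolerance.

```javascript
// Hypothetical sketch: decide whether a proposed test change may be applied
// without human review. Change types and the threshold are assumptions.

const policy = {
  autoApplyTypes: new Set(["SELECTOR_UPDATE", "WAIT_ADJUSTMENT"]),
  minConfidence: 0.9,
};

function decide(change, rules) {
  if (!rules.autoApplyTypes.has(change.type)) {
    return { action: "REQUIRE_REVIEW", reason: "change type is not pre-approved" };
  }
  if (change.confidence < rules.minConfidence) {
    return { action: "REQUIRE_REVIEW", reason: "confidence below threshold" };
  }
  return { action: "AUTO_APPLY", reason: "pre-approved type with high confidence" };
}

const decisions = [
  { type: "SELECTOR_UPDATE", confidence: 0.94 },
  { type: "SELECTOR_UPDATE", confidence: 0.61 },
  { type: "ASSERTION_CHANGE", confidence: 0.99 },
].map((change) => decide(change, policy).action);

console.log(decisions);
```

Only the first proposal is applied automatically. The third is sent for review despite its high confidence, because changing what a test asserts is exactly the kind of edit that could hide a real regression.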

The implementation timeline typically spans three months for a full transition:

Month one focuses on codebase analysis and planning. During this phase, the agents build their understanding of your system without making changes.

Month two involves parallel testing, where the agentic system runs alongside your existing tests to build confidence.

Month three transitions to the full agentic floor, with the agents taking primary responsibility for quality assurance.
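During the parallel-testing month, confidence has to come from somewhere measurable. One simple option is to compare verdicts from both systems on every run. The sketch below assumes each suite reports a pass or fail per named flow; the result shapes, the sample data, and the `compareRuns` helper are hypothetical.

```javascript
// Hypothetical sketch: compare verdicts from the existing suite and the
// agentic suite during a parallel-run phase. All data is made up.

const legacyResults = { checkout: "pass", login: "pass", invite: "fail" };
const agentResults = { checkout: "pass", login: "fail", invite: "fail", export: "pass" };

function compareRuns(legacy, agent) {
  const shared = Object.keys(legacy).filter((name) => name in agent);
  const disagreements = shared.filter((name) => legacy[name] !== agent[name]);
  return {
    shared: shared.length,
    // null when there is nothing to compare, to avoid dividing by zero
    agreementRate:
      shared.length === 0
        ? null
        : (shared.length - disagreements.length) / shared.length,
    disagreements,
    onlyInAgent: Object.keys(agent).filter((name) => !(name in legacy)),
  };
}

const report = compareRuns(legacyResults, agentResults);
console.log(report);
```

Each disagreement is worth a manual look: it is either a regression the old suite missed or a false alarm from the new one, and the ratio between the two is a reasonable signal for when to complete the transition.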

Conclusion: The New Quality Paradigm

The 2026 startup is not just "AI-assisted." It is "AI-guaranteed." By adopting a codebase-first agentic strategy, you ensure that your velocity never comes at the expense of your integrity.

The three-agent architecture fundamentally transforms how startups approach quality:

The Planner turns the codebase itself into a specification, eliminating the gap between what's built and what's tested.

The Automator executes tests at the protocol level, creating resilient verification that survives implementation changes.

The Maintainer constantly evolves the quality system, ensuring that test maintenance never becomes a burden on human engineers.

Together, these agents create a quality floor that scales with your codebase and adapts to your evolving architecture. For startups racing to product-market fit in 2026, this approach offers the perfect balance of speed and reliability.

The floor is set. Now, it is time to build.