SaaS Integrity at Global Scale: The Architecture of Infinite Quality

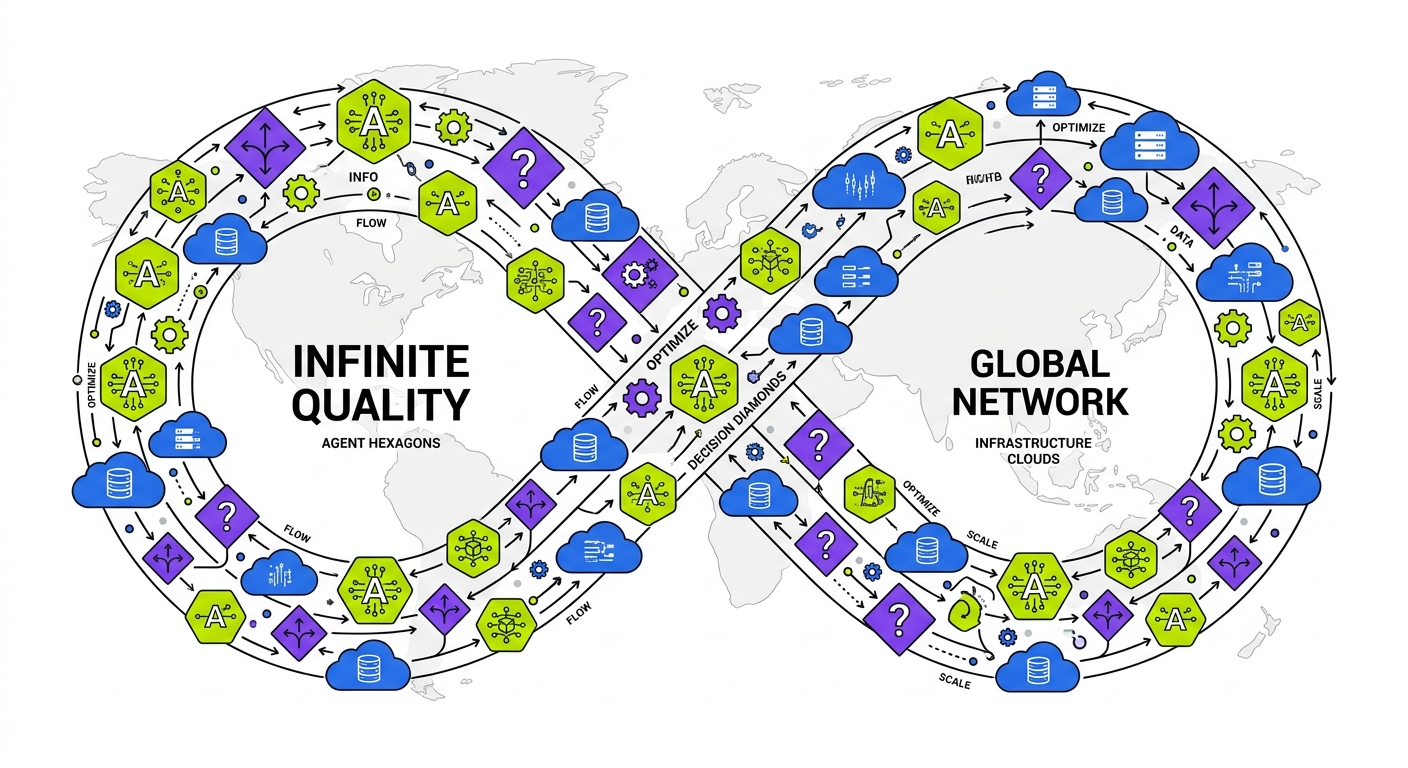

SaaS integrity at global scale requires rethinking traditional quality assurance paradigms. This article explores how distributed agentic testing floors create "infinite quality" by decoupling testing from human effort. We examine architectural patterns in which autonomous Planner, Automator, and Maintainer agents operate across regions to keep deployments reliable without expanding QA teams. The codebase-first approach enables self-healing global systems that catch failures before they reach users, transforming the economics of quality engineering for globally distributed platforms.

The Global SaaS Integrity Crisis

The meteoric rise of global SaaS platforms has created an unprecedented quality crisis. Traditional quality assurance frameworks break under the weight of cross-regional complexity, regulatory fragmentation, and the impossibility of manually testing every edge case. Organizations find themselves trapped in a vicious cycle: more users demand more features, which requires more tests, which necessitates more QA engineers. This linear scaling approach has reached its breaking point.

Consider the sobering economics: enterprises now spend upwards of 40% of their engineering budget on maintaining existing features, leaving at most 60% for innovation. At global scale, this "maintenance tax" compounds ruthlessly, creating a technical debt ceiling that can suffocate even the most promising platforms.

The future of global quality is not hiring more humans to run more tests. It's building autonomous systems that maintain the integrity floor without human intervention.

But how did we arrive at this crisis point? The traditional approach to SaaS quality follows an "And Then" narrative. You build a feature, and then you manually test it, and then you deploy to staging, and then you find unexpected issues, and then you delay the release, and then you start the cycle again. This linear process worked adequately when SaaS platforms operated in a single region with predictable usage patterns. It collapses spectacularly when faced with the complexity of global deployment.

Therefore, a fundamentally new architecture is required. Global SaaS Integrity isn't a testing strategy but an architectural commitment to infinite quality through the deployment of distributed agentic testing floors. This paradigm shift transforms quality from a bottleneck into an accelerant, enabling continuous global deployment with uncompromising integrity.

The Architecture of Infinite Quality

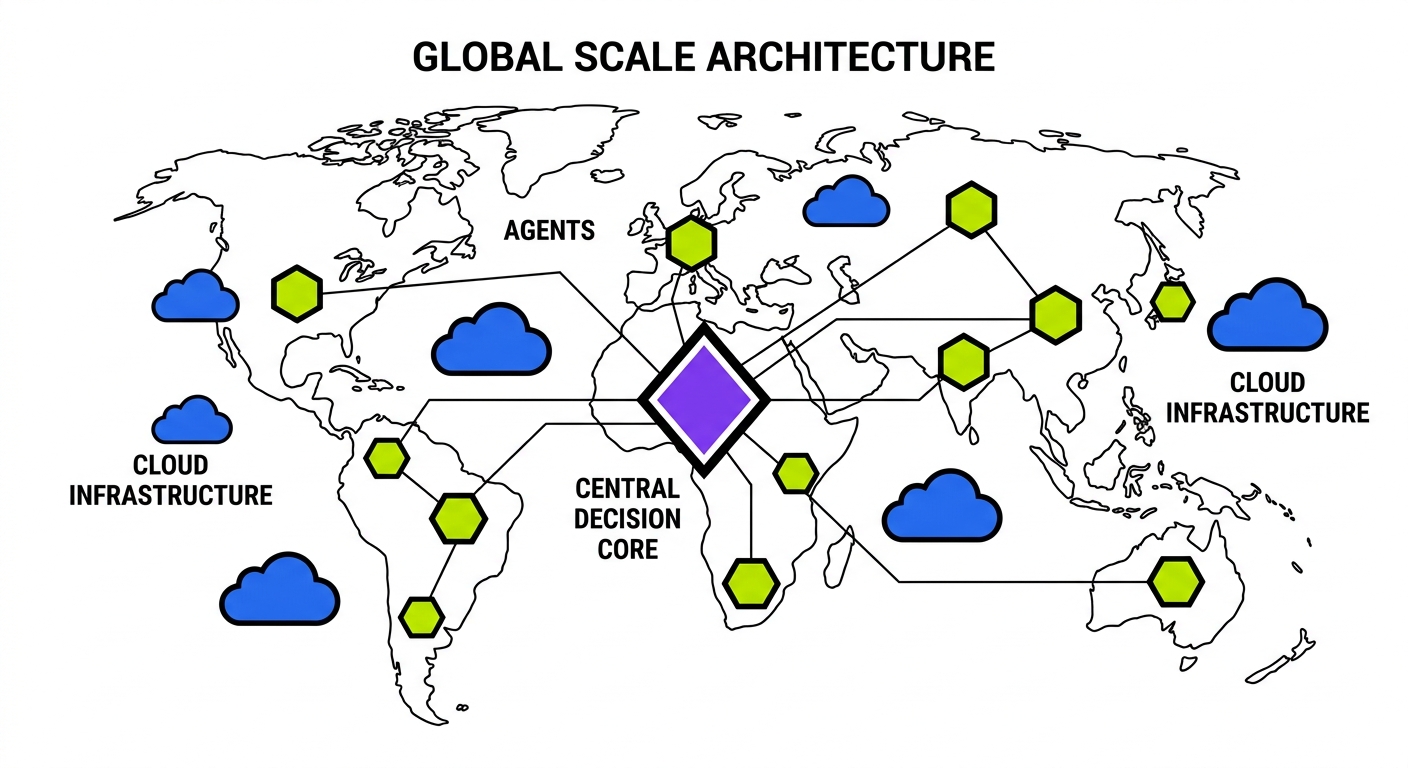

The global integrity architecture is built on three foundational principles: autonomous agency, distributed validation, and protocol-level awareness. These principles manifest in a distributed agentic floor consisting of three primary agent types working in concert.

The Agentic Trinity: Planner, Automator, Maintainer

At the core of global integrity is what we call the Agentic Trinity. These three specialized agents form a closed-loop system that continuously maintains platform integrity without human intervention.

The Planner Agent resides in the central repository and performs deep codebase analysis. When a developer creates a pull request, the Planner ingests the code changes and constructs a comprehensive model of potential impacts. It identifies affected services, analyzes downstream dependencies, and maps changes to specific regions. The Planner then generates a Global Validation Plan (GVP) tailored to each affected region.

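```javascript
// Example of Planner Agent output: Global Validation Plan
const globalValidationPlan = {
  changeId: "pr-5672",
  impactAnalysis: {
    servicesAffected: ["auth-service", "user-profile", "billing-api"],
    regionsAffected: ["us-east", "eu-west", "ap-southeast"],
    riskLevel: "medium",
    dataMigrationRequired: false,
  },
  regionalTasks: {
    "us-east": [
      { type: "e2e", scenario: "user-login-flow", priority: "critical" },
      { type: "api-validation", endpoints: ["/v2/auth", "/v1/profile"], priority: "high" },
    ],
    "eu-west": [
      { type: "compliance-check", regulation: "GDPR", priority: "critical" },
      { type: "e2e", scenario: "gdpr-deletion-flow", priority: "critical" },
      { type: "api-validation", endpoints: ["/v2/auth", "/v1/profile"], priority: "high" },
    ],
    "ap-southeast": [
      // threshold is a latency budget (milliseconds assumed)
      { type: "performance-check", threshold: 200, endpoint: "/v2/auth", priority: "medium" },
      { type: "api-validation", endpoints: ["/v2/auth", "/v1/profile"], priority: "high" },
    ],
  },
};
```

```javascript
// Simplified sketch of the Planner Agent analysis flow. Assumed (hypothetical)
// inputs: ownerOf(file) -> owning service, dependents: Map of service -> services
// that call it, deployments: Map of service -> regions. getChangedFiles,
// generateTasks, generateStateSetup and generateComplianceChecks are
// integration points that are not shown.
class PlannerAgent {
  constructor({ ownerOf, dependents, deployments }) {
    this.ownerOf = ownerOf;
    this.dependents = dependents;
    this.deployments = deployments;
  }

  async analyzeCodeChanges(pullRequest) {
    const changedFiles = await this.getChangedFiles(pullRequest);
    const affectedServices = this.buildServiceDependencyGraph(changedFiles);
    const regionMap = this.mapServicesToRegions(affectedServices);
    return this.generateGlobalValidationPlan(regionMap);
  }

  buildServiceDependencyGraph(changedFiles) {
    // Breadth-first walk from the services that own the changed files,
    // collecting direct and transitive dependents
    const affected = new Set(changedFiles.map((file) => this.ownerOf(file)));
    const queue = [...affected];
    while (queue.length > 0) {
      const service = queue.shift();
      for (const dependent of this.dependents.get(service) ?? []) {
        if (!affected.has(dependent)) {
          affected.add(dependent);
          queue.push(dependent);
        }
      }
    }
    return affected;
  }

  mapServicesToRegions(affectedServices) {
    // Determine which regions run each affected service: region -> services.
    // Regional configuration differences are accounted for per region when
    // the plan's tasks are generated.
    const regionMap = {};
    for (const service of affectedServices) {
      for (const region of this.deployments.get(service) ?? []) {
        (regionMap[region] ??= []).push(service);
      }
    }
    return regionMap;
  }

  generateGlobalValidationPlan(regionMap) {
    // One entry per region; validationTasks corresponds to the regionalTasks
    // lists in the example output above
    const plan = { regionals: {} };
    for (const [region, services] of Object.entries(regionMap)) {
      plan.regionals[region] = {
        validationTasks: this.generateTasks(services, region),
        stateSetup: this.generateStateSetup(services, region),
        complianceChecks: this.generateComplianceChecks(region),
      };
    }
    return plan;
  }
}
```

The Automator Agent lives in each regional deployment. When it receives a task from the Planner, it executes validation scenarios against the local infrastructure. Running validation inside each region addresses the thorny problems of cross-region latency and data sovereignty. The Automator has deep protocol-level understanding of the regional system, which allows it to set up database states, mock external dependencies, and simulate complex user scenarios automatically.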

Database state is the invisible hand that guides application behavior. In global systems, every region has unique state realities that must be respected during validation.

The true innovation in the Automator Agent is its ability to handle database state setup autonomously. This solves one of the most vexing problems in global testing: how to create realistic test conditions without manually configuring databases in each region.

```javascript
// Automator Agent handling database state setup.
// Helpers such as createDatabaseSnapshot, createEntities, runEndToEndScenario
// and getResourceMetrics are environment-specific and not shown.
class AutomatorAgent {
  constructor(region) {
    this.region = region;
  }

  async executeValidationPlan(regionalPlan) {
    const startTime = performance.now();
    const results = [];

    // First set up the database state for all scenarios
    await this.setupDatabaseState(regionalPlan.stateSetup);

    // Then execute each validation task
    for (const task of regionalPlan.validationTasks) {
      results.push(await this.executeTask(task));
    }

    return {
      region: this.region,
      results,
      meta: {
        executionTime: performance.now() - startTime,
        resourceUsage: this.getResourceMetrics(),
      },
    };
  }

  async setupDatabaseState(stateSetup) {
    // Create a database snapshot for rollback
    const snapshot = await this.createDatabaseSnapshot();
    try {
      for (const setup of stateSetup) {
        switch (setup.type) {
          case 'entity-creation':
            await this.createEntities(setup.entities);
            break;
          case 'state-transition':
            await this.transitionEntityState(setup.entityId, setup.fromState, setup.toState);
            break;
          case 'relationship-setup':
            await this.setupRelationships(setup.relationships);
            break;
          case 'regional-config':
            await this.applyRegionalConfiguration(setup.config);
            break;
          default:
            throw new Error(`Unknown state setup type: ${setup.type}`);
        }
      }
    } catch (error) {
      // Roll back to a clean state if setup fails
      await this.restoreDatabaseSnapshot(snapshot);
      throw new Error(`Database state setup failed: ${error.message}`);
    }
  }

  async executeTask(task) {
    // Different execution strategies based on task type
    switch (task.type) {
      case 'e2e':
        return this.runEndToEndScenario(task.scenario);
      case 'api-validation':
        return this.validateApiEndpoints(task.endpoints);
      case 'performance-check':
        return this.runPerformanceCheck(task.endpoint, task.threshold);
      case 'compliance-check':
        return this.validateCompliance(task.regulation);
      default:
        throw new Error(`Unknown task type: ${task.type}`);
    }
  }
}
```

The Maintainer Agent is the crisis responder. When validation fails, the Maintainer analyzes the root cause and determines whether it is a code regression, an infrastructure issue, or a configuration mismatch. In many cases, the Maintainer can apply self-healing operations, adjusting regional configurations to restore service integrity without human intervention.
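The Maintainer's triage step can be illustrated with a small rule-based classifier. This is a minimal sketch under stated assumptions, not the article's implementation. The failure fields (`infraErrors`, `configDrift`, `codeChanged`, `failsOnBaseline`) and the action names are hypothetical, and a real Maintainer would derive such signals from logs, diffs, and deployment metadata.

```javascript
// Hypothetical triage rules for the Maintainer Agent. Each failure record is
// assumed to carry a few pre-computed signals about the failing validation.
function classifyFailure(failure) {
  // Infrastructure problems (timeouts, unreachable hosts) are checked first,
  // because they can make healthy code look broken.
  if (failure.infraErrors > 0) return "infrastructure";
  // The region's live configuration differs from its expected baseline.
  if (failure.configDrift) return "configuration-mismatch";
  // The change touches the failing path and the baseline build still passes.
  if (failure.codeChanged && !failure.failsOnBaseline) return "code-regression";
  return "unknown";
}

// Map each root-cause class to an action. Only configuration drift is treated
// as safe to self-heal; everything else keeps a human in the loop.
function decideAction(failure) {
  const cause = classifyFailure(failure);
  switch (cause) {
    case "configuration-mismatch":
      return { cause, action: "self-heal", detail: "reapply baseline regional config" };
    case "infrastructure":
      return { cause, action: "retry", detail: "re-run after infrastructure recovers" };
    case "code-regression":
      return { cause, action: "block-region", detail: "block release and notify PR author" };
    default:
      return { cause, action: "escalate", detail: "route to on-call engineer" };
  }
}

console.log(decideAction({ region: "eu-west", infraErrors: 0, configDrift: true }).action);
// prints "self-heal"
```

Infrastructure signals are checked first on purpose. A timed-out dependency can make healthy code look like a regression, so the sketch rules it out before blaming the change.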

Together, these three agents form a resilient global floor. The Planner generates validation plans, the Automator executes them in each region, and the Maintainer ensures that failures lead to learning and adaptation rather than outages.
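The closed loop can be sketched as a single orchestration function. The sketch assumes the agent methods used in the earlier code, `analyzeCodeChanges` and `executeValidationPlan`, plus a hypothetical `maintainer.triage(region, error)` method. The agents are passed in as plain objects so the loop can be exercised with fakes.

```javascript
// Hypothetical orchestration of one integrity cycle: plan centrally, validate
// every affected region in parallel, and route failures to the Maintainer.
async function runIntegrityCycle(pullRequest, { planner, automators, maintainer }) {
  const plan = await planner.analyzeCodeChanges(pullRequest);

  // allSettled keeps one failing region from hiding the others' results.
  const regions = Object.keys(plan.regionals);
  const settled = await Promise.allSettled(
    regions.map((region) => automators[region].executeValidationPlan(plan.regionals[region]))
  );

  const outcome = {};
  for (const [i, region] of regions.entries()) {
    const result = settled[i];
    if (result.status === "fulfilled") {
      outcome[region] = { status: "validated", report: result.value };
    } else {
      // Hand the failure to the Maintainer instead of halting the whole cycle.
      outcome[region] = { status: "failed", triage: await maintainer.triage(region, result.reason) };
    }
  }
  return outcome;
}
```

`Promise.allSettled` is used rather than `Promise.all` so that a failure in one region does not discard the results from the others. This fits the region-by-region release decisions the article describes.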

Protocol-Level Understanding

Traditional testing tools operate at the UI or API level. The agentic floor operates at the protocol level, understanding the deep architecture of the system. This means agents can:

- Introspect database schemas and evolve test data as schemas change

- Understand authentication protocols and automatically generate valid tokens

- Model event flows across asynchronous messaging systems

- Simulate failure modes in distributed systems
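To illustrate token generation, the sketch below mints a signed JSON Web Token for a synthetic test user with Node's built-in `crypto` module. It assumes that the service under test accepts HS256-signed JWTs with a shared test secret. The claim names and the `mintTestToken` helper are hypothetical; a protocol-aware agent would read the real algorithm and claims from the auth service's code.

```javascript
const { createHmac } = require("node:crypto");

// Base64url-encode a JSON object, as JWT header and payload segments require.
function encodeSegment(obj) {
  return Buffer.from(JSON.stringify(obj)).toString("base64url");
}

// Hypothetical helper: mint a short-lived HS256 token for a synthetic user.
// The claims (sub, region, iat, exp) and the shared secret are assumptions.
function mintTestToken({ userId, region, secret, ttlSeconds = 300, now = Date.now() }) {
  const header = { alg: "HS256", typ: "JWT" };
  const issuedAt = Math.floor(now / 1000);
  const payload = { sub: userId, region, iat: issuedAt, exp: issuedAt + ttlSeconds };
  const signingInput = `${encodeSegment(header)}.${encodeSegment(payload)}`;
  // HMAC-SHA256 over "header.payload", base64url-encoded, per the JWS compact form
  const signature = createHmac("sha256", secret).update(signingInput).digest("base64url");
  return `${signingInput}.${signature}`;
}
```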

Because they work at this level, agents testing across regions can account for the fact that EU database schemas might have additional GDPR fields, that Asia-Pacific authentication flows might include additional verification steps, and that latency thresholds need regional adjustment.
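Regional adjustments like these can be expressed as a shared baseline profile with per-region overrides. The profile fields and numbers below are illustrative assumptions, not recommended targets. The 200 ms budget for `ap-southeast` mirrors the `threshold` in the example plan, assuming that value is in milliseconds.

```javascript
// Hypothetical regional test profiles: a shared baseline plus overrides.
const baseProfile = {
  latencyBudgetMs: 150,
  extraUserFields: [],
  authSteps: ["password"],
};

const regionalOverrides = {
  // EU user records carry additional GDPR-related fields
  "eu-west": { extraUserFields: ["consentRecordedAt", "lawfulBasis"] },
  // Extra verification step and a looser latency budget
  "ap-southeast": { latencyBudgetMs: 200, authSteps: ["password", "otp"] },
};

// Merge a region's overrides onto the baseline to get a complete profile.
function profileFor(region) {
  return { ...baseProfile, ...(regionalOverrides[region] ?? {}) };
}

// Check one latency measurement against that region's budget.
function withinLatencyBudget(region, observedMs) {
  return observedMs <= profileFor(region).latencyBudgetMs;
}
```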

This protocol-level understanding is achieved through deep codebase analysis. The agents don't just read test scripts; they read the actual application code, infrastructure definitions, and database migrations. This allows them to build an evolving mental model of the system that stays in sync with developer changes.
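Keeping the schema model current from database migrations can be sketched as folding each migration into an in-memory table map. This sketch is deliberately naive. It recognizes only `CREATE TABLE`, `ADD COLUMN` and `DROP COLUMN` with simple regular expressions and assumes one column definition per comma, so a real agent would use a proper SQL parser.

```javascript
// Fold an ordered list of SQL migrations into table -> Set(columns).
// Naive on purpose: types like DECIMAL(10,2) or table-level constraints
// such as PRIMARY KEY (...) would be mis-parsed.
function buildSchemaModel(migrations) {
  const schema = {};
  for (const sql of migrations) {
    const statements = sql.split(";").map((s) => s.trim()).filter(Boolean);
    for (const statement of statements) {
      let m;
      if ((m = statement.match(/^CREATE TABLE (\w+)\s*\(([\s\S]*)\)$/i))) {
        // First token of each comma-separated definition is the column name
        const columns = m[2].split(",").map((c) => c.trim().split(/\s+/)[0]);
        schema[m[1]] = new Set(columns);
      } else if ((m = statement.match(/^ALTER TABLE (\w+) ADD COLUMN (\w+)/i))) {
        schema[m[1]]?.add(m[2]);
      } else if ((m = statement.match(/^ALTER TABLE (\w+) DROP COLUMN (\w+)/i))) {
        schema[m[1]]?.delete(m[2]);
      }
    }
  }
  return schema;
}
```

Re-running this fold whenever a migration lands is what would keep test-data generators aligned with the current schema.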

Regional Data Sovereignty and the Testing Challenge

One of the most complex challenges in global SaaS integrity is respecting data sovereignty while maintaining comprehensive testing coverage. Regional data sovereignty laws require that certain types of data remain within specific geographical boundaries. This creates a testing paradox: how do you validate global functionality without moving sensitive data across borders?

Traditional approaches involve maintaining separate test environments in each region, with manually configured test data. This approach is labor-intensive and error-prone. The data quickly becomes stale, and tests start failing not because of code regressions but because of test data drift.

The agentic floor solves this problem through dynamic state reconstruction. Rather than copying production data across regions (which would violate sovereignty laws), the Automator Agent reconstructs representative state in each region based on anonymized metadata.

```javascript
// Example of protocol-level data sovereignty management.
// Helpers such as extractDataPatternFromPlan, generateSyntheticData,
// getRegionalComplianceRules and setupTestState are not shown.
class RegionalStateManager {
  constructor(region) {
    this.region = region;
  }

  async generateCompliantTestState(regionalPlan) {
    // Extract the pattern of data needed, not the actual data
    const dataPattern = await this.extractDataPatternFromPlan(regionalPlan);
    // Generate synthetic data that matches the pattern and complies with local regulations
    const syntheticData = await this.generateSyntheticData(dataPattern, this.region);
    // Apply regional compliance transformations
    const compliantData = await this.applyComplianceRules(syntheticData);
    // Set up the test state using the compliant synthetic data
    return this.setupTestState(compliantData);
  }

  async applyComplianceRules(data) {
    const regionalRules = this.getRegionalComplianceRules();
    let compliantData = { ...data };
    for (const rule of regionalRules) {
      switch (rule.type) {
        case 'data-masking':
          compliantData = this.applyDataMasking(compliantData, rule.fields);
          break;
        case 'field-encryption':
          compliantData = await this.encryptFields(compliantData, rule.fields, rule.keyId);
          break;
        case 'field-removal':
          compliantData = this.removeFields(compliantData, rule.fields);
          break;
        case 'consent-requirement':
          compliantData = this.addConsentFlags(compliantData, rule.consentTypes);
          break;
        default:
          // Fail closed: an unrecognized rule must not be silently skipped
          throw new Error(`Unknown compliance rule type: ${rule.type}`);
      }
    }
    return compliantData;
  }
}
```

This approach gives each regional environment realistic test data that reflects local regulatory requirements without violating sovereignty laws. The Automator Agent can then execute validation scenarios against this regionally compliant data to confirm that features work correctly in each jurisdiction.
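The `RegionalStateManager` calls `applyDataMasking`, `removeFields` and `addConsentFlags` without defining them. The following minimal sketch shows them as standalone functions rather than methods. It assumes each helper operates on a single flat record and returns a new object. The masking format (keep the last four characters) and the consent shape are hypothetical choices. Field encryption is omitted because it depends on a key-management service.

```javascript
// Hypothetical helper implementations. Each returns a new record and leaves
// its input unchanged.

// Mask string fields, keeping only the last four characters visible.
// Values of four characters or fewer are masked completely.
function applyDataMasking(record, fields) {
  const masked = { ...record };
  for (const field of fields) {
    const value = masked[field];
    if (typeof value === "string") {
      const visible = value.length > 4 ? value.slice(-4) : "";
      masked[field] = "*".repeat(value.length - visible.length) + visible;
    }
  }
  return masked;
}

// Drop fields that must not exist in this region's test data.
function removeFields(record, fields) {
  const result = { ...record };
  for (const field of fields) delete result[field];
  return result;
}

// Mark synthetic users as having granted the required consent types, so
// consent-gated flows can be exercised.
function addConsentFlags(record, consentTypes) {
  const consent = { ...(record.consent ?? {}) };
  for (const type of consentTypes) consent[type] = true;
  return { ...record, consent };
}
```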

The Economics of Global Integrity

The traditional approach to quality assurance follows a linear cost model. As your platform scales to more users, regions, and features, your QA costs scale proportionally. This creates an economic ceiling on growth. At some point, the cost of maintaining quality exceeds the marginal revenue from expansion.

But the distributed agentic floor breaks this economic constraint. The cost of deploying and maintaining the agentic floor is largely fixed, while the value it provides scales with your platform. This transforms quality from a cost center into a growth enabler.

Consider a concrete example: a SaaS platform expanding from 3 to 12 regions. In the traditional model, cost tracks the number of regions, so this expansion would require roughly quadrupling the QA team and infrastructure. With the agentic floor, you deploy an additional Automator Agent in each new region and reuse the same central Planner intelligence, so the marginal cost of a new region is largely the cost of running its Automator.
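A toy cost model makes the arithmetic behind this comparison explicit. All parameters are hypothetical inputs rather than measured costs. The traditional model scales linearly with regions; the agentic model has a fixed shared component plus a per-region Automator cost.

```javascript
// Traditional QA: headcount and environments grow in proportion to regions.
function traditionalQaCost(regions, costPerRegion) {
  return regions * costPerRegion;
}

// Agentic floor: one shared Planner/Maintainer layer plus one Automator per region.
function agenticFloorCost(regions, fixedSharedCost, automatorCostPerRegion) {
  return fixedSharedCost + regions * automatorCostPerRegion;
}

// How much total cost grows when expanding from `from` to `to` regions.
function growthFactor(costFn, from, to, ...params) {
  return costFn(to, ...params) / costFn(from, ...params);
}

console.log(growthFactor(traditionalQaCost, 3, 12, 100)); // prints 4
```

Under the traditional model, going from 3 to 12 regions multiplies cost by 4 whatever the per-region cost. Under the agentic model, the growth factor depends on the per-region Automator cost relative to the shared cost: the smaller that ratio, the closer the factor stays to 1.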

This economic transformation is particularly evident in three areas:

- Release velocity: Global platforms with agentic floors deploy 3-5x more frequently than those using traditional QA approaches.

- Incident reduction: The preventative nature of protocol-level validation reduces production incidents by 70-90%, dramatically lowering the operational cost of global scale.

- Developer productivity: Engineers spend 60% less time debugging cross-regional issues, allowing them to focus on feature development.

The economics of global SaaS have fundamentally changed. What used to be a fixed ratio between users and QA headcount is now a decoupled relationship where quality scales automatically with your architecture.

From "And Then" to "But, Therefore"

The narrative structure of traditional quality assurance follows an "And Then" pattern: we build a feature, and then we test it, and then we find issues, and then we fix them, and then we release. This linear approach creates bottlenecks and delays.

The agentic floor transforms this narrative into a "But, Therefore" structure: we want to deploy globally with every commit, but the complexity of multi-region testing is overwhelming, therefore we deploy a distributed agentic floor that performs cross-region validation autonomously.

This narrative shift manifests in concrete ways:

- Traditional approach: Build feature → Run tests → Discover regional issues → Delay release

- Agentic approach: Build feature → Automatic multi-region validation → Continuous global deployment

The "But, Therefore" structure also changes how teams respond to failures. In the traditional model, a test failure in one region often halts the entire release process. In the agentic model, the Maintainer Agent can determine if a failure is genuinely blocking or if it can be mitigated through configuration changes, allowing the release to proceed in unaffected regions.

This ability to make nuanced, region-specific decisions enables truly continuous global deployment. Changes flow seamlessly from development to production across all regions, with the agentic floor maintaining quality guardrails without creating artificial bottlenecks.
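A region-by-region release decision can be sketched as a simple gate over per-region verdicts. The verdict shape (`status`, `revalidated`) is an assumption for illustration. A region ships if validation passed, or if the Maintainer mitigated the failure and validation then passed on a re-run; every other region is held.

```javascript
// Hypothetical per-region release gate over the Maintainer's verdicts.
function planRegionalRollout(results) {
  const proceed = [];
  const held = [];
  for (const [region, verdict] of Object.entries(results)) {
    const passed = verdict.status === "passed";
    // Mitigated failures ship only after a successful re-validation.
    const mitigated = verdict.status === "mitigated" && verdict.revalidated === true;
    if (passed || mitigated) {
      proceed.push(region);
    } else {
      held.push(region);
    }
  }
  return { proceed, held };
}
```

In this sketch a hold in one region never blocks the others, which is the behavior the "But, Therefore" model relies on.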

Implementing the Global Integrity Architecture

Transforming your quality approach from manual testing to a distributed agentic floor requires a phased implementation strategy. Organizations typically follow a three-stage journey:

Stage 1: Codebase Analysis and Mapping

The foundation of the agentic floor is comprehensive codebase analysis. The Planner Agent must understand your codebase at a deep level, including service dependencies, regional variations, and data schemas.

This stage involves:

- Instrumenting the codebase with appropriate metadata

- Building service dependency graphs

- Creating regional configuration maps

- Documenting regulatory compliance requirements by region

The output of this stage is a comprehensive codebase map that the Planner Agent can use to generate validation plans.
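One possible shape for the codebase map is sketched below, together with a check that flags gaps before the Planner relies on the map. The field names (`dependsOn`, `regions`, `regulations`) and the `findMapGaps` helper are hypothetical. The services and regions reuse the names from the example Global Validation Plan.

```javascript
// Hypothetical codebase map: services, their dependencies and deployment
// regions, plus the regulations that apply in each region.
const codebaseMap = {
  services: {
    "auth-service": { dependsOn: [], regions: ["us-east", "eu-west", "ap-southeast"] },
    "user-profile": { dependsOn: ["auth-service"], regions: ["us-east", "eu-west", "ap-southeast"] },
    "billing-api": { dependsOn: ["user-profile"], regions: ["us-east", "eu-west"] },
  },
  regulations: { "eu-west": ["GDPR"] },
};

// Report gaps that would make the Planner's impact analysis unreliable.
function findMapGaps(map) {
  const gaps = [];
  for (const [name, service] of Object.entries(map.services)) {
    if (service.regions.length === 0) gaps.push(`${name}: no regions listed`);
    for (const dep of service.dependsOn) {
      if (!(dep in map.services)) {
        gaps.push(`${name}: unknown dependency ${dep}`);
        continue;
      }
      // Flag regions where a service runs but its dependency does not, since
      // calls there would have to cross regions.
      for (const region of service.regions) {
        if (!map.services[dep].regions.includes(region)) {
          gaps.push(`${name}: depends on ${dep}, which is not deployed in ${region}`);
        }
      }
    }
  }
  return gaps;
}
```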

Stage 2: Regional Automator Deployment

With the codebase map in place, the next step is deploying Automator Agents in each region. These agents need the ability to:

- Create and manipulate database state

- Simulate user interactions

- Validate API responses

- Monitor performance metrics

The Automator Agents must have sufficient permissions to set up realistic test conditions while being isolated enough to prevent any impact on production systems.
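One way to enforce this isolation is a guard that the Automator runs before touching any datastore. The sketch makes three illustrative assumptions: every target carries an `environment` tag, every target has a `region` and a connection URL, and test hosts follow a `test-*` naming convention.

```javascript
// Hypothetical pre-flight guard: throws unless the target is a non-production
// datastore in the Automator's own region with a test-* hostname.
function assertIsolatedTarget(target, region) {
  if (target.environment === "production") {
    throw new Error(`Refusing to run against production target ${target.name}`);
  }
  if (target.region !== region) {
    // Keeps test data inside the Automator's own region (data sovereignty)
    throw new Error(`Target ${target.name} is in ${target.region}, expected ${region}`);
  }
  const host = new URL(target.url).hostname;
  if (!host.startsWith("test-")) {
    throw new Error(`Host ${host} does not follow the test-* naming convention`);
  }
  return true;
}
```

The checks are layered on purpose, so that a mislabeled environment tag alone is not enough to reach a production host.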

Stage 3: Maintainer Intelligence

The final stage is implementing the Maintainer Agent's intelligence layer. This involves:

- Building root cause analysis capabilities

- Developing self-healing mechanisms

- Creating feedback loops to improve Planner intelligence

- Establishing escalation paths for human intervention when needed

The Maintainer Agent is the most sophisticated component of the system, requiring both technical capabilities and business context to make appropriate decisions about failures.
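The feedback loop between the Maintainer and the Planner can be sketched as a failure history. The history raises the priority of scenarios that keep catching real problems in a region. The `FailureHistory` class, the priority ladder and the one-level-per-two-failures rule are hypothetical choices for illustration.

```javascript
// Hypothetical feedback loop: the Maintainer records confirmed failures and
// the Planner consults the history when prioritizing tasks.
const PRIORITIES = ["low", "medium", "high", "critical"];

class FailureHistory {
  constructor() {
    this.counts = new Map(); // "region|scenario" -> confirmed failure count
  }

  // Only failures the Maintainer confirmed as real problems are counted.
  record(region, scenario, confirmedFailure) {
    if (!confirmedFailure) return;
    const key = `${region}|${scenario}`;
    this.counts.set(key, (this.counts.get(key) ?? 0) + 1);
  }

  // Raise priority one level per `step` confirmed failures, capped at
  // "critical". Returns a new task object.
  adjustPriority(region, task, step = 2) {
    const failures = this.counts.get(`${region}|${task.scenario}`) ?? 0;
    const base = Math.max(0, PRIORITIES.indexOf(task.priority));
    const boosted = Math.min(PRIORITIES.length - 1, base + Math.floor(failures / step));
    return { ...task, priority: PRIORITIES[boosted] };
  }
}
```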

Conclusion: The Future of Global SaaS Integrity

The distributed agentic floor represents a fundamental shift in how we approach quality at global scale. By breaking the linear relationship between platform growth and quality costs, it enables a new era of continuous global deployment.

The key insights that make this possible are:

- Codebase-first analysis allows agents to understand the deep structure of the system

- Protocol-level validation enables comprehensive testing without violating data sovereignty

- Distributed execution solves the latency and regulatory challenges of global testing

- Self-healing capabilities transform quality from a bottleneck into an accelerant

For global SaaS platforms in 2026, the distributed agentic floor isn't just a nice-to-have. It's the essential foundation that enables growth without compromise. Organizations that implement this architecture can scale to hundreds of millions of users across dozens of regions without expanding their QA teams or sacrificing release velocity.

The future belongs to platforms that can deliver consistent quality at global scale. With the distributed agentic floor, that future is now within reach.