Zero-Bugs CI/CD: Automating the Maintenance of the Maintenance

Zero-Bugs CI/CD is the evolution of traditional pipelines into autonomous systems that maintain themselves. Using specialized agents with semantic codebase understanding, these systems optimize test selection, manage database state, and self-heal when issues arise. This approach replaces brittle "And Then" automation with a resilient "But, Therefore" architecture, reducing maintenance overhead while increasing deployment confidence.

The Crisis of Maintenance in Modern CI/CD

In modern software engineering, we've reached a peculiar inflection point. Our CI/CD pipelines have grown increasingly sophisticated, automatically building, testing, and deploying our code with remarkable speed and precision. We've automated away the tedious work of manual deployments, infrastructure provisioning, and even portions of code generation. But underneath this veneer of automation lies a growing crisis: the maintenance burden of these very systems has become overwhelming.

The paradox is striking. The tools we've built to accelerate development now demand significant engineering resources just to keep running. This is particularly acute in our testing infrastructure, where "flaky tests" have become so normalized that many teams build explicit retry logic into their pipelines. We have engineers whose entire job has become debugging CI failures that have nothing to do with actual code quality.

We've automated everything except the most crucial part: the maintenance of our automation itself. The result is a quality crisis hiding in plain sight.

The traditional approach to CI/CD follows what I call an "And Then" narrative. A developer commits code, and then the build process begins, and then unit tests run, and then integration tests execute, and then deployment to staging occurs, and then end-to-end tests validate the system. This linear chain of dependencies creates a fundamentally fragile system. If any link fails - often for reasons completely unrelated to code quality - the entire pipeline collapses.

But this fragility isn't inevitable. Therefore, we need to reimagine CI/CD as a resilient, self-healing system capable of maintaining its own integrity. This is the vision of Zero-Bugs CI/CD: not a pipeline that merely catches bugs, but one that autonomously manages its own health and evolution.

The Problem with Traditional Test Infrastructure

Before we explore the solution, let's diagnose the fundamental issues with traditional test infrastructure. The root causes run deeper than most engineering teams realize.

The Database State Dilemma

The most pernicious issue in test reliability is database state management. Traditional approaches typically fall into two problematic categories: shared persistent test databases or simplistic resets between test runs.

In the shared database model, tests run against a common database that persists between test runs. This creates an obvious problem: test A might modify data that test B depends on, creating order-dependent tests and intermittent failures. But the solution most teams implement - resetting the database between test runs - introduces significant performance penalties and still doesn't solve the fundamental issue of properly representing production-like conditions.

But the database is more than just a storage mechanism; it's a complex stateful system with integrity constraints, indexes, and query patterns that significantly impact application behavior. Therefore, proper testing requires sophisticated database state management that can recreate specific scenarios without the overhead of complete resets.

The Selection Problem

Another critical flaw in traditional CI/CD is the all-or-nothing approach to test execution. When a developer makes a small change to a component, the system typically runs the entire test suite. This leads to two serious problems:

First, the feedback cycle becomes unnecessarily long. A simple CSS change might trigger hours of unrelated backend tests. Second, the probability of encountering an unrelated test failure increases with each additional test executed, reducing signal-to-noise ratio and creating alert fatigue.
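The second point can be made concrete with a little arithmetic. The following is a minimal sketch, assuming every test fails spuriously with the same small probability and that those failures are independent of each other. Both are simplifying assumptions, and the function name and the 0.1% rate are purely illustrative.

```typescript
// Probability that at least one of `testCount` tests fails for reasons
// unrelated to the change, assuming each test fails spuriously and
// independently with probability `perTestFlakeRate` (a simplification).
function spuriousFailureProbability(perTestFlakeRate: number, testCount: number): number {
  if (perTestFlakeRate < 0 || perTestFlakeRate > 1) {
    throw new RangeError("perTestFlakeRate must be between 0 and 1");
  }
  if (!Number.isInteger(testCount) || testCount < 0) {
    throw new RangeError("testCount must be a non-negative integer");
  }
  // P(at least one spurious failure) = 1 - P(every test passes)
  return 1 - Math.pow(1 - perTestFlakeRate, testCount);
}

// Hypothetical numbers: a 0.1% per-test flake rate across suites of different sizes.
for (const suiteSize of [50, 500, 2000]) {
  const probability = spuriousFailureProbability(0.001, suiteSize);
  console.log(`${suiteSize} tests: ${(probability * 100).toFixed(1)}% chance of a spurious red build`);
}
```

Under these assumptions the chance of a spurious red build grows from roughly 5% at 50 tests to roughly 39% at 500 and roughly 86% at 2,000, which is why running fewer, more relevant tests improves reliability as well as speed.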

But not all code changes have the same impact radius. A change to a core utility function might affect dozens of components, while a localized UI change might only impact a single screen. Therefore, intelligent test selection based on understanding code dependencies is essential for both speed and reliability.

The Maintenance Tax

Perhaps the most insidious problem is what I call the "maintenance tax." In traditional systems, each new feature adds to the test maintenance burden. As the application evolves, tests break not because the feature is broken, but because the test's assumptions no longer match reality. This creates a perverse incentive: teams become reluctant to add comprehensive tests because they know it increases their future maintenance burden.

But tests shouldn't require constant manual updates for non-breaking changes. Therefore, we need tests that adapt to intentional application changes while still catching actual regressions.

The Autonomous Agent Approach to CI/CD

The solution to these deep structural problems isn't better tooling within the current paradigm. It requires a fundamentally different approach: embedding autonomous agents directly into the CI/CD pipeline that have both the authority and capability to maintain the system's integrity.

The Planner Agent: Optimizing Test Selection

The Planner is the first agent in our Zero-Bugs CI/CD architecture. Its role is to analyze code changes and determine precisely which tests need to be executed. But unlike simplistic approaches that rely on file paths or manual tagging, the Planner has deep semantic understanding of the codebase.

    // Example of how Planner Agent analyzes code changes
    class PlannerAgent {
      async analyzeCodeChanges(pullRequest: PullRequest): Promise<TestPlan> {
        // Extract changed files and their content from the PR
        const changes = await this.getCodeChanges(pullRequest);

        // Build dependency graph from the codebase
        const dependencyGraph = await this.buildDependencyGraph();

        // Identify affected components based on semantic understanding
        const affectedComponents = this.identifyAffectedComponents(changes, dependencyGraph);

        // Generate optimized test plan that covers all affected components
        return this.generateTestPlan(affectedComponents);
      }

      private async buildDependencyGraph(): Promise<DependencyGraph> {
        // Deep analysis of imports, function calls, and component relationships
        // Goes beyond static imports to understand data flow and side effects
        // ...
      }
    }

The Planner works by first constructing a multi-dimensional dependency graph of the entire application. This isn't just tracking import statements; it understands data flow, state management patterns, and even implicit dependencies like shared CSS classes or API contracts. When a change is proposed, it calculates the "blast radius" of that change and selects only the tests necessary to validate the affected components.

For example, if a developer changes the implementation of a button component but preserves its API, the Planner will only select tests that visually validate the button, skipping unrelated backend tests. If the change is to a core data structure used throughout the application, the Planner will select a much broader suite of tests.
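The "blast radius" idea can be illustrated with a plain graph walk. The sketch below is not the Planner itself: it assumes a hand-written dependency map and a hypothetical mapping from tests to the modules they cover, and it follows only structural edges. Because it has no semantic understanding, it is more conservative than the behavior described above; it cannot tell that a change preserved a component's API, so it also selects tests for everything that depends on the changed module.

```typescript
// Maps each module to the modules it depends on (hypothetical example data).
type DependencyMap = Record<string, string[]>;

// Invert "A depends on B" edges into "B is depended on by A".
function invertDependencies(deps: DependencyMap): Map<string, Set<string>> {
  const dependents = new Map<string, Set<string>>();
  for (const [moduleId, imports] of Object.entries(deps)) {
    for (const imported of imports) {
      if (!dependents.has(imported)) dependents.set(imported, new Set());
      dependents.get(imported)!.add(moduleId);
    }
  }
  return dependents;
}

// Breadth-first walk over dependents: every module that can observe the change.
function blastRadius(changed: string[], deps: DependencyMap): Set<string> {
  const dependents = invertDependencies(deps);
  const affected = new Set<string>(changed);
  const queue = [...changed];
  while (queue.length > 0) {
    const current = queue.shift()!;
    for (const dependent of dependents.get(current) ?? []) {
      if (!affected.has(dependent)) {
        affected.add(dependent);
        queue.push(dependent);
      }
    }
  }
  return affected;
}

// Select the tests that cover at least one affected module.
function selectTests(affected: Set<string>, coverage: Record<string, string[]>): string[] {
  return Object.entries(coverage)
    .filter(([, modules]) => modules.some((m) => affected.has(m)))
    .map(([testId]) => testId)
    .sort();
}

const exampleDeps: DependencyMap = {
  "ui/Button": [],
  "ui/CheckoutForm": ["ui/Button", "core/money"],
  "api/orders": ["core/money"],
  "core/money": [],
};
const exampleCoverage: Record<string, string[]> = {
  "button.visual.test": ["ui/Button"],
  "checkout.e2e.test": ["ui/CheckoutForm"],
  "orders.api.test": ["api/orders"],
};

// A button change reaches the button and the form that embeds it.
console.log(selectTests(blastRadius(["ui/Button"], exampleDeps), exampleCoverage));
// A change to the shared money utility reaches both the form and the orders API.
console.log(selectTests(blastRadius(["core/money"], exampleDeps), exampleCoverage));
```

In this toy graph a button change skips the orders API test entirely, while a change to the shared utility pulls in both the end-to-end and the API test.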

But simply selecting tests isn't enough when state management is involved. Therefore, the Planner also generates a detailed state requirements document for each test, which leads us to our next agent.

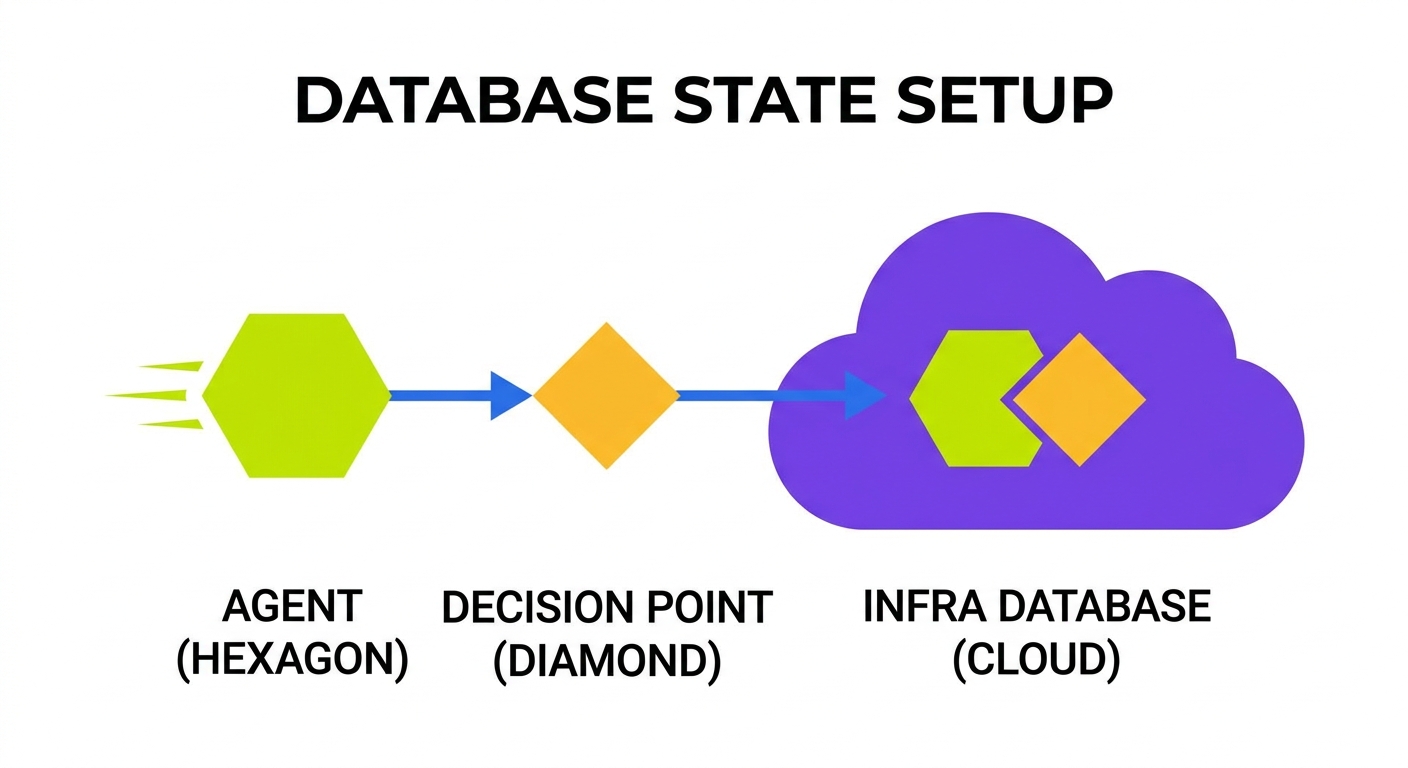

The Automator Agent: Managing Environment and State

The Automator agent is responsible for ensuring each test runs in an appropriate environment with precisely the right state. It eliminates the database state dilemma by taking a radically different approach: just-in-time state creation for each test.

    // Example of how Automator Agent manages database state
    class AutomatorAgent {
      async prepareTestEnvironment(test: Test, stateRequirements: StateRequirements): Promise<TestEnvironment> {
        // Create isolated database snapshot optimized for this specific test
        const dbSnapshot = await this.createOptimizedDatabaseSnapshot(test, stateRequirements);

        // Set up any additional environment requirements (mock services, etc.)
        const mockedServices = await this.configureMockedServices(test.mockRequirements);

        // Configure network conditions, if specified
        const networkConfig = await this.configureNetworkConditions(test.networkRequirements);

        return {
          database: dbSnapshot,
          services: mockedServices,
          network: networkConfig,
          cleanup: async () => {
            // Destroy the isolated environment after test completion
            await this.performCleanup(dbSnapshot, mockedServices);
          }
        };
      }

      private async createOptimizedDatabaseSnapshot(test: Test, stateRequirements: StateRequirements): Promise<DatabaseSnapshot> {
        // Creates minimal DB with only the tables and rows needed for this specific test
        // Applies necessary constraints and indexes that might affect test behavior
        // ...
      }
    }

Instead of maintaining a shared test database, the Automator creates lightweight, isolated database snapshots for each test or test group. These snapshots contain only the tables, rows, and schema elements required for the specific test scenario. The process works at the protocol level of the database, not just through application APIs:

- The Automator analyzes the test's state requirements (generated by the Planner)

- It creates a minimal database with only the required schema elements

- It seeds the database with precisely the data needed, including complex relationships

- After test execution, it captures performance metrics and state changes

- Finally, it discards the snapshot, ensuring perfect isolation between tests

This approach eliminates the "shared state" problem entirely while also dramatically improving performance. Tests no longer waste time setting up irrelevant parts of the database or waiting for global resets.
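To make the seeding step more tangible, here is a minimal sketch under stated assumptions. It uses an in-memory map as a stand-in for a database snapshot rather than anything at the database protocol level, and the `TableRequirement` shape, `seedOrder`, and `createSnapshot` are hypothetical names invented for illustration. It shows two ideas from the steps above: seeding tables in an order that respects their relationships, and giving each test its own copy of the data.

```typescript
// Hypothetical declarative state requirement for one table in one test.
interface TableRequirement {
  table: string;
  dependsOn: string[];             // tables this one references via foreign keys
  rows: Record<string, unknown>[]; // only the rows this test needs
}

// Order tables so that every referenced table is seeded before its dependents.
function seedOrder(requirements: TableRequirement[]): string[] {
  const byTable = new Map<string, TableRequirement>();
  for (const req of requirements) byTable.set(req.table, req);

  const ordered: string[] = [];
  const visiting = new Set<string>();
  const done = new Set<string>();

  const visit = (table: string): void => {
    if (done.has(table)) return;
    if (visiting.has(table)) throw new Error(`Circular foreign key involving "${table}"`);
    const req = byTable.get(table);
    if (!req) throw new Error(`Table "${table}" is referenced but not declared`);
    visiting.add(table);
    req.dependsOn.forEach((dep) => visit(dep));
    visiting.delete(table);
    done.add(table);
    ordered.push(table);
  };

  requirements.forEach((req) => visit(req.table));
  return ordered;
}

// In-memory stand-in for an isolated snapshot: table name -> rows.
type Snapshot = Map<string, Record<string, unknown>[]>;

function createSnapshot(requirements: TableRequirement[]): Snapshot {
  const rowsByTable = new Map<string, Record<string, unknown>[]>();
  for (const req of requirements) rowsByTable.set(req.table, req.rows);

  const snapshot: Snapshot = new Map();
  for (const table of seedOrder(requirements)) {
    // Copy each row so a test that mutates its snapshot cannot affect another test.
    snapshot.set(table, (rowsByTable.get(table) ?? []).map((row) => ({ ...row })));
  }
  return snapshot;
}

const checkoutState: TableRequirement[] = [
  { table: "orders", dependsOn: ["users", "products"], rows: [{ id: 1, userId: 7, productId: 3 }] },
  { table: "users", dependsOn: [], rows: [{ id: 7, email: "test@example.com" }] },
  { table: "products", dependsOn: [], rows: [{ id: 3, sku: "SKU-3" }] },
];

// users and products are seeded before orders, which references both.
console.log(seedOrder(checkoutState));
```

Two calls to `createSnapshot(checkoutState)` return independent copies, so discarding one after a test leaves the other untouched.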

But even with perfect planning and state management, tests will occasionally fail. Therefore, we need an agent to diagnose and repair these failures.

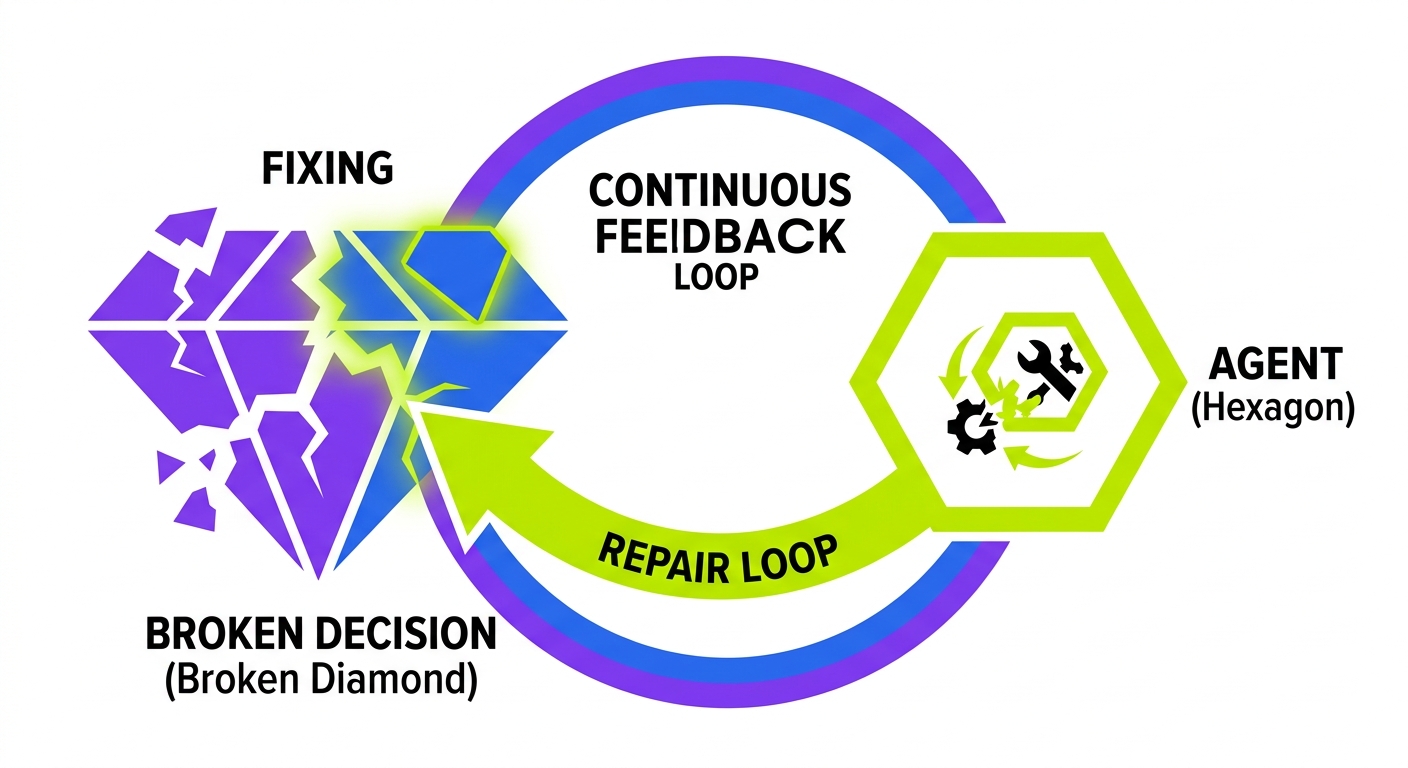

The Maintainer Agent: Self-Healing Test Infrastructure

The Maintainer agent is perhaps the most revolutionary component of Zero-Bugs CI/CD. It observes test execution, diagnoses failures, and performs self-healing operations to maintain pipeline integrity.

The true breakthrough of Zero-Bugs CI/CD isn't just catching bugs, but eliminating the maintenance tax through autonomous self-healing. Tests that maintain themselves are tests that engineers trust.

When a test fails, the Maintainer performs a sophisticated diagnosis:

    // Example of how Maintainer Agent diagnoses and repairs test failures
    class MaintainerAgent {
      async diagnoseAndRepair(failedTest: Test, executionContext: TestExecutionContext): Promise<DiagnosisResult> {
        // Analyze test failure details
        const failureAnalysis = await this.analyzeFailure(failedTest, executionContext);

        if (failureAnalysis.isFlaky) {
          // For flaky tests, identify the root cause
          const flakinessCause = await this.determineFlakinessRoot(failedTest, failureAnalysis);

          if (flakinessCause.canBeFixed) {
            // Apply self-healing to fix the flaky test
            const fixResult = await this.applyTestFix(failedTest, flakinessCause.fixStrategy);

            return {
              diagnosis: 'fixed_flaky_test',
              action: 'test_updated',
              details: fixResult
            };
          }
        } else if (failureAnalysis.isIntentionalChange) {
          // For failures due to intentional application changes
          const updatedTest = await this.updateTestToMatchNewBehavior(failedTest, failureAnalysis);

          return {
            diagnosis: 'intentional_change',
            action: 'test_updated',
            details: updatedTest
          };
        } else if (failureAnalysis.isGenuineBug) {
          // For genuine bugs, provide detailed diagnosis to developers
          return {
            diagnosis: 'genuine_bug',
            action: 'report_to_developer',
            details: this.generateDetailedBugReport(failureAnalysis)
          };
        }

        // Other diagnosis paths...
      }
    }

The Maintainer distinguishes between different types of test failures:

- Genuine bugs - where the application code has a regression that must be fixed

- Flaky tests - where environmental factors cause intermittent failures

- Intentional changes - where the application behavior has legitimately changed

- Infrastructure issues - where CI/CD components themselves are failing

For genuine bugs, the Maintainer generates detailed diagnostic information, including a complete reproduction path, state snapshots, and even suggested fixes. This dramatically reduces the investigation time for developers.

For flaky tests, it performs automatic remediation, adjusting timing parameters, adding appropriate waits, or refactoring test logic to be more resilient. It learns from past failures, building a knowledge base of common flakiness patterns.
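One simple signal such a knowledge base could start from is rerun history. The sketch below uses a common heuristic rather than anything specific to the Maintainer: if the same test both passed and failed against the same commit, the code did not change between those runs, so the test is treated as flaky. The `TestRun` shape and `findFlakyTests` are hypothetical names used only for this illustration.

```typescript
type Outcome = "pass" | "fail";

interface TestRun {
  testId: string;
  commit: string; // revision the test ran against
  outcome: Outcome;
}

// Heuristic: a test is flaky if one commit produced both a pass and a fail,
// because the code under test was identical in those runs.
function findFlakyTests(runs: TestRun[]): string[] {
  const outcomes = new Map<string, Map<string, Set<Outcome>>>();
  for (const run of runs) {
    const byCommit = outcomes.get(run.testId) ?? new Map<string, Set<Outcome>>();
    const seen = byCommit.get(run.commit) ?? new Set<Outcome>();
    seen.add(run.outcome);
    byCommit.set(run.commit, seen);
    outcomes.set(run.testId, byCommit);
  }

  const flaky: string[] = [];
  for (const [testId, byCommit] of outcomes) {
    const mixed = [...byCommit.values()].some((seen) => seen.size > 1);
    if (mixed) flaky.push(testId);
  }
  return flaky.sort();
}

const recentRuns: TestRun[] = [
  { testId: "checkout.e2e", commit: "a1", outcome: "fail" },
  { testId: "checkout.e2e", commit: "a1", outcome: "pass" }, // rerun on the same commit
  { testId: "orders.api", commit: "a1", outcome: "pass" },
  { testId: "orders.api", commit: "b2", outcome: "fail" },   // consistent per commit
  { testId: "orders.api", commit: "b2", outcome: "fail" },
];

// Only checkout.e2e is reported; orders.api fails consistently on b2.
console.log(findFlakyTests(recentRuns));
```

A test that fails consistently on a new commit, like `orders.api` here, is left for the genuine-bug or intentional-change paths instead.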

Most impressively, the Maintainer can handle intentional changes. When application behavior changes legitimately (like a UI update or API response format change), it automatically updates the affected tests to match the new behavior. This is possible because it understands the semantic intent of each test, not just its mechanical steps.

But these capabilities require deep understanding of the codebase itself. Therefore, being codebase-first is essential to the entire architecture.

Codebase-First: The Foundation of Autonomous CI/CD

The agents in Zero-Bugs CI/CD aren't just tools that run alongside your code; they're deeply integrated with your codebase at a semantic level. This codebase-first approach is what enables their advanced capabilities.

Semantic Understanding vs. Syntactic Analysis

Traditional CI/CD tools operate primarily at the syntactic level. They detect file changes, run commands against those files, and report pass/fail results. They have no understanding of what the code actually does or how components relate to each other.

Codebase-first agents, by contrast, build a semantic model of your application. They understand:

- Function signatures and their implicit contracts

- State management patterns and data flow

- UI component hierarchies and their visual properties

- API contracts and response shapes

- Database schema and relationships

- Business logic rules and domain constraints

This semantic understanding is what enables the Planner to accurately predict the impact radius of changes, the Automator to create precisely the right state for each test, and the Maintainer to automatically update tests when application behavior changes intentionally.

A truly autonomous CI/CD system doesn't just run your tests; it understands your code at the same level as your best engineers. Only then can it maintain itself with the same care and precision.

Predictive Maintenance

When your CI/CD system has semantic understanding of your codebase, it can perform predictive maintenance. The agents don't just react to failures; they proactively identify potential issues before they occur.

For example, when a developer proposes a change to a core utility function, the Planner can analyze all call sites and predict which tests might be affected. It can even suggest modifications to those tests as part of the same pull request, preventing failures before they happen.

Similarly, the Maintainer can detect patterns that often lead to flaky tests and suggest improvements before those tests start failing intermittently. If a test relies on timing assumptions that are close to breaking, the Maintainer can proactively refactor it to use more reliable synchronization methods.
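As a rough illustration of "timing assumptions that are close to breaking", the following sketch flags tests whose observed wait times are creeping toward their configured timeout. It is a minimal example under stated assumptions: the `WaitObservation` shape, the 95th percentile, and the 80% headroom threshold are all hypothetical choices, not values taken from any particular tool.

```typescript
interface WaitObservation {
  testId: string;
  timeoutMs: number;    // limit configured in the test
  observedMs: number[]; // how long the awaited condition took in recent runs
}

// Nearest-rank percentile of a non-empty list.
function percentile(values: number[], p: number): number {
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Flag tests whose slow runs already consume most of the allowed timeout.
function findTimingRisks(observations: WaitObservation[], headroomRatio = 0.8): string[] {
  return observations
    .filter((o) => o.observedMs.length > 0)
    .filter((o) => percentile(o.observedMs, 95) >= o.timeoutMs * headroomRatio)
    .map((o) => o.testId);
}

const waitHistory: WaitObservation[] = [
  { testId: "search.results.appear", timeoutMs: 1000, observedMs: [400, 450, 910, 500, 480] },
  { testId: "login.redirect", timeoutMs: 5000, observedMs: [300, 350, 320] },
];

// Only search.results.appear is flagged: one run took 910 ms of a 1000 ms budget.
console.log(findTimingRisks(waitHistory));
```

A flagged test has not failed yet, which is the point: it is a candidate for refactoring toward condition-based synchronization before it starts failing intermittently.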

But this level of understanding requires continuous learning. Therefore, the agents must observe, analyze, and improve their own performance over time.

Implementing Zero-Bugs CI/CD: The Path Forward

Moving to Zero-Bugs CI/CD is not a single tool purchase or a weekend migration. It's a transformative journey that touches every aspect of your development process. Here's a realistic roadmap for teams looking to embark on this journey:

Phase 1: Codebase Instrumentation

The first step is instrumenting your codebase to enable semantic understanding. This involves:

- Implementing standardized code patterns that agents can recognize

- Adding metadata to critical components and interfaces

- Building a comprehensive dependency graph

- Collecting historical test execution data

This phase focuses on making your code more "readable" to autonomous agents without compromising its readability for human developers.

Phase 2: Intelligent Test Selection

Once your codebase is instrumented, you can begin implementing the Planner agent for intelligent test selection. Start with simple static analysis and gradually add more sophisticated dependency tracking.
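A "simple static analysis" starting point could be as small as the sketch below, which extracts static ES module import specifiers with a regular expression. It is deliberately naive and rests on several assumptions: it ignores dynamic `import()` calls, `require`, and re-exports, it can be fooled by import-like text inside comments or strings, and it does not resolve relative specifiers to actual file paths. A real implementation would more likely use a proper parser. The function names are illustrative.

```typescript
// Extract specifiers from static ES module imports, e.g. `import x from "./x"`.
function extractImports(source: string): string[] {
  const importPattern = /^\s*import\s+(?:[^'"]*?\s+from\s+)?['"]([^'"]+)['"]/gm;
  const specifiers: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = importPattern.exec(source)) !== null) {
    specifiers.push(match[1]);
  }
  return specifiers;
}

// Build a file -> imported specifiers map, keeping only relative imports
// (package imports point outside the codebase).
function buildImportGraph(files: Record<string, string>): Record<string, string[]> {
  const graph: Record<string, string[]> = {};
  for (const [filePath, source] of Object.entries(files)) {
    graph[filePath] = extractImports(source).filter((specifier) => specifier.startsWith("."));
  }
  return graph;
}

const exampleSource = [
  'import React from "react";',
  'import { formatMoney } from "../core/money";',
  'import "./checkout.css";',
  'const lazy = import("./lazy");', // dynamic import: not detected by this sketch
].join("\n");

console.log(extractImports(exampleSource));
console.log(buildImportGraph({ "ui/CheckoutForm.tsx": exampleSource }));
```

Even this crude graph is enough to begin measuring how many tests a typical change could skip, before investing in deeper dependency tracking.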

The key metrics for this phase are:

- Reduction in average CI execution time

- Maintenance of test coverage despite running fewer tests per change

- No increase in false negatives (bugs missed because a relevant test was not selected)

Phase 3: State Management Automation

With intelligent test selection in place, tackle the database state dilemma by implementing the Automator agent. Begin with targeted test suites and gradually expand to your entire test infrastructure.

This phase often requires significant changes to how tests define their state requirements, moving from imperative setup code to declarative state specifications that the Automator can interpret and implement efficiently.

Phase 4: Self-Healing and Maintenance

The final phase introduces the Maintainer agent for diagnosis and self-healing. This is the most advanced component and requires the foundation of the previous phases.

Start with simple flakiness detection and gradually add more sophisticated diagnostic and repair capabilities. The ultimate goal is to automatically update tests when application behavior changes intentionally, eliminating the maintenance tax entirely.

But even with a phased approach, this transformation requires significant engineering investment. Therefore, it's crucial to measure the return on that investment continuously.

Measuring Success: The Zero-Bugs Metrics

The ultimate measure of Zero-Bugs CI/CD is how little your team thinks about the CI/CD pipeline itself. When the system is truly autonomous, it fades into the background, enabling engineers to focus entirely on product development.

More concretely, success can be measured across several dimensions:

Time to Feedback: How quickly do developers learn whether their changes have introduced regressions? Zero-Bugs CI/CD should reduce this from hours to minutes through intelligent test selection.

Maintenance Overhead: How much engineering time is spent maintaining tests vs. developing features? In a mature Zero-Bugs system, this ratio should approach zero as tests maintain themselves.

False Positive Rate: What percentage of test failures stem from infrastructure issues or flaky tests rather than genuine bugs? Zero-Bugs CI/CD should drive this close to 0%, ensuring that every test failure represents a real issue.

Time to Diagnosis: When a test does fail, how long does it take to determine the root cause? The detailed diagnostic information from the Maintainer agent should reduce this from hours to seconds.

Release Confidence: How confident are teams in their releases? The ultimate goal is "deploy anytime" confidence, where engineers trust the pipeline so completely that they're willing to release at any time without manual verification.
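Some of these dimensions can be computed directly from pipeline records. The sketch below is one possible way to do it, assuming a hypothetical `PipelineRun` record in which each failed run has already been labeled with its cause. The field names, the choice of the median, and the sample data are illustrative assumptions.

```typescript
type FailureCause = "genuine_bug" | "flaky_test" | "infrastructure";

interface PipelineRun {
  feedbackMinutes: number;     // time from push to reported result
  failureCause?: FailureCause; // absent when the run passed
}

function median(values: number[]): number {
  if (values.length === 0) throw new Error("median of an empty list is undefined");
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 0 ? (sorted[mid - 1] + sorted[mid]) / 2 : sorted[mid];
}

function summarizeRuns(runs: PipelineRun[]): {
  medianFeedbackMinutes: number;
  genuineFailureShare: number | null;
} {
  const failures = runs.filter((run) => run.failureCause !== undefined);
  const genuine = failures.filter((run) => run.failureCause === "genuine_bug");
  return {
    medianFeedbackMinutes: median(runs.map((run) => run.feedbackMinutes)),
    // Share of red builds caused by a real defect; the remainder is noise.
    genuineFailureShare: failures.length === 0 ? null : genuine.length / failures.length,
  };
}

const lastWeek: PipelineRun[] = [
  { feedbackMinutes: 12 },
  { feedbackMinutes: 8, failureCause: "genuine_bug" },
  { feedbackMinutes: 45, failureCause: "flaky_test" },
  { feedbackMinutes: 10 },
];

// Median feedback is 11 minutes; half of the failures were genuine bugs.
console.log(summarizeRuns(lastWeek));
```

Tracking these two numbers over time gives a simple before-and-after comparison for each phase of the rollout.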

Conclusion: Beyond Zero-Bugs

Zero-Bugs CI/CD represents more than just an improvement in testing infrastructure. It's a fundamental shift in how we think about software quality and development workflows.

By automating the maintenance of our automation, we break free from the paradox that has trapped engineering teams for years. We move from brittle, linear "And Then" pipelines to resilient, adaptive "But, Therefore" systems that understand our code and maintain themselves.

The benefits extend far beyond just catching bugs. When tests are self-maintaining, engineers add more of them. When the pipeline provides rapid, reliable feedback, deployment frequency increases. When diagnosis is automatic, resolution time decreases dramatically.

But achieving this vision requires a commitment to being codebase-first. Therefore, the journey to Zero-Bugs CI/CD is also a journey toward deeper understanding of our own systems.

The future of CI/CD isn't just faster or more comprehensive testing. It's autonomous systems that understand our code as well as we do, freeing engineers to focus on what matters most: building products that delight users. Zero-Bugs CI/CD isn't the end goal; it's the foundation that enables truly continuous, confident delivery at any scale.