Compliance automation is the use of software to continuously monitor, test, and generate evidence for regulatory controls, replacing manual collection and point-in-time audits. It spans three distinct layers: GRC platforms (Vanta, Drata, Sprinto) that monitor infrastructure configuration and collect policy artifacts; security scanning tools (Snyk, Wiz) that detect vulnerabilities in code and cloud environments; and test automation tools that execute application-level test cases and generate timestamped evidence that controls are working in production. Most engineering teams at Series A startups pursuing SOC2 or enterprise deals have the first two layers. Almost none have the third, and the third is the layer that actually proves your application behaves correctly under the specific conditions each framework requires.

You set up Vanta. You connected your AWS account, your GitHub org, your identity provider. The dashboard turned green. Your security scanner runs on every pull request. You feel compliant.

Then the auditor asks: can you show me a test result proving that a user with read-only permissions was actually denied a write operation in your production application last Tuesday? Not a policy document. Not a configuration screenshot. A test result, timestamped, from a real run against your live system.

Most teams cannot produce that. Not because they are non-compliant, but because no one told them that compliance automation has a third layer, and that layer is theirs to own.

What "Compliance Automation" Actually Means

Search for compliance automation tools and you will find Vanta, Drata, Sprinto, Secureframe, and Wiz dominating every result. They are excellent tools. They also do not solve the same problem your engineering team owns.

GRC platforms work by integrating with your cloud providers, identity systems, and SaaS tools. They pull configuration state: is MFA enabled on your AWS account, does your GitHub organization require branch protection, do your S3 buckets block public access. This is monitoring compliance. It answers: "is the control configured?"

Security scanners work by analyzing code and infrastructure for known vulnerabilities. Snyk finds an outdated dependency with a known CVE. Wiz finds an overly permissive IAM role. This is vulnerability-detection compliance. It answers: "do we have exploitable weaknesses?"

Neither answers the question auditors increasingly care about: "does your application enforce the controls you claim it does, end-to-end, at the application layer, consistently across every deploy?"

SOC2 CC8.1 is explicit: change management procedures must include "testing of changes prior to implementation." HIPAA 164.312(a)(1) requires access controls that actually limit ePHI to authorized users, which a configuration check alone cannot demonstrate. PCI DSS v4.0 requires that changes be tested (Requirement 6.5) and that software be built to prevent common attacks such as injection and broken access control (Requirement 6.2.4). These requirements are not satisfied by a dashboard showing green checkmarks. They are satisfied by test results.

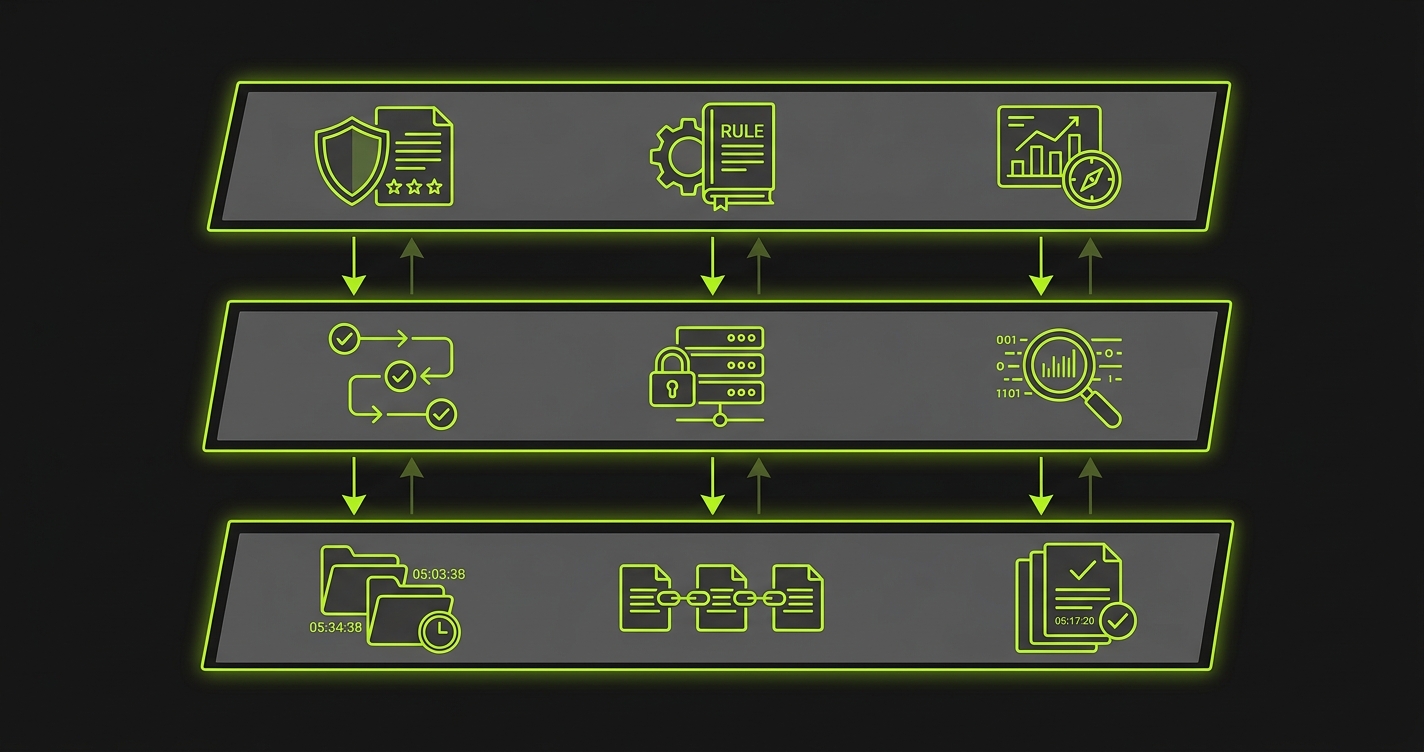

The Three Layers of Complete Compliance Automation

A team that has automated compliance fully is running all three layers. The GRC platform handles continuous monitoring of infrastructure configuration. The security scanner handles vulnerability detection in code and dependencies. The test automation layer handles behavioral verification of the application itself.

Each layer catches different failures. The GRC platform will alert you if someone disables MFA on a production account. The security scanner will catch a vulnerable dependency before it ships. The test automation layer is the only one that catches a regression where a recent deploy accidentally removed the authorization check on a sensitive endpoint. That regression would pass all security scans, show green in the GRC dashboard, and fail silently in production until a penetration tester or attacker found it.

The layers are also not substitutes for each other. A team that has Vanta and no test automation has automated the paperwork. A team that has Playwright tests but no GRC platform has automated application verification but not infrastructure compliance. You need all three, and the third is the one most teams are missing.

Autonoma supports compliance automation by continuously verifying that critical user flows work correctly on every deployment — providing the behavioral test evidence that auditors require.

Framework Controls That Require Test Evidence

The reason test automation belongs inside compliance automation is not abstract. The specific requirements of the major frameworks leave no ambiguity.

SOC2 (Trust Services Criteria)

For teams pursuing SOC2 automation specifically, the Trust Services Criteria are explicit about what testing evidence is required.

CC6.3 requires evidence that logical access controls function correctly. "Registered access credentials are not transferred to others." A configuration screenshot cannot show this; a test that attempts credential sharing and confirms the system rejects it can.

CC8.1 requires change management validation. Changes to production systems must be tested before deployment. A test run in CI/CD, attached to every pull request, is what satisfies this. A manual QA step that someone may or may not have completed before each deploy does not.
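One way to make the CI run itself the CC8.1 evidence is a merge gate that reads the test report and fails the check unless every test passed. The sketch below assumes a JUnit-style XML report, a format pytest writes with `--junitxml` and Playwright Test can emit through its `junit` reporter; `summarize_junit`, `exit_code`, and the sample report are hypothetical names for illustration.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass


@dataclass
class RunSummary:
    total: int
    failed: int
    skipped: int

    @property
    def passed(self) -> bool:
        # Treat an empty run as a failure: zero tests is not evidence of testing.
        return self.total > 0 and self.failed == 0


def summarize_junit(xml_text: str) -> RunSummary:
    """Count test cases, failures/errors, and skips in a JUnit-style report."""
    root = ET.fromstring(xml_text)
    total = failed = skipped = 0
    # iter() searches the whole tree, so both a <testsuites> wrapper and a
    # bare <testsuite> root work.
    for case in root.iter("testcase"):
        total += 1
        if case.find("failure") is not None or case.find("error") is not None:
            failed += 1
        elif case.find("skipped") is not None:
            skipped += 1
    return RunSummary(total, failed, skipped)


def exit_code(summary: RunSummary) -> int:
    # CI systems treat a non-zero exit code as a failed check, blocking the merge.
    return 0 if summary.passed else 1


SAMPLE_REPORT = """<testsuites>
  <testsuite name="access-control" tests="2">
    <testcase classname="authz" name="test_read_only_user_cannot_post"/>
    <testcase classname="authz" name="test_editor_can_post"/>
  </testsuite>
</testsuites>"""

if __name__ == "__main__":
    summary = summarize_junit(SAMPLE_REPORT)
    print(summary, "merge allowed:", summary.passed)
```

In a pipeline step you would read the real report file and call `sys.exit(exit_code(...))`. Archiving that same XML file alongside the merged pull request is what turns the gate's input into retrievable evidence.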

CC7.2 requires monitoring for unauthorized access. Tests that verify unauthorized requests return the correct response codes are direct evidence of this control.

HIPAA (Security Rule)

The HIPAA Security Rule is equally specific about technical verification.

164.312(a)(1) requires that covered entities implement "technical policies and procedures for electronic information systems that maintain electronic protected health information to allow access only to those persons or software programs that have been granted access rights." A test that attempts PHI access with an unauthorized credential and verifies the rejection is the evidence of this policy functioning.

164.312(b) requires audit controls: "hardware, software, and/or procedural mechanisms that record and examine activity in information systems that contain or use ePHI." A test that performs a PHI-touching action and then verifies the audit log contains a correct entry is evidence that the audit control works, not just that the logging configuration is enabled.

PCI DSS (v4.0)

PCI DSS v4.0 requires application-level testing as part of its secure development lifecycle.

Requirement 6.5 requires that all payment application changes are tested. "Test changes using one of the following approaches: Testing in a pre-production environment... or Testing in a production environment." Running automated tests on every deploy is the most defensible implementation of this requirement.

Requirement 6.2.4 requires preventing common application vulnerabilities including injection attacks, broken authentication, and insecure access control. An automated test suite that covers these attack vectors, run in CI on every change, is direct evidence of 6.2.4 compliance.
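Covering these vectors means writing tests that actually send attack payloads. As a minimal sketch of the injection case only, the example below uses Python's built-in `sqlite3` as a stand-in database; the `cards` table and the `cards_for_owner` lookup are hypothetical. The test would fail if someone later rewrote the query with string formatting, which is exactly the regression it exists to catch.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # In-memory stand-in for a real payments database (hypothetical schema).
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE cards (owner TEXT, last4 TEXT)")
    conn.executemany(
        "INSERT INTO cards VALUES (?, ?)",
        [("alice", "4242"), ("bob", "1881")],
    )
    return conn


def cards_for_owner(conn: sqlite3.Connection, owner: str) -> list[tuple[str, str]]:
    # Parameterized query: the driver binds `owner` as data, never as SQL.
    return conn.execute(
        "SELECT owner, last4 FROM cards WHERE owner = ?", (owner,)
    ).fetchall()


INJECTION_PAYLOADS = ["' OR '1'='1", "alice' --", "x'; DROP TABLE cards; --"]


def test_lookup_resists_sql_injection() -> None:
    """Evidence for PCI DSS 6.2.4 (injection): payloads must not widen results."""
    conn = make_db()
    assert cards_for_owner(conn, "alice") == [("alice", "4242")]
    for payload in INJECTION_PAYLOADS:
        assert cards_for_owner(conn, payload) == [], payload
    # The table must survive the DROP TABLE payload.
    assert len(conn.execute("SELECT * FROM cards").fetchall()) == 2


if __name__ == "__main__":
    test_lookup_resists_sql_injection()
    print("injection checks passed")
```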

Beyond the Big Three

ISO 27001 Annex A.14.2 requires testing during development and acceptance. GDPR Article 32 requires "a process for regularly testing, assessing and evaluating the effectiveness of technical and organisational measures." SOX IT General Controls require change management testing for financial systems. The pattern is consistent: every major compliance framework expects test evidence, not just configuration monitoring.

What this means in practice: every test run against your application is generating compliance evidence. The question is whether you are capturing it, labeling it against the specific control it satisfies, and making it retrievable when an auditor asks.

What Manual Compliance Costs You

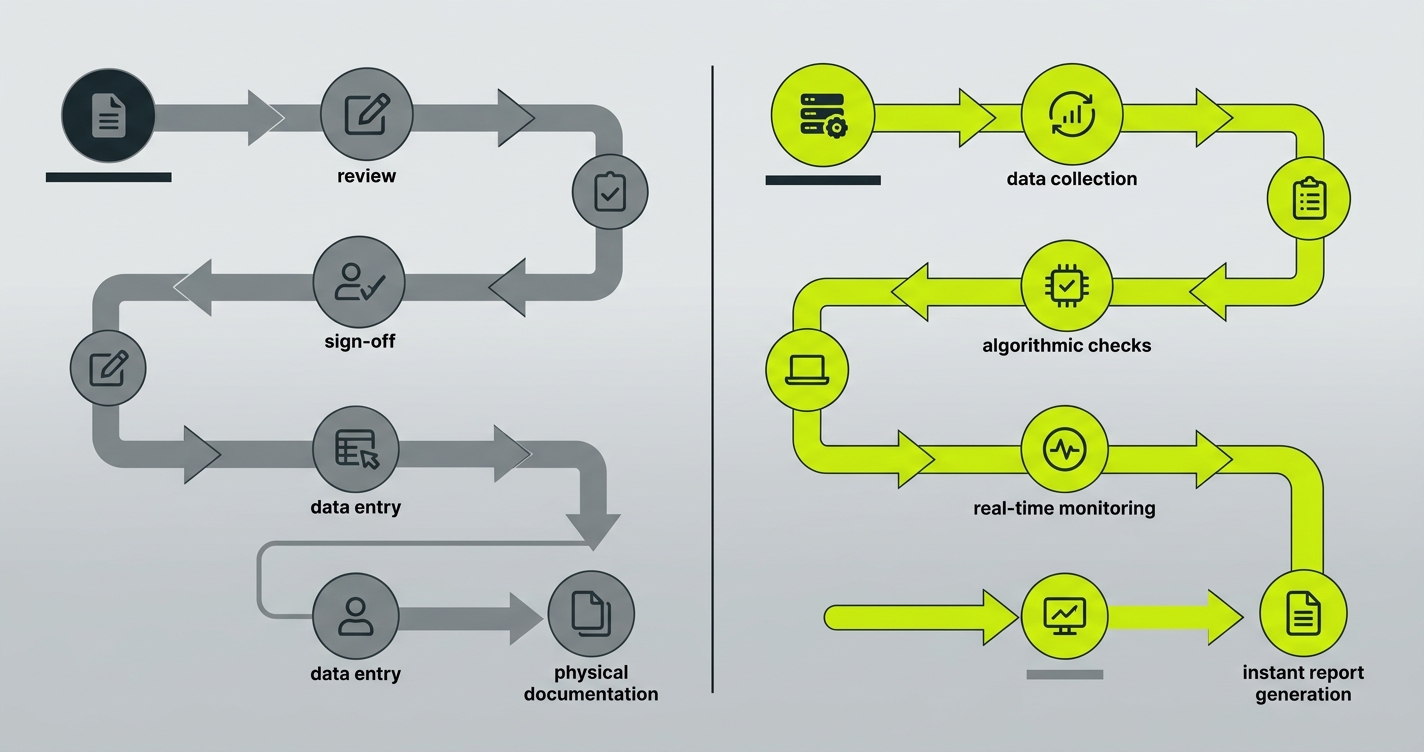

The manual alternative is familiar to any engineering leader who has gone through a SOC2 audit without proper automation.

Six weeks before the audit window opens, someone from the engineering team is pulled off product work to gather evidence. They compile screenshots of test results from the last quarter. They pull deployment logs. They write narrative descriptions of the access control policy. They chase down engineers who made changes and ask them to document what testing they did. Some of those engineers have left the company. Some of the changes do not have clear test records.

The auditor reviews the evidence and finds gaps. The relevant deployment logs are incomplete. There is no test evidence for three specific changes that touched authentication. The team scrambles to close the gaps with additional documentation. The audit takes two weeks longer than planned.

Then the enterprise deal closes, and six months later the next audit starts the same way.

At a company of 20 engineers, this process typically consumes 60-80 engineering hours per audit cycle, plus unpredictable disruption to sprint commitments. The cost compounds because the evidence collection is always retroactive: if a control was broken for three months and no one tested it, there is no evidence to collect.

Continuous compliance automation flips this entirely. Tests run on every deploy. Evidence is generated, timestamped, and labeled automatically. When the audit window opens, the evidence is already there.

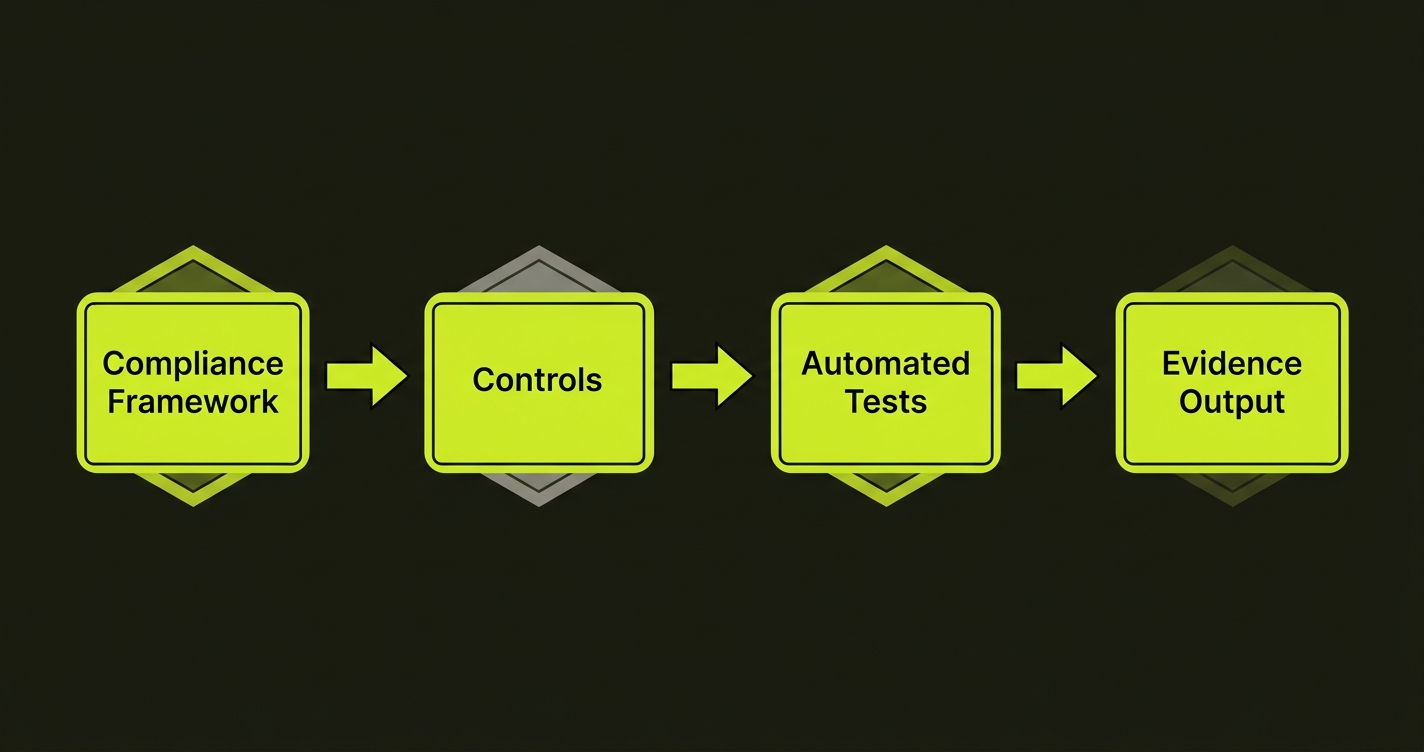

Building the Test Automation Layer for Compliance

The implementation starts with mapping your framework requirements to specific test cases. For each control in your compliance framework, there should be at least one automated test that verifies it.
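A minimal sketch of that mapping, assuming it lives as plain data next to the suite; `CONTROL_MAP`, the test names, and `uncovered_controls` are hypothetical. Running a check like this in CI makes "at least one automated test per control" something that fails loudly instead of drifting.

```python
from collections.abc import Iterable

# Hypothetical control-to-test map. Control IDs are the ones discussed above;
# test names are placeholders for tests in your own suite.
CONTROL_MAP: dict[str, list[str]] = {
    "SOC2 CC6.3": ["test_read_only_user_cannot_post", "test_editor_can_post"],
    "SOC2 CC7.2": ["test_anonymous_request_rejected"],
    "HIPAA 164.312(a)(1)": ["test_unauthorized_phi_read_denied"],
    "HIPAA 164.312(b)": [],  # audit-log test not written yet
    "PCI DSS 6.2.4": ["test_lookup_resists_sql_injection"],
}


def uncovered_controls(control_map: dict[str, list[str]],
                       existing_tests: Iterable[str]) -> list[str]:
    """Controls with no mapped test that still exists in the suite.

    Checking against the live test list also catches stale mappings, e.g. a
    test that was renamed or deleted during a refactor.
    """
    existing = set(existing_tests)
    return sorted(
        control_id
        for control_id, tests in control_map.items()
        if not existing.intersection(tests)
    )


if __name__ == "__main__":
    suite = ["test_read_only_user_cannot_post", "test_lookup_resists_sql_injection"]
    print(uncovered_controls(CONTROL_MAP, suite))
```

The list of existing tests could come from your runner's collection step (for example, pytest's `--collect-only`).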

For access control requirements (SOC2 CC6.3, HIPAA 164.312(a)(1)), write tests that attempt operations with insufficient permissions and verify the correct rejection response. A read-only user attempting a POST to a data-modification endpoint should receive a 403. Test that. Run it in CI on every deploy. Tag it against the control it satisfies.
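A minimal sketch of the read-only-user test, assuming a hypothetical `handle` function that stands in for a real HTTP call to your deployed application; the roles, endpoint, and status mapping are illustrative. The positive-case test matters as much as the denial: without it, a change that denies every request would still pass.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical role model: the HTTP methods each role may use.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "read_only": {"GET"},
    "editor": {"GET", "POST", "PUT"},
}


@dataclass
class Response:
    status: int


def handle(method: str, path: str, role: Optional[str]) -> Response:
    """Stand-in for your application's request pipeline (authn, then authz)."""
    if role is None:
        return Response(401)  # no valid credentials presented
    if method not in ROLE_PERMISSIONS.get(role, set()):
        return Response(403)  # authenticated, but not permitted
    return Response(201 if method == "POST" else 200)


def test_read_only_user_cannot_post() -> None:  # controls: SOC2 CC6.3, HIPAA 164.312(a)(1)
    assert handle("POST", "/api/records", role="read_only").status == 403


def test_editor_can_post() -> None:  # positive case: the check does not deny everything
    assert handle("POST", "/api/records", role="editor").status == 201


def test_anonymous_request_rejected() -> None:  # controls: SOC2 CC7.2
    assert handle("POST", "/api/records", role=None).status == 401


if __name__ == "__main__":
    for test in (test_read_only_user_cannot_post, test_editor_can_post,
                 test_anonymous_request_rejected):
        test()
    print("access-control checks passed")
```

Against a real system, `handle` would be replaced by an HTTP client call carrying each role's credentials. With pytest, a custom marker such as `@pytest.mark.control("SOC2 CC6.3")`, registered in your pytest configuration, is one way to carry the control tag instead of a comment.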

For change management requirements (SOC2 CC8.1, PCI DSS 6.5), the compliance evidence is the test run itself. If every pull request must pass a full test suite before merging, each merged PR has an attached test report proving it was validated. Store these reports. Link them to deployments. This is your CC8.1 evidence.
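A minimal sketch of the record that links one report to one deploy, assuming your pipeline already exposes the report file, the merged commit SHA, and a deploy identifier; `evidence_record` and its field names are hypothetical. Hashing the report makes later edits to the stored artifact detectable, provided the hash is kept somewhere the report's editors cannot also rewrite.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(report_bytes: bytes, commit_sha: str, deploy_id: str,
                    controls: list[str], passed: bool) -> dict:
    """Tie one test report to one production deploy."""
    return {
        "deploy_id": deploy_id,
        "commit_sha": commit_sha,
        # Fingerprint of the exact report file an auditor will be shown.
        "report_sha256": hashlib.sha256(report_bytes).hexdigest(),
        "controls": sorted(controls),
        "passed": passed,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    record = evidence_record(
        report_bytes=b"<testsuites>...</testsuites>",  # normally read from the report file
        commit_sha="abc123",  # hypothetical values for illustration
        deploy_id="deploy-42",
        controls=["SOC2 CC8.1", "SOC2 CC6.3"],
        passed=True,
    )
    print(json.dumps(record, indent=2))
```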

For audit control requirements (HIPAA 164.312(b)), write tests that perform a sensitive operation and then query the audit log to verify the entry was created correctly. This is not just configuration testing. It is behavioral testing of the audit system itself.
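A minimal sketch of that behavioral check, assuming a hypothetical in-memory `PatientService` whose audit log is a plain list; against a real system the test would perform the action through the application and then query wherever audit entries are actually written.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    actor: str
    action: str
    record_id: str
    at: datetime


@dataclass
class PatientService:
    """Hypothetical service that must log every ePHI access."""
    records: dict[str, str]
    audit_log: list[AuditEntry] = field(default_factory=list)

    def read_record(self, actor: str, record_id: str) -> str:
        value = self.records[record_id]
        self.audit_log.append(
            AuditEntry(actor, "read", record_id, datetime.now(timezone.utc))
        )
        return value


def test_phi_read_creates_audit_entry() -> None:
    """Behavioral evidence for HIPAA 164.312(b): the action is checked, not the config."""
    svc = PatientService(records={"pt-1": "dx: hypertension"})
    before = datetime.now(timezone.utc)
    svc.read_record(actor="dr.lee", record_id="pt-1")
    matches = [e for e in svc.audit_log
               if e.actor == "dr.lee" and e.action == "read" and e.record_id == "pt-1"]
    # Exactly one: missing entries and duplicate logging are both failures.
    assert len(matches) == 1, "expected exactly one audit entry for the read"
    assert matches[0].at >= before, "audit timestamp predates the action"


if __name__ == "__main__":
    test_phi_read_creates_audit_entry()
    print("audit-log check passed")
```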

The tooling question is secondary to the mapping question. You need to know which controls require test evidence, write those tests, and automate the evidence collection. The specific test runner is less important than the discipline of treating test reports as compliance artifacts.

This is where Autonoma changes the calculus. We built our platform so that connecting your codebase automatically generates test coverage across your application flows, including the access control and audit patterns that compliance frameworks require. The Planner agent reads your routes and identifies which endpoints handle sensitive operations. The Automator agent runs tests against those endpoints with varying permission levels. Every run produces timestamped reports that map directly to framework controls. The Maintainer agent keeps those tests passing as your code changes, so the compliance evidence does not silently stop being generated after a refactor breaks the tests and no one notices.

The reason this matters for compliance specifically: self-healing tests mean your evidence generation is continuous. A test suite that breaks and sits red for six weeks because no one had time to fix it produces a six-week gap in your compliance record. Self-healing eliminates that gap.
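To make that gap measurable rather than anecdotal, here is a sketch that computes the longest run of days with no passing result for a given control. The input (dates of passing runs, pulled from wherever your evidence is stored) and `longest_evidence_gap` are hypothetical.

```python
from datetime import date, timedelta


def longest_evidence_gap(passing_run_dates: list[date], start: date, end: date) -> int:
    """Longest stretch of days in [start, end] with no passing run for a control.

    A test that sits red, or is deleted, produces no passing runs, so its
    silence shows up here as a gap even if nothing alerted at the time.
    """
    days = sorted({d for d in passing_run_dates if start <= d <= end})
    if not days:
        return (end - start).days + 1
    gaps = [(days[0] - start).days, (end - days[-1]).days]
    # Days strictly between consecutive passing runs.
    gaps += [(later - earlier).days - 1 for earlier, later in zip(days, days[1:])]
    return max(gaps)


if __name__ == "__main__":
    quarter_start, quarter_end = date(2024, 1, 1), date(2024, 3, 31)
    all_days = [quarter_start + timedelta(days=i) for i in range(91)]
    # Daily passing runs, except the suite sat red for six weeks from Feb 1.
    red_from, red_until = date(2024, 2, 1), date(2024, 3, 14)
    runs = [d for d in all_days if not red_from <= d < red_until]
    print(longest_evidence_gap(runs, quarter_start, quarter_end), "days without evidence")
```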

Compliance Automation Tools: What Each Layer Covers

For teams assembling a complete compliance automation stack, the layers break down cleanly.

| Layer | Tools | What It Proves | Framework Controls | Limitation |
|---|---|---|---|---|
| GRC & Monitoring | Vanta, Drata, Sprinto, Secureframe | Controls are configured correctly | SOC2 CC6.1, CC7.1; ISO 27001 A.8 | Cannot verify application behavior |
| Security Scanning | Snyk, Wiz, Veracode, Checkmarx | No known vulnerabilities exist | SOC2 CC7.1; PCI DSS 6.2; ISO 27001 A.12.6 | Cannot verify controls are enforced |
| Test Automation | Autonoma, Playwright, Cypress | Application enforces controls correctly | SOC2 CC8.1, CC6.3; HIPAA 164.312; PCI DSS 6.5 | Requires continuous maintenance |

GRC and Monitoring Layer

Vanta, Drata, Sprinto, and Secureframe are the main options. They connect to AWS, GCP, Azure, GitHub, Okta, and similar systems to monitor configuration state and collect policy artifacts. Vanta has the most integrations and the broadest market presence. Drata has strong customization for complex multi-framework compliance. Sprinto is well-suited for early-stage startups. None of them generate application behavioral test evidence.

Security Scanning Layer

Snyk for dependency and code vulnerability scanning. Wiz for cloud infrastructure risk. Veracode and Checkmarx for enterprise application security scanning. These tools are important and complement the other layers, but they test for vulnerabilities, not for whether your application correctly enforces controls.

Test Automation Layer

Autonoma for codebase-first test generation with self-healing and continuous evidence generation. Playwright and Cypress for teams building their own test suites. The critical distinction is whether your tests are mapped to specific compliance controls and whether evidence from each run is captured as an audit artifact. Writing tests is not the same as automating compliance. The tests need to run continuously, produce labeled evidence, and be retrievable.

For the complete list of compliance automation tools by category, see our guide to compliance automation tools.

Fitting Test Automation Into a Compliance Program

The practical integration depends on where you are in the compliance journey.

Compliance Automation for Startups

If you are starting a SOC2 readiness program from scratch, the sequence is: establish your GRC platform first (it sets the scope and identifies which controls apply), add security scanning in CI (it catches the vulnerabilities that would fail a penetration test), then layer in compliance-mapped test automation (it generates the behavioral evidence your framework requires). For startups, the most common path is to adopt a GRC platform during SOC2 readiness, then add test automation before the first audit window opens.

Adding the Test Layer to an Existing Program

If you are already in a SOC2 program and using Vanta or Drata, the gap is almost certainly in the test evidence layer. Your GRC platform is collecting configuration evidence. Your developers are writing code. The question is whether every deploy is generating test reports that satisfy CC8.1, CC6.3, and CC7.2 requirements, and whether those reports are stored where your auditor can find them.

The connection between continuous testing and continuous compliance is the subject of our continuous compliance guide. For teams specifically working on implementing this from code and infrastructure, the compliance as code approach gives a framework for treating compliance controls as first-class software artifacts rather than external obligations.

The Audit Readiness Test

Here is a practical check of whether your current compliance automation covers the test evidence layer.

Pull your last 30 deploys to production. For each one, ask: is there a timestamped test report attached to that deploy that includes tests covering your access control flows? Is there a test report showing that your audit logging functions correctly end-to-end, not just that the log configuration is enabled? Is there a test result that an auditor could review to confirm changes were validated before deployment, satisfying CC8.1?
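The same check can be scripted. A minimal sketch, assuming you can export recent deploys (oldest first) with whether a test report is attached, whether it passed, and which controls its tests are tagged with; `Deploy`, `REQUIRED_CONTROLS`, and `readiness_gaps` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical set of controls every production deploy should carry evidence for.
REQUIRED_CONTROLS = {"SOC2 CC6.3", "SOC2 CC8.1", "HIPAA 164.312(b)"}


@dataclass
class Deploy:
    deploy_id: str
    report_passed: Optional[bool]  # None means no test report is attached
    controls_covered: set[str] = field(default_factory=set)


def readiness_gaps(deploys: list[Deploy], required: set[str],
                   window: int = 30) -> dict[str, list[str]]:
    """Map each deploy in the most recent `window` to why it lacks evidence."""
    gaps: dict[str, list[str]] = {}
    for d in deploys[-window:]:
        reasons = []
        if d.report_passed is None:
            reasons.append("no test report attached")
        elif not d.report_passed:
            reasons.append("test report shows failures")
        missing = required - d.controls_covered
        if missing:
            reasons.append("no tests for: " + ", ".join(sorted(missing)))
        if reasons:
            gaps[d.deploy_id] = reasons
    return gaps


if __name__ == "__main__":
    history = [
        Deploy("d1", True, set(REQUIRED_CONTROLS)),
        Deploy("d2", None),
        Deploy("d3", True, {"SOC2 CC8.1"}),
    ]
    for deploy_id, reasons in readiness_gaps(history, REQUIRED_CONTROLS).items():
        print(deploy_id, "->", "; ".join(reasons))
```

An empty result means every deploy in the window has a passing, control-tagged report attached.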

If the answer is no for most of those deploys, you have a gap. Not a gap in your GRC platform, not a gap in your security scanning, but a gap in the one layer that proves your application does what you say it does.

This is what we mean when we say test automation is compliance automation. Not a stretch, not a marketing claim. The frameworks say so explicitly. CC8.1 says changes must be tested. 164.312(a)(1) says access must be limited to authorized users, and only a test shows that limit holding in the running application. PCI DSS 6.5 says changes to system components must be tested. The test reports are the evidence. Generating them continuously is compliance automation.

Compliance automation is the use of software to continuously monitor, test, and generate evidence for regulatory controls, replacing manual collection and point-in-time audits. It spans three layers: GRC platforms that monitor infrastructure configuration (Vanta, Drata, Sprinto), security scanners that detect code and cloud vulnerabilities (Snyk, Wiz), and test automation tools that verify your application enforces controls correctly and generate timestamped audit evidence. Most teams automate the first two layers and miss the third.

The best compliance automation tools cover all three layers. For GRC and monitoring: Vanta, Drata, Sprinto, and Secureframe. For security scanning: Snyk, Wiz, and Veracode. For test automation and evidence generation: Autonoma connects to your codebase and generates tests that map to specific framework controls, producing timestamped audit evidence on every deploy. The critical gap for most teams is the test automation layer, which is the only layer that proves your application enforces its controls correctly.

SOC2 automation works by continuously collecting evidence across the Trust Services Criteria. GRC platforms collect configuration evidence for controls like CC6.1 (logical access) and CC7.2 (monitoring). Security scanners satisfy controls related to vulnerability management. Test automation satisfies CC8.1 (change management testing), CC6.3 (access control verification), and CC7.2 (unauthorized access monitoring). Autonoma generates test evidence for CC8.1 and CC6.3 automatically by reading your codebase and running behavioral tests on every deploy.

Monitoring compliance means continuously checking that controls are configured correctly: MFA is enabled, encryption is on, branch protection is active. Tools like Vanta and Drata do this well. Proving compliance means demonstrating that controls function correctly at the application layer: an unauthorized user attempting access receives a rejection, a PHI-touching action creates the correct audit log entry, a code change was tested before deployment. Test automation generates this proof. Auditors increasingly require both, but most teams only have monitoring.

SOC2 CC8.1 explicitly requires that changes be tested prior to implementation, and CC6.3 requires evidence of access control enforcement. HIPAA 164.312(a)(1) requires technical controls that limit ePHI access to authorized users, and 164.312(b) requires audit controls that record activity; tests are how you show both actually work. PCI DSS v4.0 Requirement 6.5 requires that changes be tested, and Requirement 6.2.4 requires protection against common application vulnerabilities such as injection. All three frameworks treat test reports as compliance evidence, not just configuration dashboards.

Playwright and Cypress can generate compliance evidence if your tests cover the specific controls your framework requires and if you store test reports as labeled audit artifacts. The limitation is maintenance: test suites that are not continuously maintained break silently, creating gaps in your compliance record. Autonoma's self-healing capability keeps tests passing as your code changes, ensuring evidence generation is continuous rather than dependent on someone fixing broken tests.