Continuous compliance is the practice of verifying regulatory controls on every code change rather than once a year, generating audit evidence automatically as a byproduct of your normal CI/CD pipeline. It has two distinct forms that most teams conflate: continuous compliance monitoring (dashboards like Vanta and Drata checking that infrastructure controls are configured) and continuous compliance through testing (automated test suites running on every PR and deploy, producing timestamped evidence that controls actually work at the application layer). The monitoring layer is table stakes for any Series A+ company. The testing layer is what separates teams that sail through enterprise security reviews from teams that scramble to collect evidence six weeks before an audit.

Your compliance dashboard is green. Every AWS control is passing. MFA is enforced across the org. Your S3 buckets are locked down. The GRC platform shows zero critical findings.

Then a prospect's security team sends a vendor questionnaire. One question: "Can you provide evidence that your application-level access controls were tested against your most recent production deployment?" Not a policy document. Not a configuration screenshot. Evidence of a test.

This is the gap that annual audits, and most continuous compliance monitoring tools, never close.

The Word "Continuous" Is Doing a Lot of Work

Search "continuous compliance" today and you will find two very different things described with the same phrase.

The first meaning, and the one that dominates the search results, is continuous compliance monitoring: infrastructure controls checked constantly, policy drift detected in real time, compliance posture visible in a dashboard. Vanta, Drata, and Sprinto built their businesses on this. It is genuinely useful, and it is genuinely not enough.

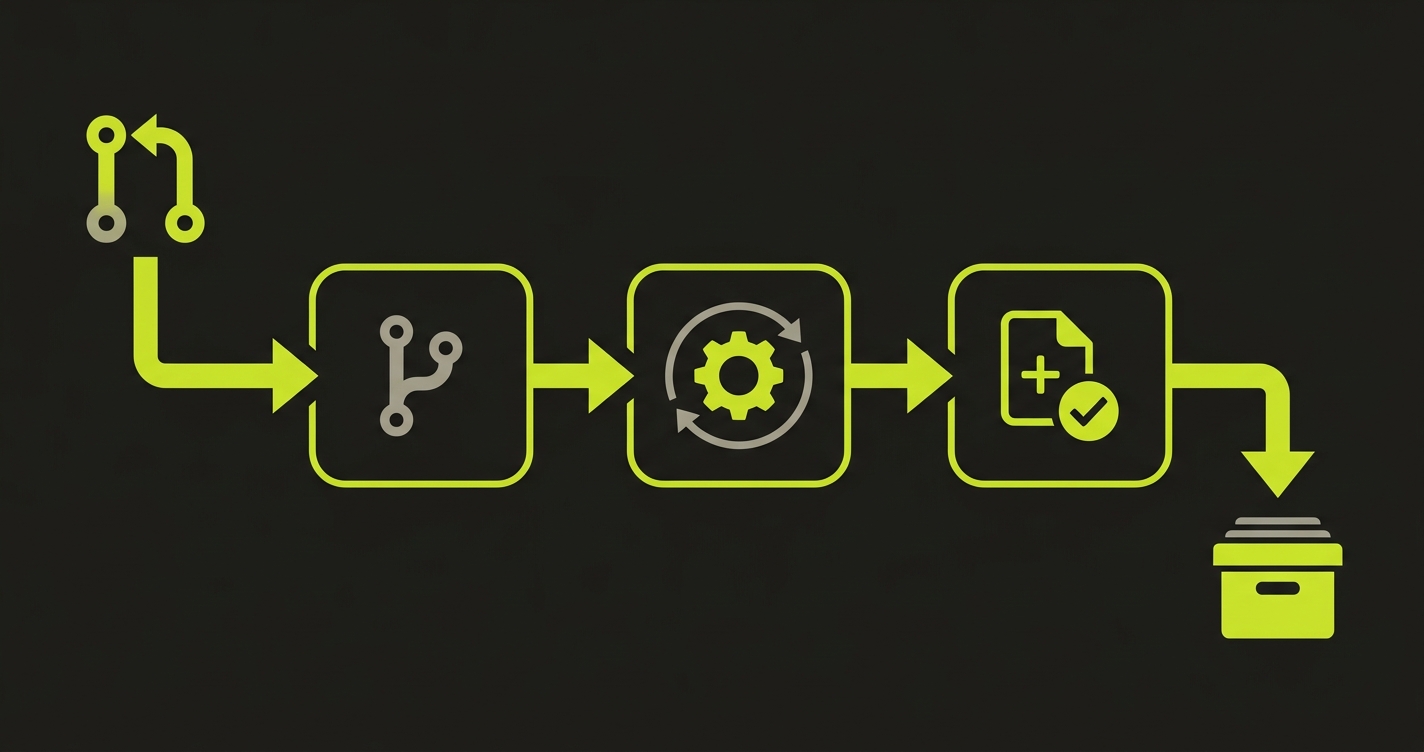

The second meaning is almost absent from the conversation: continuous testing as the mechanism for continuous compliance. Every PR triggers a test suite that covers compliance-relevant flows. Every deploy produces a test report. That report is the audit artifact. The compliance evidence is not collected after the fact; it is generated on every merge.

These two forms of continuous compliance are not alternatives. They answer different auditor questions. Monitoring answers: "Is the control configured?" Testing answers: "Does the control work?" The frameworks that matter to enterprise deals (SOC2, HIPAA, PCI DSS) require both answers. Most teams can only supply the first.

Why Annual Audits Cannot Work in 2026

The annual audit model was designed for a world where software changed slowly. A system deployed in January and not touched until December had one audit-relevant surface to evaluate. Teams could collect evidence once, document it, and move on.

Modern engineering teams ship to production dozens of times per week. Each deploy could introduce a regression in an access control path, an audit logging function, or a permission boundary. An annual audit of that environment is not evaluating your compliance posture. It is evaluating whatever state your system happened to be in when the audit window opened.

The math is stark. A team shipping 20 times per week produces roughly 1,000 deploys between annual audit windows. Each one is a potential compliance regression that no one checks. An auditor reviewing that period is hoping the spot-check they perform lands on a representative sample. Often it does not.
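To make the arithmetic and the spot-check risk concrete, here is a minimal sketch. The sample size of 25 deploys and the count of 5 regressed deploys are illustrative assumptions, not figures from any framework or auditor.

```python
from math import comb

DEPLOYS_PER_WEEK = 20      # figure used in the text
WEEKS_PER_YEAR = 52

def deploys_per_audit_window(per_week: int = DEPLOYS_PER_WEEK) -> int:
    """Deploys shipped between two annual audits."""
    return per_week * WEEKS_PER_YEAR

def chance_sample_misses(total: int, regressed: int, sample_size: int) -> float:
    """Probability that a random sample of deploys contains no regressed deploy.

    Hypergeometric case: the whole sample is drawn from the clean deploys.
    """
    return comb(total - regressed, sample_size) / comb(total, sample_size)

total = deploys_per_audit_window()   # 20 * 52 = 1040
# Assumed inputs: 5 regressed deploys, auditor samples 25 deploys at random.
miss = chance_sample_misses(total, regressed=5, sample_size=25)
print(f"{total} deploys per window; chance the sample misses every regression: {miss:.1%}")
```

Under these assumed inputs the sample misses all five regressions close to nine times out of ten, which is the sense in which a spot-check is a hope rather than a control.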

SOC2 Type II reports already implicitly acknowledge this by requiring evidence over a period of time rather than at a point in time. The auditor is not asking "are you compliant today?" They are asking "were you compliant consistently over the last 6 to 12 months?" An annual audit cannot answer that question. A continuous testing pipeline can.

Autonoma strengthens continuous compliance by running E2E tests on every PR, providing automated evidence that critical workflows function correctly after every code change.

What Continuous Compliance Monitoring Actually Proves

To be fair to Vanta, Drata, and the monitoring-first approach: these tools do something genuinely important. They prove that your controls are configured.

When an auditor asks whether MFA is enforced across your production environment, a GRC platform provides continuously updated evidence. When they ask whether your S3 buckets have public access blocked, the dashboard has the answer. When they ask whether your engineers are completing security training, the integrated compliance platform tracks completion rates.

Configuration evidence is real evidence. It satisfies a large portion of the Trust Services Criteria. No serious compliance program skips it.

The limit shows up in the controls that require behavioral proof. SOC2 CC8.1 requires that changes be tested prior to implementation. A dashboard cannot satisfy this. The evidence is a test report attached to the deploy. SOC2 CC6.3 requires evidence that access controls function correctly. A policy document and an Okta configuration do not satisfy this. A test run showing that an unauthorized request to a sensitive endpoint returned a 403 does.

HIPAA 164.312(a)(1) goes further: covered entities must implement "technical policies and procedures for electronic information systems that maintain electronic protected health information to allow access only to those persons or software programs that have been granted access rights." The word "implement" means the control must work, not just be documented.

This is the specific territory where compliance automation through testing picks up where monitoring leaves off.

Continuous Compliance Through Testing: What It Actually Looks Like

The implementation is less exotic than the phrase suggests. It is, at its core, a discipline applied to your existing CI/CD pipeline, following the same principles that drive security automation more broadly.

Every pull request runs a test suite. That test suite includes tests covering compliance-relevant behaviors: access control boundaries, audit log creation, data handling paths, authentication flows. The test runner produces a report. The report is stored as a build artifact, timestamped, linked to the commit SHA and the deploy. That report is your audit evidence.
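One way to picture the stored artifact is as a small structured record that sits next to the raw report. The following is a sketch only: the `EvidenceRecord` fields, the `build_evidence` helper, and the sample commit and deploy identifiers are all hypothetical, chosen to mirror the properties the paragraph above names (timestamped, linked to the commit SHA and the deploy).

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

@dataclass(frozen=True)
class EvidenceRecord:
    commit_sha: str
    deploy_id: str
    generated_at: str     # ISO 8601 timestamp in UTC
    report_sha256: str    # digest of the raw report, so later edits are detectable
    passed: int
    failed: int

def build_evidence(report_bytes: bytes, commit_sha: str, deploy_id: str,
                   passed: int, failed: int) -> EvidenceRecord:
    """Wrap a raw test report in a record that ties it to a commit and a deploy."""
    return EvidenceRecord(
        commit_sha=commit_sha,
        deploy_id=deploy_id,
        generated_at=datetime.now(timezone.utc).isoformat(),
        report_sha256=hashlib.sha256(report_bytes).hexdigest(),
        passed=passed,
        failed=failed,
    )

# Stand-in for the report file your test runner writes.
report = b'<testsuite tests="42" failures="0"/>'
record = build_evidence(report, commit_sha="3f9c2ab", deploy_id="deploy-0142",
                        passed=42, failed=0)
print(json.dumps(asdict(record), indent=2))
```

Storing a digest alongside the report is a design choice rather than a framework requirement; it lets anyone confirm months later that the report on file is the one the pipeline produced.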

When the auditor asks for evidence that changes were tested before deployment (CC8.1), you pull the test reports for the relevant deploys. When they ask whether access controls were verified (CC6.3), you show the test run that attempted an unauthorized operation and logged the rejection. When they ask about PHI access controls (HIPAA 164.312(a)(1)), you show the test that verified a user without PHI access received the correct response.

The tests do not need to be exotic. The compliance-relevant ones fall into clear categories.

Access control tests attempt operations with insufficient permissions and verify the rejection. A read-only user trying a write operation should receive a 403. A user from one tenant trying to access another tenant's data should receive a 403. These patterns overlap significantly with API security testing practices. They cover the bulk of CC6.3 and 164.312(a)(1).
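A minimal sketch of the two access control cases described above. The `update_document` handler, the `User` and `Document` types, and the role names are hypothetical stand-ins; in a real suite the same assertions would run against HTTP responses from a deployed environment.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class User:
    id: str
    tenant: str
    role: str             # "admin" or "read_only" in this sketch

@dataclass(frozen=True)
class Document:
    id: str
    tenant: str

def update_document(user: User, doc: Document) -> int:
    """Hypothetical handler; returns the HTTP status it would respond with."""
    if user.tenant != doc.tenant:
        return 403        # cross-tenant access is rejected
    if user.role != "admin":
        return 403        # read-only users cannot write
    return 200

DOC = Document(id="doc-1", tenant="acme")

def test_read_only_user_cannot_write():
    viewer = User(id="u-1", tenant="acme", role="read_only")
    assert update_document(viewer, DOC) == 403

def test_other_tenant_cannot_access():
    outsider = User(id="u-2", tenant="globex", role="admin")
    assert update_document(outsider, DOC) == 403

def test_authorized_user_can_write():
    # Positive case, so the suite fails if the handler simply rejects everyone.
    admin = User(id="u-3", tenant="acme", role="admin")
    assert update_document(admin, DOC) == 200

for test in (test_read_only_user_cannot_write,
             test_other_tenant_cannot_access,
             test_authorized_user_can_write):
    test()
    print(f"PASS {test.__name__}")
```

The positive case matters as evidence too: a rejection test alone would also pass against an endpoint that is broken for every user.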

Audit log tests perform a sensitive action and then verify the audit log contains the expected entry. Not just that logging is enabled, but that the specific action, actor, and timestamp appear in the log. This satisfies HIPAA 164.312(b) and SOC2 CC7.2.

Change validation tests are simply the full test suite passing on every PR before merge. The test report IS the CC8.1 evidence. No additional documentation needed.

Data boundary tests verify that sensitive data is not exposed in responses or logs where it should not appear. A user lookup endpoint should not return password hashes. A search endpoint should not return data from other tenants. These fall under the broader umbrella of web application security testing and cover PCI DSS 6.2.4 and GDPR Article 32.
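A data boundary test can be sketched as a scan of the response body for fields that should never leave the server, plus a tenant check on search results. The deny-list, the `USERS` fixture, and the `search_users` endpoint below are illustrative assumptions.

```python
SENSITIVE_KEYS = {"password_hash", "ssn", "card_number"}   # assumed deny-list

# In-memory stand-in for a user table; field names are illustrative.
USERS = [
    {"id": "u-1", "tenant": "acme", "email": "ana@acme.test", "password_hash": "x1"},
    {"id": "u-2", "tenant": "globex", "email": "bo@globex.test", "password_hash": "x2"},
]

def public_view(user: dict) -> dict:
    """Serialize a user without deny-listed fields."""
    return {k: v for k, v in user.items() if k not in SENSITIVE_KEYS}

def search_users(tenant: str, query: str) -> list:
    """Hypothetical search endpoint, scoped to the caller's tenant."""
    return [public_view(u) for u in USERS
            if u["tenant"] == tenant and query in u["email"]]

def find_sensitive_keys(payload, found=None) -> set:
    """Recursively collect deny-listed keys anywhere in a response body."""
    found = set() if found is None else found
    if isinstance(payload, dict):
        for key, value in payload.items():
            if key in SENSITIVE_KEYS:
                found.add(key)
            find_sensitive_keys(value, found)
    elif isinstance(payload, list):
        for item in payload:
            find_sensitive_keys(item, found)
    return found

def test_search_hides_password_hashes():
    assert find_sensitive_keys(search_users("acme", "@")) == set()

def test_search_stays_inside_tenant():
    results = search_users("acme", "@")
    assert results, "expected at least one result for the caller's tenant"
    assert all(r["tenant"] == "acme" for r in results)

test_search_hides_password_hashes()
test_search_stays_inside_tenant()
print("PASS data boundary tests")
```

Scanning recursively is deliberate: leaks tend to appear in nested objects added later, not in the top-level fields someone reviewed when the endpoint was written.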

The Evidence Gap: What Monitoring Misses

The gap is visible most clearly when you run through a realistic audit scenario.

An enterprise prospect's security team requests SOC2 Type II documentation. You provide your Vanta dashboard export. They review the access management, encryption, and monitoring controls. Then they ask for something specific: evidence of testing on the three deploys that touched your authentication system last quarter.

With monitoring-only compliance automation, you go looking for evidence that was never generated. There may be deployment logs. There may be Jira tickets with QA notes. There may be individual engineers who remember what they tested. None of this is the timestamped, structured test report that satisfies CC8.1.

With continuous compliance through testing, you pull the test reports for those three deploys from your artifact store. Each report shows which tests ran, which passed, when it ran, against which commit. The CC8.1 evidence is complete.
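Answering that request is a lookup once the reports are indexed. The sketch below assumes a hypothetical index in which each report records the files changed in its deploy; the entries, paths, and dates are made-up sample data, and a real index would be read from your artifact store.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ReportEntry:
    deploy_id: str
    commit_sha: str
    ran_at: str           # ISO 8601 timestamp of the test run
    passed: int
    failed: int
    changed_paths: tuple  # files changed in the deploy

# Illustrative sample data only.
ARTIFACT_INDEX = [
    ReportEntry("deploy-0101", "a1b2c3d", "2025-01-14T10:02:00+00:00", 212, 0,
                ("src/auth/session.py",)),
    ReportEntry("deploy-0102", "b2c3d4e", "2025-01-15T16:40:00+00:00", 212, 0,
                ("src/billing/invoice.py",)),
    ReportEntry("deploy-0117", "c3d4e5f", "2025-02-03T09:15:00+00:00", 215, 0,
                ("src/auth/mfa.py", "src/ui/login.py")),
    ReportEntry("deploy-0130", "d4e5f6a", "2025-03-21T13:27:00+00:00", 219, 0,
                ("src/auth/tokens.py",)),
]

def reports_touching(index, path_prefix: str) -> list:
    """Return the reports for deploys that changed any file under path_prefix."""
    return [entry for entry in index
            if any(path.startswith(path_prefix) for path in entry.changed_paths)]

auth_reports = reports_touching(ARTIFACT_INDEX, "src/auth/")
for r in auth_reports:
    print(f"{r.deploy_id} {r.commit_sha} {r.ran_at} passed={r.passed} failed={r.failed}")
```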

The enterprise prospect's security review is a proxy for the auditor review. Startups pursuing enterprise deals encounter this question months before their formal SOC2 audit. The teams that have continuous testing in place answer it immediately. The teams that have monitoring-only compliance scramble.

Compliance as Code: The Architecture That Makes This Sustainable

Treating compliance controls as software artifacts, rather than external obligations to satisfy periodically, is what makes continuous compliance through testing sustainable at scale.

In practice, this means compliance controls are defined in code. The tests that verify them live in the same repository as the application code. They run in the same CI pipeline. They are reviewed in the same pull request process. When a new endpoint is added that handles sensitive data, the pull request that adds it also adds the compliance test for it.

This approach, sometimes called compliance as code, means that compliance coverage evolves with the codebase rather than lagging behind it. The alternative is a compliance test suite maintained separately, manually updated when the application changes, and inevitably falling out of sync.
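One possible shape for this, sketched under assumptions: a decorator tags each test with the control it supports and the route it exercises, and a check flags sensitive routes that have no test. The `verifies` decorator, the route list, and the test names are hypothetical and do not describe any specific tool's API.

```python
from collections import defaultdict

CONTROL_TESTS = defaultdict(list)   # control ID -> names of tests that support it
ROUTE_TESTS = defaultdict(list)     # route -> names of tests that exercise it

def verifies(control: str, route: str):
    """Register a test as evidence for a control on a given route."""
    def decorator(fn):
        CONTROL_TESTS[control].append(fn.__name__)
        ROUTE_TESTS[route].append(fn.__name__)
        return fn
    return decorator

# Routes the team has marked as handling sensitive data (illustrative).
SENSITIVE_ROUTES = ["POST /documents", "GET /patients/{id}", "GET /users/search"]

@verifies("CC6.3", "POST /documents")
def test_read_only_user_cannot_create_document():
    pass  # test body omitted in this sketch

@verifies("HIPAA 164.312(a)(1)", "GET /patients/{id}")
def test_user_without_phi_access_is_rejected():
    pass  # test body omitted in this sketch

def uncovered_routes(routes) -> list:
    """Sensitive routes with no registered compliance test."""
    return [route for route in routes if not ROUTE_TESTS.get(route)]

missing = uncovered_routes(SENSITIVE_ROUTES)
print("Uncovered sensitive routes:", missing)
```

Run as a CI step that fails when `missing` is non-empty, a check like this would make the pull request that adds a sensitive endpoint responsible for adding its test.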

Self-healing tests, meaning tests that are repaired automatically when the application changes, matter here specifically for compliance. A test suite that breaks after a refactor and sits red for two weeks creates a two-week gap in your compliance record. If those two weeks include a deploy that introduced a regression in an access control path, the gap means no one noticed.

Autonoma approaches this from the codebase side. Our Planner agent reads your routes and identifies which endpoints handle sensitive operations. It maps those to the compliance test cases that need to cover them. The Maintainer agent keeps those tests passing as the code changes. The result is continuous compliance evidence generation without a human manually maintaining the test suite.

For teams building out their automated compliance testing capability, the architecture question is: where do your compliance test artifacts live, who owns keeping them passing, and how are they linked to your deploys? If the answer to any of those is "unclear," the evidence generation is not continuous regardless of how green your monitoring dashboard looks.

Compliance Automation Stack: Monitoring and Testing Combined

The two approaches are complementary, not competing. A complete continuous compliance program uses both.

| Approach | Tools | Evidence Generated | Controls Satisfied | Limitation |
|---|---|---|---|---|
| Continuous Monitoring | Vanta, Drata, Sprinto | Configuration state, policy artifacts, training records | SOC2 CC6.1, CC7.1; ISO 27001 A.8; most infrastructure controls | Cannot prove application-layer behavior |
| Continuous Testing | Autonoma, Playwright, Cypress + CI/CD | Timestamped test reports per deploy, mapped to specific controls | SOC2 CC8.1, CC6.3; HIPAA 164.312; PCI DSS 6.5; GDPR Art. 32 | Requires tests to cover compliance scenarios; needs maintenance |

The sequence for teams building this out: establish the GRC platform first, because it maps the controls and collects the infrastructure evidence. Add security scanning in CI, because it handles the vulnerability-level requirements. Then add compliance-mapped test automation, because it is the layer that closes the behavioral verification gap.

Teams already running a GRC platform typically find the test layer is the missing piece. Continuous compliance monitoring is in place. The security scanning is in place. The evidence that a specific deploy was tested against compliance-relevant scenarios is the gap.

The Audit Automation Readiness Check

A quick benchmark: pull your last ten production deploys. For each one, answer three questions.

First, is there a timestamped test report linked to that deploy? Not a test run from sometime that week, but a specific report for that specific deploy, showing which tests ran and passed before the code shipped.

Second, do the tests in that report cover access control verification? Is there at least one test that attempted an unauthorized operation and verified the rejection?

Third, is the report stored somewhere retrievable? Not in a CI log that expires after 30 days, but in artifact storage where an auditor can access it 12 months from now?

If the answer to all three is yes for most of your deploys, you have continuous compliance through testing. If the answer is no or uncertain, you have monitoring-only compliance, which is valuable but incomplete.
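The three questions can be scripted against your own deploy history. This is a sketch with hypothetical field names and made-up sample data; the 365-day retention floor is one reading of "12 months from now", and "most" is interpreted here as more than half.

```python
from dataclasses import dataclass

MIN_RETENTION_DAYS = 365   # assumed reading of "12 months from now"

@dataclass(frozen=True)
class DeployEvidence:
    deploy_id: str
    has_linked_report: bool        # question 1: report for this specific deploy
    has_access_control_test: bool  # question 2: an unauthorized attempt was tested
    retention_days: int            # question 3: how long the report stays retrievable

def is_ready(e: DeployEvidence) -> bool:
    """A deploy counts only if all three questions are answered yes."""
    return (e.has_linked_report
            and e.has_access_control_test
            and e.retention_days >= MIN_RETENTION_DAYS)

def readiness(deploys) -> float:
    """Fraction of deploys with complete, retrievable test evidence."""
    return sum(is_ready(d) for d in deploys) / len(deploys)

# Illustrative sample: ten deploys, three with some form of evidence gap.
last_ten = (
    [DeployEvidence(f"deploy-{i}", True, True, 400) for i in range(7)]
    + [DeployEvidence("deploy-7", True, True, 30),     # report expires with CI logs
       DeployEvidence("deploy-8", True, False, 400),   # no access control test
       DeployEvidence("deploy-9", False, False, 0)]    # no report at all
)

score = readiness(last_ten)
print(f"{score:.0%} of deploys have complete evidence")
print("continuous compliance through testing" if score > 0.5 else "monitoring-only")
```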

The gap between monitoring and testing is the gap between feeling compliant and being able to prove it. Annual audits obscure this gap because they only look at a snapshot. Continuous compliance through testing eliminates the gap by making proof generation automatic.

Frequently Asked Questions

Continuous compliance is the practice of verifying regulatory controls on every code change rather than once a year. It has two forms: continuous compliance monitoring, where tools like Vanta and Drata check that infrastructure controls are configured correctly in real time, and continuous compliance through testing, where automated test suites run on every PR and deploy and produce timestamped audit evidence that controls work at the application layer. A complete program uses both. Most teams only have the monitoring layer.

Continuous compliance monitoring is the use of GRC platforms like Vanta, Drata, and Sprinto to check infrastructure controls continuously rather than at annual audit points. These tools integrate with AWS, GitHub, Okta, and similar systems to verify that MFA is enforced, encryption is active, and access policies are correctly configured. They generate configuration evidence that satisfies infrastructure-level compliance controls. They cannot verify that your application actually enforces controls at the behavioral layer, which is where continuous compliance testing fills the gap.

Continuous compliance monitoring checks that controls are configured correctly using tools like Vanta or Drata. Continuous compliance testing verifies that those controls actually work at the application level by running automated tests on every deploy. Monitoring answers: 'Is MFA enabled?' Testing answers: 'Does the application actually reject an unauthorized access attempt?' SOC2 CC8.1, HIPAA 164.312(a)(1), and PCI DSS 6.5 require the second kind of evidence. Most teams only produce the first.

Implement continuous compliance through testing by: mapping your framework controls to specific test scenarios (access control tests, audit log tests, data boundary tests); writing those tests in your existing test suite; running the full suite on every PR and deploy in CI/CD; storing test reports as labeled artifacts linked to each deploy. Autonoma automates this by reading your codebase to identify compliance-relevant endpoints, generating tests for them, and keeping those tests passing as the code changes. Playwright and Cypress also work if tests are written and maintained to cover compliance scenarios.

Annual audits were designed for systems that changed slowly. Modern engineering teams ship to production dozens of times per week, and each deploy is a potential compliance regression. An annual audit evaluates a snapshot, not a continuous posture. SOC2 Type II already acknowledges this by requiring evidence over a period rather than at a point in time, which is exactly what continuous compliance testing provides: a test report for every deploy, across the entire audit period.

SOC2 CC8.1 explicitly requires changes to be tested before deployment. CC6.3 requires evidence that logical access controls function correctly. HIPAA 164.312(a)(1) requires technical verification of access control enforcement, not just configuration documentation. HIPAA 164.312(b) requires mechanisms that record and examine activity in systems containing electronic protected health information, which audit log tests can demonstrate. PCI DSS 6.5 requires application-level testing on every change. GDPR Article 32 requires a process for regularly testing the effectiveness of security measures. Test reports from continuous testing provide direct evidence for all of these.

The best continuous compliance stack combines monitoring and testing tools. For continuous monitoring: Vanta, Drata, Sprinto, and Secureframe handle infrastructure configuration evidence. For continuous testing: Autonoma connects to your codebase and generates compliance-mapped tests that run on every deploy, producing timestamped audit evidence automatically. Playwright and Cypress are options for teams building their own test suites. Security scanning tools like Snyk and Wiz complement both layers by covering vulnerability detection.