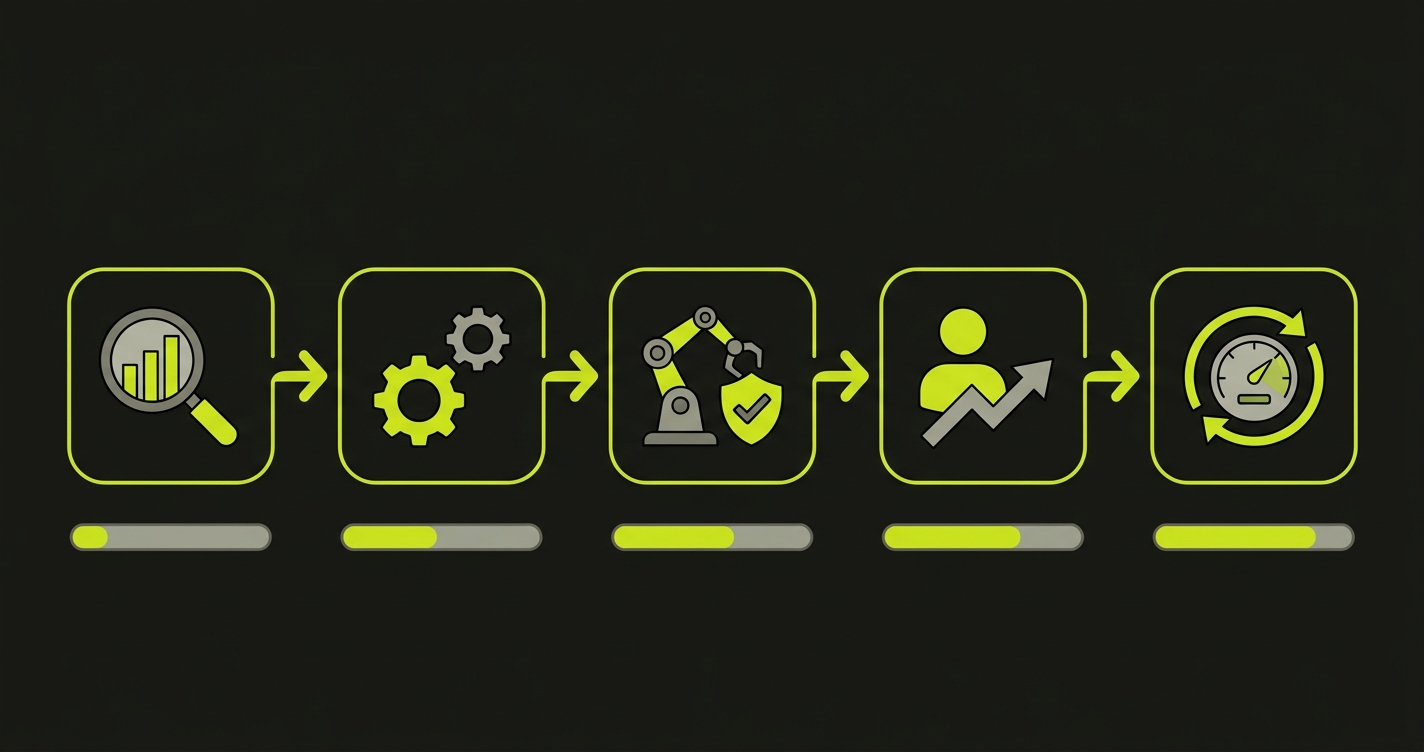

QA process improvement is the practice of restructuring how software quality is planned, executed, and measured -- adjusting team ratios, tooling, and workflows to keep defect escape rates low as development velocity changes. In 2026, the trigger for most teams is AI: coding assistants have accelerated developer output faster than any previous productivity shift, which means the QA function that was sized for one speed now operates at another. The teams that adapt quickly restructure across five phases: Assess, Automate Foundations, Deploy AI Testing, Retrain Team, and Optimize. Those that don't become the bottleneck.

Your developers got faster. Significantly faster. Maybe it was Cursor, maybe Copilot, maybe a full vibe coding workflow -- but at some point in the last eighteen months, your dev team's output roughly doubled. Feature requests that took three sprints now take one. Backend scaffolding that used to consume a week gets generated in an afternoon.

And then someone looked at your QA pipeline and did the math.

The testers didn't double. The test suite didn't double. The cycle time didn't halve. What happened instead is that the queue grew. Tickets marked "ready for QA" started stacking up. Releases slipped not because development was slow, but because quality validation couldn't absorb the throughput. The bottleneck shifted -- quietly, without fanfare -- from engineering to QA.

This is the defining QA process improvement challenge of 2026. Not "how do we test AI-generated code" (though that matters, and we cover it in our shift-left testing guide). The more urgent question is structural: how do you rebuild a QA function that was designed for a slower era of software delivery, under pressure, without breaking what's already working?

It's the question that led us to build Autonoma -- an AI testing platform where agents read your codebase and generate end-to-end tests automatically, so coverage scales with your development velocity instead of against it. This article is the playbook we wish we'd had before we started building.

Why the Old QA-to-Dev Ratios Are Broken

Before rebuilding anything, it helps to understand what changed and why the traditional benchmarks no longer hold.

The pre-automation rule of thumb was one QA engineer for every two to three developers. Manual testing was the primary quality gate, which meant QA time scaled linearly with developer output. If you hired three more developers, you needed one or two more QA engineers. The model was stable, if expensive.

Scripted test automation shifted that ratio. With Selenium and later Playwright, a skilled QA engineer could cover the regression work of several manual testers. Teams running mature automation suites operated comfortably at a 1:4 to 1:6 ratio -- one QA engineer for every four to six developers.

| Era | QA-to-Dev Ratio | Primary Quality Gate |
|---|---|---|
| Pre-automation | 1:2 to 1:3 | Manual testing |
| Post-automation | 1:4 to 1:6 | Scripted E2E + manual exploratory |
| AI-native testing | 1:8 to 1:12 | AI test generation + exploratory |
| Aspirational (full AI) | 1:15+ | AI testing + quality architecture |

AI coding tools don't just change the ratio -- they break the underlying assumption the ratio is based on. Scripted automation assumed that QA engineers' time was the scarce resource. Generate tests faster and you extend the leverage. But AI coding tools accelerate the code being written, not the tests covering it. The surface area grows faster than your team can script against it.

The deeper problem: AI-generated code has a different failure profile. It tends to be syntactically correct, passes linting, and often looks reasonable on inspection. But it fails at the integration layer -- the place where two modules interact, where a flow depends on state that wasn't considered, where an edge case the developer never thought about gets triggered by a real user. This is the cost that doesn't show up until production, and it's precisely what a bottlenecked QA team can't catch in time.

The QA Transformation Playbook: Five Phases

What follows is a five-phase approach to restructuring a QA function under live-fire conditions -- while the team keeps shipping, the sprint doesn't pause, and the backlog doesn't wait. Each phase builds on the previous one. Skipping phases is possible, but the failure modes are predictable.

Phase 1: Assess (Weeks 1-2)

You can't fix a bottleneck you haven't measured. QA process improvement starts with honest diagnostics. It should produce three numbers and one root cause.

The first number is your current QA-to-dev ratio. Count QA engineers (including anyone whose primary job involves testing, triage, or quality processes), count developers, and divide. If each QA engineer supports six or more developers (1:6 or thinner), the bottleneck is likely already visible. If you're at 1:8 or thinner without AI testing infrastructure, it's critical.
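As a minimal sketch of this first number, the ratio can be computed and checked against the thresholds above. The function name, the `has_ai_testing` flag, and the status labels are illustrative assumptions; only the 1:6 and 1:8 cut-offs come from the text.

```python
def qa_ratio_status(qa_engineers: int, developers: int,
                    has_ai_testing: bool = False) -> tuple[float, str]:
    """Return (developers per QA engineer, rough status label)."""
    if qa_engineers <= 0:
        raise ValueError("need at least one QA engineer to compute a ratio")

    devs_per_qa = developers / qa_engineers

    # Thresholds taken from the article; labels are hypothetical.
    if devs_per_qa >= 8 and not has_ai_testing:
        status = "critical"
    elif devs_per_qa >= 6:
        status = "bottleneck likely visible"
    else:
        status = "no ratio-based warning"
    return devs_per_qa, status


# Example: 3 QA engineers supporting 24 developers, no AI testing in place.
print(qa_ratio_status(3, 24))
```

The ratio alone is a coarse signal, which is why it is read alongside the coverage and cycle-time numbers below.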

The second number is your test coverage percentage -- but the honest version. Don't count tests that run in isolation from the actual application. Count only tests that exercise real user flows against a deployed environment. A suite of 3,000 unit tests covering individual functions doesn't tell you whether checkout works. What percentage of your critical user paths has an automated test that runs on every deploy?
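One way to compute the honest version is to treat coverage as set membership: list the critical user paths, list the flows that have an automated test running on every deploy, and take the overlap. The flow names below are made up for illustration, and the function is a sketch rather than a prescribed method.

```python
def critical_flow_coverage(critical_flows: set[str],
                           flows_tested_on_deploy: set[str]) -> float:
    """Percentage of critical user flows with an automated test on every deploy."""
    if not critical_flows:
        raise ValueError("define at least one critical flow")
    # Tests for non-critical flows do not count toward this number.
    covered = critical_flows & flows_tested_on_deploy
    return 100 * len(covered) / len(critical_flows)


# Hypothetical inventory of flows.
critical = {"login", "checkout", "signup", "export", "invite"}
tested = {"login", "checkout", "profile-settings"}
print(critical_flow_coverage(critical, tested))  # 40.0
```

Note that the `profile-settings` test does not raise the number, because that flow is not on the critical list.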

The third number is QA cycle time versus dev cycle time. Measure the average time from "code complete" to "approved for release." Then measure the average time from "ticket created" to "code complete." The ratio between those two numbers tells you how bad the bottleneck actually is. If development takes 3 days and QA takes 4, validation is consuming more of the delivery timeline than writing the code, and you have your answer.
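A small sketch of that calculation, assuming you can export three timestamps per ticket from your tracker. The `Ticket` fields are hypothetical names, not any particular tool's schema.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean

SECONDS_PER_DAY = 86_400


@dataclass
class Ticket:
    created: datetime        # "ticket created"
    code_complete: datetime  # "code complete"
    approved: datetime       # "approved for release"


def cycle_times(tickets: list[Ticket]) -> tuple[float, float, float]:
    """Return (avg dev days, avg QA days, QA-to-dev ratio)."""
    dev_days = mean(
        (t.code_complete - t.created).total_seconds() for t in tickets
    ) / SECONDS_PER_DAY
    qa_days = mean(
        (t.approved - t.code_complete).total_seconds() for t in tickets
    ) / SECONDS_PER_DAY
    return dev_days, qa_days, qa_days / dev_days


# The article's example: 3 days of development, 4 days of QA.
sample = [Ticket(datetime(2026, 1, 1), datetime(2026, 1, 4), datetime(2026, 1, 8))]
dev, qa, ratio = cycle_times(sample)
print(dev, qa, round(ratio, 2))  # 3.0 4.0 1.33
```

A ratio at or above 1.0 means a ticket spends at least as long waiting on validation as it did being built.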

With those numbers in hand, identify the specific bottleneck. It usually falls into one of three categories: test creation (you can't write tests fast enough to keep up), test execution (tests take too long to run so feedback is slow), or triage (tests are flaky or the failure signal is too noisy to act on quickly). The fix for each is different, and investing in the wrong one wastes the next six weeks.

Phase 2: Automate Foundations (Weeks 3-6)

Most teams facing a QA bottleneck already have some automation. The problem is usually that it's incomplete, inconsistent, or maintained by people who are already stretched. This phase is about getting to a reliable floor before layering AI on top of it.

The target for this phase is 40% automated coverage of critical user flows. Not 40% line coverage, not 40% of your test case spreadsheet -- 40% of the flows your users actually take through your application, covered by tests that run in CI on every deploy.

For teams starting fresh or rebuilding, Playwright is the right default today. It handles modern web applications well, its async model matches real browser behavior, and its tooling for debugging and reporting has matured substantially. The ecosystem around it has consolidated enough that hiring engineers who know it isn't difficult.

The prioritization principle for this phase is the 80/20 rule applied to user flows: identify the 20% of flows that represent 80% of actual user activity or 80% of revenue risk, and cover those first. This isn't about what's interesting to test or what's technically challenging. It's about what breaks when it fails. Checkout, login, core data entry, critical integrations -- these are the flows that earn their automation investment immediately.
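The prioritization can be sketched as a simple ranking: sort flows by their share of activity (or revenue risk) and take flows from the top until the chosen share is reached. The flow names and weights below are invented for illustration, and `target_share` is an assumed parameter defaulting to the 80% in the text.

```python
def flows_to_automate_first(activity_by_flow: dict[str, float],
                            target_share: float = 0.8) -> list[str]:
    """Smallest top-ranked set of flows that reaches target_share of activity."""
    total = sum(activity_by_flow.values())
    if total <= 0:
        raise ValueError("activity weights must sum to a positive number")

    selected: list[str] = []
    running = 0.0
    # Walk flows from heaviest to lightest, stopping once the target is met.
    for flow, weight in sorted(activity_by_flow.items(),
                               key=lambda kv: kv[1], reverse=True):
        if running >= target_share * total:
            break
        selected.append(flow)
        running += weight
    return selected


# Hypothetical weekly session counts per flow.
sessions = {"checkout": 520, "login": 310, "search": 90,
            "settings": 50, "export": 30}
print(flows_to_automate_first(sessions))  # ['checkout', 'login']
```

Swapping session counts for revenue-at-risk estimates gives the revenue-weighted version of the same list.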

CI/CD integration is non-negotiable by the end of this phase. Tests that require a manual trigger to run will stop being run. The automation only creates leverage if it runs automatically, on every merge, with results visible to the team before deployment.

Phase 3: Deploy AI Testing (Weeks 7-10)

With a working automation foundation in place, this is where the velocity gap actually closes. The goal is to get from 40% automated coverage to 70%, without proportionally increasing QA headcount.

The key distinction between AI testing platforms is how they generate tests. Some tools require you to record flows, click through the application, or write natural language descriptions. The coverage you get reflects the flows someone thought to record. This is better than manual scripting, but it's still human-bounded.

The more powerful approach is what we built with Autonoma: agents that read your codebase directly, analyze your routes, components, and user flows, and generate test cases from the code itself. The codebase becomes the spec. No recording, no scripting, no manual description of what to test. The Planner agent generates test scenarios (including the database state setup each test needs), the Automator agent executes them, and the Maintainer agent keeps them passing as your code evolves. Coverage grows in proportion to code being written, not in proportion to QA hours available.

This matters most for teams adopting AI coding tools, because the volume of new code is exactly what's overwhelming the manual process. When Autonoma reads a new feature's code and generates its test cases automatically, the testing surface area and the coverage area expand together. That's the fundamental shift: testing stops being a bottleneck because it's no longer bounded by how many tests a human can write per day.

Autonoma plugs directly into CI, so the generated tests run on every merge without manual intervention. Check current pricing to see where it fits your team size.

When evaluating AI testing platforms for your team, start with your highest-traffic, highest-risk user flows. These are flows where a missed regression has immediate user impact, which means the value of catching failures there is clear and measurable. Integrate with your existing CI pipeline first -- the platform that adds friction to your existing workflow will get bypassed.

The 70% coverage target from this phase isn't a ceiling. It's the point at which your QA team's time can shift away from test creation and toward the work that requires human judgment: exploratory testing, edge case investigation, and quality architecture.

Phase 4: Retrain the Team (Weeks 11-16)

This is the phase that most process improvement playbooks skip, and it's the phase that determines whether the technical investment actually holds.

The QA function looks different at 70% automated coverage with AI testing than it did at 20% coverage with manual testing. The day-to-day work changes. The skills required change. If you don't actively manage that transition, two things happen: the people who were doing manual testing feel like their role is being automated away (some of them leave), and the AI testing infrastructure gets under-maintained because no one owns it well.

The reframe that works is the shift from "QA engineer who tests features" to "Quality Architect who defines standards that AI validates." The distinction matters. Manual testing was reactive -- something ships, you test it. Quality architecture is proactive -- you define what good looks like, what the failure conditions are, what business rules must hold across all flows, and AI testing validates those standards continuously.

In practice, this means your QA team becomes the team that configures and steers the AI testing agents. With Autonoma, Quality Architects define the critical user flows and business rules, and the Planner agent translates those into comprehensive test scenarios. The human sets the standard; the AI enforces it at scale.

The skills that need development are distinct from traditional QA:

Test strategy design comes first. Instead of executing test cases, Quality Architects write the criteria that generate them. What are the invariants that must never break? What are the edge cases that, historically, have caused production incidents? What does a passing state look like for each critical user flow? Articulating this well requires a combination of product knowledge and systems thinking that good manual testers already have -- it just needs to be channeled differently.

Data analysis is the second skill to develop. AI testing platforms generate a lot of signal. Distinguishing real regressions from environment noise, identifying patterns in failure data, prioritizing which failures to investigate first -- these are judgment calls that require analytical thinking. QA engineers who develop this skill become multipliers on the AI infrastructure investment.

AI tool management is the third. Understanding how your testing agents are configured, where they need adjustment, and how to interpret their coverage reports is a technical skill specific to the platform you've chosen. It's learnable and worth investing in formally through training, not just trial and error.

Reduce manual testing to exploratory-only during this phase. Exploratory testing -- where a human actively probes the application looking for unexpected behavior, thinking like an adversarial user -- is genuinely irreplaceable. It catches the class of bugs that no automated test was designed to find. Everything else should be automated.

Phase 5: Optimize (Ongoing)

By this point, the bottleneck should be resolved. The metrics in Phase 1 should look materially different. But QA process improvement isn't finished -- it shifts to continuous optimization.

The target ratio for a team operating with AI testing infrastructure is 1:8 to 1:12 (one QA-focused person per eight to twelve developers). Teams running mature, well-maintained AI testing suites can operate at the aspirational 1:15 ratio. Reaching and sustaining those ratios requires regular measurement and adjustment, not a one-time setup.

The three metrics that matter most in steady state are defect escape rate (how many bugs reach production that your test suite should have caught), test cycle time (how long it takes for the full test suite to complete and return a signal after a merge), and developer feedback loop speed (how quickly developers learn whether their changes broke something). These three numbers tell you whether the QA process is actually delivering value or just creating the appearance of it.
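Defect escape rate is the metric most often computed inconsistently, so it helps to pin down a formula. The sketch below assumes one common definition, escaped defects divided by all defects found in the period; the article does not prescribe a formula, and the counts are illustrative.

```python
def defect_escape_rate(escaped_to_production: int,
                       caught_before_release: int) -> float:
    """Fraction of all known defects in a period that reached production.

    Assumed definition: escaped / (escaped + caught before release).
    """
    total = escaped_to_production + caught_before_release
    if total == 0:
        return 0.0  # no defects recorded in the period
    return escaped_to_production / total


# Example: 5 production bugs versus 45 caught by the pipeline.
print(f"{defect_escape_rate(5, 45):.0%}")  # 10%
```

Tracking the same formula month over month matters more than the exact definition chosen.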

Monthly QA process retrospectives are worth the investment. Not retrospectives in the sprint ceremony sense -- dedicated reviews of what the QA function caught, what it missed, what's slowing it down, and what the next month's improvement focus should be. The teams that improve fastest treat the QA process itself as a system that needs iteration, not a fixed cost that gets set and forgotten.

The Org Design Question You're Avoiding

Any serious QA process improvement effort eventually confronts this question: what happens to headcount?

If your QA-to-dev ratio moves from 1:4 to 1:10, and your developer count stays flat, the math implies fewer QA positions. That's true. It's also incomplete.
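The arithmetic behind that statement is straightforward. As a worked example with an assumed team of 40 developers (the team size is illustrative, not from the article):

```python
import math


def qa_headcount(developers: int, devs_per_qa: int) -> int:
    """QA positions implied by a 1:devs_per_qa ratio, rounded up."""
    if devs_per_qa <= 0:
        raise ValueError("devs_per_qa must be positive")
    return math.ceil(developers / devs_per_qa)


developers = 40  # hypothetical, held flat across the transition
before = qa_headcount(developers, 4)   # 1:4 ratio
after = qa_headcount(developers, 10)   # 1:10 ratio
print(before, after)  # 10 4
```

The calculation shows only what the ratio implies on paper, not what the remaining roles are worth.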

The organizations that handle this well are the ones that distinguish between the volume of testing work and the value of quality judgment. AI can generate and execute tests at scale. It cannot replace the person who looks at a week's worth of test failures and identifies a systemic pattern that points to an architectural problem. It cannot replace the engineer who designs the quality standard that the AI then enforces. It cannot replace the person who knows the business domain well enough to articulate what "correct behavior" actually means in an edge case.

The transition that creates lasting value is from testing-as-execution to quality-as-architecture. Teams that make that transition retain and develop their best QA talent in higher-leverage roles. Teams that treat AI testing as purely a cost-reduction play tend to find that the institutional knowledge walking out the door was worth more than the savings.

For a broader view of how testing economics shift across this transition, the AI testing tools guide walks through what mature AI-native testing actually costs and what it replaces.

What the QA Bottleneck Really Is

The title of this article names the problem as the bottleneck AI created. That framing is accurate but slightly misleading. AI didn't create the bottleneck -- it revealed it.

The bottleneck was always there. When development velocity was lower, QA could absorb it. When development velocity jumped, QA couldn't. The underlying mismatch between how fast code is written and how fast quality is validated was structural, not caused by any particular tool.

Closing that mismatch is what we're building toward with Autonoma. Not a tool that replaces QA teams, but infrastructure that makes every QA engineer ten times more effective -- so the bottleneck disappears and the team can focus on what actually requires their expertise.

The five phases described here aren't a patch on an old model. They're a path to a qualitatively different relationship between development and quality -- one where testing coverage expands in proportion to code being written, where the feedback loop is measured in minutes rather than days, and where QA engineers spend their time on work that requires human judgment instead of work that can be systematized.

The teams that move through this playbook intentionally, rather than reactively, end up with a structural advantage: they ship faster with fewer regressions than competitors who are still resizing a manual QA function to fit a problem it was never designed to solve.

That's not a small advantage. In a market where every competitor has access to the same AI coding tools, the differentiator is quality velocity -- the ability to ship fast and ship well, simultaneously. QA process improvement, done right, is how you build it.

QA process improvement is the practice of restructuring testing workflows, team ratios, and tooling so that quality validation keeps pace with development velocity. The right time to start is before the bottleneck becomes visible in release delays -- ideally when developer output accelerates, which is the signal that your current QA capacity will soon be undersized. In practice, most teams start when they notice QA queue times growing or release cycles slipping despite development completing on schedule.

Teams with mature AI testing infrastructure can operate at 1:8 to 1:12 (one QA-focused person per eight to twelve developers), compared to the 1:4 to 1:6 ratio typical for teams relying on scripted automation. The key requirement is that AI testing handles test generation and maintenance automatically -- if QA engineers are still manually writing and updating tests, the ratio benefits don't materialize. With platforms like Autonoma that generate tests directly from code, coverage scales with development output rather than QA headcount -- which is what makes the higher ratios sustainable. Aspirational teams running fully AI-native testing have operated at 1:15 or beyond.

The most common mistake is treating the bottleneck as a headcount problem and hiring more manual testers, rather than a structural problem requiring process change. Manual testing capacity grows only as fast as you hire, so while developer output keeps accelerating you never actually close the gap -- you just slow the widening. The more durable fix is building AI testing infrastructure that scales test coverage proportionally with code output, so the ratio improves without proportional headcount growth.

To tell whether QA is the bottleneck, compare two cycle time metrics: the time from ticket creation to code complete (development cycle time) and the time from code complete to release approval (QA cycle time). If QA cycle time exceeds or approaches development cycle time, QA is the constraint. A secondary signal is queue depth -- if more tickets are consistently waiting for QA than are in active development, the bottleneck is downstream of engineering.

The work changes more than the headcount. Manual execution of scripted test cases -- clicking through flows, verifying outputs against expectations -- gets replaced by AI testing agents. What remains and grows in value is quality architecture: defining what correct behavior looks like, identifying risk areas, analyzing failure patterns, and making judgment calls that require domain knowledge. The teams that handle this transition well retrain existing QA engineers into Quality Architect roles rather than simply reducing the team.

AI testing does not replace exploratory testing, and teams that try to eliminate it as part of this transition typically regret it. Exploratory testing -- where a human actively probes the application looking for unexpected behavior -- catches a class of bugs that no automated test was designed to find, because by definition the test cases for those bugs don't exist yet. The right model is to automate all regression and flow validation with AI testing, and reserve human QA time exclusively for exploratory work and quality architecture.