Vibe Coding and the Death of the Test Suite

Vibe coding testing refers to the quality challenge created when AI writes the code and nobody writes the tests. The traditional test suite assumed the same engineer who wrote a feature also understood it well enough to specify expected behavior. Vibe coding breaks that assumption entirely. What is dying is the test suite as a format, not testing itself, and what replaces it is more rigorous, more continuous, and fundamentally better.

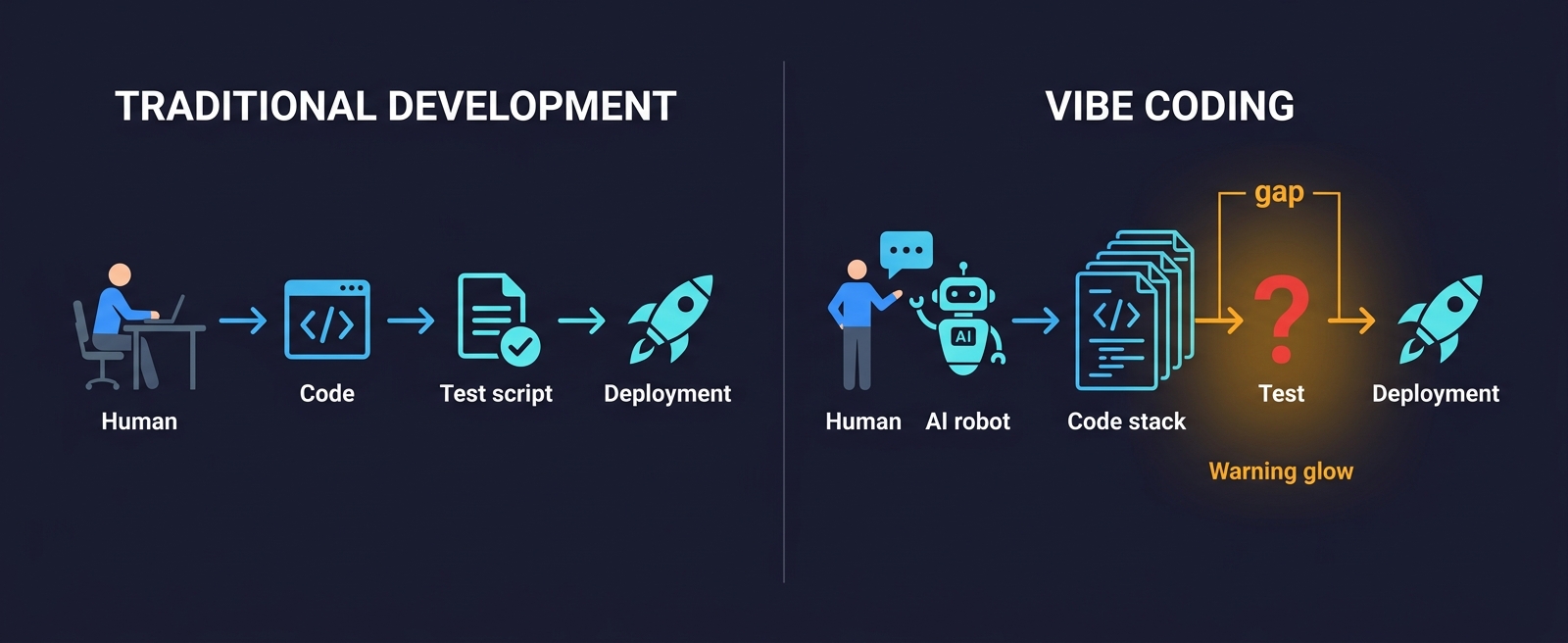

The time to generate a working feature with an AI coding agent is now measured in minutes. The time to write a meaningful test for that feature is still measured in hours, assuming it gets written at all. That gap, between the speed of AI-generated code and the pace of human-authored tests, is not a productivity win. It is where software quality goes to die quietly.

We have seen this pattern in teams building seriously with AI tools. The AI coding agent rewrote the checkout flow in forty minutes. A senior engineer reviewed the output, clicked through the happy path, and shipped it. The test suite still has seventeen tests for the old checkout flow. Eleven of them pass. Nobody deleted the other six. Nobody wrote new ones.

This is not a discipline problem. It is a structural one. The vibe coding testing gap is not something you close by asking developers to write more tests after an AI already wrote the code. It closes when the approach to testing changes at the same level of abstraction as the approach to writing code.

The Hidden Assumption Inside Every Test Suite

Traditional test suites rest on an assumption so obvious that nobody ever stated it: the person writing the test understands the code they are testing.

This seems trivial. Of course the author understands the code. But look at what that assumption actually enables. It is why unit tests can specify expected return values. It is why integration tests know which edge cases to cover. It is why E2E tests mirror the flows that the business cares about. The entire architecture of test-driven development, behavior-driven development, and every testing methodology that followed assumed that a human mind was the source of truth for what the software should do.

Strip that assumption away and the test suite collapses. Not because tests are bad, but because the tests were always downstream of human understanding. Remove the human understanding and you remove the foundation.

Vibe coding removes the foundation.

When a developer vibe-codes a feature, they describe intent and accept output. They may not know which data paths the AI chose. They cannot tell you what happens when two requests arrive simultaneously, because they never designed that behavior. The AI made a decision in that moment. The decision is buried in 400 lines they have not read closely. A traditional test suite requires someone to know what to test. Nobody knows what to test because nobody fully knows what was built.

This is not a failure of discipline. It is a structural consequence of how vibe coding works. You cannot write tests for a codebase you do not understand, and vibe coding produces codebases faster than human understanding can track.

The Coverage Theater Problem With AI-Generated Tests

Before vibe coding, test suites were already performing theater. The metrics looked good. The reality was not.

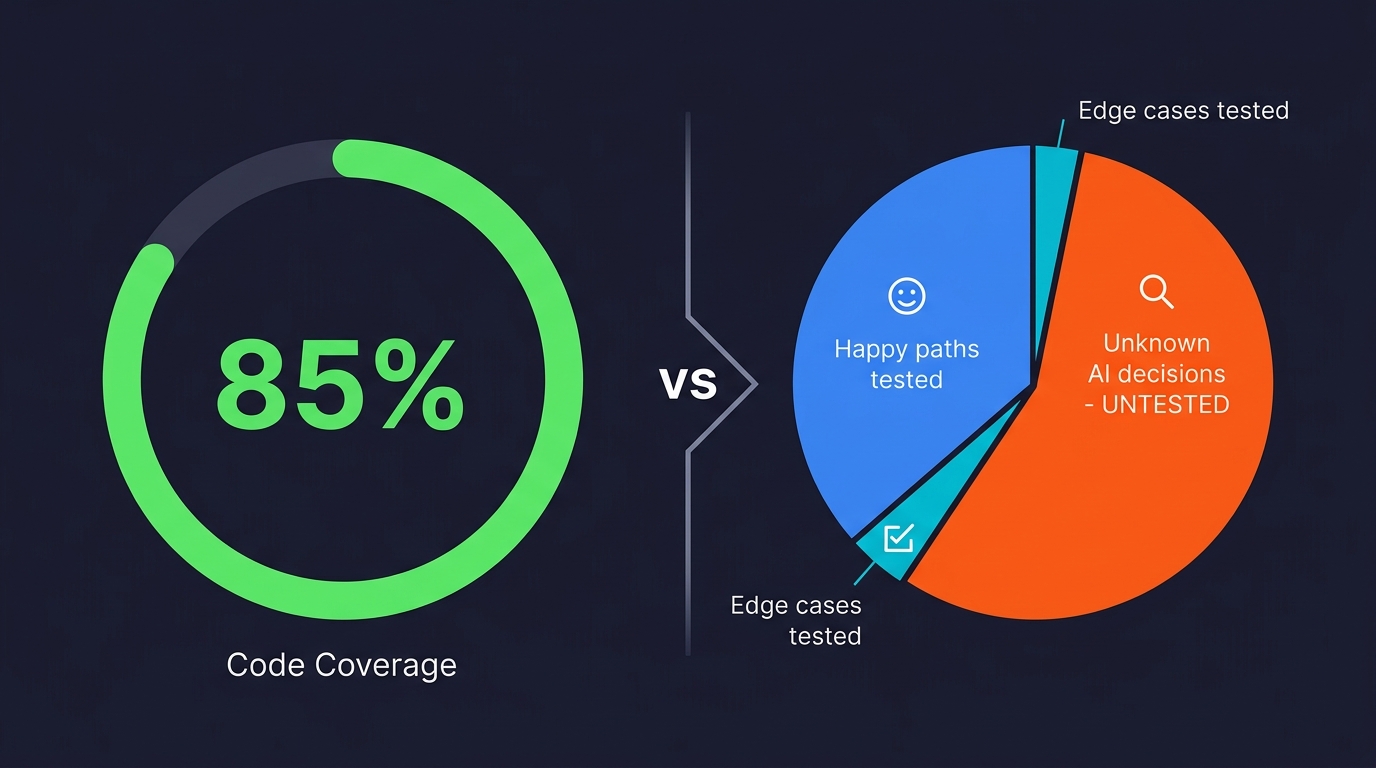

Studies of test suite effectiveness have repeatedly found that code coverage, the metric most teams use as a proxy for test quality, is a weak predictor of defect detection. A suite can reach 85% line coverage while leaving every meaningful edge case untouched. Developers write tests for the code they wrote, so they test the paths they designed: the happy paths. The paths they did not think of go untested, and those are exactly the paths where bugs live.
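A minimal sketch of how full line coverage can miss an edge case. The function and test names here are made up for illustration and do not come from any real codebase. The single happy-path test executes every line of the function, yet the empty-input case is never exercised.

```python
def average_latency_ms(samples):
    """Return the mean of a list of latency samples in milliseconds."""
    total = 0
    for value in samples:
        total += value
    return total / len(samples)


def test_average_latency_happy_path():
    # Executes every line of average_latency_ms, so a line-coverage
    # report for that function would show 100%.
    assert average_latency_ms([10, 20, 30]) == 20


def probe_empty_input():
    # The case the happy-path test never exercises: no samples at all.
    # This only records what the code does today. Whether raising is the
    # intended behavior is a product decision the coverage number cannot make.
    try:
        average_latency_ms([])
    except ZeroDivisionError:
        return "raises ZeroDivisionError"
    return "returns a value"
```

The coverage number is identical with or without the second check, which is why it says so little about whether the edge cases were considered.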

This was always the problem. Vibe coding compounds it dramatically.

An AI coding agent generating a user authentication flow will produce code that handles the standard cases well. Login with a valid email and password: works. Password reset: works. But what happens when a user submits a reset request for an email address that does not exist in the database? What happens when the reset token expires mid-session? What happens when the same token is used twice? The AI made decisions about all of these states. Those decisions may be correct. They may not be. The developer who accepted the output has no idea, because they were not present for those decisions.
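To make those three questions concrete, here is a sketch of what testing them could look like. `ResetService` is a hypothetical in-memory stand-in we wrote for this example, not generated output and not any real library. The expected behaviors it encodes (no token for unknown emails, a 15-minute expiry, single-use tokens) are assumptions, and a real flow may legitimately choose differently.

```python
import secrets


class ResetService:
    """Hypothetical in-memory password reset flow, for illustration only."""

    def __init__(self, known_emails, ttl_seconds=900):
        self.known_emails = set(known_emails)
        self.ttl_seconds = ttl_seconds
        self.tokens = {}  # token -> (email, issued_at)

    def request_reset(self, email, now):
        # Unknown addresses get no token. The caller should respond the same
        # way in both cases so the endpoint does not reveal which emails exist.
        if email not in self.known_emails:
            return None
        token = secrets.token_urlsafe(16)
        self.tokens[token] = (email, now)
        return token

    def redeem(self, token, now):
        # pop() makes the token single use: a second redeem finds nothing.
        entry = self.tokens.pop(token, None)
        if entry is None:
            return False
        _email, issued_at = entry
        return now - issued_at <= self.ttl_seconds


def test_unknown_email_gets_no_token():
    service = ResetService(["ada@example.com"])
    assert service.request_reset("nobody@example.com", now=0) is None


def test_expired_token_is_rejected():
    service = ResetService(["ada@example.com"], ttl_seconds=900)
    token = service.request_reset("ada@example.com", now=0)
    assert service.redeem(token, now=901) is False


def test_token_cannot_be_used_twice():
    service = ResetService(["ada@example.com"])
    token = service.request_reset("ada@example.com", now=0)
    assert service.redeem(token, now=10) is True
    assert service.redeem(token, now=20) is False
```

Each test names a state the generated code had to handle one way or another. Writing them down is only possible once someone knows those states exist.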

A human writing tests for that authentication flow will test what they designed. They will not test the decisions the AI made silently, because they do not know those decisions were made. The coverage report will say 80%. The 80% will be the decisions the developer understands. The 20% will be the decisions the AI made.

That 20% is where your production incidents live.

Why Asking Your Coding AI to Write the Tests Makes It Worse

The natural response to this problem is: "If the AI wrote the code, just ask it to write the tests too." This sounds logical. It is actually the fastest way to build false confidence. (We covered the mechanics of this circularity problem in depth in our vibe coding testing gap analysis. Here, we focus on why it means the test suite as a format is obsolete.)

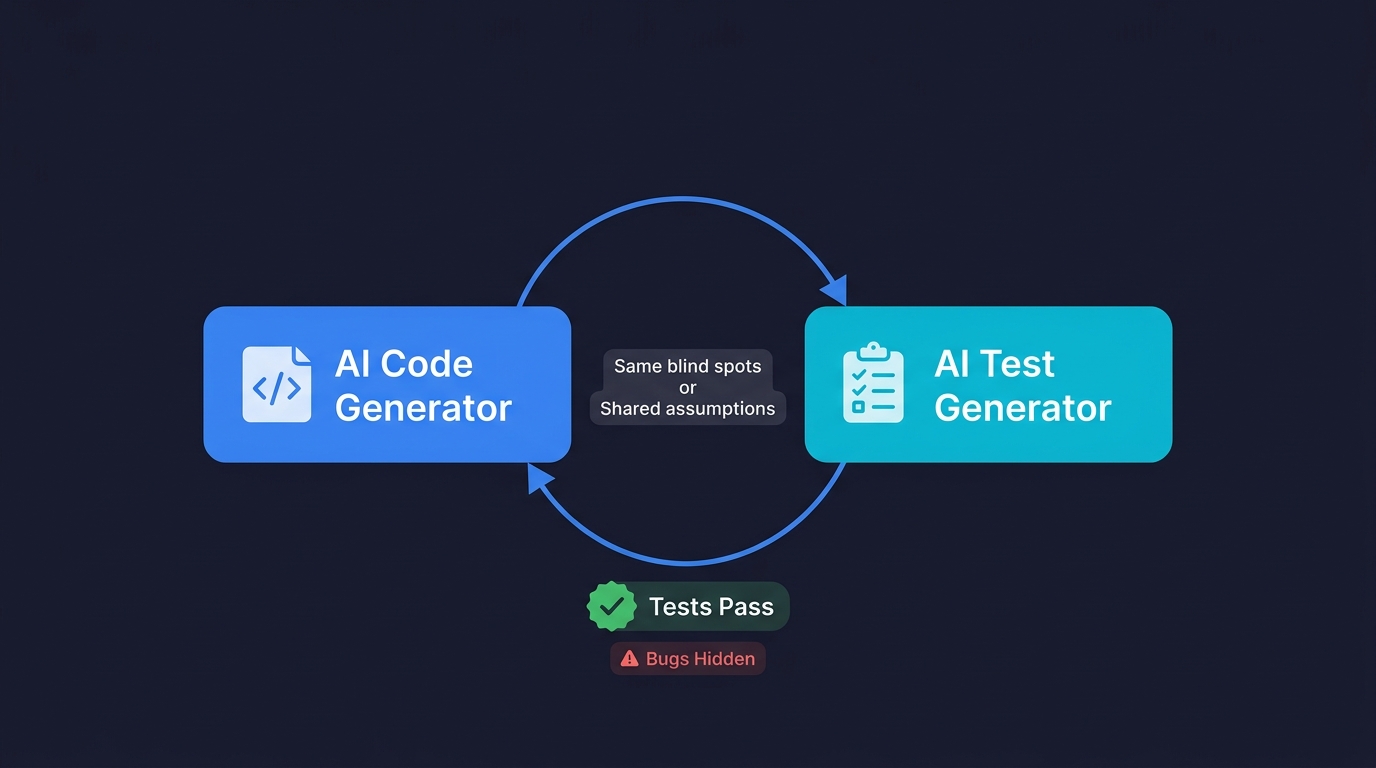

The same AI that generated your implementation will generate tests that confirm what the code does, not what it should do. If the model misunderstood a requirement when writing the feature, it will misunderstand it the same way when writing the test. Both outputs share the same frame of reference. The tests pass. The bugs exist. The green suite becomes a lie that accelerates shipping broken code.

This is not a hypothetical. Teams that ask their coding AI to generate test suites consistently report the same pattern: high coverage numbers, tests that mirror the implementation line by line, and zero detection of the edge cases that actually cause production failures. The tests are syntactically correct and semantically useless.
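A small sketch of the difference between a test that mirrors the implementation and a test written from the requirement. The requirement here is invented for the example: orders of 100.00 or more get 10% off. The implementation has an off-by-one in its comparison, and amounts are in integer cents to keep the arithmetic exact.

```python
def discounted_total_cents(subtotal_cents):
    """Implementation under test. The assumed requirement says orders of
    100.00 or more get 10% off; this code uses > and so misses exactly 100.00."""
    if subtotal_cents > 10_000:
        return subtotal_cents * 90 // 100
    return subtotal_cents


def mirrored_test():
    # Derived from the implementation: it recomputes the expected value with
    # the same condition, so it agrees with the code by construction.
    for subtotal in (5_000, 10_000, 15_000):
        expected = subtotal * 90 // 100 if subtotal > 10_000 else subtotal
        if discounted_total_cents(subtotal) != expected:
            return False
    return True


def spec_test():
    # Derived from the requirement: expected values are written down
    # independently of how the code computes them.
    cases = {5_000: 5_000, 10_000: 9_000, 15_000: 13_500}
    return all(discounted_total_cents(s) == want for s, want in cases.items())
```

Here `mirrored_test()` returns `True` and `spec_test()` returns `False`. Both check the same three inputs. Only the one whose expectations came from outside the implementation notices the boundary bug.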

Effective vibe coding testing requires structural independence. The system verifying the code cannot share assumptions with the system that wrote it. This is why the testing agent needs to approach the codebase from outside, reading what was built rather than inheriting the builder's blind spots. That independence is an architectural requirement, not a best practice.

When Nobody Writes the Code, Nobody Writes the Tests

Here is the inversion that vibe coding creates. Traditional software development had a natural forcing function for testing: the person who wrote the code was accountable for its quality. That accountability, even when imperfect, created pressure to write tests. A developer who designed a checkout flow and shipped it without tests owned the production incident when it broke.

Vibe coding diffuses that accountability. The AI wrote the logic. The developer accepted it. When it breaks in production, the mental model is "the AI got it wrong" rather than "I should have tested this." That diffusion is not unique to lazy teams. It is the natural cognitive response to shipping code you did not design. You feel less ownership of decisions you did not make.

The result is predictable. In a vibe coding workflow, tests become an afterthought more reliably than in traditional development, not because engineers care less about quality, but because the usual triggers for testing discipline (code ownership, design accountability, the feeling of having built something) are weakened by the nature of the process.

This is the death of the test suite as a cultural artifact. The test suite was always partly a product of developer pride. "I built this, so I am going to make sure it works." Vibe coding produces code at a rate that makes pride impossible. You did not build it. You directed it. The emotional investment that drove testing discipline is gone.

What the Test Suite Was Actually Protecting You From

To understand what replaces the test suite, you need to understand what it was actually doing, under the hood, beyond the green checkmarks.

The test suite was an executable specification. Every test was a claim about how the system should behave. Taken together, they formed a contract between the current codebase and the intended behavior. When you changed code and tests failed, the failure was telling you that your change violated the contract.

The test suite was also a communication tool. New engineers read tests to understand what the system was supposed to do. Tests were the closest thing most codebases had to living documentation, because unlike comments and READMEs, tests could not drift silently from the truth without someone noticing.

And the test suite was a regression net. It caught the cases where a change to one part of the system unexpectedly broke another. Not all of them, not even most of them in poorly maintained suites, but some. Enough to justify the investment.

All three of these functions, the executable specification, the communication tool, the regression net, are still needed. What vibe coding has killed is not the need for them. It has killed the form in which they existed. The handwritten test suite, as a form, is dying. The functions it served are not.

What Replaces the Test Suite: Self-Maintaining AI Tests

The replacement is not a better test suite. It is a fundamentally different model: continuous, AI-generated, self-maintaining coverage that is grounded in the codebase rather than in a developer's memory of it.

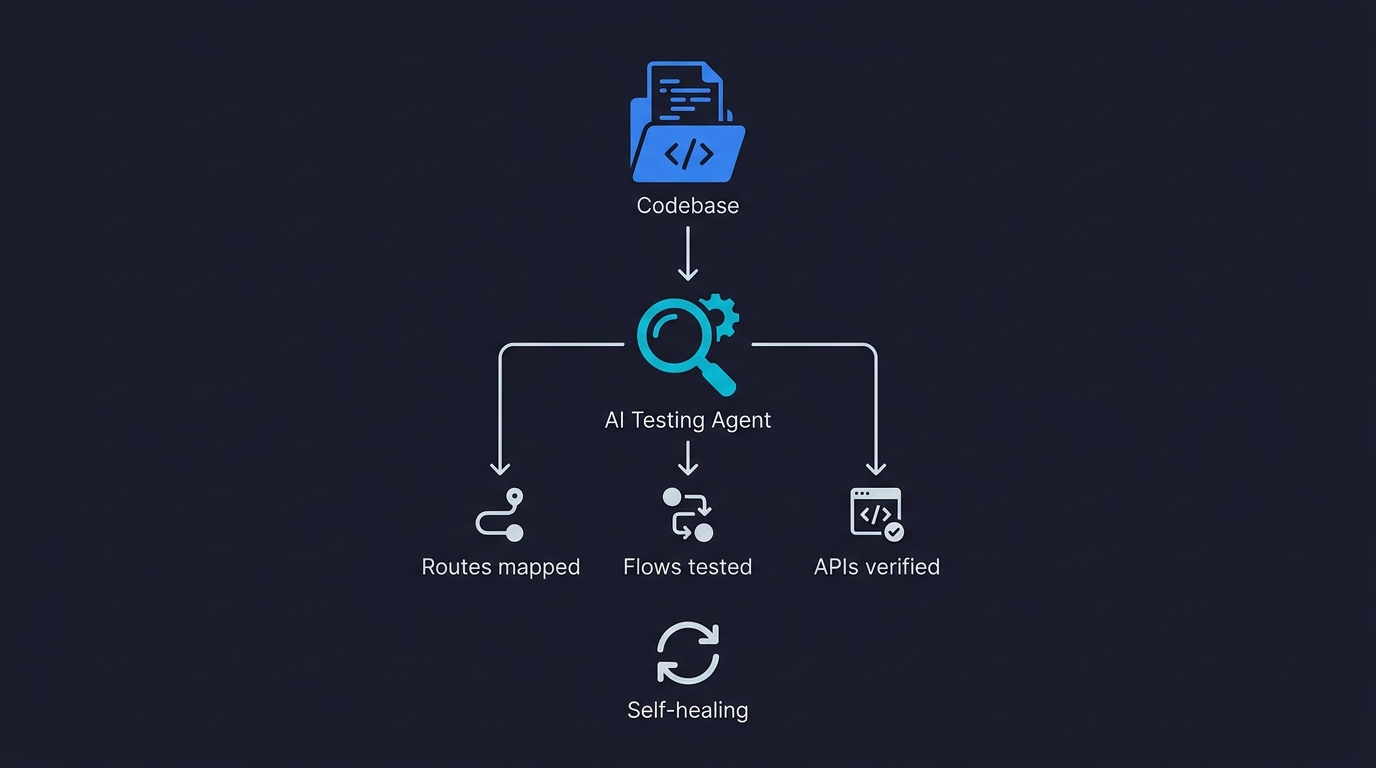

The shift looks like this. Instead of a developer writing a test based on their understanding of the code they wrote, an AI testing agent reads the codebase, maps the routes, components, and user flows, and generates test cases based on what the application is actually designed to do. The source of truth is the code itself, not a developer's interpretation of it.
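As a rough illustration of "reading the codebase" as the starting point, the sketch below walks a piece of source code and lists the routes it declares, then turns each into a named test case to be filled in. It is a toy under stated assumptions: the `@route` decorator is a made-up convention rather than a specific framework's API, and this is not a description of how Autonoma or any particular agent works internally.

```python
import ast

# Stand-in for application source. The @route decorator is a hypothetical
# convention for this sketch only.
APP_SOURCE = '''
@route("/checkout", methods=["GET", "POST"])
def checkout(): ...

@route("/cart")
def cart(): ...

def helper(): ...
'''


def discover_routes(source):
    """Return sorted (path, method, handler) triples found in the source."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.FunctionDef):
            continue
        for deco in node.decorator_list:
            is_route_call = (
                isinstance(deco, ast.Call)
                and isinstance(deco.func, ast.Name)
                and deco.func.id == "route"
                and deco.args
            )
            if not is_route_call:
                continue
            path = ast.literal_eval(deco.args[0])
            methods = ["GET"]  # assumed default when none are listed
            for kw in deco.keywords:
                if kw.arg == "methods":
                    methods = ast.literal_eval(kw.value)
            found.extend((path, method, node.name) for method in methods)
    return sorted(found)


def plan_test_cases(routes):
    """Turn each discovered route into a named test case stub."""
    return [f"{method} {path} -> {handler}" for path, method, handler in routes]
```

Calling `plan_test_cases(discover_routes(APP_SOURCE))` yields one entry per route and method, and skips `helper` because nothing exposes it. The list comes from what the code declares, not from what anyone remembers building.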

This matters for vibe-coded codebases specifically because it decouples testing from human comprehension. You do not need to understand every decision the AI made to test whether the application behaves correctly. The testing agent reads the decisions directly. It does not test what you thought you built. It tests what is actually there.

The self-maintaining property matters equally. A traditional test suite degrades as the codebase evolves. Every time an AI agent rewrites a component, selectors change, API contracts shift, and test assumptions break. In a vibe coding workflow where the codebase can change substantially in a single session, a static test suite becomes a liability within weeks. Tests fail not because the application is broken but because the tests are stale, and the team stops trusting the suite and stops running it.

A self-healing testing system detects what changed and updates the tests to match the new structure, while continuing to verify that the behavior is correct. The regression net does not decay. It adapts.
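One narrow slice of that idea, selector repair, can be sketched as follows. Everything here is an assumption made for illustration: the snapshot format, the notion of a stable element name, and the function itself. Real self-healing systems do considerably more, and this is not a description of any product's implementation.

```python
def heal_selectors(test_steps, old_snapshot, new_snapshot):
    """Rewrite stale selectors in test_steps using two UI snapshots.

    Snapshots map a stable element name to its current selector. Both the
    snapshot format and the stable names are assumptions of this sketch.
    """
    # Invert the old snapshot so a selector can be traced back to its element.
    name_by_old_selector = {sel: name for name, sel in old_snapshot.items()}
    healed, needs_review = [], []
    for action, selector in test_steps:
        name = name_by_old_selector.get(selector)
        if name is None or name not in new_snapshot:
            # Element is unknown or was removed. Something has to decide
            # whether the behavior itself changed, so flag it instead of
            # guessing a replacement.
            needs_review.append((action, selector))
            continue
        healed.append((action, new_snapshot[name]))
    return healed, needs_review


# Example: the pay button was re-identified, the coupon field was removed.
old = {"pay_button": "#btn-pay", "coupon_field": "#coupon"}
new = {"pay_button": "[data-testid=pay]"}
steps = [("fill", "#coupon"), ("click", "#btn-pay")]
healed, needs_review = heal_selectors(steps, old, new)
```

After this runs, `healed` holds the click step pointed at the new selector and `needs_review` holds the coupon step. The repair changes how a test finds an element, not what the test asserts, which is the distinction between adapting to structure and silently accepting changed behavior.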

| Dimension | Traditional Test Suite | AI-Grounded Testing |
|---|---|---|
| Source of truth | Developer's memory of what they built | The codebase itself |
| Maintenance model | Manual updates by the team | Self-healing as code evolves |
| Coverage scope | What the developer thought to test | All routes, flows, and API contracts |
| Decay rate | Weeks to months before tests go stale | Tests adapt in real time |
| Time to first test | Hours to days per feature | Minutes after code is committed |
| Scales with codebase | No, maintenance grows linearly | Yes, agent reads entire codebase |

This is what we built Autonoma to do. The Planner agent reads your codebase, not your AI coding agent's conversation history. It analyzes your routes, components, and flows, and generates test cases grounded in what your application actually does. The Maintainer agent keeps those tests passing as the code evolves, so the coverage does not decay to zero between sprints. No test scripts. No maintenance. The codebase is the spec.

The New Quality Contract for Vibe Coding QA

Traditional testing had a quality contract that went something like this: "We have tested the behaviors we designed, and they work as intended." That contract was always incomplete, because it excluded the behaviors nobody designed, but it was honest within its scope.

The new quality contract is different. It says: "We have continuously verified all user-visible behaviors against the codebase that specifies them, and we know immediately when something breaks." It is broader, more honest about what it covers, and fundamentally continuous rather than periodic.

This is a better contract. Not because AI testing is smarter than human testing in every dimension, but because it scales. A human QA engineer can maintain meaningful coverage of maybe 50 to 100 test scenarios before the maintenance burden becomes unmanageable. An AI testing agent can maintain coverage of every route, every flow, and every API contract in the codebase, and update that coverage automatically as the codebase evolves.

The organizations that will ship reliable software in a vibe coding world are not the ones that resist the new model. They are the ones that accept that the test suite as a format is obsolete and build the infrastructure that replaces it. That means connecting their codebase to a testing system that understands the code, generates the tests, and maintains the coverage without human intervention.

The teams still asking "who is going to write the tests for this AI-generated code?" are asking the wrong question. The right question is "what kind of testing system reads the code and tests it automatically?" That question has an answer. The transition to it is not optional. It is just a matter of how many production incidents it takes before the transition happens.

The Test Suite of 2027

Prediction: by 2027, the handwritten test suite will be viewed the way we view handwritten documentation today. Valuable in its time. Largely replaced by systems that generate and maintain it automatically. Kept around by legacy organizations that have not made the transition yet.

The developers still writing Cypress scripts by hand in 2027 will be doing the equivalent of manually updating a wiki nobody reads. The work is real. The output decays faster than it is produced. The alternative exists and is obviously better.

What will exist instead is testing infrastructure that is closer to continuous deployment monitoring than to a traditional test suite. Coverage will be dynamic, derived from the codebase in real time rather than from a collection of scripts written months ago. Failures will be caught immediately, before they reach production, by an agent that understands both what the code is supposed to do and what it is actually doing.

The test suite is not dying because testing is less important. It is dying because the test suite was always a workaround for the fact that humans needed an explicit artifact to encode their understanding of expected behavior. AI testing agents do not need the workaround. They read the codebase directly.

That is not the death of quality. It is the first time quality assurance has been able to keep pace with the speed of software development.

Frequently Asked Questions

Vibe coding without testing infrastructure makes software quality worse. Vibe coding with proper AI-powered testing can actually produce better outcomes than traditional development, because the testing coverage is continuous, codebase-grounded, and does not decay the way handwritten test suites do. The quality problem in vibe coding is not the code generation. It is the testing gap. Close the gap with infrastructure that reads your codebase and generates tests independently of the coding AI, and the velocity advantage becomes real.

The replacement is continuous, AI-generated coverage that is grounded in the codebase rather than in a developer's interpretation of it. Instead of a developer writing tests based on their understanding of the code they designed, an AI testing agent reads the codebase, maps the user flows and API contracts, and generates test cases based on what the application actually does. That coverage self-heals as the code evolves, so it does not decay between sprints the way a handwritten test suite does. Autonoma is built on this model: connect your codebase, and agents generate and maintain tests automatically.

You can, but the maintenance burden becomes unmanageable quickly. Traditional Playwright and Cypress test suites require someone to write and update the scripts as the codebase changes. In a vibe coding workflow where an AI agent can substantially rewrite a component in a single session, those tests go stale faster than a team can maintain them. The tests fail not because the application is broken but because the selectors and API contracts changed. Teams stop trusting the suite and stop running it. The sustainable path is an AI testing agent that understands your codebase and updates its own tests when the code changes, eliminating the maintenance burden entirely.

Because the same AI that wrote your code will write tests that confirm what the code does, not what it should do. If the AI misunderstood a requirement when generating the implementation, it will misunderstand it again when generating the tests. Both outputs live inside the same frame of reference. The tests will pass while real bugs exist, creating false confidence that accelerates shipping broken code. Effective vibe coding testing requires an independent testing agent that approaches your codebase from outside, without sharing the coding AI's assumptions. That independence is a structural requirement, not a cultural preference.

Self-healing tests are tests that automatically update when the codebase changes, so the test suite stays valid without human intervention. In traditional development, a static test suite breaks relatively slowly as the codebase evolves. In vibe coding, where an AI agent can restructure components, rename selectors, and shift API contracts in a single session, a static test suite breaks fast. Within weeks of heavy AI coding tool use, most teams find that a significant percentage of their tests are disabled or skipped because they are too stale to trust. Self-healing tests solve this by detecting what changed and updating the test logic to match, while continuing to verify that the application behavior is correct. Autonoma's Maintainer agent does this automatically.

The transition is already happening. Teams that adopt AI coding tools heavily find that their handwritten test suites decay faster than they can maintain them. The organizations making the transition now are moving to codebase-grounded AI testing agents. By 2027, the handwritten test suite will likely be viewed the way handwritten documentation is viewed today: valuable in its time, largely replaced by systems that generate and maintain it automatically. The transition is driven not by ideology but by the practical reality that static, human-maintained tests cannot keep pace with AI-speed code generation.

No. The role is shifting, not disappearing. The tasks that are going away are script writing and test maintenance, which consumed most of a QA engineer's time in the traditional model. What remains, and what becomes more important, is testing strategy, coverage architecture, and the judgment to know what truly needs to be verified and why. QA engineers who adapt to working with AI testing infrastructure, directing agents rather than writing scripts, will be more productive and more valuable than before. The engineers who resist the transition and remain focused on manual test authorship will face pressure. For a deeper look at this career transition, see our article on [how vibe coding changes QA](/blog/how-vibe-coding-changes-qa).