Autonoma is the BrowserStack alternative built for teams whose real gap is test creation, not execution infrastructure. BrowserStack is a cloud of real browsers and devices that run your existing tests across environments. Autonoma is testing intelligence: three AI agents that read your codebase, generate tests automatically, execute them, and self-heal when code changes. Most teams evaluating both are asking the wrong question. The question is not "which one?" but "which layer am I actually missing?" This post breaks down what each tool does, when each wins outright, and when combining both makes sense.

Most teams searching "BrowserStack alternative" are looking for a cheaper cloud browser farm. That's the wrong search. The reason tests are failing, or not existing at all, has nothing to do with which cloud runs them.

The gap is a layer below the one BrowserStack lives on. BrowserStack is excellent infrastructure. What most teams are actually missing is intelligence: something that decides what to test, writes the tests, and keeps them working when the code changes. We built Autonoma at that layer precisely because no amount of parallel browser sessions fixes a test suite that doesn't exist.

Why Teams Look for a BrowserStack Alternative

BrowserStack is a strong platform with clear strengths, but most teams searching for an alternative share a common set of frustrations. Understanding them is the first step toward picking the right tool.

Cost escalation at scale. BrowserStack Automate starts at $129/month for a single parallel session. At 10 parallels, you're looking at roughly $15,000/year. At 50+ parallels, enterprise contracts typically run $25,000 to $50,000+/year (custom pricing, not publicly listed). For teams that just need execution infrastructure, that math works. For teams that also need test creation and maintenance, that's only half the bill.

No help with test creation or maintenance. BrowserStack runs whatever tests you hand it. It doesn't generate tests, suggest coverage gaps, or fix broken selectors. If your team is spending 15-20 hours per sprint on test maintenance, BrowserStack doesn't reduce that load. It runs your broken tests across more browsers, faster.

Requires an existing test suite. Teams starting from zero coverage get no value from BrowserStack until they write tests first. For startups moving fast without QA, the constraint is not where to run tests but having them in the first place.

Session-based scaling friction. Parallel session limits mean longer CI pipelines when your test suite grows. You either wait in queue or upgrade your plan. The pricing model assumes linear scaling, which gets expensive as teams grow.

These aren't flaws in BrowserStack. They're the natural boundaries of an infrastructure-first approach. The question is whether your team's constraint lives at the infrastructure layer or at the intelligence layer above it.

What Autonoma Actually Does

Autonoma is the intelligence layer. Instead of requiring tests as input, it generates them.

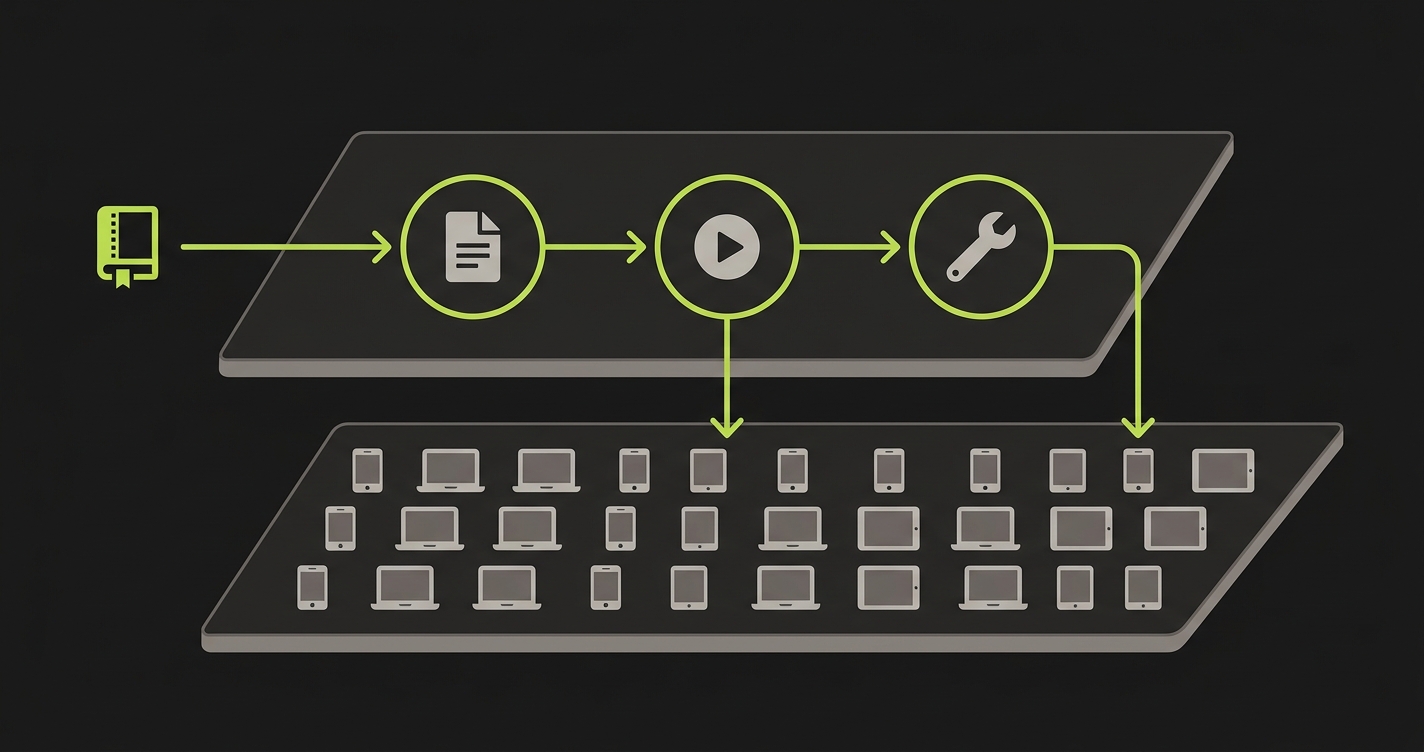

Connect your codebase and three agents go to work. The Planner agent reads your routes, components, and user flows directly from code. It plans test cases based on what your application actually does, not what someone remembered to document. It handles database state setup automatically, generating the endpoints needed to put your app in the right state for each scenario. The Automator agent executes those test cases against your running application. The Maintainer agent watches for code changes and self-heals tests that would otherwise break.

The entire process is hands-off. This is autonomous testing in practice. No one clicks through the app to record flows. No one writes test scripts. No one debugs selector failures on a Friday afternoon.

The codebase is the spec. Every agent includes verification layers so tests don't take random paths to the same goal. They execute with consistent, reliable behavior across runs. A team that connects their repo on Monday can have meaningful end-to-end coverage by Wednesday, without writing a single test script.

What BrowserStack Actually Does

BrowserStack is the infrastructure layer. It solves one problem exceptionally well: giving your existing tests access to thousands of browser and device combinations without maintaining physical hardware.

You write your Playwright, Selenium, or Cypress tests. BrowserStack provides the execution environment. When a user files a bug on IE11, you can reproduce it in seconds. When a new iPhone model ships, you can verify your app works on it without buying one. This is genuinely valuable. Cross-browser bugs are real, and the device matrix is larger than any team can reasonably own.

Where BrowserStack doesn't help is everything upstream of execution. Test creation, test maintenance, coverage gaps, flakiness: these remain your problem. BrowserStack executes whatever tests you hand it, reliably and at scale. It doesn't know what tests you should be writing or fix the ones that break when your UI changes.

The Core Difference: Infrastructure vs. Intelligence

This is the clearest way to frame it: BrowserStack is a test runner with extraordinary breadth of environments. Autonoma is a testing system that generates, runs, and maintains its own tests.

They operate at different layers of the same problem.

| Dimension | BrowserStack | Autonoma |

|---|---|---|

| Test creation | You write tests | AI generates from codebase |

| Test maintenance | You maintain tests | Self-healing agents |

| Cross-browser coverage | 3,500+ browser/device combos | Focused on critical flows |

| Mobile device testing | Real device farm (2,000+ devices) | Not the primary focus |

| Legacy browser support | IE6, iOS 7, Android 4.4+ | Modern browser targets |

| Test intelligence | None (executes what you provide) | AI-planned, intent-aware |

| DB state setup | Not handled | Planner agent generates endpoints |

| CI/CD integration | Mature, broad integrations | Purpose-built for AI-velocity CI/CD |

| Parallel execution | Scales with paid sessions | Scales with test intelligence |

| Flaky test handling | Retry logic only | Root-cause self-healing |

| Setup complexity | Medium (requires existing tests) | Low (connect codebase, agents start) |

| Pricing model | Per parallel session | Per application |

| Suited for AI-generated code | Neutral (runs any tests) | Yes (designed for AI velocity) |

| Test recordings needed | No, but tests required | No recordings, no scripts |

| Free tier | Limited trial only | Yes |

When BrowserStack Is the Right Choice

BrowserStack wins in clear situations. If your team has invested in a Selenium or Playwright suite and cross-browser fidelity matters to your product, BrowserStack is purpose-built for you. A fintech app that needs to work on IE11 for enterprise clients, an e-commerce site where a layout break on Samsung's stock browser loses real revenue, a SaaS product with mobile-first users on a wide range of Android versions: these are exactly what BrowserStack was built for.

The visual regression testing use case is also strong. When you need pixel-level comparisons across dozens of browser and device configurations, BrowserStack's automated screenshot diffing provides coverage that agent-based systems don't replicate at that breadth.

Mobile app testing is another unambiguous win. Real iOS and Android devices, real network conditions, real device sensors. If you're shipping a native mobile app or a progressive web app with significant mobile traffic, BrowserStack's device farm is hard to replace.

The underlying assumption in all of these scenarios: you already have tests. The infrastructure problem is where to run them at scale. For a broader look at options in this category, see our BrowserStack alternatives guide.

When Autonoma Is the Right Choice

Autonoma wins when the problem is test creation and maintenance, not execution breadth.

Teams shipping AI-generated code face this acutely. When developers use tools like Cursor or Claude to generate features, the codebase changes faster than any human QA process can follow. Writing tests manually for AI-generated code is like trying to document a codebase that's rewriting itself. You're always behind.

Autonoma's Planner agent reads what the code actually does today, right now, and generates tests accordingly. When the code changes tomorrow, the Maintainer agent updates the tests. The cycle is automatic.

The second clear win is teams with zero or minimal coverage. A startup that has been moving fast without tests, a product team that inherited an untested codebase, an engineering org where QA is the bottleneck holding back delivery, or a team evaluating alternatives to session-based AI testing: in all of these cases, the constraint is not "where do I run my tests" but "how do I get tests in the first place." Connecting a codebase to Autonoma and reaching meaningful coverage within days is not an incremental improvement. It's a different category of outcome.

The third scenario is ongoing maintenance cost. If your team is spending 15-20 hours per month fixing broken selectors, updating test flows after UI changes, and debugging flaky assertions, that's not a tooling gap. It's a structural problem that more execution capacity won't fix.

BrowserStack vs Autonoma: Pricing at Scale

The pricing models reflect the different problems each tool solves. BrowserStack prices by parallel sessions. Autonoma prices by application.

| Scale | BrowserStack Cost | Autonoma |

|---|---|---|

| Startup (1-2 engineers) | $129/month (Automate, 1 parallel) | Free tier available |

| Growing team (5 engineers, 5 parallels) | ~$5,000-7,500/year | Scales per application |

| Mid-market (10+ engineers, 10 parallels) | $15,000+/year | Scales per application |

| Enterprise (50+ engineers, 50+ parallels) | $25,000-50,000+/year (custom) | Contact for enterprise pricing |

But the sticker price is only part of the cost. The total cost of testing includes the human hours your team spends writing and maintaining tests. If your engineers spend 15-20 hours per month on test maintenance at $75-100/hour, that's an additional $1,100-2,000/month in hidden cost on top of whatever infrastructure you're paying for. BrowserStack doesn't reduce that number. Autonoma eliminates it.

For teams where infrastructure is the constraint, BrowserStack's per-parallel pricing makes sense: you're paying for dedicated cloud hardware and real devices. For teams where test creation and maintenance is the constraint, the more important number is the engineering hours you get back.

Should You Use Autonoma and BrowserStack Together?

The most sophisticated testing setups we see use both. The pattern is straightforward.

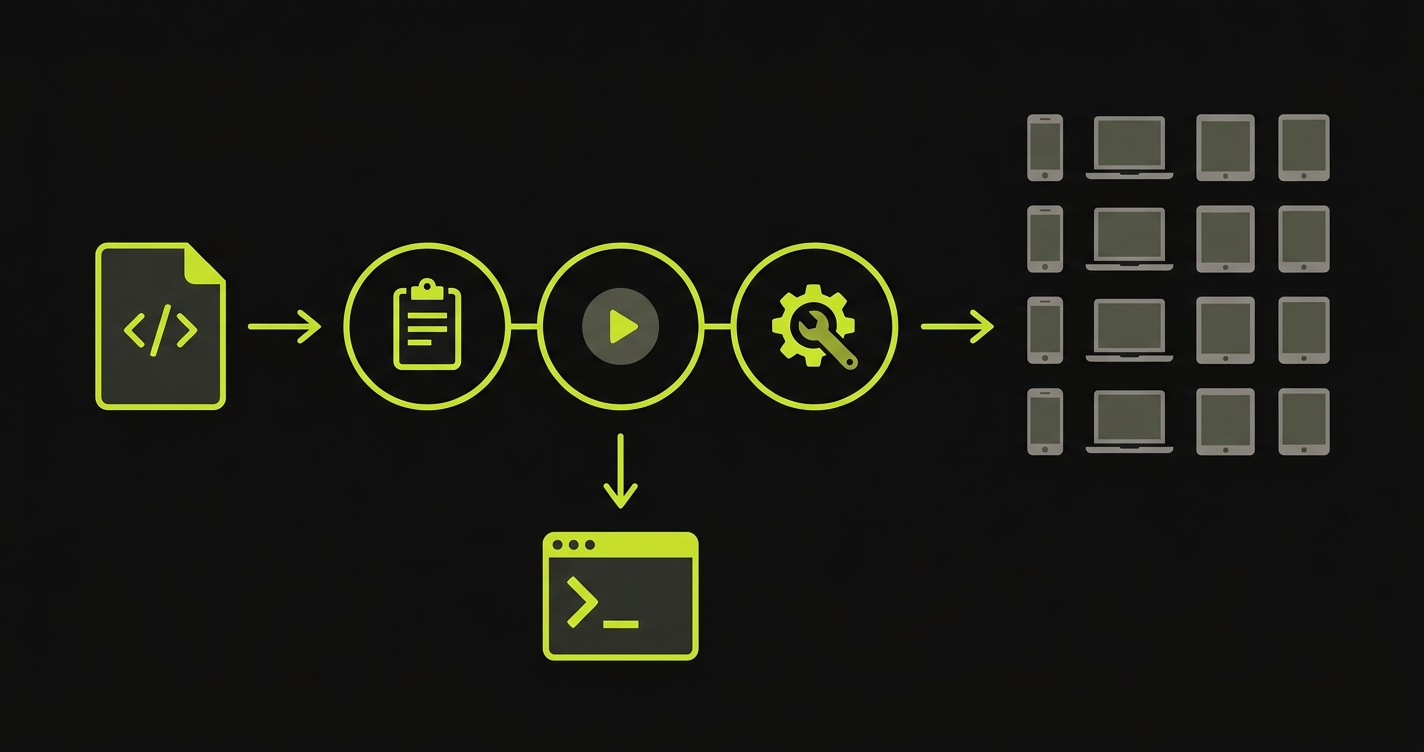

Autonoma generates and runs tests against your application in your own CI/CD environment, covering your critical user flows with self-healing test coverage. That gives you intelligent, maintained coverage across your core product. BrowserStack provides the cross-browser execution layer for the subset of those tests where multi-environment fidelity matters.

In practice: Autonoma's agents write tests for your checkout flow, user authentication, and core product workflows. Those tests run in your standard CI pipeline. When you need to verify that checkout works identically on Safari iOS 15, Chrome on Windows 11, and Firefox on Android, you point those specific tests at BrowserStack for cross-browser verification.

You get AI-generated coverage without maintenance overhead, plus the breadth of BrowserStack's device and browser matrix where it genuinely adds value. Neither tool does the other's job. They're additive.

Use-Case Decision Matrix

| Scenario | Best Choice | Why |

|---|---|---|

| Cross-browser compatibility bugs (IE, Safari quirks) | BrowserStack | Real browser rendering is the point |

| Mobile native app testing | BrowserStack | Real device farm with sensors, network conditions |

| Team ships AI-generated code daily | Autonoma | Tests self-update as fast as code changes |

| Starting from zero test coverage | Autonoma | No scripts needed, agents generate from codebase |

| Test maintenance consuming 20%+ sprint time | Autonoma | Self-healing eliminates the maintenance cycle |

| Regression testing after every PR | Autonoma | Purpose-built for AI-velocity CI/CD |

| Need to verify app on 50+ browser/device combos | BrowserStack | Infrastructure breadth is unmatched |

| Existing Selenium/Playwright suite, need scale | BrowserStack | Drop-in execution layer for existing tests |

| Complex DB state setup for test scenarios | Autonoma | Planner agent handles DB state automatically |

| QA team is the delivery bottleneck | Autonoma | Removes human bottleneck from test creation loop |

| Need cross-browser + intelligent generation | Both together | Complementary layers, not competing tools |

Is Autonoma the Right BrowserStack Alternative for Your Team?

The real question is not "which tool is better?" It's "which layer of the testing stack is actually holding you back?"

If the answer is test creation and maintenance, and it is for most teams shipping AI-generated code at pace, that's the problem we built Autonoma to solve. Three agents that read your codebase, generate tests, execute them, and self-heal when code changes. No scripts to write, no selectors to fix, no coverage gaps to manually close.

If the answer is cross-browser execution, BrowserStack is excellent and has been for fifteen years. A massive real device library, deep integrations with every major test framework, and infrastructure investment that's hard to replicate. For teams with a genuine device matrix problem, it's the right tool.

Most teams we talk to discover their constraint is upstream of infrastructure. They don't need more browsers to run tests on. They need tests worth running. Start there, and the infrastructure question gets much simpler.

Not exactly. BrowserStack provides cloud infrastructure for running existing tests across real browsers and devices. Autonoma generates tests from your codebase, executes them, and self-heals them when code changes. They solve different problems. Many teams use both: Autonoma for intelligent test generation and maintenance, BrowserStack for cross-browser execution where device fidelity matters.

Autonoma focuses on critical user flow coverage through AI-generated tests, not on cross-browser device matrix breadth. If you have a genuine cross-browser compatibility requirement, covering 50+ browser and device combinations including legacy browsers like IE11, BrowserStack's device farm is purpose-built for that. Autonoma and BrowserStack are better understood as complementary than competing.

Autonoma generates tests and runs them in your CI/CD environment. The tests Autonoma produces can be targeted at external execution environments including BrowserStack for cross-browser runs. The integration is at the test execution layer, where Autonoma's generated tests serve as the test suite and BrowserStack provides the browser infrastructure.

BrowserStack does not handle test maintenance; it executes the tests you provide. When your UI changes and a selector breaks, you fix the test. Autonoma's Maintainer agent watches for code changes and self-heals tests automatically. It understands the intent of a test and updates it when the application changes, rather than failing on a stale selector.

Autonoma is purpose-built for teams where AI coding tools are generating code faster than humans can write tests. The Planner agent reads your codebase as it is right now and generates tests from it. When an AI coding tool changes the codebase, the Maintainer agent updates the tests. BrowserStack runs whatever tests exist, but doesn't address the velocity gap between code generation and test coverage.

A BrowserStack alternative needs to match the specific capability you're replacing. If you need cross-browser execution across hundreds of device combinations, the alternative needs a real device farm and broad browser support. If your underlying need is test coverage without manual maintenance, an AI-native tool like Autonoma addresses a different gap: generating tests from code, not providing execution infrastructure. Most teams discover they need both layers covered.

For startups with zero or minimal test coverage, Autonoma is a strong fit because it generates tests from your codebase without requiring an existing test suite. BrowserStack assumes you already have tests to run. Autonoma also offers a free tier, which aligns with startup budgets. If your startup needs tests, start with Autonoma. If you need cross-browser device coverage for existing tests, add BrowserStack later.

Autonoma offers a free tier for AI-powered test generation and execution. For open-source infrastructure alternatives, Playwright and Selenium Grid provide free local cross-browser execution. BrowserStack itself offers limited free trials but no permanent free plan for Automate. The right free option depends on whether you need test creation (Autonoma) or test execution infrastructure (Playwright/Selenium Grid).