Generative AI testing is the discipline of verifying code produced by AI tools (Claude, Cursor, Copilot, and similar assistants) before it reaches production. Unlike traditional QA, which assumed humans wrote the code and could reason about its intent, generative AI software testing must account for a new failure class: code that compiles, passes existing tests, and still behaves incorrectly. The bugs AI introduces are subtle: wrong business logic, missing authorization checks, and outdated dependency patterns. Most existing test suites were designed around how humans write code, not how AI does. This article covers what breaks differently with AI-generated code, why your current tests probably miss it, and what a purpose-built testing strategy looks like.

You ship with AI now. The tests have not caught up.

This is not a coverage problem. You could hit 90% coverage and still miss the exact failure mode AI tools introduce most often: logic that compiles, reads correctly, and produces the wrong output. Testing AI-generated code is fundamentally different from testing human-written code. Your test suite was designed around how your team writes code. AI writes differently. It makes different assumptions. It drops different safeguards. And it does this consistently, in predictable patterns that your existing tests walk right past.

The teams feeling this most acutely are not the ones who skipped testing. They are the ones who have strong QA cultures and are now noticing that generative AI software testing requires something their current setup does not provide. If you have shipped AI-written code and wondered whether it was actually verified, the answer is probably: not the way you think.

(This article focuses on testing what AI generates. For how AI can generate the tests themselves, see how generative AI testing tools work.)

What Actually Breaks When AI Writes Your Code

Start with the most common failure and work outward from there.

Subtle Logic Inversions

AI models generate plausible code. It reads correctly, compiles cleanly, and fits the surrounding context. Plausible is not the same as correct. A pricing function that applies discounts in the wrong order. A permission check that returns true where it should return false. An aggregation query that sums the right column but groups by the wrong key. Each of these passes type checks, passes linting, and often passes unit tests that check structure rather than outcomes.
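To make the discount-order case concrete, here is a minimal sketch. The business rule (percentage discount first, then a flat coupon) and both function names are assumptions made for illustration, not taken from any real codebase.

```python
from decimal import Decimal


def price_intended(subtotal, percent_off, flat_off):
    """Assumed rule: apply the percentage discount first, then the flat coupon."""
    after_percent = subtotal * (Decimal(1) - percent_off / Decimal(100))
    return max(after_percent - flat_off, Decimal(0))


def price_plausible(subtotal, percent_off, flat_off):
    """Reads just as well, but applies the flat coupon before the percentage."""
    after_flat = max(subtotal - flat_off, Decimal(0))
    return after_flat * (Decimal(1) - percent_off / Decimal(100))


def structural_check(result):
    """The kind of assertion that checks shape, not outcome."""
    return isinstance(result, Decimal) and result >= 0


args = (Decimal(100), Decimal(10), Decimal(20))
print(structural_check(price_intended(*args)), price_intended(*args))
print(structural_check(price_plausible(*args)), price_plausible(*args))
```

Both versions pass the structural check. Only an assertion on the computed value (70 versus 72 for these inputs) tells them apart.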

Dropped Security Patterns

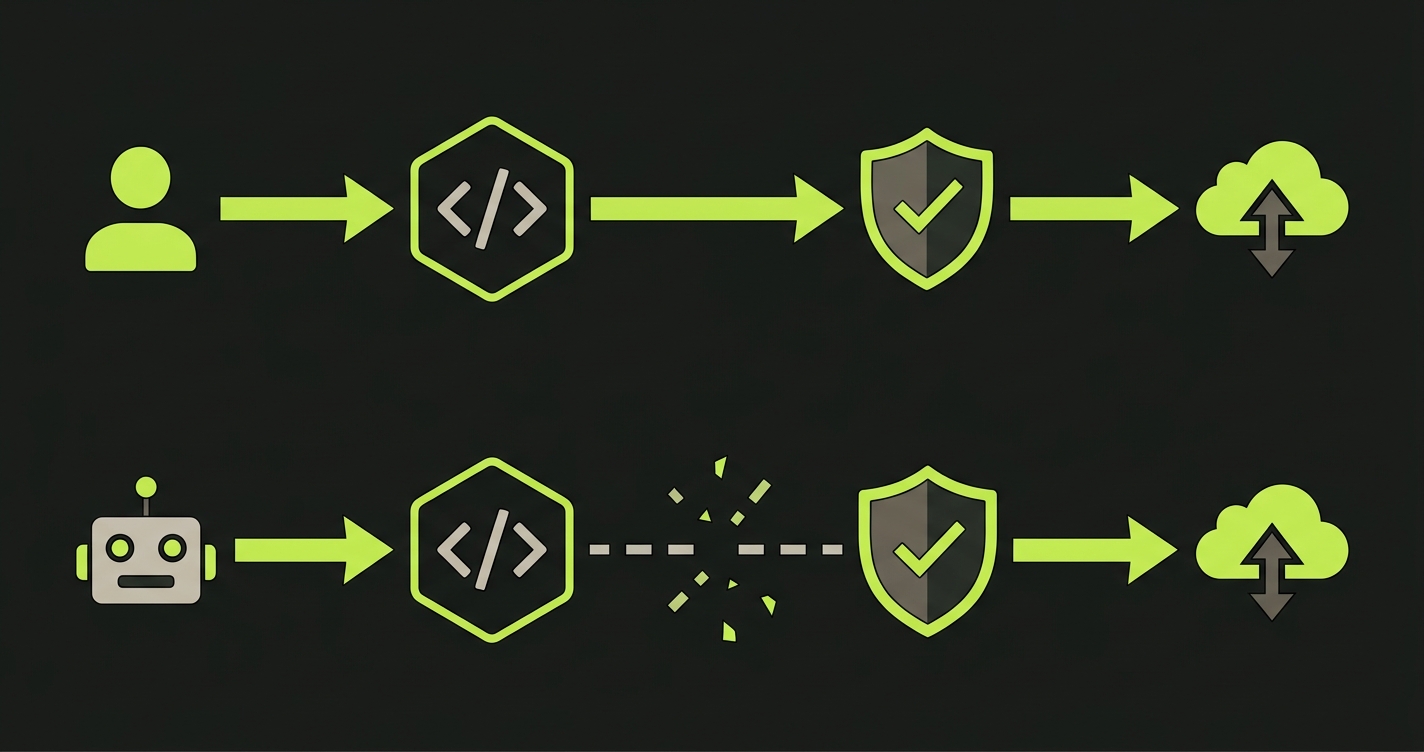

AI-generated code regularly skips the defensive steps that experienced developers apply automatically. String interpolation in database queries produces SQL injection vectors. Authorization checks get omitted on internal API routes under the assumption that only authenticated users reach them. Input validation disappears on endpoints where the model judged the input "safe enough" from context. The AI code review tools category exists precisely because these gaps are common enough to warrant dedicated tooling. Yet review catches them before deployment only if someone is actually running it on every AI-generated diff.
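The string-interpolation problem is easy to reproduce. The sketch below uses Python's standard `sqlite3` module with an in-memory database. The table, function names, and payload are hypothetical and chosen only to show the difference between interpolating input and binding it as a parameter.

```python
import sqlite3


def make_db():
    """Create a throwaway in-memory database with two users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, name):
    # String interpolation: the input becomes part of the SQL text itself.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameter binding: the input is passed as data, never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()
payload = "nobody' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # every row comes back
print(find_user_safe(conn, payload))         # no user has that literal name
```

A test that only calls either function with `"alice"` passes for both. It takes a hostile input in the test suite to expose the difference.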

Dependency Drift

Ask an AI assistant to add a package and it will suggest whatever was current as of its training cutoff. That might be six months ago. It might be eighteen months ago. The suggested version could conflict with your lockfile, introduce a known CVE, or pull in a transitive dependency that breaks your build on the next install. The vibe coding failures we have documented trace multiple production incidents back to dependency decisions AI made confidently on outdated information.
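A check like this can run automatically on every AI-suggested dependency change. The sketch below is deliberately simplified: the package name, the lockfile contents, and the "first patched release" table are all made up, and the version parser only handles plain dotted integers. A real project would lean on its package manager's resolver and a vulnerability database instead.

```python
def parse_version(text):
    """Turn '2.31.0' into (2, 31, 0). Handles plain dotted integers only."""
    return tuple(int(part) for part in text.split("."))


def check_suggestion(package, suggested, locked, minimum_safe):
    """Return a list of problems with an AI-suggested version pin."""
    problems = []
    wanted = parse_version(suggested)
    if package in locked and wanted != parse_version(locked[package]):
        problems.append(
            f"{package}=={suggested} conflicts with lockfile pin {locked[package]}"
        )
    if package in minimum_safe and wanted < parse_version(minimum_safe[package]):
        problems.append(
            f"{package}=={suggested} is below the first patched release "
            f"{minimum_safe[package]}"
        )
    return problems


# Hypothetical data standing in for a lockfile and an advisory feed.
LOCKED = {"examplelib": "3.2.1"}
MINIMUM_SAFE = {"examplelib": "3.1.0"}

for problem in check_suggestion("examplelib", "2.9.4", LOCKED, MINIMUM_SAFE):
    print(problem)
```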

The "Works but Wrong" Problem

The "works but wrong" problem scales with team size. This is the failure mode that is hardest to detect because nothing breaks visibly. The user flow completes. The API returns 200. The data saves. Something is off. A calculation rounds incorrectly, a filter includes records it shouldn't, a notification fires at the wrong time. The test suite has no assertion that catches the deviation because the deviation is in business logic, not in structure.

Why Your Existing Tests Miss AI-Generated Bugs

The test suite you have today was written against a contract. That contract describes what the application was supposed to do when your developers wrote it. The assertions reflect your developers' mental model of the code: the edge cases they thought to handle, the behaviors they thought to verify, the inputs they thought to cover.

AI-generated code breaks that contract in a specific way. It does not break the structure your tests check. It breaks the intent your tests assumed.

A unit test that verifies a function returns a UserProfile object does not catch a function that returns the wrong user's profile. An integration test that confirms a payment endpoint returns HTTP 200 does not catch a payment that charges the wrong amount. A test that exercises the happy path of a checkout flow does not catch a discount that applies twice because the AI-generated promotion logic evaluates the cart twice.
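The first of those examples fits in a few lines. In this sketch, `UserProfile`, the sample data, and the lookup bug (treating a 1-based ID as a list index) are all hypothetical. The point is that a type-level assertion and a behavior-level assertion give different answers on the same return value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserProfile:
    user_id: int
    email: str


PROFILES = [
    UserProfile(1, "ana@example.com"),
    UserProfile(2, "ben@example.com"),
    UserProfile(3, "cy@example.com"),
]


def get_profile(user_id):
    # Plausible but wrong: uses the 1-based ID as a 0-based list index.
    return PROFILES[user_id]


profile = get_profile(1)

# Structural assertion: passes, because a UserProfile did come back.
print(isinstance(profile, UserProfile))

# Behavioral assertion: fails, because it is someone else's profile.
print(profile.user_id == 1)
```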

The role of AI in software testing has expanded rapidly, but most of that expansion is on the generation side, not the verification side. This is the core argument for treating automated code review vs testing as complementary disciplines rather than substitutes: code review catches structural and pattern problems, while behavioral testing catches what the code actually does. When AI generates code, the structural problems are less common (the code is syntactically competent) and the behavioral problems are more common (the intent was lost in translation from prompt to implementation).

There is a second problem layered underneath this. Tests cover the code that existed when the tests were written. If an AI assistant rewrites a service, the new implementation may have entirely different behavior in paths the original tests never exercised. Coverage numbers do not drop. The tests still pass. The new behavior is simply untested, not because coverage fell, but because the tests map to the old implementation's surface area rather than the new one's.

The False Green Problem

Green CI is the signal teams trust. It is also the signal AI-generated code most reliably fakes.

Consider a realistic scenario. Your team uses Cursor to refactor a checkout service. The refactored code handles the same inputs, returns the same types, and satisfies every existing test assertion. Cursor was confident; it showed you a diff that looked clean. The tests pass. The PR merges.

Three days later, a customer reports that their applied promo code did not reduce the total. The promotion service integration, rewritten as part of the same refactor, now passes the discount amount as a string instead of a number. The downstream calculation silently coerces it. The result is wrong. No test caught it because no test was asserting on the final calculated total with a specific promo code applied.

This is a false green: tests pass, behavior is wrong.
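The scenario can be sketched in a few lines. Python does not coerce strings in arithmetic the way JavaScript does, so this version models the silent failure with a lenient fallback that treats any non-integer discount as zero. That fallback, the function names, and the `SAVE20` code are assumptions made for illustration.

```python
def promo_service_refactored(code):
    # Hypothetical refactor: the amount is now a string instead of an integer.
    return {"code": code, "discount_cents": "2000"}


def checkout(cart_total_cents, promo_code):
    promo = promo_service_refactored(promo_code)
    discount = promo["discount_cents"]
    # Lenient handling: anything that is not an int silently becomes "no discount".
    applied = discount if isinstance(discount, int) else 0
    return {"status": 200, "total_cents": cart_total_cents - applied}


def existing_test_passes(response):
    """Stand-in for the existing suite: checks status and type, not the amount."""
    return response["status"] == 200 and isinstance(response["total_cents"], int)


response = checkout(10_000, "SAVE20")
print(existing_test_passes(response))  # the suite stays green
print(response["total_cents"])         # the customer is charged the full amount
```

The only assertion that goes red here is one on the final total for a cart with a specific promo code applied.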

False greens cluster in predictable places. Integration boundaries are the most common. Where one service's output becomes another service's input, AI-generated code may get the contract slightly wrong in ways that only surface at runtime. Business logic calculations are a close second, because unit tests typically check structure rather than computed values against real inputs. Security boundaries are the most dangerous: an AI-generated auth middleware that appears to work correctly during testing but skips authorization on a specific request path will pass every happy-path test you have.
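For the security boundary case, one inexpensive defense is to exercise every protected route without credentials rather than only the happy path. The route list and the buggy check below are hypothetical. The sketch shows the shape of the test, not a real middleware API.

```python
from typing import Optional

PROTECTED_ROUTES = ["/orders", "/orders/export", "/admin/users"]


def is_authorized(path: str, user: Optional[dict]) -> bool:
    # Plausible but wrong: the export path is waved through, perhaps because
    # it looked like a static download when the code was generated.
    if path.endswith("/export"):
        return True
    return user is not None and bool(user.get("authenticated"))


def unauthenticated_leaks(check):
    """Return every protected route that admits a request with no user."""
    return [path for path in PROTECTED_ROUTES if check(path, None)]


# Happy-path test: passes, and says nothing about the hole.
print(is_authorized("/orders", {"authenticated": True}))

# Negative test across all routes: surfaces the skipped path.
print(unauthenticated_leaks(is_authorized))
```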

The pattern repeats across codebases that ship with AI assistance: the code is functionally plausible, the tests confirm plausibility, and the edge cases that actually matter for correctness fall into the gap between them.

How to Test AI-Generated Code: A Strategy That Works

The answer is not "write more unit tests." Unit tests written against AI-generated code inherit the same problem. You are asserting against what the AI produced, not against what the application was supposed to do.

The shift is from verifying structure to verifying behavior. Three things change.

Behavioral Testing Over Unit Testing

Instead of testing individual functions in isolation, test complete user-facing scenarios end to end. A behavioral test for checkout does not test the applyDiscount function. It tests that a user who adds a valid promo code to a $100 cart checks out paying $80. That assertion survives refactors, catches the wrong-type bug described above, and validates intent rather than implementation. The testing pyramid does not disappear, but the weight shifts upward when AI is generating the implementation layer.
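Here is roughly what that checkout assertion could look like. The `Checkout` class is a hypothetical in-memory stand-in for a real application, and the sketch assumes the promo code is worth 20 percent. What matters is that the test drives the public flow and asserts on the amount paid, never on `applyDiscount` or any other internal.

```python
class Checkout:
    """Hypothetical stand-in for the application under test."""

    PROMOS = {"SAVE20": 20}  # promo code -> percent off (assumed)

    def __init__(self):
        self._items = []
        self._promo = None

    def add_item(self, name, price_cents):
        self._items.append((name, price_cents))

    def apply_promo(self, code):
        if code not in self.PROMOS:
            raise ValueError(f"unknown promo code: {code}")
        self._promo = code

    def pay(self):
        """Return the amount charged, in cents."""
        subtotal = sum(price for _, price in self._items)
        percent = self.PROMOS[self._promo] if self._promo else 0
        return subtotal * (100 - percent) // 100


def test_valid_promo_on_100_dollar_cart_pays_80():
    checkout = Checkout()
    checkout.add_item("widget", 10_000)
    checkout.apply_promo("SAVE20")
    assert checkout.pay() == 8_000
```

Written in the pytest style, the test function keeps passing if the discount logic is rewritten, as long as the customer still pays $80.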

Contract Testing at Service Boundaries

AI-generated code is most likely to break at integration points. Every service boundary, especially any interface touched by AI-assisted refactoring, should have contract tests that verify the shape, type, and valid ranges of data crossing that boundary. A type mismatch that causes a silent coercion in production will fail immediately in a contract test. This is the category of test that catches the promo-code-as-string failure before deployment.
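A contract check for the promo payload might look like the sketch below. The field names, the cents-based amount, and the upper bound are assumptions. In practice the contract would come from whatever the two services have actually agreed on, and a schema library could replace the hand-written checks.

```python
def validate_promo_payload(payload):
    """Return a list of contract violations; an empty list means the payload conforms."""
    errors = []

    # Shape: exactly the agreed fields, no more and no fewer.
    if set(payload) != {"code", "discount_cents"}:
        return ["payload must contain exactly 'code' and 'discount_cents'"]

    # Type: the code is a non-empty string.
    if not isinstance(payload["code"], str) or not payload["code"]:
        errors.append("code must be a non-empty string")

    # Type and range: the amount is a real integer within agreed bounds.
    amount = payload["discount_cents"]
    if isinstance(amount, bool) or not isinstance(amount, int):
        # bool is a subclass of int in Python, so it is excluded explicitly.
        errors.append("discount_cents must be an integer")
    elif not 0 <= amount <= 1_000_000:
        errors.append("discount_cents is out of range")

    return errors


print(validate_promo_payload({"code": "SAVE20", "discount_cents": 2000}))
print(validate_promo_payload({"code": "SAVE20", "discount_cents": "2000"}))
```

Run against the producer's real output in CI, a check like this turns a silent coercion into an immediate, named failure.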

Regression Testing on AI-Generated Diffs

When an AI assistant rewrites or refactors a file, treat the entire surface area of that file as untested until proven otherwise. Behavioral tests that exercise every flow touching the modified code need to run before that change merges. This is the shift-left argument applied specifically to AI code generation: the earlier you catch AI-introduced regressions, the lower the cost.

Human Review for Security and Business Logic

Automated tests alone cannot reliably catch problems in security patterns or business logic. Security patterns (input validation, authorization checks, SQL parameterization) benefit from a human reviewer who understands the threat model. Business logic correctness (pricing, permissions, data access rules) benefits from a product owner or domain expert who can read test output and say "that number is wrong."

This is where Autonoma fits. We built Autonoma specifically because the testing gap in AI-assisted codebases cannot be closed by writing more tests manually. When a Planner agent reads your codebase, including everything your AI tools have generated, it builds tests from what the code actually does, not from what a developer remembers the code was supposed to do. The Automator runs those tests against your live application. When AI rewrites a service tomorrow, the Maintainer updates the tests to match the new implementation while flagging behavioral deviations. The codebase is the spec. The AI output gets verified against it, automatically, on every change. For a practical guide to applying this to an existing application, how to test a vibe-coded app walks through the same process step by step.

Measuring AI Code Quality: Metrics Beyond "Does It Compile"

If your current quality metric is passing CI, you are measuring the floor, not the ceiling.

A more complete quality picture for AI-assisted codebases looks at several dimensions together. Behavioral coverage, the percentage of user-facing flows that have end-to-end assertions, gives you a more honest signal than line coverage. A codebase with 85% line coverage and 20% behavioral coverage has a lot of tested code that verifies nothing meaningful about user experience.

Regression rate on AI-generated changes is a leading indicator of a testing gap. If a disproportionate share of production bugs trace back to AI-assisted commits, the problem is almost always the false green pattern: tests that pass on the new code but do not verify the behaviors the new code was meant to preserve. Tracking regression rate by commit source separates AI-generated drift from traditional bugs.
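Computing the metric is simple once commits are labeled. The sketch below assumes each commit record carries a `source` tag and a `caused_regression` flag. How those labels get assigned (commit trailers, PR labels, incident postmortems) is left open, and the sample data is made up purely to show the calculation.

```python
from collections import Counter


def regression_rate_by_source(commits):
    """Return {source: share of commits from that source linked to a regression}."""
    totals = Counter()
    regressions = Counter()
    for commit in commits:
        totals[commit["source"]] += 1
        if commit["caused_regression"]:
            regressions[commit["source"]] += 1
    return {source: regressions[source] / totals[source] for source in totals}


# Illustrative records only, not measured data.
COMMITS = [
    {"sha": "a1", "source": "ai", "caused_regression": True},
    {"sha": "a2", "source": "ai", "caused_regression": False},
    {"sha": "a3", "source": "ai", "caused_regression": True},
    {"sha": "a4", "source": "ai", "caused_regression": False},
    {"sha": "h1", "source": "human", "caused_regression": False},
    {"sha": "h2", "source": "human", "caused_regression": True},
    {"sha": "h3", "source": "human", "caused_regression": False},
    {"sha": "h4", "source": "human", "caused_regression": False},
]

print(regression_rate_by_source(COMMITS))
```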

Security scan coverage is a distinct metric from functional test coverage. A codebase with full unit test coverage can still have SQL injection, missing auth checks, and exposed secrets if no security-focused scan is running on AI-generated diffs. Dependency health (tracking whether AI-suggested packages are current, free of known vulnerabilities, and compatible) should be automated and run on every change, not reviewed manually at the end of a sprint.

The false green rate is the hardest to measure directly, but a proxy is available: how often production reveals a bug that no test caught, specifically in a flow that AI recently modified. Teams that track this find the number is higher than they expected, and it drops significantly once behavioral testing coverage increases on AI-generated change sets.

A complete generative AI testing strategy tracks these metrics together, not in isolation. The gap between "AI writes code" and "that code is verified" is not a hypothetical concern. It is the specific failure mode that makes AI-assisted development risky at scale. The solution is not slowing down AI code generation. It is building a verification layer that moves at the same speed.

Generative AI testing is the practice of verifying code produced by AI tools like Claude, Cursor, and Copilot before it reaches production. It is distinct from using AI to help write tests. Instead, it focuses on the QA methodology required when AI is the author of the code under test. The challenge is that AI-generated code introduces failure modes that traditional test suites were not designed to catch: subtle logic bugs, missing security checks, and behavior that satisfies test structure without satisfying business intent.

Existing tests were written against a specific contract: what the application was supposed to do when human developers wrote it. AI-generated code can satisfy that contract structurally (returning the right types, hitting the right endpoints) while violating it behaviorally. The assertions in your test suite reflect your developers' mental model of the code. When AI rewrites a service, the new implementation may behave differently in paths the original tests never explicitly covered. Coverage numbers stay the same; the untested surface area grows.

The most common categories are: subtle logic errors where AI-generated code produces plausible but incorrect results (wrong calculation order, inverted conditions); missing security patterns like absent authorization checks, SQL injection from string interpolation, and unvalidated inputs; dependency drift from outdated package suggestions based on training data cutoffs; and silent type coercions at integration boundaries where one AI-generated component passes data in a format that another component accepts but processes incorrectly.

A false green occurs when all tests pass but the application is still behaving incorrectly. AI-generated code is particularly prone to this because AI tends to produce structurally sound code that satisfies test assertions on shape and type while getting the intent wrong. A classic example: an AI refactor changes a discount amount from a number to a string. Unit tests pass because the function returns a value. The integration test passes because the API returns 200. Production breaks because the string silently coerces to a wrong number in a downstream calculation.

Three shifts matter most. First, prioritize behavioral tests over unit tests. Test complete user-facing scenarios end-to-end, not individual functions in isolation. Behavioral tests verify intent, not structure, and survive AI refactors without becoming false greens. Second, add contract tests at every service boundary touched by AI-generated code, since integration points are where type and format mismatches most commonly slip through. Third, treat any AI-modified file as having untested surface area until behavioral regression tests confirm the flows it touches still work.

Yes. Autonoma's Planner agent reads your codebase, including all AI-generated code, and generates tests derived from what the code actually does, not from what a developer remembers it should do. This directly addresses the false green problem: tests are built from the current implementation's behavior, so AI-introduced deviations surface as failures rather than silently passing. When AI modifies code in the future, the Maintainer agent updates tests and flags behavioral changes that represent regressions.