The testing pyramid is outdated for lean teams. The pyramid prioritized unit tests because E2E tests were expensive to write and brutally expensive to maintain. That constraint has changed. Agentic testing platforms like Autonoma access your codebase and autonomously generate, execute, and maintain end-to-end tests, eliminating the cost that made E2E prohibitive. For teams of 3 to 20, the right model is inverted: start with E2E coverage of your critical paths, add unit tests for complex business logic, and skip the middle layer until it earns its place.

I've watched five-person teams spend entire sprints building unit test suites while their checkout flow silently broke in production. The bugs that actually hurt users were living in the seams between systems, exactly where unit tests cannot reach, and the pyramid told everyone to look the other way. Here's why the original model, heavy on unit tests at the base with only a thin sliver of E2E tests at the top, made perfect sense in 2009 but makes almost no sense in 2026.

What Is the Testing Pyramid?

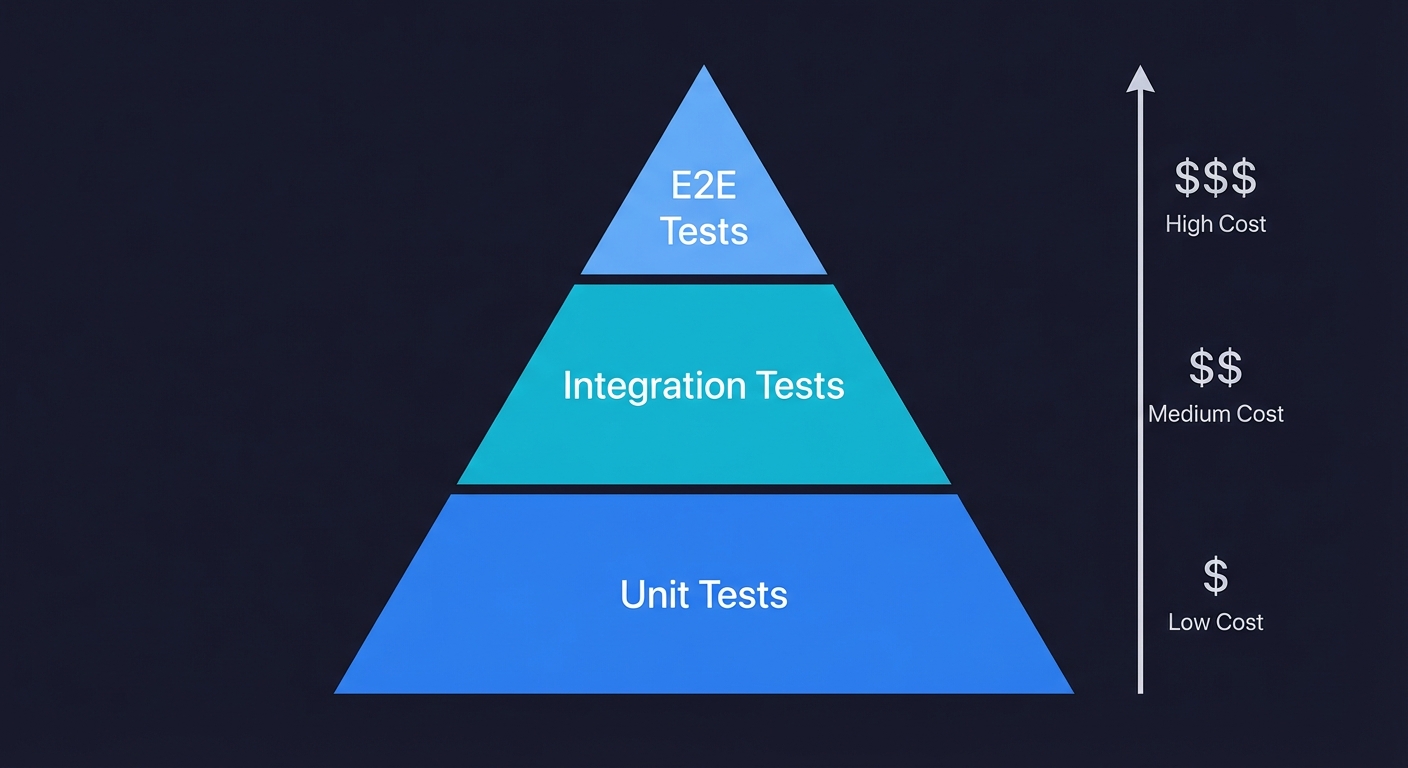

The testing pyramid is a visual framework for balancing the proportion of different test types in a software testing strategy. It places many fast, cheap unit tests at the base, a smaller layer of integration tests in the middle, and a small number of slow, expensive end-to-end (E2E) tests at the top. The model was introduced by Mike Cohn in Succeeding with Agile (2009) and became the default testing strategy taught across the industry. Its core logic is economic: invest most heavily in the tests that are cheapest to write and maintain, and minimize investment in the tests that are most expensive.

Where the Testing Pyramid Came From (and Why It Stuck)

Mike Cohn introduced the testing pyramid in Succeeding with Agile in 2009. The idea was straightforward: unit tests are cheap, fast, and stable. Integration tests are slower and more fragile. E2E tests are the slowest, most expensive, and most brittle of all. Therefore, write a lot of unit tests, some integration tests, and very few E2E tests.

This was not wrong at the time. In 2009, writing an end-to-end test meant wiring together Selenium scripts that broke every time someone changed a button label. Running those tests meant maintaining browser farms. Debugging failures meant sifting through screenshot diffs and timing-dependent log files. A single E2E test could take a senior engineer an entire day to write and half a day to fix every time the UI changed.

The economics were real. Unit tests cost pennies to write and run. E2E tests cost dollars. The pyramid was a perfectly rational response to those costs.

But here's what happened: the testing pyramid became dogma. Teams stopped asking "what testing strategy fits our constraints?" and started reciting "write more unit tests" as a reflexive answer to every quality problem.

Tools like Autonoma now make it possible to flip that model: AI agents generate and maintain E2E tests from your codebase, so the cost that made E2E prohibitive no longer applies.

The Testing Pyramid's Hidden Assumption

The pyramid rests on one assumption that nobody talks about: E2E tests are expensive to create and maintain. Every argument for the pyramid flows from this single premise.

Unit tests should outnumber E2E tests because E2E tests are expensive. Integration tests are the compromise layer because E2E tests are expensive. You should "push testing down" the pyramid because E2E tests are expensive.

Remove that assumption and the entire model collapses.

If E2E tests were as cheap to create and maintain as unit tests, no rational person would choose to write 500 unit tests that verify functions in isolation over 50 E2E tests that verify the actual user experience. The unit tests give you confidence that individual pieces work. The E2E tests give you confidence that your product works. For a team shipping to production three times a week with five engineers, "does the product work" is the only question that matters at deploy time.

The distinction between unit and integration testing matters for understanding what each layer covers. But the question of how much of each to write is purely economic. And the economics have changed.

Unit Testing vs Integration Testing: What the Pyramid Gets Wrong

The debate over unit versus integration testing usually gets framed as a question of granularity. Unit tests verify individual functions. Integration tests verify boundaries between components. The pyramid says write far more of the former than the latter. This framing misses the point entirely.

The real question is: which layer catches the bugs that actually ship to production?

Unit tests catch logic errors inside functions: a tax calculator that rounds wrong, a date parser that mishandles timezones. These bugs are real, but they are also the easiest to spot during code review and the least likely to survive to production. Integration tests catch boundary failures: your API returns a 200 but the payload shape changed, your form submits to an endpoint that now expects different headers. These bugs are harder to spot and far more damaging.
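For the first category, a unit test is exactly the right tool. Here is a minimal sketch of the tax-rounding case. It assumes amounts are stored as integer cents and rates as basis points (825 bps = 8.25%), and it assumes half-up rounding, which may not match your jurisdiction's rule. Both function names are hypothetical:

```typescript
// Hypothetical tax helper. Amounts are integer cents and rates are basis points
// (825 bps = 8.25%), so rounding happens in exactly one explicit place.
function taxCents(priceCents: number, rateBps: number): number {
  if (!Number.isInteger(priceCents) || !Number.isInteger(rateBps)) {
    throw new Error("priceCents and rateBps must be integers");
  }
  // Math.round rounds exact halves up for positive values (82.5 -> 83).
  return Math.round((priceCents * rateBps) / 10_000);
}

// Hypothetical buggy variant that truncates instead of rounding:
// the kind of logic error a single unit test pins down.
function taxCentsTruncating(priceCents: number, rateBps: number): number {
  return Math.floor((priceCents * rateBps) / 10_000);
}

// $10.00 at 8.25% is 82.5 cents: the correct helper charges 83, the buggy one 82.
console.log(taxCents(1000, 825)); // 83
console.log(taxCentsTruncating(1000, 825)); // 82
```

Note what this test cannot tell you: whether the checkout page ever calls `taxCents` with the right inputs.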

Kent C. Dodds challenged the pyramid with his "testing trophy" model, arguing for mostly integration tests, since that is where you get the highest confidence per test. He was right about the direction. But I'd push further. For lean product teams, the highest-confidence layer is not integration tests but E2E tests. Integration tests still require you to decide which boundaries to test, and you will always miss some. An E2E test exercises every boundary its user flow crosses in a single run. When the cost of writing E2E tests drops to near zero (and it has), choosing to write 500 unit tests instead of 50 E2E tests is not a best practice. It is a misallocation of engineering time.

Unit vs Integration vs E2E: A Quick Comparison

| Aspect | Unit Tests | Integration Tests | E2E Tests |
|---|---|---|---|
| Scope | Single function or component | Boundaries between systems | Full user workflow |
| Speed | Milliseconds | Seconds | Seconds to minutes |
| Cost to maintain | Low | Medium | High (without agentic tooling) |
| Flakiness risk | Very low | Moderate | High (without modern frameworks) |
| Best for catching | Logic errors in isolation | Contract and boundary failures | User-facing workflow breaks |
| Typical ratio in pyramid | 70% | 20% | 10% |

Why Unit Tests Give You False Confidence

Here is what happens at nearly every early-stage company I've worked with or advised. The team writes 400 unit tests. CI is green. Coverage is at 78%. Everyone feels great.

Then someone deploys on a Friday afternoon. The signup flow is broken because a frontend form field was renamed but the validation logic still references the old field name. The API endpoint works perfectly in isolation. The form component renders correctly in isolation. Every unit test passes. The user sees a blank error message and bounces.

This is not a contrived example. This is the most common category of production bug at startups: integration failures at the seams between systems that unit tests structurally cannot detect.
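Here is a deliberately tiny sketch of the renamed-field bug described above. Both functions and the field names (`email`, `emailAddress`) are hypothetical stand-ins for a form component and an API validator. Each one passes a test written against its own assumptions. Only composing them, which is what an end-to-end flow does, exposes the mismatch:

```typescript
type Payload = Record<string, string | undefined>;

// Hypothetical form component logic. After a refactor it emits `emailAddress`.
function buildSignupPayload(form: { emailAddress: string; password: string }): Payload {
  return { emailAddress: form.emailAddress, password: form.password };
}

// Hypothetical API validation that still reads the old `email` field.
function validateSignup(payload: Payload): string[] {
  const errors: string[] = [];
  const email = payload["email"];
  const password = payload["password"];
  if (!email || !email.includes("@")) errors.push("Invalid email");
  if (!password || password.length < 8) errors.push("Password must be at least 8 characters");
  return errors;
}

const form = { emailAddress: "ada@example.com", password: "correct-horse" };

// "Unit test" of the form: the payload contains the address. Passes.
const formTestPasses = buildSignupPayload(form)["emailAddress"] === "ada@example.com";

// "Unit test" of the validator, written against the old contract. Passes.
const validatorTestPasses =
  validateSignup({ email: "ada@example.com", password: "correct-horse" }).length === 0;

// The composed flow, which is what a real signup exercises. It reports "Invalid email".
const composedErrors = validateSignup(buildSignupPayload(form));

console.log(formTestPasses, validatorTestPasses, composedErrors);
```

A type checker can catch this particular mismatch when both sides share one type, but across an HTTP boundary they often don't, which is why the seam stays invisible until something exercises the whole flow.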

Unit tests verify that calculateTotal(items) returns the right number. They do not verify that the checkout button actually submits the order. They do not verify that the confirmation email arrives. They do not verify that the Stripe webhook updates the subscription status in your database. These are the things that break in production, and they are invisible to unit tests by design.

I'm not arguing that unit tests are useless. They are excellent for complex business logic: pricing calculations, permission systems, state machines. If you have a function with 15 edge cases, a unit test suite is the right tool. But if your quality strategy starts and ends with unit tests, you are optimizing for developer confidence at the expense of user confidence.
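As an illustration of the kind of logic where unit tests earn their keep, here is a small table-driven sketch of a subscription state machine. The states, events, and transitions are hypothetical. In Jest you would typically express the table with `test.each`, but this version stays framework-free so it runs on its own:

```typescript
type SubState = "trialing" | "active" | "past_due" | "canceled";
type SubEvent = "payment_succeeded" | "payment_failed" | "trial_ended" | "cancel_requested";

// Hypothetical transition table. Events not listed for a state leave it unchanged.
const transitions: Record<SubState, Partial<Record<SubEvent, SubState>>> = {
  trialing: { payment_succeeded: "active", trial_ended: "past_due", cancel_requested: "canceled" },
  active: { payment_failed: "past_due", cancel_requested: "canceled" },
  past_due: { payment_succeeded: "active", cancel_requested: "canceled" },
  canceled: {}, // terminal: nothing revives a canceled subscription in this sketch
};

function nextState(state: SubState, event: SubEvent): SubState {
  return transitions[state][event] ?? state;
}

// Each row is one edge case: [from, event, expected].
const cases: Array<[SubState, SubEvent, SubState]> = [
  ["trialing", "payment_succeeded", "active"],
  ["trialing", "trial_ended", "past_due"],
  ["trialing", "cancel_requested", "canceled"],
  ["active", "payment_succeeded", "active"],
  ["active", "payment_failed", "past_due"],
  ["active", "cancel_requested", "canceled"],
  ["past_due", "payment_failed", "past_due"],
  ["past_due", "payment_succeeded", "active"],
  ["canceled", "payment_succeeded", "canceled"],
];

const failures = cases.filter(([from, event, expected]) => nextState(from, event) !== expected);
console.log(`${cases.length - failures.length}/${cases.length} transitions pass`); // 9/9 transitions pass
```

Adding an edge case is one more row in the table, which is why this style scales well to functions with many branches.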

The Real Cost of the Traditional Pyramid for Lean Teams

Here is what the testing pyramid actually costs a five-person startup:

Weeks 1-2: The team decides to "do testing right." They set up Jest, write unit tests for their API handlers, add component tests for their React forms. Coverage climbs. Morale is high.

Weeks 4-6: The test suite has 300 unit tests. CI takes 8 minutes. Some tests are starting to feel like busywork: they check that a function returns what you told it to return. But the team pushes forward because the pyramid says more unit tests are better.

Weeks 8-10: Someone writes the first E2E test with Playwright. It takes two days. It tests the signup flow. It works on the developer's machine but fails in CI because of timing issues. The engineer spends another day adding waits and retries. It passes. Barely.

Week 12: The signup flow changes. The E2E test breaks. Nobody has time to fix it. It gets skipped. Then another E2E test breaks. Then a third. Within a month, the E2E suite is entirely test.skip(). The team is back to manual smoke testing before deploys, except now they also have 400 unit tests to maintain, and those are starting to break on refactors too.

Week 16: A production bug hits the checkout flow. All unit tests were green. The team could have caught it with one E2E test that nobody had time to write or maintain.

This is the standard trajectory. I've watched it happen at a dozen companies. The testing pyramid promises quality through volume. What it delivers is maintenance burden without proportional protection. And when tests start failing intermittently for reasons unrelated to real bugs, teams abandon them entirely. The regression testing maintenance problem is well documented, and the pyramid makes it worse by encouraging a high volume of tests that nobody has time to maintain.

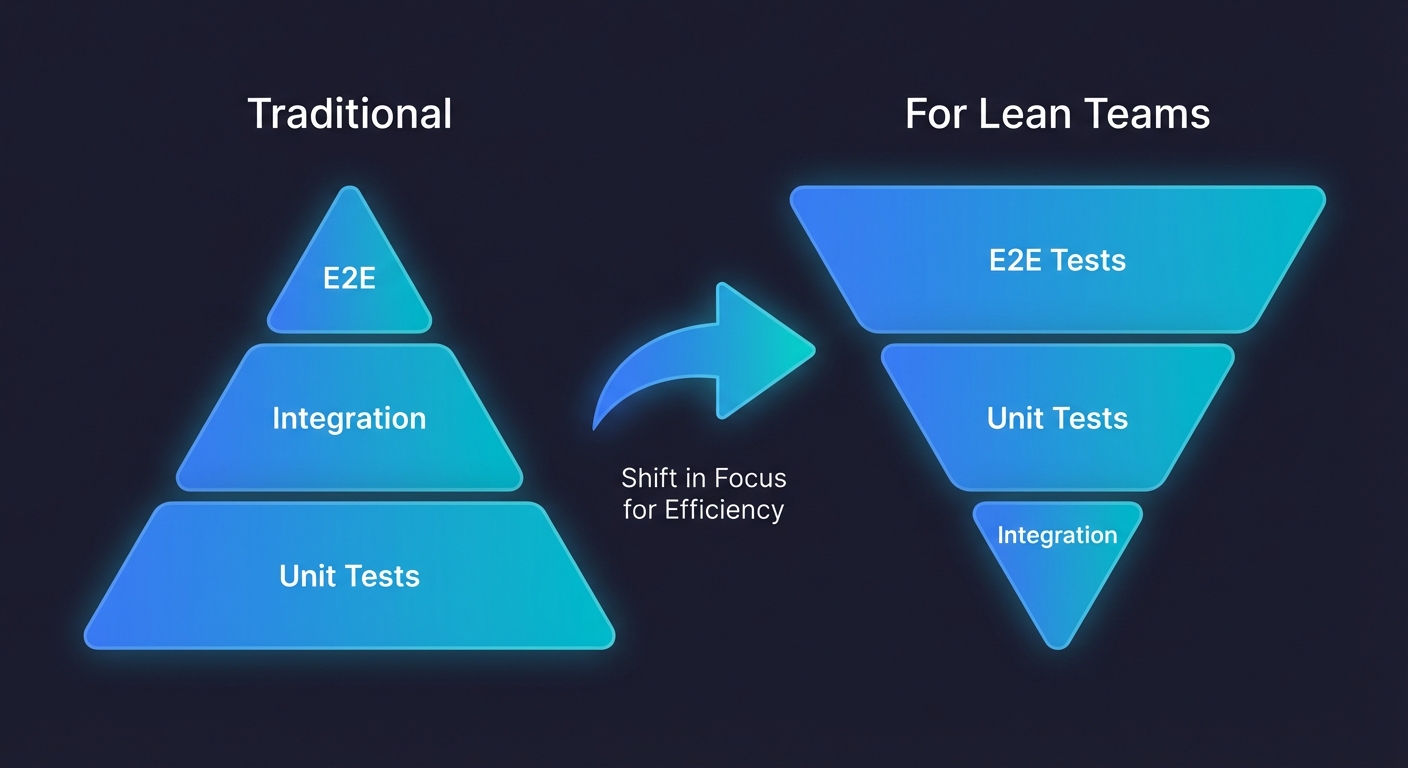

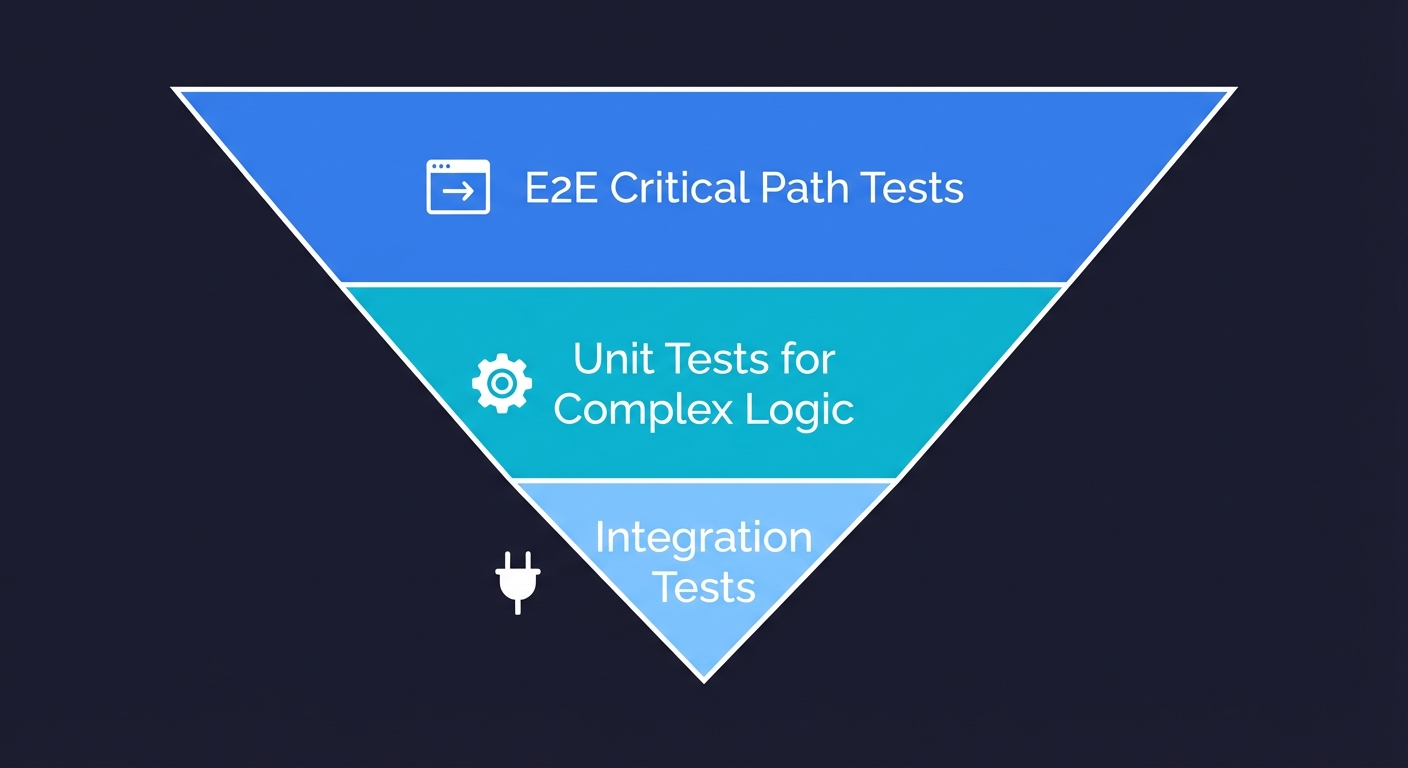

Inverting the Pyramid: E2E First

For lean teams, the model should be inverted. Not as a thought experiment, but as the starting strategy that reflects where bugs actually hurt your business.

Layer 1 (largest): E2E tests on critical paths. Your signup flow, your checkout flow, your core activation loop. These are the three to five user journeys where a bug means lost revenue or lost users. Cover them first. For a framework on selecting which flows to cover, see our E2E testing playbook for startups.

Layer 2 (medium): Unit tests on complex logic. Pricing calculations, permission checks, data transformations with edge cases. These are the functions where bugs are subtle and E2E tests are too coarse to catch them.

Layer 3 (smallest): Integration tests as needed. API contract tests between your frontend and backend. Database query tests for complex joins. These earn their place when you have clear integration boundaries that are changing independently. For more on where integration tests fit, see our integration vs E2E testing comparison.
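An API contract test can be small. The sketch below assumes a hypothetical order endpoint and uses a hand-written runtime guard; many teams use a schema library such as Zod or a consumer-driven contract tool such as Pact instead. Either way, the idea is to check the payload shape the frontend depends on, because TypeScript types are erased at runtime:

```typescript
// Shape the frontend expects from a hypothetical order endpoint.
interface OrderResponse {
  id: string;
  totalCents: number;
  status: "pending" | "paid" | "refunded";
}

// Runtime guard for the contract.
function isOrderResponse(value: unknown): value is OrderResponse {
  if (typeof value !== "object" || value === null) return false;
  const record = value as Record<string, unknown>;
  const id = record["id"];
  const total = record["totalCents"];
  const status = record["status"];
  return (
    typeof id === "string" &&
    typeof total === "number" &&
    Number.isInteger(total) &&
    (status === "pending" || status === "paid" || status === "refunded")
  );
}

type OrderRow = { id: string; amountCents: number; state: string };

// Hypothetical backend serializer that honors the contract.
function serializeOrder(row: OrderRow): unknown {
  return { id: row.id, totalCents: row.amountCents, status: row.state };
}

// The same serializer after a refactor renamed the field to `total`.
function serializeOrderAfterRefactor(row: OrderRow): unknown {
  return { id: row.id, total: row.amountCents, status: row.state };
}

const row: OrderRow = { id: "ord_123", amountCents: 4200, state: "paid" };
console.log(isOrderResponse(serializeOrder(row))); // true
console.log(isOrderResponse(serializeOrderAfterRefactor(row))); // false: contract drift caught
```

Running a check like this in the backend's own test suite lets the backend team see the break before the frontend does.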

This is not a radical idea. It builds on the direction Kent C. Dodds pointed toward with the testing trophy and that teams like Antithesis have advocated with deterministic E2E environments. It is a recognition that the goal of testing is to prevent production bugs that hurt users, and production bugs overwhelmingly live in the interactions between systems, not inside individual functions.

The traditional testing pyramid would call this approach reckless. But the traditional testing pyramid assumes E2E tests are expensive. What if they weren't?

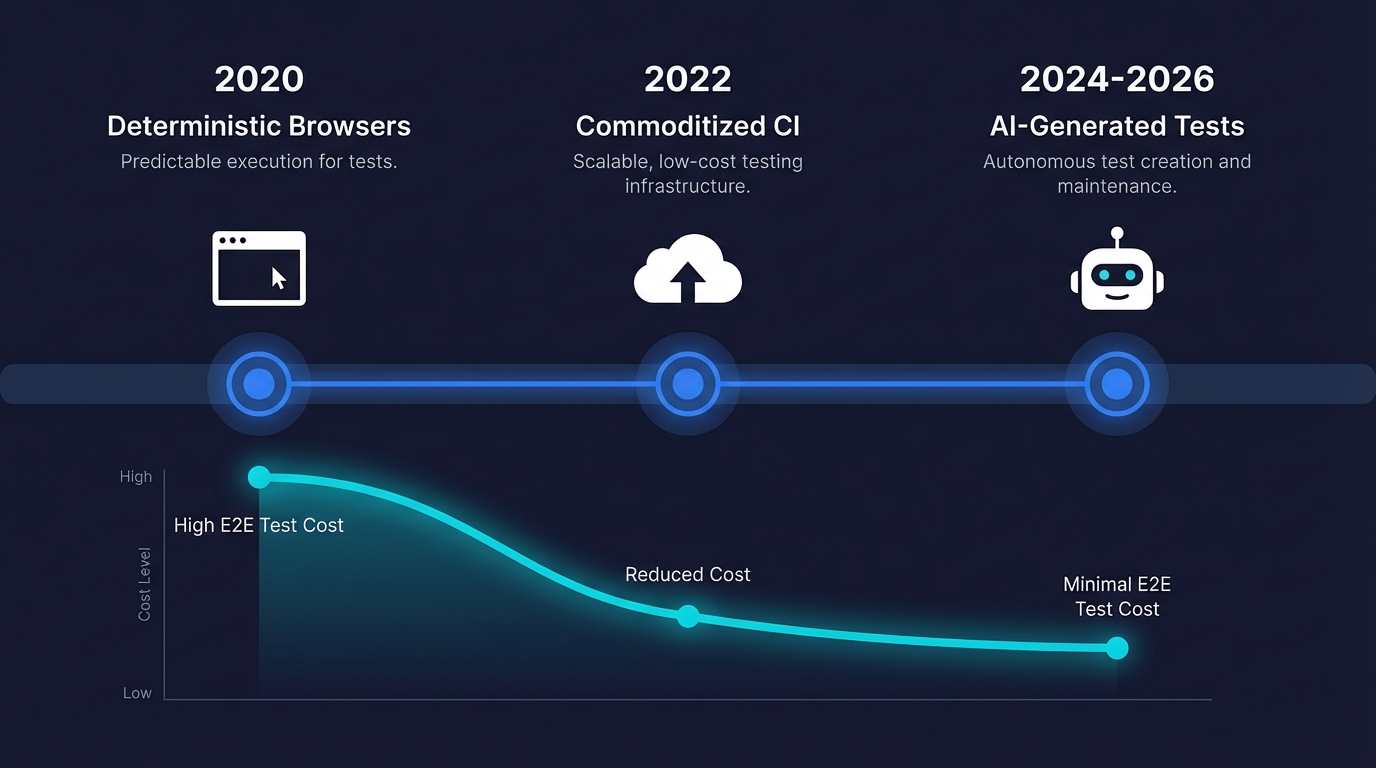

The Economics That Changed Everything

Three shifts have made E2E tests dramatically cheaper than they were when the pyramid was invented:

Modern browser automation is far more reliable. Playwright and similar tools drive real browser engines (Chromium, Firefox, and WebKit in Playwright's case) with auto-waiting, network interception, and resilient locators. The "E2E tests are flaky" reputation was earned in the Selenium era, and the root causes of flaky tests are now well understood and largely solvable. Modern test automation frameworks are a different category of tool.

CI infrastructure is commoditized. Running a headless browser in a GitHub Actions container costs fractions of a cent per execution. The "E2E tests are slow and expensive to run" argument assumed dedicated browser farms. That infrastructure cost is gone.

AI can write and maintain the tests. This is the big one. The manual work of writing E2E tests (understanding the application, writing selectors, handling edge cases, updating tests when the UI changes) was the dominant cost. It's what made the pyramid rational, and it's what agentic testing eliminates.
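On the first point, much of the gap between old-style flaky tests and auto-waiting is the gap between guessing a sleep duration and polling for a condition. This is not how Playwright implements auto-waiting. It is a minimal, framework-free sketch of the polling pattern, with a hypothetical `simulateDelayedRender` function standing in for a real page:

```typescript
// Poll `probe` until it returns a value or the deadline passes.
async function waitFor<T>(
  probe: () => T | undefined,
  timeoutMs = 2_000,
  intervalMs = 25,
): Promise<T> {
  const deadline = Date.now() + timeoutMs;
  for (;;) {
    const value = probe();
    if (value !== undefined) return value;
    if (Date.now() >= deadline) {
      throw new Error(`Condition not met within ${timeoutMs} ms`);
    }
    await new Promise((resolve) => setTimeout(resolve, intervalMs));
  }
}

// Hypothetical stand-in for a page whose confirmation banner renders after `delayMs`.
function simulateDelayedRender(delayMs: number): () => string | undefined {
  const readyAt = Date.now() + delayMs;
  return () => (Date.now() >= readyAt ? "Welcome aboard!" : undefined);
}

// A fixed sleep fails whenever rendering is slower than the guess; polling
// adapts to however long rendering actually takes, up to the timeout.
waitFor(simulateDelayedRender(150)).then((banner) => console.log(banner));
```

In Playwright itself you would not write this helper. Locator actions wait for elements to become actionable, and web-first assertions such as `await expect(locator).toBeVisible()` retry until they pass or time out.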

What Agentic Testing Actually Means for the Pyramid

The term gets thrown around loosely, so let me be precise. Agentic testing refers to AI agents that autonomously plan, generate, execute, and maintain tests. Not recording user clicks. Not generating test templates from screenshots. Agents that access your codebase, understand your application architecture, reason about user flows, and write real test code.

This is the distinction that matters. A "record and replay" tool automates the typing. An agentic testing platform automates the thinking.

Autonoma is a full agentic testing platform built on this model. It connects to your repository, reads your codebase (routes, components, API endpoints, database schemas), and autonomously generates end-to-end tests for your critical user flows. When your code changes, it detects what changed, evaluates whether existing tests need updating, and updates them without human intervention.

The practical effect on the testing pyramid is this: the cost of E2E tests drops to near zero. Not the execution cost (that was already low), but the creation and maintenance cost: the human hours, the part that actually made the pyramid necessary.

When E2E test creation costs approach zero, the economic argument for the pyramid disappears. You can have broad E2E coverage and targeted unit tests. You don't have to choose. The pyramid was a budget allocation strategy, and generative AI in testing just expanded the budget.

How This Looks in Practice

Here is a concrete example. Say you're a six-person team building a B2B SaaS product. You have a Next.js frontend, a Node API, a PostgreSQL database, and Stripe for payments.

Traditional pyramid approach: You write 300+ unit tests for your API handlers and utility functions, 30 integration tests for your database queries, and maybe 5 E2E tests for critical flows (if you ever get to them, which you probably won't). Total engineering time for test creation: 3 to 4 weeks spread across several sprints. Ongoing maintenance: 2 to 4 hours per week fixing broken tests after refactors. And after all that investment, a renamed form field still takes down your checkout flow on a Friday.

Inverted pyramid with agentic testing: Autonoma connects to your repo, analyzes your routes and components, and generates E2E tests for your signup, onboarding, core workflow, billing, and settings flows. You add unit tests for your pricing engine and permission system manually, because those have edge cases that require domain knowledge. Total engineering time: a few hours for initial setup plus the time to write your targeted unit tests. Ongoing maintenance: near zero for E2E tests (the agent handles it), minimal for unit tests (they're scoped to stable business logic).

The inverted approach gets you to "deployable with confidence" faster, with less ongoing cost, and with better coverage of the bugs that actually reach users.

"But What About Test Isolation and Speed?"

The most common objection to E2E-heavy testing is speed. Unit tests run in milliseconds; E2E tests run in seconds. Run serially, 500 E2E tests can easily stretch a CI pipeline to 20 minutes or more.

This is a real concern, but it is a largely solved one. Parallel execution across multiple browser instances can run 50 E2E tests in under two minutes. Selective test execution (running only the tests affected by the changed code) reduces that further. Test runners such as Playwright support sharding out of the box, and CI platforms can fan those shards out across parallel jobs.
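A rough sketch of both techniques follows, under stated assumptions. The spec-to-source map is hypothetical (real tools derive it from import graphs or coverage data), every test is assumed to take the same time, and parallelism is assumed to be perfect. Real runners expose sharding directly; Playwright, for instance, accepts `--shard=1/4` to run the first of four shards.

```typescript
// Hypothetical map from E2E spec files to the source paths they exercise.
const specDependencies: Record<string, string[]> = {
  "signup.spec.ts": ["src/auth/", "src/components/SignupForm"],
  "checkout.spec.ts": ["src/billing/", "src/components/Cart"],
  "settings.spec.ts": ["src/settings/"],
};

// Selective execution: keep specs whose dependencies match any changed file.
function affectedSpecs(changedFiles: string[], deps: Record<string, string[]>): string[] {
  return Object.keys(deps)
    .filter((spec) =>
      changedFiles.some((file) => deps[spec].some((prefix) => file.startsWith(prefix))),
    )
    .sort();
}

// Sharding: deal specs round-robin across `shardCount` parallel workers.
function shard<T>(items: T[], shardCount: number): T[][] {
  if (!Number.isInteger(shardCount) || shardCount < 1) {
    throw new Error("shardCount must be a positive integer");
  }
  const shards: T[][] = Array.from({ length: shardCount }, () => []);
  items.forEach((item, i) => shards[i % shardCount].push(item));
  return shards;
}

// Wall-clock estimate: the busiest worker runs ceil(tests / workers) tests.
function estimateWallClockMs(testCount: number, msPerTest: number, workers: number): number {
  return Math.ceil(testCount / workers) * msPerTest;
}

console.log(affectedSpecs(["src/billing/invoice.ts"], specDependencies)); // [ 'checkout.spec.ts' ]
console.log(shard(["a", "b", "c", "d", "e"], 2));
console.log(estimateWallClockMs(500, 2_400, 1) / 60_000); // 20 (minutes, serial)
console.log(estimateWallClockMs(500, 2_400, 8) / 60_000); // 2.52 (minutes, 8 workers)
```

Under these assumptions, 500 tests at 2.4 seconds each take 20 minutes serially and about 2.5 minutes across eight workers. Real numbers will be somewhat worse because of setup time and uneven test durations.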

The speed argument also conflates two different things: development feedback speed and deploy confidence. Unit tests are better for development feedback. When you're working inside a function, you want a test that runs in 200ms and tells you if you broke the logic. E2E tests are better for deploy confidence. When you're about to push to production, you want a test that tells you the checkout flow works.

These are not competing goals. They are complementary. The pyramid's mistake was treating them as a hierarchy instead of as parallel concerns.

The Narrow Cases Where the Pyramid Still Applies

To be precise about what I'm arguing: the testing pyramid is wrong as a default. It is not wrong everywhere. There are two specific cases where the traditional weighting holds:

Library and framework development. If you're building a library consumed by other developers, your "users" are functions, not human workflows. Unit tests are your primary tool because there are no user flows to test end-to-end. The pyramid applies here.

Pure algorithmic systems. If your product is fundamentally a pricing engine, a recommendation model, or a compiler, the logic density lives inside individual functions with many edge cases. Unit tests earn their keep.

That is roughly the full list. If you are building a user-facing product with a team of 3 to 20 engineers, the pyramid is costing you more than it protects you. Even large organizations with dedicated testing functions are rethinking the economics as agentic tooling changes what "expensive" means.

Transitioning from the Traditional Pyramid

If you already have a traditional pyramid in place, you don't need to tear it down. The transition is additive, not destructive.

Step 1: Identify your three to five critical user flows. These are the flows where a bug means lost revenue, lost users, or a compliance violation. If you're unsure, look at your analytics: what are the five most common user journeys from session start to conversion? (If you have no testing function at all, start with our QA automation guide for teams with no QA.)

Step 2: Get E2E coverage on those flows. Whether you write them manually with Playwright, or use an agentic testing platform like Autonoma that generates them from your codebase, the goal is the same: automated verification that these flows work, running on every deploy.

Step 3: Audit your existing unit tests. How many of them test trivial behavior? How many test implementation details that break on refactor without indicating a real bug? Delete the ones that cost more to maintain than they're worth. Keep the ones that cover genuine business logic complexity.

Step 4: Stop measuring coverage as a percentage. Coverage is a proxy metric that optimizes for the wrong thing. Instead, ask: "If I deploy right now, what's the probability that a critical user flow is broken and I don't know about it?" That's the metric that matters.
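Steps 1 and 4 can both be made concrete with a small amount of code. The sketch below is illustrative only. The session format, flow names, and result shape are hypothetical, and real analytics exports will need more cleanup. The first function ranks the most common paths among converting sessions. The second reports which critical flows the latest E2E run verified, which are failing, and which have no coverage at all:

```typescript
type Session = { events: string[]; converted: boolean };
type E2EResult = { flow: string; passed: boolean };

// Step 1: count journeys among converting sessions, collapsing repeated
// consecutive events so "pricing > pricing > signup" counts as "pricing > signup".
function topConvertingJourneys(sessions: Session[], n = 5): Array<[string, number]> {
  const counts = new Map<string, number>();
  for (const session of sessions) {
    if (!session.converted) continue;
    const key = session.events
      .filter((event, i, all) => i === 0 || event !== all[i - 1])
      .join(" > ");
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  return Array.from(counts.entries())
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .slice(0, n);
}

// Step 4: a deploy is only "known good" when `failing` and `uncovered` are both empty.
function criticalFlowStatus(criticalFlows: string[], results: E2EResult[]) {
  const uncovered = criticalFlows.filter((flow) => !results.some((r) => r.flow === flow));
  const failing = criticalFlows.filter((flow) =>
    results.some((r) => r.flow === flow && !r.passed),
  );
  const verified = criticalFlows.filter(
    (flow) => !uncovered.includes(flow) && !failing.includes(flow),
  );
  return { verified, failing, uncovered };
}

const sessions: Session[] = [
  { events: ["landing", "pricing", "pricing", "signup"], converted: true },
  { events: ["landing", "pricing", "signup"], converted: true },
  { events: ["landing", "blog"], converted: false },
  { events: ["landing", "signup"], converted: true },
];
console.log(topConvertingJourneys(sessions, 2));

const flowStatus = criticalFlowStatus(
  ["signup", "checkout", "settings"],
  [
    { flow: "signup", passed: true },
    { flow: "checkout", passed: false },
  ],
);
console.log(flowStatus);
```

The report deliberately returns lists rather than a percentage. An uncovered checkout flow matters more than any coverage number.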

Stop Treating the Pyramid as Gospel

The testing pyramid will keep showing up in textbooks and onboarding docs for years. That is fine. What matters is whether you keep building your testing strategy around a cost model from 2009 or whether you look at the actual constraints you face today.

E2E test creation is no longer a multi-day engineering task. Test maintenance is no longer a weekly tax on engineering velocity. The tools have changed. The costs have changed. If your strategy hasn't changed, you are optimizing for a constraint that no longer exists.

For teams building products in 2026 with limited engineering bandwidth, the testing pyramid is not just outdated. It is actively harmful. It directs your scarcest resource (engineering hours) toward the testing layer that catches the fewest production bugs. Inverting that allocation is not radical. It is rational.

The testing pyramid was the right answer to a question that no longer applies: "how do we test efficiently given that E2E is expensive?" The better question, the one your users care about, is "how do we prevent the bugs that actually break our product?" Start from that question. E2E coverage becomes the foundation, not the afterthought. And the pyramid quietly flips itself.

Frequently Asked Questions

What is the testing pyramid?

The testing pyramid is a testing strategy model introduced by Mike Cohn in 2009. It recommends writing many unit tests (the base), fewer integration tests (the middle), and very few end-to-end tests (the top). The rationale was economic: unit tests were cheap and fast, while E2E tests were expensive and brittle. The model is still widely taught but increasingly questioned as the cost of E2E testing has dropped dramatically.

Is the testing pyramid still relevant?

For library developers and teams building complex algorithmic systems, the pyramid still applies. For lean product teams building user-facing applications, the pyramid's core assumption (that E2E tests are too expensive to be your primary testing strategy) no longer holds. Modern tooling, including agentic testing platforms that autonomously generate and maintain E2E tests, has fundamentally changed the economics.

What is the difference between unit testing and integration testing?

Unit testing verifies that individual functions or components work correctly in isolation. Integration testing verifies that two or more systems work correctly together, for example, that your API correctly reads from and writes to your database. Unit tests are faster and more stable, but they cannot catch bugs that occur at the boundaries between systems. Integration tests cover those boundaries but are slower and more complex to set up.

What is the inverted testing pyramid?

The inverted testing pyramid prioritizes E2E tests as the primary layer of test coverage, with unit tests for complex business logic and integration tests added as needed. It is not the same as the testing trophy or the testing diamond, both of which put integration tests at the center. It reflects the reality that most production bugs occur at the seams between systems, not inside individual functions. It is especially practical for lean teams using agentic testing tools that eliminate the creation and maintenance cost of E2E tests.

What is agentic testing?

Agentic testing uses AI agents that autonomously plan, generate, execute, and maintain tests. Unlike record-and-replay tools that automate mouse clicks, agentic testing platforms like Autonoma access your codebase directly, understand your application architecture, and write real test code. When your codebase changes, the agent detects the changes and updates affected tests without human intervention. This eliminates the manual creation and maintenance cost that made E2E testing prohibitively expensive.

How many E2E tests does a lean team need?

Most teams of 3 to 20 engineers need E2E coverage on 3 to 5 critical user flows: signup, core activation, checkout or billing, and the most frequently changed feature. That might translate to 10 to 30 individual E2E tests. The goal is not comprehensive coverage but protection of the flows where a bug means lost revenue or lost users.

Should I delete all my existing unit tests?

No. The inverted pyramid is additive, not destructive. Keep unit tests that cover genuine business logic complexity: pricing calculations, permission systems, state machines with many edge cases. Delete unit tests that test trivial behavior or implementation details that break on refactor without indicating a real bug. The goal is to shift investment toward the tests that catch the bugs users actually encounter.