Key Takeaways: A mid-level QA engineer costs $110K-$135K in base salary, plus $40K-$65K in benefits, tooling, and ramp time, putting year-one all-in cost above $180K. Hiring makes sense when you have compliance mandates, hardware-adjacent testing, or a genuinely large codebase. For most startups shipping web or mobile products, AI-powered testing covers the same functional ground at a fraction of the cost and without the maintenance burden.

A founder I know spent two weeks hiring a QA engineer. The candidates were strong: mid-level, three to five years of experience, solid portfolios. She eventually made an offer. The engineer joined, spent the first month setting up Playwright, and by month two had a test suite covering the core checkout flow. Three months later, the designer rebuilt the UI. Half the tests broke, and the QA engineer spent the next sprint fixing selectors instead of writing new tests. This isn't a story about a bad hire; the engineer was good. It's a story about a decision made without a clear framework. (If you've already made the hire and it's not going well, read why first QA hires fail at startups.) Here's the framework.

How Much Does It Cost to Hire a QA Engineer?

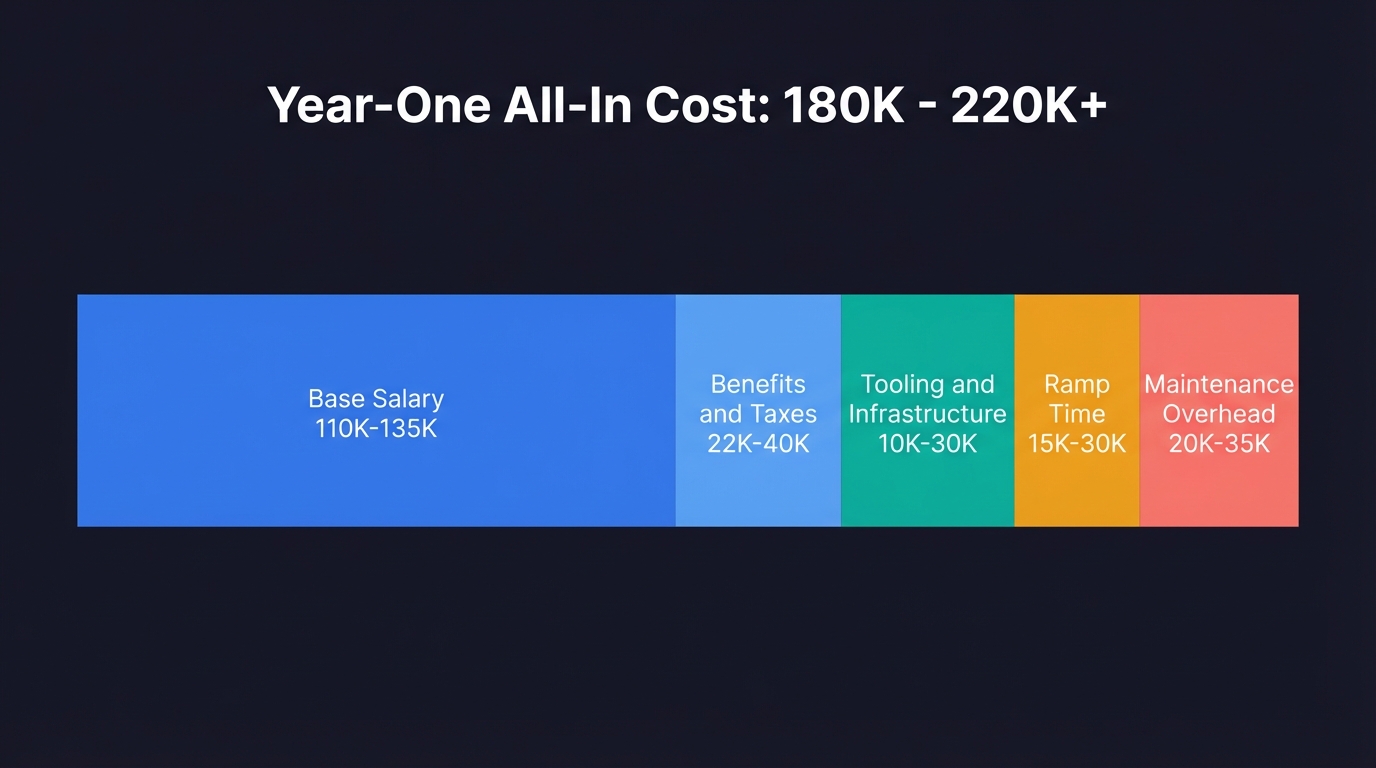

A mid-level QA engineer in the US costs $110,000 to $135,000 per year in base salary, with senior engineers running $140,000 to $160,000. After benefits, tooling, and ramp time, the true year-one cost of a QA hire is $180,000 to $220,000.

That year-one figure is the number to anchor on before you post a job description. It builds up in layers: adding 20-30% to base salary for benefits, payroll taxes, and equipment puts a single hire at roughly $150,000 to $200,000 in total annual cost. But the salary line is only the beginning. Below it sit costs that rarely appear in the job description.
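The layering is plain arithmetic. The Python sketch below applies the 20-30% overhead to each salary band, pairing the low salary with the low rate and the high salary with the high rate; `loaded_cost`, `loaded_range`, and `BANDS` are illustrative names, not part of any tool. On these inputs the mid-level band works out to about $132,000 to $175,500 and the senior band to $168,000 to $208,000, so the $150,000 to $200,000 figure reads best as a blend of the two.

```python
# Minimal sketch: load a base salary with benefits, payroll taxes, and equipment.
# Salary bands and the 20-30% overhead come from this article; names are illustrative.

BANDS = {
    "mid-level": (110_000, 135_000),
    "senior": (140_000, 160_000),
}


def loaded_cost(base_salary: int, overhead_rate: float) -> int:
    """Return base salary plus overhead, rounded to the nearest dollar."""
    return round(base_salary * (1 + overhead_rate))


def loaded_range(band: tuple[int, int]) -> tuple[int, int]:
    """Pair the low salary with 20% overhead and the high salary with 30%."""
    low, high = band
    return loaded_cost(low, 0.20), loaded_cost(high, 0.30)


for level, band in BANDS.items():
    floor, ceiling = loaded_range(band)
    print(f"{level}: ${floor:,} - ${ceiling:,}")
```

Running it prints one loaded range per band, which makes it easy to swap in your own local salary data.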

Tooling and infrastructure. A QA engineer needs somewhere to run tests: a staging environment, a CI/CD pipeline that actually works, test management software, and possibly device farms for mobile. Budget $10,000 to $30,000 per year depending on your stack.

Time to productivity. A new QA hire spends their first one to three months learning your codebase, establishing test conventions, and writing the initial suite. You're paying full salary before a single test ships. In startup time, that's a quarter.

Maintenance drag. This is the cost most founders underestimate. Our 2025 State of QA report found that teams spend 30-50% of their total QA time maintaining existing tests rather than building new coverage. Every time your UI changes, your test suite needs human attention. That's a tax on every sprint, forever. For practical strategies to reduce this burden, see our shift left testing guide for small teams.
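To see what 30-50% means week to week, the sketch below converts that share into hours per sprint. The 40-hour week and two-week sprint are assumptions for illustration, not figures from the report.

```python
# Sketch: hours per sprint spent maintaining existing tests.
# ASSUMPTIONS (not from the report): 40-hour weeks, two-week sprints.

HOURS_PER_WEEK = 40
SPRINT_WEEKS = 2


def maintenance_hours(share: float) -> float:
    """Hours in one sprint consumed by test upkeep at the given share of QA time."""
    return HOURS_PER_WEEK * SPRINT_WEEKS * share


low, high = maintenance_hours(0.30), maintenance_hours(0.50)
print(f"{low:.0f}-{high:.0f} hours of each {HOURS_PER_WEEK * SPRINT_WEEKS}-hour sprint")
```

Under those assumptions, one full-time QA engineer spends roughly three to five working days of every sprint on upkeep.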

The salary figures above represent top-market rates at remote-friendly companies in the Bay Area and NYC. The national average for QA engineers is approximately $95K-$105K according to Glassdoor and PayScale data, so your numbers will vary with location and company stage.

The honest all-in cost of a QA hire in year one (salary, benefits, tooling, ramp time, and ongoing maintenance overhead) typically lands between $180,000 and $220,000.

| Cost Category | Annual Range | Notes |
|---|---|---|
| Base salary (mid-level) | $110,000 - $135,000 | Senior: $140K-$160K |
| Benefits and payroll taxes | $22,000 - $40,000 | 20-30% of base |
| Tooling and infrastructure | $10,000 - $30,000 | CI/CD, staging, device farms |
| Ramp time (productivity loss) | $15,000 - $30,000 | 1-3 months at reduced output |
| Ongoing maintenance overhead | $20,000 - $35,000 | 30-50% of QA time on upkeep |
| Year-one all-in total | $180,000 - $220,000+ | |
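Summing the table is a quick sanity check. The Python sketch below copies the five cost categories from the table (the dictionary keys are illustrative labels) and adds up the low and high ends separately. The lows total $177,000, in line with the $180,000 floor; the highs total $270,000, which is presumably what the "+" on the total row covers, since few hires land at the top of every range at once.

```python
# Sketch: sum the low and high ends of each cost category from the table.
# Figures are copied from the table; the data structure is illustrative.

COSTS = {
    "base_salary": (110_000, 135_000),
    "benefits_and_taxes": (22_000, 40_000),
    "tooling": (10_000, 30_000),
    "ramp_time": (15_000, 30_000),
    "maintenance_overhead": (20_000, 35_000),
}


def year_one_range(costs: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Return (sum of lows, sum of highs) across all categories."""
    low = sum(lo for lo, _ in costs.values())
    high = sum(hi for _, hi in costs.values())
    return low, high


low, high = year_one_range(COSTS)
print(f"${low:,} - ${high:,}")  # $177,000 - $270,000
```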

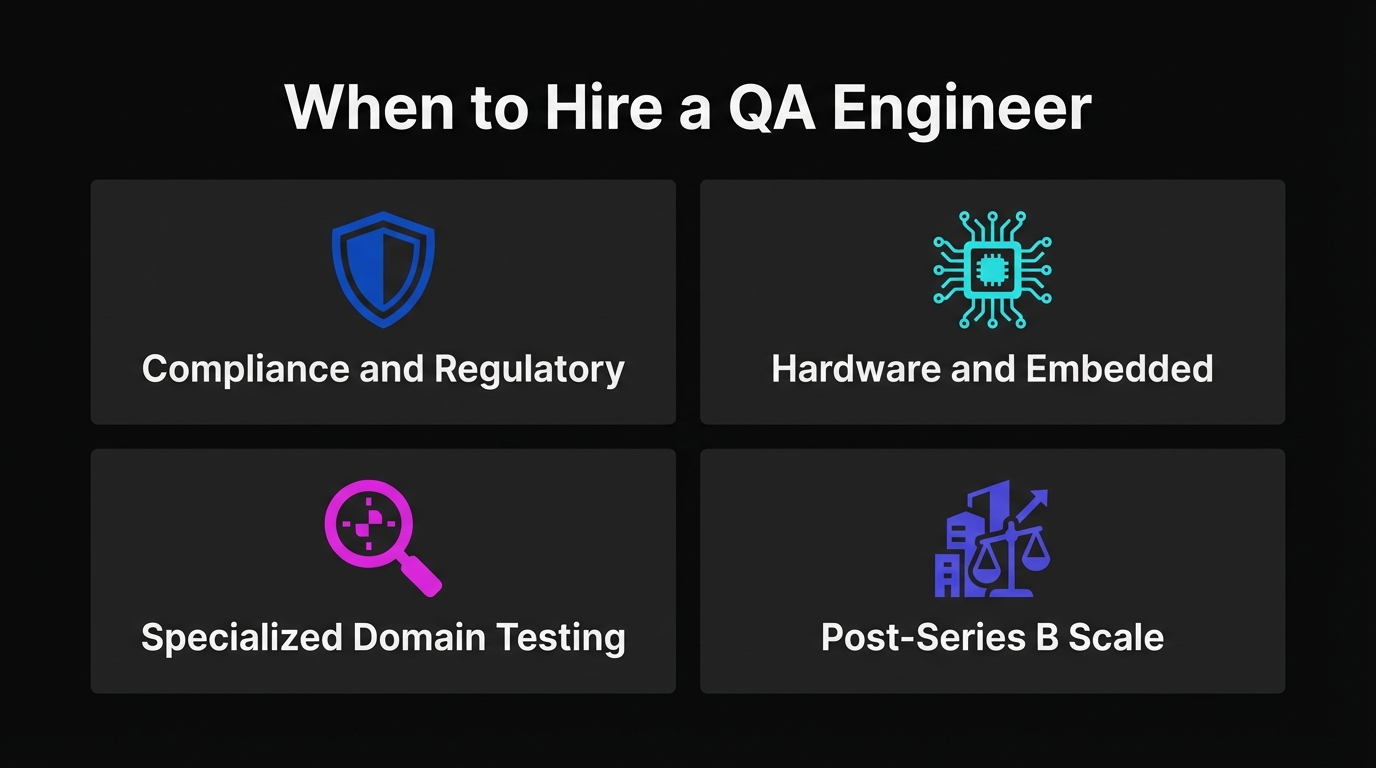

When Hiring a QA Engineer Is the Right Call

There are genuine scenarios where a QA hire is the correct decision, and it's worth being specific about them.

Regulatory or compliance requirements. Healthcare (HIPAA), finance (SOX, PCI-DSS), and government software often require documented human testing processes, audit trails, and sign-off from named individuals. AI agents can generate test evidence, but a compliance auditor may require a human QA role on record. If your compliance posture depends on it, hire.

Hardware or embedded systems. If your software interfaces with physical devices (medical hardware, industrial equipment, IoT sensors), you need someone who can physically test edge cases that no browser-based agent can reach.

Complex, specialized domain testing. Accessibility audits requiring nuanced judgment, security penetration testing, or performance testing under unusual load profiles benefit from a specialist whose entire focus is a narrow discipline. These are specialist roles, not generalist QA.

Post-Series B with a large, mature codebase. At a certain scale, a dedicated QA function makes sense. If you have fifty engineers and a test suite that serves as institutional memory, a QA team is appropriate infrastructure.

Notice what's not on that list: "we ship fast and things break." That's a testing process problem, not a headcount problem.

When Hiring a QA Engineer Is the Wrong Move

Most startups that post a QA engineer job description are in one of two situations. Neither actually requires a new hire.

Situation one: no automated testing at all. The team does manual smoke tests before deploys. Someone clicks through the happy path before each release. This works until it doesn't, and then a bug reaches production. The instinct is to hire someone to own testing. The right move is to build automated coverage first, because a QA engineer hired into a zero-automation environment will spend their first six months doing manual work that could have been automated from day one.

Situation two: a broken Playwright suite. Someone set up E2E tests early on, and now the suite is a liability. Tests fail for reasons unrelated to actual bugs. Developers skip CI checks. The proposed QA hire is supposed to fix and maintain the suite. In practice, they inherit a maintenance treadmill and spend most of their time on upkeep instead of coverage. For a practical guide on building E2E coverage that doesn't rot, see our E2E testing playbook for startups.
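A quick way to tell whether a suite has crossed into liability territory is to count tests whose outcome changes when the code doesn't. The Python sketch below applies that common heuristic to CI history: a test that both passed and failed on the same commit is flagged as flaky. The record format and sample runs are hypothetical; real data would come from your CI provider.

```python
from collections import defaultdict

# Each record: (test name, commit SHA, passed?). Sample data is made up.
Run = tuple[str, str, bool]


def find_flaky_tests(runs: list[Run]) -> set[str]:
    """Flag tests that both passed and failed on the same commit.

    If the code didn't change but the outcome did, the failure likely came
    from the test itself (timing, selectors, environment), not a product bug.
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test, sha, passed in runs:
        outcomes[(test, sha)].add(passed)
    return {test for (test, _), seen in outcomes.items() if len(seen) == 2}


runs = [
    ("checkout_flow", "a1b2c3", True),
    ("checkout_flow", "a1b2c3", False),  # same commit, different result
    ("login_flow", "a1b2c3", True),
    ("login_flow", "d4e5f6", False),  # different commit: could be a real bug
]
print(find_flaky_tests(runs))  # {'checkout_flow'}
```

If a large share of red CI runs trace back to flagged tests, developers skipping CI checks is a rational response, and that is the signal the suite needs rethinking rather than more hands.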

In both situations, the underlying problem is the same: the current approach to testing doesn't scale with shipping velocity. Adding a person doesn't fix that. It adds cost. A tool like Autonoma addresses both situations directly, generating tests from your existing code and maintaining them automatically as the product evolves.

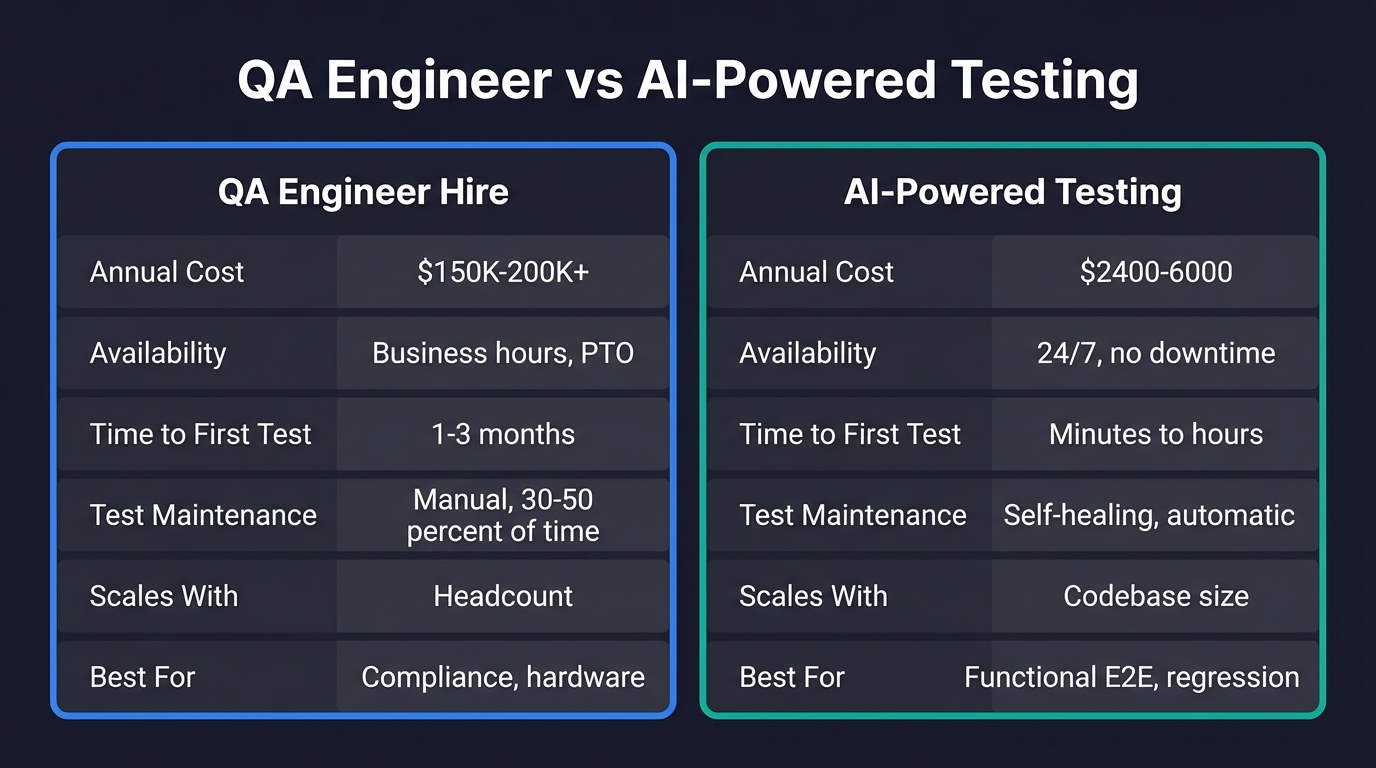

QA Engineer Salary and Cost vs. AI-Powered Testing: The Real Trade-off

Let's make this concrete. A QA engineer at $130,000 base salary costs roughly $160,000 to $170,000 a year once benefits and payroll taxes are added, before tooling and ramp time. That buys one person covering one set of working hours, a test suite that needs maintenance every time the product changes, and coverage that grows only as fast as one person can write Playwright scripts.

What does $200 to $500 per month in AI testing tooling buy?

Agents that read your codebase and generate tests automatically. No one writes scripts, records flows, or defines test cases. Agents derive coverage from what you've already built. When your UI changes, tests self-heal. When something actually breaks, you get a clear failure report. Coverage scales with your codebase, not with headcount. To understand how this generation process works under the hood, see our guide to generative AI in software testing.
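Vendors implement self-healing in different ways, and the details of Autonoma's approach aren't covered here. The Python sketch below shows one simplified, generic idea: keep several locators per element in priority order, fall back to a more stable one when the preferred locator stops matching, and report a real failure only when none match. The page model, attributes, and locator list are hypothetical.

```python
from typing import Optional

# Hypothetical page model: each element is a dict of attributes.
Element = dict[str, str]
Locator = tuple[str, str]  # (attribute name, expected value)


def find_element(
    page: list[Element], locators: list[Locator]
) -> tuple[Optional[Element], Optional[int]]:
    """Try each locator in priority order; return the match and which locator hit."""
    for index, (attr, value) in enumerate(locators):
        for element in page:
            if element.get(attr) == value:
                return element, index
    return None, None


# Priority order: a brittle CSS class first, then more stable attributes.
checkout_button = [
    ("class", "btn-primary-v1"),
    ("data-testid", "checkout"),
    ("text", "Checkout"),
]

# After a redesign the CSS class changed, but the test id and label survived.
redesigned_page = [
    {"class": "button--cta", "data-testid": "checkout", "text": "Checkout"},
    {"class": "link", "text": "Continue shopping"},
]

element, used = find_element(redesigned_page, checkout_button)
if element is None:
    print("real failure: no locator matched")
elif used > 0:
    print(f"healed: matched via fallback {checkout_button[used]}")
else:
    print("matched via primary locator")
```

A production tool also has to judge whether a fallback match is really the intended element; this sketch trusts the first hit.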

| Factor | QA Engineer Hire | AI-Powered Testing (e.g., Autonoma) |
|---|---|---|
| Annual cost | $150,000 - $200,000+ | $2,400 - $6,000 |
| Availability | Business hours, PTO, sick days | 24/7, no downtime |
| Time to first test | 1-3 months (ramp period) | Minutes to hours |
| Test maintenance | Manual, 30-50% of QA time | Self-healing, automatic |
| Scales with | Headcount | Codebase size |
| Best for | Compliance, hardware, strategy | Functional E2E, regression |

This is the core trade-off. For most startups with two to twenty engineers shipping web or mobile software, AI-powered testing covers the same functional ground as a QA hire at a fraction of the cost and without the maintenance burden.
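To put "a fraction of the cost" in numbers, the sketch below annualizes the $200 to $500 monthly tooling price from the comparison table and divides the $150,000 to $200,000 hire cost by it. It compares cash cost only, not what each option covers; the names are illustrative.

```python
# Sketch: compare annual AI tooling spend with a loaded QA hire.
# Price ranges come from the comparison table; names are illustrative.


def annual(monthly: int) -> int:
    """Convert a monthly subscription price to an annual cost."""
    return monthly * 12


tooling_low, tooling_high = annual(200), annual(500)  # $2,400 and $6,000
hire_low, hire_high = 150_000, 200_000

# Narrowest gap: cheapest hire vs. priciest tooling; widest gap is the reverse.
narrowest = hire_low / tooling_high
widest = hire_high / tooling_low
print(f"A hire costs {narrowest:.0f}x to {widest:.0f}x as much as the tooling")
```

On the table's own ranges, that prints a multiple of roughly 25x to 83x.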

We built Autonoma for exactly this scenario. Connect your codebase and our agents plan, execute, and maintain E2E tests autonomously. The Planner agent reads your routes, components, and user flows to generate test cases. The Automator runs them against your application. The Maintainer keeps them passing as your code evolves, including handling database state setup automatically so tests reflect real application behavior. If you want to understand the underlying approach, our guide to agentic testing explains how the three-agent architecture works.

For a broader look at how AI fits into the QA picture, our complete guide to AI for QA covers the full spectrum from AI-assisted scripting to fully autonomous agents.

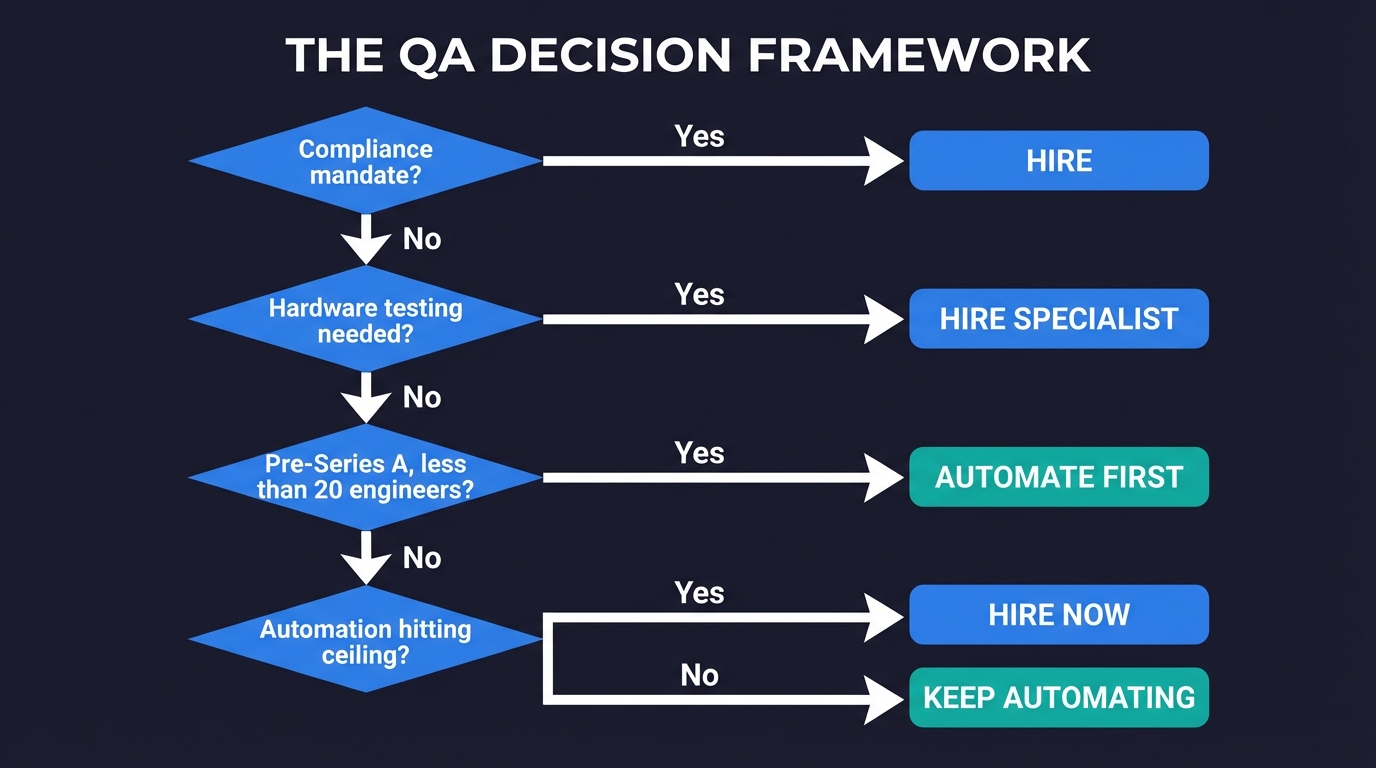

The Decision Framework: Should You Hire a QA Engineer?

Work through these questions in order.

1. Do you have a compliance or regulatory requirement that mandates a named human QA role? If yes, hire. No tooling substitutes for this.

2. Does your product interface with physical hardware or embedded systems where agents can't reach? If yes, a specialist hire makes sense. Scope the role to the hardware testing need, not general QA.

3. Are you pre-Series A with fewer than twenty engineers, shipping a web or mobile product? If yes, automate first. AI-powered testing covers your functional needs at startup scale. Revisit the hiring question when your team grows or compliance requirements become real.

4. Have you tried automated testing and found coverage gaps that tooling genuinely can't close? If yes, now is the right time to hire. You've validated the gap is real, and a QA engineer joining a functioning automated suite is dramatically more productive than one building from scratch.
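The four questions behave like an ordered checklist in which the first "yes" decides. The Python sketch below encodes them that way. The `Team` fields are hypothetical names for the answers, and because the list doesn't say what to do when every answer is "no", the fallback return value is an assumption that follows this article's automate-first default.

```python
from dataclasses import dataclass


@dataclass
class Team:
    # Hypothetical fields, one per question in the framework.
    compliance_requires_named_qa: bool
    tests_physical_hardware: bool
    pre_series_a: bool
    engineers: int
    ships_web_or_mobile: bool
    automation_gap_confirmed: bool


def qa_decision(team: Team) -> str:
    """Walk the questions in order and return the first matching recommendation."""
    # 1. Compliance mandates a named human QA role.
    if team.compliance_requires_named_qa:
        return "hire"
    # 2. Physical hardware or embedded systems that agents can't reach.
    if team.tests_physical_hardware:
        return "hire a hardware testing specialist"
    # 3. Pre-Series A, fewer than twenty engineers, web or mobile product.
    if team.pre_series_a and team.engineers < 20 and team.ships_web_or_mobile:
        return "automate first"
    # 4. Automation tried, and a genuine gap remains.
    if team.automation_gap_confirmed:
        return "hire"
    # ASSUMPTION: the framework doesn't cover this case; default to automating.
    return "automate first, then reassess"


seed_stage = Team(
    compliance_requires_named_qa=False,
    tests_physical_hardware=False,
    pre_series_a=True,
    engineers=8,
    ships_web_or_mobile=True,
    automation_gap_confirmed=False,
)
print(qa_decision(seed_stage))  # automate first
```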

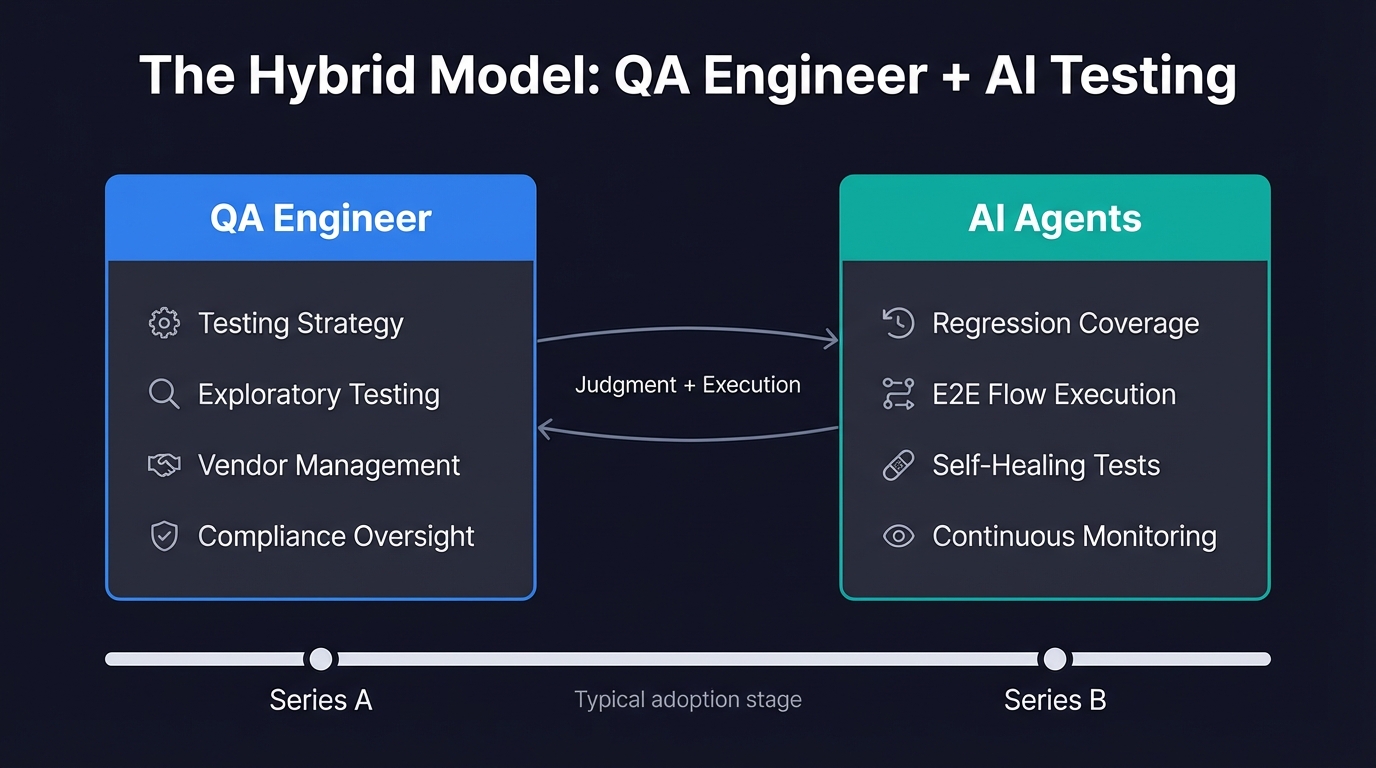

What If You Need Both a QA Engineer and AI Testing?

There's a hybrid model that works well at the growth stage: one QA engineer plus AI tooling.

The engineer sets testing strategy, handles exploratory testing, manages vendor relationships, and owns the compliance posture. AI agents handle the bulk of regression coverage: the repetitive E2E flows that would otherwise consume most of a QA engineer's time. The human focuses on judgment; the agents handle execution.

This model typically emerges somewhere between Series A and Series B, when you have enough product surface area that strategy and tooling decisions require dedicated attention. Before that inflection point, AI-first is almost always the right answer.

For teams that want to understand how this model scales, our piece on autonomous testing covers how the approach evolves as engineering organizations grow. And if you want to see Autonoma specifically in action, this overview of AI-powered software testing shows how our agents work against a real application.

What Startups Actually Do (and What Works)

The pattern we see most often: a founder posts a QA job description, gets quotes from staffing marketplaces like Upwork or Braintrust, realizes the all-in cost is close to $200,000, and starts looking at alternatives.

Some land on Playwright and try to build their own suite. That works for about three months. Then they're maintaining tests instead of shipping features, and the suite slowly atrophies.

The ones that connect their codebase to Autonoma first find a different outcome. Agents generate tests from their code in minutes. Critical flows are covered within a week. When the product changes, tests self-heal. Nobody panics when CI runs after a design refresh.

The QA hiring question gets deferred to a point where it actually makes sense: after product-market fit, after the team has grown, after compliance requirements are real rather than hypothetical. At that point, any hire joins a functioning automated system rather than building everything from scratch.

If you're at that early stage right now (two to twenty engineers, no dedicated QA, shipping fast), connect your codebase to Autonoma and see what agents generate from your code. Or book a demo to see it against your specific application.

Frequently Asked Questions

How much does it cost to hire a QA engineer?

In the United States, a mid-level QA engineer earns between $110,000 and $135,000 per year in base salary. Senior QA engineers with automation specialization typically earn $140,000 to $160,000. Add 20-30% for benefits, payroll taxes, and equipment, and the total annual cost of a single QA hire is $150,000 to $200,000. In year one, including ramp time and tooling setup, the total cost often exceeds $200,000.

Should my startup hire a QA engineer?

It depends on three factors: regulatory requirements, product type, and stage. If you have compliance mandates that require a named human QA role (healthcare, finance, government), hire. If your product touches physical hardware or embedded systems, a specialist makes sense. For most early-stage startups shipping web or mobile software with fewer than 20 engineers, AI-powered testing covers the same functional ground at a fraction of the cost. The right order is: automate first with a tool like Autonoma, then hire when automation hits a genuine ceiling.

What does a QA engineer do at a startup?

At an early-stage startup, a QA engineer typically sets up test infrastructure (usually Playwright or Cypress), writes E2E and integration tests, runs regression testing before major releases, and coordinates bug reporting. The problem is that test maintenance consumes 30-50% of their time: sprint after sprint goes to fixing tests that broke because the UI changed, not because the feature did. That maintenance burden is exactly what tools like Autonoma eliminate by self-healing tests when the UI changes.

Can AI replace a QA engineer?

For most startups, AI agents can handle the functional testing workload that a QA hire would otherwise cover. Autonoma, for example, generates test cases from your codebase, executes E2E flows, self-heals when the UI changes, and surfaces real failures. What AI doesn't replace is judgment-intensive work: setting testing strategy, exploratory testing for unusual edge cases, managing compliance audits, and communicating quality posture to stakeholders. AI replaces the execution layer, not the strategy layer. At early-stage startups, the vast majority of the work is execution.

How much does AI-powered testing cost compared to a QA engineer?

AI-powered testing platforms like Autonoma cost between $200 and $500 per month for early-stage teams, a fraction of a QA engineer's fully loaded annual cost of $150,000 to $200,000. Tooling coverage scales with your codebase automatically, while human-driven coverage grows only by adding headcount. Most startups with two to twenty engineers find that AI testing covers their functional needs entirely, with headcount becoming relevant only after Series A or when compliance requirements mandate it.

When should a startup hire its first QA engineer?

The right trigger is when AI-powered testing hits a genuine ceiling you can measure: a compliance requirement that demands a named human role, hardware testing that agents can't reach, or a level of product complexity where testing strategy and tooling decisions require dedicated human attention. That inflection point typically arrives somewhere between Series A and Series B. Before that, automating first is almost always the better investment.

Where do startups find QA engineers?

For startups that do need a QA hire, the main channels are staffing marketplaces (Upwork, Braintrust, Lemon.io for contractors), LinkedIn and AngelList for full-time hires, and referrals from your engineering network. For full-time hires, look for engineers with scripted automation experience (Playwright, Cypress) and familiarity with CI/CD pipelines. If you're hiring alongside AI tooling, prioritize strategic and exploratory testing skills over raw script-writing volume.

What is the difference between a QA engineer and a software tester?

A QA engineer owns the full quality lifecycle: test strategy, automation frameworks, CI/CD integration, and process improvement. A software tester typically focuses on test execution: running test cases and reporting bugs. At startups, the distinction is mostly academic since one person does both. What matters is whether that work needs a dedicated hire or can be handled by AI-powered testing tools that automate the execution layer.