A startup's first QA hire usually fails in one of four predictable ways: you hire a manual tester when the job requires a QA automation engineer, you hand one person a scope that needs an entire team, your test suite becomes a maintenance treadmill that slows releases instead of protecting them, or you hire at the wrong moment in your company's maturity curve. None of these failures is about the person. They are structural mismatches. This article helps you diagnose which one you're in and decide what to do next.

You made the hire. You were relieved. Someone was finally going to own quality. Six months later, bugs still slip through, your developers are frustrated, and the QA engineer is either underwater or disconnected from the actual problems. Sound familiar?

We have seen this at dozens of startups. The pattern is so consistent it almost feels scripted. And the hardest part is that it usually has nothing to do with the person you hired.

The Four Ways a First QA Hire Breaks Down

QA Automation vs Manual Testing: You Hired the Wrong Type

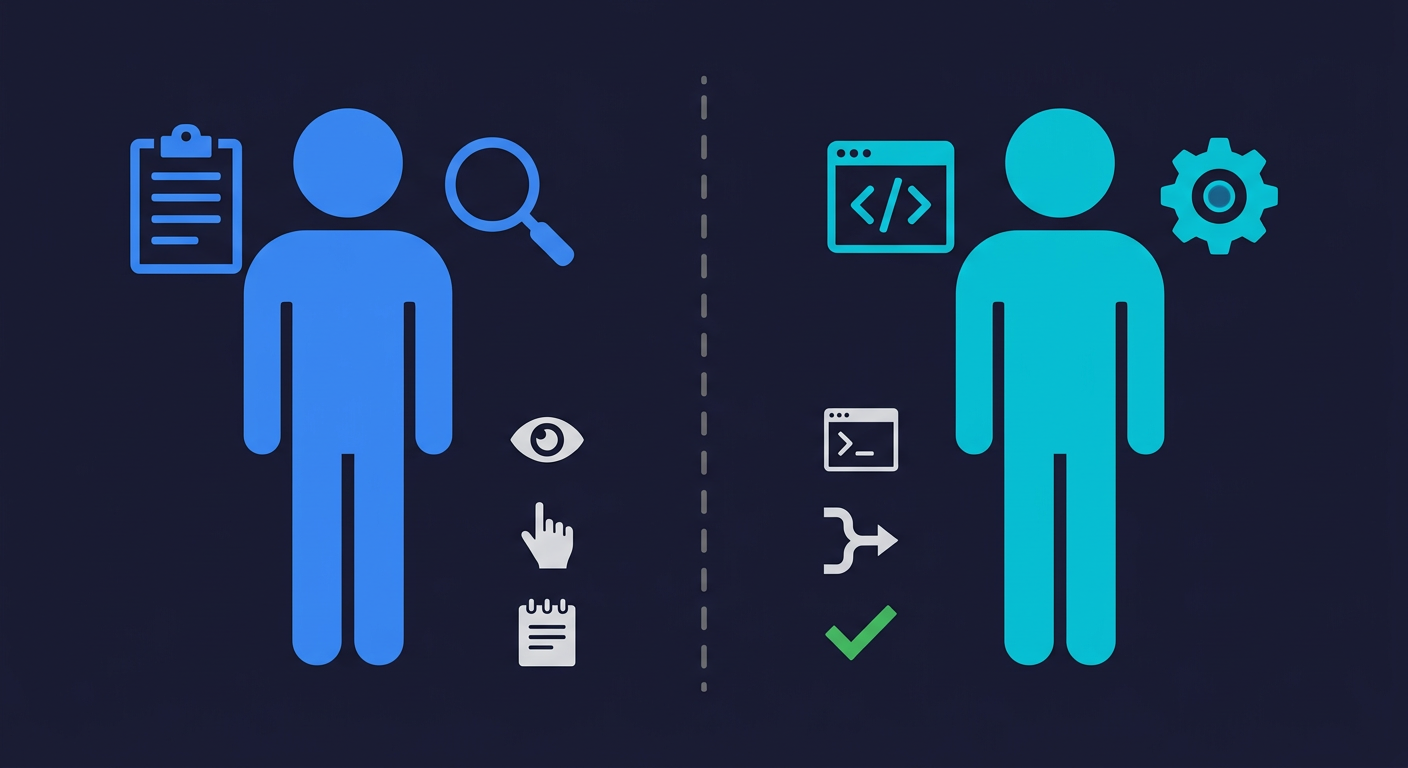

This is the most common mismatch, and it often happens because the job description was vague. "QA Engineer" covers a wide range at startups. On one end: a manual QA tester who writes detailed test plans, executes them by hand, and logs bugs with precision. On the other end: a QA automation engineer (sometimes called an SDET) who builds and maintains automated test infrastructure, writes scripts in Playwright or Cypress, and integrates everything into CI.

These are genuinely different skill sets. A strong manual tester can be invaluable at certain stages. But if what your team actually needed was 500 automated regression tests running on every pull request, a manual tester will not close that gap. They will generate test plans nobody has time to execute, file bugs in Jira that live there forever, and feel increasingly marginalized as the team ships faster.

The signal that you are in this failure mode: your QA hire spends most of their time doing exploratory testing before releases, your test coverage in CI is still near zero, and release day still feels like a gamble.

You Handed One Person an Impossible Scope

At seed and Series A, the QA hire often becomes the entire quality function. They are expected to own test strategy, write automation, maintain the test suite, manage staging environments, triage all bug reports, coordinate with the product team, and occasionally test mobile. At a Series B company, this work is split across three or four specialized engineers.

The math does not work. One person, even a highly skilled one, cannot provide adequate coverage for a product that is changing fast across web, mobile, and API layers. What happens instead: they prioritize ruthlessly, coverage gaps accumulate, and when something slips through, they absorb the blame for a structural resource problem.

The signal: your QA hire is always behind, always reactive, and their backlog of "tests we should write someday" keeps growing. They are not underperforming. They are understaffed by definition.

The Maintenance Treadmill

This one is slower to develop but often more damaging long-term. The team hires a QA engineer, that person writes a solid test suite over several months, and then the product keeps changing. UI elements shift. Flows get redesigned. New features get added. And now a significant portion of each sprint is spent updating tests that broke because a button got renamed or a modal got restructured.

The team starts to resent the test suite. Developers stop running tests locally because they expect failures. The QA engineer spends 60% of their time maintaining existing tests instead of writing new ones. Coverage stagnates. Eventually someone on the team suggests "maybe we should just delete the flaky ones" and the suite quietly erodes.

This is not a QA execution problem. It is an architectural one. Test suites built with brittle selectors against fast-moving UIs will require constant upkeep. The only way out is either a significant investment in test infrastructure quality or a different approach to testing entirely.
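To make "brittle selectors" concrete, here is a minimal sketch in TypeScript. It is a toy model, not a real browser or test framework: the `UiNode` type, both lookup functions, and the checkout page are hypothetical and exist only for illustration. In Playwright, the rough analogue is the choice between a structural CSS selector passed to `page.locator` and a user-facing lookup such as `page.getByRole`.

```typescript
// Toy model of a UI tree. All names here are hypothetical.
interface UiNode {
  tag: string;
  classes: string[];
  role?: string; // e.g. "button"
  name?: string; // user-visible label
  children: UiNode[];
}

// Brittle strategy: follow an exact chain of class names, one level at a time.
function findByClassPath(root: UiNode, path: string[]): UiNode | undefined {
  let current: UiNode = root;
  for (const cls of path) {
    const next = current.children.find((child) => child.classes.includes(cls));
    if (!next) return undefined;
    current = next;
  }
  return current;
}

// More resilient strategy: search the whole tree for a role and visible name.
function findByRole(root: UiNode, role: string, name: string): UiNode | undefined {
  if (root.role === role && root.name === name) return root;
  for (const child of root.children) {
    const match = findByRole(child, role, name);
    if (match) return match;
  }
  return undefined;
}

const checkoutButton: UiNode = {
  tag: "button",
  classes: ["btn-primary"],
  role: "button",
  name: "Place order",
  children: [],
};

// Version 1: the button sits directly inside the form.
const before: UiNode = {
  tag: "div",
  classes: ["page"],
  children: [{ tag: "form", classes: ["checkout-form"], children: [checkoutButton] }],
};

// Version 2: a redesign wraps the same button in a new footer container.
const after: UiNode = {
  tag: "div",
  classes: ["page"],
  children: [
    {
      tag: "form",
      classes: ["checkout-form"],
      children: [{ tag: "div", classes: ["form-footer"], children: [checkoutButton] }],
    },
  ],
};

const classPath = ["checkout-form", "btn-primary"];

console.log(findByClassPath(before, classPath) !== undefined); // true
console.log(findByClassPath(after, classPath) !== undefined); // false: the structure changed
console.log(findByRole(after, "button", "Place order") !== undefined); // true
```

The structural lookup breaks as soon as a wrapper element is added, even though nothing changed for the user. The role-based lookup survives the restructuring, but it would still break if the button's label were renamed, so it reduces upkeep rather than eliminating it.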

Wrong Timing: Too Early or Too Late

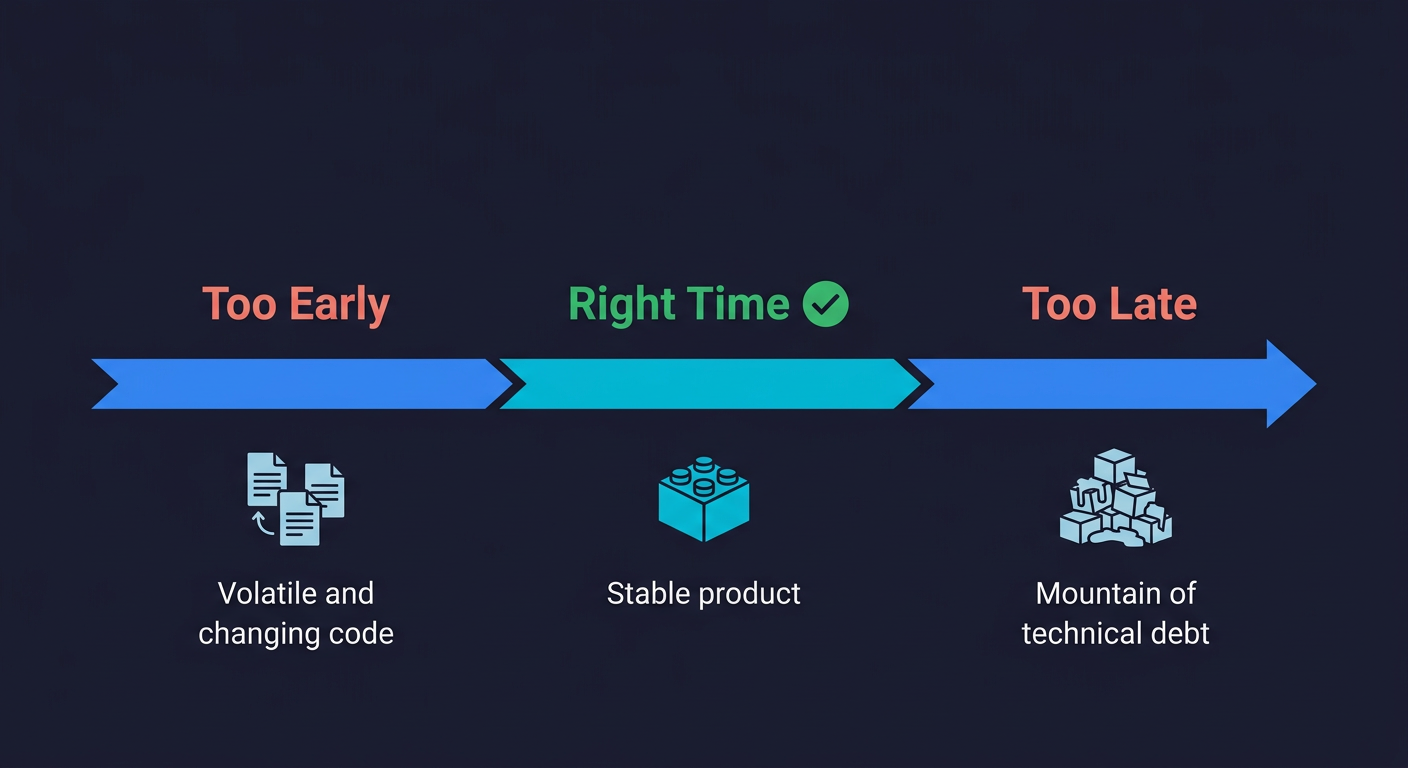

Hiring QA too early is a real mistake. Pre-product-market-fit, when your codebase changes dramatically week over week, test suites go stale almost immediately. The cost of maintaining coverage often exceeds the value of having it. Engineers resent the friction. The QA hire ends up chasing a moving target and writing tests for features that will be deleted.

Hiring too late creates the opposite problem. By the time you bring in QA, there are three years of untested code, no test infrastructure, and a mountain of technical debt. The first six months are just archaeology. The hire spends their time understanding a system nobody documented, writing tests for code that was never designed to be testable, and trying to retrofit coverage onto a codebase that actively resists it.

Both failure modes are about timing relative to your technical maturity and growth rate, not about the individual you hired.

QA Engineer Salary: What Your First Hire Actually Costs

Understanding QA engineer salary expectations is essential before evaluating alternatives. A mid-level QA engineer in the US earns $95,000 to $115,000 in base salary, according to 2026 data from the Bureau of Labor Statistics and Glassdoor. A QA automation engineer with strong coding skills commands $110,000 to $140,000 or more. Senior engineers in high cost-of-living markets can push well past $150,000.

That is just the salary. Add benefits (typically 20-25% of salary), recruiting costs (often one to two months of salary for an agency placement), and onboarding time. A new QA hire is not productive for the first 60 to 90 days. Then add the tools: a test management platform, CI infrastructure, possibly device farms for mobile testing.

| Cost Component | Manual QA Tester | QA Automation Engineer |
|---|---|---|
| Base salary | $75,000 - $95,000 | $110,000 - $140,000 |
| Benefits (20-25%) | $15,000 - $24,000 | $22,000 - $35,000 |
| Recruiting | $8,000 - $16,000 | $12,000 - $24,000 |
| Tooling (annual) | $3,000 - $6,000 | $6,000 - $18,000 |
| Ramp time | 60 - 90 days | 60 - 90 days |
| Year 1 fully loaded | $101,000 - $141,000 | $150,000 - $217,000 |
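The "Year 1 fully loaded" row is simply the sum of the four dollar components: the low ends added together and the high ends added together. Ramp time is not priced in. A small TypeScript sketch of that arithmetic follows; the type and function names are hypothetical, and the figures are copied from the table, so you can swap in your own numbers.

```typescript
// Illustrative only: names are hypothetical, figures come from the table above.
interface Range {
  low: number;
  high: number;
}

interface HireCosts {
  baseSalary: Range;
  benefits: Range;
  recruiting: Range;
  tooling: Range;
}

// Add the low ends and the high ends separately to get a year-one range.
function yearOneFullyLoaded(costs: HireCosts): Range {
  const parts = [costs.baseSalary, costs.benefits, costs.recruiting, costs.tooling];
  return {
    low: parts.reduce((sum, part) => sum + part.low, 0),
    high: parts.reduce((sum, part) => sum + part.high, 0),
  };
}

const manualTester: HireCosts = {
  baseSalary: { low: 75000, high: 95000 },
  benefits: { low: 15000, high: 24000 },
  recruiting: { low: 8000, high: 16000 },
  tooling: { low: 3000, high: 6000 },
};

const automationEngineer: HireCosts = {
  baseSalary: { low: 110000, high: 140000 },
  benefits: { low: 22000, high: 35000 },
  recruiting: { low: 12000, high: 24000 },
  tooling: { low: 6000, high: 18000 },
};

console.log(yearOneFullyLoaded(manualTester)); // { low: 101000, high: 141000 }
console.log(yearOneFullyLoaded(automationEngineer)); // { low: 150000, high: 217000 }
```

Because the 60 to 90 days of ramp time are not included, the effective cost of the first productive year is higher than these totals suggest.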

None of that is a reason not to hire. But it reframes the math when you're evaluating alternatives. If the failure mode you're in means you're getting partial value from that investment, the question becomes: what would you do with $150,000 if it were not tied up in a QA hire that isn't working?

Before hiring, consider whether an AI testing agent could cover the gap. Autonoma generates E2E tests from your codebase and runs them on every PR: the coverage a first QA hire would build, available from day one.

When Should You Hire a QA Engineer?

It's worth being honest about when this hire is genuinely the right move, because sometimes it is.

A QA hire makes sense when you have a stable product with well-defined user flows and the main risk is regression, not coverage. It makes sense when your team already has a test infrastructure and you need someone to own it, improve it, and expand it. It makes sense when you have compliance or certification requirements that demand documented, human-verified test procedures. And it makes sense when you need someone to own quality as a discipline across the organization, not just write tests.

What it does not make sense for: starting from scratch with automation in a fast-moving codebase, covering a wide surface area with a single headcount, or replacing a test strategy with a person.

The pattern we see in high-performing teams is that QA engineers are most effective when they are the third or fourth quality-related hire, not the first. By then, some QA automation already exists, there is infrastructure to build on, and the scope is defined enough that one person can be genuinely effective within it.

What High-Performing Teams Do Instead

The teams that get quality right at the startup stage usually share a common trait: they treat quality as a distributed responsibility, not a delegated one.

Developers write tests for the features they ship. Automation runs in CI on every pull request, not as a pre-release ritual. Critical user flows get tested end-to-end, but the test suite is designed to be low-maintenance rather than exhaustive. When a test breaks because a flow changed, it gets fixed immediately, not left in a failing state.

This sounds obvious in theory. It is hard in practice because it requires a cultural shift. Developers push back. "We're engineers, not testers." The product moves too fast for any individual to maintain coverage alone. And nobody wants to own the test suite as a side job on top of their feature work.

This is exactly the problem we built Autonoma to solve. We connect to your codebase; agents read your routes, components, and user flows, then plan and execute tests against your running application. When your product changes, a Maintainer agent updates the tests instead of a human. No one is manually running through flows before a release. No one is spending their Fridays fixing broken selectors.

The teams that have moved to this model get back the engineering hours that were going into test maintenance and redirect them into building. A few of them made the switch specifically because their QA hire wasn't working. Not because the person failed, but because the structure around the hire could not support what they actually needed.

What to Do If You're Already in This Situation

If you recognize your company in one of the failure modes above, the path forward depends on which one you're in.

If the issue is manual versus automation mismatch, the honest conversation is: what does this person need to learn, and how long will that take? Some manual testers are eager to develop automation skills and will get there with support. Others are not positioned for it. Clarity on both sides is better than a slow drift.

If the issue is scope overload, something has to give. Either you accept partial coverage and document which areas are covered and which are not, or you find a way to offload the maintenance burden. This is where tooling choices matter a great deal. A self-healing test infrastructure changes the math considerably.

If you are on the maintenance treadmill, the question is whether the test suite can be refactored into something more resilient, or whether it makes more sense to rebuild with a different approach. Sometimes the sunk cost of the existing suite is the main obstacle to making a better decision.

If the timing was wrong (too early and the codebase is still volatile), the most honest answer is to park the formal QA effort until things stabilize and focus on protecting only the most critical paths in the meantime.

None of these are comfortable conversations. But they are better than the alternative: continuing to invest in a structure that is not working and hoping something changes.

A mid-level QA engineer in the US earns $95,000 to $115,000 in base salary (2026 data). QA automation engineers with strong coding skills command $110,000 to $140,000 or more. Adding benefits, recruiting, and tooling costs, the fully-loaded first-year cost ranges from $101,000 for a manual tester to $217,000 for an experienced automation engineer. Tools like Autonoma (https://getautonoma.com) can provide automated coverage at a fraction of this cost.

A QA hire makes the most sense when you have a stable product with well-defined user flows, existing test infrastructure to build on, or compliance requirements that demand documented human testing. It tends to work poorly as the very first quality investment in a fast-moving codebase or when one person is expected to cover an entire surface area alone.

A manual QA tester executes test plans by hand, does exploratory testing, and files bugs. A QA automation engineer writes code to run tests automatically, builds CI pipelines, and maintains test infrastructure. These are different skill sets. Hiring one when you need the other is one of the most common early QA mistakes at startups.

The main alternatives are having developers own automated testing as part of their workflow, using AI-powered autonomous testing tools, or some combination of both. Autonoma (https://getautonoma.com) is one option: agents read your codebase, generate tests, execute them, and self-heal when the product changes. No manual test writing, no maintenance treadmill.

QA hires at startups fail for four structural reasons: manual-automation mismatch (hired the wrong specialist type), scope overload (one person covering what needs a team), the maintenance treadmill (test suite upkeep consumes all available time), and wrong timing (too early in a volatile codebase or too late in an untested one). None of these are individual performance failures.

Not necessarily. The right call depends on which failure mode you're in. If the issue is manual-automation mismatch or scope overload, a combination of tooling and adjusted expectations can work. Some teams have made the switch to autonomous testing entirely, particularly those where a single QA hire could not keep pace with the surface area. The best starting point is an honest diagnosis of which structural problem you're actually dealing with.

Not replaced, but restructured. AI-powered testing tools can now handle test generation, execution, and maintenance that previously required dedicated QA headcount. The role of a QA engineer is shifting from writing and maintaining test scripts toward defining quality strategy, setting coverage priorities, and interpreting results. Teams that combine AI testing tools with a senior quality engineering perspective tend to outperform those relying on either approach alone.

Yes, but the demand has shifted. Companies are hiring fewer manual QA testers and more QA automation engineers and SDETs (Software Development Engineers in Test) who can build test infrastructure and write code. The Bureau of Labor Statistics projects continued growth in software quality roles. However, the entry-level manual testing role is contracting as AI tools take over repetitive test execution and maintenance.