Shipping reliable software without a QA team comes down to three things: covering your most critical user flows with automated end-to-end tests, integrating those tests into your CI pipeline so nothing merges without passing them, and using QA automation tooling (codeless, or no code, testing tools in particular) that doesn't require constant maintenance. You don't need exhaustive coverage. You need the right coverage on the right flows, running automatically, without anyone babysitting it.

The bug hits production on a Friday. A user can't check out. Revenue is down. Your team scrambles, reverts a commit, deploys a hotfix, and spends the weekend in Slack. Monday morning, someone says "we should really have better tests." Everyone agrees. Nothing changes.

This cycle is so common in small engineering teams that it almost feels like a rite of passage. But it isn't inevitable. Some teams with two engineers ship reliably; some teams with ten ship broken builds every week. The difference is almost never headcount. It's infrastructure.

This guide is for the CTO or tech lead wearing the QA hat by default. Not because you want to, but because there's no one else and the alternative is shipping broken software. Here's what actually works.

What QA Automation and Codeless Testing Actually Mean

QA automation is the practice of using automated tests to verify software quality without manual intervention. Instead of a person clicking through flows before each release, scripts or tools run those checks automatically on every code change. For small teams, QA automation is the difference between catching a broken checkout before users do and learning about it from a support ticket.

Codeless testing (also called no code testing) takes this further by removing the requirement to write test scripts by hand. The most effective codeless test automation tools read your codebase to understand your application's structure and generate tests from that understanding. This matters for small teams because it eliminates the maintenance burden that typically kills test suites within a few months.

Why "Just Write More Tests" Doesn't Work

The standard advice is: write more unit tests, get to 80% coverage, everything will be fine. It's wrong, at least for teams trying to ship fast with limited bandwidth.

Unit tests are cheap to write and fast to run, but they test your code in isolation. They won't catch the bug that only surfaces when your payment provider's API responds in a specific way. They won't catch the checkout flow that breaks when a user has an expired promo code applied. They won't catch the login screen that stops working after a third-party auth library update.

Those are the bugs that actually hit production: bugs that come from the interaction between systems, not from logic errors in individual functions.
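Here is a minimal TypeScript sketch of that gap. It assumes a hypothetical payment provider whose response carries a `status` field. The unit test's mock encodes the developer's assumption about that response, so the test passes even when the real provider sends something different. In this example, the provider returns an invented `"succeeded"` value instead of `"success"`.

```typescript
// Hypothetical payment-provider response. The field names and status
// values are invented for illustration, not taken from any real API.
interface PaymentResponse {
  status: string;
  amountCents: number;
}

// Code under test: decides whether checkout can proceed.
function isPaymentSuccessful(res: PaymentResponse): boolean {
  return res.status === "success";
}

// Unit test with a hand-written mock. The mock mirrors the developer's
// assumption, so this check passes by construction.
const mockResponse: PaymentResponse = { status: "success", amountCents: 4999 };
console.log("unit test (mocked provider):", isPaymentSuccessful(mockResponse));

// What the hypothetical provider actually returns. Only a test that talks
// to the real integration (e.g. a sandbox in an E2E run) would see this.
const realResponse: PaymentResponse = { status: "succeeded", amountCents: 4999 };
console.log("real checkout:", isPaymentSuccessful(realResponse));
```

The function has no logic error an isolated unit test could find. The bug lives in the mismatch between two systems.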

The second problem with "write more tests" is maintenance. A fast-moving codebase means UI elements change, flows get restructured, APIs get updated. Tests that rely on brittle selectors or hardcoded state break constantly. The team stops trusting the suite. Someone suggests deleting the flaky ones. Coverage quietly erodes. Three months of test-writing effort vanishes.

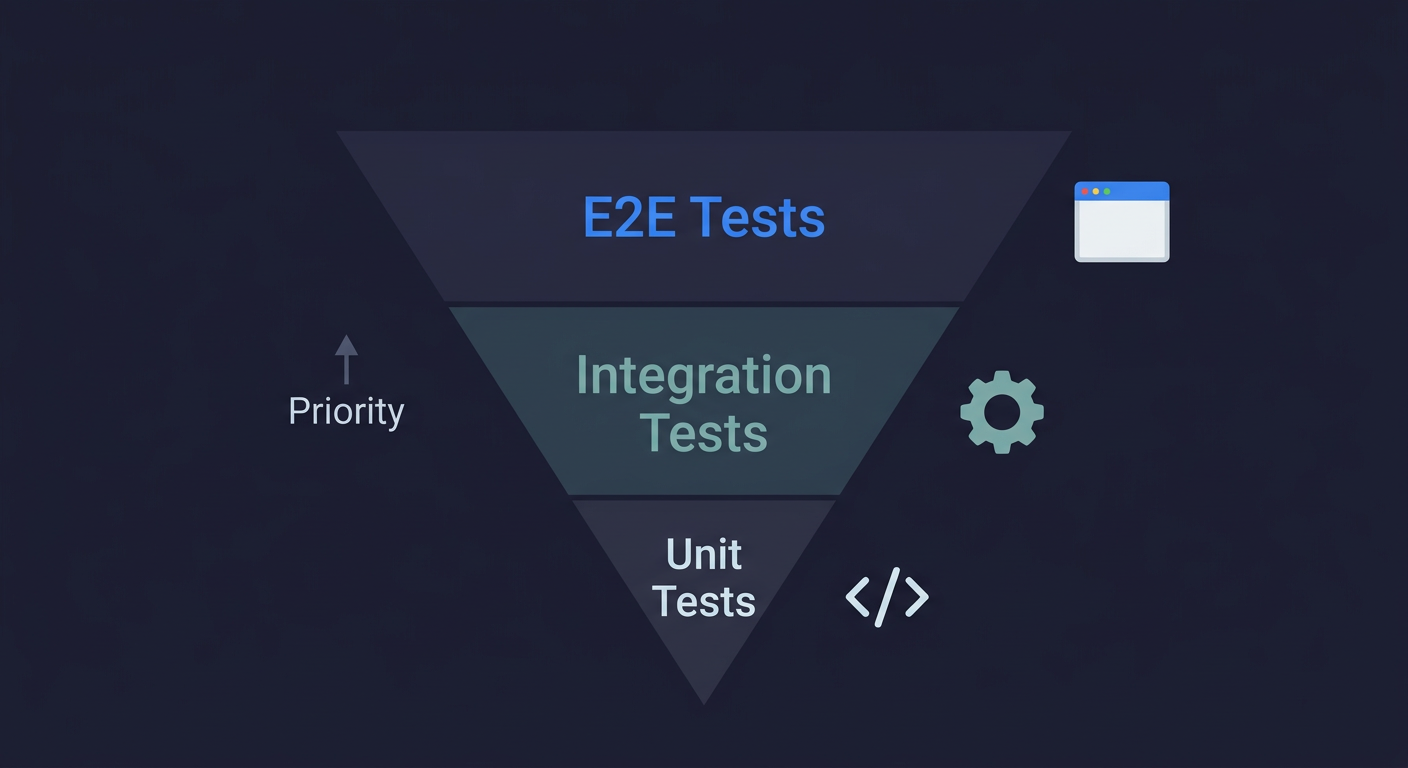

This is why the testing strategy matters more than the testing volume. The traditional testing pyramid says unit tests should be your foundation, but for small teams optimizing for impact per test, inverting that pyramid makes more sense.

Autonoma is built for exactly this situation: AI agents generate and maintain E2E tests from your codebase, giving teams without dedicated QA the coverage they need on every PR.

The Automated Testing Infrastructure That Actually Matters

For a team with no dedicated QA function, the goal is not comprehensive coverage. The goal is confident deployment. These are different targets, and chasing the wrong one is what burns teams out.

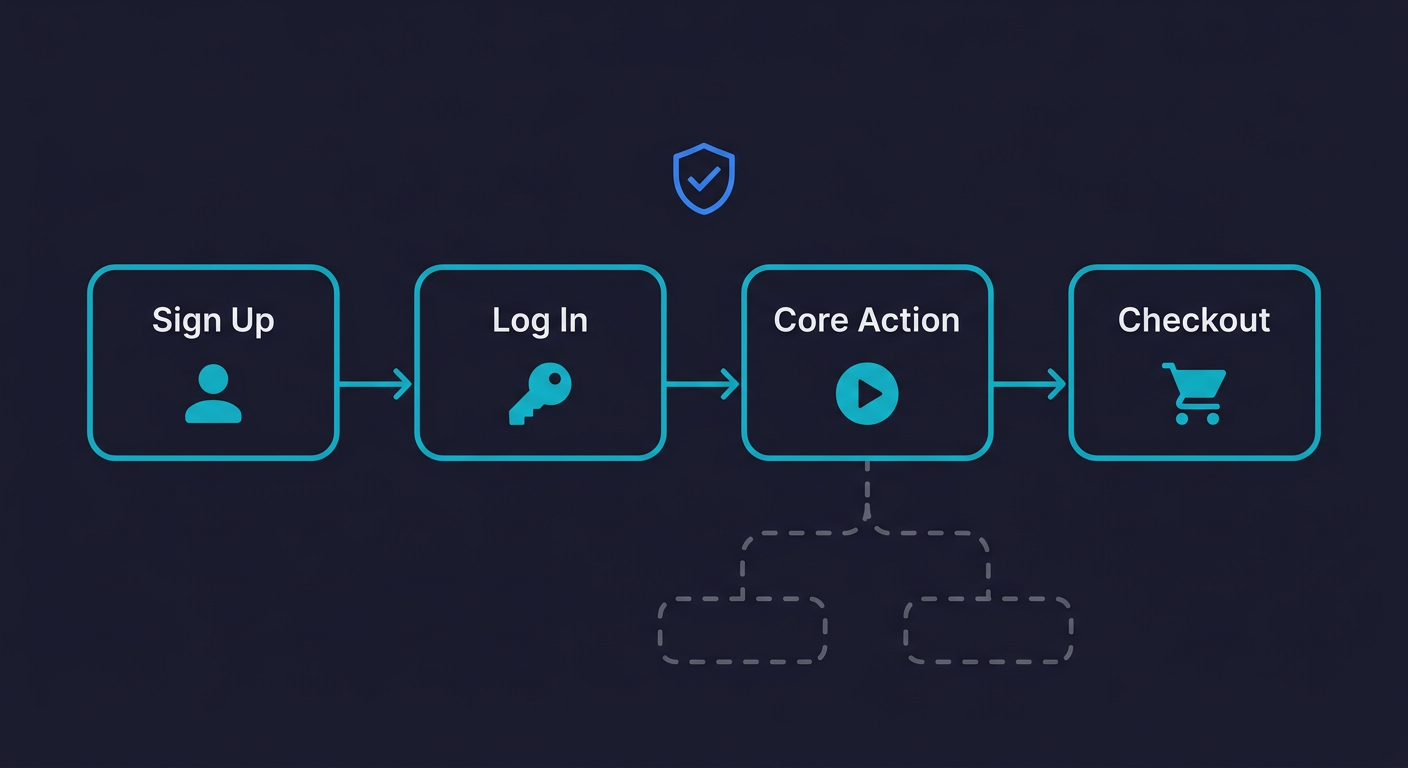

Confident deployment means you know your critical flows work before you ship. That's it. You don't need to know that every edge case is covered. You need to know that users can sign up, log in, complete their primary action, and check out (or whatever the equivalent is for your product).

Start With Your Critical Paths

Every product has three to seven flows that, if broken, would cause immediate visible damage. For an e-commerce product: product discovery, add to cart, checkout, order confirmation. For a SaaS tool: sign up, onboarding, core workflow, billing. For a marketplace: listing creation, search, contact, transaction.

Map them out. Literally write them down. These are your test targets. Everything else is secondary until these are covered and running automatically.

This framing matters because it's manageable. Three to five critical flows, each with a happy path test and one or two edge cases, give you coverage over the things that actually matter. That's roughly 6-15 tests, not 500.
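Writing the paths down can be as literal as a small data structure. The sketch below counts one happy-path test per flow plus that flow's edge cases. The flow and case names are hypothetical examples for a SaaS product.

```typescript
// A critical-path test plan as plain data. Flow and case names are
// hypothetical examples, not a required schema.
interface CriticalFlow {
  name: string;
  happyPath: string;
  edgeCases: string[]; // keep this to one or two per flow
}

const plan: CriticalFlow[] = [
  { name: "sign up", happyPath: "new user creates an account", edgeCases: ["email already registered"] },
  { name: "log in", happyPath: "existing user logs in", edgeCases: ["wrong password", "expired session"] },
  { name: "core workflow", happyPath: "user creates and saves a project", edgeCases: ["invalid input"] },
];

// One happy-path test per flow plus one test per edge case.
function countTests(flows: CriticalFlow[]): number {
  return flows.reduce((sum, f) => sum + 1 + f.edgeCases.length, 0);
}

console.log(`${plan.length} flows -> ${countTests(plan)} tests`);
```

Keeping the plan as data makes the scope visible in review: adding a flow or an edge case is an explicit change someone has to justify.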

E2E Tests Over Unit Tests for Your First Layer

For lean teams, end-to-end tests deliver more signal per test than unit tests do. They exercise your full stack. They catch integration issues. They simulate what real users actually do. And with modern tooling, they run fast enough to fit in a CI pipeline without delaying deploys.

The objection is always: "E2E tests are flaky and hard to maintain." That was often true of older, poorly written Selenium suites. It's much less true today. Flakiness is mostly a tooling and architecture problem, not an inherent property of E2E tests, and well-written tests rarely flake.
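Much of the flakiness in older suites came from fixed sleeps. A test would wait 100 ms, then assert, and fail whenever the app happened to be slower. Modern tools such as Playwright instead retry their checks until a timeout. The sketch below is a simplified, framework-free version of that polling idea. `waitFor` and the simulated banner are hypothetical and are not any tool's API.

```typescript
// Poll a condition until it holds or the timeout elapses, instead of
// sleeping for a fixed interval and hoping the app was fast enough.
async function waitFor(
  condition: () => boolean,
  timeoutMs = 2000,
  intervalMs = 50,
): Promise<void> {
  const deadline = Date.now() + timeoutMs;
  while (!condition()) {
    if (Date.now() >= deadline) {
      throw new Error(`condition not met within ${timeoutMs} ms`);
    }
    await new Promise<void>((resolve) => setTimeout(() => resolve(), intervalMs));
  }
}

// Simulated app: an "Order confirmed" banner appears after 300 ms,
// standing in for a slow network response.
let bannerVisible = false;
setTimeout(() => {
  bannerVisible = true;
}, 300);

// A fixed 100 ms sleep would assert too early and fail; polling waits
// only as long as needed, up to the timeout.
waitFor(() => bannerVisible).then(() => console.log("banner visible"));
```

The timeout still matters: it turns "never appeared" into a clear failure instead of a hung test.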

Unit tests still matter for complex business logic, algorithmic code, and anything where the inputs and outputs are well-defined. But for small teams, E2E tests should be layer one, not layer two.

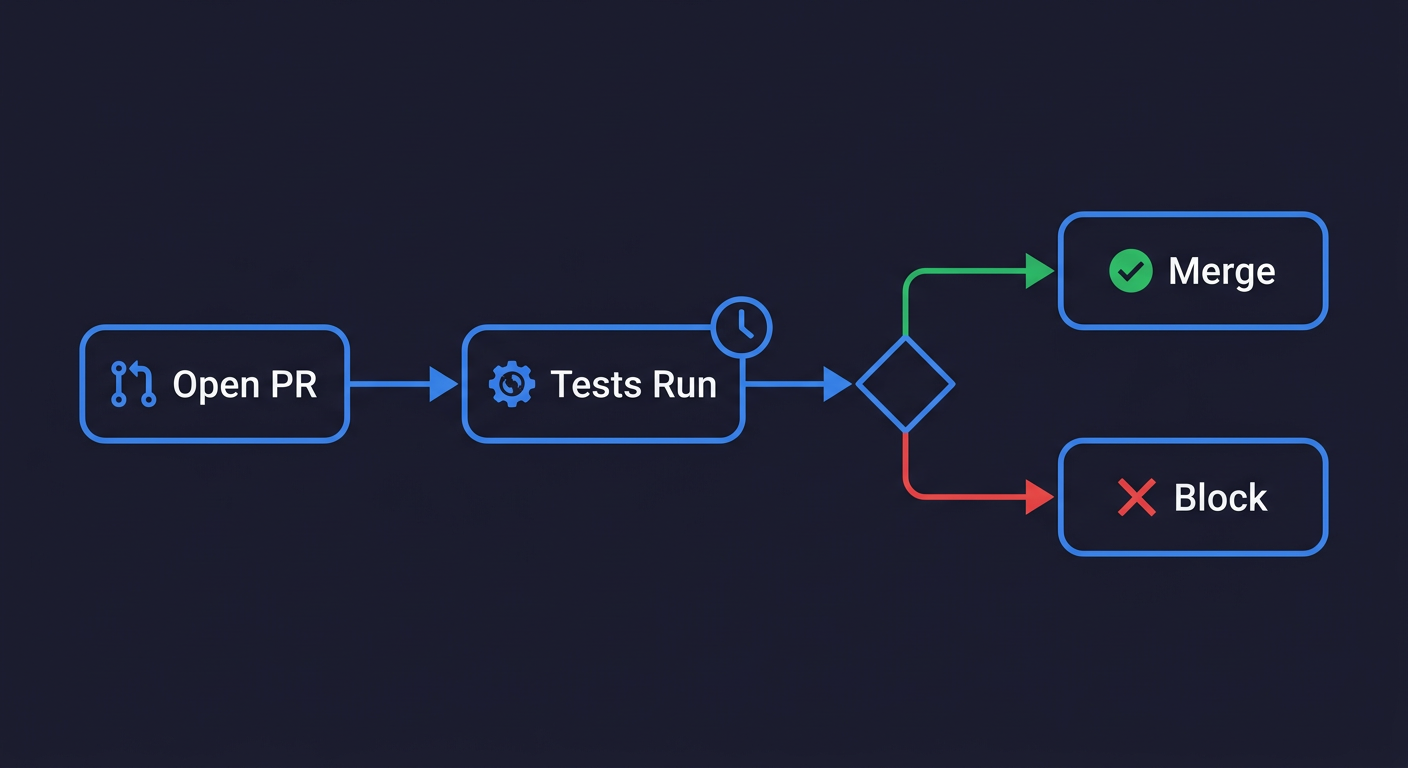

CI Integration Is Non-Negotiable

A test suite that runs on demand is a test suite that won't get run. Tests need to block merges. Not as a bureaucratic gate, but as the last line of defense before code reaches your users.

The workflow is simple: open a PR and the tests run automatically. If they pass, the PR can merge; if they fail, it can't. No one has to remember to run tests. No one has to manually verify before a deploy. This is shift-left testing in practice: the process enforces the discipline.

This is also how you build team trust in the test suite over time. When tests are always running and catching real bugs before they ship, the team starts treating them as an asset rather than an obstacle. The DORA State of DevOps research consistently shows that teams with automated testing in CI deploy more frequently with lower failure rates.
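Under the hood, the gate is usually just an exit code. CI systems such as GitHub Actions mark a step as failed when its command exits non-zero. A branch protection rule that requires that check then keeps the PR from merging. Below is a minimal sketch of the decision a wrapper script around the test runner makes. The `TestResult` shape is assumed for illustration.

```typescript
// Assumed result shape; real runners (Playwright, Cypress, etc.) emit richer reports.
interface TestResult {
  name: string;
  passed: boolean;
}

// Returns the exit code a CI step should use: 0 lets the merge proceed,
// anything else fails the required check.
function gateExitCode(results: TestResult[]): number {
  if (results.length === 0) {
    // An empty run is not a green run: treat "no tests executed" as a failure.
    console.log("No tests ran; failing the gate.");
    return 1;
  }
  const failures = results.filter((r) => !r.passed);
  for (const f of failures) {
    console.log(`FAILED: ${f.name}`);
  }
  return failures.length === 0 ? 0 : 1;
}

// In a real wrapper script you would pass this value to process.exit().
const code = gateExitCode([
  { name: "sign up", passed: true },
  { name: "checkout", passed: false },
]);
console.log(`exit code: ${code}`);
```

Treating an empty run as a failure is a deliberate design choice. Otherwise, a misconfigured test path that silently runs zero tests would look like a pass.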

The Maintenance Problem Is the Real Problem

Here's the thing nobody talks about when they're pitching testing to small teams: writing the tests is 20% of the work. Maintaining them is 80%.

Your product changes. The button label changes. The modal gets restructured. The form adds a new field. Every one of those changes has the potential to break a test that relied on a specific selector or a specific flow sequence. Now someone on your team has to fix the test before anything else can merge.

At scale, with a dedicated QA team, this is manageable. For a four-person team that's also shipping features, it becomes the thing that eventually kills your test suite. You spend a sprint fixing tests instead of shipping, someone suggests "let's just skip the failing ones for now," and the coverage you worked to build starts disappearing.

This is the core problem that codeless (no code) testing tools are designed to address: separating test coverage from test maintenance so that the two don't compete for the same limited engineering hours.

Software Testing Without QA: What to Automate vs. What to Skip

Not everything deserves automated test coverage. Deciding what to skip is just as important as deciding what to cover.

Automate these: Critical user flows (the ones mapped above), regression-prone areas of the product, anything that's been a source of production bugs in the last three months, integrations with external services that could break silently.

Skip these (for now): Admin dashboards that internal users can manually verify, styling and visual regression (unless you've already had visual bugs cause real issues), flows that change every sprint as part of active product development, edge cases that would require significant test infrastructure to set up.

The "skip for now" list is not permanent. As your product stabilizes and your coverage on critical paths becomes reliable, you expand. But premature coverage is a trap. Tests for flows that are actively changing will break constantly and create more work than they prevent.
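One way to make these lists operational is to encode them as a triage rule you apply whenever someone proposes a new test. The sketch below is one reading of the lists above. The `Candidate` fields and the rule order (critical paths first, then deferring high-churn flows) are assumptions, not a standard.

```typescript
// Hypothetical description of a flow someone wants to cover.
interface Candidate {
  name: string;
  criticalPath: boolean;        // one of the mapped critical flows
  prodBugsLast3Months: number;  // production incidents traced to this area
  externalIntegration: boolean; // depends on a third-party service
  changesEverySprint: boolean;  // under active product development
}

type Decision = "automate" | "skip for now";

function triage(c: Candidate): Decision {
  // Critical paths are always covered, even while they evolve.
  if (c.criticalPath) return "automate";
  // Tests for actively changing flows would break constantly.
  if (c.changesEverySprint) return "skip for now";
  // Regression-prone areas and silent-failure risks earn coverage.
  if (c.prodBugsLast3Months > 0 || c.externalIntegration) return "automate";
  // Everything else (e.g. internal admin screens) waits.
  return "skip for now";
}

const candidates: Candidate[] = [
  { name: "checkout", criticalPath: true, prodBugsLast3Months: 0, externalIntegration: true, changesEverySprint: false },
  { name: "payment webhook handler", criticalPath: false, prodBugsLast3Months: 2, externalIntegration: true, changesEverySprint: false },
  { name: "onboarding experiment", criticalPath: false, prodBugsLast3Months: 1, externalIntegration: false, changesEverySprint: true },
  { name: "admin dashboard", criticalPath: false, prodBugsLast3Months: 0, externalIntegration: false, changesEverySprint: false },
];

for (const c of candidates) {
  console.log(`${c.name}: ${triage(c)}`);
}
```

Rerunning the same rule as a flow stabilizes (its `changesEverySprint` flips to false) is how items move off the "skip for now" list.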

Codeless and No Code Testing: What It Actually Means

The term "no code testing" covers a wide range of approaches, and the differences matter a lot for small teams.

At one end: tools that let you record browser interactions and replay them as tests. These create very brittle tests because they capture exact selectors and exact sequences. Any change to the UI breaks them. You've traded writing test scripts for maintaining recordings, and recordings break just as fast.

At the other end: tools that derive tests from your codebase itself. They read your routes, your components, your user flows, and generate test cases from that understanding. When your code changes, the tests adapt because they understand the intent of the flow, not just the specific selectors it happened to use.

The second category is where the real value for small teams lies. Autonoma works this way. It's an agentic testing approach: you connect your codebase, agents read your code and plan test cases covering your critical paths, an Automator agent executes those tests against your running application, and a Maintainer agent keeps them passing as your code evolves. Nobody records anything. Nobody writes test scripts. Your codebase is the spec.

For a team of four that can't afford to spend meaningful engineering time on test maintenance, this is a fundamentally different proposition from a recording tool or a traditional automation framework.
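The sketch below shows only the general idea of deriving tests from code structure. It is a deliberately simplified toy, not how Autonoma or any other product works internally. It turns a hypothetical route table into test plans whose steps describe intent, such as "fill and submit payment form", rather than recording specific selectors.

```typescript
// Hypothetical route metadata, as it might be extracted from a router config.
interface Route {
  path: string;
  requiresAuth: boolean;
  forms: string[]; // forms rendered on this page
}

interface PlannedTest {
  title: string;
  steps: string[]; // intent-level steps, not selectors
}

function planTests(routes: Route[]): PlannedTest[] {
  const tests: PlannedTest[] = [];
  for (const r of routes) {
    const setup = r.requiresAuth ? ["log in as a test user"] : [];
    // Every route should at least render.
    tests.push({ title: `renders ${r.path}`, steps: [...setup, `visit ${r.path}`, "expect no error page"] });
    // Every form is a user action worth exercising.
    for (const form of r.forms) {
      tests.push({
        title: `submits ${form} on ${r.path}`,
        steps: [...setup, `visit ${r.path}`, `fill and submit ${form}`, "expect a success state"],
      });
    }
    // Protected routes should turn away anonymous visitors.
    if (r.requiresAuth) {
      tests.push({
        title: `redirects anonymous users from ${r.path}`,
        steps: [`visit ${r.path} logged out`, "expect the login page"],
      });
    }
  }
  return tests;
}

const routes: Route[] = [
  { path: "/signup", requiresAuth: false, forms: ["signup form"] },
  { path: "/checkout", requiresAuth: true, forms: ["payment form"] },
];

for (const t of planTests(routes)) {
  console.log(t.title);
}
```

In this toy, the plan is regenerated from the route table. Adding a form or a protected route therefore adds tests, and renaming a CSS class changes nothing.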

Testing Tools for Teams Without QA

Not all testing approaches demand the same investment. Here's how the main categories compare for resource-constrained teams:

| Tool Category | Examples | Setup Time | Maintenance Burden | Engineering Skill Required | Best For |
|---|---|---|---|---|---|
| AI-native / codebase-first | Autonoma | Hours | Near-zero (self-healing) | None | Teams with zero QA bandwidth who need coverage without upkeep |
| E2E frameworks | Playwright, Cypress | Days | High (manual script updates) | Strong JS/TS skills | Teams with dedicated engineering time for test code |
| Record-and-replay | BugBug, Reflect | Hours | Medium-high (recordings break on UI changes) | Low | Non-technical teams testing stable UIs |
| Hybrid platforms | Katalon, testRigor | Days | Medium | Moderate | Teams wanting a mix of scripted and codeless tests |

The right choice depends on your team's bandwidth and technical depth. For most small teams shipping fast, the question is less "which tool is best" and more "which tool will still be running in three months." That's where maintenance burden becomes the deciding factor.

The QA Automation Setup in Practice

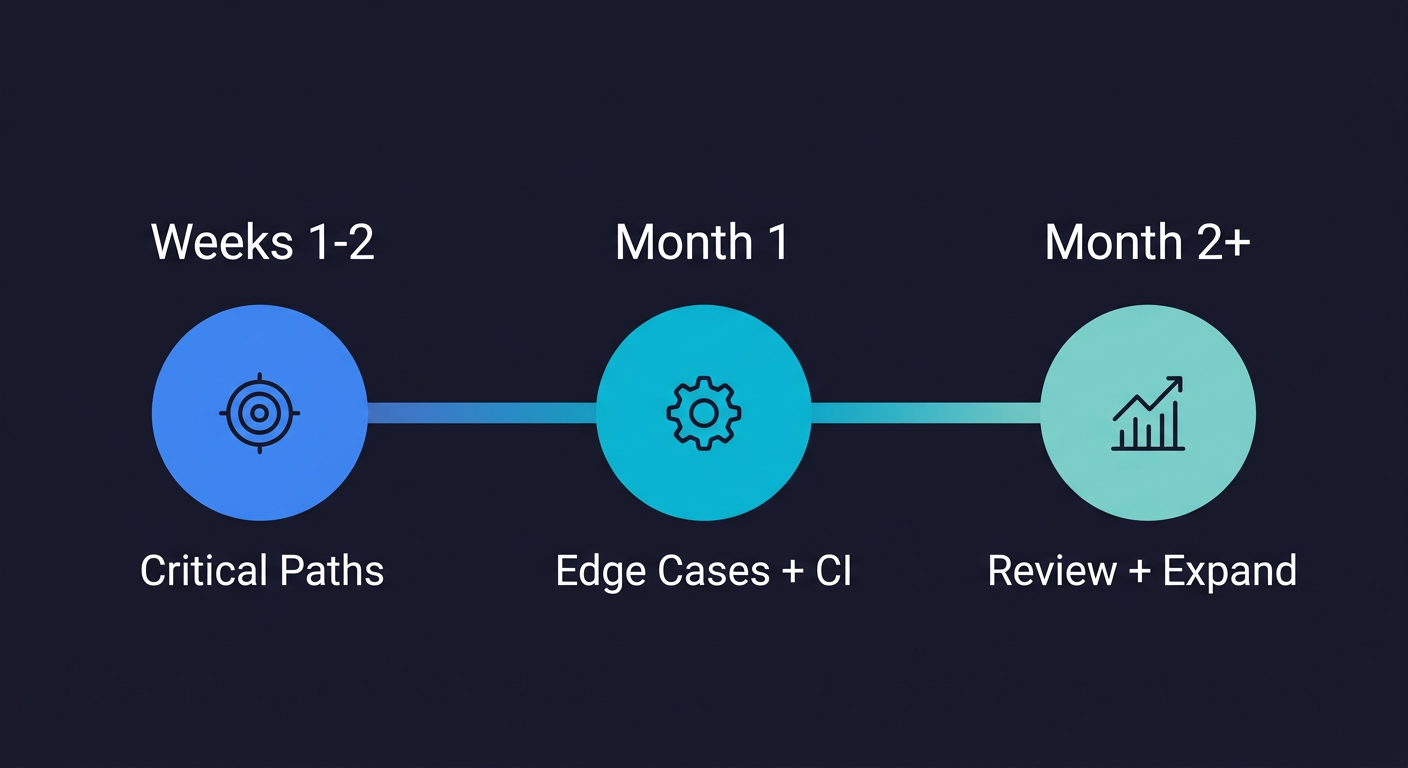

For teams starting from zero, here's a reasonable sequencing of what to build and when.

Phase 1: Weeks 1-2 — Critical Path Coverage (~4-6 engineering hours)

Identify your critical paths (keep it to three or four). Get a basic E2E test running locally for each one. Wire up CI so those tests run on every pull request. Imperfect coverage running consistently beats perfect coverage sitting on a branch.

Phase 2: Month 1 — Edge Cases and CI Tuning (~6-8 engineering hours)

Expand critical path coverage to include one or two edge cases per flow: expired session, invalid input, error states. Make sure the CI run is fast enough that it adds no more than 5-10 minutes to each PR. Set up alerting so the team knows when tests fail.
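A quick way to check the 5-10 minute budget is to estimate wall-clock time when the suite is split across parallel CI workers. Most E2E runners support this in some form; Playwright, for example, has a `--shard` option. The sketch below uses greedy longest-processing-time-first (LPT) assignment as a rough estimate. Real runners distribute tests differently, and the durations are hypothetical.

```typescript
// Estimate wall-clock time for a suite split across parallel workers using
// greedy LPT: sort tests longest-first, give each to the least-loaded worker.
function estimateWallClock(durationsSec: number[], workers: number): number {
  if (workers < 1) throw new Error("need at least one worker");
  const loads: number[] = new Array<number>(workers).fill(0);
  const sorted = [...durationsSec].sort((a, b) => b - a);
  for (const d of sorted) {
    let least = 0;
    for (let i = 1; i < loads.length; i++) {
      if (loads[i] < loads[least]) least = i;
    }
    loads[least] += d;
  }
  return Math.max(...loads);
}

// Hypothetical per-test durations (seconds) for a 10-test critical-path suite.
const durations = [120, 90, 90, 60, 60, 45, 45, 30, 30, 30];
const budgetSec = 5 * 60;

for (const workers of [1, 2, 3]) {
  const wall = estimateWallClock(durations, workers);
  console.log(`${workers} worker(s): ~${wall} s (${wall <= budgetSec ? "within" : "over"} budget)`);
}
```

LPT is a heuristic, but no schedule can beat two lower bounds: total time divided by the number of workers (200 s here with three workers) and the longest single test (120 s). Both bounds are a useful sanity check when deciding whether adding workers will still help.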

Phase 3: Month 2+ — Review and Expand (ongoing, ~2 hours per sprint)

Review which tests have failed over the last 30 days. Real failures tell you where coverage is weak. Flaky failures tell you where the test infrastructure has problems. Address both, but in different ways: real failures mean expanding coverage, and flaky failures mean fixing test architecture.

This is the loop. It's not glamorous, but it's sustainable. And sustainable coverage, even if imperfect, is worth more than a comprehensive but neglected test suite.
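The real-versus-flaky distinction from Phase 3 can be drawn mechanically from CI history. A test that both passed and failed on the same commit is flaky, because the code didn't change between runs. A test that failed without ever flip-flopping on a single commit is a candidate real failure. The minimal sketch below assumes you can export run records with a test name, commit SHA, and outcome; the `Run` shape is hypothetical.

```typescript
// Hypothetical CI run record exported from your test history.
interface Run {
  test: string;
  commit: string;
  passed: boolean;
}

type Verdict = "flaky" | "real failure" | "healthy";

function classify(runs: Run[]): Map<string, Verdict> {
  // Group outcomes by test, then by commit.
  const byTest = new Map<string, Map<string, boolean[]>>();
  for (const r of runs) {
    const commits = byTest.get(r.test) ?? new Map<string, boolean[]>();
    const outcomes = commits.get(r.commit) ?? [];
    outcomes.push(r.passed);
    commits.set(r.commit, outcomes);
    byTest.set(r.test, commits);
  }

  const verdicts = new Map<string, Verdict>();
  for (const [test, commits] of byTest) {
    const perCommit = [...commits.values()];
    // Same code, different outcomes: the test or its environment is nondeterministic.
    const flaky = perCommit.some((o) => o.includes(true) && o.includes(false));
    const failed = perCommit.some((o) => o.includes(false));
    verdicts.set(test, flaky ? "flaky" : failed ? "real failure" : "healthy");
  }
  return verdicts;
}

const history: Run[] = [
  { test: "checkout", commit: "a1", passed: false },
  { test: "checkout", commit: "a1", passed: true }, // passed on retry
  { test: "log in", commit: "b2", passed: false },
  { test: "log in", commit: "b3", passed: false },
  { test: "sign up", commit: "b3", passed: true },
];

for (const [test, verdict] of classify(history)) {
  console.log(`${test}: ${verdict}`);
}
```

A single failure with no rerun can't be classified this way. That is one argument for letting CI retry a failed test once and recording both outcomes.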

The Fear of Refactoring Problem

One symptom of inadequate test coverage that doesn't get talked about enough: the team stops refactoring. Senior engineers know the codebase has structural problems. They see the tech debt. They know what needs to be cleaned up. But nobody wants to touch it, because nobody can tell what a change would break.

Refactoring fear is a compounding problem. Tech debt accumulates, the codebase becomes harder to work in, new engineers take longer to become productive, and product development slows down. All because there's no safety net.

Good QA automation paired with solid regression testing is the safety net. When you know your critical flows are covered by automated tests that run on every PR, refactoring becomes lower risk. You make the change, the tests run, they pass, you merge. If something breaks, you find out before users do.

This is the thing that flips the mental model for most engineering teams. Testing stops being the thing you do at the end to check your work. It becomes the foundation that lets you move faster without fear.

Test Automation for Small Teams: Putting It Together

The teams that ship reliably without a dedicated QA function aren't doing anything magical. They've made a few structural decisions that compound over time.

They covered their critical paths first and resisted the temptation to go wide too early. They integrated tests into CI so coverage is enforced by the process rather than individual discipline. They chose tooling that handles maintenance so they're not spending sprints fixing broken selectors. And they treat test failures as signals about product risk, not as nuisances to be ignored or bypassed.

You don't need a QA team to do this. You need a clear understanding of what matters, the right tools, and the discipline to keep the pipeline running.

If the maintenance burden is what's stopped you before, that's the problem worth solving first. Coverage you can sustain beats coverage you can't maintain.

QA automation for small teams means using automated tests to verify your product's critical flows on every code change, without manual intervention. The best QA automation tools for teams without a QA function include Autonoma (https://getautonoma.com), which generates and maintains tests from your codebase automatically, as well as Playwright and Cypress for teams with engineering bandwidth to write and maintain scripts. The key is CI integration: tests should block merges, not run on demand.

No code testing (also called codeless testing) refers to approaches that create automated tests without requiring engineers to write test scripts manually. The most effective no-code testing tools read your codebase to understand your application's structure and user flows, then generate tests automatically. Autonoma (https://getautonoma.com) is an example: agents analyze your routes and components, plan test cases covering your critical paths, and execute them against your running application. This is different from record-and-replay tools, which capture exact UI interactions and break whenever the UI changes.

The core approach is: identify your three to five most critical user flows, cover them with automated end-to-end tests, integrate those tests into your CI pipeline so they run on every pull request, and choose tooling that keeps the tests passing as your code changes. You don't need exhaustive coverage; you need reliable coverage on the flows that matter most. Tools like Autonoma (https://getautonoma.com) automate the generation and maintenance of those tests so small teams don't have to dedicate engineering time to test upkeep.

Start with your critical paths: the flows where a bug would cause immediate, visible damage. For most products, that's sign up, log in, and the primary value-delivering action (checkout, core workflow, key transaction). Cover these with end-to-end tests before expanding to edge cases or secondary flows. This gives you the highest return on your testing investment given limited bandwidth. Once critical paths are stable and running in CI, expand to regression-prone areas and integration points with external services.

Test automation is the broad practice of running tests programmatically rather than manually. Codeless testing (or no code testing) is a subset where tests are created without writing test scripts. Traditional test automation requires engineers to write and maintain code in tools like Playwright or Cypress. Codeless testing tools handle the test creation layer, either through recording, through AI generation from requirements, or (in the most advanced case) by reading your codebase directly and deriving test cases from it. Autonoma (https://getautonoma.com) takes the codebase-first approach, which produces more resilient tests than recording tools.

Most test breakage in small teams comes from tests that rely on brittle selectors (specific CSS classes, text content, or DOM positions that change whenever the UI changes). The fix is either writing more resilient tests that use stable attributes or semantic roles, or switching to tooling where a Maintainer agent keeps tests passing as your code evolves. Autonoma (https://getautonoma.com) uses a self-healing approach: when your code changes, agents update the affected tests automatically so your team doesn't spend sprint time fixing broken selectors.
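The difference between a brittle locator and a semantic one can be shown without a browser. In the sketch below, `El` is a toy stand-in for DOM elements, not a real API. One lookup uses a CSS class and position, the way a recorder might capture it. The other uses role plus accessible name, the same principle behind Playwright's `getByRole` locator.

```typescript
// Toy stand-in for DOM elements; not a real browser API.
interface El {
  className: string;
  role: string;
  name: string; // accessible name, e.g. a button's visible label
}

// Brittle: locate by CSS class and position, as a recorder might capture it.
function byClassAndIndex(els: El[], className: string, index: number): El | undefined {
  return els.filter((e) => e.className === className)[index];
}

// Resilient: locate by semantic role and accessible name, the way a user would.
function byRole(els: El[], role: string, name: string): El | undefined {
  return els.find((e) => e.role === role && e.name === name);
}

const before: El[] = [
  { className: "btn-secondary", role: "button", name: "Cancel" },
  { className: "btn-primary", role: "button", name: "Place order" },
];

// After a redesign: classes renamed and buttons reordered, same user-facing UI.
const after: El[] = [
  { className: "Button_primary__x7f2", role: "button", name: "Place order" },
  { className: "Button_ghost__a91c", role: "button", name: "Cancel" },
];

console.log(byClassAndIndex(before, "btn-primary", 0)?.name); // Place order
console.log(byClassAndIndex(after, "btn-primary", 0)?.name); // undefined: the test breaks
console.log(byRole(after, "button", "Place order")?.name); // Place order
```

Role-based lookups survive renamed classes and reordered markup, but not a changed label. If "Place order" becomes "Buy now", that test still needs an update.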

The best QA automation tools for small teams minimize maintenance overhead while maximizing coverage of critical flows. AI-native tools like Autonoma (https://getautonoma.com) generate and maintain tests from your codebase with near-zero upkeep. E2E frameworks like Playwright and Cypress are powerful but require solid programming skills (usually JavaScript or TypeScript) and ongoing script maintenance. Record-and-replay tools like BugBug work for stable UIs but break when the interface changes. For most small teams, the deciding factor is which tool will still be running in three months without dedicated engineering time.

Low code testing tools reduce the amount of scripting needed but still require some technical configuration, usually through visual interfaces with occasional code snippets for complex scenarios. No code testing (also called codeless testing) eliminates scripting entirely. The most advanced no code testing tools, like Autonoma (https://getautonoma.com), read your codebase directly and derive test cases automatically, so your team doesn't write or maintain any test code. For small teams without QA specialists, no code tools offer the fastest path to reliable test coverage.

QA automation costs for startups vary widely by approach. Open-source frameworks like Playwright and Cypress are free but require significant engineering time to write and maintain tests, often 10-20 hours per sprint for a small team. Record-and-replay tools typically run $100-500 per month. AI-native platforms like Autonoma (https://getautonoma.com) handle test generation and maintenance automatically, reducing the hidden cost of engineering time spent on test upkeep. When evaluating cost, factor in the engineering hours saved, not just the tool subscription price.
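To put the engineering-hours point in numbers, here is a simple monthly cost model. The 10-20 upkeep hours per sprint come from the paragraph above. The two-week sprint (two sprints per month) and the $100 fully loaded hourly rate are hypothetical placeholders; replace them with your own figures.

```typescript
// Simple monthly cost model: subscription plus engineering time on test upkeep.
interface CostInputs {
  subscriptionPerMonth: number;      // USD
  maintenanceHoursPerSprint: number; // hours spent fixing and updating tests
  sprintsPerMonth: number;
  hourlyRate: number;                // USD, fully loaded engineer cost
}

function monthlyCost(c: CostInputs): number {
  return c.subscriptionPerMonth + c.maintenanceHoursPerSprint * c.sprintsPerMonth * c.hourlyRate;
}

// Open-source framework: no subscription, 10-20 hours of upkeep per sprint.
for (const hours of [10, 20]) {
  const cost = monthlyCost({
    subscriptionPerMonth: 0,
    maintenanceHoursPerSprint: hours,
    sprintsPerMonth: 2,
    hourlyRate: 100,
  });
  console.log(`free framework, ${hours} h/sprint: $${cost}/month`);
}
```

To compare options on total cost rather than list price, run the same function with a tool's subscription price and the upkeep hours you actually observe during a trial.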