Playwright vs Selenium in 2026 is not the close race the generic SERP results suggest. Playwright wins on almost every technical dimension: faster execution, built-in auto-waiting, first-class TypeScript support, native network interception, and a trace viewer that makes debugging 10x faster. Selenium still wins in specific circumstances: multi-language enterprise shops (Python + Java + Ruby simultaneously), regulated environments with approved-framework lists, and suites with 5,000+ existing tests where the migration math genuinely does not pencil. The part most teams skip in this evaluation is the cost side. Selenium carries a real annual maintenance overhead that never appears on the initial procurement spreadsheet. This article names it, quantifies it, and shows you the migration path if you decide to cross.

For a 10-engineer team running a mid-sized Selenium suite, the real annual cost of ownership lands at roughly $216,600. Not the license (zero). Not the tooling (open source). The cost of owning the framework: flakiness triage at $150,000, Grid infrastructure at $6,000, CI compute waste at $600, and context-switch overhead from slow CI at $60,000. Those numbers are conservative. They assume 3 hours of triage per engineer per week, a $500/month Grid bill, and a partial accounting of developer focus time. Real teams run higher.

The Playwright vs Selenium debate is usually framed around features. Protocol architecture, auto-waiting, trace viewer, TypeScript support. Those dimensions matter. But the decision that unlocks the most value is not a technical one. It is a cost one. Most teams evaluating migration are not asking "which framework is more elegant?" They are asking "is the switch worth it?" That question needs a number.

Here is where the number comes from.

Need a three-way view including Cypress? See our Selenium vs Playwright vs Cypress comparison.

A Brief History of Both Frameworks

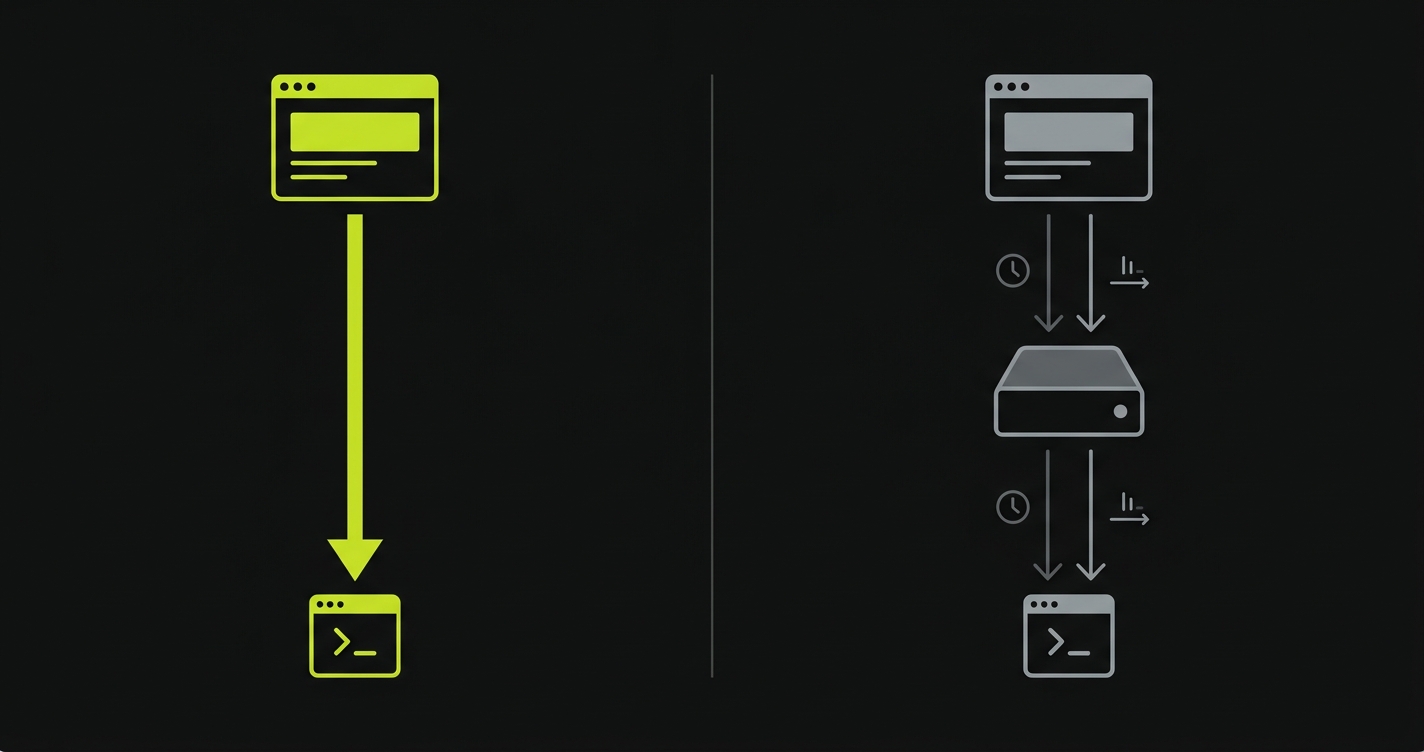

Selenium's history starts in 2004 at ThoughtWorks, where Jason Huggins built a JavaScript library to automate repetitive browser tasks. Selenium WebDriver arrived in 2006, moving control out of the browser process entirely and communicating through the browser's native automation API via an HTTP protocol later standardized as WebDriver by the W3C in 2018. That protocol decision made Selenium language-agnostic, which is its enduring superpower and its deepest source of overhead.

Playwright came from a different starting point. Microsoft shipped it in 2020, built by engineers who had previously created Puppeteer at Google. Instead of sending each command through an external HTTP protocol, Playwright holds a persistent connection to each browser: the Chrome DevTools Protocol for Chromium, and comparable browser-native protocols for Firefox and WebKit. That architectural difference explains most of the performance and reliability gap between the two tools. Playwright was built for speed and stability from the start, not bolted onto a decade-old protocol standard.

The Selenium Maintenance Tax

The Selenium Maintenance Tax is the annual hidden cost of owning a Selenium test suite -- flakiness triage, Grid infrastructure, protocol overhead, and developer context-switch time -- that never appears on a procurement spreadsheet because Selenium itself is free. Every Selenium shop pays this tax. Most do not know the line items.

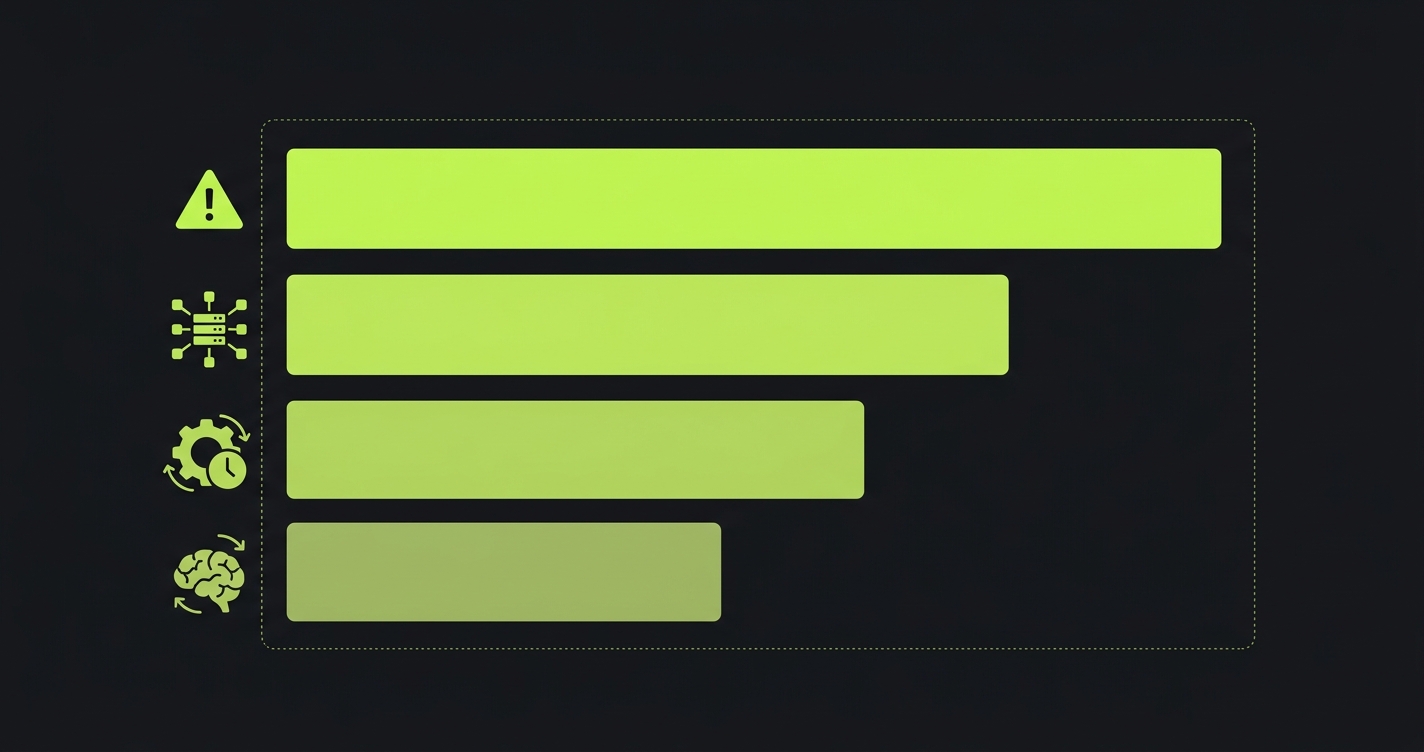

The four line items

Line 1 -- Flakiness triage. Industry benchmarks from engineering productivity research put flakiness rates for Selenium suites at 5-15% of all test runs. In practice, for teams running 200+ Selenium tests, 3 to 5 hours per engineer per week disappears into triage: reading CI logs, re-running failed jobs, bisecting whether a failure is real or a WebDriver timeout. At $100/hour blended engineering cost over 50 working weeks, that is $15,000 to $25,000 per engineer per year, entirely hidden. If this shape of pain sounds familiar, our flaky tests CI cost analysis goes deeper on the math.

Line 2 -- WebDriver protocol overhead. Every action in Selenium -- click, type, assert -- is a round-trip HTTP request to the WebDriver server. That overhead sits at 50 to 200ms per action under normal conditions, climbing to 500ms+ when the Grid is under load. A realistic login flow has 12 to 20 actions. A test suite with 500 tests at 15 actions each, running 8 times per day, adds 500 × 15 × 100ms × 8 = 6,000 seconds of pure protocol overhead per day. Over roughly 100 full-suite days, that is 600,000 seconds per year; a suite that runs every working day accrues more. Against a CI bill at $0.001 per compute-second, 600,000 seconds is $600 in waste. The larger cost is developers blocked on CI before they can merge: with a 40-minute suite runtime versus 20 minutes on Playwright, the lost focus time costs far more.
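The arithmetic behind this line item is easy to check in code. The sketch below is illustrative only: the function names are made up for this example, and the number of days per year on which the full suite runs is an assumption the caller supplies, since the daily product (500 × 15 × 100ms × 8) comes to 6,000 seconds and the annual total depends entirely on that multiplier.

```typescript
// Seconds of WebDriver round-trip overhead added per day.
function protocolOverheadSecondsPerDay(
  tests: number,
  actionsPerTest: number,
  overheadMsPerAction: number,
  runsPerDay: number,
): number {
  return (tests * actionsPerTest * overheadMsPerAction * runsPerDay) / 1000;
}

// Annual CI cost of that overhead. `suiteDaysPerYear` is an assumption:
// a 600,000-second annual total implies about 100 full-suite days.
function annualComputeCostUsd(
  secondsPerDay: number,
  suiteDaysPerYear: number,
  usdPerComputeSecond: number,
): number {
  return secondsPerDay * suiteDaysPerYear * usdPerComputeSecond;
}

// Inputs from the paragraph above: 500 tests, 15 actions, 100ms, 8 runs/day.
const overheadPerDay = protocolOverheadSecondsPerDay(500, 15, 100, 8);
// 100 suite days at $0.001 per compute-second comes to roughly $600.
const overheadCostPerYear = annualComputeCostUsd(overheadPerDay, 100, 0.001);
```

Swapping in your own per-action latency and run frequency shows quickly whether the compute line matters for your team. For most, it stays small next to the focus-time cost.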

Line 3 -- Grid infrastructure. Selenium Grid on your own hardware requires maintenance, uptime monitoring, and capacity planning. A conservatively-sized Grid for a 10-engineer team (8 parallel browsers, 4 nodes) runs roughly $400/month in cloud VM cost, plus ~4 hours/month of ops time to keep it healthy. Cloud alternatives like BrowserStack or Sauce Labs cost $350-900/month for the equivalent capacity. Call it $500/month, or $6,000/year.

Line 4 -- Lost developer focus. Developers waiting on CI are not shipping. A suite that takes 40 minutes instead of 20 minutes is not just a 20-minute delay per run. It is a context-switch cost. Research puts the cost of a developer context switch at 15-23 minutes of recovered focus time. At roughly two CI-blocked merges per engineer per day, that is about 43 minutes of lost focus per engineer per working day. Across 10 engineers, 250 working days, and $100/hour, the annual context-switch toll from slow CI alone runs $180,000.

Independent benchmarks from test automation studies give the speed delta a concrete shape: Playwright executes typical test actions at roughly 290ms versus Selenium's 536ms -- a 46% reduction that compounds across every action in every test in your suite. Parallel RAM usage for 10 simultaneous tests lands around 2.1 GB for Playwright versus 4.5 GB for Selenium. Those are the numbers behind the Maintenance Tax math.

Worked example for a 10-engineer team

- Flakiness triage (3 hrs/week × 10 engineers × $100/hr × 50 weeks) = $150,000

- Grid infrastructure ($500/month × 12) = $6,000

- CI compute waste (600,000 seconds × $0.001) = $600

- Context-switch overhead (partial -- attributable to suite speed delta) = $60,000 (conservative)

Total Selenium Maintenance Tax: ~$216,600/year

That number is not the cost of Selenium. It is the cost of owning Selenium at a medium-sized team's scale. Every line item goes down when you migrate to Playwright. Flakiness rates drop because auto-waiting eliminates most race conditions. Suite runtime drops 30-50% due to the protocol architecture. Grid cost drops because Playwright's built-in sharding and browser context isolation are more resource-efficient than a full Grid deployment.

Want to run the exact numbers for your team? The companion repo includes an interactive calculator.
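The worked example reduces to a handful of multiplications, so it can be reproduced in a few lines. This is a minimal sketch, not the companion repo's code: the type and field names are illustrative, and the CI compute and context-switch lines are passed in as precomputed dollar amounts rather than derived.

```typescript
// Inputs for the four Maintenance Tax line items (names are illustrative).
interface TaxInputs {
  engineers: number;
  triageHoursPerEngineerPerWeek: number;
  hourlyRateUsd: number;
  workingWeeksPerYear: number;
  gridCostPerMonthUsd: number;
  ciComputeWasteUsd: number; // precomputed annual figure
  contextSwitchUsd: number;  // precomputed annual figure
}

interface TaxBreakdown {
  flakinessTriage: number;
  gridInfrastructure: number;
  ciComputeWaste: number;
  contextSwitch: number;
  total: number;
}

function maintenanceTax(i: TaxInputs): TaxBreakdown {
  const flakinessTriage =
    i.triageHoursPerEngineerPerWeek * i.engineers * i.hourlyRateUsd * i.workingWeeksPerYear;
  const gridInfrastructure = i.gridCostPerMonthUsd * 12;
  const total =
    flakinessTriage + gridInfrastructure + i.ciComputeWasteUsd + i.contextSwitchUsd;
  return {
    flakinessTriage,
    gridInfrastructure,
    ciComputeWaste: i.ciComputeWasteUsd,
    contextSwitch: i.contextSwitchUsd,
    total,
  };
}

// The 10-engineer team from the worked example.
const tenEngineerTeam = maintenanceTax({
  engineers: 10,
  triageHoursPerEngineerPerWeek: 3,
  hourlyRateUsd: 100,
  workingWeeksPerYear: 50,
  gridCostPerMonthUsd: 500,
  ciComputeWasteUsd: 600,
  contextSwitchUsd: 60000,
});
// tenEngineerTeam.total is 216600, matching the figure above.
```

Triage hours dominate the result, so that is the input worth measuring carefully before trusting the total.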

The tax exists because someone has to own the framework. You are not paying it because Selenium is bad. You are paying it because every test framework demands engineering time: someone triages flaky runs, someone maintains the Grid, someone tunes explicit waits as the UI evolves. That is the specific pain that makes this reader's problem unlike a generic tool-selection question. Autonoma generates and maintains the E2E tests themselves at the agent layer, which removes the question entirely -- no Selenium tax, no Playwright learning curve, no framework to own.

Playwright vs Selenium: The 20-Dimension Breakdown

| Dimension | Playwright | Selenium |
|---|---|---|
| Protocol | Chrome DevTools Protocol (CDP) + browser-native connections | WebDriver W3C (HTTP round-trips per action) |
| Language support | TypeScript, JavaScript, Python, Java, .NET | Java, Python, C#, Ruby, JavaScript, Kotlin, PHP |
| Browser support | Chromium, Firefox, WebKit (Safari-compatible) | Chrome, Firefox, Edge, Safari, IE11 |
| Parallelism | Built-in sharding and worker isolation. Zero config. | Requires Selenium Grid or TestNG/JUnit parallel runners |
| Auto-waiting | Yes -- waits for element to be actionable before every interaction | No -- explicit waits (WebDriverWait + ExpectedConditions) required |
| Network interception | Native route interception, request/response mocking built-in | Historically third-party proxies (BrowserMob, WireMock). Selenium 4 BiDi adds proxy-free interception. |
| Mobile | Device emulation (viewport + UA). Real device needs Appium. | Real device support via Appium + WebDriver. Mature ecosystem. |
| Headless | Yes. Default in CI. Full feature parity in headless mode. | Yes. Legacy headless Chrome requires extra flags. |
| Trace viewer | Built-in. Records DOM snapshots, network, console, screenshot at every step. | No equivalent. Third-party Allure/Extent for reporting only. |
| Debugging tools | Playwright Inspector, codegen, VS Code extension, trace viewer | IDE integration via WebDriver bindings. No first-party inspector. |
| Community size | Growing fast. 70k+ GitHub stars. Strong Microsoft backing. | Mature. 30k+ GitHub stars. Largest community in test automation. |
| CI integrations | GitHub Actions, GitLab CI, CircleCI, Jenkins, Azure Pipelines. First-class. | All major CI platforms. Mature but requires Grid coordination. |
| Flakiness profile | Low. Auto-waiting eliminates most race conditions. | High without careful explicit-wait discipline. Protocol latency adds variance. |
| Setup complexity | Low. npm init playwright@latest and you're running. | Medium-high. Driver management, Grid config, language binding setup. |
| Cloud platform support | BrowserStack, Sauce Labs, LambdaTest, Microsoft Playwright Testing | BrowserStack, Sauce Labs, LambdaTest. Widest cloud support. |
| Test speed | 30-50% faster than equivalent Selenium suite. No per-action HTTP overhead. | Slower. WebDriver round-trips add 50-200ms per action. |
| Grid support | No equivalent Grid. Built-in sharding covers the use case. | Selenium Grid 4 for distributed parallel execution. Mature and configurable. |
| Cloud pricing | Lower -- fewer parallel sessions needed due to speed. Efficient resource use. | Higher -- slower runs require more parallel sessions for the same throughput. |
| Maintenance burden | Low. Locator API is resilient. No driver version management. Auto-waiting reduces brittleness. | High. Driver/browser version pinning. Explicit waits require constant tuning as UI changes. |
| Learning curve | Moderate for QA engineers new to async JavaScript/TypeScript. | Lower for Java/C# teams where WebDriver has been the standard for 15+ years. |

Selenium vs Playwright: Which dimensions matter most?

Not every row in this table carries equal weight in a real decision. A note on the "Maintenance burden" row: both tools demand ongoing engineering attention as your UI evolves. Playwright's locator API is more resilient than Selenium's raw selectors, but neither framework heals itself -- self-healing test automation is a separate category entirely. For most teams running the numbers, three dimensions dominate: Auto-waiting (collapses the flakiness triage line), Protocol (collapses the WebDriver overhead line), and Parallelism (collapses the Grid infrastructure line). Those three rows are where the Maintenance Tax savings come from. The other 17 dimensions matter for fit -- language support, IE11, mobile strategy -- but they are tiebreakers, not deciders. If Auto-waiting, Protocol, and Parallelism all favor Playwright for your stack, the tax calculator above will usually show a positive migration ROI.

Does Selenium BiDi close the gap?

Every experienced Selenium user reading this will ask about BiDi, and the question deserves a direct answer. Selenium 4 introduced BiDi (bidirectional communication over WebSockets) specifically to address the architectural gap that lets Playwright move faster. BiDi enables event listening without polling, console log capture, and network interception without a proxy server. It narrows the gap on debugging experience and network mocking. Selenium 5 continues this work.

What BiDi does not change: the core command execution path. Every click, type, and assert still goes through the HTTP-based WebDriver protocol. The 50-200ms per-action overhead is still there because it is load-bearing in the architecture. Auto-waiting is still explicit -- BiDi adds event streams but does not make Selenium infer actionability the way Playwright does. The Maintenance Tax math does not change materially with BiDi adoption, though the debugging experience does improve.

The honest framing: BiDi is meaningful progress and closes the gap on specific features (network interception, event streaming). It does not close the gap on the foundational properties -- speed, flakiness reduction, developer ergonomics -- that drive the migration decision. If you already have a mature Selenium shop, BiDi is a reason to upgrade to Selenium 4/5 rather than stay on Selenium 3. It is not, on its own, a reason to cancel a Playwright migration.

How to Migrate Selenium to Playwright

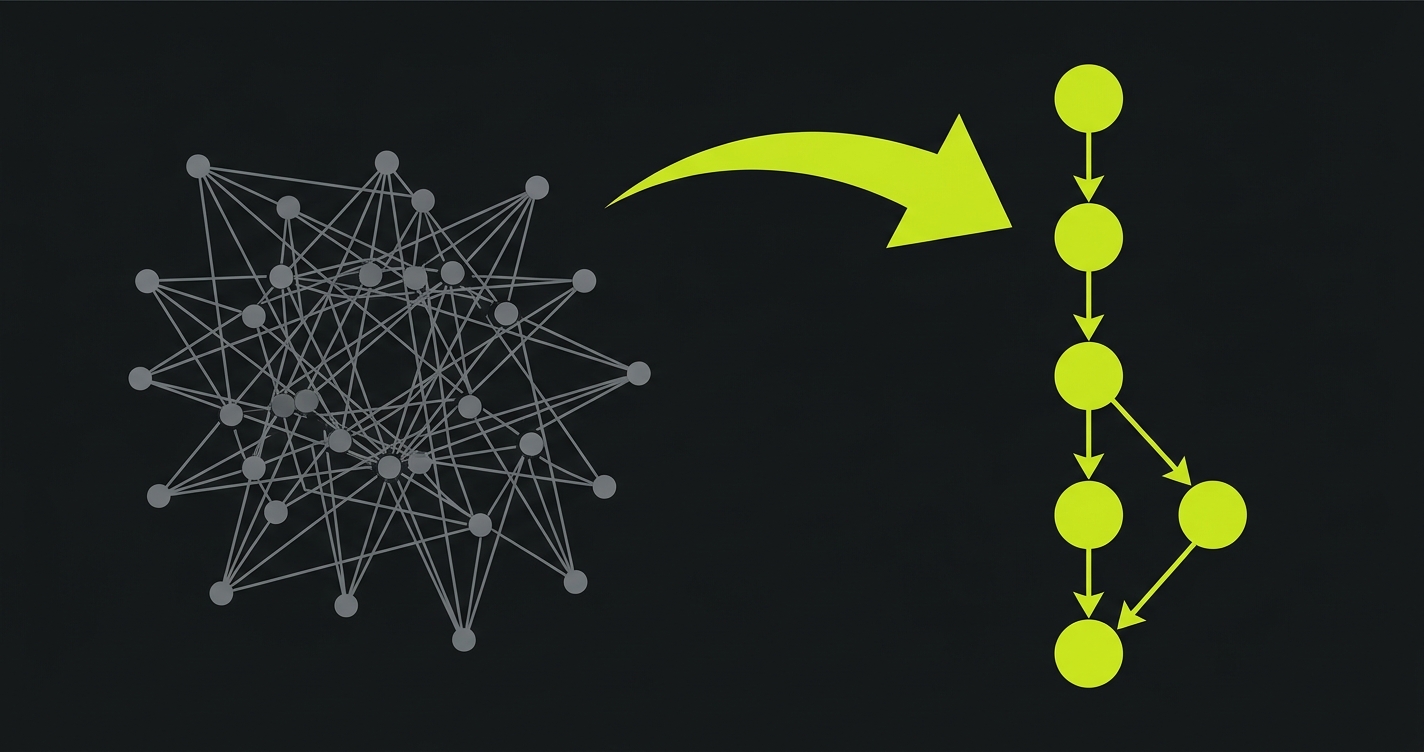

Migration is not a rewrite. It is a systematic transformation, test by test, locator by locator. The conceptual shift matters more than the syntax: you are moving from "wait and then act" to "act and let the framework wait." Every WebDriverWait and ExpectedConditions call you delete is a potential source of flakiness permanently removed.

Selenium baseline vs Playwright equivalent

Here is the Selenium baseline for a login flow -- a realistic test with all the ceremony a real Selenium suite carries:
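A minimal sketch of what such a baseline tends to look like. To keep it self-contained, the WebDriver surface is reduced to two small local interfaces (DriverLike, ElementLike) and a hand-written polling helper, all of which are hypothetical stand-ins. With the real selenium-webdriver bindings the wait would be written as driver.wait(until.elementLocated(...), timeout). The selectors are assumptions about the page under test.

```typescript
// Hypothetical, simplified stand-ins for the WebDriver surface.
interface ElementLike {
  isDisplayed(): Promise<boolean>;
  sendKeys(text: string): Promise<void>;
  click(): Promise<void>;
}
interface DriverLike {
  get(url: string): Promise<void>;
  findElements(selector: string): Promise<ElementLike[]>;
  quit(): Promise<void>;
}

// Explicit wait: poll until the element exists and is visible, or time out.
async function waitForVisible(
  driver: DriverLike,
  selector: string,
  timeoutMs: number,
): Promise<ElementLike> {
  const deadline = Date.now() + timeoutMs;
  while (Date.now() < deadline) {
    const first = (await driver.findElements(selector))[0];
    if (first && (await first.isDisplayed())) return first;
    await new Promise<void>((resolve) => setTimeout(resolve, 100));
  }
  throw new Error(`Timed out after ${timeoutMs}ms waiting for ${selector}`);
}

async function loginWithExplicitWaits(
  driver: DriverLike,
  baseUrl: string,
  email: string,
  password: string,
): Promise<void> {
  try {
    await driver.get(`${baseUrl}/login`);
    // Every interaction is preceded by its own wait and timeout.
    const emailField = await waitForVisible(driver, '#email', 10000);
    await emailField.sendKeys(email);
    const passwordField = await waitForVisible(driver, '#password', 10000);
    await passwordField.sendKeys(password);
    const submit = await waitForVisible(driver, 'button[type="submit"]', 10000);
    await submit.click();
    await waitForVisible(driver, '[data-testid="dashboard"]', 10000);
  } finally {
    // Skipping this leaks a browser process on every failed run.
    await driver.quit();
  }
}
```

The ceremony is the point: four waits, four timeouts to tune, and a try/finally that every test has to get right.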

And here is the same test in Playwright, with every explicit wait gone:
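A minimal sketch of the Playwright side of that comparison. To stay self-contained it declares a small structural subset of Playwright's Page and Locator API (PageLike, LocatorLike) instead of importing @playwright/test; the method names goto, getByLabel, getByRole, fill, click, and waitFor are real Playwright calls, while the labels, role names, and the /login path are assumptions about the page under test.

```typescript
// Structural subset of Playwright's Locator and Page, declared locally.
interface LocatorLike {
  fill(value: string): Promise<void>;
  click(): Promise<void>;
  waitFor(): Promise<void>;
}
interface PageLike {
  goto(url: string): Promise<unknown>;
  getByLabel(text: string): LocatorLike;
  getByRole(role: string, options?: { name?: string }): LocatorLike;
}

async function login(page: PageLike, email: string, password: string): Promise<void> {
  // A relative URL resolves against baseURL in the Playwright config.
  await page.goto('/login');
  // No explicit waits: each action waits for its target to be actionable.
  await page.getByLabel('Email').fill(email);
  await page.getByLabel('Password').fill(password);
  await page.getByRole('button', { name: 'Sign in' }).click();
  // waitFor() resolves once the element is visible.
  await page.getByRole('heading', { name: 'Dashboard' }).waitFor();
}
```

In a real suite this body would sit inside test('user can sign in', async ({ page }) => { ... }), where the page fixture handles browser setup and teardown, and the final line would more commonly be an expect(...).toBeVisible() assertion.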

What changes structurally

The structural changes worth noting in depth:

Explicit waits disappear entirely. Every driver.wait(until.elementLocated(...)) and WebDriverWait(driver, 10).until(EC.visibility_of_element_located(...)) line becomes a locator call. Playwright waits for the element to be actionable before every interaction by default. You do not configure this. You do not tune the timeout per element. The framework handles it.

Driver lifecycle simplifies. Selenium requires creating a driver instance, managing its lifecycle in try/finally blocks, and calling driver.quit() reliably on teardown or you leak browser processes. Playwright's fixture model in @playwright/test handles browser, context, and page setup and teardown automatically within the test runner lifecycle.

Network mocking becomes a first-class pattern. Where Selenium has traditionally required BrowserMob Proxy or WireMock standing up a full proxy server to intercept requests (Selenium 4's BiDi support is starting to remove that requirement), Playwright's page.route() intercepts at the browser level. A test that mocks an API response in Selenium is an integration concern. In Playwright, it is a single function call.
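As a rough illustration of that single call, the sketch below registers a mocked JSON response. It declares a small local subset of Playwright's API (RoutablePage, RouteLike) so it runs without the library; route and fulfill are real Playwright methods, while the URL pattern and payload are invented for the example.

```typescript
// Structural subset of Playwright's Route and Page, declared locally.
interface RouteLike {
  fulfill(response: { status?: number; contentType?: string; body?: string }): Promise<void>;
}
interface RoutablePage {
  route(url: string, handler: (route: RouteLike) => Promise<void>): Promise<void>;
}

// Answer every request matching the glob with a canned JSON body.
// The endpoint and payload are hypothetical.
async function mockUserProfile(page: RoutablePage): Promise<void> {
  await page.route('**/api/profile', async (route) => {
    await route.fulfill({
      status: 200,
      contentType: 'application/json',
      body: JSON.stringify({ name: 'Test User', plan: 'pro' }),
    });
  });
}
```

Calling mockUserProfile(page) before page.goto(...) means the app under test never reaches the real backend for that endpoint, with no proxy process to start or tear down.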

Locators are more readable and more resilient. Playwright's locator API encourages finding elements by role, label, or text -- the same things a user sees -- rather than by CSS selector or XPath. page.getByRole('button', { name: 'Sign in' }) survives CSS refactors, class renames, and DOM restructuring that would break a CSS selector immediately.

Migrating Playwright back to Selenium

It happens, but rarely by choice. The scenarios: a team has a Selenium Grid investment they cannot retire, a compliance mandate specifies WebDriver, or the project needs IE11 support that only Selenium delivers. In those cases, the migration works in reverse -- replace Playwright's async/await pattern with WebDriver's synchronous API, add explicit waits back, swap fixture-based setup for driver lifecycle management. It is more verbose, not fundamentally impossible.

For component-level testing, Playwright also covers the component testing surface we compared against Storybook -- see Storybook vs Playwright Component Testing for how the same auto-waiting and locator resilience advantages apply one layer down the stack.

When Selenium Still Wins

This is where credibility lives. Playwright is not the right answer in every situation, and anyone who tells you otherwise is not evaluating your actual constraints.

Multi-language enterprise shops. If your QA team writes tests in Java, your data science team contributes Python fixtures, your mobile team uses Ruby, and your .NET team has their own C# suite -- Selenium's language-agnostic WebDriver standard is the only framework that unifies all of them. Playwright supports five languages, but the ecosystem, community depth, and corporate support are deepest in TypeScript/JavaScript. If Java is your organization's lingua franca and you have 50 engineers writing Selenium tests, the migration math probably does not pencil even with the Maintenance Tax model applied.

Regulated environments with approved-framework lists. Financial services and healthcare organizations sometimes operate under audit regimes where testing tooling is on an approved list. Selenium's W3C standardization, two-decade history, and vendor support contracts make it the safer choice in procurement conversations. Playwright is catching up -- Microsoft's backing helps -- but Selenium's institutional legitimacy in regulated industries is real.

Very large existing suites. If you have 5,000 Selenium tests that all pass reliably, migrating to Playwright is not a weekend project. Even with a migration tool assisting with mechanical transforms, the validation effort is months. The Maintenance Tax savings need to be weighed against the migration cost. For a suite of that size, a staged migration (new tests in Playwright, old tests left in Selenium until they need updating) is often the more pragmatic path.
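One way to weigh those two sides is a simple payback period. The sketch below is an assumption about how to frame the comparison, not a formula from the Maintenance Tax model, and the figures in the usage lines are hypothetical placeholders rather than measured costs.

```typescript
// Months until cumulative savings cover the one-off migration cost.
// Returns Infinity when the migration saves nothing.
function paybackMonths(migrationCostUsd: number, annualSavingsUsd: number): number {
  if (annualSavingsUsd <= 0) return Infinity;
  return (migrationCostUsd / annualSavingsUsd) * 12;
}

// Hypothetical inputs: a $48,000 migration effort against $96,000 per
// year of reduced maintenance pays back in 6 months.
const examplePayback = paybackMonths(48000, 96000);

// A migration with no expected savings never pays back.
const neverPaysBack = paybackMonths(48000, 0);
```

For a staged migration, the same function can be applied per batch of tests, which makes it easier to stop once the remaining batches no longer pay back within a horizon your team accepts.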

IE11 testing. Yes, it still exists. Healthcare portals, government systems, and enterprise internal tools sometimes carry IE11 requirements. Playwright does not support IE11. Selenium does. There is no workaround.

If you are stuck on Selenium because of multi-language constraints and the migration math does not pencil, Autonoma is worth evaluating as a parallel track -- it runs against the same app regardless of which framework your team uses, so the Selenium tax and the Playwright migration become separate decisions from the question of actual test coverage.

The decision checklist

Stay on Selenium if any of these are true:

- Your team writes tests in 3+ languages simultaneously (Java + Python + Ruby + C#)

- Your compliance or audit framework requires the W3C WebDriver standard

- You have 5,000+ existing Selenium tests passing reliably and the migration cost exceeds the Maintenance Tax savings

- You need IE11 support

- Your flakiness rate is already below 3% and your CI suite runs in under 15 minutes

Migrate to Playwright if any of these are true:

- You spend more than 2 hours per engineer per week on flakiness triage

- Your CI suite takes 30+ minutes and the critical path is test execution

- You are starting a new project or a major rewrite

- Your team is TypeScript or JavaScript first

- You need network mocking without standing up a proxy server

- The tax calculator shows $100,000+ in annual Maintenance Tax for your team size

If you land in neither bucket cleanly, the answer is usually "migrate the new tests, leave the old suite alone, and revisit in two quarters." A full rewrite is rarely the right call.
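The checklist can also be encoded as a small function, which is handy for making the inputs explicit in a planning doc. This is a sketch under stated assumptions: the field names are invented, the large-suite condition is collapsed into one boolean, the CI condition checks only runtime, and "neither bucket cleanly" is interpreted as either no reasons on both sides or reasons on both sides.

```typescript
interface TeamProfile {
  testLanguages: number;
  requiresW3cWebDriver: boolean;
  largeSuiteNotWorthMigrating: boolean; // 5,000+ passing tests, cost > savings
  needsIe11: boolean;
  flakinessRatePct: number;
  ciSuiteMinutes: number;
  triageHoursPerEngineerPerWeek: number;
  greenfieldOrRewrite: boolean;
  typescriptFirst: boolean;
  needsProxyFreeMocking: boolean;
  annualMaintenanceTaxUsd: number;
}

type Verdict = 'stay-on-selenium' | 'migrate-to-playwright' | 'migrate-new-tests-only';

function recommend(t: TeamProfile): { verdict: Verdict; stay: string[]; migrate: string[] } {
  const stay: string[] = [];
  if (t.testLanguages >= 3) stay.push('3+ test languages');
  if (t.requiresW3cWebDriver) stay.push('W3C WebDriver mandate');
  if (t.largeSuiteNotWorthMigrating) stay.push('large suite, migration cost exceeds savings');
  if (t.needsIe11) stay.push('IE11 support');
  if (t.flakinessRatePct < 3 && t.ciSuiteMinutes < 15) stay.push('suite already healthy');

  const migrate: string[] = [];
  if (t.triageHoursPerEngineerPerWeek > 2) migrate.push('triage over 2 hrs/engineer/week');
  if (t.ciSuiteMinutes >= 30) migrate.push('CI suite takes 30+ minutes');
  if (t.greenfieldOrRewrite) migrate.push('new project or major rewrite');
  if (t.typescriptFirst) migrate.push('TypeScript/JavaScript-first team');
  if (t.needsProxyFreeMocking) migrate.push('network mocking without a proxy');
  if (t.annualMaintenanceTaxUsd >= 100000) migrate.push('Maintenance Tax of $100,000+');

  // Mixed or empty signals fall back to the hybrid path.
  let verdict: Verdict = 'migrate-new-tests-only';
  if (stay.length > 0 && migrate.length === 0) verdict = 'stay-on-selenium';
  if (migrate.length > 0 && stay.length === 0) verdict = 'migrate-to-playwright';
  return { verdict, stay, migrate };
}
```

Returning the matched reasons alongside the verdict keeps the output arguable: a team can see which single input flipped the recommendation and challenge it.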

Closing Recommendation

For greenfield projects and teams migrating to TypeScript, Playwright is the straightforward choice. The Maintenance Tax comparison is decisive at anything above 5 engineers. The 20-dimension table shows it winning on 14 of 20 dimensions that matter to modern CI-first development. The migration from Selenium is achievable in a structured way with a predictable investment.

Selenium stays the right answer for multi-language shops, regulated environments with procurement constraints, IE11 requirements, and suites large enough that migration risk outweighs the tax savings. Those are real constraints and they are worth respecting.

The third option is worth naming clearly: if you do not want to own a test framework at all -- neither Selenium's maintenance overhead nor Playwright's learning curve -- Autonoma generates and maintains the E2E layer from your codebase. No framework to choose, no Grid to run, no explicit waits to tune. The Planner agent reads your routes and components, the Automator agent executes against your running app, and the Maintainer agent keeps tests passing as your code changes. It is the speed of Playwright without writing the tests.

If your next step is convincing a CFO or VP of Engineering that the Maintenance Tax numbers hold water for your team, our QA automation ROI business case gives you the finance-facing framing.

Frequently Asked Questions

Is Playwright faster than Selenium?

Yes, consistently 30-50% faster in practice. The speed difference is architectural: Playwright communicates with browsers via Chrome DevTools Protocol with direct connections, while Selenium routes every action through an HTTP WebDriver server. Every click, type, and assertion in Selenium is a separate HTTP round-trip adding 50-200ms of overhead. Playwright's auto-waiting also eliminates most retry loops that inflate Selenium suite runtimes.

Should you migrate from Selenium to Playwright?

For most teams: yes, if the migration math pencils. The Selenium Maintenance Tax model in this article gives you the formula. For a 10-engineer team, the annual overhead of owning a Selenium suite (flakiness triage, Grid infrastructure, CI wait time) typically runs $150,000-$250,000. Playwright cuts most of those line items. The exception cases are multi-language shops, regulated environments with approved-framework lists, IE11 requirements, and suites so large that migration risk outweighs the savings. If you are not in one of those buckets, Playwright is the clear recommendation for new projects.

Can Playwright replace Selenium for mobile testing?

Partially. Playwright's device emulation covers viewport, user-agent, and touch events for responsive web testing -- it is accurate enough for most web app mobile testing scenarios. For real device testing or native app testing (iOS/Android), Playwright does not replace Selenium + Appium. Appium's WebDriver-based architecture means your Selenium skills transfer, and the real-device ecosystem is more mature. Playwright is the better choice for browser-based mobile testing; Appium is still necessary for native apps.

How much does WebDriver protocol overhead cost?

For a 500-test suite running 8 times per day at 15 actions per test with 100ms average WebDriver latency, the raw overhead is 500 × 15 × 0.1s × 8 = 6,000 seconds of pure protocol overhead per day, or roughly 50 hours per month if the suite runs every day. At typical CI compute rates and engineering context-switch costs, that translates to $40,000-$80,000 per year for a medium-sized team -- all waste that disappears when you remove the WebDriver round-trip.

Is Autonoma better than Playwright or Selenium?

It depends on what you need. Autonoma is a test generation and maintenance layer, not a test runner you configure and own. If your goal is E2E coverage of your critical user flows and you want that coverage maintained as your code changes, Autonoma handles it without you writing a test file or managing a framework. If you need fine-grained control over individual test logic, custom assertions, or deeply specialized edge-case scripting, a scripted framework like Playwright gives you that control -- and Autonoma can coexist with it, covering the routine E2E surface while your engineers write the specialized tests that require bespoke logic. Autonoma is not better at everything. It is better at generating and maintaining the tests most teams write but no one wants to maintain.

How long does a Selenium to Playwright migration take?

Rough benchmarks from engineering teams who have completed the migration: a 100-test suite takes 2-4 weeks with one experienced engineer. A 500-test suite takes 2-3 months. A 1,000+ test suite should be treated as a phased migration over 6-12 months -- new tests in Playwright, existing Selenium tests migrated incrementally as they need updates. The mechanical parts (syntax, locator rewrites) are faster than the validation parts (confirming the migrated test actually covers the same scenario with equivalent reliability). Budget more time for validation than you think you need.

How much does Selenium cost to maintain?

For a 10-engineer team, the annual Selenium maintenance cost typically lands between $150,000 and $250,000. The four line items: flakiness triage (around $150,000 based on 3 hours per engineer per week at a $100 blended hourly rate), Grid infrastructure (around $6,000/year for cloud hosting or equivalent on-prem ops time), CI compute waste from WebDriver protocol overhead (a few hundred dollars in pure compute, but much higher in blocked-developer time), and context-switch overhead from slow CI runs (around $60,000 conservatively attributable to the suite speed delta). These costs are hidden because Selenium itself is free and they never appear on a procurement spreadsheet. The Selenium Maintenance Tax framework in this article breaks each line item down with formulas you can apply to your team.

When should you use Selenium instead of Playwright?

Use Selenium when your QA team writes tests across three or more languages simultaneously (Java, Python, Ruby, C#), when your organization operates under compliance frameworks that require approved-framework lists or W3C WebDriver standardization, when you have 5,000+ existing Selenium tests passing reliably and the migration cost outweighs the Maintenance Tax savings, or when you need IE11 support that Playwright does not provide. Outside of those four scenarios, Playwright is the recommended choice for new projects and teams migrating to TypeScript-based test automation in 2026.