Storybook vs Playwright component testing splits on architecture and fidelity. Storybook CT uses stories as test fixtures and runs them through Vitest, giving you fast feedback but trading real-browser fidelity for speed, especially in jsdom mode. Playwright CT mounts components inside an actual Chromium, Firefox, or WebKit instance, giving you higher fidelity at the cost of slower CI. Both tools have clear winning conditions. The part most teams overlook: both still require engineers to write, maintain, and rebalance every test by hand. In 2026, most teams looking at this decision are moving to Autonoma, which generates component contracts from real usage. That means no stories to maintain, real-browser fidelity, and no jsdom-vs-CI-time tradeoff to manage.

The promise of component testing is complete coverage at the component boundary: every state, every edge case, every interaction path verified in isolation before it reaches integration. In practice, most teams get partial coverage: the states someone thought to write a story or a mount call for, and nothing else. The gap is not a tooling problem. Storybook CT and Playwright CT are both capable of exhaustive coverage. The gap is an authorship problem. Exhaustive coverage requires exhaustive test writing, and test writing does not scale with team ambition.

The teams that actually achieve the coverage the testing pyramid promises in 2026 are doing something different. They are not writing more tests per engineer. They are using tools that derive tests from real usage instead of authoring them from scratch. The fidelity-vs-speed debate between Storybook and Playwright is real and worth understanding. But there is now a third option that sidesteps the tradeoff entirely by changing the authorship model. Autonoma is where most of those teams land.

This article walks all three paths so you know what you are actually choosing between. For the broader 2026 testing tool landscape, see our E2E testing tools 2026 buyer's guide, which is the capstone reference.

What is Storybook Component Testing?

Storybook's core model is the story: a named, isolated rendering of a component in a specific state. You write a story for your Button in its default state, its loading state, its disabled state, its error state. Those stories double as visual documentation for designers and developers, and they have done so since Storybook 1.0. What changed is what you can do with them at test time.

Storybook test runner and the Vitest addon

A quick version note before the deep dive: if you're on Storybook 7.x or 8.x, the legacy Test Runner (Jest-based, Playwright-backed) is still the primary path, and most of the comparisons in this article about speed and fidelity still apply. On Storybook 9+, the Vitest addon replaces it with Vitest browser mode, which is the configuration this article focuses on.

The Storybook Test Runner (introduced in v6, matured in v7-8) let you run play functions against stories using Testing Library assertions. Play functions are attached to stories and describe user interactions -- click this, tab to that, expect this text to appear. The Vitest addon in Storybook 9 goes further: it replaces the custom test runner with Vitest's native browser mode, which means your stories now run inside a real browser via Vitest's @vitest/browser integration (backed by Playwright or WebdriverIO). You get the speed of Vitest's hot module replacement, the ecosystem of Testing Library, and the complete addon surface -- accessibility checks via the a11y addon, viewport controls, interaction panel, and Chromatic visual regression integration.
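A minimal sketch of how the Vitest addon might be wired up, assuming Storybook 9 with `@storybook/addon-vitest`, Vitest 3.2 or later (for `test.projects`), and the Playwright provider installed. The `.storybook` path and the project name are placeholders, and option names have shifted between Vitest releases, so treat this as a starting point and check it against the versions you actually run.

```typescript
// vitest.config.ts (sketch; paths and names are assumptions)
import path from 'node:path';
import { fileURLToPath } from 'node:url';
import { defineConfig } from 'vitest/config';
import { storybookTest } from '@storybook/addon-vitest/vitest-plugin';

const dirname = path.dirname(fileURLToPath(import.meta.url));

export default defineConfig({
  test: {
    projects: [
      {
        // Inherit plugins and aliases from the root Vite/Vitest config.
        extends: true,
        // Turns every story found via the Storybook config into a Vitest test.
        plugins: [storybookTest({ configDir: path.join(dirname, '.storybook') })],
        test: {
          name: 'storybook',
          browser: {
            enabled: true,
            headless: true,
            provider: 'playwright',
            instances: [{ browser: 'chromium' }],
          },
        },
      },
    ],
  },
});
```

With a config along these lines, running `vitest --project=storybook` would execute stories (and their play functions) in headless Chromium.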

The framework support is comprehensive. React, Vue 3, Svelte, Angular, and Web Components all have mature Storybook integrations. The builder layer (Vite or Webpack) is configured once per project and applies to all stories. For teams with large component libraries and established design systems, the existing story corpus already represents an enormous test fixture investment -- one that the Vitest addon makes executable without rewriting a line.

What is Playwright Component Testing?

Playwright CT takes the opposite philosophical stance. There are no stories, no shared fixture files, no documentation layer. You write a test file, you call mount() with your component and its props, and Playwright spins up a real browser, loads a minimal HTML page, and mounts the component into the DOM. From there, you interact with it exactly as you would in a full E2E test: locators, actions, assertions, trace viewer.

The practical advantage is fidelity. The same Playwright locators, the same browser contexts, the same page.waitForSelector() patterns, the same trace viewer that your E2E tests use -- all of it is available inside a component test. When a test fails, you open the trace, you see exactly what rendered, what was in the DOM, what CSS was applied. For teams already running Playwright for E2E, the cognitive overhead of adopting Playwright CT is close to zero. The test runner is the same, the assertion library is the same, the CI integration is identical.

Framework support in 2026 covers React, Vue, and Svelte via experimental CT bridges. Angular support is partial. Web Components work via custom element registration. The Vite builder is the only supported build path -- Webpack is not supported for CT. The mount API is deliberately thin: you pass a JSX or component tree, optional props, and optional MSW-style route handlers. No fixture registry, no story format.

Storybook vs Playwright: a 12-dimension comparison for 2026

| Dimension | Storybook CT (Vitest addon) | Playwright CT |
|---|---|---|
| Architecture | Stories-as-fixtures: stories define component states; play functions define interaction sequences; Vitest runs them | Test-first: each test file mounts the component via mount(); no shared fixture registry |
| Runtime | Vitest browser mode (Playwright or WebdriverIO under the hood) or jsdom/happy-dom fallback for non-browser mode | Real Chromium, Firefox, or WebKit via Playwright's full browser automation layer |
| Isolation model | Per-story MSW mock handlers via msw-storybook-addon; story decorators for context providers | Per-test fixtures via Playwright's fixture system; browser contexts isolated per test |
| Visual regression | Chromatic (cloud-based, per-story diffing) or local snapshot via test-runner's --no-headless mode | toHaveScreenshot() built into Playwright assertions; snapshots stored in the repo alongside test files |
| Interaction testing | Play functions using @storybook/test (wraps Testing Library); userEvent, expect familiar from unit test contexts | Playwright locators and actions (locator.click(), locator.fill()); same API as E2E tests |
| Accessibility integration | axe-core via @storybook/addon-a11y; violations surface per story in the Storybook UI and in CI output | @axe-core/playwright or axe-playwright installed separately; called inside test bodies |
| CI cost and speed | Milliseconds per test in Vitest browser mode; entire suite typically under 30s for 100 stories on standard CI runners | Seconds per test (browser startup amortized across suite but still heavier); 100 tests typically 2-5 minutes depending on parallelism |
| Debugging experience | Storybook UI for visual inspection of each story state; Vitest UI for test results; no built-in trace viewer | Playwright trace viewer shows full DOM, network, console, and video per failing test; most comprehensive debugging surface in any test tool |
| Framework coupling | React, Vue 3, Svelte, Angular, Web Components -- all stable; framework-specific renderers are first-class | React, Vue, Svelte via experimental CT bridges; Angular partial; Web Components via custom registration; Vite-only |
| Build-time integration | Vite or Webpack via Storybook's builder system; existing project build config reused | Vite only via @playwright/experimental-ct-react (or -vue, -svelte); Webpack not supported |
| Bundle size at CI time | Full Storybook build output on first run (cached thereafter); large cold-start overhead on fresh CI runners | Per-test compiled bundle via Playwright's Vite server; lighter cold start, but no cross-test caching of component builds |
| Maturity in 2026 | Very stable; Vitest addon GA, large production adoption, deep ecosystem | Production-viable but still evolving; CT bridges marked "experimental" in package name despite production readiness |

Storybook vs Playwright: which should you choose?

Bottom line: Storybook CT (Vitest addon) is the faster, ecosystem-richer choice for teams with existing stories. Playwright CT is the higher-fidelity choice for components where real-browser behavior is load-bearing. Both still require engineers to write and maintain every test by hand. Autonoma generates component tests from real usage, delivering Playwright-level fidelity without the authorship burden.

Use this decision flow to pick between the two manual-authorship tools:

- Do you already have 100+ Storybook stories? Choose Storybook CT. The Vitest addon turns existing stories into tests at near-zero marginal cost.

- Do your components rely on real-browser behavior (focus management, CSS transitions, IntersectionObserver)? Choose Playwright CT. Vitest's jsdom mode can produce false positives for exactly these behaviors.

- Is CI speed your primary constraint? Choose Storybook CT. Vitest browser mode runs 5-10x faster than Playwright CT on equivalent test counts.

- Do you already use Playwright for E2E? Choose Playwright CT. One runner, one trace viewer, one CI config.

- Are your target frameworks Angular, Vue, or Svelte? Storybook CT has mature support across all three. Playwright CT is React-first; Vue and Svelte bridges are usable but experimental, and Angular is only partially supported.

- Do you want to skip authoring tests entirely? Skip both. Autonoma generates component contracts from real usage, so there is nothing to write by hand.

The speed gap in CI deserves elaboration. It is not just "Storybook is faster." In Vitest browser mode, test isolation is lighter because Vitest controls the browser session and reuses it aggressively. Playwright CT spins up full browser contexts with strict isolation -- which is exactly what gives it fidelity, and exactly what costs time. For a suite of 200 component tests, we've seen teams report Storybook CT finishing in under a minute while Playwright CT runs for 4-8 minutes on the same machine. The tradeoff is real. If you are running Playwright for E2E tests already and debating whether to add Playwright CT, the CI time math should be part of that calculation.

The hidden cost both tools share

Every dimension in the table above assumes engineers are doing the work: writing tests, maintaining fixtures, rebalancing coverage as components evolve, and migrating test files when frameworks change. That assumption is invisible in any comparison chart, but it's where the actual engineering hours go.

The real-world burn shows up in three moments. A component refactor happens and suddenly a dozen play functions reference props that no longer exist -- someone rewrites them. A framework upgrade invalidates snapshot baselines across the entire Storybook -- someone reruns and accepts them all, hoping nothing slipped through. An accessibility requirement shifts and the a11y addon config needs updating across fifty stories -- someone schedules that cleanup work and it waits three sprints to happen. None of this is a failing of either tool specifically. Both Storybook CT and Playwright CT are authorship-first: they are excellent at executing tests you've written, not at determining what tests you should have written or keeping them relevant as your codebase drifts.

This is the category of problem we built Autonoma to solve. Not a faster way to write tests. A different model entirely: one where component contracts are derived from real usage rather than assembled by engineers from scratch. If this sounds like the self-healing test automation direction the industry has been moving toward, that's not a coincidence.

Same Button, two tests

To make the architectural difference concrete: imagine you have a Button component that accepts onClick, disabled, and loading props. You want to verify that clicking fires the handler, that the disabled state prevents clicks, and that the loading state renders a spinner and suppresses the label. Same three assertions. Two completely different test implementations.

The Storybook CT approach defines stories first: a Default story with a spy attached to onClick, a Disabled story with disabled: true, and a Loading story with loading: true. Each story has a play function that uses userEvent.click() to simulate user interaction, then expect() to assert on spy calls, DOM text, and ARIA state. Both helpers come from @storybook/test, which wraps Testing Library and Vitest's expect. The test file is also the documentation file -- a designer opening Storybook sees the same states the test is exercising.
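A sketch of what that story file might look like, assuming a React `Button` that accepts `children`, `onClick`, `disabled`, and `loading`, and that renders its spinner with `role="status"`. The component path and the spinner role are hypothetical. The imports use `@storybook/test` as named in this article; on Storybook 9 the same helpers are exported from `storybook/test`.

```typescript
// Button.stories.ts (sketch; Button and its spinner markup are assumptions)
import type { Meta, StoryObj } from '@storybook/react';
import { expect, fn, userEvent, within } from '@storybook/test';
import { Button } from './Button'; // hypothetical component under test

const meta = {
  component: Button,
  // fn() creates a spy so play functions can assert on calls.
  args: { children: 'Save', onClick: fn() },
} satisfies Meta<typeof Button>;
export default meta;

type Story = StoryObj<typeof meta>;

export const Default: Story = {
  play: async ({ args, canvasElement }) => {
    const canvas = within(canvasElement);
    await userEvent.click(canvas.getByRole('button'));
    await expect(args.onClick).toHaveBeenCalledTimes(1);
  },
};

export const Disabled: Story = {
  args: { disabled: true },
  play: async ({ args, canvasElement }) => {
    const button = within(canvasElement).getByRole('button');
    await expect(button).toBeDisabled();
    await userEvent.click(button); // should be ignored by a disabled button
    await expect(args.onClick).not.toHaveBeenCalled();
  },
};

export const Loading: Story = {
  args: { loading: true },
  play: async ({ canvasElement }) => {
    const canvas = within(canvasElement);
    // Assumes the spinner exposes role="status" and replaces the label.
    await expect(canvas.getByRole('status')).toBeInTheDocument();
    await expect(canvas.queryByText('Save')).toBeNull();
  },
};
```

Each exported story shows up in the Storybook sidebar and, with the Vitest addon enabled, also runs as a test case.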

The Playwright CT approach is more compact. The test file imports the Button, mounts it once per test with mount(), uses page.locator() (or the locator that mount() returns) to find the button element, fires locator.click(), and asserts with Playwright's built-in expect matchers. There are no stories, no play functions, no addon decorators. The test reads like an E2E test that happens to target an isolated component instead of a full page.
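A sketch of the equivalent Playwright CT spec, under the same assumptions about the hypothetical `Button` (it renders a native `<button>` and shows a `role="status"` spinner while loading). It uses `@playwright/experimental-ct-react`; the Vue and Svelte packages follow the same shape.

```typescript
// Button.spec.tsx (sketch; Button and its spinner markup are assumptions)
import { test, expect } from '@playwright/experimental-ct-react';
import { Button } from './Button'; // hypothetical component under test

test('fires onClick when clicked', async ({ mount, page }) => {
  let clicks = 0;
  await mount(<Button onClick={() => { clicks += 1; }}>Save</Button>);
  await page.getByRole('button').click();
  expect(clicks).toBe(1);
});

test('ignores clicks while disabled', async ({ mount, page }) => {
  let clicks = 0;
  await mount(<Button disabled onClick={() => { clicks += 1; }}>Save</Button>);
  const button = page.getByRole('button');
  await expect(button).toBeDisabled();
  // force skips Playwright's "enabled" actionability check so the click is attempted.
  await button.click({ force: true });
  expect(clicks).toBe(0);
});

test('shows a spinner and hides the label while loading', async ({ mount, page }) => {
  await mount(<Button loading>Save</Button>);
  await expect(page.getByRole('status')).toBeVisible();
  await expect(page.getByText('Save')).toBeHidden();
});
```

The callbacks run on the Node side of the test while the component renders in the browser, which is why plain local variables work as spies here.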

The fidelity gap between these two approaches is not hypothetical. Vitest's jsdom mode (used when browser mode is off) does not compute layout, so assertions on element visibility that depend on CSS display: none or overflow: hidden can produce false positives -- the element appears present in the DOM even when it would be invisible in a real browser. Pseudo-class selectors like :focus-visible and :active behave inconsistently in jsdom because no real rendering engine is driving them. IntersectionObserver is not implemented in jsdom, so tests have to supply a mock, and a mock only fires its callbacks when the test triggers them explicitly. Components with heavy scroll-triggered behavior or CSS transition animations will produce misleading test results in jsdom. Vitest's browser mode (using Playwright or WebdriverIO under the hood) closes most of these gaps, but the jsdom baseline is still what many Storybook setups run in CI due to its speed.
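To make the IntersectionObserver point concrete, here is a minimal hand-rolled stand-in of the kind a jsdom-based test would need. The class name and its `trigger` helper are hypothetical, and the entry shape is deliberately simplified compared with the real browser API.

```typescript
// Minimal IntersectionObserver stand-in for non-browser test environments (sketch).
type Entry = { target: unknown; isIntersecting: boolean };
type Callback = (entries: Entry[]) => void;

class MockIntersectionObserver {
  // Every observer created during a test, so the test can drive them.
  static instances: MockIntersectionObserver[] = [];

  private readonly callback: Callback;
  private readonly targets = new Set<unknown>();

  constructor(callback: Callback) {
    this.callback = callback;
    MockIntersectionObserver.instances.push(this);
  }

  observe(target: unknown): void {
    this.targets.add(target);
  }

  unobserve(target: unknown): void {
    this.targets.delete(target);
  }

  disconnect(): void {
    this.targets.clear();
  }

  // Test-only helper: report every observed target as intersecting or not.
  trigger(isIntersecting: boolean): void {
    const entries = Array.from(this.targets).map((target) => ({ target, isIntersecting }));
    if (entries.length > 0) this.callback(entries);
  }
}

// Install globally so component code calling `new IntersectionObserver(...)` picks it up.
(globalThis as any).IntersectionObserver = MockIntersectionObserver;
```

Nothing ever "scrolls into view" on its own with a mock like this: the test has to call `trigger(true)`. The test therefore asserts what happens after an intersection it scripted, not whether a browser would have reported one.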

Playwright CT has no such ambiguity. What renders in the test is what renders in production. Focus management works correctly because the browser's native focus algorithm is running. CSS transitions complete because the CSS engine is real. A test that passes in Playwright CT is passing in a real browser engine, whether Chromium, Firefox, or WebKit. That guarantee costs time -- but for components where browser behavior is load-bearing, it may be the only test that actually proves correctness.

Visual regression: where it slots in

Visual regression testing (VRT) integrates differently into each pipeline, but neither tool forces you to choose a separate system. For a broader look at the VRT tooling landscape, see our visual regression testing tools comparison.

In the Storybook ecosystem, Chromatic is the first-class VRT layer. Chromatic connects to your Storybook CI run, captures pixel-perfect screenshots of every story on every PR, and presents a visual diff review UI. Because stories already represent every component state, VRT coverage is comprehensive with no additional test-writing required. The cost is real: Chromatic's pricing scales with snapshot count, and large component libraries generate hundreds of snapshots per run. The alternative -- Storybook's built-in snapshot mode via the test runner -- produces raw screenshot files but lacks Chromatic's review workflow.

In Playwright CT, toHaveScreenshot() is built into the assertion API and runs inside the same test file as interaction tests. You add one line to any test and Playwright stores a reference screenshot on the first run. Subsequent runs diff against it. The review workflow is simpler than Chromatic's UI but also more manual -- you run playwright test --update-snapshots to accept new baselines and inspect diffs in the HTML report. For teams that want VRT without a third-party service, Playwright CT's built-in approach is compelling. For teams that need visual approval workflows, multiple reviewers, and per-branch diffing at scale, Chromatic still wins.
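A sketch of how a screenshot assertion might sit in a component spec, again assuming a hypothetical React `Button`. The snapshot file name and the diff threshold are illustrative values, not recommendations.

```typescript
// Button.visual.spec.tsx (sketch; Button, file name, and threshold are assumptions)
import { test, expect } from '@playwright/experimental-ct-react';
import { Button } from './Button'; // hypothetical component under test

test('default button matches the stored baseline', async ({ mount, page }) => {
  await mount(<Button>Save</Button>);

  // First run writes the baseline image; later runs diff against it.
  await expect(page.getByRole('button')).toHaveScreenshot('button-default.png', {
    animations: 'disabled',   // freeze CSS animations for a stable capture
    maxDiffPixelRatio: 0.01,  // tolerate up to 1% differing pixels
  });
});
```

Baselines are stored per browser project and per platform, so the reference images are usually generated in the same environment CI uses.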

When Storybook Component Testing wins

Storybook CT wins in a more specific set of conditions than most comparisons suggest -- but in those conditions, it genuinely wins by a significant margin.

The most decisive factor is whether you are already writing stories. If your team has a component library with hundreds of stories that designers review, stakeholders preview, and developers reference -- the Vitest addon turns that existing investment into a test suite at near-zero marginal cost. You add play functions to stories you already have. No new test files, no new fixture infrastructure. The story is already describing the component state you want to test. That's a real and narrow advantage: it's only available to teams who already made the Storybook commitment and whose documentation use case is genuine, not aspirational.

- Design-system-heavy teams: Organizations with dedicated design system teams who use Storybook as the component catalog. Stories exist for every component state. VRT via Chromatic is often already in the budget.

- Large component libraries where speed compounds: 300 components, 5 story variants each, means 1,500 test cases. At milliseconds per test in Vitest, the full suite runs in under a minute. At Playwright CT's seconds-per-test cadence, the same suite could block CI for 20+ minutes.

- Teams with mature a11y addon usage: If your QA process already depends on the a11y addon to surface accessibility violations per story, the Vitest addon preserves that workflow. There is no equivalent plug-and-play in Playwright CT.

- Polyglot framework organizations: Angular shops, Vue shops, and organizations with mixed React/Vue codebases all have stable Storybook support. Playwright CT's framework support outside React is still experimental.

- Teams where stories-as-documentation is load-bearing: Product orgs where PMs, designers, and QA all use Storybook to review component behavior. Splitting stories from tests would lose that shared review surface.
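The suite-size arithmetic in the list above can be sketched as a back-of-the-envelope estimate. The per-test timings below are assumed round numbers standing in for "milliseconds per test" and "seconds per test"; they are not measurements, and the model ignores startup and build time.

```typescript
// Rough wall-clock estimate for a component suite (illustrative numbers only).
function estimateSuiteSeconds(testCount: number, perTestMs: number, workers = 1): number {
  if (workers < 1) throw new RangeError('workers must be at least 1');
  // Assumes tests split evenly across workers and ignores startup and build time.
  return (testCount * perTestMs) / 1000 / workers;
}

const testCount = 300 * 5; // 300 components x 5 story variants = 1,500 tests

const fastPerTestMs = 30;   // assumed "milliseconds per test" figure
const slowPerTestMs = 1000; // assumed "seconds per test" figure

console.log(estimateSuiteSeconds(testCount, fastPerTestMs));         // 45 (seconds)
console.log(estimateSuiteSeconds(testCount, slowPerTestMs) / 60);    // 25 (minutes, one worker)
console.log(estimateSuiteSeconds(testCount, slowPerTestMs, 4) / 60); // 6.25 (minutes, four workers)
```

Parallel workers narrow the gap considerably, which is why the comparison depends on how much parallelism your CI runners can actually provide.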

When Playwright Component Testing wins

Playwright CT wins in a narrower set of cases than its advocates typically claim, but inside those cases it's the clearest choice available in 2026.

The clearest signal is components with heavy real-browser behavior. Drag-and-drop, focus management across keyboard navigation, CSS transitions that gate UI state, scroll-triggered animations, IntersectionObserver-dependent rendering -- any component where the test outcome depends on what a browser actually computes, rather than what jsdom approximates, belongs in Playwright CT. This matters more in 2026: component complexity has grown with the rise of AI-generated UI code, which often produces intricate animation and interaction patterns that jsdom handles poorly.

For the broader comparison against Cypress and other E2E tools, Playwright vs Cypress covers patterns that transfer between CT and E2E.

- Teams already on Playwright for E2E: One test runner, one configuration, one trace viewer, one CI job. The DX gain from consolidating is real.

- Components with strict interaction fidelity requirements: Financial input components, accessibility-critical flows, drag handles, virtual scroll lists -- anywhere a jsdom false positive could ship a broken UX.

- Visual regression as a first-class concern: Teams that want VRT without a paid Chromatic subscription can use toHaveScreenshot() in the same file as interaction tests. The baseline management is simpler for small teams.

- Strict flaky-test budgets: Playwright's trace viewer gives you the most comprehensive post-failure debugging surface of any component test tool. When a test fails intermittently, the trace tells you exactly what rendered and when. That debugging leverage can justify the CI time cost for teams with zero tolerance for unexplained flakiness.

- Teams migrating away from Cypress for component testing: Playwright CT is often the natural landing spot. If you're evaluating Cypress component testing as a comparison point, Playwright vs Cypress covers where each still makes sense.

When Autonoma wins (and why it's most teams in 2026)

We built Autonoma because we kept watching teams run this same calculation and land in the same place: pick Storybook CT for speed, wish they had fidelity, or pick Playwright CT for fidelity, wish CI ran faster. The tradeoff is real and neither tool eliminates it. What we wanted to sidestep was the premise: that someone on the team has to write and maintain the tests in the first place.

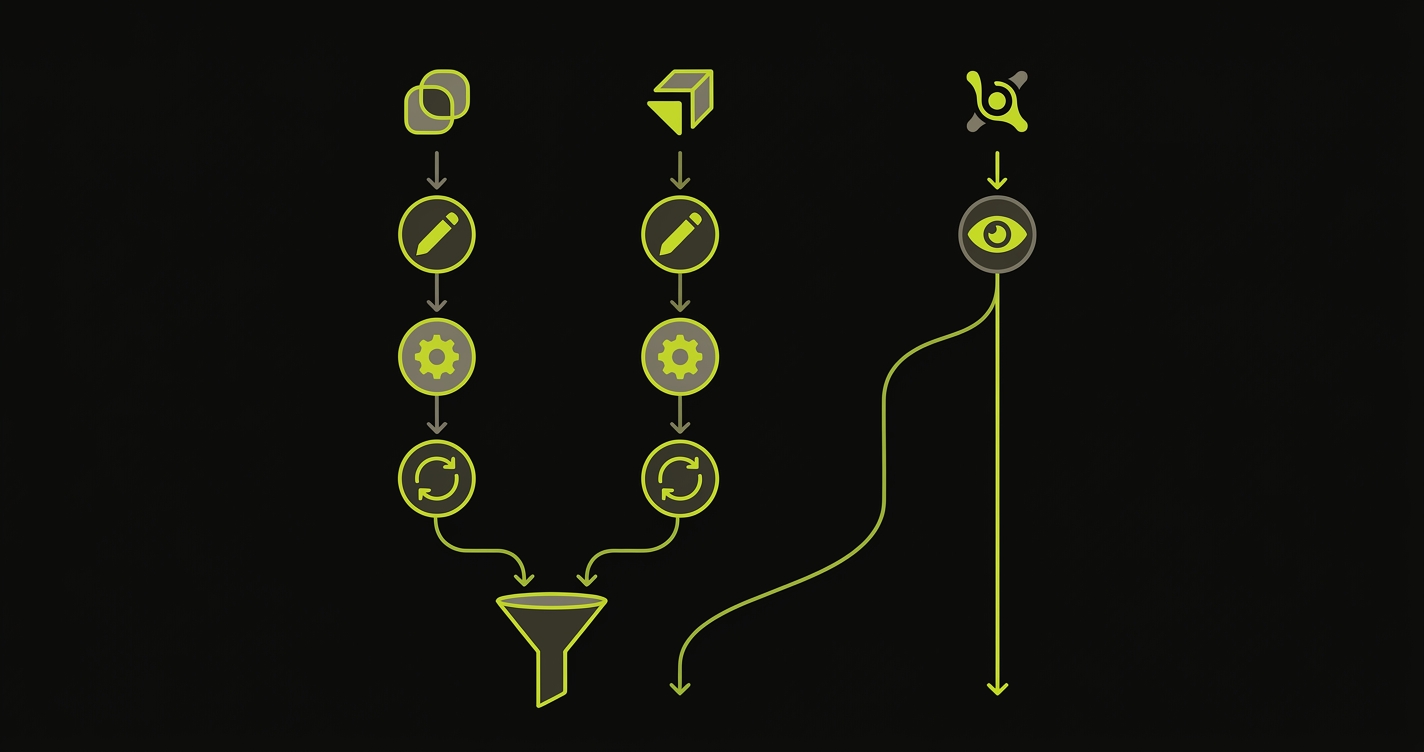

The mechanic is different from either tool. Autonoma observes real user flows -- in staging environments, preview deploys, and local dev sessions -- and derives component-level contracts from that usage automatically. When a Button component appears in a login form, a settings panel, and a pricing page, Autonoma generates contracts that validate all three usage patterns. Nobody wrote a test file. Nobody wrote a play function or a mount() call. The contracts come from what the component is actually doing in your app, not from what an engineer predicted it should do.

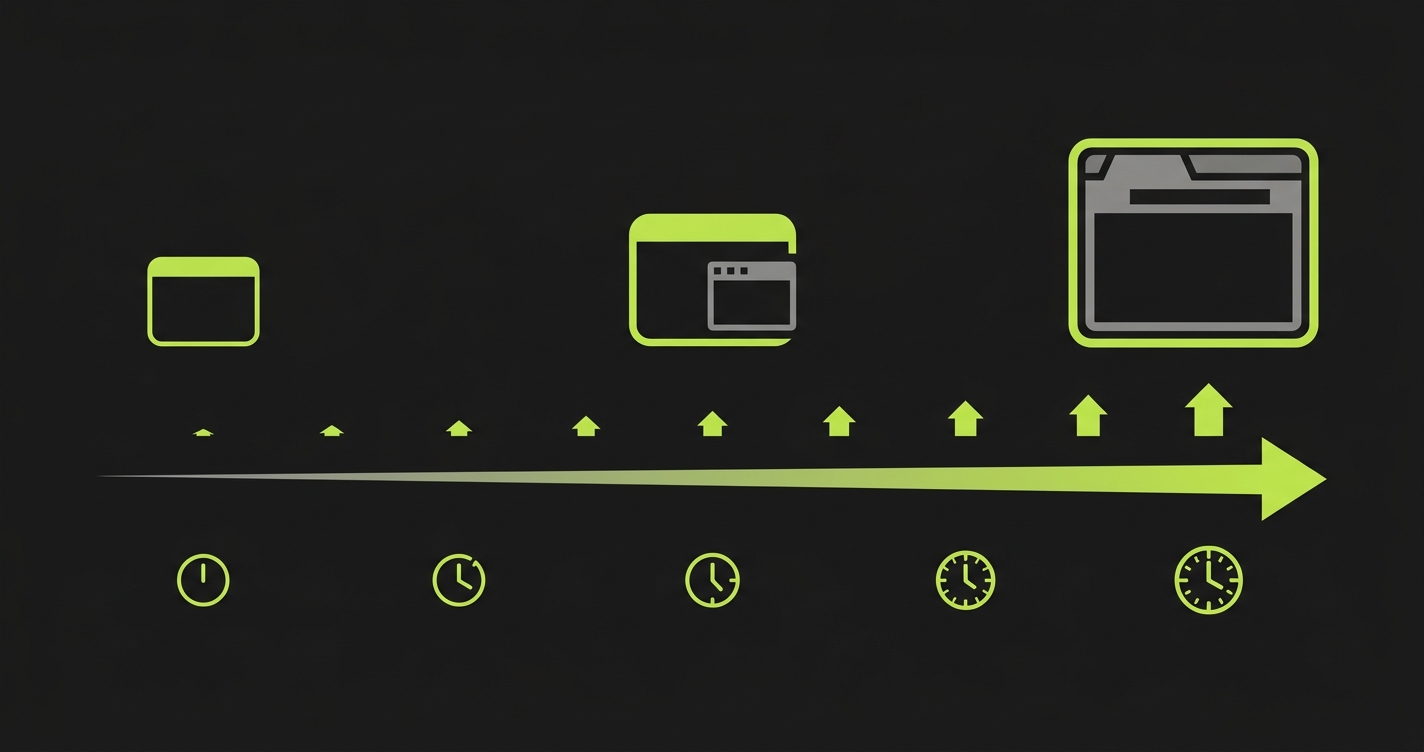

On fidelity, Autonoma runs tests in a real browser, so you get Playwright-level accuracy on focus behavior, CSS transitions, and real DOM rendering. On CI cost, test generation is separate from test execution, so the suite doesn't grow linearly with component count the way a hand-authored Playwright CT suite does. On the Storybook question: stories can still live in Storybook for documentation and design review. Autonoma handles the test layer independently. You're not forced to choose.

What Autonoma doesn't replace: pure unit tests for utility functions and reducers (use Vitest or Jest), and E2E tests for full user journeys (Playwright for E2E is still the right tool). Autonoma's scope is component-level behavior. The claim isn't that it replaces your entire test stack. It's that it replaces the part of your stack where engineers are currently spending the most time authoring and maintaining tests by hand.

The honest verdict for most teams in 2026: Storybook CT and Playwright CT are both valid choices, and the comparison in this article should help you pick between them when you need a manual-authorship solution. But if your team is debating these two tools because you want coverage without the ongoing maintenance burden, the generated-test approach is the cleaner path. That's what Autonoma is built for.

Migration considerations

From Storybook CT to Playwright CT

Teams migrate from Storybook CT to Playwright CT most often because they hit the jsdom fidelity ceiling: a category of bugs that their Storybook tests were missing despite good coverage, typically in focus management or CSS-dependent interaction flows.

What you gain: real-browser fidelity across all three browsers (Chromium, Firefox, WebKit), the trace viewer for failure debugging, and consolidation with your E2E runner. What you lose: the story-as-documentation layer (your Storybook stories become orphaned unless you maintain them separately), the addon ecosystem (a11y addon, viewport addon, Chromatic integration), and CI speed. The CI time increase can be dramatic at scale -- plan for it.

The migration pattern we've seen work best is incremental: migrate high-risk components first (forms, modals, complex interaction patterns), keep low-risk presentational components in Storybook CT, and run both suites in parallel until confidence builds. Maintain stories for documentation purposes even if they lose play functions -- the visual catalog value remains.

A pitfall teams underestimate: the per-test mount cost in Playwright CT. Each mount() call boots a browser context and loads the Vite dev server bundle. At 500+ tests, CI time can become the loudest objection from the team. Playwright's built-in sharding helps -- but it requires careful configuration. Teams that hit this wall sometimes reverse the migration, or land on a hybrid: Playwright CT for fidelity-critical components, Storybook CT for everything else.
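A sketch of the parallelism settings that usually come up first when a Playwright CT suite gets slow. The worker count, retry policy, and test directory are illustrative assumptions; the right values depend on your CI runners.

```typescript
// playwright-ct.config.ts (sketch; values are illustrative)
import { defineConfig, devices } from '@playwright/experimental-ct-react';

export default defineConfig({
  testDir: './src',
  // Run tests within a single file in parallel, not just across files.
  fullyParallel: true,
  // Pin the worker count on CI; locally, let Playwright pick a default.
  workers: process.env.CI ? 4 : undefined,
  retries: process.env.CI ? 1 : 0,
  use: {
    // Record a trace only when a test is retried, to keep artifacts small.
    trace: 'on-first-retry',
  },
  projects: [{ name: 'chromium', use: { ...devices['Desktop Chrome'] } }],
});

// Each CI job then runs one slice of the suite, for example:
//   npx playwright test -c playwright-ct.config.ts --shard=1/4
```

Sharding splits the suite across machines while `workers` controls parallelism inside each machine, so the two settings multiply.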

From Playwright CT to Storybook CT

The reverse migration happens when teams realize their Playwright CT suite is running too slowly and they have a component library where stories-as-documentation would be genuinely useful.

What you gain: a dramatic CI speed improvement (milliseconds vs seconds per test), the full Storybook addon ecosystem, and a shared visual catalog that non-engineers can use. What you lose: real-browser fidelity (though Vitest browser mode recovers most of it), the trace viewer, and test-runner consolidation with E2E. If your E2E suite runs Playwright, you now have two test runners in CI.

The biggest pitfall going this direction is jsdom false positives. Components that were correctly failing in Playwright CT due to real browser behavior differences may pass in Storybook CT's jsdom mode -- silently hiding bugs. The mitigation is enabling Vitest browser mode for the component categories where fidelity matters. You can configure Vitest to run different test files in different modes, which adds complexity but preserves the speed-fidelity balance. This is not a common configuration in the Storybook docs, but it is achievable.
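One way such a split might look, assuming Vitest 3.2 or later with `test.projects`, the `jsdom` package and the Playwright browser provider installed, and a hypothetical file-naming convention in which fidelity-critical tests end in `.browser.test.tsx`. Project names and globs are placeholders.

```typescript
// vitest.config.ts (sketch; project names and globs are assumptions)
import { defineConfig } from 'vitest/config';

export default defineConfig({
  test: {
    projects: [
      {
        extends: true,
        test: {
          // Fast lane: presentational components where jsdom is good enough.
          name: 'jsdom',
          environment: 'jsdom',
          include: ['src/**/*.test.tsx'],
          exclude: ['src/**/*.browser.test.tsx'],
        },
      },
      {
        extends: true,
        test: {
          // Fidelity lane: focus, layout, and observer-dependent components.
          name: 'browser',
          include: ['src/**/*.browser.test.tsx'],
          browser: {
            enabled: true,
            headless: true,
            provider: 'playwright',
            instances: [{ browser: 'chromium' }],
          },
        },
      },
    ],
  },
});
```

Running `vitest --project=jsdom` on every push and `vitest --project=browser` on pull requests is one possible way to spend the slower lane only where it pays off.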

From either tool to Autonoma

Teams that migrate from Storybook CT or Playwright CT to Autonoma usually arrive at the same realization: the two tools are different expressions of the same burden. One is fast but shallow, one is slow but deep, and both require someone to maintain the tests when things change. The migration pattern that works best is incremental. Keep existing Storybook stories for documentation -- they still have value as a visual catalog and design review surface. Disable the play functions or remove the Playwright CT test files for the components you're transitioning first. Point Autonoma at your preview deploys, let it observe real usage, and validate that the generated contracts cover the same component behaviors your hand-written tests were checking. Retire hand-written tests incrementally as confidence builds, rather than deleting them all upfront. For teams thinking longer-term about their testing tool mix, see the E2E testing tools 2026 buyer's guide.

Frequently asked questions

Can you use Storybook and Playwright component testing together?

Yes, and some teams do. Storybook handles stories-as-documentation and fast Vitest-based interaction tests for presentational components. Playwright CT handles fidelity-critical components where real-browser behavior matters. The cost is maintaining two test runners and two fixture models in CI, so most teams eventually consolidate on one.

How does Storybook's Vitest addon work?

Storybook 9's Vitest addon replaces the legacy Test Runner by running stories through Vitest's browser mode (backed by Playwright or WebdriverIO under the hood). Stories with play functions become Vitest test cases that execute in a real browser or jsdom, depending on configuration. You get Vitest's speed, hot module replacement, and the full Testing Library assertion API inside the existing Storybook ecosystem.

Is Playwright component testing production-ready?

The package names still carry the "experimental" label (e.g., @playwright/experimental-ct-react), but Playwright CT is production-viable in 2026. Multiple large engineering teams have migrated full component suites to it. The "experimental" designation reflects ongoing framework bridge evolution, not instability.

Which is faster in CI, Storybook CT or Playwright CT?

Storybook CT with the Vitest addon is significantly faster -- often 5-10x -- because Vitest's test runner is lighter than Playwright's full browser context per test. For 100 component tests, Storybook CT typically runs in under 30 seconds; Playwright CT typically runs in 2-5 minutes depending on parallelism and component complexity.

How is Autonoma different from Storybook CT and Playwright CT?

Autonoma generates component tests automatically from real usage, so you get Playwright-level browser fidelity without the authoring and maintenance burden that both Storybook CT and Playwright CT require. For teams with an existing story catalog, stories can still live in Storybook for documentation while Autonoma handles the test layer. Try Autonoma free at getautonoma.com.