End-to-end (E2E) testing tools in 2026 fall into two categories: scripted frameworks and AI-native platforms. Playwright is the leading scripted e2e testing framework, with broad browser support, free parallelization, and the strongest community momentum. Cypress is still widely used, particularly for teams that value its interactive test runner, but its market share is declining. The AI-native alternative, represented by platforms like Autonoma, eliminates the scripting and maintenance burden entirely by generating and self-healing tests from your codebase. The right choice depends on whether your team has the bandwidth to write and maintain scripts. Most don't.

The pattern is familiar. The team picks an e2e testing framework, writes a solid suite, and ships it. Three months later, a UI refactor breaks 30% of the tests. Nobody volunteers to fix them. The suite starts running on a prayer and a commented-out assertion block. Eventually someone asks if Playwright is better than Cypress, as if switching frameworks will solve the underlying problem.

It usually won't.

The maintenance burden isn't a symptom of the wrong e2e testing tool. It's a symptom of a model where humans write scripts and then manually keep those scripts aligned with a product that keeps changing. This guide walks through what Playwright and Cypress each actually solve, where they fall short, and why some teams in 2026 are exiting that model entirely.

The State of E2E Testing in 2026

The scripted testing landscape has largely consolidated. Selenium, once the default, has declined to a legacy role. Cypress and Playwright now account for the majority of active adoption, with Playwright pulling decisively ahead in community momentum over the past two years.

According to the State of JS 2024 survey, Playwright has overtaken Cypress in both satisfaction scores and usage growth. GitHub star counts tell the same story. But raw adoption numbers obscure what actually matters to an engineering team evaluating tools today.

What's changed more fundamentally in 2026 is the emergence of a third category: AI-native testing platforms that don't ask you to choose a scripting framework at all. These are not visual record-and-replay tools rebranded with an AI logo. The better ones, including Autonoma (which we built), connect directly to your codebase and generate tests from source code analysis. They change the question from "which framework is best" to "should our team be writing test scripts at all."

We'll cover all three, honestly, including when not to use the AI approach.

Playwright: The Current Standard for Scripted Testing

If you're starting a new project and you've decided to write test scripts, Playwright is the default recommendation in 2026. The technical reasons are straightforward: genuine cross-browser support (Playwright installs and manages its own Chromium, Firefox, and WebKit builds), free built-in parallelization via sharding, multi-language bindings (JavaScript/TypeScript, Python, Java, C#), and an auto-waiting mechanism that removes the need for most manual sleeps, a common source of flakiness.
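To make "parallelization via sharding" concrete, here is a minimal sketch of the idea: a test file list is split into groups, and each CI machine runs one group. The `shardFor` function and the file names are hypothetical, and the round-robin split is an assumption chosen for illustration, not Playwright's internal algorithm.

```typescript
// Hypothetical illustration of sharding: split test files into `total`
// groups so that each CI machine runs exactly one group.
// This is NOT Playwright's internal assignment logic.
function shardFor(files: string[], index: number, total: number): string[] {
  if (!Number.isInteger(total) || total < 1) {
    throw new RangeError("total must be an integer >= 1");
  }
  if (!Number.isInteger(index) || index < 1 || index > total) {
    throw new RangeError("index must be an integer in 1..total");
  }
  // Sort first so every machine computes the same assignment independently.
  const sorted = [...files].sort();
  // Round-robin: file i goes to shard (i mod total) + 1.
  return sorted.filter((_, i) => i % total === index - 1);
}

// Hypothetical spec files.
const files = [
  "cart.spec.ts",
  "auth.spec.ts",
  "checkout.spec.ts",
  "search.spec.ts",
  "profile.spec.ts",
];

const shard1 = shardFor(files, 1, 2);
const shard2 = shardFor(files, 2, 2);
```

In a real pipeline you would not write this yourself. Each CI job would pass Playwright's `--shard` flag, for example `npx playwright test --shard=1/2`, and the runner does the splitting.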

The trade-offs are real but manageable. The interactive debugging experience doesn't match Cypress's test runner, component testing is still experimental, and the API is more verbose than Cypress's chainable syntax. For teams evaluating e2e testing frameworks from scratch, Playwright is the starting assumption unless a specific Cypress advantage (interactive debugger, stable component testing) outweighs its limitations.

For a detailed technical comparison, see our Playwright vs Cypress deep-dive.

Cypress: Still Excellent, But Losing Ground

Cypress built its reputation on the best interactive debugging experience in the space, and that reputation is still deserved. The time-travel test runner, approachable chainable API, and stable component testing support remain genuine advantages. For teams where the primary goal is getting developers to write tests at all, Cypress lowers the activation energy.

But the trajectory has shifted. Cypress's WebKit/Safari support is still experimental, which is a hard blocker for consumer products that need dependable Safari coverage. Parallelization requires Cypress Cloud (paid) or self-hosted alternatives. The single-tab, same-runtime architecture that enables its great debugger also limits multi-origin and cross-domain workflows. If your suite is working and none of these are pain points, there's no urgency to migrate. But for new projects in 2026, these constraints are hard to justify.

See the full Playwright vs Cypress comparison for migration guidance.

Playwright vs Cypress vs AI-Native: The Full Comparison

| Criteria | Playwright | Cypress | AI-Native (Autonoma) |
|---|---|---|---|
| Cross-browser support | Chromium, Firefox, WebKit | Chromium, Firefox, experimental WebKit | Chromium, Firefox, WebKit |
| Test authoring | Manual (JS/TS/Python/Java/C#) | Manual (JS/TS only) | Automatic from codebase |
| Parallel execution | Free, built-in | Paid (Cypress Cloud) or DIY | Managed |
| Interactive debugger | Good (trace viewer, UI mode) | Excellent (best in class) | Results-focused reporting |
| CI integration | Native, free | Native, paid for parallelism | Native, managed |
| Test maintenance | Manual | Manual | Self-healing (automatic) |
| Setup time | Hours to days | Hours | Connect codebase, agents start |
| DB state handling | Custom fixtures/setup | Custom fixtures/setup | Automatic endpoint generation |
| Community / ecosystem | Large, fast-growing | Large, plateauing | Smaller, newer |
| Component testing | Experimental | Stable | E2E focused |
| Language flexibility | JS, TS, Python, Java, C# | JS/TS only | Any (reads codebase) |

Performance and CI Cost

The numbers matter when you're running tests in CI hundreds of times per month. Playwright's out-of-process architecture gives it a meaningful speed advantage: independent benchmarks show Playwright executing individual actions roughly 30-40% faster than Cypress, with a typical suite of 100 tests completing in approximately 9 minutes on Playwright versus 14 minutes on Cypress under comparable conditions.

The bigger cost difference is parallelization. Playwright's built-in sharding is free and works with any CI provider. Cypress requires either a Cypress Cloud subscription (starting at $75/month for small teams, scaling to enterprise pricing) or self-hosted alternatives like sorry-cypress that require infrastructure work to maintain. For teams running 500+ CI jobs per month, the annual cost difference can reach thousands of dollars before accounting for engineering time.

Memory footprint is also worth noting. Playwright's browser context isolation uses roughly 30-50% less RAM than launching separate Cypress instances for parallel execution, which directly translates to smaller CI runners and lower infrastructure costs.
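The cost argument in this section can be worked through as simple arithmetic. The sketch below uses the figures quoted above (9 versus 14 minutes per suite run, 500 jobs per month, a $75/month Cypress Cloud plan). The per-minute runner price of $0.008 is an assumption added for illustration, and the type and function names are hypothetical. Substitute your own CI provider's rate.

```typescript
// Rough CI cost model. All names here are hypothetical.
interface CiProfile {
  suiteMinutes: number;            // minutes for one full suite run
  monthlySubscriptionUsd: number;  // paid parallelization plan, 0 if none
}

function monthlyCostUsd(
  profile: CiProfile,
  jobsPerMonth: number,
  runnerUsdPerMinute: number,
): number {
  const runnerCost = profile.suiteMinutes * jobsPerMonth * runnerUsdPerMinute;
  // Round to whole cents to avoid floating-point noise.
  return Math.round((runnerCost + profile.monthlySubscriptionUsd) * 100) / 100;
}

// Figures taken from the text above.
const playwrightProfile: CiProfile = { suiteMinutes: 9, monthlySubscriptionUsd: 0 };
const cypressProfile: CiProfile = { suiteMinutes: 14, monthlySubscriptionUsd: 75 };
const jobsPerMonth = 500;

// ASSUMPTION: runner price per minute; replace with your provider's rate.
const runnerUsdPerMinute = 0.008;

const playwrightMonthly = monthlyCostUsd(playwrightProfile, jobsPerMonth, runnerUsdPerMinute);
const cypressMonthly = monthlyCostUsd(cypressProfile, jobsPerMonth, runnerUsdPerMinute);
const annualDifferenceUsd =
  Math.round((cypressMonthly - playwrightMonthly) * 12 * 100) / 100;
```

Under these assumed inputs the model gives $36 versus $131 per month, or $1,140 per year. Most of that gap comes from the subscription rather than runner minutes, so it grows with the pricing tier more than with job count.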

The AI-Native Approach: What's Actually Different

The category of AI-native testing is still new enough that the definition is being written by vendors, which creates a lot of noise. So here is specifically what we built and where the genuine differences are.

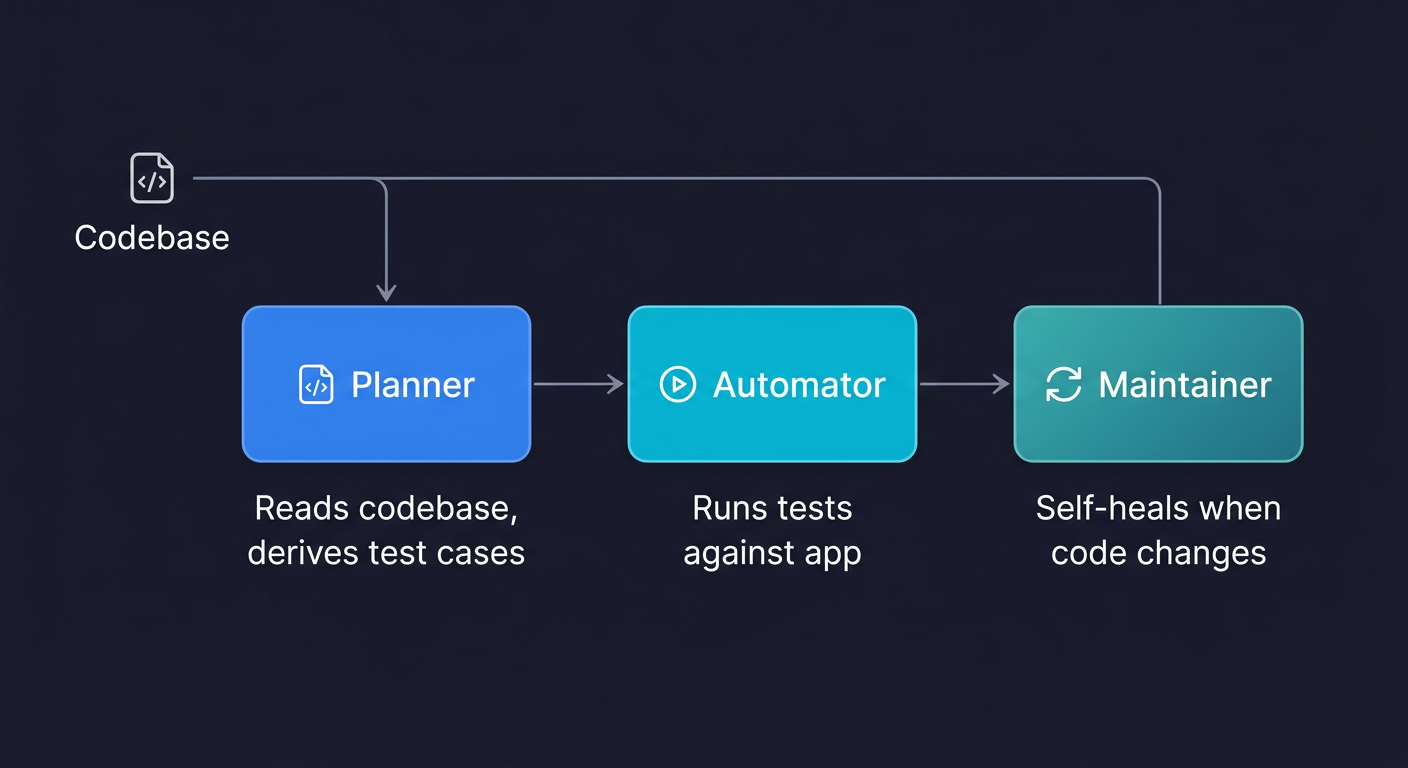

Autonoma takes a fundamentally different architectural approach. Instead of providing a framework for humans to write scripts in, it connects directly to your codebase and derives tests from source code analysis. Tests are generated, executed, and maintained automatically. When your code changes, the tests update without human intervention. The trade-off is ecosystem maturity: you get zero maintenance burden in exchange for less granular control over individual test steps. For a deeper look at the architecture, see what is agentic testing.

The key architectural decision is that the codebase is the spec. When your checkout flow changes, the Planner agent reads the new code and updates the tests accordingly. It doesn't wait for a human to notice that tests are broken.

There's one part of this that's often overlooked: database state setup. A test for a checkout flow needs the cart in the right state. A test for account settings needs a user with specific permissions. In scripted testing, this is fixture work, either custom scripts or factory libraries, that someone has to write and maintain. Autonoma handles this automatically by generating the API endpoints needed to put the database in the correct state for each test scenario. It's not configuration work that falls on your team.
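For readers who haven't written this kind of fixture code, here is a rough sketch of what the scripted side usually looks like: a factory that builds the state a checkout test needs, with per-test overrides. Every type and function name below is hypothetical. A real suite would also persist this state through a seed script or an API call, which is left out here.

```typescript
// Hypothetical domain types for illustration; not part of any framework.
interface CartItem {
  sku: string;
  quantity: number;
  unitPriceCents: number;
}

interface CheckoutState {
  user: { email: string; permissions: string[] };
  cart: CartItem[];
}

// Counter so each generated user gets a unique email address.
let userSequence = 0;

// Factory: sensible defaults, with per-test overrides for the parts that matter.
function buildCheckoutState(overrides: Partial<CheckoutState> = {}): CheckoutState {
  userSequence += 1;
  const defaults: CheckoutState = {
    user: { email: `user${userSequence}@example.test`, permissions: ["checkout"] },
    cart: [{ sku: "SKU-1", quantity: 1, unitPriceCents: 1999 }],
  };
  return { ...defaults, ...overrides };
}

function cartTotalCents(state: CheckoutState): number {
  return state.cart.reduce(
    (sum, item) => sum + item.quantity * item.unitPriceCents,
    0,
  );
}

// Two scenarios a checkout suite typically needs.
const emptyCartState = buildCheckoutState({ cart: [] });
const bulkOrderState = buildCheckoutState({
  cart: [{ sku: "SKU-2", quantity: 3, unitPriceCents: 500 }],
});
```

The factory itself is small. The maintenance cost comes from keeping it in sync with the schema: every new required column or permission model change means another edit here.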

Where the AI Approach Genuinely Falls Short

This is important to say clearly, because most comparisons of AI testing tools skip it.

The ecosystem is smaller. Playwright has years of community-produced guides, plugins, patterns, and solutions to edge cases. When you hit an unusual testing problem with Playwright, Stack Overflow and GitHub have answers. When you hit an unusual problem with a newer AI testing platform, the answer is a support ticket.

The tooling for custom browser interactions is less granular. If you need to test a canvas-based drawing application or a complex drag-and-drop interface with precise pixel coordinates, scripted frameworks give you more control. AI-native tools are optimized for the common 90% of test scenarios, not the specialized 10%.

For teams that enjoy writing test code, or where test code is a compliance artifact, the hands-off nature of an AI approach is a misfit. Scripted tests are documentation. Some organizations need that documentation to exist in a specific, human-authored form.

The Maintenance Burden Math

Here's where the comparison gets uncomfortable for scripted frameworks, including Playwright.

The cost of a scripted end-to-end test suite isn't the cost of writing it. It's the cost of maintaining it over the lifetime of the product. Every UI change, every API refactor, every new feature that touches a shared component creates maintenance work. On fast-moving teams, that work compounds.

We see teams where the test maintenance backlog is a permanent fixture of every sprint. Nobody has time to write new tests because they're too busy fixing old ones. The test suite exists, technically, but the coverage it represents drifts further from the actual application every week.

For teams in that position, the question of "Playwright or Cypress" is the wrong question. The bottleneck is human bandwidth, and no framework change fixes that.
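A back-of-the-envelope model shows how maintenance overtakes authoring. The sketch below is illustrative only: the suite size, hours per test, and monthly breakage rate are made-up inputs, not measurements, and the names are hypothetical. Plug in your own numbers.

```typescript
// Hypothetical model of authoring cost versus ongoing maintenance cost.
interface SuiteModel {
  testCount: number;
  hoursToWritePerTest: number;
  percentBrokenPerMonth: number; // share of tests needing a fix each month
  hoursToFixPerTest: number;
}

// One-time cost of writing the suite.
function authoringHours(m: SuiteModel): number {
  return m.testCount * m.hoursToWritePerTest;
}

// Recurring cost of keeping the suite green.
function monthlyMaintenanceHours(m: SuiteModel): number {
  return (m.testCount * m.percentBrokenPerMonth * m.hoursToFixPerTest) / 100;
}

// First month in which cumulative maintenance strictly exceeds authoring cost.
function monthsUntilMaintenanceExceedsAuthoring(m: SuiteModel): number {
  const monthly = monthlyMaintenanceHours(m);
  if (monthly <= 0) return Infinity;
  return Math.floor(authoringHours(m) / monthly) + 1;
}

// ASSUMED inputs for illustration only.
const exampleSuite: SuiteModel = {
  testCount: 200,
  hoursToWritePerTest: 2,
  percentBrokenPerMonth: 10,
  hoursToFixPerTest: 1,
};

const writeCost = authoringHours(exampleSuite);
const upkeepPerMonth = monthlyMaintenanceHours(exampleSuite);
const crossoverMonth = monthsUntilMaintenanceExceedsAuthoring(exampleSuite);
```

With these assumed inputs, writing the suite costs 400 hours and upkeep costs 20 hours a month, so cumulative maintenance passes the authoring cost in month 21. A higher breakage rate pulls that crossover earlier.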

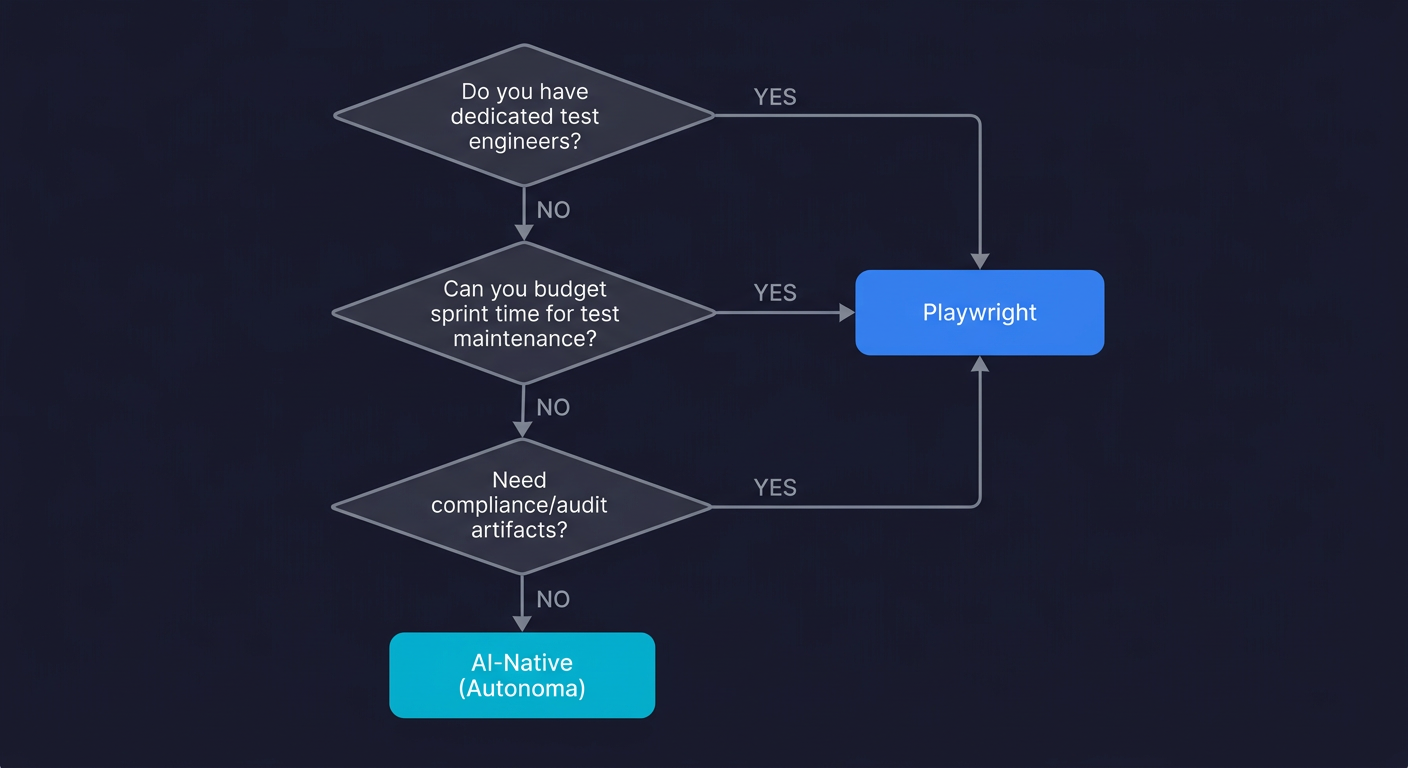

The Decision Framework

Rather than giving a prescriptive answer, here's how to think through the choice for your specific situation.

Your team has dedicated test engineers who write test code as their primary function. Use Playwright. The technical advantages are real, the ecosystem is mature, and engineers who spend their days in a test framework will appreciate Playwright's design decisions over time.

Your team has developers who will test, but testing is not their primary job. This is where the choice gets harder. Playwright is still a good default, but be honest about the maintenance cost. Build in sprint budget for test maintenance, or the suite will gradually become unreliable. If that maintenance budget doesn't exist, the AI-native path is worth evaluating seriously.

Your team has essentially no testing bandwidth and currently ships with manual smoke tests. Skip the framework debate. The overhead of setting up, writing, and maintaining a scripted test suite will eat whatever bandwidth you had allocated for testing. Autonoma connects to your codebase and agents handle the rest. It's open source and self-hostable, with a free tier and a $499/mo cloud option. You'll have coverage running faster, with zero scripts to maintain.

You're evaluating for a legacy application with an unusual tech stack or complex custom browser interactions. Playwright is more likely to have the primitives you need for edge cases. The AI approach is optimized for mainstream web applications.

You need compliance artifacts, or your tests serve as documentation. Scripted frameworks are the right choice. Human-authored test code has properties that auto-generated tests don't, specifically explicit intent and auditability. If your tests need to be readable by an auditor, keep them human-written.

You already have a working Cypress suite. Don't migrate unless a specific pain point is costing you: WebKit support you need, Cypress Cloud costs you want to eliminate, or flakiness that you can trace to architectural limitations. Migration has a real cost. Pay that cost only when the benefit is concrete.
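The six situations above can be condensed into a small decision function. This is a sketch, not a rule engine. The field names are hypothetical, and the order in which the checks run (existing Cypress suite first, then compliance, then unusual stacks, then bandwidth) is an assumption about precedence that the text does not spell out.

```typescript
type Recommendation =
  | "Keep Cypress"
  | "Scripted framework"
  | "Playwright"
  | "Evaluate AI-native"
  | "AI-native";

// Hypothetical profile fields mirroring the situations described above.
interface TeamProfile {
  hasWorkingCypressSuite: boolean;
  // WebKit needed, Cypress Cloud cost, or architecture-related flakiness.
  hasCypressPainPoint: boolean;
  needsComplianceArtifacts: boolean;
  hasUnusualStackOrInteractions: boolean;
  testingBandwidth: "dedicated" | "part-time" | "none";
  hasMaintenanceBudget: boolean;
}

function recommend(p: TeamProfile): Recommendation {
  // ASSUMED precedence: constraints first, bandwidth last.
  if (p.hasWorkingCypressSuite && !p.hasCypressPainPoint) return "Keep Cypress";
  if (p.needsComplianceArtifacts) return "Scripted framework";
  if (p.hasUnusualStackOrInteractions) return "Playwright";
  if (p.testingBandwidth === "dedicated") return "Playwright";
  if (p.testingBandwidth === "part-time") {
    return p.hasMaintenanceBudget ? "Playwright" : "Evaluate AI-native";
  }
  return "AI-native";
}

// Example: developers who test part-time, with no sprint budget for upkeep.
const partTimeNoBudget = recommend({
  hasWorkingCypressSuite: false,
  hasCypressPainPoint: false,
  needsComplianceArtifacts: false,
  hasUnusualStackOrInteractions: false,
  testingBandwidth: "part-time",
  hasMaintenanceBudget: false,
});
```

Writing it down this way forces the question of what you would answer for each field. That is most of the value of the framework.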

When Not to Use AI-Native Testing

This section is more important than most AI testing vendors are willing to write.

Don't use an AI-native platform as a substitute for thinking about what you're testing. The agents derive tests from your code. If your code doesn't represent the user flows you care about clearly, the generated tests won't cover them. Garbage in, garbage out applies here.

Don't expect it to replace your entire testing strategy. Unit tests for complex business logic, integration tests for critical API contracts, and performance tests for high-traffic endpoints are still things your team should own directly. AI-native platforms are excellent at E2E coverage. They're not a substitute for every layer of the testing pyramid.

Don't adopt it if your primary need is investigating flaky tests or debugging complex race conditions. Scripted frameworks with their trace viewers and interactive debuggers are better diagnostic tools for those workflows.

The Honest Summary

Playwright is the right e2e testing framework when your team is going to write test scripts. That's a genuine recommendation, not a hedge. It's technically superior to Cypress for most use cases in 2026, free to run in CI, and the momentum of the ecosystem means the gap will continue to widen.

Cypress is worth keeping if you're already using it and it's working. It's not worth starting fresh with in 2026 for most teams, primarily because of the parallelization cost and the WebKit gap.

The AI-native approach answers a different question. If the reason your team doesn't have E2E coverage is that nobody has time to write and maintain scripts, not that you haven't found the right framework, that's what we built Autonoma to address. Connect your codebase, and the agents handle planning, execution, and maintenance. No scripts to write. No selectors to update when the UI changes.

The best testing tool is the one your team actually uses and maintains. For teams with the bandwidth to own scripted tests, that's Playwright. For teams without that bandwidth, forcing the scripted model is how test suites become graveyards.

Frequently Asked Questions

The leading e2e testing tools in 2026 are Autonoma (AI-native, codebase-first, zero maintenance), Playwright (scripted, best-in-class cross-browser support and parallelization), and Cypress (scripted, best interactive debugger, Chrome-focused). For teams without dedicated test engineers, Autonoma generates and maintains tests automatically. For teams writing scripts, Playwright is the stronger default for new projects.

For most new projects in 2026, Playwright is the better choice. It supports Chromium, Firefox, and WebKit, offers free built-in parallelization, and has stronger community momentum. Cypress retains an advantage in interactive debugging and stable component testing, but its experimental-only WebKit support and paid parallelization requirements are significant limitations. If your team is Chrome-only and values the Cypress debugger, Cypress remains a valid choice.

An AI-native e2e testing framework generates, executes, and maintains tests automatically rather than requiring humans to write scripts. Autonoma is the leading example: connect your codebase, and agents read your routes, components, and user flows to build and update the suite. No scripts to write, no selectors to fix when the UI updates. For details on the architecture, see what is agentic testing at /blog/what-is-agentic-testing.

Playwright's main advantages over Cypress are cross-browser support (including WebKit/Safari), free built-in parallelization via sharding, multi-language bindings (TypeScript, Python, Java, C#), and support for multiple tabs and origins. Cypress's browser coverage is narrower (Chromium-based browsers and Firefox, with WebKit only experimental), it requires a paid subscription (Cypress Cloud) for parallel execution at scale, and it is JavaScript/TypeScript only.

In scripted frameworks like Playwright and Cypress, database state setup requires custom fixture scripts or factory libraries that your team writes and maintains. Autonoma handles this automatically by generating the API endpoints needed to put the database in the correct state for each test scenario. This is one of the more significant time savings compared to maintaining fixture code alongside a growing test suite.

AI-native testing is not the right fit when you need compliance artifacts or human-authored test documentation for audit purposes, when your primary testing need is debugging complex race conditions (scripted frameworks with trace viewers are better diagnostic tools), when you have highly unusual browser interactions that require precise scripted control, or when your team has dedicated engineers who write test code as their primary function and want full ownership of the test suite.

Migration from Cypress is worth the investment if you need WebKit/Safari coverage, you are paying for Cypress Cloud parallelization and want to eliminate that cost, or flakiness problems trace to Cypress's architectural limitations. If your Cypress suite is working well and none of these apply, the migration cost typically outweighs the benefit. Migration timelines for small suites (under 50 tests) run one to two weeks; larger suites can take four to eight weeks.

With a scripted framework, getting meaningful E2E coverage typically takes one to three weeks of writing, debugging, and CI integration work, plus ongoing maintenance. With Autonoma, you connect your codebase and agents begin generating and running tests within hours. The setup time is dramatically shorter because no one writes scripts. The more important difference is ongoing cost: scripted suites require continuous maintenance as the product evolves, while Autonoma's Maintainer agent handles that automatically.