Flaky tests in CI/CD are tests that fail intermittently without any code change -- and they are costing your team far more than the 30 seconds it takes to rerun them. Research from Google, Microsoft, and Spotify puts the aggregate cost at 15-30% of total CI time lost to reruns, with engineering teams spending 5-10 hours per week investigating false failures. For a 50-person engineering team running a typical test suite, that translates to over $400,000 in wasted developer time annually -- before you count the CI compute bill. This article makes the business case for treating flaky tests as an organizational problem, not a test-by-test annoyance.

Most engineering leaders know flaky tests are annoying. Few have calculated what they actually cost. We did -- and the number is large enough to justify a dedicated remediation sprint at most mid-sized engineering organizations.

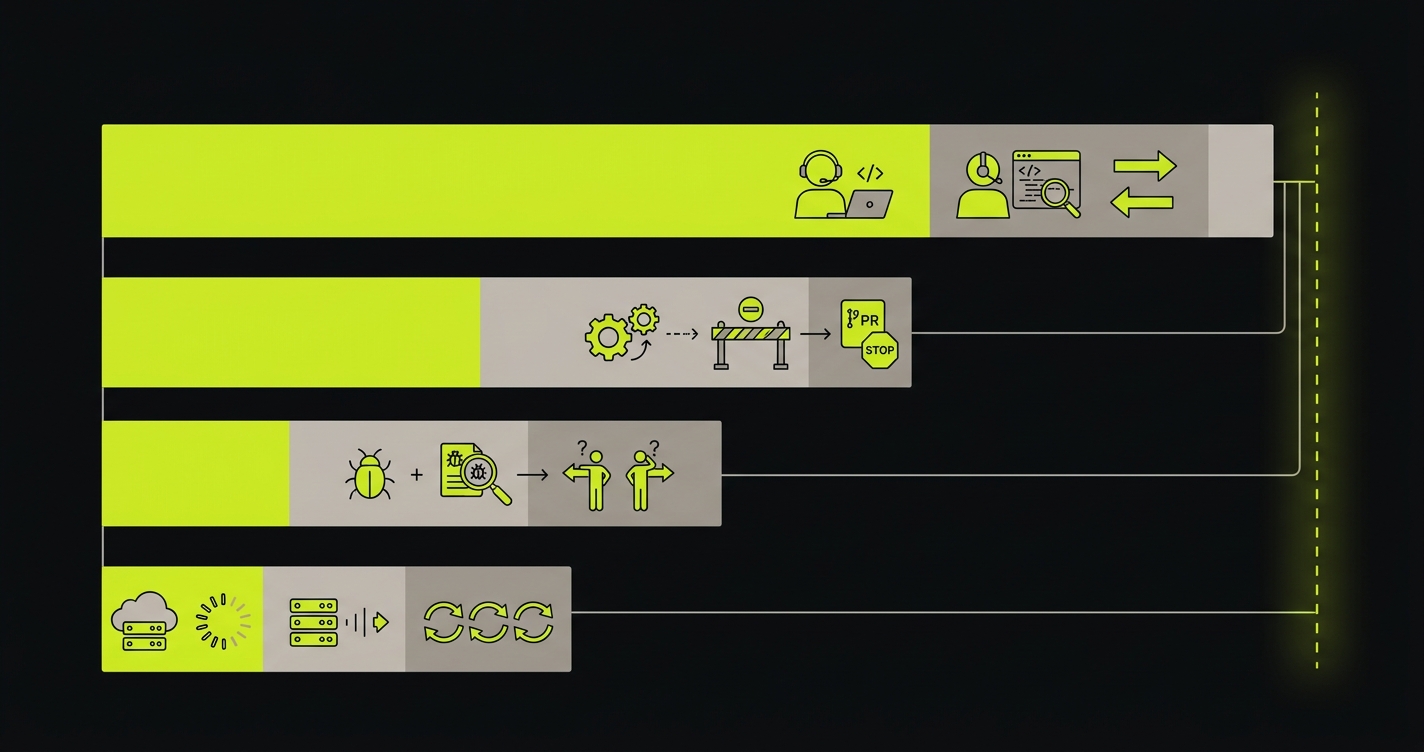

If you have ever tried to get flaky test cleanup onto a sprint and lost the prioritization fight to feature work, this piece is the ammunition you were missing. It breaks down the cost of flaky tests across four categories: developer time lost to investigation and context switches, CI compute wasted on reruns, deployment velocity drained by blocked PRs, and incident risk from teams that have learned to ignore test failures. Each category gets real numbers you can plug your own team size and rates into.

The Flaky Test Tax: What It Actually Costs

Google's engineering productivity team studied flaky tests across their monorepo and reported that almost 16% of their tests (roughly 1 in 7) showed some level of flakiness, with about 1.5% of all test runs producing a flaky result. Microsoft's research put the average time a developer spends per flaky test investigation at 30 minutes -- and that is per investigation, not per occurrence. Spotify reported that at their scale, flaky tests were responsible for a measurable percentage of all CI failures and required dedicated engineering effort to triage weekly.

These are not outliers. They are the natural result of test suites that grow faster than the infrastructure meant to keep them reliable.

Here is what the math looks like for a team that is not at Google scale -- a 50-person engineering org with 2,000 tests running a typical CI pipeline:

- A 5% flake rate means 100 tests fail intermittently

- Each flake triggers an average of 1.5 reruns before the build is green

- A developer context switch costs 15-20 minutes to regain focus, on top of the wait

- At 50 developers running 3 pipeline runs per day, flaky failures interrupt workflows dozens of times daily
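To see how a modest flake rate turns into interruptions at that frequency, here is a minimal sketch. It assumes every flaky test fails independently with the same per-run probability; the 1% failure probability is a hypothetical input chosen for illustration, not a figure from the studies cited above, and the function names are ours.

```python
def prob_run_hits_flake(flaky_tests: int, per_test_fail_prob: float) -> float:
    """Probability that one pipeline run sees at least one flaky failure.

    Assumes flaky tests fail independently of each other (a simplification).
    """
    return 1.0 - (1.0 - per_test_fail_prob) ** flaky_tests


def interrupted_runs_per_day(developers: int, runs_per_dev: int, hit_prob: float) -> float:
    """Expected number of pipeline runs per day that hit a flaky failure."""
    return developers * runs_per_dev * hit_prob


# 2,000 tests at a 5% flake rate gives 100 flaky tests (from the list above).
flaky_tests = 2000 * 5 // 100

# Hypothetical: each flaky test fails on 1% of the runs it takes part in.
hit_prob = prob_run_hits_flake(flaky_tests, per_test_fail_prob=0.01)
daily = interrupted_runs_per_day(developers=50, runs_per_dev=3, hit_prob=hit_prob)

print(f"P(run hits a flake): {hit_prob:.2f}")
print(f"Interrupted runs per day: {daily:.0f}")
```

Swap in your own suite size, flake rate, and per-test failure probability; the independence assumption tends to break down when flakes share a root cause such as a slow shared service.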

| Cost Category | Per Incident | Weekly (50-person team) | Annual |
|---|---|---|---|
| Developer time (investigation + context switch) | 20-30 min | 40-60 hours | $180,000-$270,000 |
| CI compute reruns (cloud minutes) | 5-15 min/build | $200-$600 | $10,000-$30,000 |
| Delayed deployments (blocked PRs) | 30-120 min | Variable | $50,000-$150,000 in velocity |
| Incident triage (flake vs real bug confusion) | 1-4 hours | 4-12 hours | $40,000-$120,000 |

The developer time estimate assumes a fully loaded cost of $150 per hour for a senior engineer, which is conservative for most US-based engineering teams in 2026. At $200 per hour, the labor-based annual figures scale up by a third; the CI compute line does not depend on the hourly rate.

The CI compute line is often invisible because it gets buried in infrastructure spend rather than attributed to flakiness. Most teams with a significant flake rate are paying for 20-30% more CI minutes than they would need if their suites were reliable. At scale, that adds up fast.

The velocity line is the hardest to quantify and the most important. When PRs sit blocked because CI is red and nobody is sure if it is a real failure or another flake, feature work slows. Releases get delayed. The calendar impact of persistent flakiness compounds in ways that are genuinely difficult to reverse.

Why Flaky Tests Compound with AI-Generated Code

The flaky test tax already existed before AI coding tools. Now it is getting worse, and the reason is straightforward: more code means more tests, and more tests means more flake surface area.

AI tools like Cursor, GitHub Copilot, and Claude Code have genuinely changed how fast engineers ship code. A developer who used to complete two features per sprint now completes four or five. The PRs are larger. The pace of commits is higher. CI runs more often.

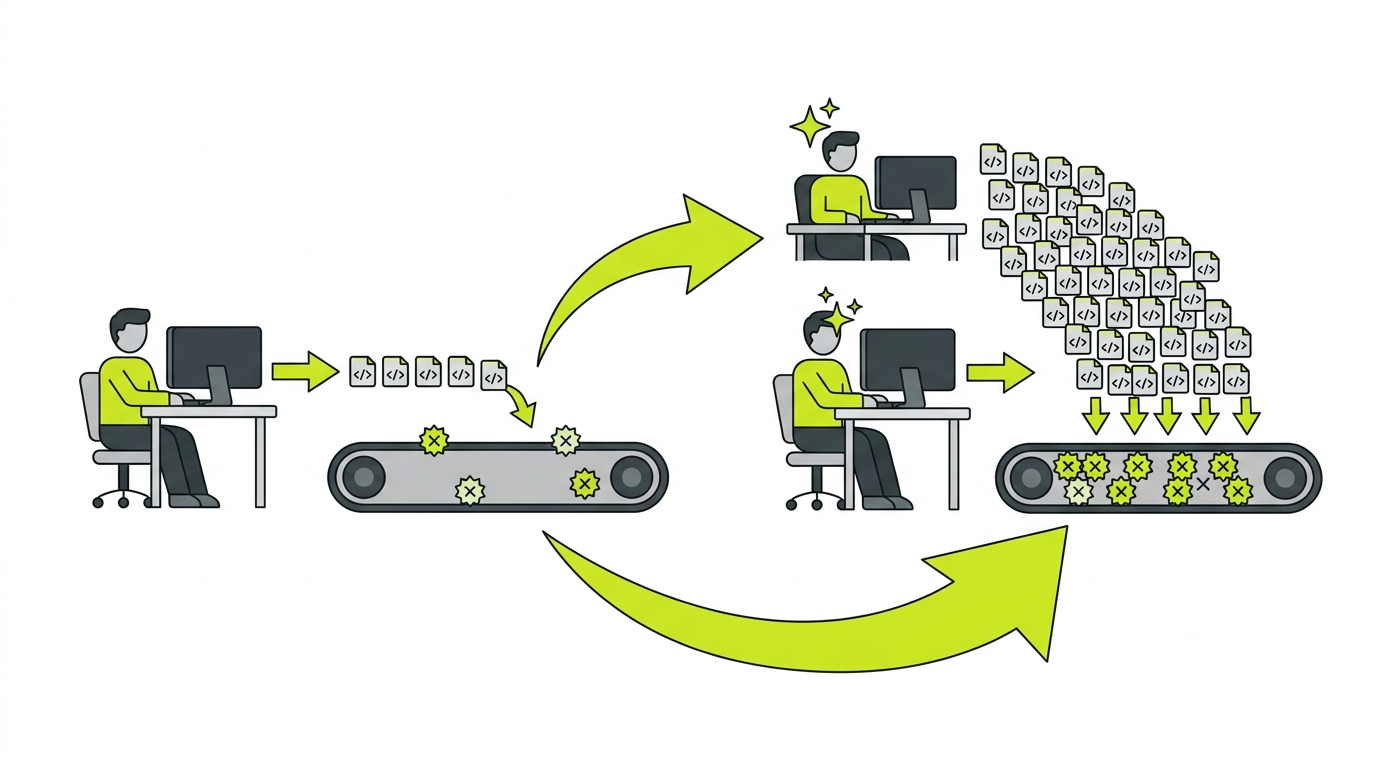

That acceleration multiplies the existing flaky test problem in two ways. First, more CI runs means more chances for each flaky test to fail -- the same 5% flake rate produces more total failures when the pipeline runs 3x as often. Second, AI-generated code often changes UI structure faster than tests can adapt: new component hierarchies, refactored selectors, changed data flow. Tests that were marginally stable become flaky when the surface they are testing shifts underneath them.

For a deep dive on the root causes -- race conditions, shared state mutations, DOM rendering timing -- see our full breakdown of why tests flake. For how this fits into the broader CI/CD pipeline transformation happening right now, see our piece on continuous testing in AI development.

The pattern that emerges from teams using AI code generation at scale: test suites that took years to accumulate 2,000 tests now grow to 3,000 or 4,000 in months. Flake rate does not drop. Total flaky failures increase proportionally. The velocity gain from AI coding gets partially consumed by the velocity loss from unreliable CI.
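The proportionality is easy to check with a back-of-the-envelope model. The sketch below assumes a constant flake rate and a hypothetical 1% per-run failure probability for each flaky test; under those assumptions, expected flaky failures grow linearly with both suite size and run frequency.

```python
def expected_flaky_failures_per_day(
    total_tests: int,
    flake_rate: float,
    per_test_fail_prob: float,
    runs_per_day: int,
) -> float:
    """Expected count of individual flaky test failures per day.

    Linear in suite size and in run frequency when the flake rate is constant.
    """
    flaky_tests = total_tests * flake_rate
    return flaky_tests * per_test_fail_prob * runs_per_day


# Before: 2,000 tests, 150 pipeline runs per day (50 developers x 3 runs).
before = expected_flaky_failures_per_day(2000, 0.05, 0.01, runs_per_day=150)

# After: the suite doubles to 4,000 tests and the pipeline runs 3x as often.
after = expected_flaky_failures_per_day(4000, 0.05, 0.01, runs_per_day=450)

growth = after / before
print(f"Flaky failures grow by a factor of {growth:.1f}")
```

Doubling the suite and tripling the run frequency multiply together, which is why an unchanged flake rate can still feel like a sharply worse pipeline.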

The Organizational Cost: When Teams Stop Trusting Tests

The financial cost is the part you can put in a spreadsheet. The organizational cost is harder to quantify and more damaging in the long run.

It starts with a reasonable adaptation. A developer sees CI red. They check the failure. It is a test that has been flaky for weeks. They rerun it. It passes. Over time, that becomes a reflex. They stop checking the failure before rerunning. Why would they? It is almost certainly a flake.

Then something shifts. The reflex now applies to failures they have not seen before. New failures look like old flakes. The investigation step -- the one that exists precisely to catch real regressions -- gets skipped. A genuine bug ships to production. The post-mortem reveals that CI caught it. The developer saw CI red, assumed it was a flake, reran, and merged when the test that had exposed the bug happened to pass the second time.

This is the "boy who cried wolf" failure mode. It is not a process failure. It is the predictable outcome of a test suite that has lost its credibility. Once developers learn to distrust their CI signal, you cannot rebuild that trust by asking them to be more diligent. You rebuild it by making the signal reliable.

The cultural damage compounds with team scale. On a 5-person team, someone usually knows which tests are flaky by name. On a 50-person team, that institutional knowledge is fragmented. New engineers have no way to distinguish "this test is always like this" from "this test caught something real." They either investigate everything (expensive) or investigate nothing (dangerous).

For more on how flaky tests connect to the broader QA bottleneck facing engineering organizations, see our analysis of QA process improvement with AI.

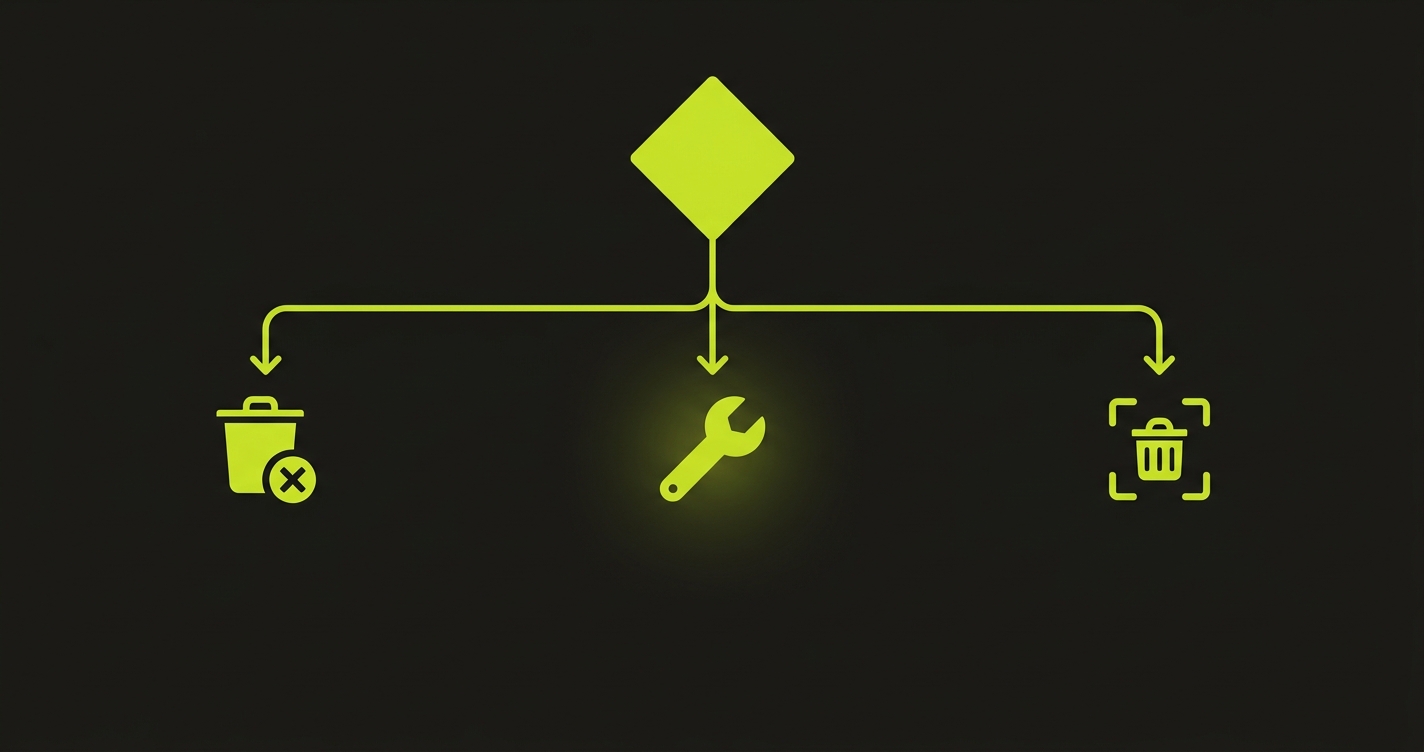

The Decision Framework: Kill, Fix, or Quarantine

Most teams default to quarantine because it feels responsible. You are not ignoring the flaky test -- you have isolated it, added it to the known-flaky list, and assigned someone to look at it later. In practice, quarantine is just delay with documentation. The "look at it later" pile accumulates. The tests never get fixed. They get deleted when the feature they cover gets deprecated.

The right framework depends on what the flaky test is actually testing and why it is flaking.

| Strategy | When to Use | Common Mistake |
|---|---|---|
| Kill it | Test covers a flow that is already tested by a stable test; the flaky test adds no unique coverage. Or the feature it covers has been removed. | Deleting tests that cover real critical paths because fixing feels hard. Check coverage before deleting. |
| Fix it | Test covers a critical user flow with no other coverage. Root cause is diagnosable: hardcoded wait, shared state leak, DOM timing issue. | Patching the symptom (adding a longer wait) rather than the root cause (removing shared state). The test flakes again in three months with a different symptom. |
| Quarantine it | Test covers a critical flow, root cause is complex and requires significant investigation, and your team has capacity allocated to fix it within a defined sprint. | Quarantining with no ownership, no deadline, and no check-in. This is how the quarantine list grows to 200 tests over 18 months. Quarantine should be a temporary state, not a permanent category. |

The instinct to quarantine everything is understandable -- it creates a feeling of control without requiring immediate work. The discipline is in being honest about whether quarantine has a realistic path to resolution or whether it is just a more polite word for ignoring.
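One way to keep the framework honest is to write it down as a decision function. The following is a rough sketch of the table above, not a prescribed implementation: the field names are hypothetical, and the final fallback (a critical, hard-to-diagnose test with no committed owner gets escalated as a fix rather than quarantined) is our assumption about how to avoid quarantine-as-delay.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    KILL = "kill"
    FIX = "fix"
    QUARANTINE = "quarantine"


@dataclass
class FlakyTest:
    name: str
    feature_removed: bool         # the feature under test no longer exists
    has_unique_coverage: bool     # no stable test covers the same flow
    root_cause_diagnosable: bool  # e.g. hardcoded wait, shared state leak
    fix_sprint_committed: bool    # an owner and a deadline exist for a deeper fix


def triage(test: FlakyTest) -> Action:
    """Map a flaky test to kill / fix / quarantine, following the table above."""
    if test.feature_removed or not test.has_unique_coverage:
        return Action.KILL
    if test.root_cause_diagnosable:
        return Action.FIX
    if test.fix_sprint_committed:
        return Action.QUARANTINE
    # Assumption of this sketch: with no owner and no deadline, quarantine
    # would be indefinite delay, so the test is escalated as a fix instead.
    return Action.FIX


print(triage(FlakyTest("checkout_timeout", False, True, False, True)))
```

The useful part is less the code than the inputs it forces you to supply: nobody can quarantine a test without stating who owns it and when it gets fixed.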

For individual test-level fixes -- better selectors, retry logic, state isolation -- see our tactical guide to reducing test flakiness. The framework here is about organizational decision-making; that guide covers the implementation details.

The Structural Fix: From Triage to Prevention

The kill/fix/quarantine framework is useful for managing an existing flaky test backlog. It is not a solution to test flakiness as a persistent condition. The most effective way to reduce flaky tests is not to fix them one by one -- it is to change the architecture that produces them. As long as tests are authored by humans selecting DOM selectors and writing timing logic, flakiness will recur. You triage the current batch, new flaky tests accumulate, the cycle repeats.

The structural fix to flaky test automation is to change how tests are generated in the first place.

Autonoma takes a different approach at the source. Instead of generating tests from human-authored sessions or recorded UI interactions, our agents read the codebase itself -- routes, components, data models, API contracts. Tests are derived from what the application is supposed to do, not from a snapshot of what a specific UI element looked like on a specific day.

That architecture eliminates the two most common sources of flakiness. Tests do not have hardcoded selectors that break when a class name changes, because the test understands the component's purpose rather than its CSS. Tests do not have timing issues caused by humans inserting waits where they think rendering will complete, because the agents understand the application's state transitions. When a UI change ships, the Maintainer agent self-heals the affected tests rather than leaving them to fail until someone notices.

For a deeper look at how self-healing works mechanically, see our piece on self-healing test automation. The short version: tests that understand your codebase adapt to it. Tests that understand only your DOM break when the DOM changes.

How to Measure Your Flaky Test Rate and Velocity Impact

Before you can make the case for investment, you need numbers. Most engineering organizations track CI pass rate but not flaky test rate specifically. Those are different metrics and the distinction matters.

Flaky test rate is the percentage of tests in your suite that, in the last 30 days, failed at least once and also passed with no code change in between. A test that fails consistently is not flaky -- it is broken. A test that fails intermittently is flaky. Your CI tooling may not distinguish these automatically; you may need to cross-reference failure logs with git history.
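If you can export per-test results along with the commit they ran against, the cross-referencing can be sketched in a few lines. This example assumes a hypothetical `RunRecord` shape and that the caller has already filtered the records to the last 30 days; it treats a test as flaky when it has both a pass and a fail on the same commit.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Iterable, NamedTuple


class RunRecord(NamedTuple):
    test_name: str
    commit_sha: str
    passed: bool


def find_flaky_tests(runs: Iterable[RunRecord]) -> set[str]:
    """Return tests that both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for run in runs:
        outcomes[(run.test_name, run.commit_sha)].add(run.passed)
    return {name for (name, _sha), seen in outcomes.items() if seen == {True, False}}


def flaky_test_rate(runs: Iterable[RunRecord], total_tests: int) -> float:
    """Flaky tests as a fraction of the whole suite."""
    return len(find_flaky_tests(runs)) / total_tests


runs = [
    RunRecord("test_login", "abc123", False),   # fails, then passes on rerun: flaky
    RunRecord("test_login", "abc123", True),
    RunRecord("test_cart", "abc123", False),    # fails every time: broken, not flaky
    RunRecord("test_cart", "abc123", False),
    RunRecord("test_search", "abc123", True),   # outcome changed with the code
    RunRecord("test_search", "def456", False),
]
flaky = find_flaky_tests(runs)
rate = flaky_test_rate(runs, total_tests=3)
print(sorted(flaky), f"{rate:.0%}")
```

Keying on the commit is what separates a flake from a regression: `test_search` changes outcome only when the code changes, so it is not counted.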

CI rerun percentage is the proportion of pipeline runs that are reruns triggered by a previous failure. If 25% of your pipeline runs are reruns, that is your baseline flakiness overhead -- a rough measure of the CI compute waste.
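One subtlety is worth making explicit: the share of runs that are reruns is not the same number as the extra compute you pay relative to a suite that never needed reruns. A small sketch, with illustrative counts and a function name of our own choosing:

```python
from __future__ import annotations


def rerun_stats(total_runs: int, rerun_count: int) -> tuple[float, float]:
    """Return (share of all runs that are reruns, overhead vs first-attempt runs)."""
    if not 0 <= rerun_count < total_runs:
        raise ValueError("rerun_count must be in [0, total_runs)")
    first_attempts = total_runs - rerun_count
    return rerun_count / total_runs, rerun_count / first_attempts


# Illustrative: 400 pipeline runs in a week, 100 of them reruns.
share, overhead = rerun_stats(total_runs=400, rerun_count=100)
print(f"Rerun share: {share:.0%}, overhead on top of first attempts: {overhead:.0%}")
```

A 25% rerun share means you ran about a third more pipelines than first attempts alone would have required, so be explicit about which of the two figures you are quoting.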

Mean time to green (MTTG) is how long a PR takes from opening to a clean CI run. Flaky tests inflate this metric because the first run fails, the rerun takes another N minutes, and the developer is context-switching in between. Tracking MTTG over time shows whether your flaky test problem is getting worse as your test suite grows.
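MTTG is straightforward to compute once you have two timestamps per PR. The sketch below assumes you can export the PR open time and the time of its first fully green CI run; the sample timestamps are made up.

```python
from __future__ import annotations

from datetime import datetime, timedelta
from statistics import mean


def mean_time_to_green(prs: list[tuple[datetime, datetime]]) -> timedelta:
    """Average gap between PR open time and its first fully green CI run."""
    seconds = [(green - opened).total_seconds() for opened, green in prs]
    return timedelta(seconds=mean(seconds))


prs = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 20)),   # green on the first run
    (datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 5, 11, 0)),  # needed a rerun
]
mttg = mean_time_to_green(prs)
print(f"MTTG: {mttg}")
```

Tracking the median alongside the mean can also help, since a handful of PRs stuck behind repeated flakes will pull the mean up sharply.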

Developer hours lost requires either instrumentation or estimation. If you have Slack or incident tooling that captures "rerun" events, you can approximate the frequency. If not, a one-week manual log across a few developers will give you enough data to extrapolate.
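The extrapolation from a manual log is simple arithmetic. Here is a minimal sketch, assuming each sampled developer records the total minutes they lost to flaky reruns and investigation over one week; the sample values are hypothetical.

```python
from __future__ import annotations


def weekly_hours_lost(sample_minutes: list[float], team_size: int) -> float:
    """Extrapolate team-wide weekly hours lost from a sample of developers.

    sample_minutes has one entry per sampled developer: the minutes that
    developer logged against flaky tests during the week.
    """
    if not sample_minutes:
        raise ValueError("need at least one sampled developer")
    avg_minutes = sum(sample_minutes) / len(sample_minutes)
    return avg_minutes * team_size / 60


# Hypothetical one-week log from four developers on a 50-person team.
estimate = weekly_hours_lost([45, 60, 30, 75], team_size=50)
print(f"Estimated team-wide hours lost per week: {estimate:.2f}")
```

A sample this small gives a rough order of magnitude, not a precise figure; pick developers from different teams so the sample is not dominated by one unusually flaky area of the suite.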

For how these metrics connect to the broader cost comparison between manual and automated testing approaches, see our cost analysis of manual vs automated testing.

Once you have these four numbers, the business case writes itself. Multiply developer hours lost by your loaded engineering rate. Add CI compute waste. Add an estimate for velocity impact (even a conservative 10% slowdown in deployment frequency has measurable revenue implications for most product teams). The sum will be larger than whatever the fix costs.
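That sum can be sketched as a small function. The parameter names and the sample inputs below are placeholders to replace with your own measurements, and the velocity term is whatever estimate you are prepared to defend.

```python
def annual_flaky_cost(
    dev_hours_lost_per_week: float,
    loaded_hourly_rate: float,
    ci_waste_per_week: float,
    annual_velocity_cost: float = 0.0,
    weeks_per_year: int = 52,
) -> float:
    """Annual cost: developer time plus CI compute waste plus velocity estimate."""
    labor = dev_hours_lost_per_week * loaded_hourly_rate * weeks_per_year
    compute = ci_waste_per_week * weeks_per_year
    return labor + compute + annual_velocity_cost


# Placeholder inputs: 10 hours/week lost, $150/hour loaded rate,
# $200/week of wasted CI compute, $50,000/year estimated velocity impact.
cost = annual_flaky_cost(10, 150, 200, annual_velocity_cost=50_000)
print(f"Estimated annual cost: ${cost:,.0f}")
```

Running it with a low and a high set of inputs gives a range, which is usually easier to defend in a prioritization discussion than a single number.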

Frequently Asked Questions About Flaky Tests

How do I measure my flaky test rate?

Pull your CI failure logs for the past 30 days. Identify any test that failed at least once but passed on a subsequent run without a code change between the two runs. The flaky test rate is that count divided by your total test count. Most CI platforms (GitHub Actions, CircleCI, Buildkite) can export run-level data, which lets you automate this calculation. A rate above 2% is worth active intervention; above 5% is a systemic problem that is likely already visibly slowing your team.

How much do flaky tests cost a 50-person engineering team?

For a 50-person engineering team at a typical US startup, the combined cost of developer time lost, CI compute reruns, and delayed deployments typically ranges from $200,000 to $400,000 per year. The developer time component dominates: at 5-10 hours per week of aggregate investigation and context-switching time, and a loaded engineering cost of $150-200 per hour, you reach six figures quickly. CI compute is a smaller but real line item, usually 20-30% in extra CI minutes for teams with a significant flake rate.

Why do AI coding tools make flaky tests worse?

AI coding tools like Cursor and GitHub Copilot typically increase code output by 3-5x per developer. More code means more PRs, more CI runs, and more exposure to existing flaky tests. The flake rate itself may not change, but total flaky failures increase proportionally with pipeline frequency. AI-generated code also tends to change UI structure quickly -- refactoring components, renaming selectors, restructuring data flow -- which turns previously stable tests into flaky ones if those tests rely on brittle DOM selectors.

When should I delete a flaky test instead of fixing it?

Kill a flaky test when it covers a flow that is already tested by a stable test (duplicate coverage), when the feature it tests has been removed or significantly changed, or when the root cause is so deeply architectural that a fix would require rewriting most of the test anyway. Before deleting, verify coverage: run your coverage tool without the flaky test and confirm the critical path it was checking is still covered by something else. If it is not, fix rather than kill.

How does Autonoma reduce flaky tests?

Autonoma's architecture eliminates the most common causes of flakiness: brittle DOM selectors and human-authored timing logic. Because our agents read the codebase rather than recording UI interactions, tests understand component purpose rather than CSS class names. When UI changes ship, the Maintainer agent updates affected tests automatically rather than leaving them to break. Teams running Autonoma-generated suites see significantly fewer flaky failures than teams running hand-authored or recorder-based tests -- and when flakiness does occur, it surfaces in the underlying application logic rather than in test infrastructure, which makes it actionable rather than noise.