The testing pyramid is the standard framework for understanding the three levels of testing in software: unit tests at the base (fast, isolated, many), integration tests in the middle (slower, verifying component interactions), and E2E tests at the apex (slowest, highest confidence, fewest). Each level answers a different question. Unit tests ask "does this function do what I expect?", integration tests ask "do these components work together?", and E2E tests ask "does this user flow actually work?". The classic allocation is roughly 70% unit / 20% integration / 10% E2E. AI code generation is changing that ratio -- because AI tends to produce code that passes unit tests cleanly but breaks at integration boundaries and in user flows that only higher-level tests catch. This article covers the cost-benefit analysis per layer, a decision framework for choosing the right test type, how the AI era shifts the pyramid, and the anti-patterns that waste the most engineering time.

There is a specific kind of incident that engineering leads dread above all others. Every test passes. The build is green. The deploy goes out. Then support starts pinging you because the signup flow is broken for anyone on Safari, or the checkout breaks when a coupon code is applied, or the onboarding wizard drops users at step three.

No unit test would have caught it. No integration test was checking that specific path. The testing pyramid -- the framework that was supposed to prevent this -- had a gap right where the user was standing.

This is not a failure of testing discipline. It is a structural consequence of how the three levels of testing divide responsibility. Unit vs integration vs E2E is not a debate about which is better. It is a framework for understanding which layer owns which risks -- and right now, most teams under-invest in exactly the layer that catches the bugs users actually experience.

The Three Levels of Testing: What Each One Actually Measures

Most teams have an intuitive sense of the difference between unit, integration, and E2E testing. What they often lack is a precise vocabulary for the tradeoffs, which is what makes allocation decisions feel like guesswork.

Unit tests are isolated. They test a single function, method, or class with all external dependencies mocked or stubbed. When a unit test passes, it tells you that the logic inside that unit is correct given the inputs you provided. It tells you nothing about whether that unit behaves correctly when wired to a real database, a real API, or a real user session.
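To make "isolated" concrete, here is a minimal sketch built around a hypothetical convertTotal function. Its only external dependency, an exchange-rate source, is injected and replaced by a stub in the test. All names are illustrative and do not come from any library.

```typescript
// The external dependency is expressed as an interface so it can be stubbed.
interface ExchangeRates {
  rateFor(currency: string): number;
}

function convertTotal(amountUsd: number, currency: string, rates: ExchangeRates): number {
  const rate = rates.rateFor(currency);
  if (!Number.isFinite(rate) || rate <= 0) {
    throw new Error(`No usable rate for ${currency}`);
  }
  // Round to cents so the result is deterministic for a given input.
  return Math.round(amountUsd * rate * 100) / 100;
}

// A stub with fixed answers: no network, no database, no real rate service.
const stubRates: ExchangeRates = {
  rateFor: (currency) => (currency === 'EUR' ? 0.5 : NaN),
};

console.log(convertTotal(10, 'EUR', stubRates)); // 5
```

A passing check proves the arithmetic and the guard clause. It proves nothing about whether the real rate service ever returns what the stub assumes.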

Integration tests are connected. They test two or more components working together -- typically a service and its database, two microservices communicating, or a backend route and its middleware chain. When an integration test passes, it tells you that the contract between those components is honored. It does not tell you whether the full user experience, which typically spans many such contracts, delivers the expected outcome.

E2E tests are holistic. They simulate a real user interacting with the running application through a browser or API client. When an E2E test passes, it tells you that the actual user-facing flow works. The cost is speed and fragility: E2E tests are slow to run, harder to maintain, and more sensitive to environmental conditions.

The levels are not interchangeable. A complete unit test suite does not give you integration coverage. A complete integration suite does not give you E2E coverage. The testing pyramid -- first described by Mike Cohn and later popularized by Martin Fowler -- exists because you need all three, but the economics of speed and maintenance push you toward cheaper tests as the default, reserving expensive tests for the flows that most need verification.

Cost-Benefit Analysis Per Layer

Choosing the right test type is a cost-benefit decision. Each layer has a distinct cost structure across six dimensions: execution speed, confidence level, maintenance cost, debugging signal, setup complexity, and parallelizability.

| Dimension | Unit Tests | Integration Tests | E2E Tests |
|---|---|---|---|
| Execution speed | Milliseconds per test | Seconds per test | 10-60 seconds per test |
| Confidence level | Low (isolated logic only) | Medium (contract-level) | High (user-verified) |
| Maintenance cost | Low (unless coupled to implementation details) | Medium | High (UI changes break them) |
| Debugging signal | Excellent (pinpoints the failing unit) | Good (narrows to a boundary) | Poor (tells you something broke, not where) |
| Setup complexity | Minimal | Moderate (needs real dependencies) | High (full environment) |
| Parallelizability | Excellent | Good | Limited (shared state) |

The column that most teams underestimate is debugging signal. When an E2E test fails, you know something is broken in the user flow. You don't know if it is the frontend, the API contract, the database query, the session management, or the third-party integration. An E2E failure kicks off a debugging session. A unit test failure points at a line.

This is why the classic pyramid recommendation exists: use unit tests for the majority of coverage because they are fast, precise, and cheap to maintain. Use integration tests to verify that real components work together at their boundaries. Reserve E2E tests for the critical paths where user-level confidence is worth the cost.

The ratio that most experienced teams land on is roughly 70% unit, 20% integration, 10% E2E. That ratio is now under pressure.

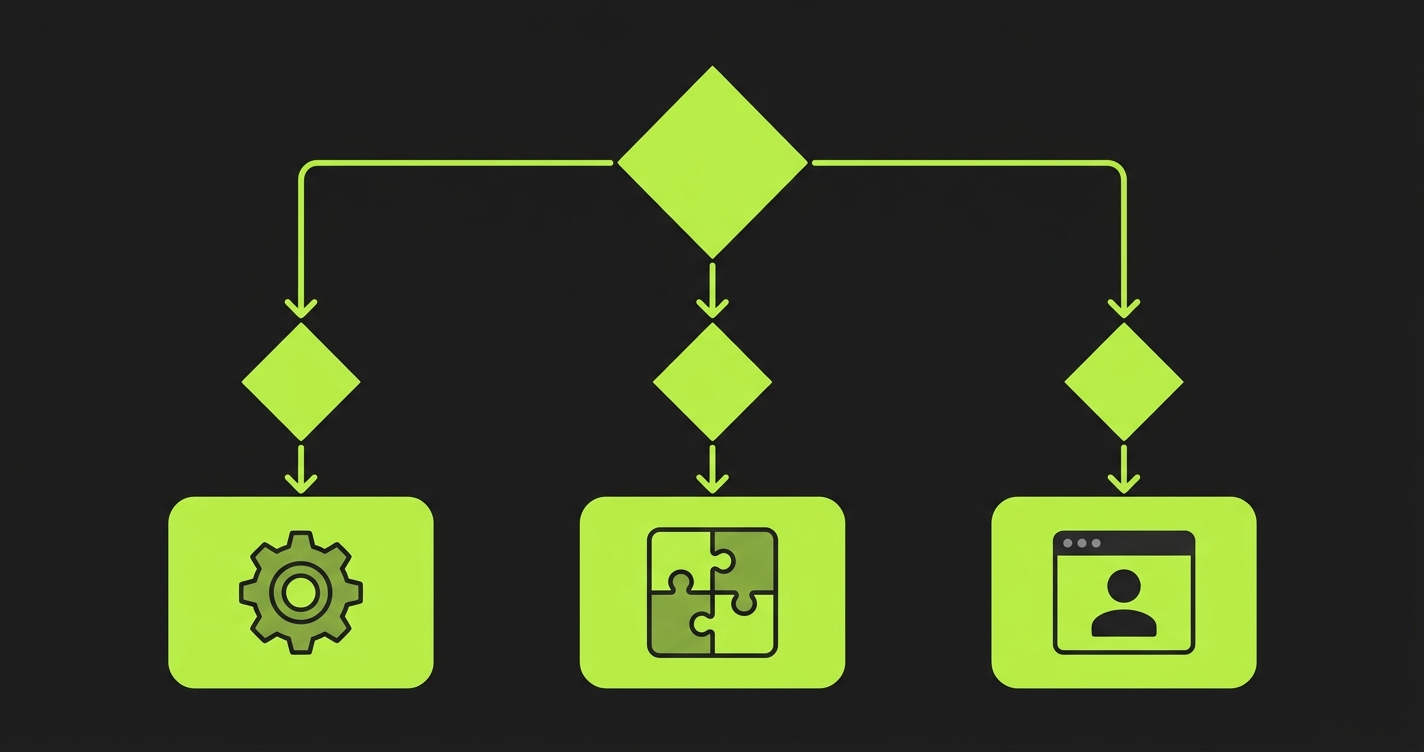

The Decision Framework: Which Test Type to Write

The question "should I write a unit, integration, or E2E test for this?" has a deterministic answer most of the time. Working through these questions in order gives you that answer.

When to Use Unit Tests vs Integration Tests vs E2E Tests

Start with what you are verifying. If the behavior you care about lives entirely within one function or class -- the logic is self-contained, the dependencies are easily mocked, and the outcome is fully determined by the inputs -- write a unit test. Pure functions, transformation logic, validation rules, and business calculations all belong here.

If the behavior you care about requires real communication between components, write an integration test. A service method that reads from a database and applies business logic needs to be tested with a real database connection, not a mock, because the bug you are trying to catch often lives in the query, the schema mapping, or the transaction boundary. A message queue consumer that triggers downstream effects needs to be tested with a real or near-real queue. API contract tests between services belong here.

If the behavior you care about is a user-facing flow that crosses multiple components, and the primary question is "can a user actually do this thing?", write an E2E test. Checkout flows, authentication sequences, multi-step form submissions, and anything that involves state accumulated across multiple requests are E2E territory.

There are two common anti-patterns that break this framework. The first is writing a unit test for something that only fails at the integration boundary -- you end up with green unit tests and production incidents. The second is writing an E2E test for something that could be fully verified with a unit test -- you end up with a slow, fragile suite that discourages people from running tests at all.

A useful heuristic: if mocking feels necessary to make the test fast, but the mock is hiding the actual failure mode you care about, you are writing the test at the wrong level.
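The questions above can be sketched as a small decision function. The trait names are hypothetical; the point is the order of the checks, which starts from the lowest level that gives enough confidence.

```typescript
type TestLevel = 'unit' | 'integration' | 'e2e';

// Hypothetical traits describing the behavior you want to verify.
interface Behavior {
  // Logic lives inside one function or class; the outcome is fully determined by its inputs.
  selfContained: boolean;
  // Mocking a dependency would hide the failure you care about
  // (a query, a schema mapping, a transaction boundary, serialization).
  mockHidesFailureMode: boolean;
  // The real question is "can a user actually do this?" across the full stack.
  userFlowAcrossStack: boolean;
}

function chooseTestLevel(b: Behavior): TestLevel {
  // Question 1: can it be verified in isolation without masking the risk?
  if (b.selfContained && !b.mockHidesFailureMode) return 'unit';
  // Question 2: is the pass condition a user-observable outcome of a flow?
  if (b.userFlowAcrossStack) return 'e2e';
  // Otherwise the risk sits at a boundary between real components.
  return 'integration';
}

// Pricing calculation:
console.log(chooseTestLevel({ selfContained: true, mockHidesFailureMode: false, userFlowAcrossStack: false })); // unit
// User data saved to the database:
console.log(chooseTestLevel({ selfContained: false, mockHidesFailureMode: true, userFlowAcrossStack: false })); // integration
// Sign up and reach the dashboard:
console.log(chooseTestLevel({ selfContained: false, mockHidesFailureMode: false, userFlowAcrossStack: true })); // e2e
```

The E2E check sits before the integration fallback only because user-facing flows also involve real components; the mock heuristic is folded into the first check so that a "self-contained" unit whose mock hides the real failure is pushed up a level.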

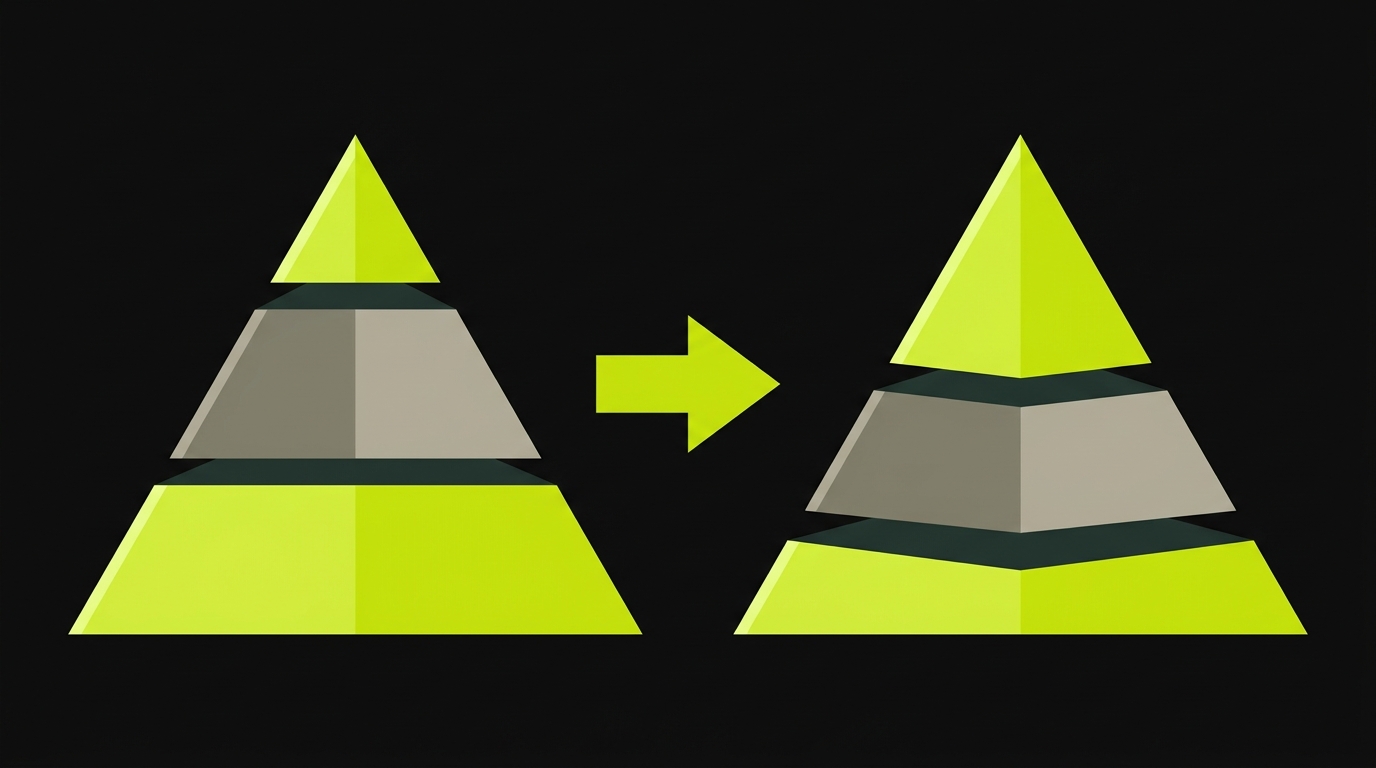

The AI Development Pyramid: Why the Ratio Is Shifting

The classic 70/20/10 allocation was designed for a world where human developers wrote all the code. AI code generation has changed the inputs to that equation in a specific, measurable way.

When a developer writes a function from scratch, they make hundreds of small decisions: how to handle null inputs, what to do when the database is unavailable, how to structure the error response. Those decisions are encoded in the implementation, and a developer who wrote the code typically writes tests that reflect that understanding. Unit tests written by the author of the code tend to cover the important edge cases.

When an AI coding assistant writes that same function, the code is structurally correct and the happy-path behavior is well-specified. The edge case handling reflects statistical patterns in training data rather than deliberate decisions about this system's requirements. More importantly, the developer reviewing the AI-generated code has a shallower mental model of it than an author would. The unit tests they write cluster around the specified behavior and miss the edge cases.

This means AI-generated code passes unit tests at rates comparable to human-written code, but produces more integration-level and user-flow-level failures than the unit test coverage would suggest. The unit tests are green. The incidents are real.

The practical implication is a shift in the optimal allocation. Teams using AI coding tools heavily are finding that a 60/25/15 ratio (or even 50/30/20 for high-stakes applications) delivers better incident rates than the classic 70/20/10. The expanded integration and E2E layers catch the category of failures that AI-generated code is most likely to produce.
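To see what the shift costs in CI time, here is a back-of-the-envelope sketch for a hypothetical 1,000-test suite. Only the ratios come from the text; the per-test timings are assumptions picked from the ranges in the cost table.

```typescript
// Percentages of the suite at each layer.
interface Allocation {
  unit: number;
  integration: number;
  e2e: number;
}

// Assumed serial cost per test in milliseconds (illustrative only; measure your own suite).
const MS_PER_TEST = { unit: 5, integration: 2000, e2e: 30000 };

function planSuite(total: number, pct: Allocation) {
  const unit = Math.round((total * pct.unit) / 100);
  const integration = Math.round((total * pct.integration) / 100);
  // E2E takes the remainder so the counts always sum to `total` (pct.e2e is implied).
  const e2e = total - unit - integration;
  const serialMs =
    unit * MS_PER_TEST.unit +
    integration * MS_PER_TEST.integration +
    e2e * MS_PER_TEST.e2e;
  return { unit, integration, e2e, serialMs };
}

const ratios: Record<string, Allocation> = {
  '70/20/10': { unit: 70, integration: 20, e2e: 10 },
  '60/25/15': { unit: 60, integration: 25, e2e: 15 },
  '50/30/20': { unit: 50, integration: 30, e2e: 20 },
};

for (const [label, pct] of Object.entries(ratios)) {
  const plan = planSuite(1000, pct);
  const minutes = (plan.serialMs / 60000).toFixed(1);
  console.log(`${label}: ${plan.e2e} E2E tests, ~${minutes} min if run serially`);
}
```

Under these assumed timings the E2E layer dominates: serial runtime roughly doubles between 70/20/10 and 50/30/20, which is why the CI gating decisions matter as much as the ratio itself.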

This is also why shift-left testing with AI code generation requires a different strategy. Shifting left is still valuable -- catching bugs early is always cheaper. But the type of early testing that matters changes. You need integration tests earlier, and you need E2E tests on more flows, not just the "critical paths" that would have justified E2E investment in a human-coded codebase.

For teams thinking about continuous testing in AI development contexts, this means rethinking which gates run in CI. The traditional model was: unit tests on every commit, integration tests on PR merge, E2E tests nightly or on release. The AI-era model pushes integration tests to every commit and E2E tests to every PR merge for the critical flows.
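The two gating models can be written down as data. In this sketch the layer and trigger names are hypothetical, and two readings are assumptions: each trigger runs every layer gated at that trigger or an earlier one (so a PR merge also runs the commit-level gates), and "nightly" stands in for "nightly or on release".

```typescript
type Layer = 'unit' | 'integration' | 'e2e-critical' | 'e2e-full';
type Trigger = 'commit' | 'pr-merge' | 'nightly';

// Triggers from most to least frequent.
const TRIGGER_ORDER: Trigger[] = ['commit', 'pr-merge', 'nightly'];

// Earliest trigger at which each layer runs; later triggers run it too.
type GatePolicy = Record<Layer, Trigger>;

const traditional: GatePolicy = {
  unit: 'commit',
  integration: 'pr-merge',
  'e2e-critical': 'nightly',
  'e2e-full': 'nightly',
};

const aiEra: GatePolicy = {
  unit: 'commit',
  integration: 'commit',
  'e2e-critical': 'pr-merge',
  'e2e-full': 'nightly',
};

function layersFor(policy: GatePolicy, trigger: Trigger): Layer[] {
  const level = TRIGGER_ORDER.indexOf(trigger);
  return (Object.keys(policy) as Layer[]).filter(
    (layer) => TRIGGER_ORDER.indexOf(policy[layer]) <= level,
  );
}

console.log(layersFor(traditional, 'commit')); // [ 'unit' ]
console.log(layersFor(aiEra, 'commit')); // [ 'unit', 'integration' ]
```

Keeping the policy as data makes the difference between the two models a one-line diff per layer rather than a rewrite of the pipeline.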

The problem is that expanding E2E coverage manually does not scale with AI-accelerated shipping velocity. AI coding tools generate new user flows faster than QA teams can write E2E tests for them. This is the gap we built Autonoma to close. The Planner agent reads your codebase, understands your routes and user flows, and generates E2E test coverage automatically. When AI ships a new checkout variant on Tuesday, the test coverage follows on Tuesday -- not in the next sprint. The Maintainer agent keeps those tests passing as the code evolves, which is where the real ROI lives. The expanded E2E layer that AI development demands is only sustainable if E2E test creation is automated too.

Software Testing Levels: What Each Layer Looks Like in Practice

The theory is clear. What does it look like in a real codebase?

Unit test territory covers everything that has no meaningful external dependency. A pricing engine that calculates discounts based on user tier, usage volume, and promotion codes is a unit test. A date utility that converts between timezones is a unit test. A validation function that checks email format is a unit test. The rule of thumb: if you can test it with a pure function call and no network traffic, no database, and no file system, it is a unit test.
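For a sense of how little unit-test territory code needs, here is a minimal sketch of what the ./pricing module used by the unit test example later in this article might contain, plus an email format check. Both are hypothetical: the 20% loyalty rate is an assumption chosen to match that example, and the email pattern is a deliberately loose format check, not a full RFC 5322 validator.

```typescript
// Hypothetical discount table: only the loyalty tier gets a discount.
const TIER_DISCOUNTS: Record<string, number> = { loyalty: 0.2 };

interface PriceInput {
  base: number;
  tier: string;
}

// Pure function: the output depends only on the inputs.
function applyDiscount({ base, tier }: PriceInput): number {
  const rate = TIER_DISCOUNTS[tier] ?? 0; // unknown tiers get no discount
  return Math.round(base * (1 - rate) * 100) / 100;
}

// Loose shape check: something@something.something with no whitespace.
function isValidEmail(email: string): boolean {
  return /^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email);
}

console.log(applyDiscount({ base: 100, tier: 'loyalty' })); // 80
console.log(isValidEmail('ada@example.com')); // true
```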

Integration test territory covers the seams between components. A repository class that writes to and reads from a PostgreSQL database is an integration test -- run it against a real test database, not a mock, because the ORM mapping and query planner behavior are part of what you are testing. An HTTP client that calls an external API is an integration test -- use a contract stub, but test the serialization, authentication headers, and error handling against a realistic server response. A message queue consumer is an integration test.
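For the HTTP client case, one way to make serialization, authentication headers, and error handling checkable is to keep request building and response parsing as named functions. An integration test would send the built request to a contract stub and feed the stub's realistic responses through the parser. This is a sketch under assumptions: the endpoint, field names, and error shape are all hypothetical.

```typescript
interface NewUser {
  name: string;
  email: string;
}

interface OutgoingRequest {
  method: 'POST';
  url: string;
  headers: Record<string, string>;
  body: string;
}

type CreateUserResult =
  | { ok: true; id: string }
  | { ok: false; status: number; message: string };

// Serialization and auth headers in one place.
function buildCreateUserRequest(baseUrl: string, token: string, user: NewUser): OutgoingRequest {
  return {
    method: 'POST',
    url: `${baseUrl}/users`,
    headers: {
      'Content-Type': 'application/json',
      Authorization: `Bearer ${token}`,
    },
    body: JSON.stringify(user),
  };
}

// Error handling for success, API errors, and non-JSON proxy failures.
function parseCreateUserResponse(status: number, bodyText: string): CreateUserResult {
  let body: Record<string, unknown> = {};
  try {
    const parsed: unknown = JSON.parse(bodyText);
    if (typeof parsed === 'object' && parsed !== null) body = parsed as Record<string, unknown>;
  } catch {
    // Non-JSON bodies (for example an HTML error page from a proxy) are treated as empty.
  }
  const id = body.id;
  if (status >= 200 && status < 300 && typeof id === 'string') return { ok: true, id };
  const error = body.error;
  const message = typeof error === 'string' ? error : `Unexpected response (HTTP ${status})`;
  return { ok: false, status, message };
}
```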

E2E test territory covers user-observable outcomes. A user can sign up, verify their email, and access their dashboard. A paying customer can complete a purchase and receive a confirmation email. An admin can create a user, assign them a role, and the user can then access the scoped resources. These are E2E tests. They are slow and expensive and worth every millisecond because no amount of unit or integration coverage gives you the same confidence that the assembled system works.

Here is what each layer looks like as actual test code.

Unit test -- a pricing calculation with no external dependencies:

```typescript
// applyDiscount.test.ts
// Assumes Vitest; with Jest's globals enabled, the first import can be dropped.
import { test, expect } from 'vitest';
import { applyDiscount } from './pricing';

test('applies 20% loyalty discount to base price', () => {
  expect(applyDiscount({ base: 100, tier: 'loyalty' })).toBe(80);
});

test('returns base price when tier is unknown', () => {
  expect(applyDiscount({ base: 100, tier: 'invalid' })).toBe(100);
});
```

Integration test -- verifying that a service writes to and reads from a real database:

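```typescript
// userRepository.integration.test.ts
import { test, expect, beforeEach, afterEach } from 'vitest';
import { createUser, findUserByEmail } from './userRepository';
// Assumption: ./testDatabase exposes beginTransaction() and rollback(), and
// userRepository issues its queries on this same connection.
import { db } from './testDatabase';

// Open a transaction per test and roll it back afterwards, so every test
// starts from the same state without rebuilding the schema.
beforeEach(() => db.beginTransaction());
afterEach(() => db.rollback());

test('persists user and retrieves by email', async () => {
  await createUser({ name: 'Ada', email: 'ada@example.com' });
  const user = await findUserByEmail('ada@example.com');
  expect(user?.name).toBe('Ada');
});
```

E2E test -- a real user completing a login-to-dashboard flow: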

```typescript
// login.e2e.test.ts
import { test, expect } from '@playwright/test';

// '/login' resolves against baseURL in playwright.config.ts.
// Assumes a test user with these credentials exists in the environment.
test('user can log in and see the dashboard', async ({ page }) => {
  await page.goto('/login');
  await page.locator('[name="email"]').fill('ada@example.com');
  await page.locator('[name="password"]').fill('securepass');
  await page.locator('button[type="submit"]').click();
  // Web-first assertion: retries until the heading matches or the timeout expires.
  await expect(page.locator('h1')).toHaveText('Dashboard');
});
```

For teams deciding between frameworks for the integration and E2E layers, the test automation frameworks guide covers the tool landscape in depth -- Playwright, Cypress, Vitest, and the newer AI-native options.

Anti-Patterns: What Wastes the Most Time Per Layer

Every layer has characteristic failure modes. These are the patterns that consistently destroy team velocity.

Unit test anti-patterns

The worst unit test anti-pattern is testing implementation details rather than behavior. A unit test that verifies a specific private method was called with specific arguments will break every time you refactor the internals, even when the observable behavior is unchanged. Test what the function returns, not how it gets there.
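A minimal sketch of the difference, using two hypothetical cart implementations with identical observable behavior. The helperCalls counter stands in for a spy on a private method, which is what an implementation-coupled test would typically assert on.

```typescript
interface LineItem {
  unitCents: number;
  qty: number;
}

// Original implementation: totals each line through a private helper.
class CartV1 {
  helperCalls = 0; // stands in for a spy on the private helper
  private readonly items: LineItem[];
  constructor(items: LineItem[]) {
    this.items = items;
  }
  private lineTotal(item: LineItem): number {
    this.helperCalls++;
    return item.unitCents * item.qty;
  }
  total(): number {
    return this.items.reduce((sum, item) => sum + this.lineTotal(item), 0);
  }
}

// Refactored implementation: same result, no helper.
class CartV2 {
  helperCalls = 0; // never incremented: the helper no longer exists
  private readonly items: LineItem[];
  constructor(items: LineItem[]) {
    this.items = items;
  }
  total(): number {
    let sum = 0;
    for (const { unitCents, qty } of this.items) sum += unitCents * qty;
    return sum;
  }
}

const items: LineItem[] = [
  { unitCents: 250, qty: 2 },
  { unitCents: 100, qty: 3 },
];

type Cart = { total(): number; helperCalls: number };

// Behavior-focused check: asserts what total() returns.
const behaviorHolds = (cart: Cart) => cart.total() === 800;

// Implementation-focused check: asserts how total() got there.
const brittleHolds = (cart: Cart) => {
  cart.total();
  return cart.helperCalls === items.length;
};

console.log(behaviorHolds(new CartV1(items)), behaviorHolds(new CartV2(items))); // true true
console.log(brittleHolds(new CartV1(items)), brittleHolds(new CartV2(items))); // true false
```

The refactor changes nothing a caller can observe, yet the implementation-focused check fails on it.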

The second most destructive pattern is mocking everything. Teams that mock their database, their external services, their cache, and their message queue in unit tests end up with a test suite that passes perfectly even when none of the real integrations work. Mocks are for isolating the specific unit under test -- not for avoiding the complexity of real integrations. Real integrations belong in integration tests.
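Here is a minimal sketch of a mock hiding the real failure mode. The store below is an in-memory stand-in for a database (a real integration test would hit the actual database), and its bug is hypothetical: emails are normalized on write but not on lookup, the kind of defect that lives in the integration rather than in the calling logic.

```typescript
interface UserStore {
  save(email: string, name: string): void;
  findByEmail(email: string): string | undefined;
}

// Stand-in for the real store, with a bug at the boundary.
class InMemoryStore implements UserStore {
  private rows = new Map<string, string>();
  save(email: string, name: string): void {
    this.rows.set(email.toLowerCase(), name); // normalized on write
  }
  findByEmail(email: string): string | undefined {
    return this.rows.get(email); // bug: lookup is not normalized
  }
}

// The logic a unit test would target.
function greet(store: UserStore, email: string): string {
  const name = store.findByEmail(email);
  return name ? `Welcome back, ${name}` : 'Welcome, new user';
}

// A mock that answers whatever the test expects, so the bug is invisible.
const mockStore: UserStore = { save: () => {}, findByEmail: () => 'Ada' };

const real = new InMemoryStore();
real.save('Ada@Example.com', 'Ada');

console.log(greet(mockStore, 'Ada@Example.com')); // Welcome back, Ada
console.log(greet(real, 'Ada@Example.com')); // Welcome, new user
```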

Integration test anti-patterns

The dominant integration test anti-pattern is running them too slowly due to poor database state management. Integration tests that reset the full database schema between every test, rather than using transactions with rollback, add seconds per test and become the bottleneck that teams start skipping. Use transactional test isolation where possible.
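The transactional pattern can be sketched with an in-memory stand-in for a connection. FakeConnection and withRollback are hypothetical names; a real suite would issue BEGIN and ROLLBACK on an actual database connection, and the code under test must share the connection that opened the transaction.

```typescript
// In-memory stand-in for a connection that supports one open transaction.
class FakeConnection {
  private committed = new Map<string, string>();
  private pending: Map<string, string> | null = null;

  begin(): void {
    // Copy-on-begin keeps the sketch short; real databases do this far more cheaply.
    this.pending = new Map(this.committed);
  }
  rollback(): void {
    this.pending = null; // discard everything written since begin()
  }
  insert(key: string, value: string): void {
    (this.pending ?? this.committed).set(key, value);
  }
  get(key: string): string | undefined {
    return (this.pending ?? this.committed).get(key);
  }
}

// Wrap each test body in a transaction that is always rolled back,
// instead of dropping and recreating the schema between tests.
function withRollback(conn: FakeConnection, body: () => void): void {
  conn.begin();
  try {
    body();
  } finally {
    conn.rollback();
  }
}

const conn = new FakeConnection();
withRollback(conn, () => {
  conn.insert('ada@example.com', 'Ada');
  console.log(conn.get('ada@example.com')); // Ada
});
console.log(conn.get('ada@example.com')); // undefined
```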

The second is testing at the integration layer what only E2E can verify. An integration test between your frontend API and your backend service tells you the contract is honored. It does not tell you that the frontend actually uses the contract correctly. Don't expect integration tests to catch frontend rendering bugs.

E2E test anti-patterns

The most costly E2E anti-pattern is testing too many scenarios end-to-end. Every additional E2E test adds maintenance burden. A flaky E2E test -- one that sometimes passes and sometimes fails with no code change -- is a trust destroyer. Teams start ignoring E2E failures because they assume it is flakiness, and then a real bug ships to production hidden in the noise.
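One way to keep real failures from hiding in that noise is to rerun a failing test against the same commit before dismissing it. A minimal sketch of the classification, applying the definition above (mixed results with no code change); the verdict names are illustrative.

```typescript
type RunVerdict = 'passing' | 'failing' | 'flaky';

// `results` are pass/fail outcomes of the same test on the same commit.
function classifyRuns(results: boolean[]): RunVerdict {
  if (results.length === 0) throw new Error('need at least one run');
  if (results.every(Boolean)) return 'passing';
  if (results.every((passed) => !passed)) return 'failing';
  return 'flaky';
}

console.log(classifyRuns([false, false, false])); // failing
console.log(classifyRuns([true, false, true])); // flaky
```

A test that fails on every rerun is a real failure, not flakiness, and should block the merge.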

Reserve E2E tests for user journeys where the full assembled system is the only way to verify correctness. The login flow. The payment flow. The core value delivery path. Everything else should be covered at a lower layer.

The second E2E anti-pattern is neglecting database state setup. E2E tests that depend on pre-existing data in a test environment are fragile because that data changes. Tests should set up the exact database state they require before executing -- either through API calls that create the necessary records or through database seeding scripts that run before each test. This is one of the harder operational problems in E2E test infrastructure, and it is the reason many teams avoid expanding their E2E coverage even when they know they need to.
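A minimal sketch of per-test setup through an API: each test creates exactly the user it needs, with a unique email, instead of relying on a shared record another test may have changed. TestDataApi and seedUser are hypothetical; the in-memory class stands in for calls to your application's API or a seeding script.

```typescript
interface NewTestUser {
  email: string;
  password: string;
}

interface TestDataApi {
  createUser(input: NewTestUser): { id: number; email: string };
}

// Stand-in for the real API; rejects duplicates like a unique index would.
class InMemoryTestDataApi implements TestDataApi {
  private nextId = 1;
  readonly users = new Map<string, number>();
  createUser(input: NewTestUser) {
    if (this.users.has(input.email)) throw new Error(`duplicate email ${input.email}`);
    const id = this.nextId++;
    this.users.set(input.email, id);
    return { id, email: input.email };
  }
}

const TEST_PASSWORD = 'test-password';
let fixtureCounter = 0;

// Timestamp plus counter keeps emails unique across runs and within one run.
function seedUser(api: TestDataApi, label: string) {
  fixtureCounter += 1;
  const email = `${label}+${Date.now()}-${fixtureCounter}@example.test`;
  const created = api.createUser({ email, password: TEST_PASSWORD });
  return { ...created, password: TEST_PASSWORD };
}
```

The test then logs in with the returned email and password rather than with credentials hard-coded into the environment.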

E2E vs Integration Testing: The Boundary Decision

Because the boundary between integration and E2E is the most frequently confused, it is worth being explicit about the decision rule.

Write an integration test when the question is: "do these two specific components communicate correctly?" The test should be narrow, targeted at a specific contract, and fast enough to run in CI on every commit.

Write an E2E test when the question is: "can a user actually do this thing in our running application?" The test should exercise the full stack -- browser, frontend, API, database, any relevant third-party services -- and the pass condition is a user-observable outcome.

The confusion arises because some behaviors feel user-facing but are actually contractual. Checking that an API endpoint returns the expected JSON response is an integration test: you are testing the contract, not the user experience. A user clicking "submit" on a form and seeing a success message is an E2E test: you are testing the assembled experience.
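The contract side of that boundary can be sketched as a shape check on the response payload. The endpoint and the UserDto fields are hypothetical; an integration or contract test would assert this against the real service's response, and it still says nothing about how the frontend renders the data.

```typescript
// Hypothetical payload contract for a "get user" endpoint.
interface UserDto {
  id: string;
  email: string;
  createdAt: string; // ISO 8601 timestamp
}

function isUserDto(value: unknown): value is UserDto {
  if (typeof value !== 'object' || value === null) return false;
  const { id, email, createdAt } = value as Record<string, unknown>;
  return (
    typeof id === 'string' &&
    typeof email === 'string' &&
    typeof createdAt === 'string' &&
    !Number.isNaN(Date.parse(createdAt))
  );
}

const payload: unknown = JSON.parse(
  '{"id":"u_1","email":"ada@example.com","createdAt":"2024-01-01T00:00:00Z"}',
);
console.log(isUserDto(payload)); // true
```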

For a deeper treatment of the integration vs E2E boundary, the integration vs E2E testing deep dive covers the distinction with worked examples.

| Scenario | Correct Test Level | Why |
|---|---|---|
| Pricing calculation logic | Unit | Pure function, no external deps |
| User data saved to database correctly | Integration | Real DB needed; tests ORM mapping |
| Two microservices exchange correct payloads | Integration | Contract verification, not user flow |
| User can sign up and access dashboard | E2E | Multi-step user flow, full stack |
| Email validation rejects bad format | Unit | Isolated, deterministic logic |
| Checkout flow completes and sends confirmation | E2E | User-observable, crosses multiple services |
| Auth middleware correctly blocks unauthenticated requests | Integration | Tests middleware wiring, not full user journey |
| Search returns relevant results after indexing | E2E | Multi-step, depends on full pipeline |

The Testing Pyramid vs. the Testing Trophy

The testing pyramid is not the only allocation model. Kent C. Dodds popularized the Testing Trophy, which reshapes the pyramid for frontend applications: static analysis at the base, a thin unit layer, a large integration layer, and a small E2E cap. The core insight is that for component-driven frontend codebases, integration tests provide the best confidence-per-dollar because they test components the way users actually interact with them.

The Trophy and the Pyramid are not in conflict -- they apply to different architectures. The Pyramid fits backend services, microservice systems, and API-heavy applications where isolated business logic is the dominant code pattern. The Trophy fits frontend applications built with component frameworks like React, Vue, or Svelte, where the boundary between "unit" and "integration" is blurry and testing components in isolation often means mocking away the behavior you care about.

What is interesting is that the AI-era shift described above actually moves the Pyramid closer to the Trophy's recommendations. When AI-generated code increases the failure rate at integration and E2E levels, the optimal Pyramid allocation expands the middle and top layers -- which is exactly what the Trophy prescribes for frontend code. Teams that use AI coding tools across both frontend and backend may find that a Trophy-like allocation (heavy integration, targeted E2E) works better than the traditional 70/20/10 regardless of architecture.

Recommended Tools Per Testing Level

| Layer | Popular Tools | When to Choose |
|---|---|---|
| Unit | Jest, Vitest, JUnit, pytest, Go's built-in testing package | Default for all logic-heavy code. Choose Vitest for speed in TypeScript projects. |
| Integration | Testcontainers, Supertest, Pact, WireMock | When testing real boundaries. Use Testcontainers for database tests, Pact for API contract tests. |
| E2E (hand-written) | Playwright, Cypress | Hand-written E2E tests for critical flows. Playwright for cross-browser coverage, Cypress for component-driven E2E. |
| E2E (AI-generated) | Autonoma | AI-generated E2E coverage that scales with your codebase. Reads your routes, generates tests, maintains them as code evolves. Open source and self-hostable, with a free tier. |

Building a Testing Strategy Around the Framework

The framework above gives you the decision rule for any individual test. A testing strategy is how you apply it at the team level, consistently, under the pressure of shipping velocity.

Three principles hold up across the teams that get this right.

First, write tests at the lowest level that gives you the confidence you need. If a unit test will catch the bug you care about, do not write an integration test. If an integration test will catch it, do not write an E2E test. Unnecessary elevation adds cost and fragility without adding confidence.

Second, treat E2E test coverage of AI-generated code as a first-class concern. When your team ships a user-facing feature built with an AI coding assistant, budget for E2E test coverage of that flow as part of the definition of done -- not as a later-sprint cleanup task. The incident pattern is too consistent to treat it as optional.

Third, address the software testing basics gap on your team before debating frameworks. Framework debates are often a proxy for uncertainty about what to test at each level. Teams that can articulate the decision framework ship faster and argue less about tooling.

The AI era is making E2E test coverage more valuable and harder to write manually at the same time. The teams that get this right are the ones that treat the testing strategy as a living document -- one that adapts as their ratio of AI-generated code changes, and one that automates the E2E layer rather than staffing it.

The testing pyramid is not a rigid prescription. It is a cost-benefit heuristic. The right allocation for your team depends on your codebase, your deployment cadence, your risk tolerance, and increasingly, how much of your code is AI-generated. The framework in this article gives you the tools to make that decision deliberately rather than by convention.

The testing pyramid is a model for allocating software tests across three levels: unit tests at the base, integration tests in the middle, and E2E (end-to-end) tests at the top. Unit tests are fast, isolated, and numerous. Integration tests verify that components work together. E2E tests simulate real user flows through the full application. The pyramid shape reflects the recommended ratio: more unit tests than integration, more integration than E2E.

Use unit tests for isolated logic with no meaningful external dependencies -- pure functions, calculations, validation rules. Use integration tests when you need to verify that two or more real components communicate correctly, such as a service and its database or two microservices. Use E2E tests for user-facing flows that cross the full stack and where the pass condition is a user-observable outcome -- checkout flows, authentication sequences, core value delivery paths.

The classic recommendation is 70% unit / 20% integration / 10% E2E. Teams using AI coding tools heavily are finding that shifting toward 60/25/15 or even 50/30/20 produces better incident rates, because AI-generated code passes unit tests but tends to produce more integration-level and user-flow-level failures. The right ratio depends on your codebase, risk tolerance, and how much of your code is AI-generated.

AI coding assistants generate structurally correct code that handles the specified happy-path behavior well. The edge case handling and cross-component behavior are harder to predict, and developers reviewing AI-generated code have a shallower mental model of it than a human author would. This means AI-generated code produces more user-flow failures relative to its unit test coverage. Teams need more integration and E2E tests to catch the failure patterns that AI code introduces.

Integration tests verify that specific components communicate correctly -- the contract between a service and its database, or between two microservices. E2E tests verify that a user can actually complete a flow through the running application. Integration tests are faster and narrower. E2E tests are slower, cover the full stack, and the pass condition is a user-observable outcome rather than a technical contract. For a detailed comparison, see the integration vs E2E testing guide.

Unit test anti-patterns: testing implementation details instead of behavior (breaks on every refactor), and mocking so much that real integration failures are hidden. Integration test anti-patterns: slow database resets between tests (use transactional isolation), and expecting integration tests to catch frontend rendering bugs. E2E test anti-patterns: testing too many scenarios end-to-end (creates flakiness), and not managing database state per test (creates order-dependent failures).

In the traditional model, E2E tests run nightly or on release candidates because they are slow. In the AI era, the recommendation is to run E2E tests for critical user flows on every PR merge, with the full E2E suite running nightly. Unit tests should run on every commit, and integration tests should run on every commit or PR. The key principle: the higher the test layer, the more expensive it is to run frequently -- but the cost of missing an E2E failure in production is almost always higher than the cost of running the test.

The core pyramid logic still applies -- unit tests are cheapest, E2E tests are most confident, and you want to catch bugs at the cheapest level possible. What changes in 2026 is the optimal allocation and the urgency of E2E coverage. AI coding tools ship user flows faster than manual E2E test authors can keep up, making automated E2E test generation a strategic priority rather than a nice-to-have. The decision framework in this article covers when to write each test type given this new context.