Quick summary: Autonoma is the open-source alternative to Functionize. Unlike Functionize's proprietary ML engine ($30K-80K/year, opaque pricing, no self-hosting), Autonoma generates tests automatically from your codebase using AI agents: no NLP descriptions needed. Full source code on GitHub (BSL 1.1), self-hosting, vision-based self-healing, transparent AI you can audit, no vendor lock-in. Free tier: 100K credits. Cloud: $499/month. Self-hosted: no ongoing costs.

Functionize markets itself as the ML-powered future of test automation. Their pitch sounds compelling: describe what you want to test in plain English, and their machine learning models create and maintain the tests for you. But once you look past the marketing, serious problems emerge. The ML is a black box you cannot inspect. The pricing requires enterprise contracts starting at $30K/year. There is no self-hosting. And the "ML-driven test creation" still requires you to describe every test scenario manually.

For teams that need transparent AI, infrastructure control, or pricing that does not require a procurement committee, Functionize's model falls apart. Autonoma is the open-source alternative that solves these problems. Transparent AI on GitHub, self-hosting on your infrastructure or our cloud, test generation directly from your codebase with no human descriptions required, and pricing that starts free. This guide covers where Functionize falls short, how Autonoma approaches testing differently, and how to switch.

Where Functionize Falls Short

Three fundamental problems push engineering teams away from Functionize and toward open-source alternatives.

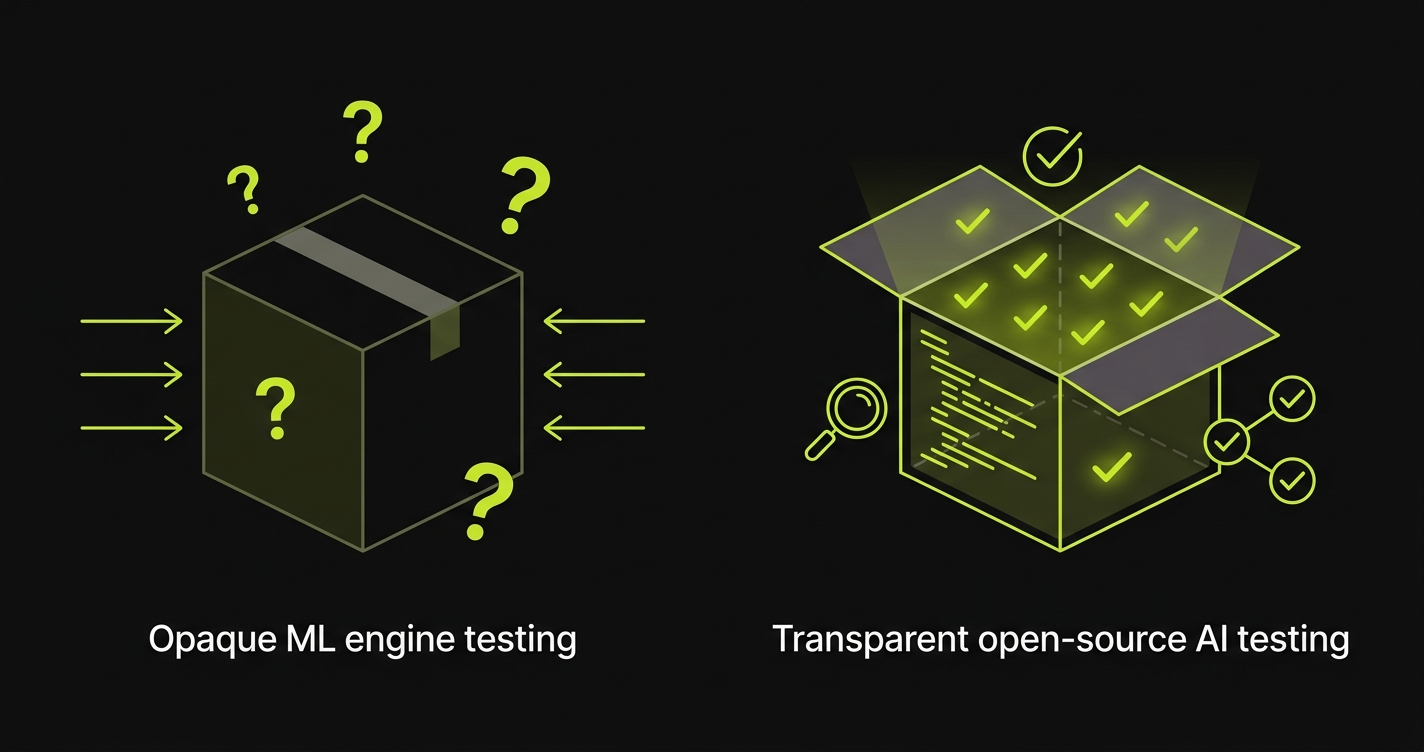

Black-Box ML You Cannot Audit

Functionize's core value proposition is ML-driven testing. Their models analyze your application, create tests from natural language descriptions, and self-heal when the UI changes. The problem is that none of this is transparent.

You cannot see how their ML models make decisions. When a test fails, you cannot determine whether the failure is a real bug, an ML misinterpretation, or a model drift issue. When self-healing kicks in, you cannot verify what changed or why. The entire testing logic lives inside proprietary ML models running on Functionize's servers, and you have zero visibility into what those models are actually doing.

For engineering teams, this opacity creates real trust problems. QA engineers need to understand why a test passed or failed. When the answer is "the ML decided," debugging becomes guesswork. One engineering lead described it this way: "We spent more time investigating whether Functionize's ML made a mistake than we spent investigating actual bugs. The black box created more work, not less."

This opacity also creates compliance issues. Teams building healthcare, financial, or government applications often need to audit their testing infrastructure. Audits under frameworks such as HIPAA, PCI DSS, and SOC 2 commonly ask for evidence of how software is tested and how test data is handled. When your testing logic is locked inside proprietary ML models you cannot inspect, auditors have legitimate concerns. Functionize cannot provide source code for review, model documentation for audit, or self-hosting for data sovereignty.

NLP Test Creation Still Requires Manual Descriptions

Functionize advertises "ML-driven test creation from NLP." In practice, this means you write natural language descriptions of what to test, and Functionize's ML interprets those descriptions to generate test steps. This sounds automated, but the bottleneck has not actually moved.

Someone still needs to identify every test scenario. Someone still needs to write a description for each flow. Someone still needs to review the ML's interpretation to ensure it understood correctly. The ML eliminates the coding step, but not the thinking, specification, or validation steps. Those are the parts that consume most of the effort in test creation.

Consider a team with a complex checkout flow. With Functionize, a QA engineer writes: "Test the checkout flow for a logged-in user with a saved credit card applying a discount coupon." Functionize's ML then interprets this and generates steps. But the QA engineer still had to know this scenario matters, write the description clearly enough for the ML to parse, and verify the generated steps match intent. If the checkout flow changes, someone needs to update the description or verify the ML adapted correctly.

The promise of "AI creates your tests" obscures the reality: you are still the test designer. You just switched from writing code to writing descriptions. The cognitive load has not decreased; only the output format changed.

Enterprise Pricing and Vendor Lock-In

Functionize does not publish pricing on their website. Every potential customer goes through a sales cycle: demo request, discovery call, proposal, negotiation, and contract. Industry reports and customer feedback place annual contracts in the $30,000-80,000 range depending on team size, usage, and negotiation leverage.

This pricing model creates several problems. First, there is no way to evaluate the platform without committing to a sales process. You cannot start with a free tier, experiment with a small project, and scale up. Second, contracts are typically annual, meaning you commit before you fully understand the platform's limitations. Third, the opaque pricing makes it impossible to accurately forecast testing costs or compare alternatives apples-to-apples.

The vendor lock-in compounds the pricing problem. Tests created in Functionize exist only in Functionize. The NLP descriptions, the ML-generated test steps, the execution history: all of it is locked in their proprietary platform. If you decide to leave after a year, you are starting from scratch. There is no export, no migration tool, no way to take your testing investment with you.

One team told us their experience: "We signed a $45K annual contract with Functionize. After 8 months, we realized the ML was not delivering the accuracy we expected. But we were locked in for 4 more months, and our entire test suite existed only in their platform. Leaving meant rebuilding everything."

Autonoma: The Open Source Alternative to Functionize

Autonoma is an open-source, AI-native testing platform that addresses every problem above with a fundamentally different approach.

Transparent AI, Not a Black Box

Full source code on GitHub. Licensed under BSL 1.1 (converts to Apache 2.0 in 2028). Every decision the AI makes is traceable. Every test generation step is auditable. Every self-healing action is logged with reasoning.

When Autonoma's AI generates a test, you can see exactly how it analyzed your codebase, why it chose specific user flows, and what verification steps it added. When self-healing adapts a test to a UI change, you can inspect the before-and-after and understand the reasoning. There is no black box. The AI is a tool you control, not an oracle you trust blindly.
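To picture what an inspectable self-healing action could look like, here is a minimal sketch of an audit entry. The `HealingRecord` shape and the `describeHealing` function are hypothetical, invented for illustration; they are not Autonoma's actual log schema, and the sample values are made up.

```typescript
// Hypothetical shape of a self-healing audit entry.
// This is an illustration only, not Autonoma's real log format.
interface HealingRecord {
  testName: string;
  step: string;
  before: string;    // what the step targeted before the UI change
  after: string;     // what the step targets after healing
  reasoning: string; // why the new target was judged equivalent
  timestamp: string; // ISO 8601
}

// Render one entry as a single reviewable line.
function describeHealing(r: HealingRecord): string {
  return `[${r.timestamp}] ${r.testName} / ${r.step}: "${r.before}" -> "${r.after}" (${r.reasoning})`;
}

// Sample entry with made-up values.
const sample: HealingRecord = {
  testName: "checkout",
  step: "click submit",
  before: "Pay now button",
  after: "Complete purchase button",
  reasoning: "label changed, position and role unchanged",
  timestamp: "2025-01-15T10:00:00Z",
};

console.log(describeHealing(sample));
```

The point of a record like this is that a reviewer can read the before state, the after state, and the stated reason in one place, then accept or reject the change.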

This transparency directly solves the compliance problem. Need to audit testing infrastructure for HIPAA or SOC 2? Read the source code. Need to understand how the AI makes testing decisions? Inspect the logic. Need to verify how credentials and test data are handled? Trace the execution path. None of this is possible with Functionize's proprietary ML.

True Autonomous Testing: No Descriptions Needed

This is the fundamental difference from Functionize. Functionize requires you to describe what to test. Autonoma reads your code and figures it out.

How it works: You connect your GitHub repo, and Autonoma's test-planner-plugin analyzes your routes, components, and user flows to build a comprehensive knowledge base of your application. AI agents then generate E2E test cases based on your actual code structure. No natural language descriptions. No manual scenario identification. No human specification of what to test.

The AI understands your application by reading the code, not by reading human descriptions of the code. It finds authentication flows by tracing auth middleware. It identifies checkout paths by following route definitions and form components. It discovers edge cases by analyzing conditional logic and error handling. The coverage comes from code analysis, not human memory.
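As a rough illustration of deriving test scenarios from code structure rather than from written descriptions, consider the sketch below. It is not Autonoma's implementation: the `RouteInfo` shape, the `planTests` function, and the rules inside it are hypothetical, chosen only to show how route metadata could map to candidate E2E scenarios.

```typescript
// Hypothetical sketch: derive candidate E2E scenarios from route metadata.
// None of these types or rules come from Autonoma's source code.
interface RouteInfo {
  path: string;          // e.g. "/checkout"
  requiresAuth: boolean; // true if auth middleware guards the route
  hasForm: boolean;      // true if the page renders a form component
}

interface TestCase {
  route: string;
  scenario: string;
}

function planTests(routes: RouteInfo[]): TestCase[] {
  const cases: TestCase[] = [];
  for (const r of routes) {
    // Every route gets a basic "page loads" check.
    cases.push({ route: r.path, scenario: "loads successfully" });
    if (r.requiresAuth) {
      // Guarded routes imply an unauthenticated-access edge case.
      cases.push({ route: r.path, scenario: "redirects anonymous users to login" });
    }
    if (r.hasForm) {
      // Forms imply a happy path and a validation-error path.
      cases.push({ route: r.path, scenario: "submits valid input" });
      cases.push({ route: r.path, scenario: "rejects invalid input" });
    }
  }
  return cases;
}

const testPlan = planTests([
  { path: "/login", requiresAuth: false, hasForm: true },
  { path: "/checkout", requiresAuth: true, hasForm: true },
]);

console.log(testPlan.map((c) => `${c.route}: ${c.scenario}`).join("\n"));
```

With these two sample routes, the sketch yields seven candidate scenarios without anyone writing a description. A real analyzer would need far richer signals (conditional logic, error handling, component trees) than three fields per route.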

Tests execute using AI vision models that see your application like a human user. No CSS selectors. No XPath locators. No DOM dependencies. When your designer changes a button class from btn-submit to cta-primary, Functionize's ML might adapt (or might not: you cannot verify). Autonoma's vision model does not even notice the change because it identifies the button by its visual appearance and context, not by its CSS class.
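The class-rename example can be made concrete with a small simulation. This sketch does not reproduce a vision model; a lookup by role and visible label stands in for "identify the element by what the user sees". The `UiElement` type and both lookup functions are hypothetical.

```typescript
// Simplified stand-in for a rendered element; not a real DOM or vision model.
interface UiElement {
  role: string;     // e.g. "button"
  label: string;    // text the user sees
  cssClass: string; // implementation detail the user never sees
}

// Selector-style lookup: depends on the CSS class.
function findByClass(ui: UiElement[], cssClass: string): UiElement | undefined {
  for (const el of ui) {
    if (el.cssClass === cssClass) return el;
  }
  return undefined;
}

// User-facing lookup: depends only on role and visible label.
function findByLabel(ui: UiElement[], role: string, label: string): UiElement | undefined {
  for (const el of ui) {
    if (el.role === role && el.label === label) return el;
  }
  return undefined;
}

const pageBefore: UiElement[] = [{ role: "button", label: "Submit", cssClass: "btn-submit" }];
// The designer renames the class; nothing the user sees changes.
const pageAfter: UiElement[] = [{ role: "button", label: "Submit", cssClass: "cta-primary" }];

console.log(findByClass(pageBefore, "btn-submit") !== undefined);        // true
console.log(findByClass(pageAfter, "btn-submit") !== undefined);         // false: selector broke
console.log(findByLabel(pageAfter, "button", "Submit") !== undefined);   // true: unaffected
```

The selector-style lookup fails after the rename because it was keyed to something invisible to users, while the user-facing lookup is untouched by the change.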

This means the engineering hours currently spent writing test descriptions for Functionize and checking the ML's interpretation of them go away. Your QA team shifts from specifying tests to reviewing AI-generated test plans and analyzing results: higher-leverage work that improves product quality instead of feeding an ML engine.

Open Source and Self-Hosting

Run Autonoma on your infrastructure: AWS (ECS, EKS, or EC2), GCP (GKE or Compute Engine), Azure (AKS or VMs), or your own data center. When you self-host, your data never leaves your network. Tests run in your VPC. Application credentials stay on your servers. Source code and test data are never exposed to external systems.

The technology stack uses standard open source components: TypeScript and Node.js 24 for the runtime, Playwright for web testing, Appium for mobile testing, PostgreSQL for data storage, and Kubernetes for orchestration. No proprietary ML runtimes, no black-box model servers, no vendor-specific dependencies.

Self-hosting is free: no platform fees, no per-user charges, no per-model charges. You pay only for the cloud infrastructure you provision. For teams already running cloud infrastructure, that works out to roughly 90-96% less than Functionize's three-year total cost of ownership, based on the estimates in the cost section below.

Unlimited Parallel Execution

Every plan (free tier, cloud, and self-hosted) supports unlimited parallel execution. Functionize does not publicly document their parallel limits, but enterprise platforms typically gate parallelism by contract tier. With Autonoma, parallel capacity is limited only by the compute resources you allocate. Add more tests, spawn more workers. No sales calls, no contract amendments, no artificial limits.
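To show why parallel capacity scales with the workers you allocate, here is a minimal sketch of round-robin test sharding and a wall-clock estimate. The function names and the two-minutes-per-test figure are assumptions for illustration; this is not how Autonoma's scheduler is necessarily implemented.

```typescript
// Round-robin sharding: split a list of tests across N workers.
function shardTests(tests: string[], workerCount: number): string[][] {
  if (workerCount < 1 || Math.floor(workerCount) !== workerCount) {
    throw new Error("workerCount must be a positive integer");
  }
  const shards: string[][] = [];
  for (let w = 0; w < workerCount; w++) shards.push([]);
  tests.forEach((test, i) => shards[i % workerCount].push(test));
  return shards;
}

// Wall-clock estimate, assuming equal test durations:
// the largest shard determines total run time.
function estimateMinutes(testCount: number, workerCount: number, minutesPerTest: number): number {
  return Math.ceil(testCount / workerCount) * minutesPerTest;
}

const shards = shardTests(["t0", "t1", "t2", "t3", "t4"], 2);
console.log(shards); // [["t0", "t2", "t4"], ["t1", "t3"]]

// Assumed figures: 120 tests at 2 minutes each.
console.log(estimateMinutes(120, 4, 2));  // 60
console.log(estimateMinutes(120, 12, 2)); // 20
```

Tripling the workers in this example cuts the estimated run from 60 to 20 minutes, which is the trade the paragraph describes: more compute, shorter feedback loop.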

No Vendor Lock-In

Tests are generated from your codebase, not stored in a proprietary format. There are no Functionize-specific NLP descriptions to maintain, no proprietary ML model dependencies, no platform-locked test artifacts. Fork the project if needed. Switch cloud providers or self-host anytime. Your testing capability is never held hostage by a vendor relationship.

Functionize vs Autonoma: Feature Comparison

| Feature | Functionize | Autonoma |
|---|---|---|
| Open Source | ❌ Proprietary closed source | ✅ BSL 1.1 on GitHub (Apache 2.0 in 2028) |
| Self-Hosting | ❌ Cloud only | ✅ Self-host anywhere |
| AI Transparency | ❌ Black-box ML, no auditability | ✅ Open source AI, fully auditable |
| Test Creation | ⚠️ NLP descriptions required (human writes specs) | ✅ Generates from codebase automatically (no descriptions) |
| Self-Healing | ✅ ML-based (opaque) | ✅ Vision-based (transparent, auditable) |
| Test Maintenance | ⚠️ Low (ML adapts, but you verify descriptions) | ✅ Zero (AI handles full lifecycle) |
| Vendor Lock-In | ⚠️ High (tests locked in proprietary format) | ✅ None (tests generated from code, fork codebase) |
| Pricing Transparency | ❌ Opaque (enterprise sales required) | ✅ Public pricing, free tier available |
| Starting Price | ~$30,000/year (enterprise contract) | Free (100K credits) |
| Cloud Price | $30K-80K/year | $499/month ($6K/year) |
| Self-Hosted Cost | Not available | Infrastructure only (no platform fees) |
| Source Code Access | ❌ Proprietary, no access | ✅ Full source code on GitHub |
| Data Sovereignty | ❌ Data on Functionize servers | ✅ Data stays on your infrastructure |
| Contract Required | Yes (annual enterprise contracts) | No (month-to-month, cancel anytime) |
| Free Trial | Demo only (sales process required) | ✅ Free tier, no credit card, 5-minute setup |
| Parallel Execution | ⚠️ Contract-dependent | ✅ Unlimited on all plans |

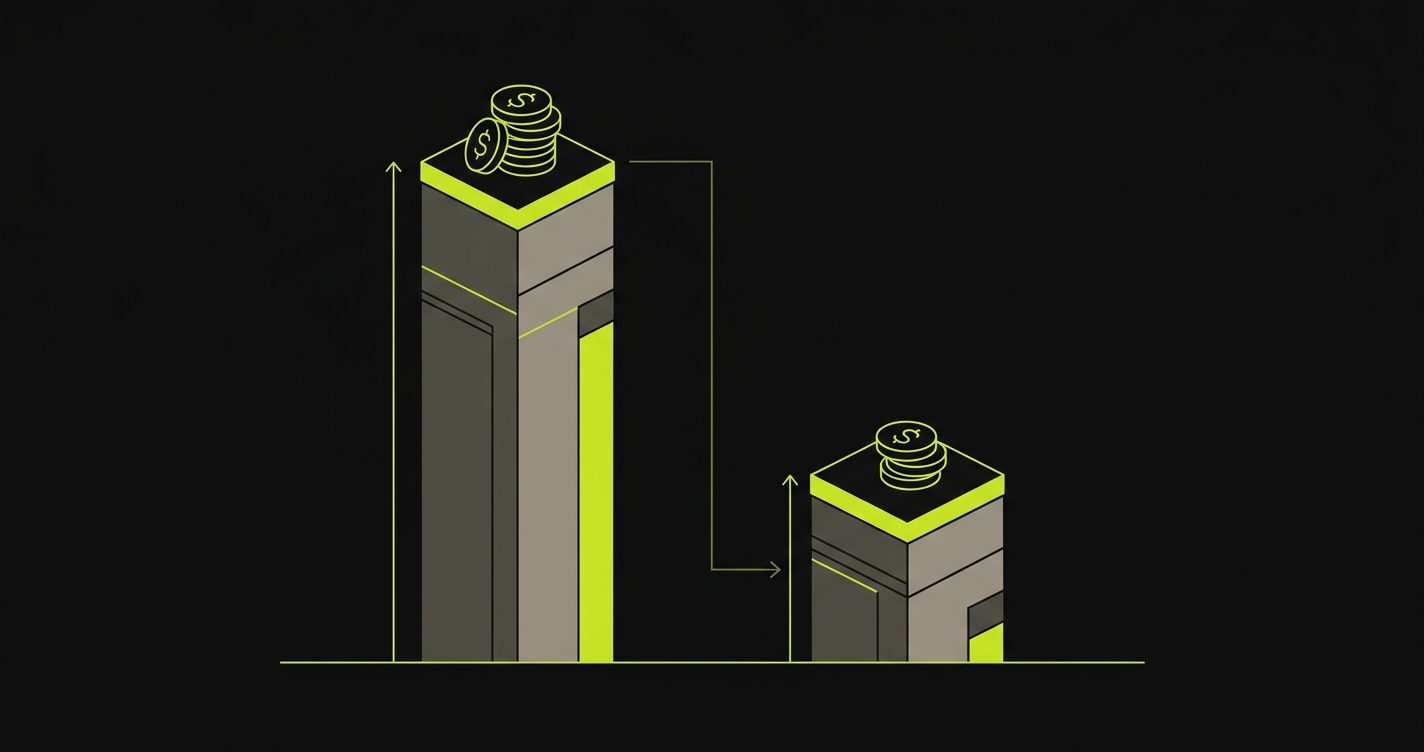

Cost: Open Source vs Proprietary ML

The cost gap between Functionize and Autonoma is one of the widest in the testing tool market.

Functionize charges $30,000-80,000 per year through enterprise contracts. For a mid-sized team, a typical contract lands around $45,000-60,000 annually. Even at the low end, that is $90K over three years. At the high end, $240K. And those costs do not include the engineering hours spent writing NLP descriptions, reviewing ML outputs, and debugging black-box failures.

Add 5-10 hours/month of description writing, ML output review, and debugging at $100-150/hour, and the hidden labor cost runs $6,000-18,000 per year. Over three years, total cost of ownership with Functionize reaches $108K-294K for a mid-sized team.

Autonoma cloud is $499/month ($6K/year, $18K over three years) with zero description writing and zero manual test maintenance. AI generates tests directly from your codebase. That represents an 83-94% cost reduction compared to Functionize.

Autonoma self-hosted eliminates the platform fee entirely. You pay only for infrastructure: typically $200-400/month depending on your parallel needs. Over three years, roughly $11K total. That is a 90-96% reduction compared to Functionize's total cost of ownership.
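The arithmetic behind these percentages can be reproduced in a few lines. The sketch below uses only the estimates quoted in this section (license range, labor hours and rates, $499/month cloud, and the $300/month midpoint of the self-hosted range); the variable and function names are illustrative, and the inputs are estimates, not measured data.

```typescript
// Three-year cost comparison using the estimates quoted in this section.
interface CostRange {
  low: number;
  high: number;
}

const YEARS = 3;
const MONTHS_PER_YEAR = 12;

// Functionize: annual license plus hidden labor (hours/month x hourly rate).
const licensePerYear: CostRange = { low: 30000, high: 80000 };
const laborHoursPerMonth: CostRange = { low: 5, high: 10 };
const laborRatePerHour: CostRange = { low: 100, high: 150 };

const functionizeTco: CostRange = {
  low: (licensePerYear.low + laborHoursPerMonth.low * laborRatePerHour.low * MONTHS_PER_YEAR) * YEARS,
  high: (licensePerYear.high + laborHoursPerMonth.high * laborRatePerHour.high * MONTHS_PER_YEAR) * YEARS,
};

// Autonoma: cloud plan, and self-hosted at the midpoint of $200-400/month.
const cloudTco = 499 * MONTHS_PER_YEAR * YEARS;
const selfHostedTco = 300 * MONTHS_PER_YEAR * YEARS;

// Percentage saved relative to a baseline, rounded to a whole percent.
function reductionPct(cost: number, baseline: number): number {
  return Math.round((1 - cost / baseline) * 100);
}

console.log(functionizeTco); // { low: 108000, high: 294000 }
console.log(cloudTco, reductionPct(cloudTco, functionizeTco.low), reductionPct(cloudTco, functionizeTco.high)); // 17964 83 94
console.log(selfHostedTco, reductionPct(selfHostedTco, functionizeTco.low), reductionPct(selfHostedTco, functionizeTco.high)); // 10800 90 96
```

Swapping in your own contract price, labor estimate, or infrastructure bill shows how sensitive the percentages are to each input.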

The biggest saving is not the licensing difference alone. It is the elimination of the entire "describe what to test" workflow. Functionize shifted test creation from coding to writing descriptions. Autonoma eliminates the human input step entirely. That is where the real ROI lives.

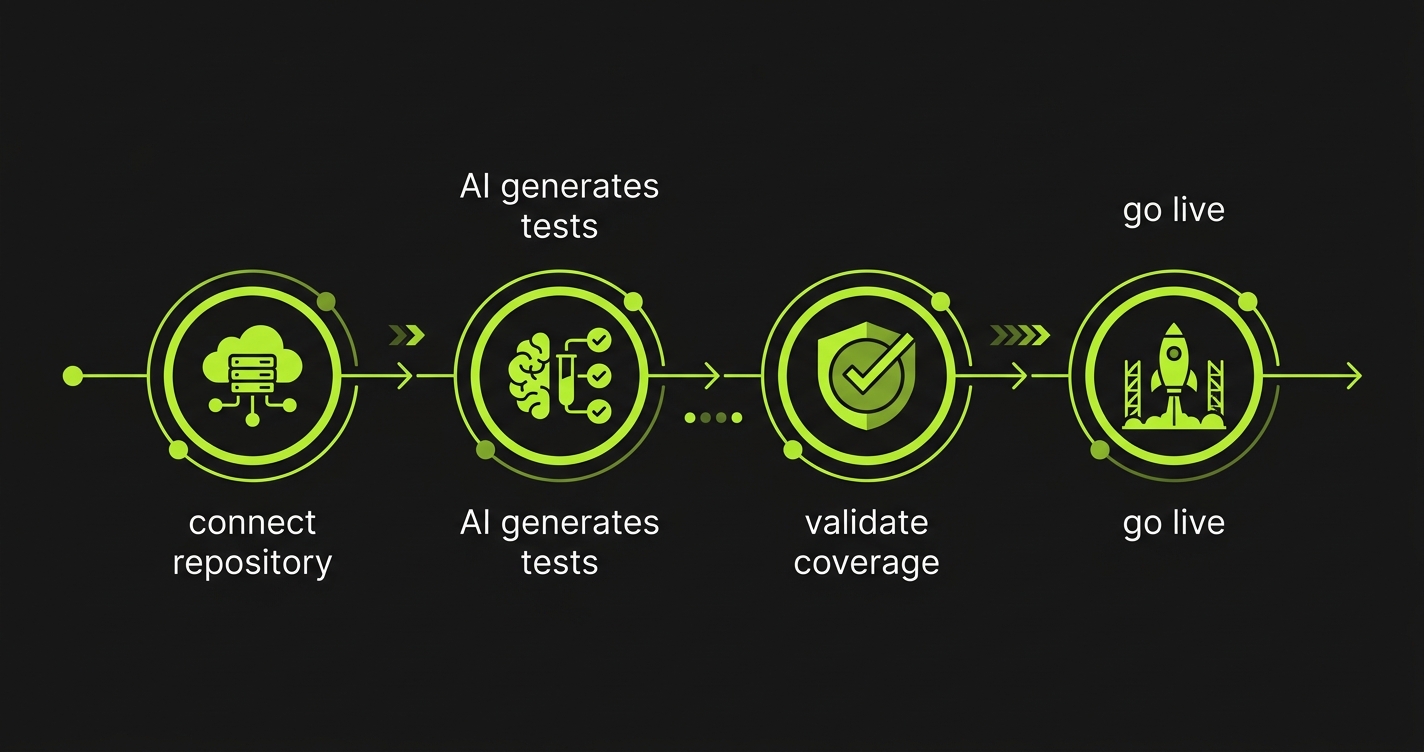

Migrating from Functionize to Autonoma

Migration from Functionize is straightforward because you are not porting tests: you are generating new ones from your codebase. Functionize tests (NLP descriptions and ML-generated steps) are locked in their platform with no export. But that does not matter because Autonoma does not need them.

1. Connect your repo. Sign up for the free tier at getautonoma.com or self-host by cloning the GitHub repo and following the deployment docs. Connect your GitHub repository and let Autonoma's AI analyze your codebase. This takes minutes.

2. AI generates tests. The test-planner-plugin builds a knowledge base of your application (routes, components, user flows, edge cases) and generates comprehensive E2E test cases automatically. No NLP descriptions to write. Start with your critical flows (authentication, checkout, core features) and run them alongside your existing Functionize suite to compare coverage.

3. Validate coverage. Compare AI-generated coverage against your Functionize test suite. Autonoma typically discovers flows and edge cases that teams never described in Functionize because the AI reads code exhaustively, not selectively. Check for gaps, review the AI-generated test plans, and iterate. Most teams achieve equivalent or better coverage within days.

4. Update CI/CD and cut over. Point your pipelines at Autonoma, notify your team, and let your Functionize contract expire. If self-hosting, provision infrastructure during the validation phase. The transition is low-risk because you have validated coverage before cutting over.
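The coverage validation in step 3 amounts to a set comparison between two lists of covered flows. A minimal sketch follows, assuming you have compiled both lists by hand (Functionize offers no export, so its list would come from your own inventory). The flow names and the `missingFrom` helper are hypothetical.

```typescript
// Return the flows in `baseline` that `candidate` does not cover.
function missingFrom(candidate: string[], baseline: string[]): string[] {
  return baseline.filter((flow) => candidate.indexOf(flow) === -1);
}

// Hypothetical flow inventories for illustration.
const functionizeFlows = ["login", "checkout", "password-reset"];
const autonomaFlows = ["login", "checkout", "signup", "checkout-coupon-error"];

// Gaps: flows the old suite covered that the new one does not yet.
const gaps = missingFrom(autonomaFlows, functionizeFlows);
// Extras: flows the new suite covers that were never described before.
const extras = missingFrom(functionizeFlows, autonomaFlows);
// Cut over only when nothing previously covered is missing.
const readyToCutOver = gaps.length === 0;

console.log(gaps);           // ["password-reset"]
console.log(extras);         // ["signup", "checkout-coupon-error"]
console.log(readyToCutOver); // false
```

In this made-up example the new suite still lacks a password-reset test, so the cutover in step 4 should wait until that gap is closed.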

The key difference from migrating between traditional tools: there is nothing to port. Functionize tests cannot be exported, and Autonoma does not need them. You connect your repo, the AI generates coverage from your code, and you validate. Most teams complete the process in 1-2 weeks.

Frequently Asked Questions

Is there an open-source alternative to Functionize?

Yes. Autonoma is an open-source AI testing platform available on GitHub. Unlike Functionize's proprietary ML engine, Autonoma offers a free tier with 100K credits, full self-hosting capabilities, and transparent AI that generates tests directly from your codebase. No enterprise sales call required to get started.

Can I self-host Autonoma?

Yes. Autonoma is fully self-hostable with complete source code on GitHub. You can run it on your infrastructure (AWS, GCP, Azure, on-premise) with zero feature restrictions. Functionize offers no self-hosting option; all test data and ML models run on their servers.

How much does Functionize cost compared to Autonoma?

Functionize typically costs $30,000-80,000 per year with enterprise contracts and opaque pricing. Autonoma offers a free tier with 100K credits, cloud at $499/month ($6K/year), and free self-hosting where you only pay for infrastructure. That represents 83-96% savings over three years compared to Functionize, depending on deployment model.

How does test creation in Autonoma differ from Functionize?

Functionize requires you to describe tests in natural language, then uses proprietary ML to interpret those descriptions. You still provide the intent manually. Autonoma reads your codebase directly (routes, components, user flows) and generates comprehensive tests automatically without any human descriptions. The AI is also fully transparent: open source, auditable, no black box.

Can I migrate from Functionize to Autonoma?

Yes. You don't port Functionize tests (they're locked in the platform). Instead, you connect your repo and Autonoma's AI generates tests from your codebase automatically. Most teams achieve equivalent or better coverage within days because the AI analyzes code exhaustively rather than relying on manually written descriptions.

Does Autonoma offer self-healing tests like Functionize?

Yes. Functionize uses proprietary ML models for self-healing that you cannot inspect or audit. Autonoma uses vision-based AI that is fully open source and auditable. When a test adapts to a UI change, you can see exactly what changed and why. Both approaches reduce maintenance, but only Autonoma gives you transparency into how healing decisions are made.

The Bottom Line

Functionize promises ML-driven testing but delivers a black box. You cannot audit their models, you still write test descriptions manually, pricing starts at $30K/year with opaque enterprise contracts, there is no self-hosting, and your tests are permanently locked in their proprietary platform.

Autonoma solves every one of those problems. Full source code on GitHub (BSL 1.1, Apache 2.0 in 2028). Transparent AI you can inspect and audit. Tests generated directly from your codebase: no NLP descriptions, no manual scenario writing. Self-host on your infrastructure or use our cloud. Unlimited parallels on every plan. No vendor lock-in. Free tier starts at 100K credits, cloud at $499/month, self-hosted at infrastructure cost only. Three-year savings: 83-96% depending on deployment model.

Ready to try open source testing?

Start Free - 100K credits, no credit card, 5-minute setup

View on GitHub - Inspect source code, self-host documentation

Book Demo - See AI test generation from your codebase
