Quick summary: Autonoma is the open-source alternative to Rainforest QA. Instead of crowdsourced human testers executing tests in minutes to hours, Autonoma's AI agents execute in seconds with perfect consistency. Full source code on GitHub (BSL 1.1), self-hosting on your infrastructure, no external testers accessing your app, no vendor lock-in. Free tier: 100K credits. Cloud: $499/month. Self-hosted: no ongoing platform costs.

Rainforest QA built its reputation on an appealing promise: skip the brittle Selenium scripts, define tests in a no-code interface, and let a crowd of human testers execute them for you. No test code to maintain. No flaky selectors. Just humans clicking through your app and reporting what breaks.

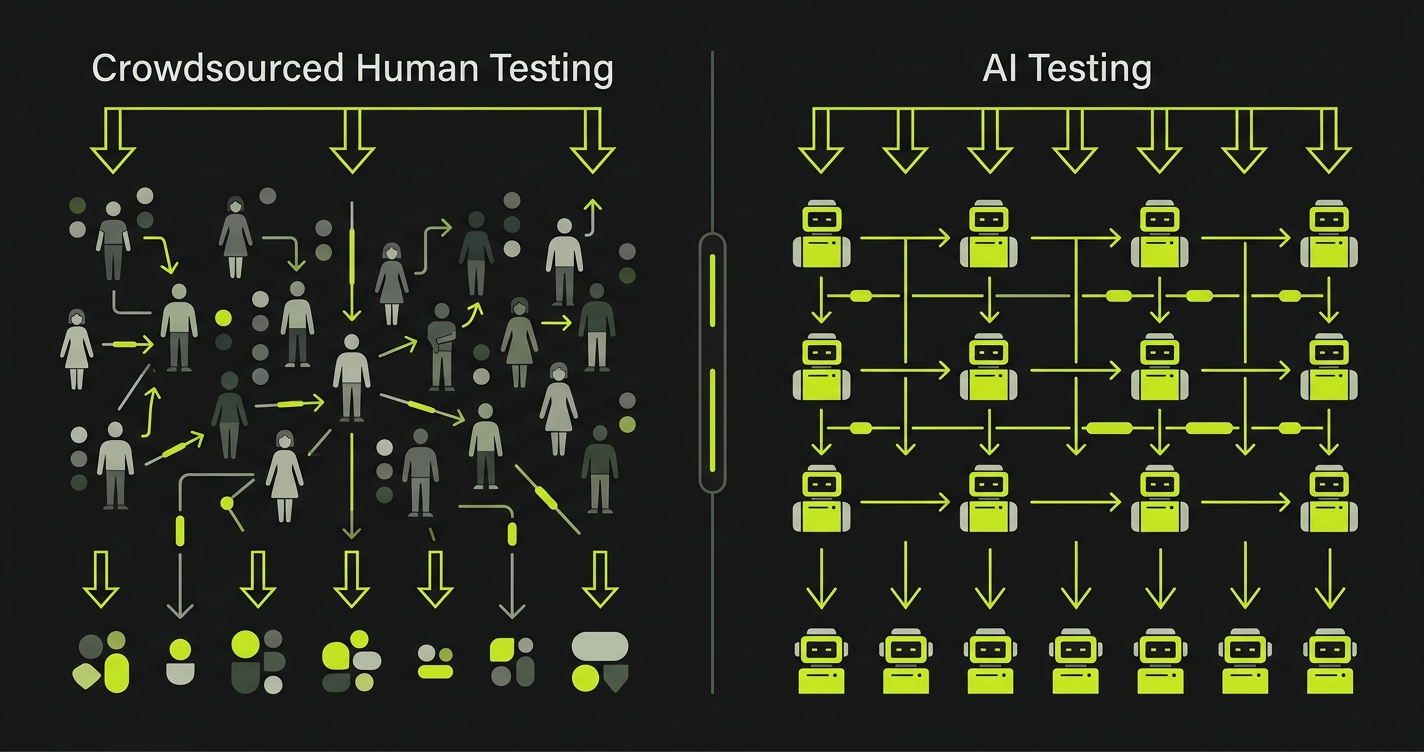

The reality is more complicated. Crowdsourced testers are slow (minutes to hours per run), inconsistent (quality varies from tester to tester), and introduce security risk (strangers accessing your application). Rainforest QA has pivoted multiple times, from pure crowdsourcing to an AI-assisted hybrid, but the fundamental bottleneck remains: humans in the loop executing tests at human speed.

Autonoma removes the human bottleneck entirely. AI agents replace crowdsourced testers. Tests execute in seconds, not minutes. Results are perfectly consistent, run after run. Your application is never accessed by external humans. And it is fully open source: inspect the code, self-host on your infrastructure, fork it if you want. This guide covers where Rainforest QA falls short, how Autonoma solves those problems, and how to migrate.

Where Rainforest QA Falls Short

Three core problems drive engineering teams away from crowdsourced testing models.

Slow Execution: Humans Are the Bottleneck

Rainforest QA's execution model depends on human testers. A tester claims a test, reads the instructions, navigates your application, performs each step, and reports the result. This process takes minutes per test, sometimes 5-10 minutes for complex flows. A suite of 50 tests can take 30-60 minutes or longer, depending on tester availability.

This speed makes Rainforest QA impractical for modern CI/CD workflows. When a developer opens a pull request, they need feedback in minutes, not hours. When your team ships multiple times per day, waiting for human testers to work through a queue means tests are always running behind the code they are supposed to validate.

Rainforest QA has added AI capabilities to speed up execution, but the hybrid model still relies on human verification for many test types. The platform cannot fully escape its crowdsourced architecture. Tests that need human judgment still route to human testers, and those testers still operate at human speed.

One engineering lead told us: "We integrated Rainforest QA into our CI pipeline. Pull requests sat waiting 45 minutes for test results. Developers started merging without waiting. The tests became a formality nobody trusted."

Inconsistent Results: Crowdsourced Quality Varies

When different humans execute the same test, they introduce variability. One tester might wait for an animation to complete before clicking; another might click immediately. One tester might interpret "verify the form is submitted" as checking for a success message; another might check the URL change. This inconsistency creates noise in your test results.

Rainforest QA mitigates this with training and AI-assisted validation, but the underlying problem persists. Human testers have varying levels of attention, different internet speeds, different screen resolutions, and different interpretations of ambiguous instructions. A test that passes with one tester may fail with another, not because of a bug, but because of tester variance.

This inconsistency erodes trust in the test suite. When your team sees a test failure, they have to ask: "Is this a real bug or a tester issue?" That question wastes engineering time and creates a culture of ignoring test failures, which defeats the entire purpose of testing.

Teams also report challenges with tester availability during off-peak hours. If your team deploys at 6 PM and needs test results before going home, tester availability can delay results until the next morning. The crowdsourced model does not guarantee execution speed because it depends on human supply and demand.

Security Risk: External Testers Access Your Application

Rainforest QA's crowdsourced model means that real humans (people you have not hired, vetted, or onboarded) access your application during test execution. They navigate your staging environments, interact with your forms, and potentially see user data, internal tools, or pre-release features.

For teams building healthcare applications (HIPAA), financial platforms (PCI DSS, SOC 2), or government systems (FedRAMP), this is often a non-starter. Compliance frameworks require that access to test environments is controlled and auditable. Having an anonymous crowd of testers access your application creates an audit trail problem that is difficult to resolve.

Even for teams without strict compliance requirements, the security surface is concerning. Crowdsourced testers access your application URLs, authentication flows, and potentially sensitive business logic. Rainforest QA implements access controls and NDAs, but the fundamental architecture requires external human access to your systems.

One security engineer told us: "We spent weeks evaluating Rainforest QA. The moment we realized strangers would access our staging environment with test credentials, the security team vetoed it. No amount of NDAs change the attack surface."

Additionally, Rainforest QA is proprietary and cloud-only. There is no source code to audit, no self-hosting option to keep data on your infrastructure, and no way to verify how your application data is handled during test execution.

Autonoma: The Open Source Alternative to Rainforest QA

Autonoma replaces crowdsourced human testers with AI agents. Every limitation of the crowdsourced model disappears.

AI Agents Replace Human Testers

Autonoma does not hire humans to click through your app. AI agents execute tests using vision models that see your application the way a human would, but they finish in seconds, not minutes.

How it works: You connect your GitHub repo, and Autonoma's test-planner-plugin reads your routes, components, and user flows to build a knowledge base of your application. AI agents then generate comprehensive E2E test cases based on your actual code structure. Tests execute using AI vision models that understand intent ("click the submit button") rather than relying on brittle selectors or human interpretation.
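The article does not show how the test-planner-plugin reads routes, so the following is only a hypothetical toy illustrating the general idea of turning code structure into test targets. It assumes a Next.js App Router-style layout (`app/.../page.tsx`, with `(group)` folders and `[param]` segments); the function names are invented for this sketch and are not Autonoma's API.

```python
from pathlib import PurePosixPath
from typing import List, Optional


def route_from_file(path: str) -> Optional[str]:
    """Map an App Router-style file path to a URL pattern (hypothetical layout).

    app/page.tsx                 -> /
    app/(shop)/checkout/page.tsx -> /checkout    (route groups are dropped)
    app/orders/[id]/page.tsx     -> /orders/:id  (dynamic segment)
    Files that are not page entry points return None.
    """
    parts = PurePosixPath(path).parts
    if len(parts) < 2 or parts[0] != "app" or parts[-1] != "page.tsx":
        return None
    segments = []
    for seg in parts[1:-1]:
        if seg.startswith("(") and seg.endswith(")"):
            continue  # route groups organize files but do not appear in the URL
        if seg.startswith("[") and seg.endswith("]"):
            seg = ":" + seg[1:-1]  # dynamic segment becomes a named parameter
        segments.append(seg)
    return "/" + "/".join(segments)


def draft_test_plan(files: List[str]) -> List[str]:
    """Turn discovered routes into plain-language smoke-test cases."""
    routes = {r for r in map(route_from_file, files) if r is not None}
    return [f"Visit {r} and verify the page renders without errors" for r in sorted(routes)]


files = [
    "app/page.tsx",
    "app/(shop)/checkout/page.tsx",
    "app/orders/[id]/page.tsx",
    "app/components/Button.tsx",  # not a page, ignored
]
for case in draft_test_plan(files):
    print(case)
```

A real planner would also need to understand components, authentication, and multi-step journeys; this sketch covers only the first step of deriving test targets from routes.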

The result: a test that takes a Rainforest QA tester 5-10 minutes to execute completes in seconds with Autonoma. A 50-test suite that takes Rainforest QA 30-60 minutes finishes in under 5 minutes. This speed makes CI/CD integration practical: developers get feedback on pull requests in minutes, not hours.

Unlike crowdsourced testers who vary in quality and interpretation, AI agents produce identical results every run. The same test executed 100 times produces the same outcome 100 times (assuming no application changes). No tester variance, no interpretation differences, no availability issues. When a test fails, it means something actually changed in your application, not that a tester had a bad day.
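One way to check a consistency claim on your own suite is to rerun each test several times against an unchanged build and classify the outcomes. A minimal sketch, assuming you can export per-run pass/fail results as booleans; the export step and the label names are hypothetical.

```python
from typing import Sequence


def classify_runs(outcomes: Sequence[bool]) -> str:
    """Label repeated runs of one test against the *same* application build.

    outcomes: one entry per run, True = passed, False = failed.
    """
    if len(outcomes) == 0:
        raise ValueError("need at least one run")
    if all(outcomes):
        return "stable-pass"
    if not any(outcomes):
        return "stable-fail"  # consistent failure: likely a real regression
    return "flaky"  # same build, different outcomes: executor or environment noise


def pass_rate(outcomes: Sequence[bool]) -> float:
    """Fraction of runs that passed."""
    if len(outcomes) == 0:
        raise ValueError("need at least one run")
    return sum(outcomes) / len(outcomes)


print(classify_runs([True] * 100))               # stable-pass
print(classify_runs([True, False, True, True]))  # flaky
print(pass_rate([True, False, True, True]))      # 0.75
```

For an unchanged build, a consistent executor should only ever produce the first two labels; any "flaky" result means some source of nondeterminism (the environment, the app, or the executor) needs investigating before the failure is trusted.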

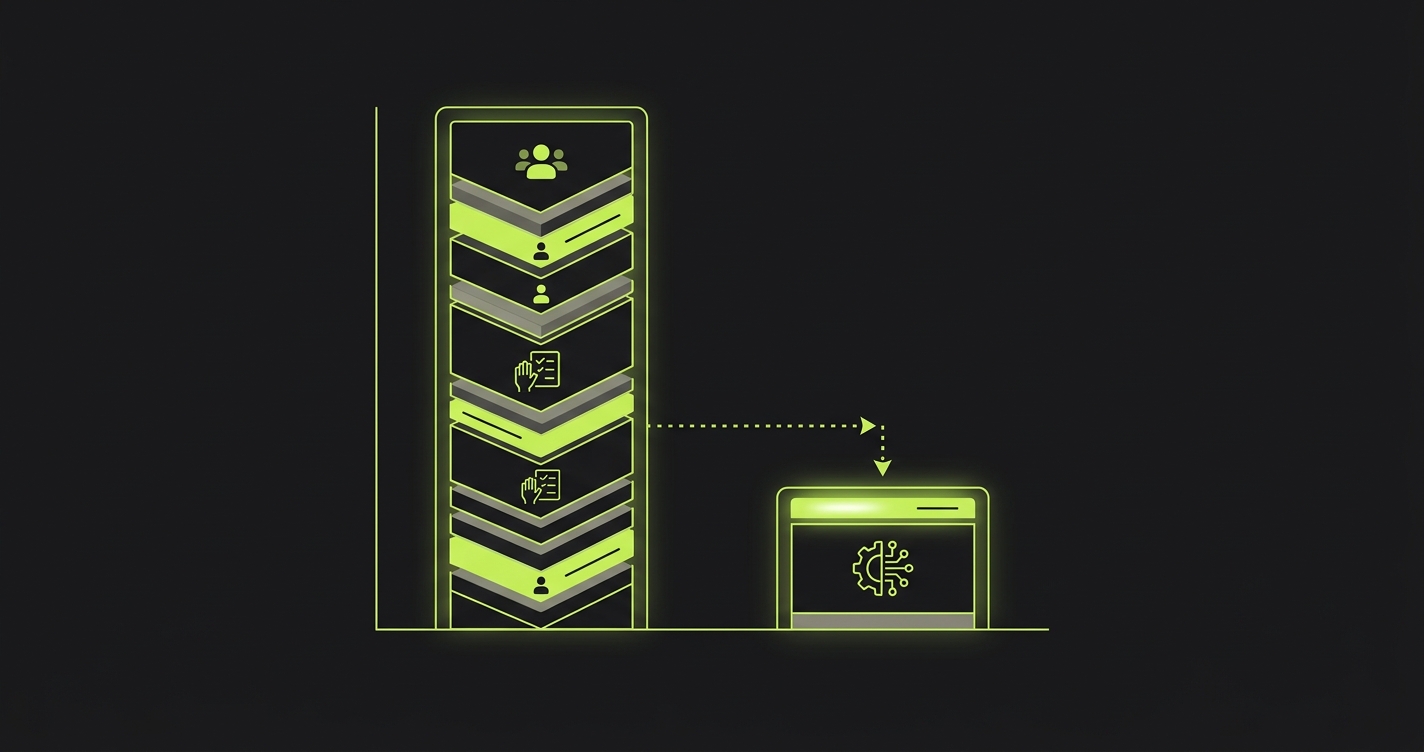

Open Source and Self-Hosting

Full source code on GitHub. Licensed under BSL 1.1 (converts to Apache 2.0 in 2028). You can use it in production, inspect every line, audit security, and self-host with no feature restrictions. The only limitation: you cannot resell Autonoma's functionality as a commercial service.

This directly addresses the security concern that eliminates Rainforest QA for many teams. When you self-host Autonoma, AI agents run on your infrastructure. No external humans access your application. No credentials leave your network. No application URLs are exposed to third parties. Your staging environment stays private.

For compliance teams, self-hosting means full audit control. Need to verify how test credentials are handled? Read the source code. Need to prove that test data stays within your VPC for HIPAA compliance? Show the deployment architecture. Need SOC 2 evidence that testing infrastructure is controlled? Point auditors at your self-hosted instance and the open source codebase.

The technology stack is built on standard open source components: TypeScript and Node.js 24 for the runtime, Playwright for web testing, Appium for mobile testing, PostgreSQL for data storage, and Kubernetes for orchestration. No proprietary runtimes, no black-box components, no vendor-specific dependencies.

Run Autonoma on your infrastructure: AWS (ECS, EKS, or EC2), GCP (GKE or Compute Engine), Azure (AKS or VMs), or your own data center. Self-hosting is free: no platform fees, no per-user charges, no per-test markup. You pay only for the cloud infrastructure you provision.

No-Code Test Generation (Actually Autonomous)

Rainforest QA offers a no-code test builder where you define steps in their interface: "Go to login page," "Enter username," "Click submit." Humans then execute those steps. You still have to define every test manually.

Autonoma takes no-code further: you do not define tests at all. The AI reads your codebase and generates tests automatically. It understands your routes, components, authentication flows, and user journeys from the source code. You review the AI-generated test plans and approve them. No manual test definition, no step-by-step instructions, no maintenance when your UI changes.

When your designer updates a button style, your developer restructures a form, or your team ships a new checkout flow, Autonoma's AI adapts automatically. It uses vision models that understand intent, not DOM structure. A button that moves from the top of the page to the bottom, or changes from green to blue, does not break the test. The AI still understands "click the primary call-to-action."

Unlimited Parallel Execution

Every plan (free tier, cloud, and self-hosted) supports unlimited parallel execution. On the free tier, this is subject to credit limits. On cloud and self-hosted plans, your test suite scales with your infrastructure.

Rainforest QA's parallelism is limited by tester availability. If 50 testers are available and you need 50 tests run simultaneously, you might get parallel execution. But tester availability fluctuates: during off-peak hours, you may wait in a queue. With Autonoma, AI agents spawn instantly and run as many tests in parallel as your infrastructure supports. No queuing, no waiting, no dependency on human availability.
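The effect of parallelism on suite time can be estimated with simple arithmetic. The sketch below assumes equal-length tests, one test per worker at a time, and an optional up-front queue wait. The inputs are illustrative assumptions rather than measurements: 7.5 minutes is the midpoint of the 5-10 minute human range quoted earlier, and 0.5 minutes per AI-run test is an assumed figure.

```python
import math


def suite_wall_clock(num_tests: int, minutes_per_test: float, workers: int,
                     queue_wait_minutes: float = 0.0) -> float:
    """Estimate wall-clock minutes to finish a suite.

    Simplifying assumptions: every test takes the same time, each worker
    (human tester or AI agent) runs one test at a time, and tests run in
    waves of size `workers` after an initial queue wait.
    """
    if num_tests < 0 or workers < 1:
        raise ValueError("num_tests must be >= 0 and workers >= 1")
    waves = math.ceil(num_tests / workers)
    return queue_wait_minutes + waves * minutes_per_test


# 50 tests, 10 available testers, 20-minute wait for testers to pick up work
print(suite_wall_clock(50, 7.5, 10, queue_wait_minutes=20))  # 57.5
# 50 tests, 50 parallel agents, no queue
print(suite_wall_clock(50, 0.5, 50))                         # 0.5
```

The `waves` term is why availability matters: halving the number of workers roughly doubles wall-clock time, and any queue wait is added on top regardless of how fast each test runs.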

Rainforest QA vs Autonoma: Feature Comparison

| Feature | Rainforest QA | Autonoma |
|---|---|---|
| Open Source | ❌ Proprietary closed source | ✅ BSL 1.1 on GitHub (Apache 2.0 in 2028) |
| Self-Hosting | ❌ Cloud only, no source code access | ✅ Self-host anywhere (AWS, GCP, Azure, on-prem) |
| Test Execution | ⚠️ Crowdsourced humans (minutes per test) | ✅ AI agents (seconds per test) |
| Execution Speed | ❌ 5-10 min per test (human speed) | ✅ Seconds per test (AI speed) |
| Result Consistency | ⚠️ Varies by tester quality and interpretation | ✅ Identical results every run |
| Test Generation | ❌ Manual no-code test builder | ✅ AI generates tests from codebase automatically |
| Test Maintenance | ⚠️ Manual updates when UI changes | ✅ AI self-healing (zero maintenance) |
| Security | ⚠️ External humans access your app | ✅ AI agents on your infrastructure (no human access) |
| CI/CD Integration | ⚠️ Slow (humans bottleneck the pipeline) | ✅ Fast (AI executes in seconds, practical for PR gates) |
| Parallel Execution | ⚠️ Limited by tester availability | ✅ Unlimited on all plans |
| Vendor Lock-In | ⚠️ High (proprietary platform, no export) | ✅ None (tests generated from code, fork codebase) |
| Data Sovereignty | ❌ Data on Rainforest QA servers | ✅ Data stays on your infrastructure |
| Source Code Access | ❌ Proprietary, no access | ✅ Full source code on GitHub |
| Starting Price | ~$200-500+/month (volume-based) | Free (100K credits, no credit card) |
| Self-Hosted Cost | Not available | Infrastructure only (no platform fees) |

Cost: AI Agents vs Crowdsourced Testers

The cost comparison between Rainforest QA and Autonoma goes beyond subscription fees. You need to account for the hidden costs of the crowdsourced model.

Rainforest QA pricing is volume-based, typically ranging from $200-500+/month depending on test count and execution frequency. For a mid-sized team running 100+ tests multiple times per week, annual costs reach $3,000-6,000 or more. But the real cost is in delayed feedback loops: when test results take 30-60 minutes, developers context-switch, bugs get discovered later in the cycle, and hotfixes become more expensive.

One team calculated that Rainforest QA's slow feedback loop added an average of 2 hours per developer per week in context-switching and delayed bug fixes. With 10 developers at $100/hour, that is $104,000/year in productivity loss, far exceeding the subscription cost.
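The $104,000 figure is a straight multiplication of hours, headcount, rate, and weeks. A small sketch for plugging in your own team's numbers; the function name is illustrative, and the 52-week default is the assumption needed to reproduce the article's figure exactly.

```python
def annual_productivity_cost(hours_lost_per_dev_per_week: float,
                             developers: int,
                             hourly_rate_usd: float,
                             weeks_per_year: int = 52) -> float:
    """Yearly cost of developer time lost to slow test feedback."""
    return hours_lost_per_dev_per_week * developers * hourly_rate_usd * weeks_per_year


print(annual_productivity_cost(2, 10, 100))                     # 104000
print(annual_productivity_cost(2, 10, 100, weeks_per_year=48))  # 96000
```

Using 48 working weeks to allow for holidays lowers the estimate to $96,000, which still far exceeds the subscription cost.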

Autonoma cloud is $499/month (about $6K/year) with AI execution in seconds. No human testers to pay. No slow feedback loops. No productivity loss from waiting for results. Over three years, Autonoma cloud costs about $18K. Rainforest QA costs $9K-18K in subscription fees alone, before accounting for the productivity impact of slow execution.

Autonoma self-hosted eliminates the platform fee entirely. You pay only for the infrastructure you provision on AWS, GCP, or Azure, typically $200-400/month depending on your parallelism needs. Over three years, that totals roughly $7K-14K (about $11K at the midpoint), with zero human execution delays.
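The three-year figures in this section come from multiplying the quoted monthly and annual prices. A quick sketch reproducing them; the Rainforest QA range uses the $3,000-6,000/year estimate given earlier, and none of these numbers include the productivity costs discussed above.

```python
def total_cost(monthly_usd: float, months: int = 36) -> float:
    """Total spend over a period at a flat monthly rate (default: three years)."""
    return monthly_usd * months


autonoma_cloud = total_cost(499)                        # 17964 (~$18K)
self_hosted_range = (total_cost(200), total_cost(400))  # 7200 to 14400
self_hosted_midpoint = total_cost(300)                  # 10800 (~$11K)
# Rainforest QA, using the $3,000-6,000/year estimate over three years
rainforest_range = (3 * 3_000, 3 * 6_000)               # 9000 to 18000

print(autonoma_cloud, self_hosted_range, self_hosted_midpoint, rainforest_range)
```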

The biggest saving is not the subscription difference. It is the elimination of the human bottleneck. When tests execute in seconds instead of minutes, developers get instant feedback, bugs are caught earlier, and the entire development cycle accelerates. That productivity gain compounds every sprint.

Migrating from Rainforest QA to Autonoma

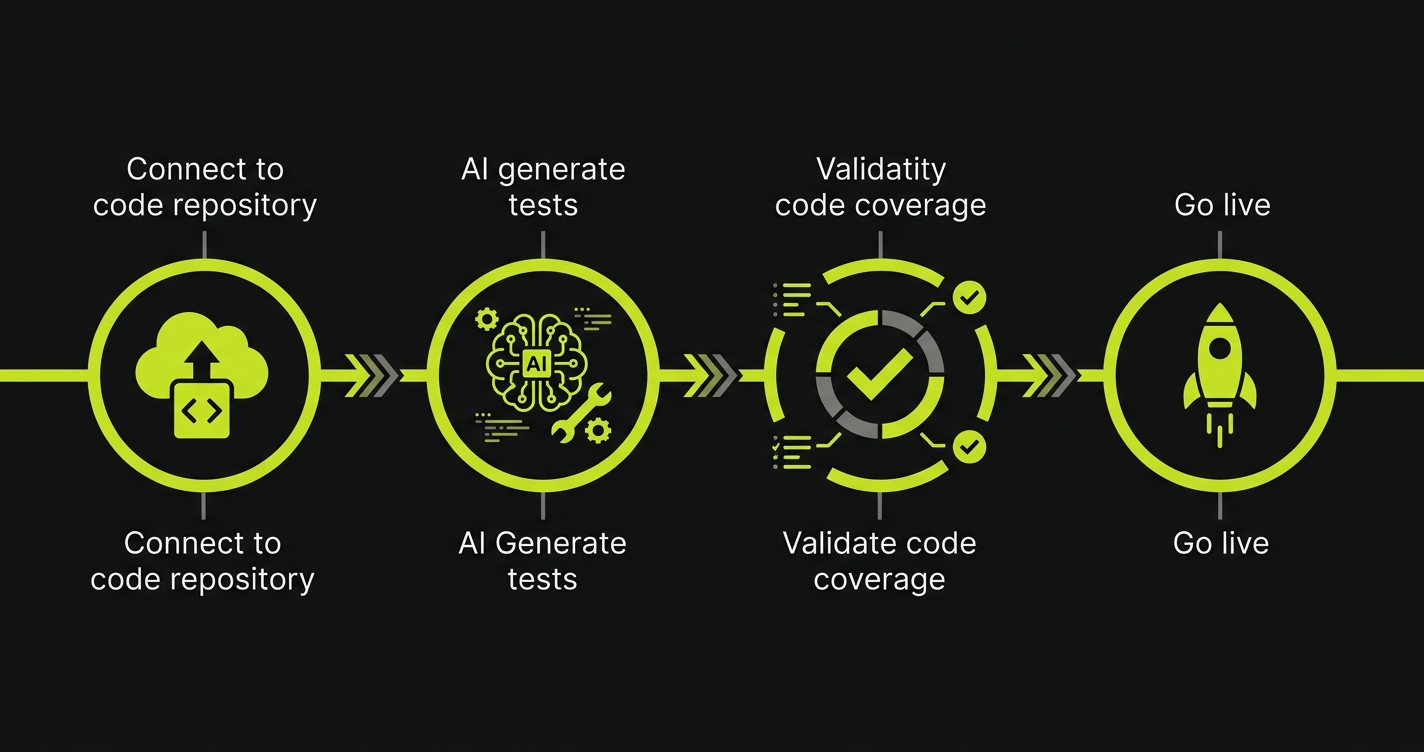

Migration from Rainforest QA to Autonoma is straightforward because you are not rewriting tests: Autonoma generates them from your codebase.

1. Connect your repo. Sign up for the free tier at getautonoma.com or self-host by cloning the GitHub repo and following the deployment docs. Connect your GitHub repository and let Autonoma's AI analyze your codebase. This takes minutes.

2. AI generates tests. The test-planner-plugin builds a knowledge base of your application and generates comprehensive E2E test cases automatically. Your Rainforest QA tests were defined as step-by-step instructions for human testers; Autonoma's AI reads your actual source code instead, generating tests that understand your application at a deeper level than no-code test definitions can capture.

3. Validate coverage. Run Autonoma's AI-generated tests alongside your existing Rainforest QA suite. Compare coverage, identify any gaps, and iterate. Autonoma's vision-based tests are more resilient than human-dependent execution because the AI understands intent, not just instructions. Most teams achieve full coverage within days.

4. Update CI/CD and cut over. Point your CI/CD pipelines at Autonoma and cancel your Rainforest QA subscription. If you are self-hosting, provision your infrastructure during the validation phase. The transition is low-risk because you validated coverage in step 3, and Autonoma's execution speed means your CI/CD pipeline actually gets faster.
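Step 3 comes down to a set comparison once both suites are described in the same vocabulary. A minimal sketch, assuming you have labeled each existing Rainforest QA test and each generated test with a user-flow name. The flow names and the function are hypothetical and not part of either product.

```python
from typing import Dict, Set


def compare_coverage(existing: Set[str], generated: Set[str]) -> Dict[str, Set[str]]:
    """Compare two suites by the user flows they cover.

    Both inputs must use the same flow names; mapping Rainforest QA tests and
    generated tests onto a shared list is assumed to be done by hand.
    """
    return {
        "gaps": existing - generated,    # covered today, missing from the new suite
        "added": generated - existing,   # new coverage the old suite lacked
        "shared": existing & generated,  # covered by both
    }


# Hypothetical flow names for illustration only.
rainforest_flows = {"login", "signup", "checkout", "password-reset"}
generated_flows = {"login", "signup", "checkout", "invite-teammate"}

report = compare_coverage(rainforest_flows, generated_flows)
print(sorted(report["gaps"]))   # ['password-reset']
print(sorted(report["added"]))  # ['invite-teammate']
```

An empty `gaps` set is a reasonable signal that the cutover in step 4 will not drop any coverage you rely on today.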

Teams that relied on Rainforest QA for its no-code approach will find Autonoma even simpler: you do not define tests at all. The AI handles everything from generation to execution to maintenance.

Frequently Asked Questions

Is there an open-source alternative to Rainforest QA?

Yes. Autonoma is an open-source testing platform available on GitHub. Unlike Rainforest QA's proprietary crowdsourced model, Autonoma uses AI agents to execute tests in seconds rather than relying on human testers. The free tier includes 100K credits, and the platform can be fully self-hosted with no feature limitations.

How is Autonoma different from Rainforest QA?

Rainforest QA relies on crowdsourced human testers to execute tests, which means slow execution (minutes to hours), inconsistent results across testers, and security concerns from external testers accessing your app. Autonoma replaces human testers with AI agents that execute tests in seconds with perfect consistency. Autonoma is also open source and self-hostable, while Rainforest QA is proprietary and cloud-only.

Can I self-host Autonoma?

Yes. Autonoma is fully self-hostable with complete source code on GitHub. You can run it on your infrastructure (AWS, GCP, Azure, on-premise) with zero feature restrictions. Rainforest QA offers no self-hosting option and no source code access.

How does Autonoma's pricing compare to Rainforest QA's?

Rainforest QA pricing typically runs $200-500+/month depending on test volume and execution frequency. Autonoma offers a free tier with 100K credits, then $499/month for 1M credits with unlimited parallel, AI-powered execution. Self-hosting Autonoma eliminates ongoing platform costs entirely: you pay only for infrastructure.

Is Autonoma more secure than Rainforest QA?

Rainforest QA's crowdsourced model means external human testers access your application during test execution, which raises security concerns for sensitive applications. Autonoma's AI agents run in isolated environments on your own infrastructure (when self-hosted), so no external humans ever access your app. Your credentials and data never leave your network.

Can I migrate my existing Rainforest QA tests to Autonoma?

Yes. You don't need to recreate tests manually. Connect your repo and Autonoma's AI generates tests from your codebase automatically. Migration involves validating AI-generated coverage against your existing Rainforest QA test suite. Most teams achieve full coverage within days because the AI reads your actual code rather than requiring no-code test definitions.

Is Autonoma faster than Rainforest QA for CI/CD?

Yes, significantly faster. Rainforest QA's human-dependent execution makes CI/CD integration slow: tests take minutes to hours because humans must execute each step. Autonoma's AI agents execute tests in seconds, making it practical for PR-level testing, continuous integration, and deployment gates where speed matters.

The Bottom Line

Rainforest QA pioneered the idea that you should not have to write and maintain test scripts. That insight was correct. But its solution, crowdsourced human testers, introduces its own set of problems: slow execution, inconsistent results, security risk from external testers, no source code access, no self-hosting, and pricing that scales with human labor costs.

Autonoma delivers on the original promise without the crowdsourced baggage. AI agents replace human testers entirely. Tests execute in seconds, not minutes. Results are perfectly consistent. No external humans access your application. Full source code on GitHub (BSL 1.1, Apache 2.0 in 2028). Self-host on your infrastructure or use our cloud. Free tier starts at 100K credits, cloud at $499/month, self-hosted at infrastructure cost only.

The future of testing is not crowdsourcing work to humans. It is AI agents that understand your codebase and test autonomously: faster, cheaper, and more reliably than any crowd.

Ready to replace crowdsourced testing with AI?

Start Free - 100K credits, no credit card, 5-minute setup

View on GitHub - Inspect source code, self-host documentation

Book Demo - See AI autonomous testing in action
