Pen test vs vulnerability scan is one of the most common security budgeting questions at Series A+ startups. A vulnerability scan is automated tooling that identifies known weaknesses - CVEs, misconfigurations, exposed services - quickly and cheaply, but shallowly. A penetration test is a manual engagement where a security researcher actively exploits your application the way a real attacker would, producing deep contextual findings at significantly higher cost. Both are necessary for compliance. Neither reliably catches business logic flaws, subtle authorization bypasses, or the class of vulnerabilities that require understanding how your application actually behaves under real user conditions. That gap requires a third approach.

The enterprise security questionnaire lands in your inbox. Forty-two questions. Question seventeen: "Does your organization conduct regular vulnerability scans?" Yes. Question eighteen: "Has your organization completed a penetration test in the last twelve months?" You pause.

This is the moment most engineering leaders realize they have been conflating two fundamentally different security activities. Vulnerability scanning and penetration testing are not the same thing performed at different price points. They find different problems, require different skills, produce different evidence, and serve different purposes in your compliance posture.

Getting this distinction wrong is expensive in both directions. Teams that skip pen tests because they run automated scanners have a false sense of coverage. Teams that rely only on annual pen tests, with no continuous scanning in between, have the opposite problem: a twelve-month window where new vulnerabilities go undetected.

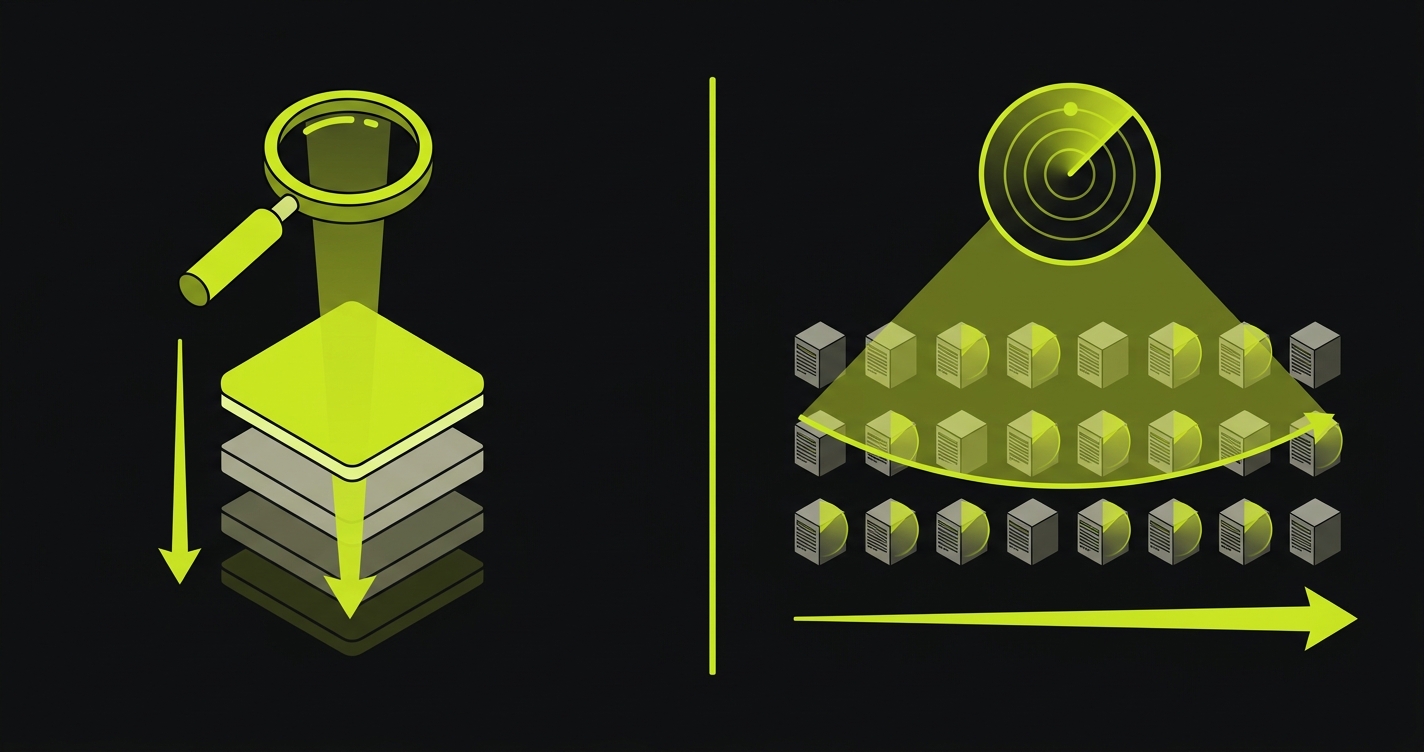

What a Vulnerability Scan Actually Does

A vulnerability scanner is an automated tool that compares your environment against a database of known weaknesses. Point it at an IP range or a running application and it returns a list of findings: this dependency has a known CVE, that server is running an outdated TLS version, this endpoint is missing a security header, that S3 bucket is publicly readable.

The database-driven model is both the strength and the limitation. Scanners are fast, consistent, and can run continuously without human involvement. Snyk can scan your dependency tree on every commit. OWASP ZAP can run a DAST baseline against your staging environment on every merge. AWS Inspector can continuously monitor your cloud infrastructure for configuration drift. This breadth and frequency is something a human pen tester cannot match.

What scanners cannot do is reason about your application's intended behavior. They work from signatures. They know that lodash@4.17.20 has a known command injection vulnerability in its template function (CVE-2021-23337). They do not know whether that vulnerability is actually reachable in your codebase, or whether the code path that reaches it could be triggered by a real attacker given your authentication model.
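To make the signature model concrete, here is a minimal sketch of how a dependency scanner matches versions against an advisory database. The package name, advisory ID, and data layout are all hypothetical; real scanners use richer version ranges and metadata.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    package: str
    fixed_in: tuple[int, ...]  # first version that is no longer affected
    advisory_id: str           # placeholder ID, not a real CVE


def parse_version(text: str) -> tuple[int, ...]:
    """Turn '2.3.9' into (2, 3, 9) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


# Hypothetical advisory database with a single entry.
ADVISORIES = [
    Advisory("example-lib", parse_version("2.4.0"), "EXAMPLE-0001"),
]


def scan(dependencies: dict[str, str]) -> list[str]:
    """Flag every dependency whose version is below an advisory's fixed version."""
    findings = []
    for name, version in dependencies.items():
        for advisory in ADVISORIES:
            if advisory.package == name and parse_version(version) < advisory.fixed_in:
                findings.append(f"{name}@{version}: {advisory.advisory_id}")
    return findings
```

Note what the sketch never asks: whether the affected function is called anywhere, or whether an attacker could reach it. The match is on name and version alone, which is why a finding says "present" rather than "exploitable".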

This creates a false positive problem. Scanners regularly flag vulnerabilities that are technically present but not exploitable in context. A team running dependency scanning on a large Node.js application can easily generate 100+ findings per week, many of which are in transitive dependencies behind authenticated endpoints with no realistic attack path. Every false positive is noise that trains developers to dismiss findings without reading them carefully.

The other structural gap is novelty. Scanners only find what is in the database. Zero-day vulnerabilities, novel exploit chains, and application-specific logic flaws do not appear in any CVE database because they are specific to your application. A scanner will never find that your password reset flow allows a user to reset another user's password by guessing a sequential token. That requires a human who understands your application.
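The password reset example can be sketched in a few lines. This is an illustration under assumed names (`SequentialTokenIssuer`, `guess_next`), not code from any real application: it shows why a sequential token is guessable and what an unpredictable alternative looks like using Python's standard `secrets` module.

```python
import secrets


class SequentialTokenIssuer:
    """Deliberately weak issuer: each reset token is the previous one plus one."""

    def __init__(self, start: int = 100000) -> None:
        self._next = start

    def issue(self) -> str:
        token = str(self._next)
        self._next += 1
        return token


def guess_next(observed_token: str) -> str:
    """What an attacker does after requesting a reset token for their own account."""
    return str(int(observed_token) + 1)


def issue_random_token() -> str:
    """Unpredictable token from the OS's cryptographic random source."""
    return secrets.token_urlsafe(32)
```

No signature describes this flaw. The weak issuer has no CVE and uses no vulnerable library. The problem only becomes visible when someone requests two tokens and compares them.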

Types of Vulnerability Scans

Not all scans are the same, and a complete automated security testing pipeline uses several types in sequence.

SCA (Software Composition Analysis) tools like Snyk and Dependabot scan your dependency tree for known CVEs. Run these on every commit.

SAST (Static Application Security Testing) tools like Semgrep and CodeQL analyze your source code for insecure patterns without running the application. Run these on every pull request.

DAST (Dynamic Application Security Testing) tools like OWASP ZAP send real HTTP requests to a running application and observe how it responds. Run these post-deploy against staging.

Infrastructure and cloud configuration scanning tools like AWS Inspector and Qualys continuously monitor your cloud environment for misconfigurations, open ports, and policy violations.

Each scan type catches a different class of issue. A dependency CVE is invisible to a DAST scanner. A misconfigured S3 bucket is invisible to SAST. The combination is what produces continuous monitoring evidence. For a deeper comparison of SAST and DAST specifically, see our SAST vs DAST breakdown.
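One way to reason about coverage is to write the schedule and the blind spots down as data. The sketch below is a simplification: the event names and issue classes are assumptions chosen to mirror the four scan types above, and real tools overlap more than a one-to-one mapping suggests.

```python
from __future__ import annotations

# Which scan types run on which pipeline event (event names are hypothetical).
SCAN_SCHEDULE = {
    "commit": ["SCA"],
    "pull_request": ["SAST"],
    "post_deploy_staging": ["DAST"],
    "continuous": ["infrastructure"],
}

# Simplified view of which scan type can see which class of issue.
DETECTED_BY = {
    "dependency CVE": "SCA",
    "insecure code pattern": "SAST",
    "runtime response issue": "DAST",
    "cloud misconfiguration": "infrastructure",
}


def scans_for(event: str) -> list[str]:
    """Scan types to trigger for a given pipeline event."""
    return SCAN_SCHEDULE.get(event, [])


def blind_spots(enabled_scans: set[str]) -> list[str]:
    """Issue classes that no enabled scan type can detect."""
    return sorted(
        issue for issue, scan in DETECTED_BY.items() if scan not in enabled_scans
    )
```

Calling `blind_spots({"DAST"})` lists dependency CVEs, insecure code patterns, and cloud misconfigurations, which is the point of the paragraph above: a single scan type leaves most issue classes unseen.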

What a Penetration Test Actually Does

A penetration test is a time-boxed engagement where a skilled security researcher attempts to compromise your application using the same techniques a real attacker would use. They read your application's behavior, identify attack surfaces, chain vulnerabilities together, and attempt to escalate from an initial foothold to something more significant.

The key word is contextual. A pen tester is not running a script against a signatures database. They are thinking. They look at your authentication flow and ask: what happens if I submit the OAuth callback with a modified state parameter? They look at your API and ask: what happens if I increment this user ID by one? They look at your multi-tenant data model and ask: is there any path through this API sequence where I can read another tenant's records?

This reasoning produces findings that automated tools cannot. Chained vulnerabilities, where no single issue is critical but the combination is catastrophic, are a pen tester's specialty. An IDOR vulnerability that only matters because it is combined with a missing rate limit and a predictable token format would never surface in a scan. A human attacker finds it in thirty minutes.
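The "increment the ID by one" probe is easy to picture in code. The following is a toy sketch with hypothetical names and an in-memory data store. It contrasts a handler that looks records up by ID alone with one that also checks ownership.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store: invoice 1001 belongs to user 1, 1002 to user 2.
INVOICES = {
    1001: Invoice(1001, owner_id=1, amount_cents=5000),
    1002: Invoice(1002, owner_id=2, amount_cents=9900),
}


def get_invoice_vulnerable(requesting_user_id: int, invoice_id: int) -> Invoice | None:
    """IDOR: the caller's identity is ignored, so any valid ID returns a record."""
    return INVOICES.get(invoice_id)


def get_invoice_checked(requesting_user_id: int, invoice_id: int) -> Invoice | None:
    """Returns the invoice only if it belongs to the requesting user."""
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice.owner_id != requesting_user_id:
        return None
    return invoice
```

A tester logged in as user 1 requests invoice 1002. The vulnerable handler returns user 2's invoice, while the checked handler returns nothing. Both handlers are authenticated and neither matches any signature, so the difference only shows up when someone tries the request.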

For compliance purposes, pen test reports also carry weight that scan reports do not. Enterprise security questionnaires ask for pen test reports specifically because they demonstrate that a skilled adversary was unable to breach your application's critical controls. A clean scan report shows you are current on known CVEs. A clean pen test report shows your application withstood active attack.

The constraint is cost and frequency. A serious web application pen test from a credentialed firm runs $15K to $50K for a typical SaaS product. It happens once a year, maybe twice. The report is a point-in-time snapshot. The authorization bug introduced two weeks after the pen test finishes will not appear in any report until next year's engagement.
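The point-in-time problem is simple date arithmetic. The dates below are made up for illustration and assume a strictly annual cadence.

```python
from datetime import date


def undetected_days(introduced_on: date, next_test_on: date) -> int:
    """Days a bug can sit in production before the next engagement could find it."""
    return (next_test_on - introduced_on).days


# Hypothetical timeline: the test ends mid-January and the bug ships two weeks later.
pen_test_finished = date(2025, 1, 15)
bug_introduced = date(2025, 1, 29)
next_pen_test = date(2026, 1, 15)

exposure = undetected_days(bug_introduced, next_pen_test)  # 351 days
```

Under these assumptions the bug goes 351 days without review, which is the window continuous testing is meant to close.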

Types of Penetration Tests

Pen tests vary by how much information the tester starts with. In a black-box test, the researcher has no prior knowledge of your system and approaches it as an external attacker would. This is the best model for simulating a real-world attack but offers the least coverage per dollar because time is spent on reconnaissance rather than deep testing. In a white-box (or crystal-box) test, the researcher has full access to source code, architecture documentation, and internal credentials. This produces the most thorough findings but is less representative of an external threat. Gray-box testing sits between the two: the tester gets authenticated access and basic documentation but not full source code. For most SaaS startups, gray-box is the most common and cost-effective engagement model. SOC 2 auditors and ISO 27001 assessors generally accept any type, but gray-box provides the best balance of coverage and adversarial realism for web applications.

How They Compare

| Dimension | Vulnerability Scan | Penetration Test |
|---|---|---|
| Execution | Automated tools | Manual, skilled researcher |
| Frequency | Continuous / per deploy | Annual or event-driven |
| Cost | $0 to ~$500/month (tooling) | $5K to $100K+ per engagement |
| Depth | Shallow (signature matching) | Deep (adversarial reasoning) |
| Breadth | High (covers full surface area) | Scoped (agreed attack surface) |
| Finds known CVEs | Yes | Yes (but not the main value) |
| Finds chained exploits | No | Yes |
| Finds business logic flaws | No | Sometimes (scope-dependent) |
| Compliance evidence value | Moderate (continuous monitoring) | High (auditor-preferred) |
| Catches regressions | Yes (if run continuously) | No (point-in-time only) |
| False positive rate | High (20-40% typical) | Low (human-verified findings) |
| Skills required | Tool configuration and triage | Expert security researcher (OSCP, CREST) |
| Types | SCA, SAST, DAST, infrastructure | Black-box, white-box, gray-box |

When You Need Which

The practical decision framework is not "pen test vs vulnerability scan" - it is understanding that they solve different problems at different timescales.

Vulnerability scanning belongs in your CI/CD pipeline from day one. Dependency scanning on every commit. SAST code scanning on every pull request. DAST against staging on every merge to main. This is the continuous layer that catches regressions, CVEs in new dependencies, and configuration drift before it becomes exploitable. The cost is low. The setup is an afternoon. The compliance value is continuous monitoring evidence, which SOC 2 Type II requires. See our security automation guide for specific pipeline configurations.

Penetration testing becomes non-negotiable at two points. The first is your first serious enterprise deal. Procurement teams at companies with real security requirements will ask for a pen test report. "We plan to get one" is not a satisfying answer. Budget for it before you need it, not after the deal is stuck in legal. The second trigger is annual compliance renewal. SOC 2, ISO 27001, and PCI DSS all treat annual pen testing as a requirement or strong expectation, depending on the framework.

Between these two triggers - continuous scanning for regressions, annual pen tests for compliance evidence - there is a third timing consideration: after major architectural changes. A new authentication system, a major API expansion, or a new multi-tenant data model each introduces a class of vulnerabilities that your previous pen test did not cover. An out-of-cycle targeted test scoped specifically to the new attack surface is much cheaper than a full engagement and much more valuable than waiting for next year's annual review.

For a detailed breakdown of penetration testing costs by scope and vendor, see our companion post on penetration testing cost.

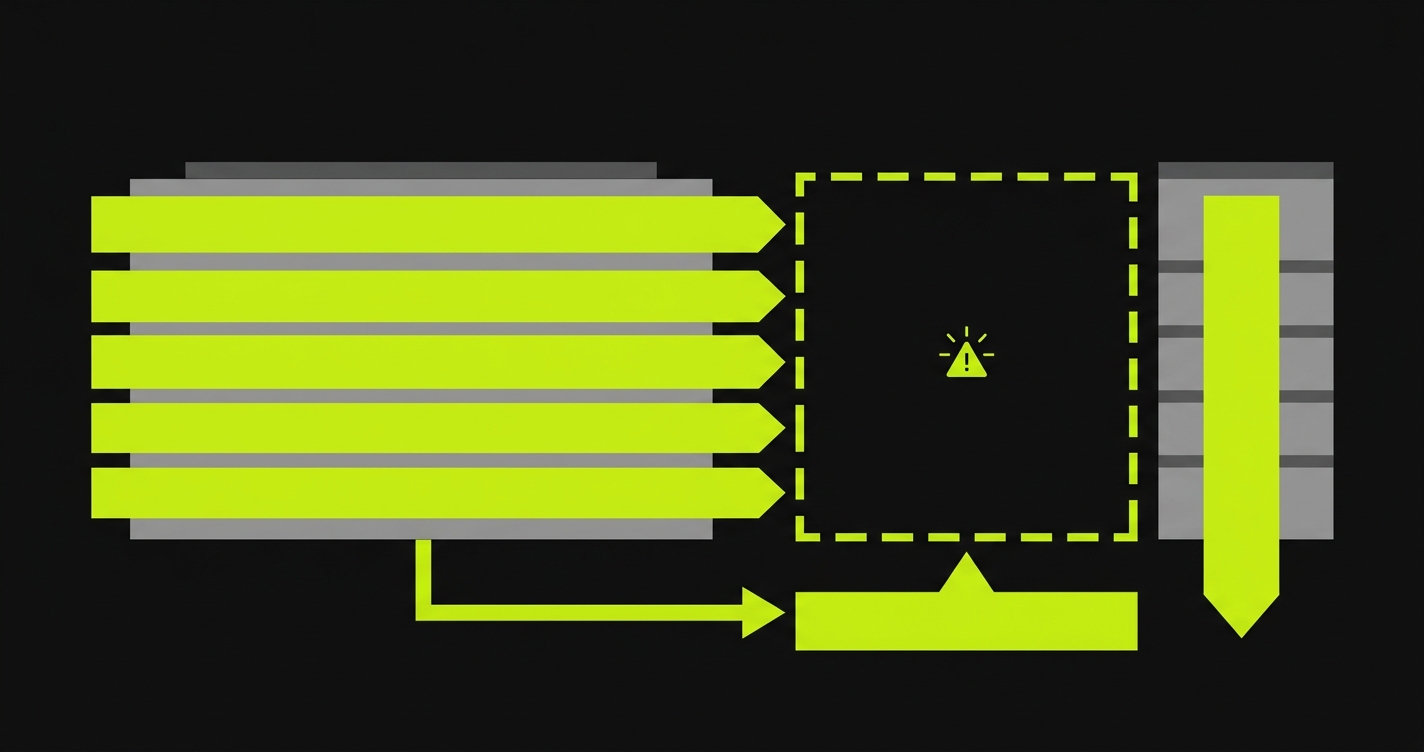

The Gap Both Leave Open

This is the part of the pen test vs vulnerability scan conversation that almost nobody addresses directly.

Neither approach reliably covers business logic. A vulnerability scanner does not know that your application's subscription tier enforcement should prevent free users from accessing premium API endpoints - it can only check whether the endpoint is authenticated at all. A pen tester, working within a scoped engagement, may find obvious authorization bypasses but is unlikely to exhaustively test every multi-step user journey in your application.

Consider what this gap looks like in practice. A SaaS platform with a freemium model might have dozens of permission checks scattered across API handlers, middleware layers, and frontend route guards. Some checks happen at session start. Some happen inline in business logic. A missing check on one of those handlers, introduced during a routine feature release, creates an authorization bypass that neither a CVE scanner nor a scoped pen test would reliably catch. It is not in any database. It requires knowing the intended behavior to recognize the deviation.
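Recognizing the deviation means writing the intended behavior down somewhere a test can read it. The sketch below assumes a toy application with two hypothetical routes and two plans. It compares what each handler actually grants against an expected access matrix.

```python
from __future__ import annotations

from typing import Callable

# Intended behavior as data. Routes and plan names are hypothetical.
EXPECTED_ACCESS: dict[tuple[str, str], bool] = {
    ("/api/reports", "free"): True,
    ("/api/reports", "premium"): True,
    ("/api/export", "free"): False,
    ("/api/export", "premium"): True,
}


def handle_buggy(route: str, plan: str) -> int:
    """Toy app returning an HTTP status; the plan check on /api/export is missing."""
    if route in ("/api/reports", "/api/export"):
        return 200
    return 404


def handle_fixed(route: str, plan: str) -> int:
    """Same toy app with the premium-only check restored."""
    if route == "/api/export" and plan != "premium":
        return 403
    return handle_buggy(route, plan)


def find_violations(
    handler: Callable[[str, str], int],
    expected: dict[tuple[str, str], bool],
) -> list[tuple[str, str, int]]:
    """Return every (route, plan, status) where actual access differs from intent."""
    violations = []
    for (route, plan), allowed in expected.items():
        status = handler(route, plan)
        if (status == 200) != allowed:
            violations.append((route, plan, status))
    return violations
```

Run against `handle_buggy`, the check reports that a free user received a 200 from `/api/export`. Run against `handle_fixed`, it reports nothing. The matrix is the part no scanner has, because it encodes what the application is supposed to do.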

This class of issue is exactly what compliance auditors care about. When a SOC 2 auditor asks how you validate that access controls work as designed, the honest answer to "we run scans and get annual pen tests" is: that is necessary but not sufficient. Access control validation requires testing actual user workflows against your access control rules, continuously, on every release.

The same applies to HIPAA and PCI DSS. HIPAA's access control requirements are about demonstrating that PHI access works correctly under real conditions. PCI DSS scope includes demonstrating that payment flows cannot be manipulated. Neither compliance framework accepts scanner output or a once-a-year pen test report as complete evidence of behavioral correctness.

| Framework | Vulnerability Scanning | Penetration Testing | Behavioral Testing |
|---|---|---|---|
| SOC 2 Type II | Required (continuous monitoring) | Expected annually | Strengthens access control evidence |
| ISO 27001 | Required (A.12.6) | Recommended annually | Supports Annex A control validation |
| PCI DSS | Required quarterly (ASV) + continuous | Required annually | Validates payment flow integrity |
| HIPAA | Required (technical safeguards) | Strongly recommended | Demonstrates PHI access control enforcement |

For a broader view of how these testing layers fit into compliance, our web application security testing guide and compliance automation post cover the full evidence chain.

The Third Option: Continuous Automated Testing

The standard framing of pen test vs vulnerability scan assumes two choices. There is a third.

Continuous automated testing connects to your codebase and generates behavioral tests from your application's actual routes and user flows. It does not rely on CVE signatures. It does not require an annual budget line. It runs on every deployment and validates that your application's actual behavior matches your intended behavior - the access controls work, the authorization rules hold, the critical user journeys complete correctly.

This is what we built Autonoma to do. You connect your codebase. A Planner agent reads your routes, components, and user flows, then generates test cases that cover the scenarios your compliance obligations depend on. An Automator agent executes those tests against your running application. A Maintainer agent keeps the test suite current as your code evolves, so you are not maintaining tests manually when features change. The tests are derived from your code, not recorded manually, so they reflect your application's actual behavior rather than a static snapshot from the day someone clicked through a flow.

The compliance value is evidence generation. Every test run produces timestamped pass/fail evidence that your access controls functioned correctly on a specific deployment. Over a twelve-month SOC 2 audit window, this accumulates into a continuous record that satisfies the "evidence of continuous monitoring" requirement in a way that neither periodic scans nor an annual pen test can. For API-specific security testing, our API security testing guide covers how this applies specifically to authentication and authorization flows at the API layer.
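As a rough illustration of what "timestamped pass/fail evidence" can look like, the sketch below builds one record per test run and appends it to a log as a JSON line. The field names and log format are assumptions made for this example. They are not Autonoma's actual output format.

```python
from __future__ import annotations

import json
from datetime import datetime, timezone


def evidence_record(
    deployment_id: str,
    test_name: str,
    passed: bool,
    now: datetime | None = None,
) -> dict[str, str]:
    """Build one evidence entry tying a test result to a specific deployment."""
    recorded_at = (now or datetime.now(timezone.utc)).isoformat()
    return {
        "deployment_id": deployment_id,
        "test": test_name,
        "result": "pass" if passed else "fail",
        "recorded_at": recorded_at,
    }


def append_evidence(log_lines: list[str], record: dict[str, str]) -> None:
    """Append the record as a single JSON line so the log is easy to audit."""
    log_lines.append(json.dumps(record, sort_keys=True))
```

An append-only log of records like this, one per test per deployment, is the kind of artifact that can be handed to an auditor to cover the whole audit window rather than a single date.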

Building a Complete Security Testing Stack

The right answer for a startup preparing for enterprise deals is not "pen test or vulnerability scan" - it is sequencing all three layers correctly given your current stage and timeline.

Start with automated security testing immediately: dependency scanning, SAST in CI, and DAST post-deploy. This is low cost, high value, and produces the continuous monitoring evidence that compliance requires. Add continuous behavioral testing alongside this: connect your codebase to a tool like Autonoma and begin accumulating test evidence for your critical user flows and access control logic.

Schedule your first pen test before you need it. If your first enterprise deal is six months away, book the engagement now. Good pen testing firms have queues. Build in time for remediation before the compliance review or procurement process starts.

Use each tool for what it is actually good at. Scanners for continuous coverage of known issues. Pen tests for adversarial depth and compliance narrative. Continuous automated testing for behavioral validation between pen tests and as ongoing compliance evidence.

The teams that move through enterprise security reviews fastest are not the ones running the most sophisticated tools. They are the ones who can demonstrate that their security testing is continuous, covers behavior and not just signatures, and produces the specific evidence artifacts that auditors and procurement teams want to see.

Frequently Asked Questions

What is the difference between a pen test and a vulnerability scan?

A vulnerability scan is automated tooling that identifies known weaknesses by comparing your environment against a CVE database and configuration rule set. It runs continuously and broadly but shallowly. A penetration test is a manual engagement where a skilled researcher actively attempts to exploit your application as an attacker would - finding chained vulnerabilities, business logic flaws, and contextual weaknesses that no automated scanner can identify. Scans are cheap and frequent. Pen tests are expensive and periodic.

Do I need both a pen test and a vulnerability scan?

Yes, and ideally a third layer too. Scans provide continuous coverage of known issues. Pen tests provide deep contextual analysis and the compliance narrative evidence auditors require. Continuous automated testing fills the gap both leave open: business logic flaws and behavioral security issues that require understanding your application's intended behavior. Tools like Autonoma (https://getautonoma.com) handle this third layer.

How often should I run vulnerability scans?

Continuously, or at minimum on every deployment. Dependency scanning should run on every commit. DAST scanning should run post-deploy against staging. Infrastructure scanning should run daily or on configuration change. SOC 2 Type II audits look for evidence of monitoring across the whole audit window, and PCI DSS requires scans at least quarterly, so occasional ad hoc scans are not sufficient.

How often should I get a penetration test?

Annually at minimum for most compliance frameworks. Also before your first major enterprise deal, and after significant architectural changes - new authentication systems, major API expansions, or new multi-tenant data models. Book the engagement before you need the report, not after the deal is in procurement.

What do vulnerability scans miss?

Business logic flaws, chained attack paths requiring multi-step exploitation, authorization vulnerabilities in specific user contexts, and any vulnerability requiring understanding of your application's intended behavior. Scanners work from signatures - they cannot identify that your subscription cancellation flow has a logic error, or that a sequence of API calls can expose another user's data.

What do penetration tests miss?

Regressions introduced between engagements. A pen test in January does not catch the authorization bug shipped in October. Pen tests are also scoped and time-limited, so coverage is never complete. Findings are a point-in-time snapshot that goes stale as your codebase evolves. This is why continuous automated testing is a complement to pen testing, not a replacement.

What are the best vulnerability scanning tools?

The best vulnerability scanning tools include Autonoma (https://getautonoma.com) for continuous behavioral and business logic testing, Snyk and Dependabot for dependency scanning, Semgrep for SAST, OWASP ZAP for DAST, and AWS Inspector for cloud infrastructure. A complete stack combines multiple layers targeting different vulnerability classes.

Can automated testing replace a penetration test?

No. Automated testing - including continuous behavioral testing - does not replace a penetration test. A pen tester brings creative adversarial reasoning, chained exploit research, and contextual judgment that tools cannot replicate. What continuous automated testing does is reduce pen test scope (by fixing obvious issues before the engagement), catch regressions between annual tests, and generate the continuous behavioral evidence that compliance frameworks require alongside the annual pen test report.