Vibe Coding Security Risks Every Founder Should Know Before Shipping

Vibe coding risks are the business-level consequences of shipping AI-generated apps without the verification layer that human-written code normally gets. The vibe coding security picture is well documented: Veracode's 2025 research found that 45% of AI-generated code contains OWASP Top 10 vulnerabilities. What gets far less attention is the founder's version of that risk: what a production incident costs your company, why security scanners are only half the answer, and how behavioral testing closes the gap that scanners leave behind. Everyone tells you to scan your code. Nobody tells you to test it.

The email from your investor landed at 8 AM on a Tuesday: "We saw the HackerNews thread. Can we get on a call?" You had not seen it yet. A user had posted that they could see another user's data in your dashboard. Your security scanner had come back clean three days earlier.

That is not a hypothetical. It is a version of the same story that has played out across dozens of vibe-coded apps in the past 18 months. The Moltbook breach exposed 1.5 million API tokens, the Tea app leak compromised 72,000 images including government IDs and private messages, and Lovable-built apps shipped with credentials in client-side bundles. The scanner missed it every time. Not because the scanner failed. Because the vulnerability was behavioral, not structural, and scanners read structure. This is the vibe coding security gap in action.

The question for you as a founder is not whether these vibe coding risks exist. They do. The question is what they actually cost when they hit, and which verification layer makes them catchable before a user finds them for you.

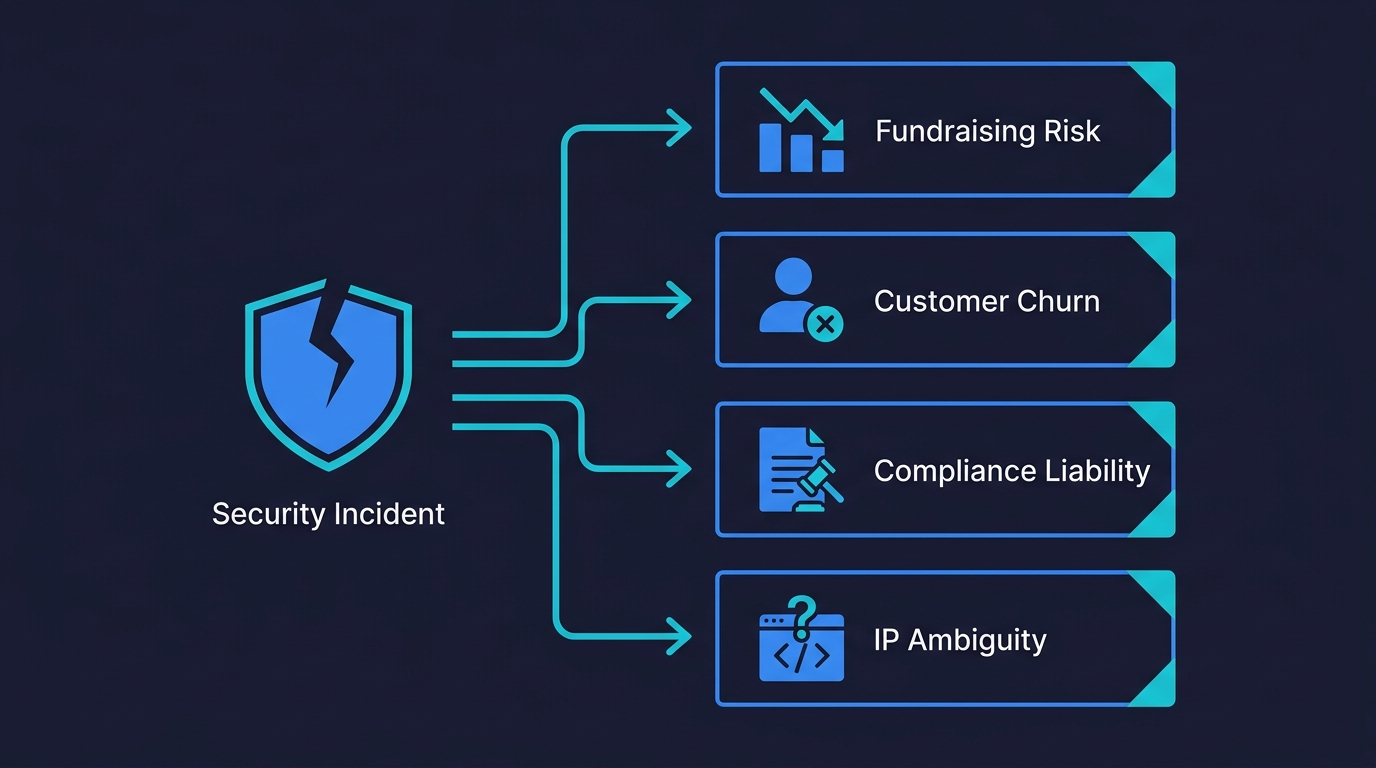

The Business Cost of Vibe Coding Risks You're Not Modeling

Every conversation about vibe coding security risks focuses on the technical failure: the credential in the bundle, the missing row-level security policy, the authorization check that looks correct but is not enforced. That framing is accurate but incomplete, because what actually matters to you is what happens after the technical failure is discovered.

A data exposure incident at a pre-Series A startup is not primarily a technical problem. It is a fundraising problem. Investors who are evaluating you after the incident will ask whether you had controls in place, and "we ran a scanner and it came back clean" is not a controls story. It is a "we didn't know what we were doing" story. This is not theoretical: JP Morgan's 2026 guide for startup founders now explicitly includes AI code provenance, QA processes, and technical debt management in its investor due diligence framework. If your Series A diligence includes a question about how you verify AI-generated code, "we use a scanner" is not a sufficient answer.

It is also a customer trust problem, which at the seed stage is synonymous with a revenue problem. Losing one enterprise customer to a security incident can cost more than six months of growth. A single production bug can cost a startup $8,000 to $25,000. A security incident is substantially worse, because it carries reputational damage that outlasts the fix.

And it is a compliance problem the moment you are in a regulated space. If you are handling health data, payment data, or any personally identifiable information from EU users, the incident response stops being a matter of patching and becomes a matter of notification timelines, regulatory scrutiny, and potential liability that founders routinely underestimate.

There is also an IP dimension that most vibe coding risk discussions overlook entirely. AI-generated code has ambiguous ownership status, and if your product's core logic was generated by an LLM trained on open-source repositories, the provenance of that code is not cleanly yours. Ballard Spahr's analysis of IP risks in vibe-coded startups flags this as a due diligence concern that is distinct from security but compounds with it. A security incident in code you cannot fully claim ownership of leaves you in a worse legal position than a security incident in code you wrote.

The technical vulnerability is the trigger. The business consequence is what you are actually managing.

Why Security Scanners Aren't Enough for AI-Generated Code

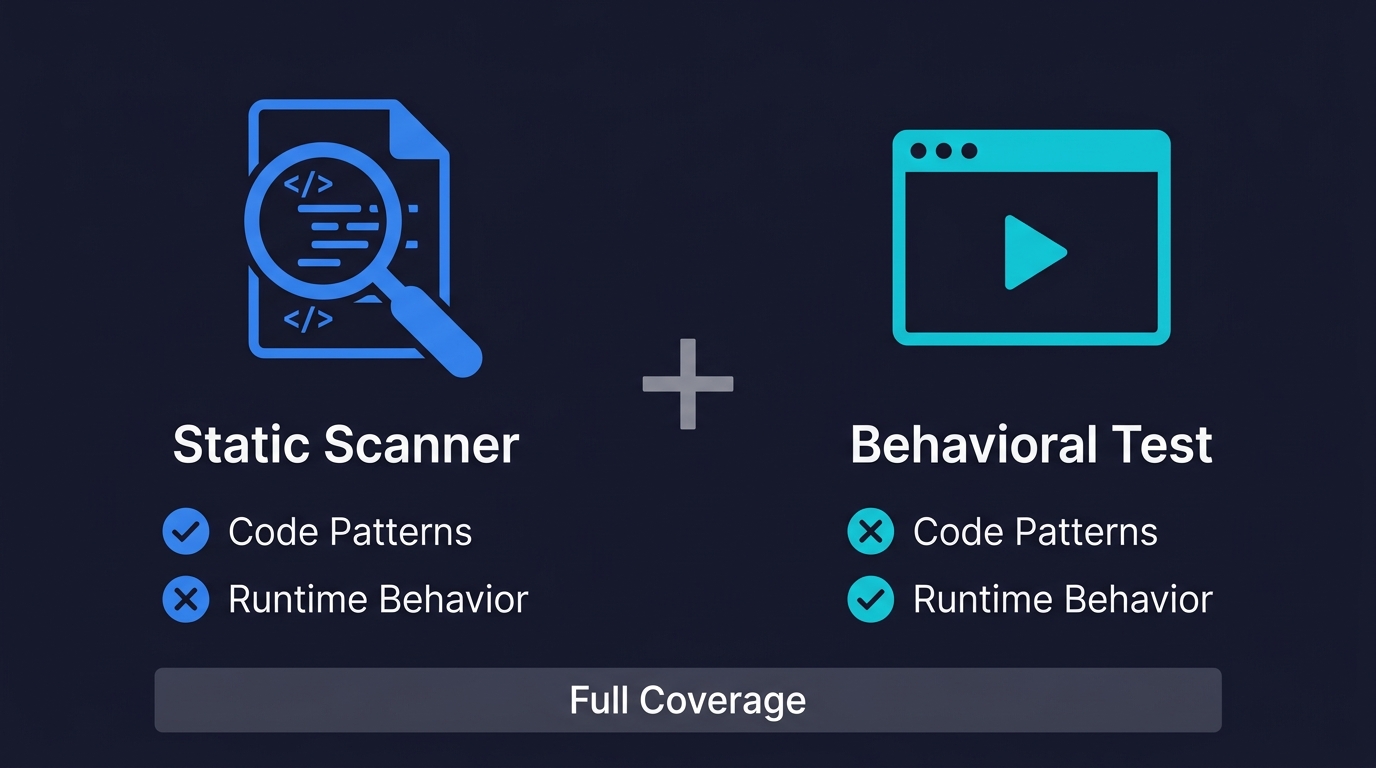

The standard advice after reading any article about vibe coding risks is to scan your code. Use Snyk, run Semgrep, check for exposed secrets, audit your dependencies. This advice is correct. It is also incomplete in a way that founders consistently discover the hard way.

Security scanners are pattern matchers. They examine your static code and compare it against a database of known vulnerability signatures. They are good at finding hardcoded credentials, deprecated cryptographic functions, dependency versions with published CVEs, and SQL concatenation patterns that enable injection. These are real risk categories, and scanners genuinely catch them.

What scanners cannot do is run your application. They cannot authenticate as User A, retrieve a resource ID belonging to User B, and check whether the server correctly returns a 403 or incorrectly returns the data. They cannot call your API directly with malformed input and observe whether server-side validation actually exists or only the frontend component has it. They cannot simulate two concurrent requests hitting your coupon redemption endpoint and verify the race condition does not let both through.
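The race-condition case is the easiest of the three to see in code. The following minimal Python sketch uses a hypothetical in-memory coupon store in place of a real database. The naive handler checks whether a single-use coupon is already redeemed, then marks it redeemed, as two separate steps. The behavioral check releases two requests at the same instant and counts how many succeed. Neither line looks wrong to a pattern matcher. Only running them concurrently exposes the gap.

```python
import threading
import time


class CouponStore:
    """Hypothetical in-memory store for single-use coupons (stands in for a database)."""

    def __init__(self) -> None:
        self.redeemed: set[str] = set()
        self._lock = threading.Lock()

    def redeem_naive(self, code: str) -> bool:
        # Check-then-act with a gap between the steps: this is the race condition.
        if code in self.redeemed:
            return False
        time.sleep(0.05)  # simulates the latency of a database round trip
        self.redeemed.add(code)
        return True

    def redeem_atomic(self, code: str) -> bool:
        # Check and mark under one lock, so only one request can win.
        with self._lock:
            if code in self.redeemed:
                return False
            time.sleep(0.05)
            self.redeemed.add(code)
            return True


def concurrent_successes(redeem, code: str, n: int = 2) -> int:
    """Fire n redemption requests at the same instant and count how many succeed."""
    barrier = threading.Barrier(n)
    results: list[bool] = []
    results_lock = threading.Lock()

    def worker() -> None:
        barrier.wait()  # release all threads together
        ok = redeem(code)
        with results_lock:
            results.append(ok)

    threads = [threading.Thread(target=worker) for _ in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sum(results)


if __name__ == "__main__":
    print("naive:", concurrent_successes(CouponStore().redeem_naive, "WELCOME50"))
    print("atomic:", concurrent_successes(CouponStore().redeem_atomic, "WELCOME50"))
```

In a real deployment the fix usually belongs in the database, as a conditional update or a unique constraint on the redemption record. An in-process lock does nothing once you run more than one server instance.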

This matters for vibe-coded apps specifically because the most dangerous failure mode is not a known vulnerability pattern in the code. It is a correctly structured authorization check that simply does not enforce the right condition at runtime. The Moltbook case study is the canonical example: the Supabase public key in the client bundle was not inherently wrong, but with Row Level Security disabled, that key became a database backdoor. No scanner would have flagged it, because no scanner audits your database access control policies by actually attempting unauthorized access.
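A short script can do what a scanner cannot here: act as an anonymous visitor holding only the public key, and see what the database hands back. The sketch below assumes Supabase's REST interface (GET /rest/v1/<table>, with the key sent in the apikey and Authorization headers). The project URL, key, and table names are placeholders. It separates the HTTP call from the verdict, so the verdict can be exercised with a fake reader.

```python
import json
import urllib.request


def anon_read(base_url, anon_key, table, limit=5):
    """Read a table the way an unauthenticated visitor could, using only the public anon key.

    Raises urllib.error.HTTPError if the request is refused outright.
    """
    req = urllib.request.Request(
        f"{base_url}/rest/v1/{table}?select=*&limit={limit}",
        headers={"apikey": anon_key, "Authorization": f"Bearer {anon_key}"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read())


def exposed_tables(read_rows, user_scoped_tables):
    """Return the user-scoped tables that hand rows to an anonymous caller.

    read_rows is any callable mapping a table name to a list of rows, so this can
    run against a live project or against a fake.
    """
    # Any row returned to the anon key means RLS is disabled or a policy is too broad.
    return [table for table in user_scoped_tables if read_rows(table)]


# Example wiring (placeholders, not real values):
# exposed_tables(lambda t: anon_read("https://YOUR-PROJECT.supabase.co", "ANON_KEY", t),
#                ["profiles", "messages"])
```

Run it only against projects you own, and list only tables that hold user-scoped data, since a table of public blog posts is supposed to return rows. With RLS enabled and no policy granting anonymous reads, these requests come back empty.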

The circularity problem in AI-generated testing is that asking the same AI to review its own work optimizes for confirmation, not correctness. Behavioral testing breaks that loop because it does not read the code at all. It runs the application and observes what it does.

We published a detailed scanner vs. behavioral testing comparison that shows exactly which vulnerability categories each approach catches. The short version: scanners find hardcoded credentials, dependency CVEs, and known code patterns. Behavioral tests find broken authorization, missing server-side validation, race conditions, and business logic failures. The founder who skips testing because the scanner came back clean has a false sense of security that is more dangerous than having no scanner at all. At least then you know the gap exists.

The bottom line: security scanners catch code-level patterns. Behavioral tests catch runtime logic failures. Vibe-coded apps need both because the most dangerous vulnerabilities are behavioral.

There is a third risk category that neither scanners nor behavioral tests catch directly: supply chain poisoning through dependency hallucination. AI code generation tools sometimes reference packages that do not exist. Attackers monitor these hallucinated package names, register them on npm or PyPI with malicious code, and wait for the next developer (or AI tool) to install them. This attack vector, sometimes called "slopsquatting," is a supply chain risk unique to AI-generated code. Software composition analysis (SCA) tools help here, but the broader point stands: vibe coding security requires multiple verification layers because no single tool covers every risk category.
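One inexpensive layer assumes your team keeps a list of dependencies someone has actually reviewed. Diff the manifest against that allowlist, so any package the AI introduced, real or hallucinated, gets a human look before anyone installs it. Checking only that a name exists on PyPI or npm is not enough, because a slopsquatted package exists precisely because an attacker registered it. The sketch reads a requirements.txt-style file. The allowlist and the package name flask-jwt-sessionguard are made up for illustration.

```python
import re


def normalize(name: str) -> str:
    # PEP 503 normalization: case-insensitive, and runs of -, _ and . are equivalent.
    return re.sub(r"[-_.]+", "-", name).lower()


def unvetted_dependencies(requirements_text: str, vetted: set[str]) -> list[str]:
    """List requirement names that are not on the team's reviewed allowlist.

    A sketch: it handles plain 'name[extras]<op>version' lines, not VCS or URL requirements.
    """
    vetted_norm = {normalize(v) for v in vetted}
    flagged = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options such as -r
            continue
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match and normalize(match.group(0)) not in vetted_norm:
            flagged.append(match.group(0))
    return flagged
```

Failing CI when this list is non-empty turns "the AI added a package" into a review step instead of a silent install.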

Behavioral Testing: The Vibe Coding Security Layer Founders Overlook

Everyone in the vibe coding security conversation converges on the same recommendation: scan your code, check your dependencies, review AI output carefully. What none of the security vendors publishing those articles mentions is that behavioral testing is where the most dangerous vulnerabilities get caught.

This is not an accident. Security vendors sell scanners. Testing is not their product.

The behavioral vulnerabilities that matter most for founders are not exotic. They are consistent and predictable failures of AI-generated code. Authorization enforcement is where to start: for every API endpoint that returns or modifies user-scoped data, the question is whether the server checks that the requesting user owns that resource. A scanner reads the code and sees that an authorization function is called. A test authenticates as User A, calls the endpoint with User B's resource ID, and verifies the response is 403. These are different questions with different answers.
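The difference between "an authorization function is called" and "the right condition is enforced" fits in a few lines. This minimal Python sketch uses hypothetical in-memory invoices, with plain functions in place of HTTP handlers. Both handlers call require_login, so a reader skimming for an auth check finds one in each. Only the second compares the invoice owner to the caller. The behavioral check asks the one question that separates them.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    id: str
    owner_id: str
    amount_cents: int


# Hypothetical data: user_a and user_b each own one invoice.
INVOICES = {
    "inv_a": Invoice("inv_a", owner_id="user_a", amount_cents=4_900),
    "inv_b": Invoice("inv_b", owner_id="user_b", amount_cents=9_900),
}


def require_login(user_id):
    # The call a code reader sees and counts as "authorization is present".
    return user_id is not None


def get_invoice_buggy(user_id, invoice_id):
    if not require_login(user_id):
        return 401, None
    invoice = INVOICES.get(invoice_id)
    if invoice is None:
        return 404, None
    return 200, invoice  # any logged-in user is allowed: ownership is never checked


def get_invoice_fixed(user_id, invoice_id):
    if not require_login(user_id):
        return 401, None
    invoice = INVOICES.get(invoice_id)
    if invoice is None:
        return 404, None
    if invoice.owner_id != user_id:
        return 403, None  # authenticated, but not the owner
    return 200, invoice


def cross_user_status(handler):
    """Behavioral check: act as user_a, request user_b's invoice, report the status."""
    status, _ = handler("user_a", "inv_b")
    return status
```

Against the buggy handler the check reports 200, which is a data leak. Against the fixed one it reports 403. Some teams return 404 instead of 403 for another user's resource, so the response does not confirm the ID exists. Either choice is defensible, as long as the test asserts whichever status your API promises and never accepts 200.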

Input validation is the second major category. Vibe coding tools routinely implement validation in React components and skip it in API handlers. The form validates correctly. The API endpoint has no server-side validation. A test that calls the API directly with empty strings, oversized inputs, and unexpected types discovers this in seconds. A scanner reads the component, sees validation logic, and marks it present.
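Here is a minimal Python sketch of the server-side half. A hypothetical create_project handler and a 100-character name limit stand in for whatever your form enforces. The list of payloads is the point: each is something the form would never submit but a direct API call can, and every one should come back as a 400.

```python
MAX_NAME_LEN = 100  # hypothetical limit mirroring what the form enforces


def create_project(payload):
    """Hypothetical API handler that validates on the server, not just in the form."""
    name = payload.get("name") if isinstance(payload, dict) else None
    if not isinstance(name, str):
        return 400, {"error": "name must be a string"}
    name = name.strip()
    if not name:
        return 400, {"error": "name is required"}
    if len(name) > MAX_NAME_LEN:
        return 400, {"error": f"name must be at most {MAX_NAME_LEN} characters"}
    return 201, {"name": name}


# Payloads a browser form would never send, but a direct API call can.
HOSTILE_PAYLOADS = [
    {},                      # missing field
    {"name": ""},            # empty string
    {"name": "   "},         # whitespace only
    {"name": "x" * 10_000},  # oversized input
    {"name": 12345},         # wrong type
    {"name": ["a", "b"]},    # wrong type
    None,                    # not even a JSON object
]


def rejected_all(handler):
    """True only if the handler answers every hostile payload with a 400."""
    return all(handler(p)[0] == 400 for p in HOSTILE_PAYLOADS)
```

A handler that trusts the form, such as `lambda payload: (201, payload)`, fails this check on the very first payload.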

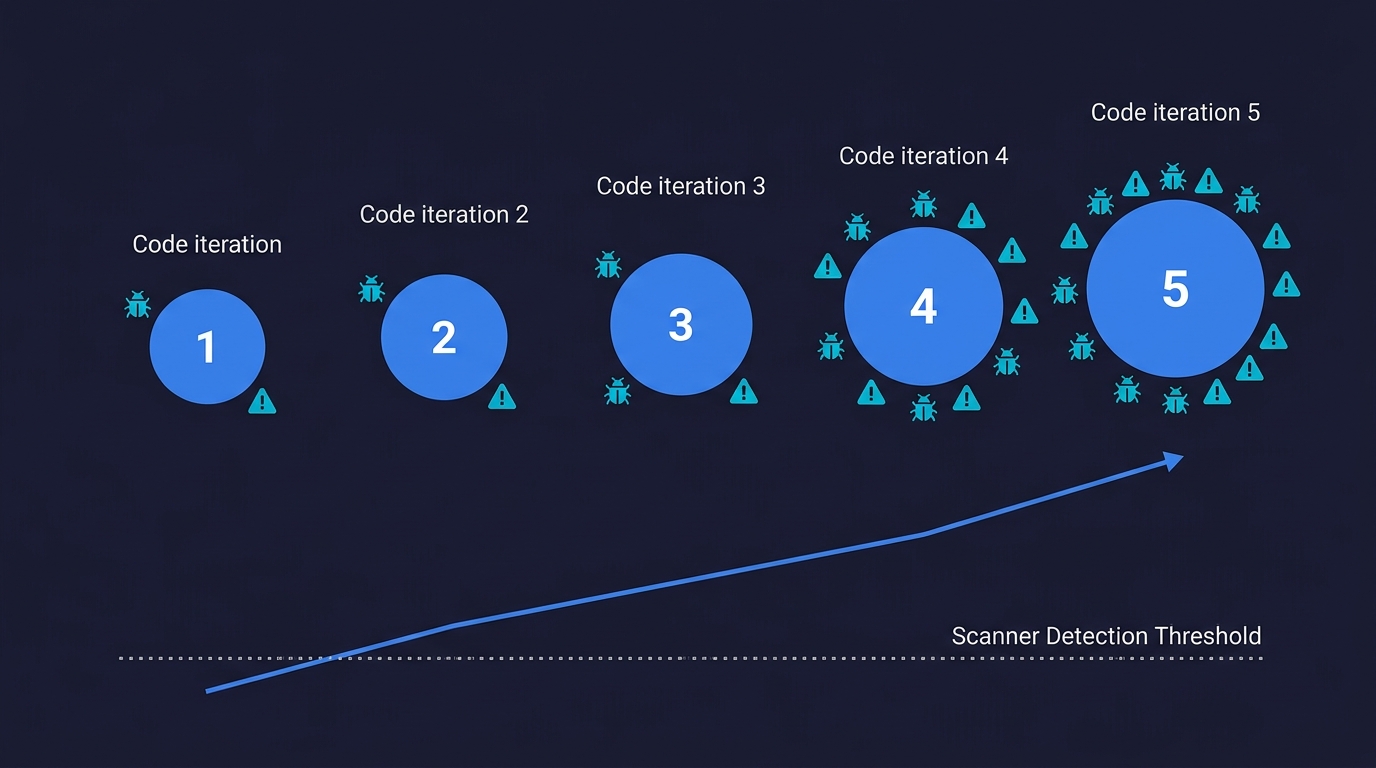

The iteration risk compounds these problems in a way founders rarely account for. Kaspersky research found that after just five rounds of AI code modification, a codebase contained 37% more critical vulnerabilities than the initial generation, even when prompts explicitly emphasized security. Each prompt that regenerates a component without a test suite to catch what changed does not hold risk steady. It compounds it. Your app at iteration 20 is likely less secure than your app at iteration 1, and neither you nor your scanner has visibility into what shifted.

The regression dimension is what makes testing genuinely irreplaceable rather than just complementary. A scanner reruns on every commit and catches new pattern matches. A behavioral test reruns on every deploy and catches authorization regressions introduced by a prompt that regenerated a route handler without preserving the access control logic. The vibe coding best practices checklist covers the four phases of testing in detail. The point here is why testing belongs in the security conversation at all, not just the quality conversation: because the security failures that reach users in vibe-coded apps are mostly behavioral, not structural, and behavior is what tests observe.

A Practical Security Testing Approach for Vibe-Coded Apps

You do not need a QA team or a full test suite to close the most dangerous gaps. You need a small number of high-value tests running on every deploy.

Start with authentication. Write tests that cover login with valid credentials, attempted access to protected routes without a session, and access with an expired session. These tests do not require security expertise. They require treating authentication as a feature with observable behavior and verifying that behavior. A test that requests /dashboard without a valid session and asserts a 401 response is three lines and prevents the most common class of auth misconfiguration.
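Here is a minimal Python sketch of those three tests. It uses a hypothetical in-memory session store in place of your framework's: tokens map to a user and an expiry time. The handler takes an explicit `now`, so the expiry case can be tested without waiting. Against a real app, each test would be an HTTP request to /dashboard instead of a function call.

```python
import secrets
import time

# Hypothetical session store: token -> (user_id, expires_at as a Unix timestamp).
SESSIONS = {}


def login(user_id, ttl_seconds=3600.0, now=None):
    now = time.time() if now is None else now
    token = secrets.token_urlsafe(16)
    SESSIONS[token] = (user_id, now + ttl_seconds)
    return token


def get_dashboard(token, now=None):
    """Hypothetical protected route: 200 for a live session, 401 otherwise."""
    now = time.time() if now is None else now
    session = SESSIONS.get(token) if token else None
    if session is None:
        return 401  # no session, or a token the server never issued
    _user_id, expires_at = session
    if now >= expires_at:
        return 401  # the session exists but has expired
    return 200


# pytest-style tests: each is a few lines and checks one observable behavior.
def test_dashboard_requires_session():
    assert get_dashboard(None) == 401


def test_dashboard_rejects_expired_session():
    token = login("user_a", ttl_seconds=60, now=1_000.0)
    assert get_dashboard(token, now=1_061.0) == 401


def test_dashboard_allows_valid_session():
    token = login("user_a", ttl_seconds=60, now=1_000.0)
    assert get_dashboard(token, now=1_030.0) == 200
```

If your dashboard is a page that redirects to a login screen rather than an API route, assert the redirect instead of the 401.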

Add cross-user authorization tests for every endpoint that returns or modifies user-scoped data. Authenticate as User A. Retrieve a resource ID belonging to User B. Assert 403. This pattern catches BOLA/IDOR (OWASP API Security #1), the most common critical API vulnerability class, and it takes minutes to write per endpoint once your framework is set up. For a structured approach to what to test and in what order, the vibe coding best practices checklist has the four-phase framework.
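The pattern is identical for every endpoint, so it lends itself to a table. List each user-scoped endpoint once, with a resource owned by User B, then run User A against all of them. This Python sketch uses hypothetical in-process handlers. One of them, get_invoice, deliberately omits the ownership check so the table has something to catch.

```python
# Hypothetical resources, keyed by ID, each recording its owner.
RESOURCES = {
    "doc_b": {"owner": "user_b", "kind": "document"},
    "inv_b": {"owner": "user_b", "kind": "invoice"},
}


def owned(user, resource_id):
    resource = RESOURCES.get(resource_id)
    return resource is not None and resource["owner"] == user


def get_document(user, rid):
    return 200 if owned(user, rid) else 403


def delete_document(user, rid):
    return 204 if owned(user, rid) else 403


def get_invoice(user, rid):
    return 200 if rid in RESOURCES else 404  # deliberate bug: no ownership check


# Every endpoint that reads or modifies user-scoped data, paired with a resource owned by user_b.
ENDPOINTS = [
    ("GET /documents/{id}", get_document, "doc_b"),
    ("DELETE /documents/{id}", delete_document, "doc_b"),
    ("GET /invoices/{id}", get_invoice, "inv_b"),
]


def bola_failures(endpoints, attacker="user_a"):
    """Return every endpoint that does not answer 403 when the attacker touches user_b's resource."""
    return [name for name, handler, rid in endpoints if handler(attacker, rid) != 403]
```

With this layout, adding an endpoint to the app means adding one row to ENDPOINTS. That row is also what a reviewer can ask about when a new route appears.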

Test your API directly, not through your UI. UI tests verify the happy path through the interface. Security tests need to bypass the interface entirely and call the API as an attacker would. In Playwright, this means using request.get() and request.post() directly against your endpoints. In any framework, the pattern is the same: authenticate, get a token, make raw API calls, assert on the response.
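Playwright's request fixture is one way to do this. The same pattern (authenticate, take a token, call the API) also works with nothing but Python's standard library. So that the sketch runs on its own, it starts a throwaway local server (DemoAPI) with hypothetical /login and /api/notes/<id> routes and hard-coded demo credentials. Against your app, you would drop the server and point run_checks at a staging URL.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical demo data: password -> token, token -> user, note id -> note.
TOKENS = {"alice-pw": "tok_alice", "bob-pw": "tok_bob"}
USERS = {"tok_alice": "alice", "tok_bob": "bob"}
NOTES = {"1": {"owner": "alice", "text": "alice's note"}, "2": {"owner": "bob", "text": "bob's note"}}


class DemoAPI(BaseHTTPRequestHandler):
    """Stand-in for your backend. It enforces ownership, so the checks below should pass."""

    def _send(self, status, body):
        data = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_POST(self):
        if self.path != "/login":
            return self._send(404, {})
        length = int(self.headers.get("Content-Length", 0))
        password = json.loads(self.rfile.read(length) or b"{}").get("password")
        token = TOKENS.get(password)
        return self._send(200, {"token": token}) if token else self._send(401, {})

    def do_GET(self):
        user = USERS.get(self.headers.get("Authorization", "").removeprefix("Bearer "))
        if user is None:
            return self._send(401, {})
        prefix = "/api/notes/"
        note = NOTES.get(self.path[len(prefix):]) if self.path.startswith(prefix) else None
        if note is None:
            return self._send(404, {})
        if note["owner"] != user:
            return self._send(403, {})
        return self._send(200, note)

    def log_message(self, *args):  # keep output quiet
        pass


def start_demo_server():
    server = ThreadingHTTPServer(("127.0.0.1", 0), DemoAPI)  # port 0 picks any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def call(method, url, token=None, body=None):
    """Make a raw HTTP call and return (status, parsed JSON), including 4xx responses."""
    data = json.dumps(body).encode() if body is not None else None
    req = urllib.request.Request(url, data=data, method=method)
    req.add_header("Content-Type", "application/json")
    if token:
        req.add_header("Authorization", f"Bearer {token}")
    try:
        with urllib.request.urlopen(req, timeout=5) as resp:
            return resp.status, json.loads(resp.read() or b"{}")
    except urllib.error.HTTPError as err:  # urllib raises on 4xx/5xx; we want the status
        return err.code, json.loads(err.read() or b"{}")


def run_checks(base_url):
    """Log in as alice, then hit the API directly: her note, bob's note, and no token."""
    status, body = call("POST", f"{base_url}/login", body={"password": "alice-pw"})
    assert status == 200, status
    token = body["token"]
    return {
        "own_note": call("GET", f"{base_url}/api/notes/1", token)[0],
        "other_users_note": call("GET", f"{base_url}/api/notes/2", token)[0],
        "no_token": call("GET", f"{base_url}/api/notes/1")[0],
    }


if __name__ == "__main__":
    server = start_demo_server()
    try:
        print(run_checks(f"http://127.0.0.1:{server.server_port}"))
    finally:
        server.shutdown()
        server.server_close()
```

Against a correct backend, run_checks should report 200 for the user's own note, 403 for another user's note, and 401 without a token. Any 200 in the last two slots is the bug this section is about.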

The third category is plan and permission boundaries. If your app has a free tier with limits, test that the limits are enforced server-side. Create a free-tier user via your API, reach the limit, then attempt to exceed it by calling the endpoint directly. Vibe-coded apps routinely enforce these limits only in the UI component. The test finds out in one request.
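Here is a minimal Python sketch of that test, assuming a hypothetical in-memory ProjectAPI and a free-tier limit of three projects. The enforce_limit_on_server flag models two versions of the app. In one, the limit lives only in the UI, so the endpoint itself never checks. In the other, the endpoint checks. The test fills a free account to the limit through the API and then asks for one more.

```python
FREE_TIER_PROJECT_LIMIT = 3  # hypothetical plan limit


class ProjectAPI:
    """Hypothetical project-creation endpoint backed by an in-memory store."""

    def __init__(self, enforce_limit_on_server: bool):
        self.enforce = enforce_limit_on_server
        self.projects: dict[str, list[str]] = {}
        self.plans: dict[str, str] = {}

    def create_user(self, user_id: str, plan: str = "free") -> None:
        self.plans[user_id] = plan
        self.projects[user_id] = []

    def create_project(self, user_id: str, name: str) -> int:
        owned = self.projects[user_id]
        if self.enforce and self.plans[user_id] == "free" and len(owned) >= FREE_TIER_PROJECT_LIMIT:
            return 403  # limit enforced where a client cannot skip it
        owned.append(name)
        return 201


def limit_holds(api: ProjectAPI) -> bool:
    """Behavioral test: fill a free account to its limit, then try one more via the API."""
    api.create_user("free_user")
    for i in range(FREE_TIER_PROJECT_LIMIT):
        assert api.create_project("free_user", f"project-{i}") == 201
    return api.create_project("free_user", "one-too-many") == 403
```

The sketch returns 403 for the over-limit request. 402 or 429 are also common choices, and the test should assert whatever your API documents.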

Before you build any of that: check for exposed credentials immediately. Search your deployed frontend bundle for strings matching the pattern of your database connection strings, API keys, and service tokens. This takes five minutes and addresses the most immediately catastrophic category of vibe coding risk. If your Supabase anon key is in the bundle, verify that row-level security is properly configured on every table it can reach. The anon key being "designed to be public" is not a complete defense without the RLS policies to back it up.
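That search is easy to script. The Python sketch below walks a build output directory (dist/ or .next/static/ are typical; adjust for your tool) and flags a few well-known secret formats: Postgres connection strings, Stripe live secret keys, AWS access key IDs, and Supabase's JWT-format keys. For those keys, the role claim distinguishes the anon key from the service_role key, which bypasses RLS entirely. The pattern list is illustrative, not exhaustive. A dedicated secret scanner covers far more formats.

```python
import base64
import json
import re
from pathlib import Path

# Illustrative patterns for secrets that should never ship to a browser.
SECRET_PATTERNS = {
    "postgres connection string": re.compile(r"postgres(?:ql)?://[^\s\"'`]+"),
    "Stripe live secret key": re.compile(r"sk_live_[0-9A-Za-z]{10,}"),
    "AWS access key ID": re.compile(r"AKIA[0-9A-Z]{16}"),
}
JWT_PATTERN = re.compile(r"eyJ[A-Za-z0-9_-]+\.eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+")


def _redact(secret):
    # Never print a full secret into CI logs.
    return secret[:12] + "..."


def jwt_role(token):
    """Decode a JWT payload WITHOUT verifying the signature and return its role claim."""
    payload = token.split(".")[1]
    try:
        claims = json.loads(base64.urlsafe_b64decode(payload + "=" * (-len(payload) % 4)))
    except ValueError:  # covers binascii.Error, UnicodeDecodeError and JSONDecodeError
        return None
    return claims.get("role") if isinstance(claims, dict) else None


def scan_text(text):
    findings = []
    for label, pattern in SECRET_PATTERNS.items():
        findings += [(label, _redact(m.group(0))) for m in pattern.finditer(text)]
    for match in JWT_PATTERN.finditer(text):
        role = jwt_role(match.group(0))
        if role == "service_role":
            findings.append(("Supabase service_role key (bypasses RLS)", _redact(match.group(0))))
        elif role == "anon":
            findings.append(("Supabase anon key (safe only if RLS covers every table)", _redact(match.group(0))))
    return findings


def scan_bundle(build_dir):
    """Scan every .js file under a build output directory and map file path -> findings."""
    results = {}
    for path in Path(build_dir).rglob("*.js"):
        findings = scan_text(path.read_text(encoding="utf-8", errors="ignore"))
        if findings:
            results[str(path)] = findings
    return results
```

A service_role hit is an immediate key rotation. An anon hit is expected in a Supabase app, and it is the cue to check RLS table by table.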

For founders who want to keep the vibe coding workflow without inheriting the manual testing burden, Autonoma connects to your codebase and three agents handle test generation, execution, and maintenance automatically. When your vibe-coded UI changes, the tests adapt. The goal is continuous behavioral coverage that runs on every deploy without requiring you to write or maintain test scripts.

The Speed Paradox: Why Testing Accelerates Vibe Coding

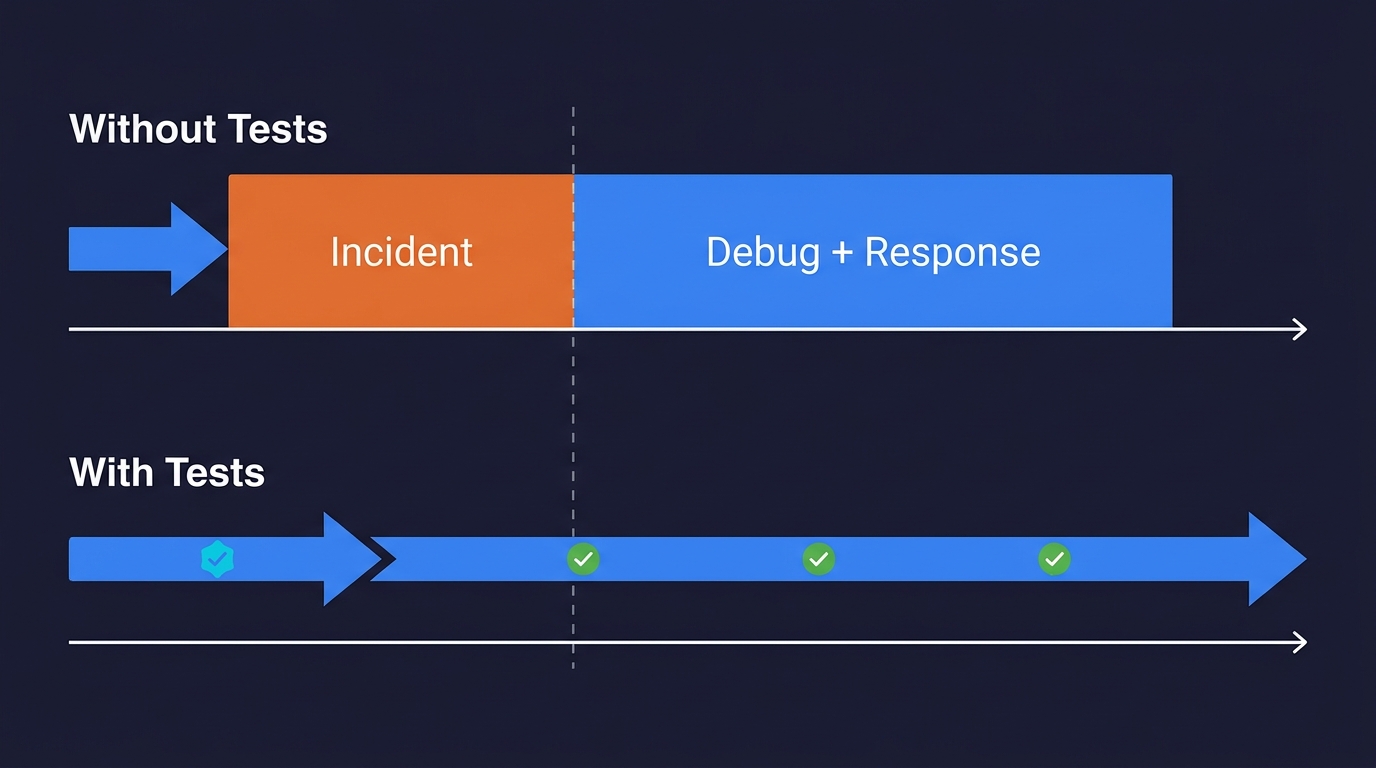

The predictable objection: "I'm using vibe coding because I want to move fast. Tests slow me down."

This framing is wrong in a specific way. Tests slow down the first deploy marginally. Incident response is measured in days. The question is not whether tests slow down your initial ship. It is whether they slow down your tenth ship, and whether they prevent the incident response cycle that resets your momentum.

A vibe-coded app without tests ships fast and discovers bugs when users find them. The debugging cycle for a production incident consistently consumes more time than skipping tests saved in the first place: identifying the issue, locating the root cause in AI-generated code you do not fully understand, fixing it without breaking something else, deploying the fix, and monitoring for regressions. And that is for a functional bug. A security incident adds incident response, customer communication, and potential legal consultation to that timeline.

A vibe-coded app with a small suite of behavioral tests ships at almost the same speed and discovers bugs before users see them. The developer sees a failing test, prompts the tool to fix the specific issue the test describes, and ships the corrected version. The loop closes in minutes instead of days. The customer never encounters the vulnerability.

There is also a better-prompting dimension. "The test at line 47 verifies that a free-tier user cannot exceed their project limit by calling the API directly. It is failing because the limit check is missing from the API handler. Add server-side limit enforcement to the project creation endpoint." That prompt produces a targeted fix. Compare it with "something is wrong with how plan limits work, some users might be able to create too many projects," which produces an unfocused investigation.

Tests make the feedback loop faster, not slower. A failing test is a precise bug report that the AI tool can act on directly. A production incident is a debugging investigation that might run for hours before you even know what broke. For a deeper look at how this plays out across a startup's lifecycle, vibe coding at startups covers the full decision framework including when the speed borrowing stops compounding in your favor.

The production readiness assessment gives you a 12-question framework to score your app's current state. It is the honest answer to "is my vibe-coded app ready to ship," and testing coverage is a meaningful component of that score.

Is Vibe Coding Safe? What to Check Before You Ship

The risk profile of a vibe-coded app is not uniform. It depends on what you are shipping, who will use it, and what data it handles. A throwaway prototype with no real user data has a different risk surface than a B2B SaaS handling payment information and personally identifiable data.

The founder conversation about vibe coding risks usually happens after the first incident, not before. The goal of this piece is to move that conversation earlier.

Scan your code. Run Snyk or Semgrep. Check your dependencies. This is table stakes and takes less than an hour to set up. But do not stop there, because the scanner cannot tell you whether your authorization logic is correct at runtime, whether your API validates input that the form would never allow, or whether your plan limits exist anywhere except the React component.

Run behavioral tests on your authentication flows, your cross-user authorization, and your critical business logic. Get those tests running in CI on every deploy. For the full testing checklist by phase, see vibe coding best practices. For the case studies of what happened to founders who shipped without this layer, see vibe coding failures.

The scanner tells you what the code looks like. The test tells you what the application does. For a vibe-coded app, both questions need answers before you ship to paying customers.

The highest-consequence risks for founders are behavioral vulnerabilities that scanners miss: broken authorization (one user accessing another's data), missing server-side input validation, plan limits enforced only in the UI, and session management failures. These do not appear as known vulnerability patterns in static code, so security scanners do not catch them. They only appear when the application runs, which is why behavioral testing is the missing layer. Beyond the technical side, the business risks include fundraising damage after a security incident, customer churn, regulatory exposure if you handle personal or payment data, and IP ownership ambiguity in AI-generated codebases.

Security scanners alone are not enough. They are pattern matchers that examine static code, and they find hardcoded credentials, dependency CVEs, and known vulnerability signatures. They cannot run your application, authenticate as a user, or verify whether authorization logic actually enforces the right conditions at runtime. The most dangerous vulnerabilities in vibe-coded apps are behavioral: an authorization check that looks correct in code but does not enforce the right user scope, a form that validates input but whose API endpoint does not, a database with missing row-level security policies. These require behavioral testing to catch. The right approach is scanners plus behavioral tests, not either/or.

Static scanning examines your source code against a database of known vulnerability patterns. It catches hardcoded credentials, dependency CVEs, SQL injection patterns, and deprecated cryptographic functions. Behavioral testing runs your application and observes what it actually does at runtime. It catches broken authorization (User A accessing User B's data), missing server-side input validation, race conditions, and business logic failures. For vibe-coded apps, the most dangerous vulnerabilities are behavioral because AI tools generate structurally correct code that fails at the logic level. You need both layers: scanners for code-level patterns, behavioral tests for runtime logic failures.

Start with manual walkthrough testing of your critical flows: signup, login, any action that touches user data, and any flow that involves payment or plan limits. Document what should happen at each step and verify it happens. For automated testing without writing code, Autonoma connects to your codebase and agents generate tests automatically from your routes and components, no test scripts required.

Check for exposed credentials in your frontend bundle. Search your deployed JavaScript files for strings matching the pattern of your database connection strings, API keys, and service tokens. This takes five minutes and catches the most immediately catastrophic category of vibe coding vulnerability: credentials that give unauthenticated users database access. If you find a Supabase anon key in your bundle, verify that row-level security is configured on every table it can access. After that, test your authorization logic directly via API calls rather than through the UI, to verify that one authenticated user cannot access another user's data.

Testing slows you down marginally on the first ship, and far less than incident response does on every ship after that. A failing test tells you precisely what broke and where. A production incident tells you that something is wrong after a user has already encountered it. The debugging cycle for a production security incident in AI-generated code you do not fully understand routinely takes longer than the time you saved by skipping tests. Tests also make AI tools more useful as a debugging partner, because a failing test is a precise, actionable prompt. 'The test at line 42 fails because the API returns 200 instead of 403 when User A requests User B's data. Fix the authorization check in the invoices endpoint' produces a targeted fix in seconds.

In regulated environments (HIPAA, PCI DSS, SOC 2), vibe coding risks are asymmetric: the downside of a security incident is not a sprint of cleanup but potential regulatory action, mandatory breach notification, and liability that can be existential for an early-stage company. AI-generated code has no auditable reasoning, which creates compliance problems even before a security issue occurs. Before shipping a regulated product built primarily through vibe coding, get a security-focused code review and behavioral test coverage on your data handling flows.