Vibe coding, a term coined by Andrej Karpathy in February 2025, is the practice of building software primarily through AI-generated code with minimal manual oversight, often using tools like Cursor, Windsurf, or Claude Code to ship entire features from natural language prompts. At startups, vibe coding is genuinely valuable for MVPs, prototypes, and internal tools where speed outweighs code quality. It breaks down in customer-facing production systems, regulated environments, and anywhere code needs to be owned and understood by a team over time. The path forward is not "vibe code everything" or "vibe code nothing." It is knowing which use cases are safe, which need guardrails, and which require automated testing to compensate for the gaps that fast AI-generated code always leaves behind.

You built your MVP in a weekend. Cursor wrote most of it. You showed investors on Monday and closed a round two weeks later. Now it is six months later. Your codebase has grown to 40,000 lines, 95% of which were generated by AI. A new engineer joined last week and has been reading code for three days without being able to explain what anything does. Three production bugs this month, all in code you do not fully understand, all symptoms of the technical debt that vibe coding leaves behind. You are not alone. About 25% of YC W25 startups reported codebases that were 95% AI-generated. Most of those founders are now living in the same kind of house you built and discovering the same leaks.

The question is not whether vibe coding was the wrong call. It probably was not. The question is what to do now, and how to make better calls going forward.

When Vibe Coding Actually Works at Startups

Before diagnosing the failure modes, it is worth being honest about why vibe coding spread so fast. It genuinely works in certain contexts, and dismissing it entirely is as wrong as embracing it uncritically.

MVPs and prototypes are the clearest win. You need something working quickly to test a hypothesis. The code does not need to be maintainable because you will probably throw most of it away. Addy Osmani, Google's engineering director, draws a sharp distinction here: "Vibe Coding Is Not AI-Assisted Engineering." He means that vibe coding is a prototyping tool, not a software engineering methodology. When you treat it as the former, it delivers. When you treat it as the latter, it eventually fails.

The speed upside for vibe-coded MVPs is real and well documented at this point. Anything (a vibe coding platform) hit $2M ARR in its first two weeks and raised at a $100M valuation. The founders were former Google engineers who had the technical judgment to evaluate what the AI generated. That context matters: the speed wins at the MVP stage go to people who can tell the difference between good and bad AI output.

Internal tools are a reasonable middle ground. An admin dashboard, a data export script, a Slack bot that surfaces analytics to your team. These tools have smaller user bases, lower stakes when they break, and limited security exposure. The cost of a bug is a frustrated internal user, not churned revenue. Vibe coding internal tools, with some light review, is defensible at nearly any stage.

Exploration and research spikes work well. You want to know if a third-party API does what you think it does, or whether a given architecture is feasible. Generating a working prototype in an afternoon to answer a technical question costs nothing and accelerates real decisions. The code goes in a branch and probably never merges.

Should I Vibe Code My MVP?

Yes, if you are pre-launch, testing a hypothesis, and willing to refactor after validation. No, if you are building in a regulated industry, need production reliability from day one, or lack the technical judgment to evaluate what the AI generates.

Vibe coding your MVP is likely the right call when the codebase is small and the stakes are low. The speed advantage is real and the technical debt is manageable at that stage. The condition is intent: go in knowing that this code will need cleanup or a partial rewrite after validation. Treat it as a prototype that proved a market, not as the foundation of your production system.

The common thread across all three use cases above: low stakes, short timeframes, or exploratory intent. The moment any of those conditions changes, the calculus shifts.

When Vibe Coding Breaks at Startups

A DEV Community survey found 16 of 18 engineering leaders reported vibe coding disasters. That is not a coincidence. The failures follow predictable patterns.

The handoff problem. Code you do not understand is code nobody can own. When your first hire joins and asks "why does this function do that," and your answer is "I asked Cursor to make it work and it did," you have created an ownership vacuum. Engineers who cannot reason about the codebase they are maintaining are engineers who break things when they try to fix things. CodeRabbit found that AI-generated code increases code duplication by 4x, which means your new engineer is not just reading code they did not write. They are reading the same pattern repeated four times in slightly different forms without understanding which one is canonical.

Security and compliance. Vibe-coded systems have a consistent blind spot: they treat security as a feature that can be added at the end, and it cannot. Authentication edge cases, authorization logic, and data validation are areas where AI models produce plausible-looking but exploitable code. Without an engineer who understands what the generated code is doing at a system level, you cannot audit it for OWASP Top 10 vulnerabilities. In regulated environments like HIPAA, SOC 2, and PCI, this is not just a quality problem. It is a legal one. We covered the full scope of vibe coding security risks in a separate deep dive, including why security scanners alone are not enough.

Scale and team size. AI tools speed up individuals working alone on familiar problems. They slow down teams working together on evolving systems. (Even the individual speedup is not guaranteed: the METR study found experienced open-source developers were actually 19% slower with AI tools on mature repositories they already knew well, despite feeling faster.) As your team grows from two to ten, the coordination overhead of a vibe-coded codebase compounds.

Production reliability. AlterSquare audited five vibe-coded startup codebases and found the same pattern across all of them: inconsistent error handling, no defensive coding, brittle integrations with third-party services. Code that works in the happy path and breaks on any unexpected input. This is the natural output of a generation process that optimizes for "make this work" rather than "make this robust."
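To make the difference between "make this work" and "make this robust" concrete, here is a minimal sketch, assuming a read-only lookup against a third-party billing service. `FlakyClient`, `get_subscription`, and `UpstreamError` are hypothetical names invented for illustration, not any real provider's API.

```python
import time


class UpstreamError(Exception):
    """The third-party service failed or returned something unusable."""


class FlakyClient:
    """Hypothetical billing client that times out `failures` times, then succeeds."""

    def __init__(self, failures, response=None):
        self.failures = failures
        self.response = {"plan": "pro"} if response is None else response
        self.calls = 0

    def get_subscription(self, customer_id):
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("upstream timeout")
        return self.response


def plan_happy_path(client, customer_id):
    # "Make this work": assumes the call succeeds and the field exists.
    return client.get_subscription(customer_id)["plan"]


def plan_defensive(client, customer_id, retries=2, backoff_s=0.0):
    """Validate input, retry transient failures, and check the response shape."""
    if not isinstance(customer_id, str) or not customer_id.strip():
        raise ValueError(f"customer_id must be a non-empty string, got {customer_id!r}")

    last_error = None
    for attempt in range(retries + 1):
        try:
            response = client.get_subscription(customer_id)
        except ConnectionError as err:  # transient, and a read is safe to retry
            last_error = err
            time.sleep(backoff_s * (2 ** attempt))
            continue
        if not isinstance(response, dict) or "plan" not in response:
            raise UpstreamError(f"unexpected response shape: {response!r}")
        return response["plan"]
    raise UpstreamError(f"lookup failed after {retries + 1} attempts") from last_error
```

Both functions return the same value when the service behaves. Only the second one survives a timeout or a malformed response with an error you can act on. The retry is reasonable here because the call is a read; retrying a write such as a charge would need an idempotency mechanism first.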

The gap between a demo that works and production code that holds up is exactly what automated E2E testing covers. Autonoma is designed for vibe-coded startups specifically: it reads your codebase to generate and maintain tests without requiring you to write or understand a single test script.

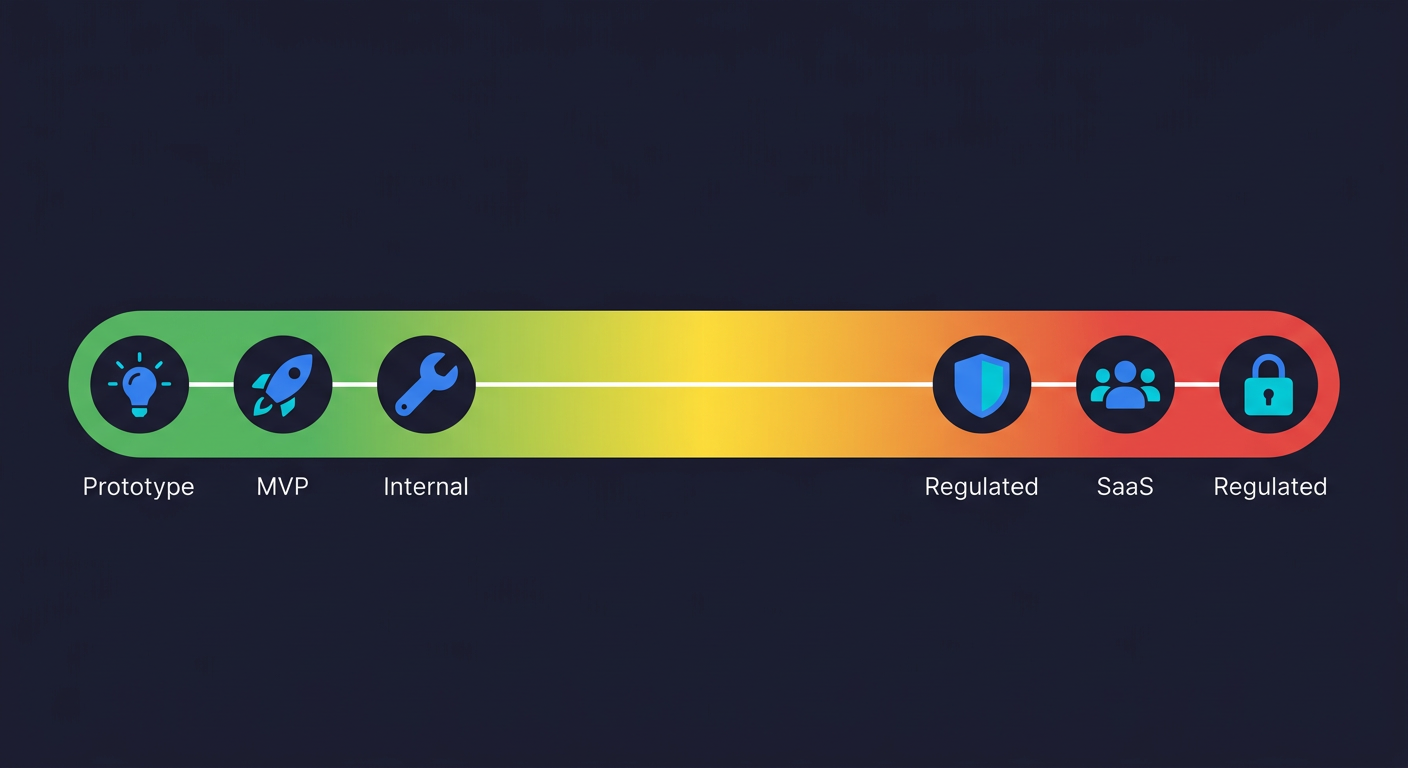

The Use-Case Matrix

Not all vibe coding decisions are equal. The risk profile depends heavily on what you are building and who will use it. Here is how we think about it:

| Use Case | Vibe Coding Safety | Why | What You Need |
|---|---|---|---|
| Throwaway prototype / spike | Safe | Low stakes, short life, no real users | Nothing extra |
| MVP (pre-launch) | Safe with intent to refactor | Fast iteration matters most; code will change | Plan a cleanup sprint after validation |
| Internal tool | Conditional | Limited blast radius; lower security exposure | Light code review; basic access controls |
| Customer-facing SaaS (post-launch) | Needs guardrails | Real users, real churn risk, production reliability matters | Automated E2E testing on critical flows, CI, monitoring |
| Regulated app (HIPAA / PCI / SOC 2) | Not safe without strong review | Compliance requires auditability and human ownership | Security audit, human code review, thorough test coverage |

The pattern is a gradient, not a binary. Early-stage, internal, exploratory: vibe coding is fine and probably optimal. Customer-facing, scaled, regulated: vibe coding needs to be counterbalanced by the things it skips.

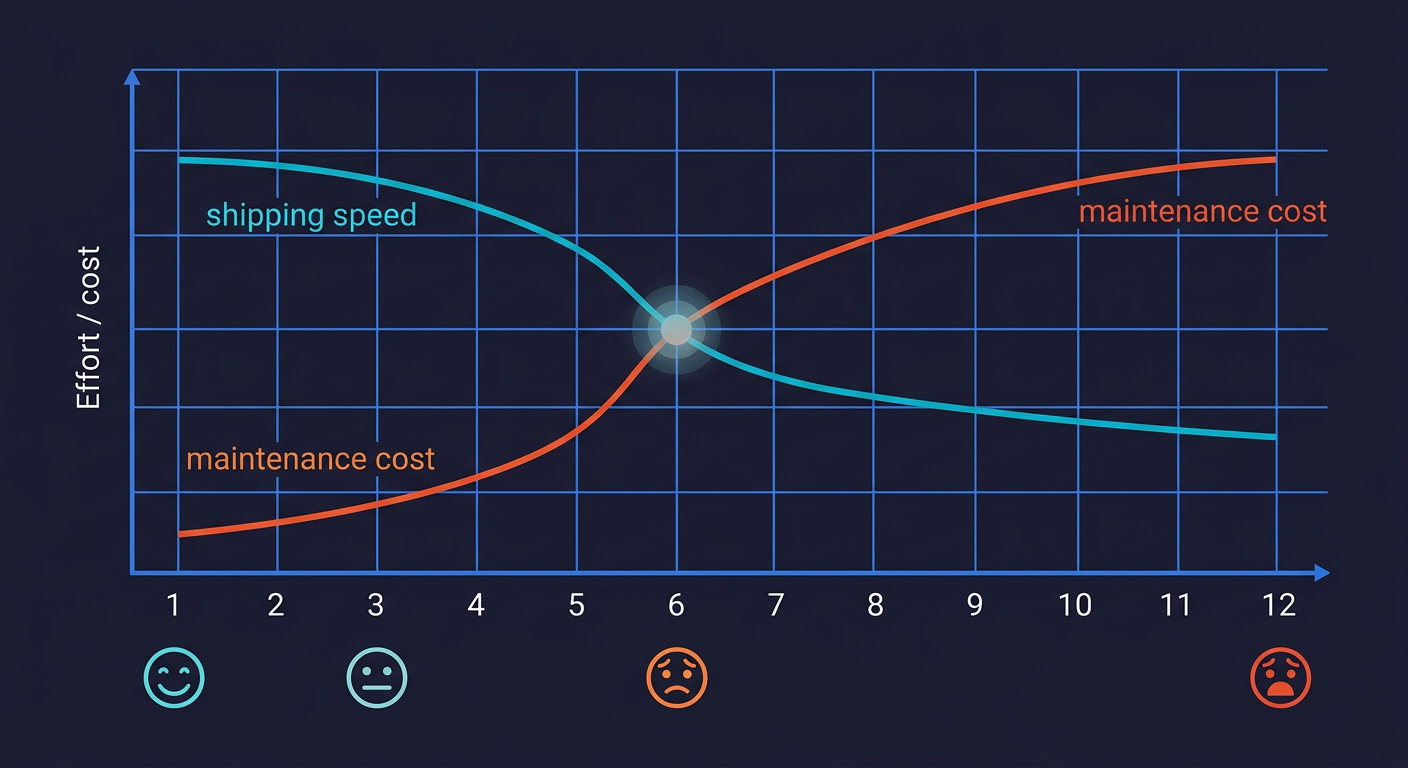

The Vibe Coding Hangover

The vibe coding hangover is the predictable phase, typically hitting 6-12 months after launch, when the speed borrowed from AI-generated code is repaid through escalating maintenance costs, unexplainable bugs, and engineer onboarding friction. It has a distinct texture.

The MVP worked. Investors saw it. Users signed up. The product is real. Now you are trying to add a feature that touches three different parts of the codebase and every change breaks something unexpected. A senior engineer you hired took one look at the codebase and asked for a refactor that would take six weeks. Your CI pipeline is red half the time and nobody trusts it. You are afraid to deploy on Fridays.

This is the vibe coding hangover. The speed you borrowed in months one and two is being repaid with interest in month six.

Academic research confirms this pattern. A study on vibe coding in practice found that the productivity flow of AI-assisted development systematically trades off against technical debt accumulation, producing architectural inconsistencies and security gaps that compound as projects scale.

The traffic data tells this story at an industry level too. Traffic to major vibe coding tools dropped roughly 50% in late 2025 before rebounding. This is part of the broader vibe coding bubble dynamic: building got democratized, but testing didn't follow. The rebound suggests the founders who stuck with it figured out how to make it sustainable.

What makes it sustainable is not stopping. It is adding the infrastructure that compensates for what vibe coding skips.

Vibe Coding Technical Debt: What It Looks Like

When AlterSquare audited those five startups, the common findings were not random. They were consistent: duplicated logic across modules, no clear separation of concerns, missing error handling, and zero test coverage. The CodeRabbit finding that AI-generated code increases duplication by 4x is not an outlier. It is the baseline pattern in any codebase built primarily through vibe coding. The debt is not invisible. It just requires someone with enough context to look at the right places, which is exactly the person you did not have when you were shipping fast.
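If you want a first look at where the duplication sits, a rough sketch like the following can help. It assumes a Python codebase and only flags functions whose arguments and bodies are structurally identical; the "slightly different forms" described earlier need fuzzier tooling. `find_duplicate_functions` and the sample source are illustrative, not part of any audit tool mentioned here.

```python
import ast
import hashlib
from collections import defaultdict


def find_duplicate_functions(source):
    """Group functions whose arguments and bodies are structurally identical.

    Returns a sorted list of name groups, one group per duplicated body.
    """
    groups = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # Dump the arguments and body but not the function name, so copies
            # that differ only by name get the same fingerprint.
            shape = ast.dump(node.args) + "".join(ast.dump(stmt) for stmt in node.body)
            fingerprint = hashlib.sha256(shape.encode()).hexdigest()
            groups[fingerprint].append(node.name)
    return sorted(sorted(names) for names in groups.values() if len(names) > 1)


SAMPLE = '''
def format_price(cents):
    return f"${cents / 100:.2f}"

def display_amount(cents):
    return f"${cents / 100:.2f}"

def format_tax(cents):
    return f"${cents * 0.2 / 100:.2f}"
'''

duplicates = find_duplicate_functions(SAMPLE)
```

Here `duplicates` contains one group, `format_price` and `display_amount`. `format_tax` is left out because its body differs. The output is a list of places to look, not a verdict on which copy is canonical.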

The refactor is not the answer. The instinct is to declare a refactor sprint and fix everything. In practice, refactoring a vibe-coded codebase without test coverage is just introducing new bugs to replace the old ones. Every senior engineer who has tried this will tell you the same thing: before you refactor, you need tests. Not after. The cost of not testing compounds exactly here, when you most need the safety net.
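One way to get tests in place before a refactor is a characterization test: record what the code does today, then check every rewrite against that record. The sketch below assumes a small pricing function; `legacy_discount`, its rules, and the recorded cases are invented for illustration.

```python
def legacy_discount(total_cents, coupon):
    """Stand-in for a vibe-coded function nobody fully understands."""
    if coupon == "WELCOME":
        total_cents = total_cents - total_cents * 10 // 100
    if coupon == "VIP" or total_cents > 100_00:
        total_cents = total_cents - 5_00
    return max(total_cents, 0)


# Recorded by running the current code, not by reasoning about what it
# "should" do. Each entry is ((total_cents, coupon), observed_result).
CHARACTERIZATION_CASES = [
    ((50_00, None), 50_00),
    ((50_00, "WELCOME"), 45_00),
    ((200_00, "WELCOME"), 175_00),
    ((3_00, "VIP"), 0),
]


def check_characterization(func):
    """Return (args, expected, actual) for every case where func drifts."""
    drift = []
    for args, expected in CHARACTERIZATION_CASES:
        actual = func(*args)
        if actual != expected:
            drift.append((args, expected, actual))
    return drift


def careless_refactor(total_cents, coupon):
    """A "cleaner" rewrite that silently drops the floor at zero."""
    if coupon == "WELCOME":
        total_cents -= total_cents * 10 // 100
    if coupon == "VIP" or total_cents > 100_00:
        total_cents -= 5_00
    return total_cents
```

`check_characterization(legacy_discount)` returns an empty list, while `check_characterization(careless_refactor)` reports that the `(3_00, "VIP")` case now yields a negative total. The recorded cases only protect the behavior they happen to cover, so add cases around every branch you plan to touch.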

What to Automate: The Bridge Between Speed and Reliability

The answer to the vibe coding hangover is not a cultural intervention or a hiring plan. It is automation. Specifically, automated testing and monitoring that runs continuously and catches what fast AI-generated code misses.

Start with E2E tests on your critical flows. Your checkout, your signup, your core activation path. These are the flows where a bug creates immediate, measurable business damage. A broken checkout that sits undetected over a weekend can cost a 10-person startup $8,000 to $25,000 when you account for lost revenue, engineering time, and support load. Our E2E testing playbook for startups covers exactly where to start.
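As a rough illustration of what a critical-flow test checks, here is a self-contained sketch that drives a signup flow over HTTP using only the standard library. `ToyApp` is a hypothetical stand-in for your application, and the `/signup` route and status codes are assumptions. A real E2E test would usually drive a browser against a staging deployment instead.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

USERS = {}  # in-memory stand-in for a real database


class ToyApp(BaseHTTPRequestHandler):
    """Hypothetical signup endpoint standing in for a real application."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = json.loads(self.rfile.read(length) or b"{}")
        email = body.get("email", "")
        if self.path != "/signup":
            self._reply(404, {"error": "not found"})
        elif "@" not in email:
            self._reply(400, {"error": "invalid email"})
        elif email in USERS:
            self._reply(409, {"error": "already registered"})
        else:
            USERS[email] = {"active": True}
            self._reply(201, {"email": email})

    def _reply(self, status, payload):
        data = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def log_message(self, *args):  # keep test output quiet
        pass


def post(port, path, payload):
    """POST JSON to the local server and return (status, decoded body)."""
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request("POST", path, body=json.dumps(payload),
                     headers={"Content-Type": "application/json"})
        resp = conn.getresponse()
        return resp.status, json.loads(resp.read())
    finally:
        conn.close()


def run_signup_flow():
    """Exercise signup over HTTP and return the observed status codes."""
    USERS.clear()
    server = HTTPServer(("127.0.0.1", 0), ToyApp)  # port 0 picks a free port
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return [
            post(port, "/signup", {"email": "a@example.com"})[0],  # happy path
            post(port, "/signup", {"email": "a@example.com"})[0],  # duplicate
            post(port, "/signup", {"email": "not-an-email"})[0],   # bad input
        ]
    finally:
        server.shutdown()
        server.server_close()
```

The point of the sketch is the shape of the test: it goes through the same entry point a user would, and it covers the unhappy paths (duplicate signup, bad input) alongside the happy one.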

The specific challenge with vibe-coded codebases is that scripted tests break constantly when the code changes, because vibe-coded code changes constantly. This is the core of the vibe coding testing gap: you need tests that keep up with your velocity. For teams without a dedicated QA function, shipping reliable software without a QA team means automating the testing layer itself, not just the tests.

CI/CD is non-negotiable at this stage. Every deploy should run your critical tests automatically. Not as a blocking gate at first, just as a signal. You want to know within minutes of a deploy whether something in your critical flows broke. Right now you find out when a user files a support ticket.
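The "signal first, gate later" idea can be sketched as a small runner whose exit code depends on a single flag. The function name, the check names, and the `blocking` parameter are assumptions for illustration; how you wire the exit code into your CI system depends on that system.

```python
def run_critical_checks(checks, blocking=False):
    """Run each named check and return (exit_code, report_lines).

    checks:   mapping of flow name -> zero-argument callable that raises on failure.
    blocking: False reports failures but still exits 0 (signal mode);
              True exits 1 on any failure (gate mode).
    """
    failures = []
    report = []
    for name, check in checks.items():
        try:
            check()
            report.append(f"PASS {name}")
        except Exception as err:  # any exception means the flow is broken
            failures.append(name)
            report.append(f"FAIL {name}: {err}")
    exit_code = 1 if (failures and blocking) else 0
    return exit_code, report


def signup_check():
    pass  # placeholder for a real critical-flow test


def checkout_check():
    raise RuntimeError("checkout returned 500")  # simulated breakage


signal_code, signal_report = run_critical_checks(
    {"signup": signup_check, "checkout": checkout_check}
)
gate_code, _ = run_critical_checks(
    {"signup": signup_check, "checkout": checkout_check}, blocking=True
)
```

In signal mode the deploy proceeds and the report shows the broken checkout within minutes. Flipping `blocking` to `True` later turns the same checks into a gate without rewriting them.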

Add monitoring for the things tests cannot cover. Error rates, latency spikes, unexpected status codes. A simple setup with Sentry or Datadog on your critical endpoints gives you a production signal that your test suite cannot. Tests tell you what you expected to break. Monitoring tells you what you did not expect.
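To show the kind of signal meant here, the following is a minimal sketch of a rolling error-rate check. It is not how Sentry or Datadog work internally, and the window size, threshold, and minimum sample count are arbitrary assumptions you would tune.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the share of 5xx responses over the last `window` requests."""

    def __init__(self, window=100, threshold=0.05, min_samples=20):
        self.statuses = deque(maxlen=window)  # oldest entries fall off automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, status_code):
        self.statuses.append(status_code)

    def error_rate(self):
        if not self.statuses:
            return 0.0
        errors = sum(1 for status in self.statuses if status >= 500)
        return errors / len(self.statuses)

    def should_alert(self):
        # Require a minimum number of samples so one early failure does not page anyone.
        enough_data = len(self.statuses) >= self.min_samples
        return enough_data and self.error_rate() > self.threshold
```

You would call `record` from request middleware on your critical endpoints and check `should_alert` on a timer. A hosted tool gives you the same signal with grouping, alert routing, and history on top.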

This is where Autonoma fits into vibe-coded startups. The problem with scripted E2E tests on fast-moving AI-generated codebases is that the tests become as hard to maintain as the code. Autonoma connects to your codebase, and its agents read your code to generate and maintain tests automatically. When your vibe-coded UI changes, the tests adapt. When you add a new flow, agents identify it and generate coverage. You keep the speed of vibe coding without inheriting the reliability gap. For more on how we think about this, see our overview of agentic testing.

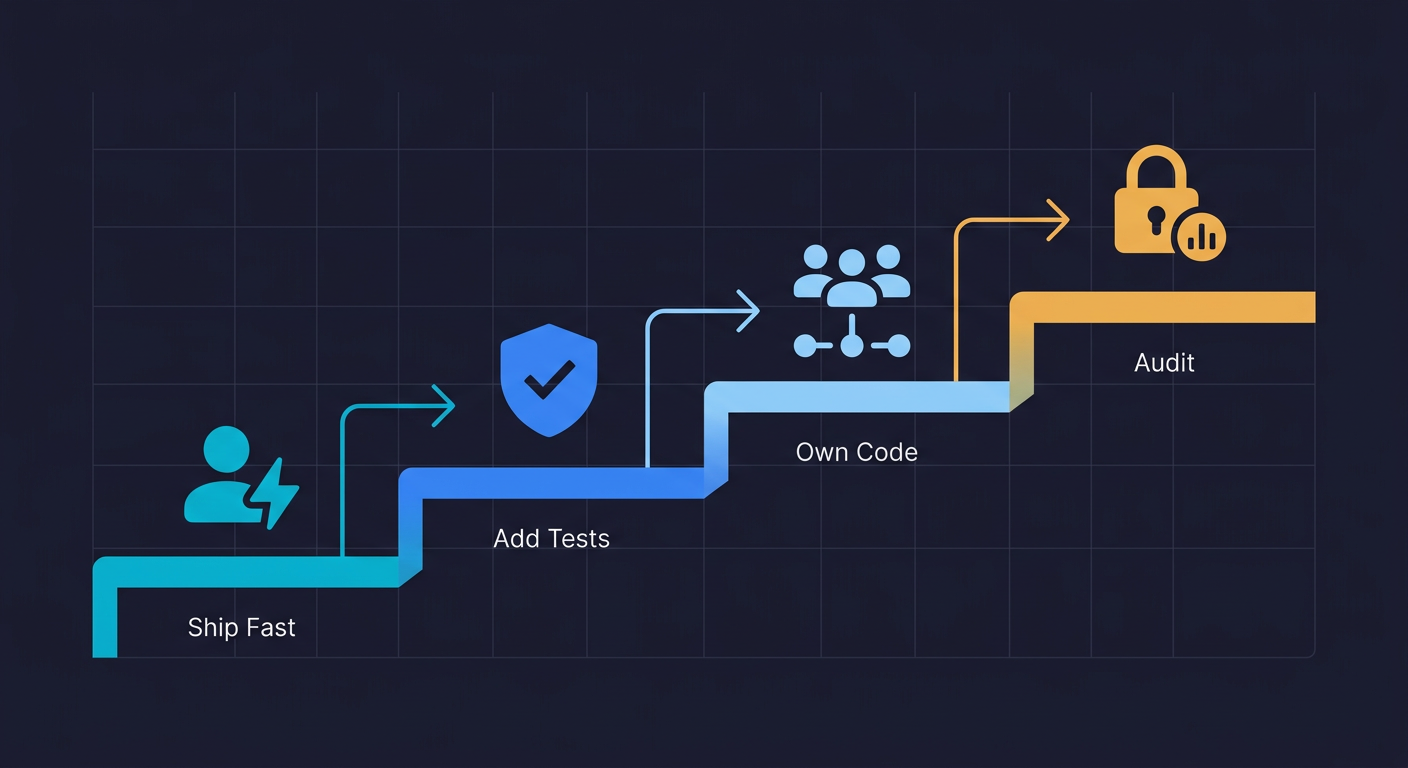

The Vibe Coding Decision Framework for Startup Founders

You are at some point in the vibe coding lifecycle. Here is the decision framework we have seen work consistently:

Phase 1 (Pre-launch, solo or two-person): Vibe code freely. Speed is the only variable that matters. Ship to users. Get validation. Do not spend time on test infrastructure. The cost of being slow is higher than the cost of technical debt you might need to repay.

Phase 2 (Post-launch, growing, customer revenue): Add a floor. You do not need to refactor. You need to know when your critical paths break. Add E2E tests on your three most important flows. Get them running in CI. This is a one-time investment that pays continuous dividends. Use an AI coding agent to generate the initial tests if you want to stay in the vibe coding workflow.

Phase 3 (Scaling, team of five-plus): Establish ownership. Each part of the codebase needs an engineer who understands it, not just an engineer who can prompt AI to change it. This is not anti-AI. It is pro-accountability. Engineers who own code write better prompts for that code, catch AI mistakes faster, and can explain decisions to teammates.

Phase 4 (Any stage, regulated environment): Stop and audit. Before adding features to a regulated system built primarily by AI, get a security-focused code review. The compliance risk is asymmetric: the downside of a HIPAA violation or PCI breach is not a sprint of cleanup. It is existential.

When to Stop Vibe Coding

Knowing when to stop vibe coding is often clearer in retrospect than in the moment. The short answer: stop when the cost of a production bug in your vibe-coded system exceeds the cost of slowing down to add tests and establish ownership. For most startups, that inflection point arrives when you have paying customers on a feature that was vibe-coded without coverage. The moment a bug in AI-generated code churns a paying customer or triggers a compliance incident, the economics of pure vibe coding have already turned against you. Add the guardrails before that moment, not after.
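The inflection point can be written as a simple expected-cost comparison. This is a back-of-the-envelope sketch, and every input is an estimate you supply. The function name and the example probabilities are assumptions; the $8,000 figure reuses the low end of the broken-checkout estimate from earlier in this article.

```python
def guardrails_pay_off(bug_probability, bug_cost, guardrail_cost):
    """Return True when the expected cost of a production bug exceeds the guardrail cost.

    bug_probability: estimated chance of a serious bug in the period (0.0 to 1.0).
    bug_cost:        estimated cost of that bug (lost revenue, engineering time, support).
    guardrail_cost:  cost of adding tests and ownership over the same period.
    """
    if not 0.0 <= bug_probability <= 1.0:
        raise ValueError("bug_probability must be between 0 and 1")
    return bug_probability * bug_cost > guardrail_cost


# Pre-launch: bugs are unlikely to cost much, so speed wins.
pre_launch = guardrails_pay_off(bug_probability=0.1, bug_cost=8_000, guardrail_cost=2_000)

# Paying customers on an untested checkout: the same guardrails now pay for themselves.
post_launch = guardrails_pay_off(bug_probability=0.3, bug_cost=8_000, guardrail_cost=2_000)
```

With these assumed numbers, `pre_launch` is `False` and `post_launch` is `True`. The model ignores compliance incidents, where the downside is large enough that the comparison is rarely close.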

The $4.7B vibe coding market (projected to hit $12.3B by 2027) suggests this is not a trend that is going away. The founders who figure out the sustainable version, fast AI-generated code with automated quality guardrails, will have a genuine competitive advantage. The ones who treat it as an unqualified solution will keep hitting the same wall.

The honest takeaway from the data: AI coding tools make individual developers faster in contexts they understand. They create problems in contexts that require coordination, ownership, and robustness. Most startups start in the first context and eventually move into the second. The transition is the hard part, and almost nobody plans for it. Understanding what a production bug actually costs is usually what finally motivates teams to add the guardrails.

Plan for it before you hit the wall.

Frequently Asked Questions

What is vibe coding, and why do startups use it?

Vibe coding is the practice of building software primarily through AI-generated code with minimal manual oversight, using tools like Cursor, Windsurf, or Claude Code to generate entire features from natural language prompts. Startups use it because it dramatically compresses the time from idea to working software. An MVP that might take three weeks to build manually can be built in a weekend. That speed advantage is real and meaningful at the pre-product-market-fit stage, where the ability to test hypotheses quickly is more valuable than code quality.

When does vibe coding become a problem?

Vibe coding becomes a problem when code needs to be owned and understood by a team over time. The inflection points are: when a second engineer joins and cannot read the codebase, when security or compliance requirements apply, when production bugs are traced back to AI-generated code that nobody fully understands, and when the cost of maintenance starts exceeding the original speed benefit. A CodeRabbit study found AI-generated code increases duplication 4x, which compounds the maintenance problem as codebases grow.

Should I vibe code my MVP?

For a pre-launch MVP where you are testing a hypothesis and expect to iterate or rebuild: yes, vibe coding is likely the right call. The speed advantage is real. The technical debt is manageable when the codebase is small and expectations are set correctly. The condition is intent: if you go in knowing this code will need cleanup after validation, the debt is planned. If you go in thinking you will just keep building on top of it indefinitely, you are setting yourself up for the vibe coding hangover at month six.

What should I automate first in a vibe-coded codebase?

Start with end-to-end tests on your three most critical user flows: checkout, signup, and core activation. These are the flows where a bug creates immediate, measurable business damage. Get those tests running in CI on every deploy. Add error monitoring (Sentry or similar) on your critical endpoints. For teams that want to keep the vibe coding workflow while adding reliability, tools like Autonoma connect to your codebase and use agents to generate and maintain E2E tests automatically, without requiring you to write or maintain test scripts manually.

Can I use vibe coding in a regulated environment?

Not safely without strong human review. Regulated environments require auditability: someone needs to be able to explain why specific security decisions were made in the code. AI-generated code has no auditable reasoning. Authentication edge cases, data validation, and authorization logic are areas where AI models produce plausible-looking but potentially vulnerable code. Before shipping a regulated product built primarily through vibe coding, get a security-focused code review from an engineer who understands the compliance requirements. The asymmetric downside of a compliance breach makes this non-negotiable.