The Cost of Vibe Coding: A TCO Framework That Finally Makes the ROI Case

The cost of vibe coding is not the $20/month Cursor subscription. It is the compounding bill that arrives later: engineering hours spent debugging AI-generated code that was never tested, customer churn from the bugs that reached production, incident response that pulls your senior engineers off roadmap work, and a technical debt load that makes every subsequent sprint slower. Research from Escape.tech (2,000+ vulnerabilities across 5,600 vibe-coded apps), Veracode and SonarSource (53% of teams shipping AI code discovered security issues post-deployment), and an ICSE 2026 meta-analysis (QA "frequently overlooked" across 101 sources) all point to the same structural gap: vibe coding accelerates creation and leaves verification to chance. The teams that account for the full cost before they scale discover that testing infrastructure is not an optional add-on. It is what separates vibe coding ROI from vibe coding liability.

The question most CTOs are actually asking is not whether to use AI coding tools. That decision is effectively made. The question is whether the downstream costs offset the velocity gains, and whether anyone has done the math honestly.

That math is harder than it sounds, because the two sides of the ledger are not equally visible. Velocity gains are visible and immediate. The costs that offset them are distributed across incident response, QA cycles, senior engineer time, and customer churn, and none of those line items say "vibe coding" in the description.

What follows is the framework we use with engineering leaders to surface the full picture. The goal is not to argue against AI coding tools. It is to give you the numbers that turn a gut-feel bet into a defensible business case.

Vibe Coding ROI: The Productivity Math Everyone Runs, and the Part They Skip

The standard vibe coding business case looks like this: developer productivity increases 2-4x on certain tasks, AI subscriptions cost $20-100 per seat per month, and the ROI is obvious before you finish the slide. That math is not wrong. It is just incomplete.

The part that gets skipped is what changes in the rest of the system when the code generation layer accelerates. More code, generated faster, with AI that introduces security vulnerabilities at measurably higher rates than human developers. More features shipped per sprint, but with a defect rate that the QA infrastructure was not sized to handle. More velocity at the top of the funnel, and a longer tail of consequences downstream.

The ICSE 2026 paper synthesizing 101 sources on vibe coding in practice found that technical debt accumulates at roughly 3x the rate of traditional development in AI-assisted workflows, with QA being "frequently overlooked." That is not an edge case. That is the median outcome when teams adopt vibe coding without a corresponding investment in verification.

Amazon's experience is the most detailed public case study of this math at scale. Four Sev-1 incidents in 90 days, including a 6-hour outage with an estimated 6.3 million lost orders, following an 80% AI coding adoption mandate. The velocity gains were genuine. The verification infrastructure did not scale with them. The bill arrived in production.

The comparison that sharpens the math: AI coding tools cost $20-100/month per seat. A fully loaded developer costs $180,000-$250,000/year. The hidden costs of untested vibe coding in a 15-engineer team frequently exceed $500,000 annually, the equivalent of 2-3 engineers spending their entire year on defect management rather than product development. The question is not whether AI tools are cheaper than developers. It is whether the total cost of using them without verification infrastructure exceeds the cost of the developers they were supposed to augment.

The Five Hidden Costs of Vibe Coding CTOs Undercount

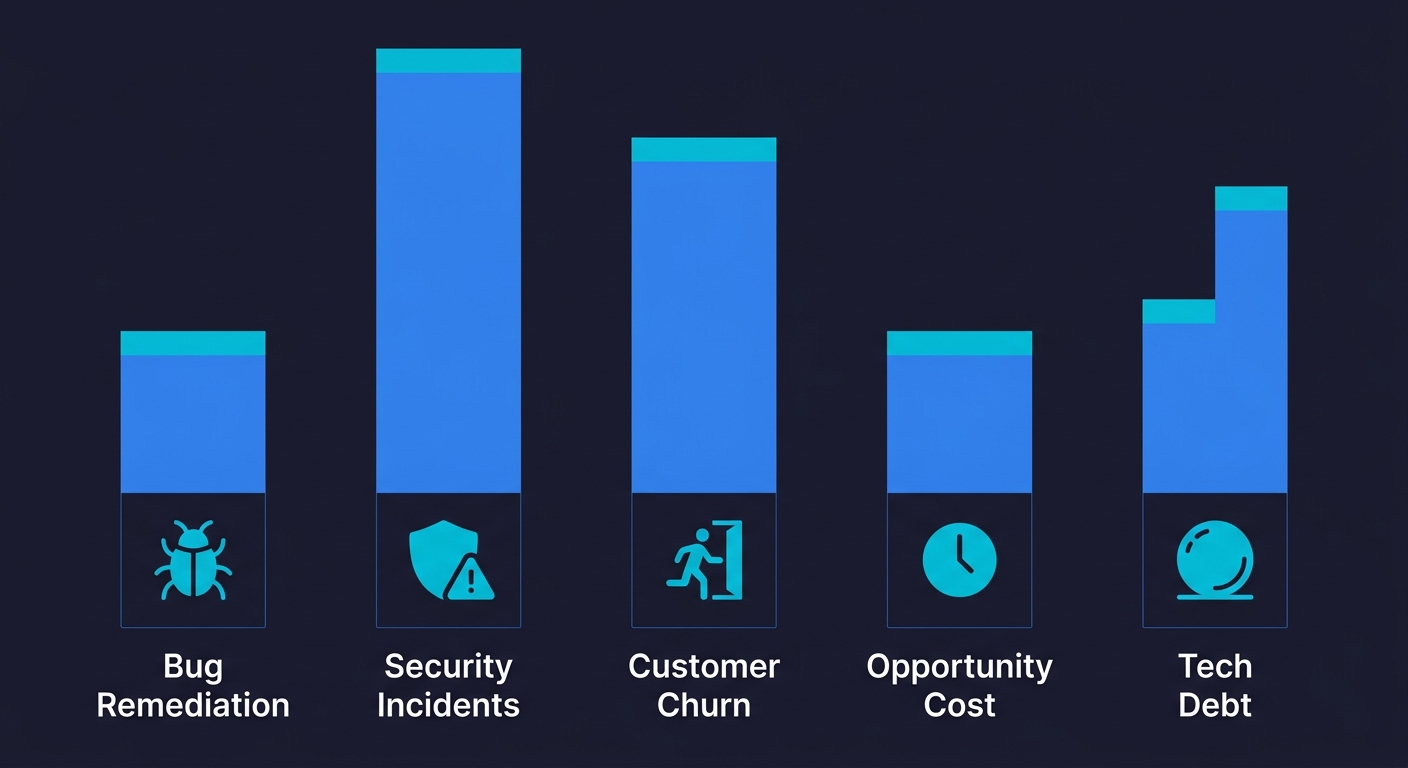

The hidden costs of AI-generated code, what you might call the AI code quality cost, fall into five distinct categories: (1) bug remediation engineering time, (2) security incident response, (3) customer churn from production bugs, (4) incident response opportunity cost, and (5) technical debt compound interest. Most organizations track the first one and undercount the rest.

1. Bug Remediation Engineering Time

The most direct cost is engineering time spent finding and fixing defects that testing would have caught earlier. Georgetown CSET research found that 86% of AI-generated code failed to defend against XSS attacks. CodeRabbit's analysis found AI-generated code is 2.74x more likely to introduce XSS vulnerabilities specifically, and roughly 1.7x more likely to contain major issues across all defect categories.

The remediation math is straightforward and punishing. IBM's research on defect cost amplification found bugs fixed in production cost 15x more than bugs fixed in development. For a team generating 3x more code with AI tools, the defect surface expands significantly. If 20% of an engineer's time shifted to bug remediation, and that engineer costs $200,000 fully loaded, the annual drag is $40,000 per engineer, before counting the opportunity cost of features they did not ship.
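The per-engineer drag is a one-line calculation, and it is worth running with your own numbers. The following is a minimal Python sketch under the assumptions in the paragraph above (20% of time on remediation, $200,000 loaded cost); the function name `remediation_drag` is hypothetical and chosen only for illustration.

```python
def remediation_drag(time_share: float, loaded_cost: float, engineers: int = 1) -> float:
    """Annual engineering cost consumed by bug remediation.

    time_share: fraction of engineering time spent on bug fixes (0.20 = 20%).
    loaded_cost: fully loaded annual cost of one engineer, in dollars.
    engineers: number of engineers the share applies to.
    """
    if not 0.0 <= time_share <= 1.0:
        raise ValueError("time_share must be between 0 and 1")
    return time_share * loaded_cost * engineers


# Figures from the paragraph above: 20% of one $200,000 engineer.
per_engineer = remediation_drag(0.20, 200_000)
print(f"${per_engineer:,.0f} per engineer per year")
```

The result covers only salary time. It does not include the production-versus-development cost multiplier or the features that were not shipped, so treat it as a floor rather than an estimate of the full cost.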

2. Security Incident Response

Security incidents are the cost category with the widest variance and the highest tail risk. The global average cost of a data breach was $4.88 million according to IBM's 2024 Cost of a Data Breach report. Most vibe-coded applications are not Fortune 500 targets, but the underlying vulnerability pattern applies regardless of company size.

Escape.tech's research across 5,600 vibe-coded applications found over 2,000 vulnerabilities, many of them critical. Veracode and SonarSource found that 53% of teams shipping AI-assisted code discovered security issues after deployment. The Moltbook breach, in which 1.5 million API tokens were exposed via an AI-generated endpoint that lacked proper authentication, is a concrete example of what this looks like in practice: a single vibe-coded route, zero tests, full credential exposure.

Security incident response involves direct costs (forensics, legal, notification, remediation) and indirect costs (regulatory fines, reputational damage, customer attrition). The direct costs alone routinely exceed the annual engineering budget of small teams. For a more detailed breakdown of what these risks look like at the application layer, see our guide on vibe coding security risks, which covers the specific vulnerability patterns that show up most consistently.

3. Customer Churn from Production Bugs

This is the cost bucket that is hardest to attribute but often the largest. When bugs reach production, some fraction of affected users churn. Churn attribution is messy because customers rarely say "I left because of a bug." They say nothing, or they say "the product doesn't work," or they simply stop showing up in retention cohorts.

The research on bug-driven churn is consistent: a single poor experience following a product failure increases churn probability significantly, with numbers ranging from 25% to 60% depending on the study and product category. For a B2B SaaS product with $10,000 ACV and 500 customers, losing 5% of the customer base to preventable bugs costs $250,000 in ARR annually. At $50,000 ACV with 200 customers, the same churn rate is $500,000.
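The two scenarios above follow the same formula: customers × bug-attributed churn rate × ACV. Here is a short Python sketch of that arithmetic. The 5% rate is the article's illustrative figure, and `churn_arr_loss` is a hypothetical helper name, not part of any tool.

```python
def churn_arr_loss(customers: int, acv: float, bug_churn_rate: float) -> float:
    """ARR lost per year to churn attributed to production bugs."""
    return customers * bug_churn_rate * acv


# The two scenarios from the paragraph above, both at 5% bug-attributed churn.
scenarios = [
    ("$10,000 ACV, 500 customers", 500, 10_000),
    ("$50,000 ACV, 200 customers", 200, 50_000),
]
for label, customers, acv in scenarios:
    loss = churn_arr_loss(customers, acv, bug_churn_rate=0.05)
    print(f"{label}: ${loss:,.0f} in lost ARR")
```

The hard input is `bug_churn_rate`, since attribution is messy. Running the function across a low and a high estimate is usually more honest than committing to a single number.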

The counterfactual is harder to establish (what would retention have been without those bugs?), but the direction is clear: production defects are not free. They are a tax on revenue that shows up in the cohort data.

4. Incident Response and Engineering Opportunity Cost

Incident response is a known cost with a precise attribution problem. When a Sev-1 fires, you know exactly who is on the bridge, how long they are there, and roughly what their time costs. What is harder to measure is the opportunity cost: the features, migrations, and architectural improvements that did not happen because your best engineers spent the week on a production incident.

For a team of 20 engineers where a major incident pulls 4 senior engineers offline for a week, the direct cost is roughly $15,000 to $20,000 in loaded engineering time, assuming a loaded cost of $200,000-$250,000 per engineer per year. The opportunity cost of a delayed roadmap item depends on the business, but for a competitive market, shipping a week later than a competitor compounds over time.

Amazon's four Sev-1 incidents in 90 days represent at minimum four weeks of senior engineering attention diverted from product development. At Amazon's scale, that number is staggering. At a 50-person startup, it is existential.

5. Technical Debt Compound Interest

Technical debt is the cost that shows up last but compounds the longest. Vibe-coded codebases accumulate debt faster than traditionally written ones, per the ICSE research, because AI optimizes for making things work rather than making them maintainable. The code passes the immediate test (does it do the thing?) and fails the longer test (can we change it without breaking something adjacent?).

The code-level evidence is stark. GitClear's analysis of 211 million lines of code found that code duplication jumped from 8.3% to 12.3% between 2021 and 2024, refactoring activity dropped by 60%, and code churn doubled. These are the leading indicators of the 3x debt accumulation rate the ICSE research identified. More code is being generated, less of it is being cleaned up, and the duplicate patterns compound into maintenance surface area that grows with every sprint.

The standard metric for technical debt's engineering tax is that teams spend 25-30% of engineering time servicing existing debt rather than building new capabilities. In a vibe-coded codebase where debt accumulates 3x faster, that percentage grows over time. At 30% of a 10-engineer team, you are paying for three engineers who are building nothing new.

For a more complete picture of what these problems look like inside the codebase, and how they manifest as maintenance burden, see our guide to vibe coding quality issues, which covers the specific anti-patterns in detail.

Total Cost of Ownership: A Framework CTOs Can Apply

The following framework is designed to let you quantify your organization's specific exposure. Use your actual numbers; the ranges shown are illustrative benchmarks.

| Cost Category | How to Measure | Illustrative Annual Range | Key Variables |
|---|---|---|---|
| Bug remediation engineering time | % of sprint capacity on bug fixes × engineer loaded cost | $40K - $200K per 10 engineers | Defect rate, team size, severity distribution |
| Security incident response | Incidents per year × average response cost | $50K - $4.88M per incident | Breach type, notification requirements, regulatory exposure |
| Customer churn from production bugs | Bug-attributed churn rate × ACV × customer count | $100K - $1M+ in lost ARR | ACV, customer count, churn attribution method |
| Incident response opportunity cost | Sev-1 hours × senior engineer rate × incidents per year | $20K - $150K | Incident frequency, team seniority mix |
| Technical debt compound interest | % sprint capacity on debt service × team cost × 12 | $200K - $600K per 10 engineers | Codebase age, debt accumulation rate, team size |
| Total cost of not testing | Sum of above | $410K - $7M+ | Highly dependent on incident exposure and customer base |

The ranges are wide because the tail risk (a single security breach) dominates the distribution. Most vibe coding teams will sit in the lower-to-middle range on all categories except security, where the low-probability, high-consequence structure makes the expected value expensive even when no breach has occurred yet.
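To apply the framework to your own numbers, the "How to Measure" column translates directly into code. The Python sketch below is one possible reading of those formulas, not a definitive model. All field names are hypothetical, the sample inputs are placeholders rather than benchmarks, and the debt row's "team cost × 12" is interpreted as a monthly team cost annualized, so the code uses annual team cost directly. Security incidents are entered as an expected count per year, which may be below 1.

```python
from dataclasses import dataclass


@dataclass
class TcoInputs:
    engineers: int
    loaded_cost: float             # fully loaded annual cost per engineer ($)
    bug_fix_share: float           # fraction of sprint capacity on bug fixes
    debt_share: float              # fraction of sprint capacity on debt service
    security_incidents: float      # expected security incidents per year
    security_response_cost: float  # average response cost per incident ($)
    customers: int
    acv: float                     # annual contract value ($)
    bug_churn_rate: float          # fraction of customers lost to bugs per year
    sev1_incidents: float          # Sev-1 incidents per year
    sev1_engineer_hours: float     # senior engineer hours consumed per Sev-1
    senior_hourly_rate: float      # loaded hourly rate for senior engineers ($)


def annual_tco(i: TcoInputs) -> dict[str, float]:
    """Apply each row's 'How to Measure' formula and sum the results."""
    team_cost = i.engineers * i.loaded_cost
    costs = {
        "bug_remediation": i.bug_fix_share * team_cost,
        "security_incidents": i.security_incidents * i.security_response_cost,
        "customer_churn": i.bug_churn_rate * i.acv * i.customers,
        "incident_opportunity": (
            i.sev1_engineer_hours * i.senior_hourly_rate * i.sev1_incidents
        ),
        "technical_debt": i.debt_share * team_cost,
    }
    costs["total"] = sum(costs.values())
    return costs


# Placeholder inputs for illustration only; substitute your own measurements.
example = TcoInputs(
    engineers=10, loaded_cost=200_000,
    bug_fix_share=0.10, debt_share=0.25,
    security_incidents=0.1, security_response_cost=500_000,
    customers=400, acv=10_000, bug_churn_rate=0.03,
    sev1_incidents=2, sev1_engineer_hours=160, senior_hourly_rate=100,
)
for category, cost in annual_tco(example).items():
    print(f"{category}: ${cost:,.0f}")
```

Bug-fix time and debt-service time can overlap in practice. If your sprint tracking does not separate them, count the shared hours once.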

These costs are not static. They compound. At 3x the normal debt accumulation rate, a team spending 25% of sprint capacity on debt service in Year 1 is approaching 40% by Year 2, as duplicated code, inconsistent abstractions, and untested edge cases stack up. By Year 3, teams regularly describe "development paralysis," where shipping any new feature requires reworking two adjacent ones. The pattern is consistent enough across our customers that we call it the 18-month wall: the point where vibe coding velocity gains are fully consumed by maintenance costs, and the team is shipping slower than it was before adopting AI tools.

The cost of not testing analysis we published earlier makes this case in more detail, with specific numbers from teams who went through the exercise.

Testing Infrastructure: What It Costs vs. What It Prevents

The comparison that actually moves decisions is direct: what does testing infrastructure cost, and what does it prevent?

Manual QA is the traditional answer. A QA engineer in North America costs $90,000-$140,000 fully loaded, and can cover a fraction of the test surface a modern application requires. Manual QA scales linearly with headcount and doesn't keep pace with AI-generated code volume. It also fails in exactly the scenario vibe coding creates: code being generated faster than humans can review it.

Automated testing written by developers is the next answer. It costs engineering time to write, more engineering time to maintain, and has a well-documented fragility problem: tests break when the UI changes, and someone has to fix them. Flaky tests are their own form of engineering debt.

Agentic testing is the answer we built for this specific problem. Autonoma connects to your codebase, and agents read your routes, components, and user flows to generate and run E2E tests automatically. No one writes test scripts. No one records flows. The Planner agent handles database state setup for each test scenario, so tests are realistic rather than idealized. The Maintainer agent keeps tests passing as your code changes, eliminating the maintenance burden entirely.

The cost comparison is not close. At a fraction of the cost of a QA engineer, agentic testing covers the full application surface, runs on every pull request, and self-heals when the code changes. For teams shipping with AI coding tools, it is the infrastructure that makes the velocity gain safe to keep.

| Testing Approach | Annual Cost Estimate | Defect Coverage | Scales with Vibe Coding? | Maintenance Burden |
|---|---|---|---|---|
| No testing | $0 upfront | 0% | N/A | None (until production) |
| Manual QA team | $90K-$280K per 1-2 QA engineers | Partial | No (linear headcount) | Retesting on every release |
| Developer-written automated tests | 20-30% of engineering time | Depends on coverage | Poorly (tests break with UI changes) | High (constant maintenance) |
| Agentic testing (Autonoma) | Fraction of QA engineer cost | Full application surface | Yes (reads codebase, self-heals) | None (self-maintaining) |

Applying the Framework: A Concrete Example

Consider a Series A startup: 15 engineers, $8,000 ACV, 300 customers, shipping with Cursor and Claude Code across the full stack. No dedicated QA. Some unit tests, no E2E coverage.

Bug remediation: at 15% of sprint capacity spent on bugs, and an average fully-loaded engineer cost of $180,000, that is $405,000 per year in engineering time consumed by defects. A conservative estimate given the ICSE data on AI code quality.

Security exposure: no breaches to date, but Escape.tech found critical vulnerabilities in roughly 35% of vibe-coded applications audited. At $8,000 ACV, a breach that causes 10% churn costs $240,000 in ARR before factoring in direct response costs.

Customer churn from bugs: at 4% bug-attributed annual churn on 300 customers at $8,000 ACV, that is $96,000 in lost ARR per year.

Technical debt: at 25% of sprint capacity servicing debt, $675,000 per year in engineering time not building new product (15 engineers × $180,000 × 25%). Competitive disadvantage compounds.

Total estimated annual cost of inadequate testing: roughly $900,000 to $1.2M before accounting for a major security incident. Against that, automated testing infrastructure costs an order of magnitude less.
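Recomputing each line item from the stated inputs is a quick sanity check on the example. The Python sketch below uses only figures given above: 15 engineers at $180,000, 300 customers at $8,000 ACV, and the stated percentages. The variable names are illustrative. At the stated 25% debt-service share, the formula yields $675,000 on a $2.7M team cost.

```python
# Inputs stated in the example above.
ENGINEERS = 15
LOADED_COST = 180_000   # fully loaded annual cost per engineer ($)
CUSTOMERS = 300
ACV = 8_000             # annual contract value ($)

team_cost = ENGINEERS * LOADED_COST

# Recurring annual costs, each computed from the percentage given in the text.
line_items = {
    "bug remediation (15% of capacity)": 0.15 * team_cost,
    "bug-attributed churn (4% of customers)": 0.04 * CUSTOMERS * ACV,
    "technical debt service (25% of capacity)": 0.25 * team_cost,
}
recurring_total = sum(line_items.values())

# One-time exposure if a breach drives 10% churn; kept out of the recurring total.
breach_churn_arr = 0.10 * CUSTOMERS * ACV

for name, cost in line_items.items():
    print(f"{name}: ${cost:,.0f}")
print(f"recurring total: ${recurring_total:,.0f}")
print(f"breach churn exposure: ${breach_churn_arr:,.0f}")
```

With these inputs the recurring items sum to about $1.18M, inside the $900,000 to $1.2M range quoted above. The breach exposure comes on top of that.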

The math is not subtle. The question is whether the accounting is visible before or after a Sev-1.

How Testing Infrastructure Changes the Vibe Coding ROI Equation

The agentic testing approach changes four things simultaneously for teams shipping with AI coding tools.

First, it eliminates the manual QA bottleneck without replacing it with a test-writing burden on developers. The codebase is the spec. Agents read it and generate tests from it.

Second, it makes every pull request a verified artifact rather than a best guess. Before vibe-coded changes merge, the full application surface has been exercised, not just the unit the developer thought to test.

Third, it eliminates the maintenance cycle that makes automated tests technically expensive. When the UI changes, Autonoma's Maintainer agent updates the tests. No one has to fix broken selectors at 2am.

Fourth, it generates the kind of evidence that satisfies compliance requirements. As the EU AI Act's high-risk provisions take effect in August 2026, teams using AI coding tools will need to demonstrate adequate oversight and quality controls. An audit trail of what was tested, when, and what the results were is not optional in that regulatory environment.

For the full operational model, including how to structure the verification layer alongside AI-assisted development, see our guide to vibe coding best practices. For founders specifically, our analysis of vibe coding risks for founders addresses the business case in the context of fundraising and due diligence, where technical debt and testing coverage are increasingly scrutinized.

The Decision CTOs Are Actually Making

The ROI calculation for vibe coding testing infrastructure is not actually that complicated. The inputs are:

What is the current annual cost of inadequate testing (using the framework above)? What fraction of that cost would automated testing prevent? What does automated testing cost?

For most teams we talk to, the current cost of inadequate testing is 5-10x the cost of the testing infrastructure that would address it. Research suggests vibe coding ROI turns negative when teams spend more than 26% of additional sprint capacity on quality remediation, a threshold that most teams hit within 6-12 months of scaling AI coding tools without quality controls. The reason more teams have not made the investment is not the math. It is that the costs are distributed and hard to attribute, while the investment is concentrated and visible.

Bug remediation shows up as "engineering velocity is slower than expected." Churn from bugs shows up as "retention is softer than the benchmark." Technical debt shows up as "sprints are taking longer." None of these are labeled "cost of not testing" in the budget.

The goal of this framework is to relabel them accurately, so the decision is made on the real numbers rather than the visible ones.

Vibe coding is not going away. The velocity gains are real, the tools are getting better, and the competitive pressure to ship faster is not softening. The question for CTOs is not whether to use AI coding tools. It is whether the verification infrastructure scales with them.

At Autonoma, we built the answer to that specific question. Connect your codebase, and agents generate, run, and maintain your E2E tests automatically. The creation layer and the verification layer scale together. That is the only version of vibe coding ROI that survives contact with production.

The real cost of vibe coding has five components: bug remediation engineering time (typically 15-25% of sprint capacity in untested AI-assisted codebases), security incident response (ranging from $50K for minor incidents to $4.88M for a full data breach per IBM's 2024 Cost of a Data Breach report), customer churn from production defects, incident response opportunity cost, and technical debt compound interest. For a 15-engineer Series A startup shipping primarily with AI coding tools and no meaningful test coverage, the combined annual cost typically lands between $900K and $1.2M before a major security incident. The costs are distributed across the organization and rarely labeled accurately, which is why the accounting often happens after a Sev-1 rather than before one.

Vibe coding technical debt accumulates roughly 3x faster than traditional technical debt, per the ICSE 2026 meta-analysis synthesizing 101 sources on AI-assisted development in practice. The primary mechanism is that AI coding tools optimize for making code work in the immediate context rather than making it maintainable across future changes. Code generated by Cursor or Claude Code may pass every requirement at the moment of generation and become a maintenance liability within two sprints. The specific patterns include: overfitted logic that solves the stated problem without generalizing, missing error handling for edge cases the AI did not anticipate, inconsistent abstractions across a codebase where each AI session had slightly different context, and security assumptions baked in at generation time that become vulnerabilities as the surrounding system changes. Teams that add agentic testing catch these issues at PR time rather than at incident time.

The vibe coding ROI calculation is genuine but incomplete. AI coding tools increase developer velocity 2-4x on certain task types, AI subscriptions cost $20-100 per seat per month, and the immediate math favors adoption. The part that changes the calculation is the downstream cost: bug remediation, security exposure, churn, and technical debt service. A fully-loaded senior developer costs $180,000-$250,000 per year. The hidden costs of untested vibe coding in a 10-15 engineer team frequently exceed $500,000 annually, which represents 2-3 engineer equivalents consumed by defect management rather than product development. The vibe coding ROI case holds only when the verification layer scales with the generation layer. Testing infrastructure is the variable that determines whether vibe coding is a productivity multiplier or a liability accelerator.

The security risk profile of vibe-coded applications is meaningfully worse than traditionally written code by several research measures. Escape.tech found over 2,000 vulnerabilities across 5,600 vibe-coded applications audited in 2025. Georgetown CSET research found that 86% of AI-generated code failed to defend against XSS attacks. CodeRabbit found AI-generated code is 2.74x more likely to introduce XSS vulnerabilities and roughly 1.7x more likely to contain major issues across all defect categories. Veracode and SonarSource found that 53% of teams shipping AI-assisted code discovered security issues post-deployment. The Moltbook breach exposed 1.5 million API tokens through an AI-generated endpoint with no authentication check. The cost consequence ranges from $50K in direct response costs for a minor incident to $4.88M for a full data breach, plus regulatory exposure under GDPR and the EU AI Act. Security is the fat tail in the vibe coding cost distribution. For a detailed breakdown, see our guide on vibe coding security risks.

Agentic testing reduces the cost of vibe coding by closing the gap between code generation velocity and verification coverage. Tools like Autonoma connect to your codebase and use agents to read your routes, components, and user flows, generating and running E2E tests automatically. No one writes test scripts, records flows, or maintains selectors. The Planner agent handles database state setup for each scenario. The Maintainer agent updates tests when the code changes. The cost reduction operates across all five vibe coding cost categories: bug remediation costs drop because defects are caught at PR time rather than in production, security exposure decreases because behavioral tests exercise authentication and authorization paths, customer churn risk falls because production bugs are caught before users encounter them, incident frequency drops because the verification layer is comprehensive rather than ad hoc, and technical debt accumulates more slowly because the test suite enforces behavioral contracts that resist degradation.

Vibe coding ROI is positive when testing infrastructure is included in the model. The velocity gains from AI coding tools (2-4x on applicable tasks) are real and well-documented. The question is whether those gains are captured net of the hidden costs, or whether they appear in one budget line while the costs appear in others. Teams that add agentic testing to their vibe coding workflow capture the velocity gains while preventing the defect accumulation that erodes them. The comparison that matters is: AI coding tools plus agentic testing versus AI coding tools alone. The first produces durable velocity. The second produces velocity that compounds into technical debt and incident exposure. The cost of agentic testing infrastructure is typically an order of magnitude less than the annual cost of inadequate testing it prevents, making the net ROI of the full stack (AI coding plus automated testing) strongly positive.

Before scaling vibe coding, CTOs should complete three steps. First, audit the current defect rate and bug remediation capacity. If more than 15% of sprint capacity is consumed by bug fixes before any AI scaling, adding AI-generated code volume without improving verification will accelerate the problem. Second, establish automated test coverage as a prerequisite for AI tool adoption at scale. Autonoma and similar agentic testing tools can generate baseline coverage from your existing codebase before you begin scaling AI-assisted development. Third, define incident response thresholds and attribution. Tracking whether incidents are connected to AI-assisted changes (as Amazon's internal documents eventually did) requires that attribution infrastructure exist before incidents accumulate. The organizations that scale vibe coding successfully treat testing infrastructure as co-requisite with the AI tooling, not as a follow-on investment. For the full operational playbook, see our guides on vibe coding best practices and agentic testing for vibe-coded apps.