Vibe coding technical debt is the accumulating maintenance burden that emerges in the weeks and months after a team ships AI-generated code without adequate test coverage or architectural review. It differs from traditional technical debt in both speed and shape. It accumulates faster (a large-scale study of 8.1 million pull requests found that technical debt increases 30-41% after AI coding tool adoption). It concentrates in specific failure modes: missing error handling, duplicated logic, and "works but nobody knows why" functions. And it compounds across every subsequent sprint. This article tracks the full lifecycle from Day 1 velocity to Day 90 reckoning and ends with a practical remediation playbook for teams that need to stop the bleeding and refactor with confidence.

You can feel it before you can name it. The tickets that used to close in an afternoon now take two days and leave three new questions open. Your best engineer spent last week on a bug that turned out to be a function nobody remembers writing. A junior dev asked how a core flow actually works, and the honest answer was: we're not entirely sure.

This is vibe coding technical debt in its middle phase. Not the early chaos (that gets blamed on "moving fast"), not the late crisis (that gets blamed on "the architecture"). The middle phase, where the codebase is technically functional but increasingly hostile, is the hardest to address because it doesn't feel like an emergency yet.

You may be wondering what happens after vibe coding, once the demo high fades and the maintenance reality sets in. This article is for teams sitting in that middle phase right now. It covers what is happening, why the 90-day mark is when it surfaces, and how to fix vibe-coded technical debt before the rewrite conversation starts.

Day 1: The Velocity Is Genuine

Something important to acknowledge before covering what goes wrong: the initial speed is not an illusion. AI coding tools genuinely accelerate development on a wide range of tasks. A feature that would take a senior engineer three days can ship in an afternoon. Scaffolding that used to consume the first two weeks of a project appears in hours. The productivity gain that gets cited in demos is real.

The problem is not that vibe coding is slow. The problem is what vibe-coded codebases look like under the surface after the initial sprint.

On Day 1, the application works. Users can log in. The core flows run. Nothing is on fire. The team is shipping and the codebase is young enough that no one has had to maintain anything yet.

The technical debt is already there. It is just not visible yet.

Day 30: The First Signals of AI-Generated Code Debt

The first crack usually appears as a debugging session that takes longer than it should.

Someone opens a function to fix a small bug and discovers the function is 300 lines long, handles four unrelated concerns, and was clearly assembled from several AI-generated fragments that nobody quite connected into a coherent whole. The fix for the small bug requires understanding the entire function. Understanding the entire function requires understanding why it was written that way, and there is no context -- no commit message, no ticket reference, no comment explaining the logic.

Around Day 30, a few patterns start emerging simultaneously.

Duplicated logic appears in unexpected places. The same validation function exists in three files, each slightly different. Two components make the same API call when they should be sharing state. GitClear's analysis of 211 million lines of code found that code duplication jumped from 8.3% to 12.3% between 2021 and 2024, the period when AI coding tools became mainstream. The timing is hard to dismiss as coincidence. AI tools generate self-contained, locally correct code that doesn't always know (or check) what already exists in the codebase.
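Here is a hypothetical illustration; the file names and functions are invented and not taken from any real codebase. It shows three email validators of the kind separate AI sessions might produce. Each looks reasonable on its own. Running the same inputs through all three shows where they quietly disagree:

```typescript
// Hypothetical example: three "email validation" helpers that could end up in
// different files after separate AI sessions. Each is plausible in isolation.

// signup/validate.ts (hypothetical): requires local@domain.tld
function isValidEmailSignup(email: string): boolean {
  return /^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email);
}

// checkout/utils.ts (hypothetical): a stricter pattern that rejects "+" in the local part
function isValidEmailCheckout(email: string): boolean {
  return /^[A-Za-z0-9._-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$/.test(email);
}

// settings/form.ts (hypothetical): only checks that there is exactly one "@"
function isValidEmailSettings(email: string): boolean {
  return email.split("@").length === 2;
}

// Run the same inputs through all three and flag any disagreement.
const samples = ["ana@example.com", "ana+news@example.com", "ana@localhost"];
for (const email of samples) {
  const results = [
    isValidEmailSignup(email),
    isValidEmailCheckout(email),
    isValidEmailSettings(email),
  ];
  const agree = results.every((r) => r === results[0]);
  console.log(email, results, agree ? "agree" : "DISAGREE");
}
```

Consider the plus-addressed email. A user who signs up with it can later be rejected at checkout. That is exactly the kind of unanticipated-character failure that tends to surface weeks later.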

Error handling is inconsistent, or missing entirely. The happy path works. The error paths are patchwork. In one route, API errors throw exceptions. In another, they return null. In a third, they log a message to the console and silently continue. Each pattern was generated by a different AI session, each session optimizing for the immediate task without awareness of the existing conventions.
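Here is a hypothetical TypeScript sketch of that patchwork. The same failure (a user ID that does not exist) is handled three different ways. The in-memory `users` map and all three functions are invented for illustration:

```typescript
// Hypothetical illustration: one kind of failure, three conventions.
type User = { id: string; name: string };
const users = new Map<string, User>([["u1", { id: "u1", name: "Ana" }]]);

// Route A: throws an exception on a missing user
function getUserA(id: string): User {
  const user = users.get(id);
  if (!user) throw new Error(`User ${id} not found`);
  return user;
}

// Route B: returns null on a missing user
function getUserB(id: string): User | null {
  return users.get(id) ?? null;
}

// Route C: logs and returns a placeholder, so callers never learn it failed
function getUserC(id: string): User {
  const user = users.get(id);
  if (!user) {
    console.log(`warning: user ${id} not found`);
    return { id, name: "" };
  }
  return user;
}
```

A caller written against `getUserB` checks for `null`. Point that caller at `getUserC` and it will render a user with an empty name, because the log line swallowed the failure.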

Dependencies nobody chose are now in the project. AI tools introduce libraries readily -- a date formatting utility here, a UUID generator there. After a month of active development, the package.json has grown by 15-20 dependencies that nobody consciously evaluated. Some of them are unmaintained. Some of them duplicate functionality already in the project. None of them were discussed.
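One low-effort way to surface dependencies nobody chose is to diff the dependency lists of two `package.json` snapshots. For instance, compare today's file with the one from your first commit, which you can retrieve with `git show <commit>:package.json`. Below is a minimal sketch. The snapshot contents are hypothetical and inlined so it runs on its own:

```typescript
// Minimal sketch: list packages present in the later package.json snapshot
// but absent from the earlier one (dependencies and devDependencies combined).
type PackageJson = {
  dependencies?: Record<string, string>;
  devDependencies?: Record<string, string>;
};

function addedDependencies(before: PackageJson, after: PackageJson): string[] {
  const names = (pkg: PackageJson): Set<string> =>
    new Set([
      ...Object.keys(pkg.dependencies ?? {}),
      ...Object.keys(pkg.devDependencies ?? {}),
    ]);
  const old = names(before);
  return Array.from(names(after))
    .filter((name) => !old.has(name))
    .sort();
}

// Hypothetical snapshots; in practice, JSON.parse the two real files.
const day1: PackageJson = { dependencies: { next: "^14.2.0", react: "^18.3.1" } };
const day30: PackageJson = {
  dependencies: { next: "^14.2.0", react: "^18.3.1", dayjs: "^1.11.10", uuid: "^9.0.1" },
  devDependencies: { "date-fns": "^3.6.0" },
};

console.log(addedDependencies(day1, day30));
```

In this made-up example the diff also exposes overlap. Both `dayjs` and `date-fns` handle dates, and that kind of duplication is worth discussing before it spreads.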

These are warning signs. They don't cause production incidents on Day 30. They slow down every subsequent piece of work.

The duplicated logic, missing error handling, and silently introduced dependencies that accumulate by Day 30 are also where behavioral test coverage pays the earliest dividends. Autonoma agents can read a vibe-coded codebase and generate a behavioral baseline before refactoring begins, so every subsequent change has a safety net.

Day 60: The Wall

By Day 60, a simple feature request hits a wall that surprises everyone.

A product manager asks for a change to the checkout flow. It sounds small. The engineers open the codebase and find that the checkout logic is spread across seven files, some in the frontend, some in API routes, some in a utility module that appears to be called from both. The state management is inconsistent. There are two different approaches to error handling in adjacent files. Making the change without breaking something adjacent requires understanding the full execution path, and nobody can reconstruct it quickly.

This is vibe coding technical debt made concrete. The velocity was borrowed from the future, and the future has arrived.

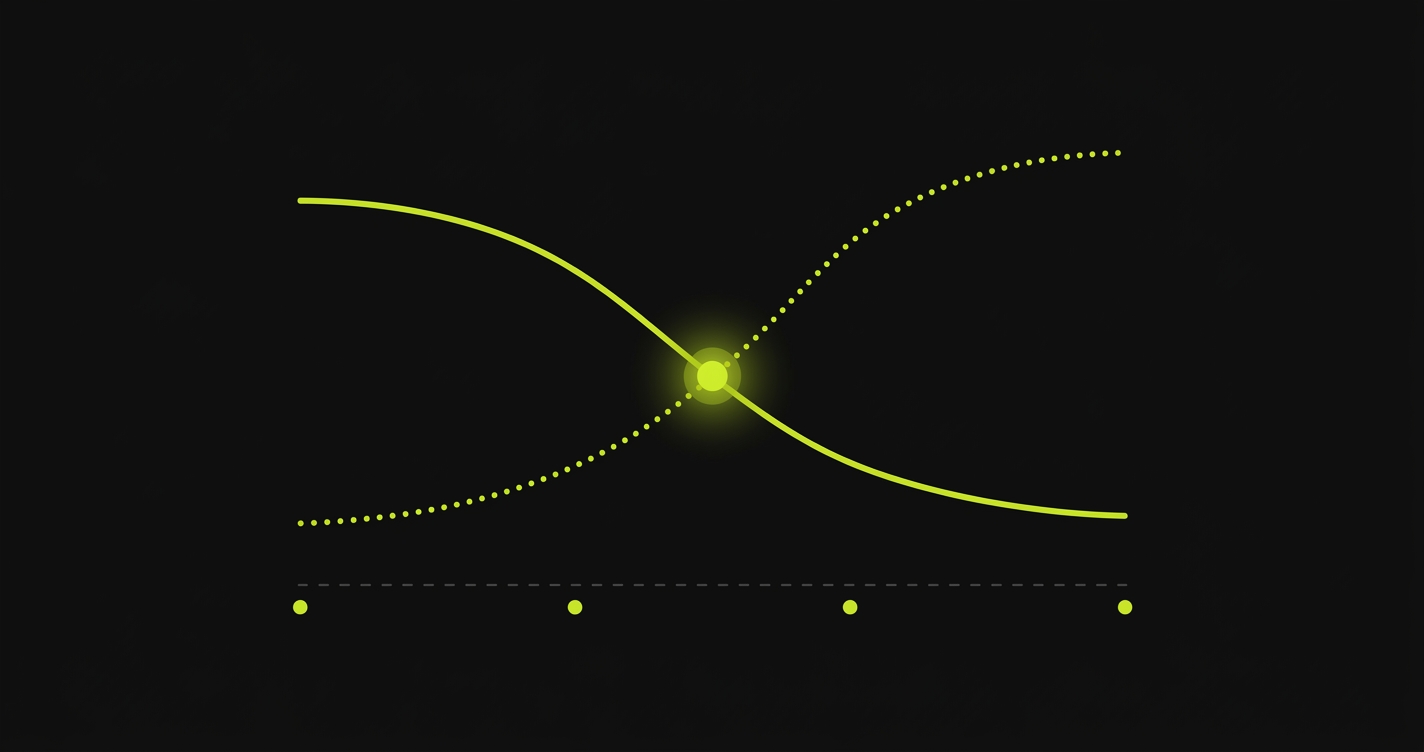

The specific patterns that create this wall are predictable. AI-generated code tends toward what you might call local correctness -- each function, each file, each component is internally coherent. What suffers is global correctness -- the way the pieces fit together, the way state flows across the system, the way error conditions propagate. A human engineer writing code slowly builds a mental model of the whole system as they work. An AI tool optimizes for the immediate context.
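Here is a minimal, hypothetical illustration of the difference. Two functions are each correct under their own assumption about units. A third piece of code wires them together without ever checking whether those assumptions match:

```typescript
// Hypothetical illustration of "locally correct, globally inconsistent" code.

// cart.ts (hypothetical): prices are integer cents
function cartTotalCents(items: { priceCents: number; qty: number }[]): number {
  return items.reduce((sum, item) => sum + item.priceCents * item.qty, 0);
}

// shipping.ts (hypothetical): expects the order total in dollars
function shippingFee(orderTotalDollars: number): number {
  return orderTotalDollars >= 50 ? 0 : 5; // free shipping at $50 or more
}

// checkout.ts (hypothetical): passes cents where dollars are expected
const total = cartTotalCents([{ priceCents: 1999, qty: 1 }]); // $19.99
console.log(shippingFee(total)); // 1999 >= 50, so shipping is wrongly free
```

Neither function has a bug in isolation, and unit tests written against each one would pass. Only a test that exercises the checkout flow end to end catches the mismatch.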

Day 60 also tends to be when the first feature request collides with a missing edge case. A user tries something the team didn't explicitly test. A payment fails in a currency the AI didn't handle. An authentication flow breaks when a user's email contains a character that wasn't anticipated. These are not catastrophic failures. They are symptoms of a codebase that was built for the demo rather than for the full distribution of user behavior.

Day 90: The Reckoning

By Day 90, the conversation that nobody wanted to have is on the calendar.

The team is spending 20-30% of sprint capacity on bugs that trace back to the original vibe-coded implementation. Feature velocity is a fraction of what it was on Day 1. There are functions in the codebase that work, everyone agrees they work, but nobody can explain why -- and touching them feels dangerous. The product has real users now, which means production incidents have real consequences.

The question on the table: rewrite, or remediate?

The rewrite argument is emotionally appealing. Start clean. Do it right this time. But rewrites have a well-documented failure mode: they take longer than estimated, they replicate the business logic bugs that were hidden in the old code (because the old code was the only documentation of those bugs), and they leave the existing product unmaintained for months while the new version is built. For a product with real users, this is a significant bet.

| Factor | Full Rewrite | Incremental Remediation |
|---|---|---|
| Timeline | 3-6 months (often 2-3x the estimate) | 4-8 weeks for initial stability |
| Risk to users | High: product frozen during build | Low: product stays live throughout |
| Hidden logic preservation | Often lost in translation | Captured by behavioral tests first |
| Team morale | Initial excitement, then fatigue | Steady progress, requires discipline |
| Recurring debt prevention | No guarantee without process changes | Built into the remediation workflow |

The remediation path is less satisfying but more reliable. It means accepting that the codebase is imperfect, building a test suite that documents current behavior, and incrementally improving the code while keeping the product running. It requires discipline and patience. It also requires something that most vibe-coded codebases don't have yet: tests.

This is where the actual work starts.

The Remediation Playbook: Four Phases

The goal of remediation is not a perfect codebase. It is a codebase that is safe to change. You cannot refactor what you cannot test. The test suite is not just a quality tool -- it is your safety net for every subsequent change.

Phase 1: Triage the Surface Area (Weeks 1-2)

Before touching any code, map the risk. Pull your last 30 days of production incidents and tag each one by the area of the codebase that caused it. Run a coverage audit to see where you have tests and where you have nothing. The two maps (incident clusters and coverage gaps) usually overlap heavily, and that overlap is your highest-priority remediation target.
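You can compute the overlap rather than eyeball it. The sketch below assumes you have already tagged incidents by module and exported per-module line coverage from your coverage tool. The module names, the numbers, and the scoring formula are illustrative assumptions, not a standard metric. The formula weights incidents by the untested share of each module:

```typescript
// Minimal sketch of Phase 1 triage: rank modules by incidents weighted by how
// much of the module is untested. All inputs below are hypothetical.
type ModuleRisk = { module: string; incidents: number; coveragePct: number; score: number };

function rankByRisk(
  incidentsByModule: Record<string, number>,
  coverageByModule: Record<string, number>, // 0-100; a missing entry means no tests
): ModuleRisk[] {
  const modules = new Set([
    ...Object.keys(incidentsByModule),
    ...Object.keys(coverageByModule),
  ]);
  return Array.from(modules)
    .map((module) => {
      const incidents = incidentsByModule[module] ?? 0;
      const coveragePct = coverageByModule[module] ?? 0;
      const score = incidents * (1 - coveragePct / 100);
      return { module, incidents, coveragePct, score };
    })
    .sort((a, b) => b.score - a.score);
}

const ranked = rankByRisk(
  { checkout: 9, auth: 4, search: 1 }, // incidents in the last 30 days
  { auth: 70, search: 10, profile: 55 }, // line coverage %
);
console.table(ranked);
```

A module that keeps producing incidents and has no coverage data at all, like `checkout` here, ranks first. That matches the intuition that untested code that keeps breaking is where to start.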

At this stage, resist the urge to refactor. The goal is understanding, not improvement. You are building a map before you navigate.

For teams dealing with an active bug crisis, the triage playbook in our vibe coding quality issues guide covers the immediate stabilization steps in detail. That is the right starting point if you are in the acute phase. This article picks up after the bleeding has stopped.

Phase 2: Build a Behavioral Test Suite (Weeks 2-4)

Before refactoring a single line, you need tests that document current behavior. Not tests that verify the behavior you intended -- tests that verify the behavior that actually exists today.

This distinction matters. Vibe-coded codebases often have undocumented business logic embedded in the implementation. A function that calculates a price might be applying a discount at a step nobody explicitly designed. If you refactor the function based on what you thought the logic should be, you break the behavior that users are currently relying on. Tests that capture current behavior protect you from this.
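A characterization test makes this concrete. In the hypothetical sketch below, `calculatePrice` applies a 10% discount to orders of three or more units, a rule nobody designed. The expected values come from what the current code returns, not from a spec. The test therefore pins down today's behavior, hidden discount included. In a real project these checks would live in your test runner of choice; plain assertions keep the example self-contained:

```typescript
// Hypothetical vibe-coded pricing function with an undocumented rule:
// orders of 3+ units silently get 10% off.
function calculatePrice(unitPriceCents: number, qty: number): number {
  let total = unitPriceCents * qty;
  if (qty >= 3) total = Math.round(total * 0.9);
  return total;
}

// Characterization cases: [unitPriceCents, qty, totalCents observed today].
// These values were recorded from the current code, not derived from a spec.
const observed: Array<[number, number, number]> = [
  [1000, 1, 1000],
  [1000, 2, 2000],
  [1000, 3, 2700], // the hidden discount, now documented by a test
];

for (const [unit, qty, expected] of observed) {
  const actual = calculatePrice(unit, qty);
  if (actual !== expected) {
    throw new Error(`calculatePrice(${unit}, ${qty}) = ${actual}, expected ${expected}`);
  }
}
console.log("characterization tests passed");
```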

The challenge is that writing these tests manually is slow, often too slow for a team that is also shipping product. This is where Autonoma accelerates the process. Rather than writing test scripts by hand, you connect your codebase to Autonoma, an open-source agentic testing tool. Its AI agents read your routes, components, and user flows and generate behavioral tests automatically. The agents handle database state setup for each scenario, so tests exercise realistic conditions. You get a coverage baseline in days rather than weeks, which means you can start the actual refactoring work sooner.

The output of Phase 2 is a test suite that you can run before and after any change. Green means you did not break existing behavior. Red means you need to investigate. This is the safety net that makes everything else possible.
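This record-then-compare loop is sometimes called golden-master testing. Below is a minimal sketch, assuming inputs and outputs can be serialized with `JSON.stringify`. The `legacySlug` and `refactoredSlug` functions are hypothetical. The refactor looks tidier but drops the whitespace handling the original quietly provided:

```typescript
// Minimal golden-master sketch: record outputs of the current implementation,
// then check a refactored implementation against that record.
type Baseline = Map<string, string>; // serialized input -> serialized output

function recordBaseline<I, O>(fn: (input: I) => O, inputs: I[]): Baseline {
  const baseline: Baseline = new Map();
  for (const input of inputs) {
    baseline.set(JSON.stringify(input), JSON.stringify(fn(input)));
  }
  return baseline;
}

function compareToBaseline<I, O>(fn: (input: I) => O, baseline: Baseline): string[] {
  const mismatches: string[] = [];
  baseline.forEach((expected, key) => {
    const actual = JSON.stringify(fn(JSON.parse(key) as I));
    if (actual !== expected) mismatches.push(`${key}: expected ${expected}, got ${actual}`);
  });
  return mismatches; // empty array = green, anything else = red
}

// Hypothetical legacy function and a "cleaner" refactor that loses behavior.
const legacySlug = (title: string): string => title.trim().toLowerCase().replace(/\s+/g, "-");
const refactoredSlug = (title: string): string => title.toLowerCase().replace(/ /g, "-");

const baseline = recordBaseline(legacySlug, ["Hello World", "  Padded Title ", "Tabs\tHere"]);
console.log(compareToBaseline(refactoredSlug, baseline));
```

An empty array is green. Here the comparison reports two mismatches, the padded title and the tab. That is exactly the red you want to see before the refactor merges, not after.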

Phase 3: Incremental Refactoring (Weeks 4-8)

With a behavioral test suite in place, you can start improving the code. The priority order matters.

Consolidate duplicated logic first. Find the functions that exist in three places and pick one. This is usually the lowest-risk improvement and it has an immediate payoff: once the logic lives in one place, bugs are fixed once, not three times. Run your tests after each consolidation to confirm behavior is unchanged.
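One low-risk way to consolidate is to keep a single implementation and turn the old copies into thin deprecated aliases. Call sites can then migrate one pull request at a time, and you delete the aliases once nothing references them. This assumes your behavioral tests have shown that the copies agree on the inputs callers actually use, or that you have explicitly decided which behavior wins. Here is a hypothetical sketch for money formatting; all three function names are invented:

```typescript
// Hypothetical example: one canonical money formatter replaces three copies.

// The single implementation that survives: integer cents in, "$12.34" out.
function formatMoney(cents: number): string {
  const sign = cents < 0 ? "-" : "";
  const abs = Math.abs(Math.round(cents));
  const dollars = Math.floor(abs / 100);
  const remainder = abs % 100;
  const centsPart = remainder < 10 ? `0${remainder}` : `${remainder}`;
  return `${sign}$${dollars}.${centsPart}`;
}

/** @deprecated Use formatMoney. Kept only until remaining call sites migrate. */
function formatPrice(cents: number): string {
  return formatMoney(cents);
}

/** @deprecated Use formatMoney. Kept only until remaining call sites migrate. */
function toCurrencyString(cents: number): string {
  return formatMoney(cents);
}

console.log(formatMoney(1999), formatPrice(5), toCurrencyString(-250));
```

TypeScript-aware editors strike through names marked with the `@deprecated` JSDoc tag. That nudges new code toward the canonical function without breaking any existing call site today.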

Standardize error handling next. Pick an error handling pattern for your codebase and apply it consistently. This is more invasive than consolidation and requires more test coverage to do safely, but inconsistent error handling is one of the most common sources of production incidents in vibe-coded codebases.
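One common convention in TypeScript is a discriminated-union result type. Fallible functions return either a success value or an error, and the type checker forces callers to handle both branches. The `Result`, `ok`, and `err` definitions below are a hand-rolled sketch, not any particular library's API. Throwing consistently is an equally valid choice, as long as the codebase settles on one pattern:

```typescript
// Minimal sketch of one standard error-handling convention (hand-rolled, hypothetical).
type Result<T, E = string> = { ok: true; value: T } | { ok: false; error: E };

const ok = <T>(value: T): Result<T, never> => ({ ok: true, value });
const err = <E>(error: E): Result<never, E> => ({ ok: false, error });

type User = { id: string; name: string };
const users = new Map<string, User>([["u1", { id: "u1", name: "Ana" }]]);

// Every lookup reports failure the same way: no throws, no nulls, no silent logs.
function getUser(id: string): Result<User> {
  const user = users.get(id);
  return user ? ok(user) : err(`user ${id} not found`);
}

// Callers must check `ok` before they can touch `value` or `error`.
const result = getUser("missing");
if (result.ok) {
  console.log("found", result.value.name);
} else {
  console.log("error:", result.error);
}
```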

Address the "works but nobody knows why" functions last. These are the highest-risk items. They require the most careful test coverage before touching, and they often reveal undocumented business logic as you work through them. Budget more time than you think these will take.

The key discipline throughout Phase 3: never refactor without running your test suite first, and never merge a refactoring that leaves the test suite red. The tests are your contract with current behavior. Honor it.

Phase 4: Prevent Recurrence (Ongoing)

Remediation without prevention is just a delayed repeat. The structural changes that prevent vibe coding technical debt from accumulating again are the same ones covered in the vibe coding best practices guide -- coverage as a merge gate, intent-level code review, and automated testing that runs on every pull request.

The difference at Day 90, compared to Day 1, is that you now have empirical evidence of what happens when these guardrails aren't in place. That evidence is useful in the conversation with your team about why the process needs to change.

For a full picture of what the technical debt costs in dollar terms, the TCO framework covers the five hidden cost categories and the math behind them. For engineering leaders who need to make the case to management for the investment in testing infrastructure, that analysis provides the numbers.

The Testing Gap: Why AI Code Quality Degrades Without Coverage

Everything in the vibe coding technical debt story traces back to one gap: the codebase grew faster than the test suite.

This is not a character flaw. It is an expected consequence of how AI coding tools work. GitHub's research shows developers complete tasks 55% faster with Copilot. Test writing velocity does not keep pace -- it stays the same, or gets worse because the code is harder to understand. The gap between code volume and test coverage grows with every sprint. After 90 days, that gap is the size of a production incident waiting to happen.
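The arithmetic behind that claim is simple to sketch. The model below is purely illustrative and rests on two assumptions, neither of which comes from the GitHub research. First, code volume per sprint scales with the speedup; treating the 55% task-speed figure as a volume figure is a simplification. Second, the amount of code the team can cover with tests stays at its pre-AI rate:

```typescript
// Purely illustrative model with assumed inputs, not measured data.
function testingGap(sprints: number, baseLinesPerSprint: number, speedup: number) {
  // Assumption: code written per sprint scales with the speedup...
  const written = sprints * baseLinesPerSprint * (1 + speedup);
  // ...while test coverage keeps pace only with the pre-AI rate.
  const covered = sprints * baseLinesPerSprint;
  return { written, covered, uncovered: written - covered, coverageRatio: covered / written };
}

for (const sprints of [1, 3, 6]) {
  console.log(sprints, testingGap(sprints, 1000, 0.55));
}
```

Under these assumptions the coverage ratio drops to about 65% (1 / 1.55) immediately and stays there. Meanwhile the absolute volume of untested code grows by the same amount every sprint, reaching roughly 3,300 lines after six two-week sprints in this example, close to the 90-day mark. If test-writing velocity actually falls, as described above, both numbers get worse.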

The fix is not telling developers to write more tests manually. They are already stretched. The fix is making test generation match code generation. This is why we built Autonoma as open-source: AI agents generate behavioral tests from your codebase at the same velocity that AI coding tools generate the code itself. The verification layer finally scales with the output rather than falling behind it.

The QA Engineer's Vibe Coding Survival Guide covers the practitioner-level detail on building this testing layer. For engineering leaders, the summary is straightforward: invest in automated test coverage before you start the refactoring work, not after. The test suite is not a cleanup artifact. It is the tool that makes cleanup safe.

Day 91: How Teams Recover From Vibe Coding Technical Debt

The teams that successfully remediate vibe-coded codebases without a full rewrite share a few patterns.

They started the test suite before the refactoring, not alongside it. They triaged by risk rather than by what annoyed them most. They treated the "works but nobody knows why" code as the highest-risk category, not something to ignore because it was working. And they built automated testing infrastructure, often using open-source agentic tools like Autonoma to generate that initial coverage quickly, that prevented the same patterns from accumulating again.

None of this is fast. The 90-day reckoning does not have a 90-day resolution. But teams that approach it systematically -- map the risk, build the tests, refactor incrementally, prevent recurrence -- come out with a codebase that is meaningfully better than what they started with, and a process that does not recreate the problem with every sprint.

The velocity that made vibe coding appealing on Day 1 is available again on Day 91. It just needs the infrastructure that makes it sustainable. For teams ready to start that transition, the production readiness guide covers the concrete steps to get there.

Frequently Asked Questions

What is vibe coding technical debt?

Vibe coding technical debt is the maintenance burden that accumulates when AI-generated code is shipped without adequate test coverage, architectural review, or consistency enforcement. It differs from traditional technical debt in three ways. First, it accumulates faster: a study of 8.1 million pull requests found technical debt increases 30-41% after AI coding tool adoption. Second, it concentrates in specific failure modes: missing error handling, duplicated logic across files, inconsistent abstractions, and functions that work but whose logic nobody can reconstruct. Third, it compounds at the codebase level rather than in isolated components, because AI tools generate locally correct code that doesn't account for global patterns. The debt shows up in how the pieces fit together rather than in any single function.

How long does it take for vibe coding technical debt to show up?

The timeline is broadly similar across teams. Warning signs appear around Day 30: duplicated logic, missing error handling, and unexplained dependencies. The velocity impact becomes measurable around Day 60, when feature requests take 3-5x longer than estimated and refactoring starts to feel impossible. The full reckoning typically arrives between Day 60 and Day 90. By then bugs consume 20-30% of sprint capacity, production incidents trace back to the original implementation, and the rewrite conversation starts. The exact timing varies with team size and with how much test coverage was in place from the start. Teams with meaningful E2E coverage typically see the curve flatten earlier; teams with no tests tend to hit the wall hard.

Should you rewrite or remediate a vibe-coded codebase?

For most teams with real users, remediation is the right choice. Rewrites are emotionally appealing because they feel decisive, but they have a well-documented failure mode. They take longer than estimated (usually 2-3x). They replicate hidden business logic bugs that were embedded in the original implementation. And they leave the existing product unmaintained during the build. Remediation is slower but survivable. The key sequence is to triage the risk surface area, build a behavioral test suite that documents current behavior, refactor incrementally behind the tests, and put guardrails in place to prevent recurrence. A rewrite makes sense only when the codebase is so early-stage that there are no real users yet and no significant accumulated behavior to preserve.

How do you build a behavioral test suite for a vibe-coded codebase?

The key insight is that you want tests that capture current behavior, not tests that verify intended behavior. In a vibe-coded codebase, these are different things. Start by identifying your critical user paths (login, core product actions, payment flows) and write tests that exercise those paths end to end. Run them against the current application and capture the results. These become your behavioral baseline. Any refactoring that changes those results is a potential regression, even if it looks like an improvement. For teams that need to build this coverage quickly, Autonoma is open-source and automates the process. Its AI agents read your codebase and generate behavioral tests from your routes, components, and user flows without manual test scripting. This is especially useful when the codebase is large and the team does not have weeks to spend writing tests by hand.

What patterns does vibe coding technical debt usually take?

Four patterns appear consistently. First, duplicated logic: the same function or business rule is implemented in three or four places, each slightly different, because each AI coding session generated its own version without checking for existing implementations. Second, inconsistent error handling: some paths throw, some return null, some log and continue, each pattern generated by a different AI session with different conventions. Third, dependency sprawl: 15-20 silently introduced packages that nobody consciously evaluated, some unmaintained, some duplicating existing functionality. Fourth, locally correct but globally inconsistent code. Each function works in isolation, but the way they fit together is fragile. State flows unpredictably, abstractions are inconsistent across modules, and touching one component has unexpected effects on adjacent ones.

How do you prevent vibe coding technical debt from coming back?

The structural prevention is simple to describe and requires discipline to maintain: make test coverage a merge gate, not a retrospective metric. No PR that touches a critical path ships without behavioral test coverage. In practice, this means treating test generation as a co-requisite of code generation. If your team uses AI tools to write code, use AI tools to generate tests for that code. The [vibe coding best practices guide](/blog/vibe-coding-best-practices) covers the operational model in detail. The teams that avoid the 90-day reckoning are the ones that made testing infrastructure a prerequisite for AI tool adoption at scale, not an afterthought.
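Here is a minimal sketch of such a gate as a CI step. It assumes per-file line-coverage percentages are already available; Istanbul-based tools such as Jest and nyc can emit them with the `json-summary` reporter. It also assumes your CI system supplies the list of changed file paths. The critical-path prefixes and the 80% threshold are placeholders. Line coverage is only a proxy for behavioral coverage, so treat a passing gate as necessary rather than sufficient:

```typescript
// Minimal sketch of coverage as a merge gate. Inputs are hypothetical.
type GateResult = { pass: boolean; failures: string[] };

function coverageGate(
  changedFiles: string[],
  lineCoverageByFile: Record<string, number>, // file path -> % of lines covered
  criticalPrefixes: string[],
  threshold = 80,
): GateResult {
  const failures: string[] = [];
  for (const file of changedFiles) {
    const critical = criticalPrefixes.some((prefix) => file.startsWith(prefix));
    if (!critical) continue; // only critical paths are gated
    const pct = lineCoverageByFile[file];
    if (pct === undefined) failures.push(`${file}: no coverage data (untested)`);
    else if (pct < threshold) failures.push(`${file}: ${pct}% < ${threshold}%`);
  }
  return { pass: failures.length === 0, failures };
}

const result = coverageGate(
  ["src/checkout/total.ts", "src/auth/session.ts", "docs/README.md"],
  { "src/checkout/total.ts": 92, "src/auth/session.ts": 41 },
  ["src/checkout/", "src/auth/"],
);
console.log(result);
```

The gate ignores files outside the critical prefixes and fails any critical file with no coverage entry outright. In CI you would exit with a non-zero status when `pass` is false, so the pull request cannot merge.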