The QA Engineer's Guide to Surviving the Vibe Coding Era

The skills QA engineers need for vibe coding are the highest-leverage capability gap in engineering right now. Vibe coding tools ship AI-generated code faster than any hand-maintained test suite can keep up with, and 16 of 18 engineering leaders surveyed hit serious quality failures within 90 days of adopting AI coding tools. This guide covers the specific skills QA engineers need to develop, how to position yourself for the roles opening up, and a concrete 90-day plan to get there. The short version: the testing void created by vibe coding makes QA engineers more critical than ever, not less.

Something broke on engineering teams this year, and most of them are still figuring out what it is. Developers ship more code faster than ever. Test coverage is not keeping up. Bugs that used to get caught in review are reaching production. Incidents are up. Confidence in releases is down. And on a lot of these teams, the QA function either got cut in the last round of layoffs or got handed a backlog nobody has time to work through.

Our overview of how vibe coding changes QA covered the landscape and the four stages of QA evolution. This guide is the specific playbook: what to learn, how to position yourself, and a 90-day plan to get there.

Redesigning how quality is enforced when code arrives this fast is the job. Not the job that will exist someday, but the actual work happening on engineering teams right now, often without a clear owner. The testing gap is massive and well-documented. This guide covers the skills QA engineers need to step into that role, how to make the case internally for the work, and a 90-day plan built around what is actually feasible without organizational buy-in on day one.

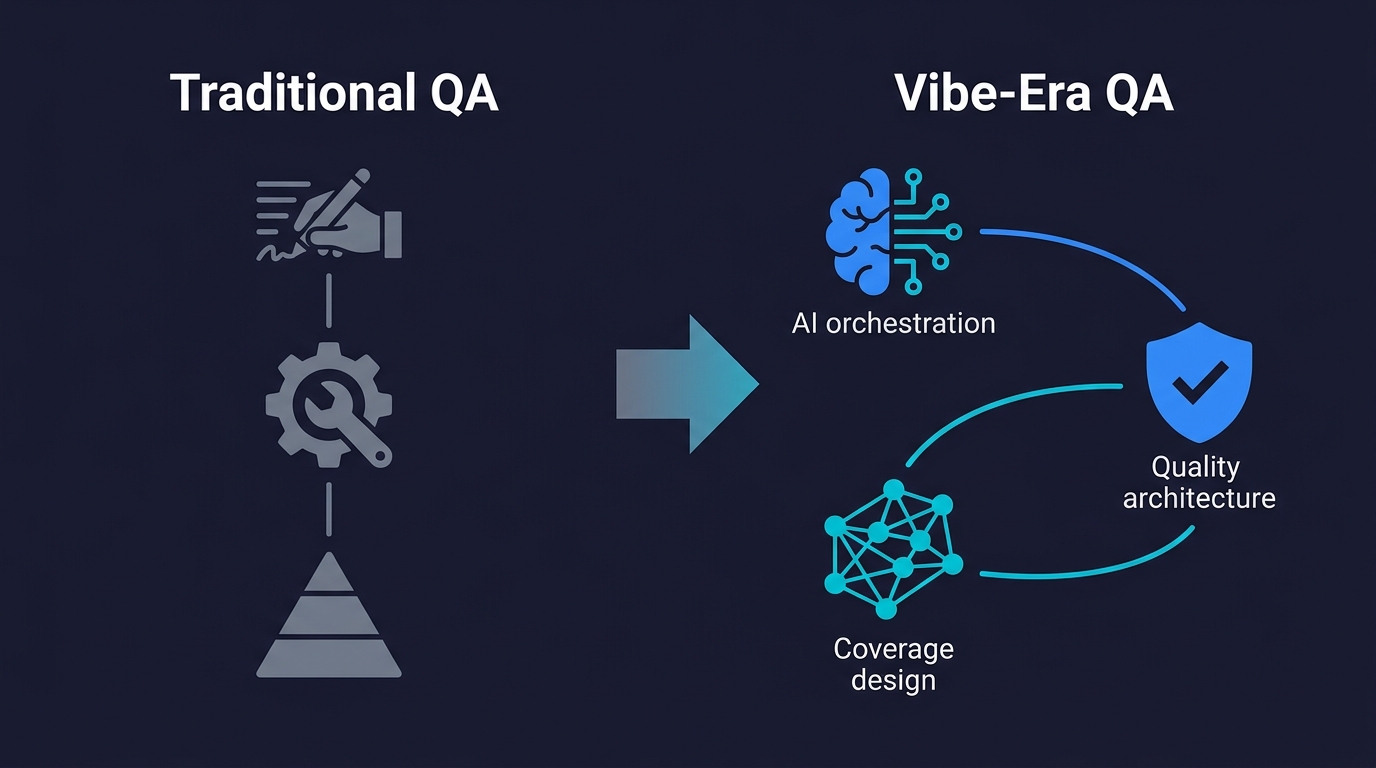

The New QA Skill Stack for Vibe Coding

The skills that made a QA engineer excellent in 2022 are necessary but no longer sufficient. Exploratory testing instincts, edge-case thinking, system-level understanding, and test design principles all carry forward. Here is what to add on top of them, in order of leverage.

The five skills, summarized:

- Prompt engineering for test generation: writing adversarial prompts that produce meaningful coverage, not happy-path noise

- AI output validation: structured review of AI-generated code for the specific failure classes AI tools produce

- Agentic testing orchestration: configuring and directing autonomous testing agents, shaping coverage architecturally

- Security testing for AI code: behavioral security testing against OWASP Top 10, focused on runtime behavior rather than static patterns

- Continuous testing pipeline architecture: designing quality infrastructure that keeps pace with AI-speed code generation

The four stages of QA evolution, from manual tester to AI testing orchestrator, map where you are now. The skill stack below is what moves you forward.

Prompt Engineering for Test Generation

This is the most immediately applicable skill and the easiest to underestimate. The ability to write precise, adversarial prompts that produce useful test coverage from AI tools is a distinct skill set. It is not just "telling the AI what to test."

The difference in practice: a weak prompt says "write tests for the login flow." A strong prompt says "generate boundary-value tests for the email validation in the login form, including SQL injection patterns, Unicode edge cases, and inputs exceeding 254 characters, then generate tests for concurrent session behavior when the same account logs in from two devices simultaneously."

The weak prompt produces happy-path tests that the developers already know work. The strong prompt produces the tests that catch the bugs vibe-coded implementations reliably miss. Developing this skill means learning the specific failure modes of AI-generated code, which happen at a documented rate and follow recognizable patterns: missing boundary checks, unsanitized inputs, optimistic auth assumptions, and race conditions in async flows.
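As a rough illustration of what the strong prompt should yield, the sketch below lists boundary-value cases for an email field and runs them against a validator. Everything here is an assumption made for the example: `validate_email`, the regular expression, and the sample inputs are hypothetical stand-ins, not code from any real login flow.

```python
import re

# Hypothetical validator standing in for AI-generated login code.
MAX_EMAIL_LENGTH = 254
_EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9-]+(\.[A-Za-z0-9-]+)+")


def validate_email(value):
    """Return True only for plainly formatted addresses within the length limit."""
    if not isinstance(value, str) or len(value) > MAX_EMAIL_LENGTH:
        return False
    return _EMAIL_RE.fullmatch(value) is not None


def make_email_of_length(total):
    """Build a plain address with exactly `total` characters."""
    suffix = "@example.com"
    return "a" * (total - len(suffix)) + suffix


# (input, expected result) pairs an adversarial prompt should produce.
BOUNDARY_CASES = [
    ("user@example.com", True),              # baseline happy path
    (make_email_of_length(254), True),       # exactly at the limit
    (make_email_of_length(255), False),      # one character past the limit
    ("", False),                             # empty input
    ("' OR '1'='1' --@example.com", False),  # SQL injection pattern
    ("us\u0435r@example.com", False),        # Cyrillic homoglyph in the local part
    ("user@example.com\n", False),           # trailing newline
]


def run_boundary_cases():
    """Return the inputs whose actual result differs from the expectation."""
    return [repr(value) for value, expected in BOUNDARY_CASES
            if validate_email(value) != expected]


print(run_boundary_cases())
```

An empty list means the validator handled every case. Pointing the same case table at the real implementation is where the missing boundary checks tend to show up.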

Practice this concretely: take a recently vibe-coded feature in your codebase, write a test generation prompt, review the output critically, then iterate the prompt until the coverage addresses the failure modes you'd actually be worried about. Do this weekly. Track what prompt structures reliably surface meaningful test gaps versus what produces noise.
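One lightweight way to do that tracking is a small session log. The sketch below is only a suggestion: the `PromptSession` fields, the structure labels, and the sample numbers are all invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PromptSession:
    """One test-generation session; all field names are illustrative."""
    prompt_structure: str     # e.g. "bare feature name", "failure-mode list"
    tests_generated: int
    tests_exposing_gaps: int  # tests that revealed a real defect or missing check


def signal_ratio_by_structure(sessions):
    """Share of generated tests that exposed a real gap, per prompt structure."""
    generated = defaultdict(int)
    useful = defaultdict(int)
    for session in sessions:
        generated[session.prompt_structure] += session.tests_generated
        useful[session.prompt_structure] += session.tests_exposing_gaps
    return {
        name: useful[name] / generated[name]
        for name in generated
        if generated[name] > 0
    }


# Made-up sample data for three sessions.
sessions = [
    PromptSession("bare feature name", 20, 1),
    PromptSession("failure-mode list", 12, 5),
    PromptSession("failure-mode list", 8, 3),
]
ratios = signal_ratio_by_structure(sessions)
print(ratios)
```

A few weeks of entries like these make it easier to see which prompt structures are worth reusing and which mostly generate noise.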

The industry term for this broader shift is "vibe testing," the counterpart to vibe coding where test cases are generated from natural language prompts rather than scripted code. The concept is sound. The execution gap is that most vibe testing today produces happy-path coverage. The QA engineer's skill is in making vibe testing adversarial, targeted, and connected to the specific failure modes that AI-generated code actually produces.

AI Output Validation

Most teams have no systematic way to evaluate whether the code their AI tools just produced is actually correct. This is a gap QA engineers are uniquely positioned to fill, and it pays to develop it as an explicit capability.

AI output validation is not code review in the traditional sense. It is a structured adversarial review designed to surface the specific classes of error that AI tools produce. The security angle alone justifies it: 53% of teams that shipped AI-generated code later discovered security issues that passed initial review, and 45% of AI-generated code samples introduced OWASP Top 10 vulnerabilities. Those issues were not found by developers reviewing their own AI-generated code. They were found later, by someone looking specifically for them.

Develop a personal checklist for AI output review. It should cover auth assumptions (did the AI assume the calling user has the right permissions without verifying?), data validation (where does user input touch database queries or file system operations?), error handling (what happens on the unhappy path the AI didn't implement?), and concurrency (does this code assume single-user access where the real system has multiple users?). These four categories catch the majority of vibe coding failures documented in real production incidents.
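The checklist can live in a document, but encoding it makes skipped categories visible. The following is a minimal sketch under that assumption. The four categories and their questions come from the paragraph above, while the `Review` class, its methods, and the sample notes are hypothetical.

```python
from dataclasses import dataclass, field

# Categories and review questions taken from the checklist described above.
CHECKLIST = {
    "auth": "Did the code assume the caller has permission without verifying it?",
    "data_validation": "Where does user input reach database queries or file system operations?",
    "error_handling": "What happens on the unhappy path that was not implemented?",
    "concurrency": "Does the code assume single-user access in a multi-user system?",
}


@dataclass
class Review:
    """Findings for one AI-generated change; the structure is illustrative."""
    change_id: str
    findings: dict = field(default_factory=dict)  # category -> list of notes

    def _require_known(self, category):
        if category not in CHECKLIST:
            raise ValueError(f"unknown checklist category: {category}")

    def record(self, category, note):
        """Attach a finding to a category."""
        self._require_known(category)
        self.findings.setdefault(category, []).append(note)

    def mark_clean(self, category):
        """Record that a category was reviewed and nothing was found."""
        self._require_known(category)
        self.findings.setdefault(category, [])

    def unreviewed(self):
        """Categories that have not been looked at yet."""
        return [c for c in CHECKLIST if c not in self.findings]


review = Review("example-change")
review.record("auth", "Handler trusts a user_id taken from the request body.")
review.mark_clean("concurrency")
print(review.unreviewed())
```

Separating "reviewed and clean" from "not reviewed" is the point of the design: an empty findings list should never be mistaken for a completed review.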

Agentic Testing Orchestration

This is the emerging skill with the highest long-term leverage. As AI testing tools become the infrastructure layer for quality at most engineering organizations, the QA engineers who can configure, direct, and evaluate those tools will hold a structural advantage.

Agentic testing means working with platforms that read your codebase, plan test coverage from your routes and components, execute tests autonomously, and self-heal when the code changes. Autonoma, which we built for exactly this workflow, is the reference implementation. The work is not writing test scripts. It is understanding coverage architecture: which flows matter most, how to structure codebase organization to make testing more effective, how to evaluate whether the agents are testing the right things.

This requires a different mental model from traditional test automation. In traditional automation, you write a test and it tests exactly what you specified. With agentic testing, you shape the system's coverage by how you structure your code and what guidance you provide to the agent. The skill is architectural, not scripting-level.

Start by connecting your current project to an agentic testing tool and spending time understanding what it tests and what it misses. The gaps it leaves will teach you more about coverage architecture than any tutorial.
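A simple way to study what a tool misses is to compare the routes your application exposes with the routes its runs actually exercised. The sketch below assumes you can obtain both lists somehow, for example from a router definition and a test report. It does not use any particular tool's API, and every route name is made up.

```python
def coverage_gaps(app_routes, tested_routes, critical_routes):
    """Split untested routes into critical gaps and lower-priority gaps."""
    untested = set(app_routes) - set(tested_routes)
    critical = sorted(untested & set(critical_routes))
    other = sorted(untested - set(critical_routes))
    return critical, other


# Hypothetical inputs: all routes, routes a test run touched, routes you consider critical.
app_routes = ["/login", "/signup", "/checkout", "/settings", "/admin/export"]
tested_routes = ["/login", "/signup", "/settings"]
critical_routes = ["/login", "/checkout", "/admin/export"]

critical, other = coverage_gaps(app_routes, tested_routes, critical_routes)
print("critical gaps:", critical)
print("other gaps:", other)
```

Deciding which routes belong in `critical_routes` is the architectural judgment the tool cannot make for you.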

Security Testing for AI Code

This deserves its own entry because the security failure rate of vibe-coded applications is high enough to make it a specialized discipline, not just an add-on to general QA work.

The security risk profile of AI-generated code has specific characteristics. Static scanners miss a large class of vulnerabilities because the vulnerabilities exist in runtime behavior, not code patterns. The Moltbook case study is the canonical example: a Supabase key that was safe by design became a database backdoor because RLS was never enabled. A static scanner would not have caught it. A behavioral security test that authenticates as User A and attempts to read User B's data through the API catches missing access control immediately.
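The User A versus User B check can be sketched in a few lines. The example below uses an in-memory stand-in for an API rather than a real HTTP client or database, and it is not a reconstruction of the incident above. The handlers, user names, and records are all hypothetical.

```python
# In-memory stand-in for a table that has no row-level access checks.
NOTES = {
    1: {"owner": "user_a", "body": "A's private note"},
    2: {"owner": "user_b", "body": "B's private note"},
}


def get_note_vulnerable(session_user, note_id):
    """Checks that a session exists but never checks ownership."""
    if session_user is None:
        return 401, None
    note = NOTES.get(note_id)
    return (200, note["body"]) if note else (404, None)


def get_note_fixed(session_user, note_id):
    """Same endpoint with an ownership check added."""
    if session_user is None:
        return 401, None
    note = NOTES.get(note_id)
    if note is None or note["owner"] != session_user:
        return 404, None  # do not reveal that the record exists
    return 200, note["body"]


def leaks_other_users_data(handler):
    """Authenticate as user A, request user B's note, and report whether it leaks."""
    status, body = handler("user_a", 2)
    return status == 200 and body is not None


print(leaks_other_users_data(get_note_vulnerable))
print(leaks_other_users_data(get_note_fixed))
```

Both handlers look plausible when read in isolation. Only the cross-user request separates them, which is why this class of defect tends to be found by behavioral tests rather than pattern scanning.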

The skill to develop here is behavioral security testing: testing the runtime behavior of an application against known attack patterns rather than scanning the source code for known vulnerable patterns. Learn OWASP Top 10 well enough to write tests that verify the application's defenses against each category. In practice that means IDOR (insecure direct object reference) testing, authentication bypass testing, and injection testing through application interfaces rather than static analysis. These are skills most QA engineers have partial exposure to. Deepen them until you can run a structured behavioral security review of a vibe-coded feature before it ships.
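Authentication bypass testing often reduces to a sweep: call every non-public endpoint without valid credentials and flag anything that does not refuse. The sketch below simulates that with plain functions in place of HTTP calls. The endpoint table, token values, and handler names are assumptions made for the example.

```python
# Hypothetical endpoint table: each handler takes a token and returns an HTTP status.
def guarded(token):
    return 200 if token == "valid-token" else 401


def unguarded(token):
    return 200  # no server-side check, so the API answers anyone


ENDPOINTS = {
    "/api/profile": guarded,
    "/api/orders": guarded,
    "/api/export": unguarded,  # guard was only implemented in the UI
    "/api/health": unguarded,  # intentionally public
}
PUBLIC_ENDPOINTS = {"/api/health"}
BAD_TOKENS = (None, "", "expired-token")


def endpoints_missing_auth(endpoints, public):
    """Return non-public endpoints that accept a missing or invalid token."""
    missing = []
    for path in sorted(endpoints):
        if path in public:
            continue
        statuses = [endpoints[path](token) for token in BAD_TOKENS]
        if any(status not in (401, 403) for status in statuses):
            missing.append(path)
    return missing


print(endpoints_missing_auth(ENDPOINTS, PUBLIC_ENDPOINTS))
```

Against a real service the handlers would be replaced by HTTP requests, but the shape of the check stays the same: an explicit allowlist of public routes, and a refusal expected everywhere else.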

Continuous Testing Pipeline Architecture

Understanding how to design and maintain a testing pipeline that keeps pace with AI-speed code generation is a leadership-level skill that most QA engineers are not developing fast enough. The death of the traditional test suite is not just a problem for developers. It is an opportunity for QA engineers who can architect what replaces it.

The replacement is continuous, codebase-grounded coverage that runs automatically on every commit, reports failures in real time, and does not require manual maintenance as the codebase evolves. Designing this pipeline requires understanding CI/CD integration, test coverage metrics that meaningfully measure risk rather than just coverage percentage, and how to make the feedback loop fast enough that developers actually respond to test failures before they stack up.
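To make the difference between coverage percentage and risk concrete, here is one possible way to weight coverage by how much a failure in each flow would hurt. The weighting scheme, flow names, and numbers are assumptions for illustration, not an established metric.

```python
def line_coverage(flows):
    """Plain coverage: covered lines divided by total lines."""
    total = sum(flow["lines"] for flow in flows)
    covered = sum(flow["covered_lines"] for flow in flows)
    return covered / total


def risk_weighted_coverage(flows):
    """Coverage where each line counts in proportion to its flow's risk weight."""
    total = sum(flow["lines"] * flow["risk"] for flow in flows)
    covered = sum(flow["covered_lines"] * flow["risk"] for flow in flows)
    return covered / total


# Made-up flows; "risk" is a hand-assigned weight for the cost of a failure.
flows = [
    {"name": "marketing pages", "lines": 800, "covered_lines": 760, "risk": 1},
    {"name": "settings", "lines": 150, "covered_lines": 120, "risk": 2},
    {"name": "payments", "lines": 50, "covered_lines": 5, "risk": 10},
]

print(round(line_coverage(flows), 3))
print(round(risk_weighted_coverage(flows), 3))
```

With these sample numbers, plain line coverage is 88.5% while the risk-weighted figure is about 65.6%, because the barely tested payments flow carries most of the weight. A pipeline gate built on the second number would block a release that the first number waves through.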

This is the skill that moves QA engineers from an execution role into an architectural one.

Career Positioning Strategies for QA Engineers

Skills matter. How you position them matters at least as much.

Rename the Role You're Selling

"QA Engineer" is a title that signals a specific function to most engineering leaders: test writing and test execution. In a vibe coding world, that function is being automated. What engineering leaders actually need, and are struggling to find, is something closer to "Quality Architect" or "AI Testing Lead" or "Quality Engineering Lead." The function is designing and owning the quality infrastructure that catches what AI coding tools produce.

This is not a superficial rebrand. It is a meaningful reframing of the value you provide. You are not executing tests. You are building and owning the system that ensures AI-generated code meets production standards. That is a different role with different leverage, and it commands different compensation and organizational influence.

When talking to your current leadership or interviewing at new companies, frame your value in terms of risk and velocity, not test counts. "I own the quality infrastructure that lets us ship AI-generated code without production incidents" is more compelling than "I write and maintain our E2E test suite." Both might describe the same actual work. The framing determines how leadership thinks about your function.

Have the Vibe Coding Governance Conversation

Most engineering organizations that have adopted AI coding tools have done so without establishing quality governance for the AI-generated code. They are shipping faster and experiencing quality problems, and they do not have a systematic response. This is a gap you can fill if you initiate the conversation proactively.

The conversation to have with your engineering director or VP of Engineering is not "our QA process needs updating." It is "our team is shipping AI-generated code without governance for the quality risk that creates. Here's the specific failure pattern that's been documented at other organizations, here's what it costs when it hits production, and here's the framework I'd propose for managing it." Then bring the data: the quality failure rates referenced above, 63% of developers spending more time debugging AI code than writing it manually, and the concrete bug rate data and triage playbook.

This conversation positions you as the engineer who understood the organizational risk before leadership did. That is how QA engineers become quality leads.

Specialize Visibly

The QA engineers who are positioning most successfully in the vibe coding era are not generalists. They are specialists who have developed a visible point of view on a specific dimension of the vibe coding quality problem. Security testing for AI code is one. Agentic testing architecture is another. The startup QA context is a third, where the vibe coding patterns are most aggressive and the quality governance is most absent.

Pick the specialization that intersects with your existing experience and the market you want to operate in. Then build visibility in that specialization: write about it publicly, contribute to community discussions, document your approach. The community of engineers who have developed serious thinking about vibe coding and QA is still small. Visibility in it is achievable.

Where the New QA Opportunities in Vibe Coding Are

The Quality Lead Role at AI-Native Teams

The fastest-growing category of QA opportunity is the quality lead at teams that have fully adopted AI coding tools and are hitting quality problems. These teams move fast, have real revenue at stake, and cannot afford the production incidents that vibe coding failures create. They also do not want to slow down their AI coding workflows with manual quality gates.

What they need is a QA engineer who understands agentic testing, can set up continuous quality infrastructure that does not create friction for developers, and can establish the governance framework that keeps AI-generated code from reaching production with critical defects. This role does not exist as a named job description at most companies yet. It is being invented by QA engineers who walk into the right conversation with the right framing.

The market backdrop makes it urgent: the AI coding tools market is valued at $4.7 billion globally in 2026, projected to reach $12.3 billion by 2027. Every dollar of that growth is code that needs quality infrastructure. The QA engineers who build expertise in that infrastructure now are positioning themselves for the market that develops over the next 18 months.

AI Testing Infrastructure Roles

There is an emerging category of role at companies building or implementing AI testing tools. These companies need QA engineers who understand both the quality engineering side and the AI tooling side deeply. The combination is rare, which means compensation is strong and the work is high-leverage.

This includes companies building testing agents, companies implementing AI testing infrastructure at scale, and consulting organizations helping enterprises navigate the vibe coding quality transition. Platforms like Autonoma that read codebases and generate tests autonomously need QA engineers who can evaluate coverage, identify gaps, and shape how the agents approach quality.

Platform and DevEx Teams

Platform engineering teams at larger organizations are increasingly responsible for the tooling and infrastructure that other engineers use to ship software. As AI coding tools become part of that tooling stack, platform teams need QA engineers who can evaluate those tools, establish quality standards for AI-generated code, and build the testing infrastructure that enforces those standards.

This is a less visible opportunity than the AI testing startup world, but it is larger. The majority of companies adopting AI coding tools are enterprises, and their platform teams are the ones making the infrastructure decisions that determine how AI-generated code gets tested before it ships.

Your 90-Day Vibe Coding QA Action Plan

Knowing what skills to develop and which opportunities are opening is not enough without a concrete starting point. Here is a 90-day structure that moves you from being aware of the shift to being positioned for it.

Days 1 to 30: Skill audit and targeted learning. Start by auditing your current skills against the stack above. Be honest about gaps. Then pick one skill to develop deeply in the first month: prompt engineering for test generation is the fastest to develop and the most immediately applicable. Spend two hours a week practicing adversarial test prompt writing against real code in your current project. Document what you learn. Connect your current project to an agentic testing tool, even just for evaluation, and spend time understanding what it covers and misses.

Days 31 to 60: Organizational positioning. By the second month, you should have enough practical experience with agentic testing and AI output validation to have a credible conversation with your engineering leadership. Prepare the vibe coding governance pitch: the data on quality failure rates, the specific risks in your organization's current workflow, and a concrete proposal for what changes. Have that conversation. Whether the organization responds or not, you've established yourself as the engineer who understood the risk.

Simultaneously, pick your specialization and start building visibility. Write one piece of technical content about your specific angle on vibe coding quality. Post it where your professional community reads.

Days 61 to 90: Market evaluation and role positioning. By the third month, you should have a clear sense of whether your current organization is moving in a direction that leverages your evolving skills. If it is, great. If it is not, use the third month to evaluate the market. Look specifically at AI-native companies, platform engineering teams, and companies building AI testing infrastructure. The conversations you have in those interviews will also sharpen your positioning, regardless of whether they lead to offers.

The specific milestones:

- By day 30: Run at least ten adversarial test generation sessions and document what makes prompts effective.

- By day 60: Have the governance conversation with leadership and publish at least one piece of technical content about vibe coding quality.

- By day 90: Have a clear answer to where your skills are most valued and a concrete plan to get there.

Vibe Coding and QA: The Actual Situation

The instinct to be anxious about vibe coding is understandable but based on a misread of the situation. The threat to QA engineers is not that AI will test the code it wrote. That does not work, and the industry is learning that quickly. The threat is remaining associated with a model of QA that cannot scale with AI-speed code generation: manual test writing, brittle script maintenance, and periodic release gates.

The opportunity is in the gap between how fast vibe coding produces code and how fast the industry can establish quality infrastructure for it. That gap is real, it is documented, it is costing organizations money, and it is not closing by itself.

The practical testing checklist for vibe-coded apps is one tool. The production readiness assessment is another. But tools are only useful to engineers who have positioned themselves to apply them. The 90-day plan above is about that positioning.

QA engineers who understand vibe coding workflows, who can architect the quality infrastructure that catches what AI tools produce, and who can communicate the organizational risk of not having that infrastructure are not in a shrinking market. They are in the fastest-growing category of engineering need in 2026. The question is whether you get there before the position is obvious to everyone.

QA engineer vibe coding skills refer to the specific capabilities needed to ensure quality in codebases where AI tools like Cursor, Lovable, and Bolt generate significant portions of the code. This includes prompt engineering for test generation, AI output validation, behavioral security testing for AI-generated code, and agentic testing orchestration. These skills differ from traditional QA in that they focus on the specific failure patterns of AI-generated code (missing boundary checks, optimistic auth, unsanitized inputs) rather than the failure patterns of human-authored code. The best starting point is connecting your codebase to an agentic testing tool like Autonoma and developing a structured approach to adversarial test prompt writing.

The data does not suggest that vibe coding will replace QA engineers. It points in the opposite direction. CodeRabbit found that AI-generated code produces 1.7x more major issues than human-written code. Of 18 engineering leaders who adopted vibe coding tools, 16 hit serious quality failures within 90 days. The vibe coding and QA dynamic is not that AI replaces QA engineers. It is that AI-generated code requires more rigorous quality infrastructure than human-written code, not less. The QA tasks at risk are script maintenance and manual test execution. The QA work that becomes more valuable is testing strategy, coverage architecture, security testing, and AI output validation. Engineers who shift from execution-level work to infrastructure-level work are positioned well.

Several titles are emerging that reflect the shift. Quality Engineering Lead, AI Testing Lead, Quality Architect, and Developer Quality Experience Engineer are all being used at companies navigating this transition. The common thread is that these titles signal infrastructure ownership rather than test execution. A Quality Engineering Lead is responsible for the system that catches bugs, not for catching individual bugs manually. At AI-native startups, the role is often described as 'the person who makes sure what the AI ships is actually correct.' The title matters less than the framing: position yourself as owning the quality infrastructure, not executing the test plan.

To build agentic testing skills, start by connecting a real project to an agentic testing tool and spending time understanding its coverage decisions. Tools like Autonoma read your codebase, plan test cases from your routes and components, and execute tests autonomously. The learning is in understanding what the agent tests, what it misses, and how codebase structure affects coverage quality. Develop the architectural intuition for how to organize code and express intent in ways that make agentic testing more effective. Then practice shaping coverage by providing structured guidance rather than writing individual test scripts. The skill is closer to coverage architecture than script writing.

Behavioral security testing is the highest-leverage specialization. Static scanners miss the majority of vibe coding security vulnerabilities because those vulnerabilities exist in runtime behavior, not code patterns. The skills to develop: IDOR testing (verify that authenticated users cannot access other users' data through the API), authentication bypass testing (verify that auth guards are actually enforced at every endpoint, not just the UI layer), injection testing through application interfaces rather than static analysis, and database access control verification (confirm that row-level security, API key scoping, and permission checks are functioning). Work through the OWASP Top 10 as a test specification rather than a reading list. Write a test for each category and verify your current application's defenses.

The best opportunities are at AI-native teams that have adopted vibe coding tools and are experiencing the quality problems that follow, platform engineering teams at enterprises building AI coding tool infrastructure, and companies building AI testing tools themselves. Tools and platforms in the vibe coding and QA space include Autonoma for agentic testing, along with companies building developer experience infrastructure for AI-heavy teams. Job descriptions may not yet use the phrase 'vibe coding QA.' Look instead for signals like 'AI-generated code quality,' 'agentic testing,' 'AI output validation,' and 'quality infrastructure' in the role description. Those signals indicate teams that understand the problem and are looking for someone who does too.