Vibe coding and QA is the friction point nobody in the industry is talking about honestly. Vibe coding (a term coined by Andrej Karpathy describing AI-assisted development where developers describe intent and AI writes the code) has made building software dramatically faster, but it has created a testing void that grows with every AI-generated commit. QA engineers are not being replaced by vibe coding. They are being needed in a fundamentally different way: less as script writers and manual testers, more as quality strategists who can orchestrate AI-powered testing infrastructure at the speed that vibe coding demands. The role is changing. It is not disappearing.

If you have spent the last year watching vibe coding reshape your industry and feeling like your QA skills are slowly becoming irrelevant, you are not imagining it. The specific skills that defined QA for the last decade (writing Selenium scripts, maintaining test suites, doing systematic manual regression passes) are genuinely less valuable than they used to be. That part of the anxiety is accurate.

The part that is wrong is the conclusion people draw from it.

The automation frameworks you spent years building feel fragile against a codebase that changes at AI speed. The manual testing workflows cannot keep up with a developer shipping three features before lunch. The old tools are not broken. They are just slower than the problem they are trying to solve. That mismatch is real, and it is worth naming honestly before talking about what comes next.

The Testing Void Vibe Coding Created

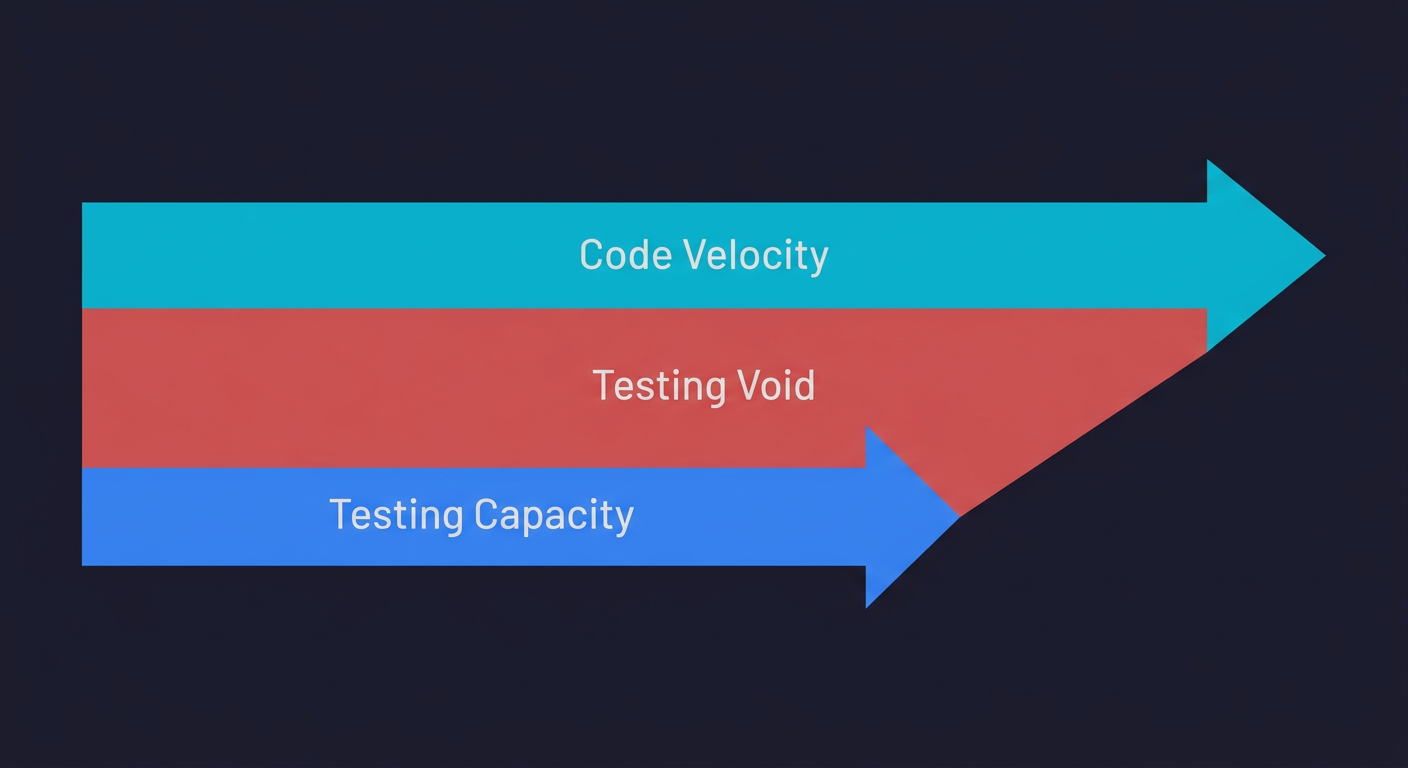

Here is what vibe coding actually did to software quality: it broke the relationship between shipping velocity and quality verification. Before AI coding tools, the speed at which a developer could ship code roughly matched the speed at which a QA team could test it. Not perfectly, but the gap was manageable. Vibe coding obliterated that balance.

A developer using Cursor or Claude Code can now generate and ship features in hours that would have taken days. The vibe coding testing gap that emerged from this acceleration is enormous. AI-generated code ships 1.7x more major issues than human-written code. 63% of vibe coders spend more time debugging after shipping. Research from GitClear shows 4x code duplication rates in AI-generated codebases, and 45% of AI-generated code contains security flaws. Production incidents are up. Real-world vibe-coded apps have broken in production in ways that proper QA coverage would have caught before they reached users.

The testing void is not a reason to fear the future of QA. It is the argument for why QA is more important now than it has ever been. The vibe coding bubble is not a technology failure. It is a verification failure. And verification is precisely what QA professionals were built to provide.

What the QA Engineer Role Looked Like Before Vibe Coding

To understand where QA is going, it helps to be honest about where it has been.

For most of its history, the QA role was reactive and manual. A developer shipped a feature. QA wrote test cases. QA executed test cases, mostly by hand. QA filed bugs. The developer fixed bugs. QA retested. This cycle repeated for every release. It was slow, it was expensive, and it created a bottleneck that engineering teams often resented.

The first wave of automation did not fundamentally change the dynamic. QA engineers learned Selenium, then Cypress, then Playwright. They wrote scripts instead of clicking buttons. The tests ran faster. But someone still had to write and maintain every script. Every UI change broke something. The automation backlog became a different version of the manual backlog. Faster, but not structurally different.

This is the version of QA that vibe coding is genuinely disrupting. Script-by-script automation, maintained by hand and verified by clicking through user flows, is being replaced: not by vibe coding itself, but by the agentic testing layer that vibe coding made necessary.

If your job today is primarily writing Playwright scripts for user flows that an AI could document and test automatically, that specific job is changing. The skills are not wasted. The orientation needs to shift.

The QA Role That Vibe Coding Actually Needs

Vibe coders cannot test their own software. This is not an opinion; it is a structural reality. At startups built on vibe coding, the developer building the product typically has limited testing experience and no time to acquire it. They ship features from prompts. They move fast. They need someone who can think about quality systematically, someone who asks "what happens when this fails?" before a user finds out.

That person is a QA engineer, operating at a different level than before.

The skills that vibe coding makes critical are not the ones that get threatened by AI. They are the judgment calls that AI cannot make: deciding which user flows are critical enough to test, understanding what "good enough" means for a given risk tolerance, diagnosing why something broke in a way that the error message does not explain, and designing a testing strategy that covers a codebase that changes every day.

Vibe-coded apps also introduce new categories of risk that traditional QA was not designed to address. AI-generated code contains 2.74x more XSS vulnerabilities. Logic errors appear in places where the code looks syntactically correct but behaves incorrectly under edge cases the AI never considered. These require QA engineers who understand not just how to run tests, but what to test for in AI-generated code specifically.
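To make "what to test for" concrete, here is a minimal sketch in Python of one such check: pushing known XSS payloads through a render function and flagging any that come back unescaped. The `render_comment` function and the payload list are hypothetical stand-ins, not code from any real app, and a verbatim-substring check is a crude heuristic rather than a complete security test.

```python
import html

# Hypothetical payloads chosen for illustration; a real suite would use a maintained list.
XSS_PAYLOADS = [
    "<script>alert(1)</script>",
    '"><img src=x onerror=alert(1)>',
    "<svg onload=alert(1)>",
]


def render_comment(user_text: str) -> str:
    """Hypothetical render function: escapes user input before embedding it in HTML."""
    return f'<p class="comment">{html.escape(user_text)}</p>'


def find_unescaped_payloads(render, payloads):
    """Return the payloads that survive rendering verbatim, i.e. were not escaped."""
    return [p for p in payloads if p in render(p)]


if __name__ == "__main__":
    leaked = find_unescaped_payloads(render_comment, XSS_PAYLOADS)
    print("unescaped payloads:", leaked)
```

Pointing the same helper at a render function that interpolates input directly would return every payload, which is the kind of syntactically valid but unsafe code this section describes.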

The QA professional that vibe coding needs is not a script writer. It is a quality strategist: someone who can look at a fast-moving, AI-generated codebase and design the verification layer that keeps it trustworthy.

The Four Stages of QA Evolution in the Vibe Coding Era

The transition is not a single leap. It happens in stages, and understanding where you are on the path matters.

Stage 1 was the manual tester. Test cases written in spreadsheets. Execution by hand. Bugs filed in Jira. This work is largely gone or going. Not because of vibe coding specifically, but because automation made it uneconomical years ago.

Stage 2 was the automation engineer. Selenium, Cypress, Playwright. Test scripts checked into the repo. CI/CD integration. This is where most mid-career QA professionals sit today. Some of this work is being commoditized by AI. Not eliminated, but reduced in value as the tools for generating test scripts improve.

Stage 3 is the quality strategist. This is where the role is heading for senior QA professionals and QA managers. The job shifts from "write tests" to "design the quality system." What needs to be tested? At what layers? With what coverage? How do we know when the system is trustworthy enough to ship? These are judgment calls that require deep product understanding and testing expertise, and AI cannot make them.

Stage 4 is the AI testing orchestrator. This is emerging now. These are QA professionals who understand how to configure, direct, and interpret agentic testing systems: tools that connect to the codebase, plan test cases from code analysis, execute them automatically, and self-heal when the code changes. The skill is not coding the tests. It is understanding what the AI-generated tests cover, what they miss, and how to close the gaps.

| Stage | Role | Core Skills | Tools | Status |
|---|---|---|---|---|
| 1 | Manual Tester | Test case writing, exploratory testing, bug reporting | Spreadsheets, Jira, manual browsers | Largely phased out |
| 2 | Automation Engineer | Script writing, CI/CD integration, framework maintenance | Selenium, Cypress, Playwright | Being commoditized by AI |
| 3 | Quality Strategist | Risk-based testing, coverage design, production readiness assessment | Coverage modeling, quality dashboards, risk frameworks | Current transition target |
| 4 | AI Testing Orchestrator | Agentic testing configuration, AI output interpretation, gap analysis | Autonoma, codebase-connected AI testing platforms | Emerging role |

Stage 4 is already accessible today. Autonoma is the agentic testing platform QA engineers use to configure that layer: connect your codebase, direct the agents toward the flows that matter most, and interpret the coverage results. The script-writing work is gone, and what remains is exactly the judgment-layer work that defines the quality strategist role.

Why Testing Is Being Democratized (And What That Means for QA)

One of the more disorienting changes in the vibe coding era is that testing itself is being democratized. Testing a vibe-coded app no longer requires writing code. AI-powered tools can generate and run tests from a codebase automatically, with no Playwright expertise required. Product managers are running tests. Founders are checking their own user flows without a QA team.

This sounds threatening. It is actually clarifying.

When basic test execution is democratized, what remains valuable is the expertise that makes testing meaningful. Anyone can run a test. Not everyone can interpret what a test failure actually means for the user experience. Not everyone can design a test suite that catches the edge cases that matter without wasting CI resources on cases that do not. Not everyone can look at a new AI-generated feature and identify the three user flows most likely to fail.

The democratization of test execution raises the floor for everyone. It raises the ceiling for QA professionals who understand what to do with the output.

This is the same pattern that repeated in every previous automation wave. When code deployment was automated, it did not eliminate DevOps engineers. It created the DevOps discipline. When monitoring was automated, it did not eliminate SREs. It elevated what SREs were expected to know. Testing automation follows the same path.

Vibe Testing: The QA Side of the Equation

If vibe coding is about building software through natural-language prompts, vibe testing is its counterpart: using AI agents to generate, execute, and maintain tests without writing traditional automation scripts. The term is gaining traction across QA communities, and it describes a real shift in how verification happens.

Vibe testing is not a replacement for QA strategy. It is a tool within the QA professional's arsenal. Platforms like Autonoma connect directly to a codebase, read routes and user flows, generate test cases automatically, execute them against running applications, and self-heal when the code changes. This is what agentic testing looks like in practice: no Playwright scripts to maintain, no test code to debug, no manual regression passes.

But vibe testing without QA strategy is like vibe coding without code review: fast and dangerous. Someone still needs to decide what risk tolerance is acceptable, which user flows are critical, and whether the AI-generated tests are actually covering the failure modes that matter. That judgment layer is where QA professionals add value that no tool can replicate.

Assessing Production Readiness Is Now a QA Skill

One underrated consequence of vibe coding is that the question "is this ready to ship?" has become significantly harder to answer. Assessing whether a vibe-coded app is production ready requires someone who understands not just whether the tests pass, but whether the tests are testing the right things.

This is a QA skill. It has always been a QA skill. Vibe coding just made it a much more prominent one because the developers shipping code often have no framework for answering that question themselves.

QA engineers who develop fluency in production readiness assessment, who can walk into a vibe-coded codebase and give an honest, structured answer to "is this safe to ship?", are solving a problem that the entire industry currently has and few people can address. That is a very strong career position.
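One way to picture the difference between "the tests pass" and "the tests are testing the right things" is a readiness report that tracks both separately. The sketch below is an assumption-laden illustration in Python: the `FlowResult` fields and the `readiness_report` function are hypothetical, and deciding which flows count as critical is assumed to be a human judgment, not something a tool provides.

```python
from dataclasses import dataclass


@dataclass
class FlowResult:
    name: str       # user flow, e.g. "checkout"
    critical: bool  # marked critical by a person, not by the tool
    covered: bool   # at least one test exercises the flow
    passed: bool    # all tests for the flow passed (ignored when not covered)


def readiness_report(flows):
    """Report passing tests and coverage of critical flows as separate signals."""
    untested_critical = [f.name for f in flows if f.critical and not f.covered]
    failing = [f.name for f in flows if f.covered and not f.passed]
    return {
        "all_tests_pass": not failing,
        "untested_critical_flows": untested_critical,
        "failing_flows": failing,
        # Ready only if nothing fails AND no critical flow is untested.
        "ready": not failing and not untested_critical,
    }


if __name__ == "__main__":
    flows = [
        FlowResult("login", critical=True, covered=True, passed=True),
        FlowResult("checkout", critical=True, covered=False, passed=False),
        FlowResult("profile", critical=False, covered=True, passed=True),
    ]
    print(readiness_report(flows))
```

In this example every test that exists passes, yet the report is not ready to ship because checkout, a critical flow, has no test at all. A green CI run on its own would hide that.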

What QA Engineers Should Learn in the Vibe Coding Era

The career anxiety in the r/QualityAssurance thread is real, but it is answerable. Here is the honest map of where to focus.

Learn agentic testing tools first. Tools like Autonoma represent the direction the verification layer is heading. Understanding how codebase-connected, AI-driven testing works, what it covers, what its limits are, how to configure it for different risk profiles, is a skill that will be worth more every quarter for the next several years. You do not need to build these tools. You need to know how to use and direct them.

Develop security testing fluency. Vibe-coded code ships with more vulnerabilities by default. QA engineers who can bridge the gap between functional testing and security testing are increasingly rare and increasingly in demand. You do not need to become a penetration tester. You need to understand what AI-generated code tends to get wrong on the security dimension and how to test for it.

Move from test writing to test strategy. The highest-leverage skill shift is from "I write the tests" to "I design the system that ensures quality." This means understanding risk-based testing, coverage modeling, what the right ratio of unit to integration to E2E tests looks like for a given product, and how to make the case for testing investment to stakeholders who did not grow up thinking about it.

Build context on AI-generated code patterns. Understanding how AI coding tools generate code, what patterns they default to, where they tend to introduce bugs, and what edge cases they systematically miss, turns a QA engineer into someone who can catch problems before they reach the testing layer. That is an unusually valuable position.

Your first 30 days. If you want to start the transition now, here is a concrete path. Week one: connect an agentic testing tool like Autonoma to one of your existing projects and see what it catches that your current suite misses. Week two: audit your existing test suite for coverage gaps specific to AI-generated code patterns. Week three: draft a risk-based testing strategy for your most critical user flows and present it to your team lead. Week four: identify one area where your security testing coverage is weakest and start closing the gap. This is not a five-year plan. It is a month of focused effort that repositions how your team thinks about your role.
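The risk-based strategy from week three can start much smaller than it sounds. As a rough sketch, assuming you score each user flow from 1 to 5 on how likely it is to break and how much a failure would cost, a few lines of Python are enough to rank where deep testing should go first. The `Flow` class, the scores, and the `prioritize` function are illustrative assumptions, not a standard framework.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    name: str
    likelihood: int  # 1-5: how likely the flow is to break (e.g. recently AI-generated)
    impact: int      # 1-5: cost to users or revenue if it breaks


def prioritize(flows, budget):
    """Rank flows by likelihood x impact and keep the top `budget` for deep testing."""
    ranked = sorted(flows, key=lambda f: f.likelihood * f.impact, reverse=True)
    return [f.name for f in ranked[:budget]]


if __name__ == "__main__":
    flows = [
        Flow("settings", likelihood=2, impact=1),
        Flow("checkout", likelihood=4, impact=5),
        Flow("signup", likelihood=3, impact=4),
        Flow("search", likelihood=5, impact=2),
    ]
    print(prioritize(flows, budget=3))
```

The arithmetic is trivial on purpose. The value is in the scores, which encode product knowledge that has to come from a person, and in having a ranking you can defend to a team lead.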

The Future of QA: A Career Opportunity Nobody Is Talking About

The QA community is having the wrong conversation. The question is not "will vibe coding replace QA?" The more interesting question is: what happens to software quality when the supply of vibe-coded applications dramatically outstrips the supply of people who know how to verify them?

The answer is exactly what has happened with every previous supply-demand gap in software engineering: rates go up, the discipline gets elevated, and the people who positioned themselves early capture the best opportunities.

There is more code being shipped right now than at any point in the history of software. Most of it is being shipped without adequate testing. The companies doing the shipping are going to learn this lesson either from a QA professional who tells them proactively, or from a production incident that costs them users and revenue.

That dynamic is not a threat to QA. It is a decade of job security for anyone willing to evolve the role.

Vibe coding affects QA engineers by changing what skills are most valuable, not by eliminating the need for QA. The shift is from manual test execution and script writing toward quality strategy, agentic testing orchestration, and production readiness assessment. Because vibe coding ships code faster and with more quality gaps than traditional development, QA expertise is actually in higher demand. The QA engineers most affected are those whose work is primarily manual testing or maintaining automation scripts that AI can now generate automatically. The QA engineers least affected, and most in demand, are those who operate at the strategy and infrastructure level.

Vibe coding is unlikely to replace QA engineers. It is more likely to increase demand for QA expertise. AI-generated code ships 1.7x more major issues than human-written code and 2.74x more XSS vulnerabilities. That creates a larger verification problem, not a smaller one. What changes is the form of QA work: manual test execution and hand-written script maintenance decrease in value, while test strategy, agentic testing configuration, and production readiness assessment increase in value. Tools like Autonoma represent the direction the testing layer is heading, codebase-connected and fully automated, but they require QA professionals to direct, interpret, and act on them.

QA is not dying. It is transforming. The version of QA that is most threatened by vibe coding is reactive, manual, and script-focused: test cases written in spreadsheets, executed by hand, with Selenium scripts that break every time the UI changes. The version of QA that vibe coding needs is strategic, forward-looking, and infrastructure-oriented: someone who can design a quality system for a fast-moving, AI-generated codebase and ensure it stays trustworthy at the speed vibe coding demands. The career opportunity for QA professionals who make this transition is larger than it has been in years.

The most valuable skills for QA engineers in the vibe coding era are: (1) Agentic testing tools, understanding how platforms like Autonoma work, what they cover, and how to direct them. (2) Security testing fluency, because AI-generated code ships with more vulnerabilities by default and requires QA engineers who understand what to test for. (3) Test strategy and coverage design, moving from writing individual tests to designing the system that ensures quality at scale. (4) Production readiness assessment, the ability to evaluate whether a vibe-coded application is genuinely ready to ship. These skills are in short supply and growing in demand.

The future of QA is the AI testing orchestrator role: QA professionals who understand how to configure, direct, and interpret agentic testing systems that connect to codebases, generate tests automatically, execute them against running applications, and self-heal when the code changes. This is not a distant future. It is already emerging. The QA professionals who will thrive are those who move up the stack from test execution to test strategy, develop fluency in AI-generated code patterns and where they fail, and position themselves as the people who can answer the hardest question in a vibe coding team: is this actually safe to ship?

The best testing tools for vibe-coded apps are those that match the zero-expertise, high-velocity model of vibe coding itself. Traditional script-based tools like Playwright and Cypress require manual maintenance that vibe coding teams cannot sustain. The leading option is Autonoma, which connects to your codebase, reads your routes and user flows, generates test cases automatically, and self-heals when your code changes. For teams starting from zero, smoke tests covering critical paths in CI and static analysis for security vulnerabilities are reasonable starting points, but neither gives the full-application coverage that codebase-connected agentic testing provides.
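For teams starting from zero, a smoke test over critical paths can be very small. The following is a minimal sketch using only the Python standard library. The paths in `CRITICAL_PATHS` and the base URL are hypothetical placeholders, and treating any status other than 200 as a failure is a simplifying assumption.

```python
from urllib.error import HTTPError
from urllib.request import urlopen

# Hypothetical critical paths; replace with the routes that matter for your app.
CRITICAL_PATHS = ["/", "/login", "/checkout"]


def http_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status for a GET, or 0 if the request fails outright."""
    try:
        with urlopen(url, timeout=timeout) as response:
            return response.status
    except HTTPError as err:
        return err.code  # 4xx/5xx responses are raised as HTTPError
    except OSError:
        return 0  # connection refused, DNS failure, timeout, etc.


def failed_paths(base_url, paths=CRITICAL_PATHS, status_of=http_status):
    """Return (path, status) pairs for every critical path that did not answer 200."""
    results = [(p, status_of(base_url.rstrip("/") + p)) for p in paths]
    return [(p, s) for p, s in results if s != 200]


if __name__ == "__main__":
    import sys

    # Assumed staging URL for illustration; pass the real one as an argument in CI.
    base = sys.argv[1] if len(sys.argv) > 1 else "http://localhost:8000"
    failures = failed_paths(base)
    for path, status in failures:
        print(f"FAIL {path}: status {status}")
    sys.exit(1 if failures else 0)
```

Run as a CI step, the script exits non-zero when any critical path fails, which is enough to block a deploy. It says nothing about whether the page behind a 200 response actually works, which is the coverage gap described above.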