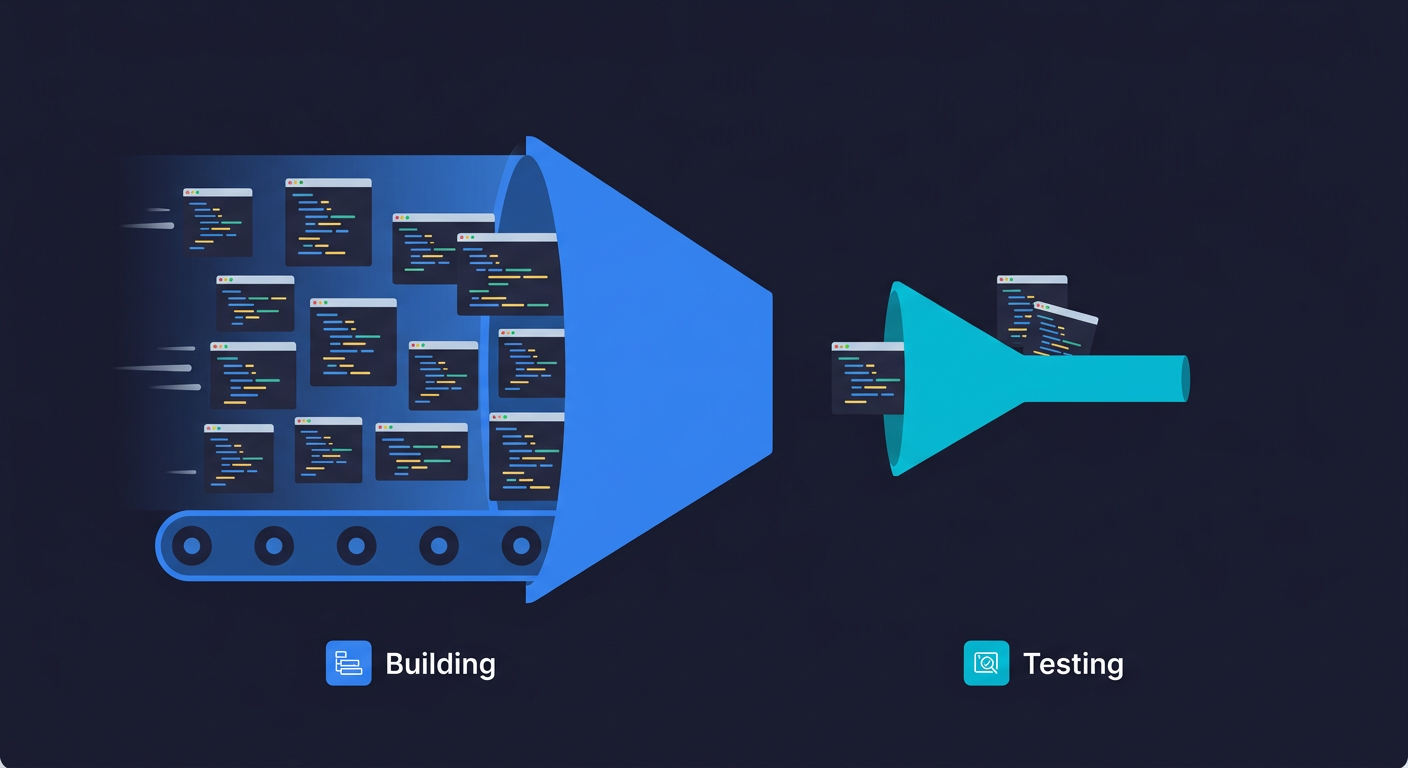

The vibe coding bubble isn't about vibe coding itself. It's about a dangerous asymmetry it created. Vibe coding (AI-assisted development where developers describe intent and let AI write the code) has democratized software building: 81,000 monthly US searches, Collins Dictionary Word of the Year 2025, a $4.7B market growing to $12.3B. But it hasn't democratized testing. AI-generated code ships with 1.7x more major issues and 2.74x more XSS vulnerabilities than human-written code. 63% of vibe coders spend more time debugging than before. Building is easy now. Software quality verification is not.

The numbers look incredible. Then you look at the quality data.

r/vibecoding crossed 89,000 members and is growing at 16% per month. 80% of Fortune 500 companies use active AI agents. The market just grew 162% year over year. Every week, someone ships a new SaaS in a weekend and posts the screenshot. The hype is real, the productivity gains are real, and the enthusiasm is completely understandable.

But here's what those same headlines don't mention: the people shipping those products over a weekend aren't testing them. They can't. Vibe coding lowered the floor for building software. It didn't lower the floor for verifying it. That asymmetry is the actual bubble, and it's creating a vibe coding testing gap that grows wider with every AI-generated commit.

What Vibe Coding Actually Got Right

Before making the case for the testing gap, it's worth being honest about what vibe coding genuinely changed.

The barrier to building software used to be technical. You needed to understand syntax, architecture, data models, and deployment pipelines before you could ship anything. That knowledge took years to acquire. AI coding assistants changed the equation: you can now describe what you want and get working code back in seconds. Non-engineers are building internal tools. Junior developers are shipping at senior-developer speed. Founders are prototyping in hours instead of weeks.

This isn't a fluffy trend. Collins Dictionary naming "vibe coding" its Word of the Year in 2025 marks a genuine inflection point. When a mainstream cultural institution formalizes a technical term, the behavior it describes has escaped the early-adopter bubble and gone broad. Vibe coding is now how a meaningful percentage of new software gets started.

The tools are also getting better at the harder parts. Tasks that used to require significant expertise, such as database schema generation, API integration, and responsive layouts, are increasingly handled by the AI layer. For prototypes, internal tools, and early-stage products, the quality bar that vibe coding reaches is good enough. (For a practical framework on when vibe coding works and when it breaks at startups, see our companion guide.)

The problem isn't that vibe coding produces bad code. The problem is that nobody is checking.

The Testing Void: Where Vibe Coding Goes Wrong

Here's the data that doesn't make it into the launch-tweet thread. A CodeRabbit analysis of AI-generated codebases found 1.7x more major issues in AI co-authored code. Developers stopped reviewing as critically: refactoring-type comments dropped from 25% to under 10% of code reviews as adoption grew. On the security side, AI-generated code introduced 2.74x more XSS vulnerabilities than human-written code.

The perception gap is just as revealing. The METR study found experienced developers using AI tools completed tasks 19% slower than without AI, despite believing they were 20% faster. That 39-percentage-point gap between perception and reality is the vibe coding bubble in miniature. (For the full data picture on what the testing void looks like, including a severity scale for assessing your own risk, see our deep dive.)

The consequences are not hypothetical. A security audit of apps built on Lovable, one of the most popular vibe coding platforms, found that 170 out of 1,645 applications had critical security vulnerabilities: SQL injection, path traversal, privilege escalation. That is roughly a 10% critical failure rate across a platform used primarily by non-engineers building production apps.

Amazon's internal experiment with forced AI coding adoption is another cautionary data point. After accelerating the shift to AI-generated code, incident rates climbed. The code was produced faster. It also broke more. The velocity gain at the creation layer created a software quality deficit at the verification layer that humans couldn't absorb.

The backlash followed the predictable hype cycle. Barclays data shows Lovable's traffic dropped 40% since its June peak despite reaching $100M ARR, Vercel's v0 fell 64%, and Bolt.new declined 27%. The drop happened because enough people had shipped things that broke in front of real users, and the initial excitement curdled into wariness. The rebound that followed happened because vibe coding genuinely is useful.

This is why asking "is vibe coding dead?" misses the point. Vibe coding isn't dead. It's in the awkward phase between hype and maturity, where the creation tools have arrived but the verification tools haven't.

The r/vibecoding threads are full of examples of vibe coding gone wrong: products that broke in production within days of launch, authentication flows that looked correct but weren't, payment integrations that silently failed. This is the shape of the bubble: the creation side has been transformed. The verification side hasn't. Every vibe-coded product shipped today is running without the safety net that traditionally developed software had, not because testing doesn't matter, but because the people doing the building don't have the skills to do the testing.

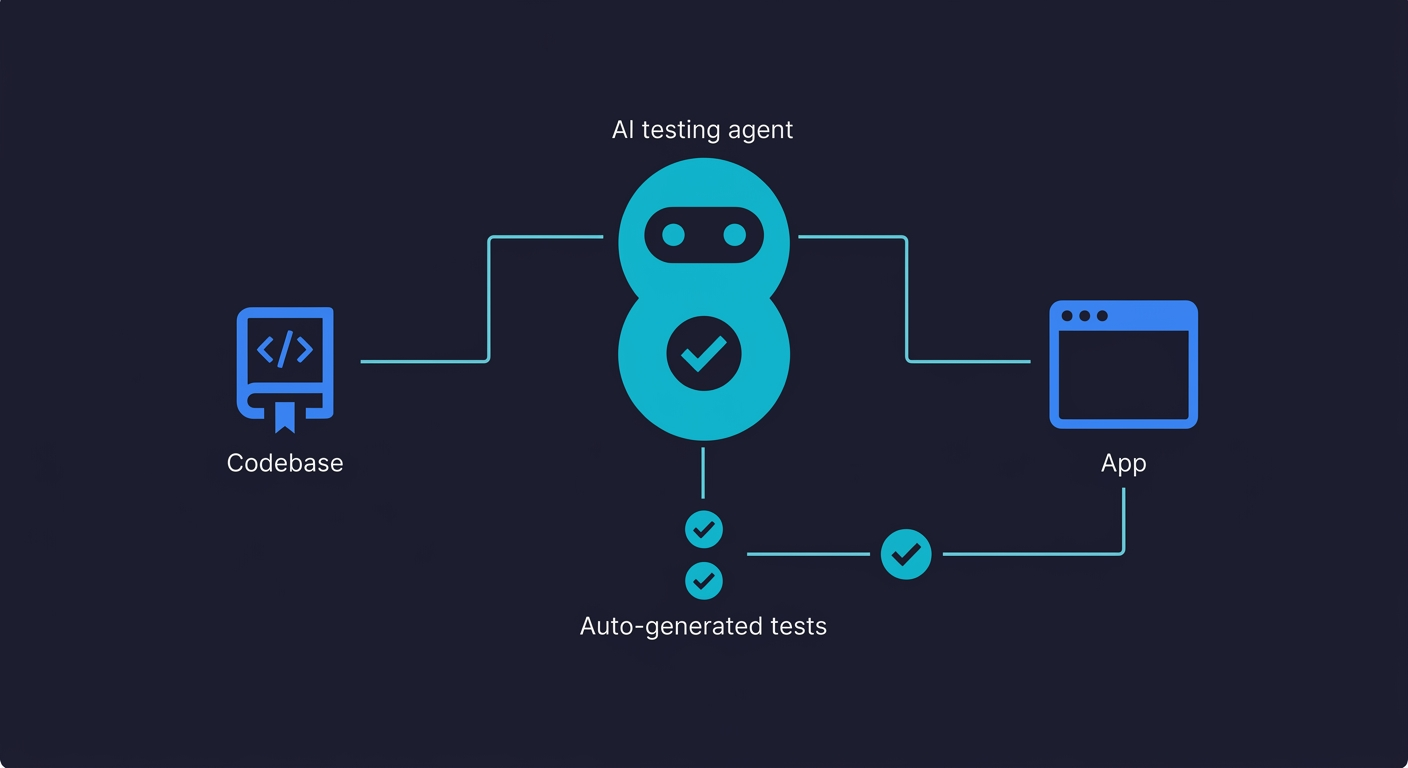

This is exactly the structural gap that Autonoma was built to close: AI agents that read your codebase and run E2E tests against your running application on every deploy, without requiring the testing expertise that most vibe coders don't have.

The Historical Parallel: Is the Vibe Coding Bubble Real?

We've seen this pattern before.

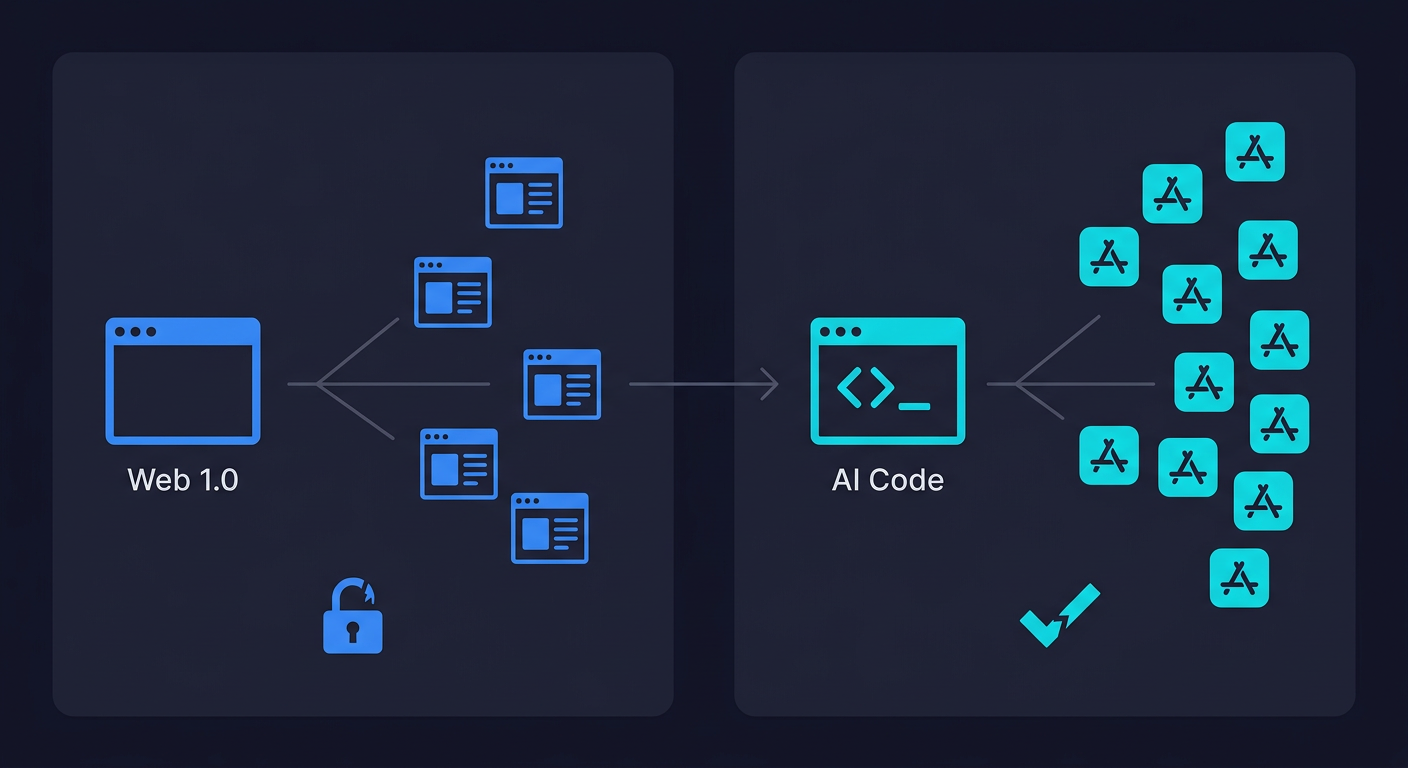

In the late 1990s, the web went from a technical specialty to a mainstream activity over about 18 months. Suddenly anyone could build a website. Dreamweaver and FrontPage democratized the creation layer. Millions of new sites launched. Almost none of them were secured.

The result wasn't that the web collapsed. The result was that an entirely new industry emerged. Cybersecurity as a profession grew from a niche within IT to one of the largest segments of the technology labor market. Tools for automated vulnerability scanning, penetration testing, and security auditing became billion-dollar categories. The asymmetry between "anyone can build" and "almost nobody can secure" didn't kill the web. It created the market for the security layer.

The same dynamic is playing out now, one layer up. Vibe coding is the Dreamweaver moment for application development. The demand asymmetry between creation and verification isn't a sign that vibe coding will fail. It's a sign that the testing layer is about to have its moment.

Why "Just Add Tests" Doesn't Work

The obvious response is: "Tell vibe coders to write tests." It doesn't work, for a structural reason.

Vibe coding is popular precisely because the people doing it couldn't, or didn't want to, write code manually. Writing tests is harder than writing application code. It requires understanding testing architecture, selector stability, async handling, and CI pipeline configuration. More pointedly: the people who vibe-code an MVP on a weekend don't know what tests would even be meaningful for their specific application. Writing a test requires knowing what the correct behavior should be across a range of conditions, not just the happy path.

AI coding tools haven't closed that gap. They've widened it, because they've let people build far ahead of what their verification skills can cover. And when the same AI writes both code and tests, you get the circularity problem: both outputs share the same blind spots, and the tests confirm wrong behavior rather than catching it.
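
To make the circularity problem concrete, here is a minimal sketch. Everything in it is hypothetical and invented for illustration: `apply_discount` stands in for a generated function with a blind spot, one check is derived from the code itself, and the other is derived from a plain-language requirement ("a price can never drop below zero").

```python
def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical generated function: apply a percentage discount.

    Blind spot: nothing stops percent > 100, so the result can go negative.
    """
    return price_cents - (price_cents * percent) // 100


def check_mirrors_implementation() -> bool:
    """A check derived from the code: it recomputes the same formula."""
    price, percent = 1000, 150
    expected = price - (price * percent) // 100  # same blind spot as the code
    return apply_discount(price, percent) == expected


def check_from_requirement() -> bool:
    """A check derived from the requirement: a price is never negative."""
    return apply_discount(1000, 150) >= 0


if __name__ == "__main__":
    print("mirrors implementation:", check_mirrors_implementation())
    print("from requirement:", check_from_requirement())
```

The first check passes because it restates the bug; only the second one, which encodes what the behavior should be, fails and surfaces the problem.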

What Vibe Coders Should Do Right Now

None of this means you should stop shipping. It means you should ship with a floor.

Authentication and payment logic deserve a human read before anything goes live. AI-generated auth code in particular has well-documented patterns of looking correct while silently failing on edge cases. If you don't understand what the code is doing, that's the section to slow down on.

Run a static analysis tool before deploying. Tools like Semgrep or ESLint take minutes to set up and will catch the most common classes of vulnerability that AI code introduces, including the XSS patterns and missing input validation that show up repeatedly in vibe-coded codebases.
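
For readers who haven't seen an XSS pattern up close, the sketch below shows the general shape of the problem and one common fix. The function names are hypothetical, and this is only an illustration of the vulnerability class, not a claim about what any specific tool or rule set reports.

```python
import html


def render_comment_unsafe(comment: str) -> str:
    # User input is interpolated straight into markup, so any tags it
    # contains are sent to the browser as real HTML.
    return f"<p>{comment}</p>"


def render_comment_safe(comment: str) -> str:
    # html.escape turns <, >, & and quotes into entities, so the browser
    # displays the input as text instead of executing it.
    return f"<p>{html.escape(comment)}</p>"


if __name__ == "__main__":
    payload = "<script>alert('xss')</script>"
    print(render_comment_unsafe(payload))
    print(render_comment_safe(payload))
```

In a real application the escaping is usually handled by the template engine or framework; the point of the sketch is only that the unsafe version looks perfectly reasonable at a glance.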

Pick your single most critical user flow and add one E2E smoke test for it. It doesn't need to cover everything. It needs to tell you when the thing that matters most breaks. One real test beats a hundred assumptions.
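
A smoke test can be very small. The sketch below uses only the Python standard library and checks that a page loads and contains some expected text; the function name `smoke_check` and its parameters are assumptions made for this example. A full E2E test would drive a real browser, which this deliberately does not attempt.

```python
import urllib.request


def smoke_check(url: str, must_contain: str, timeout: float = 5.0) -> bool:
    """Return True if the page loads with HTTP 200 and contains the expected text."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            body = response.read().decode("utf-8", errors="replace")
            return response.status == 200 and must_contain in body
    except OSError:
        # URLError, HTTPError, and socket timeouts all subclass OSError:
        # any of them counts as a failed check.
        return False
```

Assuming a hypothetical staging URL, a call such as `smoke_check("https://staging.example.com/login", "Sign in")` could run in CI after each deploy and fail the build when it returns `False`.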

Use a staging environment, even a simple one. Shipping AI-generated changes straight to production removes the last checkpoint between your code and your users. A staging URL costs almost nothing and catches the failures you didn't anticipate.

Finally: if you can't explain what a function does in plain language, don't ship it. This isn't about writing tests. It's about maintaining the understanding that lets you debug when something goes wrong in production at 2am.

The Future of QA Testing AI: The Demand Thesis

Here's the counterintuitive read: vibe coding is going to increase demand for testing, not decrease it.

Every piece of software that gets shipped needs testing. The faster software gets built, the more testing needs to happen. Vibe coding is accelerating the rate of software creation across the entire market, from hobbyists to enterprise developers. Every one of those projects has the same requirement: at some point, someone has to verify it works and keeps working as it changes.

The supply of people who can write and maintain meaningful test suites hasn't scaled with the supply of code. Gartner predicts 40% of AI-augmented coding projects will be cancelled by 2027 due to escalating quality and maintenance costs. That gap is the opportunity.

The early web parallel plays out here too. The explosion of websites didn't reduce demand for security expertise. It amplified it, because there were suddenly millions of new attack surfaces being created by people who didn't know what they were doing. The explosion of vibe-coded applications won't reduce demand for testing expertise. It will amplify it for exactly the same reason.

What will change is the form of that demand. The testing layer that emerges from the vibe coding era won't look like a QA engineer manually writing Playwright scripts. It will look like an automated system that reads your codebase, derives what should be tested, and runs those tests continuously, without requiring the developer to have testing expertise. The expertise gets embedded in the tool rather than the human.
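
As a toy illustration of "reads your codebase, derives what should be tested," the sketch below parses source code and lists the routes it declares. It is not how Autonoma or any other product works. The Flask-style `@app.route(...)` decorators, the sample `SOURCE`, and both function names are assumptions chosen to keep the example short.

```python
import ast

# Hypothetical application source; a real tool would read files from a repo.
SOURCE = '''
@app.route("/")
def home(): ...

@app.route("/login")
def login(): ...

@app.route("/checkout")
def checkout(): ...

def helper(): ...
'''


def derive_routes(source: str) -> list[str]:
    """Collect the string argument of every @<name>.route("...") decorator."""
    routes = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.FunctionDef):
            continue
        for decorator in node.decorator_list:
            if (
                isinstance(decorator, ast.Call)
                and isinstance(decorator.func, ast.Attribute)
                and decorator.func.attr == "route"
                and decorator.args
                and isinstance(decorator.args[0], ast.Constant)
                and isinstance(decorator.args[0].value, str)
            ):
                routes.append(decorator.args[0].value)
    return routes


def plan_smoke_checks(base_url: str, routes: list[str]) -> list[str]:
    """Turn each derived route into a URL that a smoke check could visit."""
    return [base_url.rstrip("/") + route for route in routes]


if __name__ == "__main__":
    for url in plan_smoke_checks("https://staging.example.com", derive_routes(SOURCE)):
        print(url)
```

Listing routes is the easy part. Deciding what correct behavior looks like on each route is where the real expertise has to be embedded, which is why this is a sketch of the idea rather than of a working testing layer.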

That's the continuous testing model, and it's where the market is heading.

What the AI Testing Layer Looks Like

The vibe coder who shipped a product over a weekend doesn't want to hire a QA engineer. They can't afford to. And they don't have time to learn Playwright. What they need is a system that can look at their codebase and tell them whether the critical user flows still work after their latest push.

That system has to be as automated as the coding layer. It has to require zero testing expertise to operate. And it has to keep up with codebases that change as fast as vibe-coded products do, where a single session might restructure half the application.

Agentic testing is the answer to that requirement. Tools like Autonoma connect to your codebase, read your code to understand what should be tested, and run tests against your running application, without anyone writing scripts. When the application changes, the tests adapt. The testing layer matches the velocity of the creation layer.

The industry is already naming this shift. On the creation side, "agentic engineering" (a term popularized by Addy Osmani) describes the maturation from unstructured vibe coding to AI-assisted development with specs, review loops, and guardrails. On the verification side, "vibe testing" describes the equivalent: automated testing that requires zero manual scripting, derived directly from the codebase. The creation layer matures through agentic engineering. The verification layer matures through agentic testing. Both are responses to the same asymmetry.

After the Vibe Coding Bubble: Software Quality Catches Up

Every technology bubble follows the same arc. The hype outruns the infrastructure. The infrastructure catches up. The technology matures into something durable and broadly useful.

Vibe coding is somewhere in the middle of that arc right now. The hangover is real: the incidents, the debugging time, the security vulnerabilities, the r/vibecoding threads about projects that broke in production. The rebound after the initial hype drop suggests the underlying value proposition survives the correction.

And for anyone asking whether vibe coding is overrated: the productivity gains are not overrated. The assumption that fast code equals safe code is what's overrated.

What emerges on the other side of the correction won't be a world where vibe coding disappears. It'll be a world where vibe coding has a testing layer built around it, the same way the post-Dreamweaver web eventually got security infrastructure built around it. The tools, the practices, and the expectations will shift to account for the verification gap.

The bubble, in other words, isn't in vibe coding. It's in the assumption that building something means software quality comes for free. That assumption is already popping. The question is what comes next.

The testing layer is what comes next.

Key Takeaways

- The vibe coding bubble is a testing gap, not a technology failure

- AI-generated code ships 1.7x more major issues than human-written code

- 63% of vibe coders spend more time debugging than before AI tools

- Testing demand will increase, not decrease, as vibe coding scales

- Agentic testing that requires zero expertise is the scalable answer

- The historical parallel to web security suggests a new industry is forming

The vibe coding bubble refers to the asymmetry between how radically AI tools have democratized software creation and how little they've done for software verification. Vibe coding (using AI assistants to generate code from plain-language prompts) has made building applications dramatically easier. But testing that code, verifying it works correctly, securely, and reliably across real user conditions, still requires expertise most vibe coders don't have. The 'bubble' is the growing gap between code being shipped and code being tested, which is showing up in higher incident rates, more security vulnerabilities, and developers spending more time debugging than before.

No, vibe coding isn't dead; it's maturing. Platform traffic dropped significantly (Lovable down 40%, v0 down 64%) before stabilizing, which is typical of a technology adoption curve. What's dying is the assumption that AI-generated code doesn't need testing. The tools that survive will be those paired with automated verification layers. Vibe coding as a practice will become more durable as the testing infrastructure catches up.

No, vibe coding itself isn't overrated, but the assumption that vibe coding = shipping is. The productivity gains from AI coding assistants are real and well-documented. Developers genuinely produce code faster, prototypes get built in hours instead of days, and non-engineers can create functional internal tools they couldn't before. What's overrated is the idea that producing the code is the hard part. It wasn't even before vibe coding. Testing, debugging, security, and reliability are the hard parts, and vibe coding hasn't touched any of them.

The data says vibe-coded software is less secure than traditionally reviewed code. Research into AI-assisted development has found AI-generated codebases contain 2.74x more XSS (cross-site scripting) vulnerabilities and 1.7x more major issues overall. The likely cause is reduced code review discipline: CodeRabbit data shows that as vibe coding adoption grew, refactoring-type review comments dropped from 25% to under 10% of total review activity, suggesting developers are scrutinizing AI-generated code less carefully than code they wrote themselves.

Vibe coding is more likely to increase demand for QA and testing expertise than replace it. The creation layer has been accelerated dramatically, which means there is more code being shipped, more applications hitting production, and more test coverage needed, all without a proportional increase in developers who can write meaningful tests. The form of QA work will change: manual test script writing becomes less valuable, while expertise in automated testing infrastructure, agentic testing platforms, and test strategy becomes more valuable. Tools like Autonoma represent the direction the testing layer is heading: codebase-connected, fully automated, zero maintenance.

The best testing tools for vibe-coded projects are those that don't require testing expertise to operate. Since vibe coders typically lack the background to write Playwright or Cypress scripts, they need automated solutions that derive test cases from the codebase itself. Agentic testing platforms, led by Autonoma, connect to your codebase, read your routes and user flows, generate test cases automatically, and self-heal when your code changes. This matches the zero-expertise model of vibe coding itself. Other reasonable starting points include smoke tests in CI for critical paths and static analysis tools for security vulnerabilities, but neither gives the full-application coverage that agentic E2E testing provides.

What comes after the vibe coding bubble is a more mature ecosystem where the testing layer has caught up to the creation layer. The historical parallel is the early web: Dreamweaver and FrontPage democratized website creation in the late 1990s, nobody was securing those sites, and the result was the rise of the cybersecurity industry. Vibe coding is doing the same thing one layer up, democratizing application creation without democratizing verification. What follows is the same: a dedicated infrastructure layer for testing AI-generated code, built around agentic tools that can operate without human testing expertise. See the Autonoma blog on agentic testing to see what that infrastructure looks like today.