What is quality engineering? Quality engineering means designing your development systems so that testing, observability, and reliability are built into the workflow rather than bolted on afterward. For modern engineering teams, this means CI pipelines that catch regressions before merge, automated test generation that removes the friction of writing E2E tests, and feedback loops tight enough that engineers see the impact of their code within minutes, not days. The goal is high-velocity shipping with high reliability, and the teams that achieve both are the ones that treat quality as a systems problem, not a people problem.

The teams that ship fast and ship reliably aren't the ones with the biggest QA departments. They're the ones with the tightest feedback loops. If your current quality process is "someone clicks through the app before a deploy," this guide is for you.

The "Move Fast and Break Things" Trap

Every startup starts the same way. You ship fast. You test manually. Someone clicks through the happy path before a deploy. It works because your product is small, your team is small, and everyone has the full application model in their heads.

Then it stops working. Not all at once. Slowly. A checkout bug makes it to production because the person who tested the deploy didn't know the payment flow changed. An onboarding regression sits unnoticed for a week because nobody checked the signup page after the last refactor. Your Slack channel starts filling with customer-reported bugs that should have been caught before merge.

The instinct at this point is to add process. Hire a QA person. Add a manual testing phase before each release. Create a sign-off checklist. This is the wrong instinct. You just introduced a bottleneck into the only thing that made you competitive: speed.

The real answer is not more people checking things. It is better systems that catch things automatically. The data supports this: over 80% of organizations report financial impacts from software defects exceeding $500,000 annually, and nearly two-thirds deploy code without fully testing it. The problem is not that teams do not care about quality. The problem is that the systems for catching defects are either too slow, too manual, or too disconnected from the developer workflow.

Quality Engineering vs. QA: The Distinction That Matters

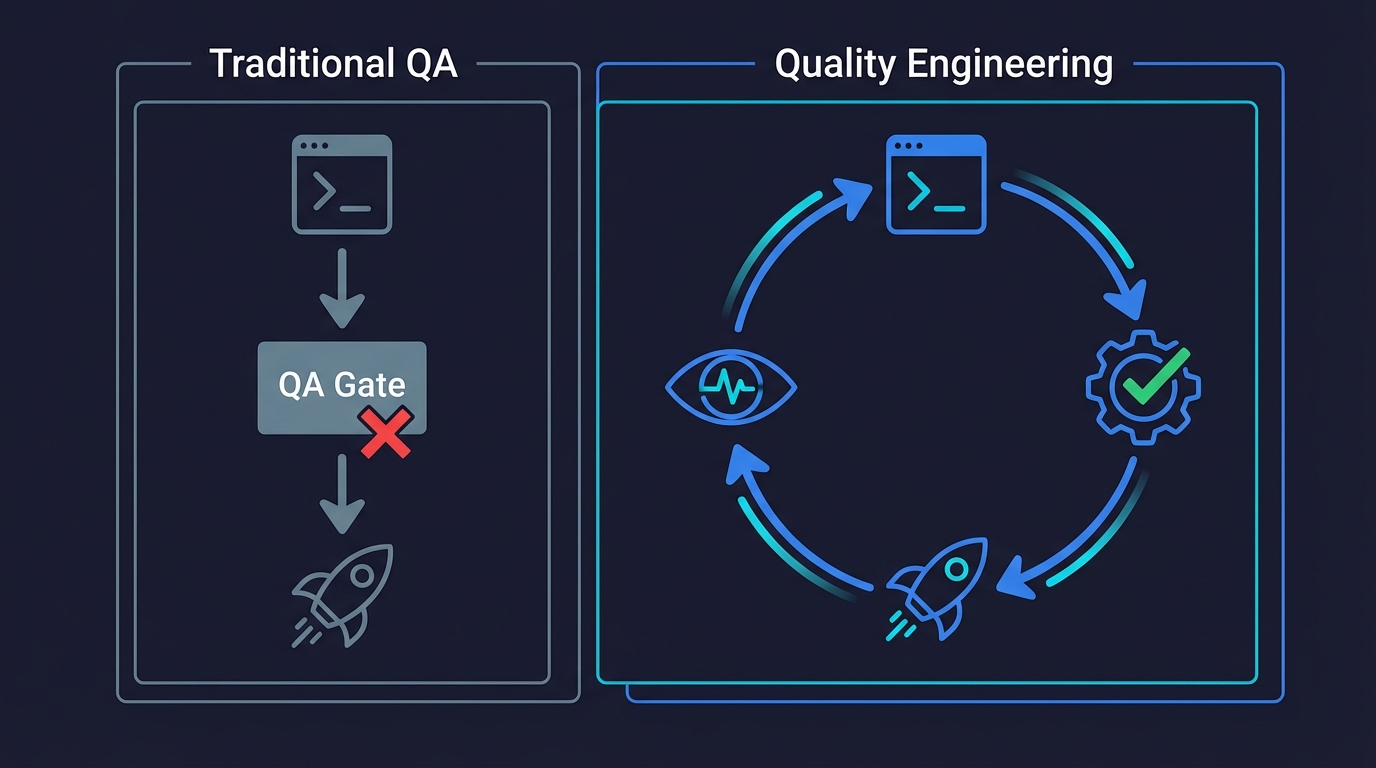

Traditional QA is reactive. A feature gets built, handed off to QA, and tested; bugs are filed, and fixes go back to the developer. The feedback loop is long. The QA team becomes a bottleneck. Developers learn to treat testing as someone else's job. When deadlines tighten, QA gets squeezed first.

Quality engineering inverts the model. Instead of testing after development, you build the testing infrastructure into the development process itself. The engineer who writes the feature is the same person whose CI pipeline catches the regression. There is no handoff. There is no separate phase. Testing is not a gate at the end; it is a continuous signal throughout.

This distinction matters because it changes incentives. When QA is a separate function, developers optimize for "getting through QA" rather than "shipping correct code." When testing infrastructure is part of the developer's own workflow, the incentive is aligned: the fastest way to ship is to ship correctly the first time.

The practical difference looks like this:

| Traditional QA | Quality Engineering |
|---|---|
| Separate team tests after development | Testing is embedded in the development workflow |
| Bugs found days or weeks after code was written | Regressions caught in CI before merge |
| QA becomes a bottleneck before releases | Releases are continuous, testing is automatic |
| Developers treat testing as someone else's job | Developers own the quality of their own code |
| Manual test scripts and checklists | Automated pipelines and generated tests |
| Quality scales by hiring more testers | Quality scales by improving systems |

The companies that get quality engineering right do not have larger QA teams. They have better systems. And the core of those systems is removing the friction that stops engineers from testing their own work.

The Three Systems That Define Quality Engineering

Quality engineering is not one thing. It is three interlocking systems that, together, create the conditions for reliable, high-velocity shipping.

System 1: Fast, Trustworthy CI

Your CI pipeline is your first line of defense. If it is slow, flaky, or ignored, nothing else matters. Engineers will route around a CI pipeline they don't trust.

A quality-engineered CI pipeline has three properties. First, it is fast. If your pipeline takes more than ten minutes to give feedback on a pull request, developers will context-switch away and lose the mental model of what they just wrote. The feedback loop is broken. Aim for five minutes or less from push to green or red.

Second, it is trustworthy. A flaky test suite is worse than no test suite. If engineers learn to re-run CI and hope for green, you have trained your team to ignore your quality signal. Every flaky test must be quarantined or fixed immediately. There is no "we'll get to it." Our guide to reducing test flakiness covers the tactical patterns for eliminating intermittent failures.

Third, it is blocking. CI results that are advisory (you can merge even when red) are not a quality system. They are a suggestion. A quality-engineered pipeline blocks merge on failure and the team treats that as non-negotiable.
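One way to make the "trustworthy" property concrete is to flag any test that both passed and failed on the same commit, since the code did not change between those runs. The sketch below is a minimal illustration in Python, not a description of any particular CI product: the `CiTestRun` record and the `find_flaky_tests` helper are hypothetical names, and it assumes you can export per-test results with a commit SHA from your CI provider.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CiTestRun:
    """One execution of one test in CI (hypothetical record shape)."""
    test_name: str
    commit_sha: str
    passed: bool


def find_flaky_tests(runs: list[CiTestRun]) -> set[str]:
    """Return tests that both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for run in runs:
        outcomes[(run.test_name, run.commit_sha)].add(run.passed)
    # Two distinct outcomes for identical code means the test is nondeterministic.
    return {test for (test, _sha), seen in outcomes.items() if len(seen) == 2}


runs = [
    CiTestRun("test_checkout", "abc123", False),
    CiTestRun("test_checkout", "abc123", True),  # same commit, different result
    CiTestRun("test_signup", "abc123", True),
    CiTestRun("test_login", "abc123", False),    # fails every time: a real failure
    CiTestRun("test_login", "abc123", False),
]
flaky = find_flaky_tests(runs)
print(sorted(flaky))  # ['test_checkout']
```

A test that fails consistently is deliberately left out of the result: that is a real signal and should keep blocking the merge. Only the tests returned here are candidates for quarantine.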

System 2: Automated Test Coverage That Doesn't Depend on Developer Willpower

Here is the uncomfortable truth about test coverage: developers know they should write tests. They do not want to write E2E tests. The feedback is too slow, the maintenance is too high, and the payoff feels too distant when there is a feature to ship.

Quality engineering acknowledges this reality and designs around it. Instead of relying on discipline to produce test coverage, you create systems that generate coverage with minimal developer effort.

This is where the tooling landscape has shifted dramatically. Choosing between Playwright and Cypress is a common starting point, but the framework decision is secondary to the maintenance question. Traditional test automation requires a developer to write a Playwright or Cypress script for each flow, maintain selectors when the UI changes, manage test data, and debug failures. That is a significant time investment that competes directly with feature work. For a small team without dedicated QA headcount, it is often a losing competition.

Agentic testing changes the equation. Instead of asking developers to write test scripts, an agent reads your codebase, understands your user flows from the code itself, and generates tests autonomously. When the UI changes, the agent adapts. No selectors to maintain, no scripts to update, no fixtures to keep current.

At Autonoma, this is exactly what we built. Our agents connect to your codebase, read your routes and components, and autonomously develop the E2E tests that cover your critical flows. The developer's job is to build the feature. The agent's job is to make sure it keeps working. This is the systems-level answer to "how do we get test coverage without slowing down developers." You remove the friction instead of demanding more effort.

System 3: Observability and Fast Feedback Loops

Testing catches bugs before they ship. Observability catches the ones that slip through. A complete quality engineering system includes both.

Observability in this context means structured logging, error tracking (Sentry, Datadog, or equivalent), and alerting that reaches the engineer who wrote the code, not a generic ops channel. When a production error occurs, the person who can fix it fastest should know about it within minutes.

The feedback loop matters as much as the tools. If a production bug takes three days to reach the developer who introduced it, they have already moved on to a different feature. The context is gone. The fix takes longer. Quality engineering compresses this loop: from customer report to developer awareness in minutes, not days.

Pair this with shift-left testing and you get a system where most bugs are caught before merge (by CI and automated tests) and the few that escape are caught quickly in production (by observability and alerting). The window for a bug to cause damage shrinks from days to minutes.

How to Transition: A Practical Roadmap

If you are an engineering leader reading this because your team is at the breaking point between "move fast" and "things are breaking," here is the concrete sequence that works. This is not theory. It is the pattern we see repeatedly at the startups we work with at Autonoma.

Phase 1: Stop the Bleeding (Week 1-2)

Start with your CI pipeline. If it is slow, speed it up. If it is flaky, quarantine the flaky tests. If it is not blocking merges, make it blocking. This is your foundation. Nothing else works if CI is broken.

Audit your current test coverage. You do not need a coverage report. You need to answer one question: if your checkout flow broke right now, would CI catch it before merge? If the answer is no, that is the first gap to close.
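That audit question can be written down as a small check. The following is a rough sketch under stated assumptions: the flow names and the mapping from flows to merge-blocking tests are made up for illustration, and `uncovered_flows` is a hypothetical helper rather than part of any tool.

```python
def uncovered_flows(
    critical_flows: list[str],
    blocking_tests: dict[str, list[str]],
) -> list[str]:
    """Return critical flows that have no test in the merge-blocking CI suite."""
    return [flow for flow in critical_flows if not blocking_tests.get(flow)]


# Hypothetical inventory: which blocking tests exercise which flow.
critical_flows = ["checkout", "signup", "login"]
blocking_tests = {
    "login": ["test_login_with_password"],
    "signup": [],  # listed, but nothing actually covers it
}

gaps = uncovered_flows(critical_flows, blocking_tests)
print(gaps)  # ['checkout', 'signup']
```

Any flow in the output would break silently today, so those are the first gaps to close.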

Phase 2: Remove the Friction (Week 3-4)

This is the phase that separates quality engineering from "we should write more tests." Instead of asking your team to write more tests, give them tools that generate tests automatically.

Connect an agentic testing tool to your codebase. At Autonoma, this means pointing our agent at your repository. The Planner agent reads your routes, components, and user flows, then generates a test plan. The Executor agent runs the tests. When your code changes, the tests adapt. Your developers do not write or maintain test scripts. They review the test plan to confirm it covers the right flows, and the agent handles execution and maintenance.

This is the key insight of modern quality engineering: the bottleneck is not developer skill or motivation. It is the friction of test creation and maintenance. Remove the friction and coverage follows naturally.

Phase 3: Close the Loop (Week 5-8)

Add observability to your production environment. Set up error tracking that routes alerts to the engineer who last touched the relevant code. Create a response protocol: production errors that affect users get a same-day investigation.
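As an illustration of routing by ownership, the sketch below walks a stack trace from the innermost frame outward and picks the last author of the first application file it finds. Everything here is an assumption for the example: the `Frame` shape, the file paths, the email addresses, and the `pick_alert_recipient` helper are hypothetical. The author map could be built from something like `git log -1 --format=%ae -- <path>`, but that step is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    """One stack frame from an error report (hypothetical shape)."""
    file_path: str
    in_app: bool  # False for third-party or framework code


def pick_alert_recipient(
    frames: list[Frame],
    last_author_by_file: dict[str, str],
    fallback: str,
) -> str:
    """Pick who to alert; frames are ordered innermost (raise site) first."""
    for frame in frames:
        if frame.in_app and frame.file_path in last_author_by_file:
            return last_author_by_file[frame.file_path]
    # No application frame with a known author: fall back to a shared rotation.
    return fallback


frames = [
    Frame("vendor/payments_sdk/client.py", in_app=False),
    Frame("app/payments/charge.py", in_app=True),
    Frame("app/routes/checkout.py", in_app=True),
]
last_author_by_file = {
    "app/payments/charge.py": "dana@example.com",
    "app/routes/checkout.py": "lee@example.com",
}

recipient = pick_alert_recipient(frames, last_author_by_file, "oncall@example.com")
print(recipient)  # dana@example.com
```

Skipping third-party frames matters: the innermost frame is often library code, and nobody on your team is its last author.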

Connect your test coverage to your deployment pipeline. Tests run on every PR. Deployment is blocked on test passage. Production errors trigger new regression tests (either manually or through your agentic testing tool). This creates a self-reinforcing loop: every bug that makes it to production results in a test that prevents it from recurring.
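The gating rule in that loop is simple enough to sketch. This is a minimal illustration, assuming test results arrive as a name-to-pass/fail mapping and that quarantined flaky tests are tracked in a separate set; `deployment_allowed` is a hypothetical helper, and refusing to deploy when no blocking tests ran is a design choice of this sketch, not a rule from any specific platform.

```python
def deployment_allowed(results: dict[str, bool], quarantined: set[str]) -> bool:
    """Allow a deploy only if every non-quarantined test ran and passed."""
    blocking = {name: ok for name, ok in results.items() if name not in quarantined}
    # An empty blocking set means there is no quality signal, so do not deploy.
    return bool(blocking) and all(blocking.values())


results = {
    "test_checkout": True,
    "test_signup": True,
    "test_search_suggestions": False,  # known flaky, quarantined below
}

print(deployment_allowed(results, quarantined={"test_search_suggestions"}))  # True
print(deployment_allowed(results, quarantined=set()))                        # False
```

Each production bug then adds one more entry to `results` in the form of a new regression test, which is what makes the loop self-reinforcing.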

Phase 4: Sustain and Scale (Ongoing)

Quality engineering is not a project with an end date. It is a set of systems that require maintenance and iteration. Review your pipeline speed monthly. Audit flaky tests weekly. Check that your test coverage still maps to your most critical flows as the product evolves.

The teams that do this well do not talk about quality as a value or a priority. They talk about it as infrastructure. The CI pipeline is infrastructure. The test suite is infrastructure. Observability is infrastructure. You maintain infrastructure because it is the foundation everything else runs on.

This mindset also challenges the conventional testing pyramid. When agentic tools make E2E tests cheap to create and maintain, the traditional argument for "lots of unit tests, few E2E tests" weakens. Quality engineering is about deploying the right tests at the right layer, not following a rigid hierarchy.

Common Quality Engineering Challenges

Every team that transitions to quality engineering hits the same friction points. Knowing them in advance makes the difference between a successful transition and one that stalls.

"We don't have time to set up testing infrastructure." This is the most common objection and the most self-defeating. The time you spend firefighting production bugs is the time you would have saved with testing infrastructure. The question is not whether you can afford to invest in quality engineering. It is whether you can afford not to. Start small: a blocking CI pipeline and five E2E tests on your critical flows is enough to change the trajectory.

"Our codebase is too messy for automated testing." A messy codebase is a reason to invest in quality engineering, not a reason to avoid it. Automated tests on your critical flows give you the safety net to refactor with confidence. Without that safety net, the codebase stays messy because nobody is willing to touch it.

"Developers push back on owning testing." This is almost always a tooling problem, not a culture problem. When writing a test takes 30 minutes of selector work and fixture setup, developers will resist. When an AI agent generates the tests autonomously, there is nothing to push back on. The friction was the problem, not the developers.

"We tried test automation before and it didn't stick." Most failed attempts fail because the maintenance cost exceeded the perceived benefit. Scripted test suites that break on every UI change train your team to view testing as a liability. Agentic testing breaks this cycle because maintenance is handled by the agent, not by developers.

What Quality Engineering Is Not

Quality engineering is not a speech about craftsmanship. It is not a "quality week" initiative. It is not a poster on the wall. If your quality strategy requires engineers to be more careful or take more pride in their work, it is not a strategy. It is a hope.

Quality engineering is also not a QA team by another name. If you renamed your QA department to "Quality Engineering" without changing the systems, you changed a label, not a practice. The test for whether you have a quality engineering culture is simple: if you removed every QA-titled person from your org, would your release quality change? If the answer is yes, your testing depends on people, not systems. That is the opposite of quality engineering.

And quality engineering is not perfection. It is not zero bugs. It is a system that catches the important bugs fast and limits the blast radius of the ones that slip through. Trying to catch every bug before release is the same mistake as having no tests: both are ways to guarantee you ship slowly.

The Role of AI and Agentic Testing in Modern Quality Engineering

The quality engineering principles above are not new. Engineering leaders have talked about CI pipelines, automated testing, observability, and feedback loops for a decade. What the SEI at Carnegie Mellon identifies as "engineering-centric" quality techniques have been well understood in theory for years. What changed is that the hardest part (generating and maintaining comprehensive test coverage) used to require significant ongoing human effort. That is no longer true.

Generative AI in software testing and AI-powered QA tools have reached the point where AI agents can read a codebase, understand user flows, and generate meaningful E2E test coverage autonomously. This is not the record-and-replay approach of older tools. Modern agentic testing tools like Autonoma access your source code directly. They understand your routes, your components, your data models. They generate tests from that understanding, and they maintain those tests as your code evolves.

This matters for quality engineering because it solves the adoption problem. The reason most teams do not have good test coverage is not that they do not value testing. It is that the cost of creating and maintaining test coverage has historically been too high relative to the benefit, especially for small teams without dedicated QA. Agentic testing collapses that cost. The agent does the tedious work. Developers focus on building features. Quality coverage grows as a side effect of shipping, not as a competing priority.

For engineering leaders, this means the quality engineering roadmap described above is more achievable than it has ever been. The "remove the friction" phase that used to require months of investment in test infrastructure can now be accomplished in days by connecting an agentic testing tool to your codebase.

Measuring Quality Engineering: Metrics That Actually Matter

If you cannot measure your quality engineering systems, you cannot improve them. But most teams measure the wrong things. Test coverage percentage is the most common and least useful metric. A team with 80% line coverage and no E2E tests on their checkout flow has a meaningless number and a real risk.

The metrics that actually tell you whether your quality engineering systems are working:

Time to detect. When a regression is introduced, how long until your systems catch it? In a well-engineered pipeline, the answer is minutes (caught in CI). If the answer is days (caught in production by a customer), your detection system has gaps.

Time to feedback. When CI fails, how long until the developer sees the failure and understands what broke? This measures the tightness of your feedback loop. If CI takes 20 minutes and the error message is unclear, the feedback loop is broken even though the detection system works.

Escaped defect rate. How many bugs reach production per release? Track this over time. A quality engineering system should produce a declining trend. If it is flat or increasing despite adding tests, your tests are covering the wrong things.

Mean time to recovery (MTTR). When a bug reaches production, how long until it is fixed? This measures your observability and response systems. Quality engineering reduces MTTR by ensuring the right person is alerted fast and has the context to fix quickly. MTTR is one of the four DORA metrics that high-performing engineering teams track. According to the DORA State of DevOps research, elite-performing teams recover from incidents in less than one hour, while low performers take between one week and one month.

CI trust score. What percentage of CI failures are real bugs vs. flaky tests? If more than 5% of your CI failures are flaky, your team is learning to distrust the pipeline. Track and fix this aggressively.
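Three of these metrics reduce to short arithmetic once the raw counts and timestamps are collected. The sketch below is one possible way to compute them, assuming incidents are recorded with detection and resolution times; the `Incident` record, the function names, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass(frozen=True)
class Incident:
    """A production bug with detection and resolution times (hypothetical shape)."""
    detected_at: datetime
    resolved_at: datetime


def mean_time_to_recovery(incidents: list[Incident]) -> timedelta:
    """Average time from detection to fix; raises StatisticsError if empty."""
    seconds = mean((i.resolved_at - i.detected_at).total_seconds() for i in incidents)
    return timedelta(seconds=seconds)


def escaped_defect_rate(production_bugs: int, releases: int) -> float:
    """Bugs that reached production, per release."""
    return production_bugs / releases


def ci_trust_score(real_failures: int, flaky_failures: int) -> float:
    """Fraction of CI failures that were real bugs rather than flaky tests."""
    total = real_failures + flaky_failures
    return 1.0 if total == 0 else real_failures / total


start = datetime(2024, 1, 1, 9, 0)
incidents = [
    Incident(start, start + timedelta(minutes=30)),
    Incident(start, start + timedelta(minutes=90)),
]

print(mean_time_to_recovery(incidents))  # 1:00:00
print(escaped_defect_rate(6, 4))         # 1.5
print(ci_trust_score(47, 3))             # 0.94
```

In the sample numbers, 3 of 50 failures are flaky, which is 6% and therefore above the 5% threshold described above. Tracking these values per week or per release matters more than any single reading, since the trend is what shows whether the systems are improving.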

Quality Engineering and the Developer Experience

There is a direct line between quality engineering and developer satisfaction. Engineers on teams with reliable CI, automated test coverage, and fast feedback loops report higher satisfaction not because they care about testing in the abstract, but because they spend less time firefighting.

A production bug that could have been caught in CI costs roughly ten times the engineering time to fix compared to catching it before merge. That is ten times the context-switching, ten times the emergency debugging, ten times the interruption to whatever the developer was actually working on. Quality engineering systems reduce that tax.

When you frame quality engineering this way, as "how do we reduce the time engineers spend on things that are not building the product," the investment case becomes obvious. Every hour saved on debugging a production regression is an hour that goes back to feature work. Every flaky test fixed is a developer who trusts the pipeline again. Every automated test generated by an agent is a test a developer did not have to write by hand.

This is why the best engineering leaders do not pitch quality engineering as "we need to care more about quality." They pitch it as "I want to remove the things that slow you down and frustrate you." The framing matters because the first one sounds like a lecture. The second one sounds like an improvement to their daily work.

Frequently Asked Questions

What is quality engineering?

Quality engineering is the practice of embedding testing, observability, and fast feedback loops directly into the development workflow. Instead of treating quality as a separate phase or department, quality engineering makes reliable, tested code a natural byproduct of how the team ships. It includes CI pipelines that block on failure, automated test coverage that does not depend on developer willpower, and observability systems that catch production issues fast.

How is quality engineering different from traditional QA?

Traditional QA is a separate function that tests software after it is built, creating a handoff between development and testing. Quality engineering eliminates that handoff by building testing infrastructure into the development process itself. The engineer who writes the feature also has automated systems that verify it works. Quality scales through better systems, not more testers.

What does quality engineering look like at a startup?

At a startup, quality engineering focuses on three systems: fast and trustworthy CI that blocks merges on failure, automated test generation (using agentic testing tools) that creates E2E coverage without requiring developers to write test scripts, and observability that catches production bugs quickly. The goal is high-velocity shipping with high reliability, using systems rather than headcount.

What is agentic testing, and how does it relate to quality engineering?

Agentic testing uses AI agents that read your codebase, understand user flows, and generate tests autonomously. It relates to quality engineering because it solves the biggest friction point: getting comprehensive test coverage without requiring developers to write and maintain test scripts. Agents handle test creation and maintenance while developers focus on building features.

Which quality engineering metrics matter most?

The most useful quality engineering metrics are time to detect (how quickly regressions are caught), escaped defect rate (bugs reaching production per release), mean time to recovery (how fast production bugs are fixed), and CI trust score (percentage of CI failures that are real bugs vs. flaky tests). Line coverage percentage is the most common and least useful metric.