Quality engineering is a discipline where quality is embedded throughout the development process rather than enforced at the end by a dedicated gatekeeper. Instead of a QA team that reviews code before release, engineers own the quality of what they ship, automated tests run in CI on every pull request, and coverage is generated from the codebase itself. The transition from gatekeeper QA to quality engineering is one of the most impactful structural changes a startup can make -- and one of the most commonly botched.

The bug slipped through on a Tuesday afternoon. The QA engineer was still in the middle of the regression pass when someone decided the release could not wait. By Thursday, three enterprise customers had filed support tickets. By Friday, the post-mortem was already becoming a referendum on whether QA was the bottleneck or the solution.

We have seen this exact scenario play out at startup after startup. It is not a story about one bad decision. It is a story about a model of quality that was never designed to keep pace with how modern software teams actually ship.

What the Gatekeeper Model Actually Looks Like

At most early-stage startups, quality control follows the same script. Developers build features in sprints. When a feature is "done," it goes to QA. A QA engineer runs through a test plan, finds issues, files them back to developers, gets a fix, tests again. If everything passes, the feature ships. The QA team is the last line of defense before production.

This model has a certain logic to it. It mirrors how manufacturing quality control worked, and it creates a clear handoff point. The problem is that it treats quality like a filter at the end of a pipeline rather than a property of the pipeline itself.

The gatekeeper model breaks in four predictable ways at fast-moving startups. Releases pile up because QA is the bottleneck. Developers build features without thinking about testability because that is QA's problem. The QA team finds bugs late, when they are expensive to fix. And every release becomes a stressful race to clear the QA gate before the sprint ends.

By the time a startup reaches Series A, the tension is usually visible. The QA team is always behind. Developers are frustrated by the back-and-forth. Product is frustrated that features take longer to ship than they should. And the QA engineers are burning out trying to keep up with a surface area that one team was never equipped to cover alone.

What Is Quality Engineering?

Software quality engineering is not just a rebrand of QA. It represents a different theory of where quality comes from.

In the gatekeeper model, quality is verified at the end. In quality engineering, quality is built in from the start. The developer who writes the feature also writes the tests. Automated checks run in CI before any human reviews the code. When a test fails, it fails immediately, in the pull request, before it ever reaches a QA engineer's queue.

This is what shift-left testing actually means in practice. Not a policy that says "developers should write more tests," but an infrastructure that makes writing tests the natural path and running them automatic. Shift-left is not a mindset. It is a technical architecture.

Quality engineering also changes what QA specialists do. Instead of executing regression test plans, they design test strategies. Instead of filing bugs, they build the infrastructure that catches bugs before humans see them. Instead of being the last line of defense, they become the architects of a system that defends itself.

Quality Assurance vs Quality Engineering

The distinction matters for hiring, for structuring teams, and for how you measure success. (If your QA hire is struggling, the problem may be structural, not personal.) Quality assurance focuses on finding defects after the fact. Quality engineering focuses on preventing them structurally. The traditional QA metric is defect escape rate (the share of bugs that made it to production). Quality engineering adds metrics such as test coverage in CI, mean time to detect a failure (MTTD), and the percentage of releases that require zero manual testing steps.
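To make these metrics concrete, here is a minimal Python sketch of how they could be computed. The `Release` and `Failure` record shapes, their field names, and the sample numbers are assumptions made for illustration; in practice the data would come from your issue tracker and CI history.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Release:
    """One shipped release (hypothetical record shape)."""
    bugs_caught_before_release: int
    bugs_found_in_production: int
    manual_test_steps: int  # 0 means nobody tested by hand before shipping


@dataclass
class Failure:
    """One defect: when it was introduced and when a check first caught it."""
    introduced_at: datetime
    detected_at: datetime


def defect_escape_rate(releases: list[Release]) -> float:
    """Share of all known bugs that reached production (0.0 to 1.0)."""
    escaped = sum(r.bugs_found_in_production for r in releases)
    total = escaped + sum(r.bugs_caught_before_release for r in releases)
    return escaped / total if total else 0.0


def mean_time_to_detect(failures: list[Failure]) -> timedelta:
    """Average gap between introducing a defect and detecting it."""
    if not failures:
        return timedelta(0)
    gaps = (f.detected_at - f.introduced_at for f in failures)
    return sum(gaps, timedelta(0)) / len(failures)


def zero_touch_release_pct(releases: list[Release]) -> float:
    """Percentage of releases that needed no manual testing steps."""
    if not releases:
        return 0.0
    zero_touch = sum(1 for r in releases if r.manual_test_steps == 0)
    return 100.0 * zero_touch / len(releases)


# Illustrative data only.
sample_releases = [Release(9, 1, 0), Release(6, 0, 12), Release(3, 1, 0)]
print(defect_escape_rate(sample_releases))
print(zero_touch_release_pct(sample_releases))
```

Tracking all three together matters because each one can be gamed alone: a team can reach zero-touch releases simply by skipping testing, which the escape rate would then expose.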

| Dimension | Gatekeeper QA | Quality Engineering |
|---|---|---|
| When testing happens | After development, before release | During development, on every pull request |
| Who tests | Dedicated QA team | Everyone who writes code |
| Automation approach | Manual-first, automation as supplement | Automation-first, manual for exploratory only |
| Key metric | Defect escape rate | CI coverage, MTTD, zero-touch release % |
| Bottleneck | QA is the constraint | No single function is the constraint |
| QA specialist role | Test executor and gatekeeper | Test architect and strategy owner |
| Feedback loop | Days to weeks (post-release) | Minutes (in-PR) |

Autonoma accelerates this transition by handling repetitive test execution: AI agents run E2E tests on every PR, freeing quality engineers to focus on strategy, risk analysis, and test design.

What Breaks During the Transition

Most teams that attempt this transition underestimate how much it disrupts the existing order. Three failure modes show up consistently.

The Nobody-Testing Gap

When a startup decides to move away from gatekeeper QA, the gatekeeper does not disappear overnight. But the implicit expectation changes before the infrastructure is ready to support it. Developers are told they own quality now. QA is told they are no longer responsible for regression. For a period of weeks or months, nobody is actually testing anything with any rigor.

This gap is not theoretical. It is the window where something significant breaks in production, the post-mortem is uncomfortable, and the team's instinct is to regress to the old model because at least that felt like it was working.

The way to avoid the gap is to invest in the QA automation infrastructure before removing the human safety net. That means running automated tests in CI (GitHub Actions, CircleCI, or similar), achieving meaningful coverage of critical paths, and giving developers visibility into what is and is not tested -- all before you start pulling back the manual QA function. The transition has to be parallel, not sequential.
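One way to give developers that visibility is a small report that compares the flows you consider critical against the flows that have automated tests in CI. The sketch below assumes you maintain both lists by hand or derive them from test tags; the flow names and the 100% threshold are hypothetical.

```python
def coverage_report(critical_flows: set[str], automated_flows: set[str]) -> dict:
    """Summarize which critical flows have automated tests and which do not."""
    covered = critical_flows & automated_flows
    missing = critical_flows - automated_flows
    # With no critical flows defined, there is nothing left uncovered.
    percent = 100.0 * len(covered) / len(critical_flows) if critical_flows else 100.0
    return {
        "covered": sorted(covered),
        "missing": sorted(missing),
        "percent": percent,
    }


def safe_to_reduce_manual_qa(report: dict, required_percent: float = 100.0) -> bool:
    """Only pull back manual QA once critical-path coverage meets the bar."""
    return report["percent"] >= required_percent


# Hypothetical flow names for illustration.
critical = {"signup", "login", "checkout", "billing-update"}
automated = {"signup", "login", "checkout", "search"}

report = coverage_report(critical, automated)
print(report["missing"])
print(safe_to_reduce_manual_qa(report))
```

The point of the second function is the ordering: the manual safety net stays until the report says the automated floor is in place, not the other way around.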

Developer Backlash

Developers push back on owning quality for two reasons, and both are legitimate. First, they were not hired to write tests. Many engineers went into development specifically because they wanted to build things, not test them. Asking them to own the entire quality surface of their features in addition to building those features is a real scope expansion.

Second, writing good automated tests is genuinely hard. A developer who has never built a test suite does not automatically know how to write tests that are stable, fast, meaningful, and maintainable. They write brittle tests, those tests break constantly, and the reasonable conclusion is that automated testing is more trouble than it is worth.
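Brittleness is easier to address once it is measured. A common heuristic is to flag a test as flaky when it has both passed and failed against the same commit, since the code did not change between those runs. The sketch below assumes CI history is available as `(test_name, commit_sha, passed)` tuples; that shape and the sample names are assumptions, not the export format of any particular CI system.

```python
from collections import defaultdict
from typing import Iterable


def find_flaky_tests(runs: Iterable[tuple[str, str, bool]]) -> list[str]:
    """Return tests that both passed and failed on the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Two distinct outcomes for one (test, commit) pair means the result
    # changed while the code stayed the same.
    flaky = {name for (name, _sha), seen in outcomes.items() if len(seen) == 2}
    return sorted(flaky)


# Hypothetical CI history.
history = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),    # same commit, different result
    ("test_checkout", "abc123", True),
    ("test_checkout", "def456", False), # different commit: possibly a real regression
]
print(find_flaky_tests(history))
```

A list like this gives developers something specific to fix or quarantine, which is more useful than a general sense that the suite cannot be trusted.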

The backlash is usually not about laziness. It is about skill gap and incentive misalignment. Developers are measured on features shipped. Testing is invisible when it works and blamed when it does not. Until you change the measurement, you get the behavior the measurement incentivizes.

Addressing this requires two things in parallel. The first is tooling that genuinely reduces the cost of writing and maintaining tests -- not just frameworks like Playwright or Cypress, but infrastructure that makes testing a fast, low-friction part of the development loop. The second is explicit recognition of test quality as part of engineering output. If your code review process ignores test coverage, your team will too.

Ownership Confusion

Even after the cultural conversation and the tooling investments, a persistent question remains: who owns this? A developer ships a feature and writes tests for the happy path. Three sprints later, a regression appears in an edge case that nobody wrote a test for. Whose fault is that?

In the gatekeeper model, the answer is easy. QA missed it. In quality engineering, the ownership is distributed and the accountability structure is genuinely unclear until you make it explicit.

Ownership confusion shows up most painfully around two things: edge cases and integration points. Developers reasonably test the code they wrote. But integration points -- where your service calls another service, where your frontend talks to your backend, where your app depends on a third-party API -- live between ownership boundaries. Nobody volunteers to test the seams.

The solution is to be explicit about coverage responsibilities in a way that leaves no seams. Critical user flows need to be owned end-to-end, not split across the teams that built each component. End-to-end tests that validate complete journeys belong to the team most accountable for the user's experience of that journey, typically product or platform.
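Making ownership explicit can be as simple as a checked-in map from each critical flow to its owning team, plus a check that fails when a flow has no owner or more than one. This is a sketch under that assumption; the flow and team names are hypothetical.

```python
def find_ownership_gaps(
    critical_flows: list[str],
    owners: dict[str, list[str]],
) -> dict[str, list[str]]:
    """Find critical flows with no owner, or with ownership split across teams."""
    unowned = [f for f in critical_flows if len(owners.get(f, [])) == 0]
    shared = [f for f in critical_flows if len(owners.get(f, [])) > 1]
    return {"unowned": unowned, "shared": shared}


# Hypothetical flows and teams.
flows = ["signup", "checkout", "invoice-export"]
ownership = {
    "signup": ["growth"],
    "checkout": ["payments", "frontend"],  # split ownership: a seam
}

gaps = find_ownership_gaps(flows, ownership)
print(gaps)
```

Treating shared ownership as a gap is a deliberate choice here: when two teams each own half of a journey, the integration point between the halves is exactly what tends to go untested.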

How to Run the Transition Without Breaking Things

The teams that navigate this transition successfully share a common approach. They do not flip a switch. They run a gradual transfer of ownership with a safety net in place throughout.

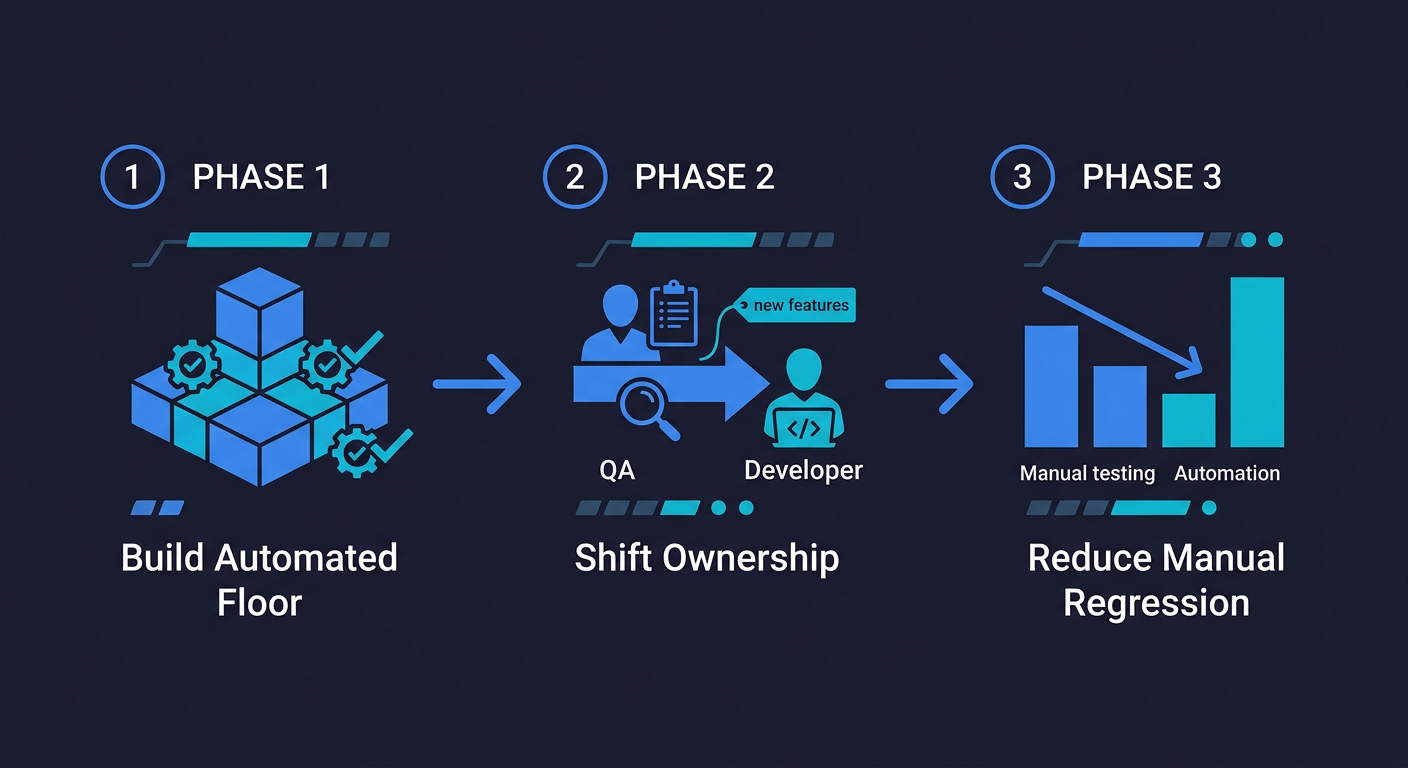

The first phase is building the automated floor. Before changing any responsibilities, get automated tests running in CI for your highest-priority user flows. These do not need to be comprehensive. They need to be stable, meaningful, and actually running on every pull request. The goal is to have something to hand off.

The second phase is shifting ownership incrementally. Start with new features. Every net-new feature ships with automated tests written by the developer who built it. Existing untested code stays with QA (or whoever owns it) while the new model takes hold for everything going forward. This is slower than a full flip, but it avoids the nobody-testing gap.
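The "new features ship with tests" rule can be enforced mechanically rather than by reminder. The sketch below is a deliberately crude pull request check: if a PR adds new Python source files and touches no test files at all, it reports the new files. It assumes tests follow the pytest naming conventions (`test_*.py` or `*_test.py`) or live under a `tests` directory, and that your CI can supply the lists of added and changed paths; the example paths are hypothetical.

```python
from pathlib import PurePosixPath


def is_test_file(path: str) -> bool:
    """Heuristic: pytest-style file name, or any file under a tests/ directory."""
    p = PurePosixPath(path)
    return (
        p.name.startswith("test_")
        or p.name.endswith("_test.py")
        or "tests" in p.parts
    )


def missing_tests(added: list[str], changed: list[str]) -> list[str]:
    """Return new source files when the PR neither adds nor changes any test."""
    new_source = [p for p in added if p.endswith(".py") and not is_test_file(p)]
    touches_tests = any(is_test_file(p) for p in added + changed)
    return [] if touches_tests else new_source


# Hypothetical PR contents.
print(missing_tests(added=["src/billing/invoice.py"], changed=["README.md"]))
print(missing_tests(added=["src/billing/invoice.py", "tests/test_invoice.py"], changed=[]))
```

Because the check only looks at added files, existing untested code is left alone, which matches the incremental handoff described above.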

The third phase is reducing manual regression. As automated coverage grows, the manual regression cycle shrinks. Not because you mandated it, but because the automated suite is actually catching the things the manual suite was catching. When that confidence is established, the QA team's focus shifts from execution to strategy.
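One way to ground that confidence in evidence is to track, per manual regression case, whether the manual pass caught anything the automated suite missed. The sketch below proposes retiring a manual case only when it has an automated equivalent and has produced no manual-only catches over the last several releases. The record shape, the case names, and the five-release window are all assumptions to tune for your team.

```python
from dataclasses import dataclass


@dataclass
class ManualCaseHistory:
    """History of one manual regression case (hypothetical record shape)."""
    name: str
    has_automated_equivalent: bool
    # One entry per release, oldest first: True if the manual pass caught
    # a defect in this area that the automated suite had missed.
    manual_only_catches: list[bool]


def retirable_cases(history: list[ManualCaseHistory], min_releases: int = 5) -> list[str]:
    """Manual cases that automation has fully covered for min_releases in a row."""
    return [
        case.name
        for case in history
        if case.has_automated_equivalent
        and len(case.manual_only_catches) >= min_releases
        and not any(case.manual_only_catches[-min_releases:])
    ]


# Hypothetical history.
cases = [
    ManualCaseHistory("login smoke", True, [False] * 5),
    ManualCaseHistory("checkout refund", True, [False, False, True, False, False]),
    ManualCaseHistory("pdf export", False, [False] * 6),
]
print(retirable_cases(cases))
```

The manual cycle then shrinks one case at a time, each removal backed by a record showing the automated suite was already catching what that case used to catch.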

The measure of success is not that you eliminated your QA team. It is that releases stopped being events. Deployments happen continuously because the automated suite provides enough confidence that no human needs to bless each release individually.

What the End State Actually Looks Like

A team that has completed the transition does not look like a team that deleted its QA function. It looks like a team where quality is invisible because it is structural.

Developers open pull requests and automated tests run before any reviewer sees the code. What blocks a merge is a failing test, not a QA ticket waiting in a queue. Critical user flows are covered end-to-end, and when a flow changes, the tests update to reflect the change. New features arrive with coverage already in place. Post-mortems spend less time on "how did this escape QA" and more time on "what does this tell us about our test coverage model."

QA specialists -- the ones who stay -- are working on test architecture, coverage strategy, and the hardest edge cases. They are not running regression cycles before every release. They are building the system that makes regression cycles unnecessary.

This is what we mean when we talk about quality engineering as a discipline distinct from quality assurance. Assurance is a check at the end. Engineering is a property of the system.

For teams that want to accelerate this transition without building all of the test infrastructure from scratch, Autonoma is how we approach it at scale. Our agents read your codebase -- routes, components, user flows -- and plan and execute tests against your running application. When your product changes, a Maintainer agent keeps the tests passing without human intervention. No manual regression cycles. No test maintenance treadmill. The coverage scales with your codebase because the codebase is the spec.

The teams that have made this transition most successfully are not the ones with the largest QA budgets. They are the ones that stopped treating quality as someone else's job and built systems that make it everyone's by default.

Frequently Asked Questions

Quality engineering is an approach where quality is built into the development process rather than verified at the end by a dedicated QA gate. Developers own the quality of what they ship, automated tests run in CI on every pull request, and coverage is derived from the codebase itself. It is distinct from traditional quality assurance, which focuses on finding defects after the fact. Tools like Autonoma (https://getautonoma.com) automate the test generation and maintenance work that makes quality engineering practical at startup scale.

Quality assurance (QA) traditionally means verifying that software meets requirements through testing, usually at the end of a development cycle. Quality engineering embeds quality throughout the entire development process -- developers write tests, automated checks run in CI, and the test suite is maintained as living documentation of expected behavior. QA is reactive; quality engineering is structural. The distinction matters because QA can only find defects, while quality engineering prevents them from reaching production in the first place.

Shift-left testing means moving testing earlier in the development process -- to the left on a timeline where the left is development and the right is production. In practice, this means developers write tests as part of building features, automated tests run in CI before code review, and defects are caught immediately rather than discovered weeks later during a QA cycle. Shift-left is not a policy; it requires a technical infrastructure that makes testing fast, automatic, and part of the default development workflow.

The transition has three phases. First, build an automated testing floor -- get critical user flows covered by automated tests running in CI before changing any team responsibilities. Second, shift ownership incrementally by requiring automated tests for all new features while existing coverage stays where it is. Third, reduce manual regression as automated confidence grows. The key is running these phases in parallel with the existing QA function, not replacing it before the replacement infrastructure is ready. Autonomous testing tools like Autonoma (https://getautonoma.com) can accelerate phase one significantly by generating test coverage from your codebase automatically.

Developer resistance to quality ownership is usually about two things: scope expansion and skill gap. Writing good, stable automated tests is genuinely hard, and developers who were not trained to do it will write brittle tests that break constantly and conclude that testing is more trouble than it is worth. The fix is not cultural pressure -- it is tooling that makes writing and maintaining tests fast and low-friction, combined with explicit recognition of test quality as part of engineering output in code review and performance evaluation.

In a mature quality engineering model, developers write tests for the features they build. QA specialists focus on test strategy, coverage architecture, and the hardest edge cases rather than executing regression cycles. Platform or infrastructure teams own end-to-end tests for critical user journeys. The result is quality that is distributed rather than delegated. No single team is the bottleneck. Tools like Autonoma (https://getautonoma.com) fit into this model by automating test generation and maintenance, freeing QA specialists to focus on strategy instead of execution.