Is vibe coding production ready? It depends on a combination of factors most teams never explicitly evaluate: what the app does, who uses it, whether it handles money or sensitive data, and what happens when it breaks. The honest answer is that vibe coding is production ready for some applications and not others, and the difference is not a matter of code quality alone. It is a matter of consequence. This article provides a scored assessment framework you can apply to any vibe-coded app today to determine whether it is ready to ship, what it is missing, and what guardrails close the gap.

There is a specific kind of confidence that comes from watching your app work flawlessly in a demo. The AI wrote the code, the app does what it is supposed to do, and nothing has broken yet. That confidence is real. It is also misleading.

"Works in a demo" and "production ready" describe two different states. The first means the happy path runs. The second means you have thought through what happens when the happy path doesn't run, when data is malformed, when a third-party API goes down at 3am, when a user tries something the AI never anticipated.

Founders and CTOs asking whether vibe coding is production ready are usually asking because they already know the answer is complicated. This assessment framework gives you the vocabulary and the scored criteria to answer it honestly, for your specific app, your specific risk profile, and your specific users.

What "Production Ready" Actually Means

Before scoring anything, let's agree on what we are evaluating. Production readiness is not a binary property. It is a risk profile. An app is production ready when its risk of failure, and the consequence of that failure, is acceptable given its context. Vibe coding quality is not about whether the code is elegant. It is about whether the code is reliable under the conditions it will actually face.

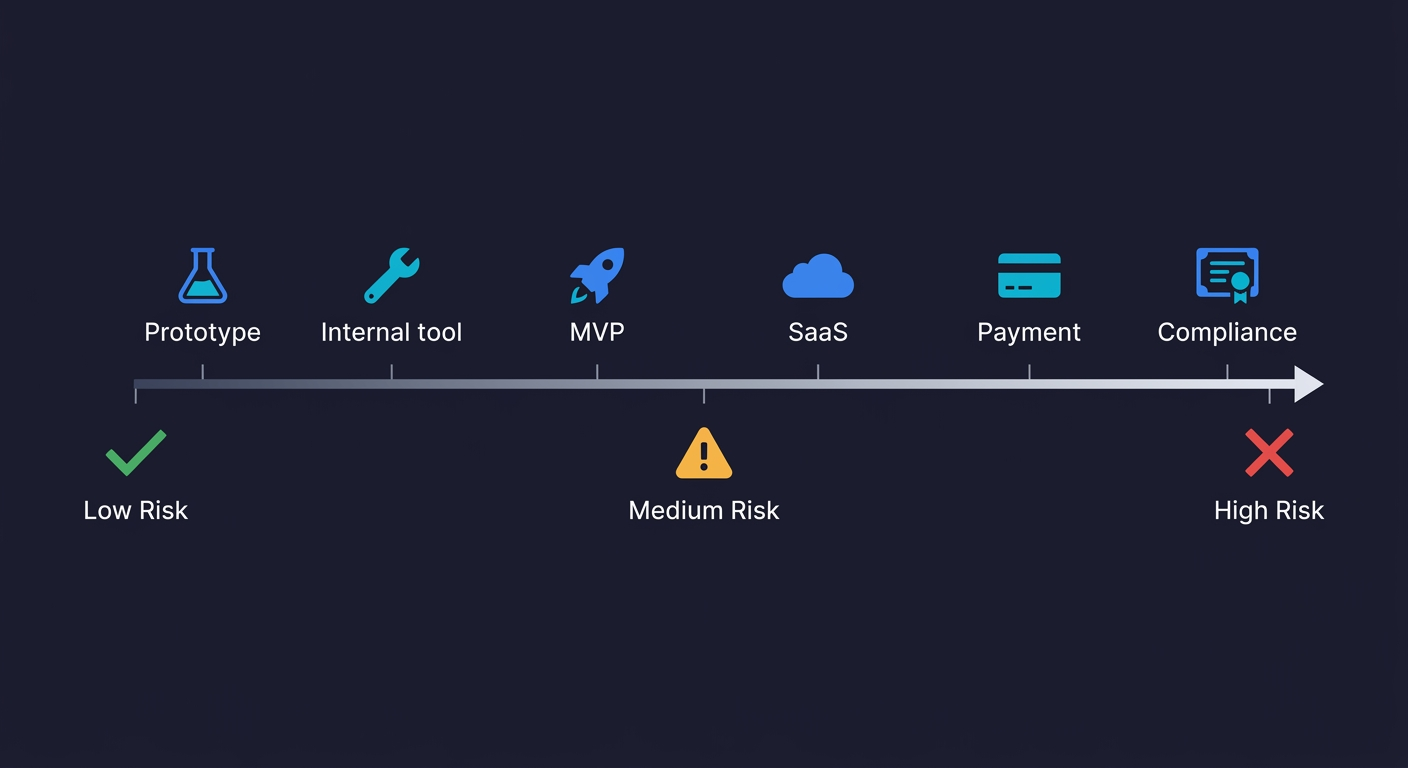

The short answer: vibe coding is production ready for low-to-medium risk applications (internal tools, MVPs, team utilities) with basic coverage infrastructure, and not production ready for high-risk applications handling payments, PII, or regulated data without E2E testing, CI, security review, and monitoring.

A CRUD app that lets a team of five manage project tasks has a different risk profile than a payments flow handling customer credit cards. Both can be vibe-coded. Both can be production ready. The bar is just different.

The three dimensions that determine production readiness for any vibe-coded application are: consequence (what breaks if it fails), exposure (who uses it and how many), and coverage (what guardrails exist to catch failures before users do). Every question in the framework below maps to one of these three dimensions.

The Production-Readiness Assessment Framework

Score each question honestly. The total tells you where your app sits.

| Question | Yes (points) | No (points) | Dimension |
|---|---|---|---|
| Does this app handle real money (payments, billing, refunds)? | +3 | 0 | Consequence |
| Does it store or process personal data (PII, health, financial)? | +3 | 0 | Consequence |
| Is it customer-facing (external users, not just your team)? | +2 | 0 | Exposure |
| Does it have more than 100 active users? | +2 | 0 | Exposure |
| Does a production bug directly cause revenue loss? | +2 | 0 | Consequence |
| Is it subject to compliance requirements (HIPAA, PCI, SOC 2, GDPR)? | +3 | 0 | Consequence |
| Does it integrate with third-party services that can fail independently? | +1 | 0 | Consequence |
| Do you have automated E2E tests on your critical user flows? | 0 | +2 | Coverage |
| Do those tests run automatically on every deploy (CI)? | 0 | +2 | Coverage |
| Do you have error monitoring in production (Sentry, Datadog, etc.)? | 0 | +1 | Coverage |
| Has the code been reviewed for OWASP Top 10 vulnerabilities? | 0 | +2 | Coverage |
| Can any engineer on the team explain what each major module does? | 0 | +1 | Coverage |

Reading Your Score

0-4 points: Low-risk context. Your app's failure modes are contained. If it is also well-covered (bottom half of the table), it is production ready with minimal additional investment. Internal tools, prototypes with limited users, and features shipped behind flags in larger systems typically land here. Action items: Add basic error logging. Set up a simple health check. You are likely good to ship.

5-9 points: Medium-risk context. The app has real stakes. Coverage questions become critical. If you scored high on consequence and exposure but have gaps in coverage (no CI, no monitoring, no security review), you are shipping a risk that is not visible yet. Address the bottom half before you grow the user base. Action items: Write E2E tests for your top 3 critical user flows. Add CI to run those tests on every deploy. Set up error monitoring (Sentry or equivalent). Schedule a targeted security review of your authentication code.

10+ points: High-risk context. This is a customer-facing app that handles sensitive data or money, likely with compliance implications, and likely without the coverage infrastructure to catch failures early. Shipping to production in this zone without closing the coverage gaps is a decision with measurable downside. You have probably already felt it. Action items: Commission an OWASP Top 10 security audit on auth and payment code. Add comprehensive E2E coverage with CI enforcement. Deploy production monitoring with alerting. Get human code review on every security-critical module before shipping.
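The table and score bands above can be applied mechanically. The following is a minimal sketch, assuming each question is answered with a plain yes/no; the question keys (`handles_money`, `tests_run_in_ci`, and so on) are hypothetical labels invented for this example, while the point values and band boundaries are copied from the framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    key: str          # hypothetical short label for the table row
    dimension: str    # "consequence", "exposure", or "coverage"
    yes_points: int   # points added when the answer is yes
    no_points: int    # points added when the answer is no


# One entry per row of the assessment table, in the same order.
QUESTIONS = [
    Question("handles_money", "consequence", 3, 0),
    Question("stores_personal_data", "consequence", 3, 0),
    Question("customer_facing", "exposure", 2, 0),
    Question("over_100_users", "exposure", 2, 0),
    Question("bug_causes_revenue_loss", "consequence", 2, 0),
    Question("compliance_requirements", "consequence", 3, 0),
    Question("third_party_integrations", "consequence", 1, 0),
    Question("e2e_tests_on_critical_flows", "coverage", 0, 2),
    Question("tests_run_in_ci", "coverage", 0, 2),
    Question("error_monitoring", "coverage", 0, 1),
    Question("owasp_review", "coverage", 0, 2),
    Question("team_can_explain_modules", "coverage", 0, 1),
]


def score(answers: dict[str, bool]) -> int:
    """Sum the points for a complete set of yes/no answers."""
    return sum(
        q.yes_points if answers[q.key] else q.no_points for q in QUESTIONS
    )


def band(total: int) -> str:
    """Map a total to the bands described above: 0-4, 5-9, 10+."""
    if total <= 4:
        return "low"
    if total <= 9:
        return "medium"
    return "high"
```

Because coverage questions add points on a "no", an app with modest stakes and no guardrails can still land in the medium band, which is the behavior the framework intends.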

The Coverage dimension questions are where most vibe-coded apps score worst, and where the gap is easiest to close quickly. Autonoma addresses the two most commonly missing items simultaneously: E2E tests on critical flows and tests that run automatically on every deploy, generated from your codebase without any manual scripting.

The Use-Case Matrix

The framework above scores a specific app. This matrix maps common use cases to where they typically land and what production readiness requires at each level.

| Use Case | Typical Score | Production Ready As-Is? | What It Needs |
|---|---|---|---|
| Throwaway prototype, no real users | 0-2 | Yes | Nothing. This is not a production app. |
| Internal tool, team of 5-20 | 1-4 | Usually yes | Basic access controls, error logging |
| MVP with early paying customers | 4-7 | With guardrails | E2E tests on checkout/signup, CI, monitoring |
| Customer-facing SaaS, 100-1000 users | 6-9 | With investment | Full test coverage on critical paths, security review, monitoring, documented incident response |
| App handling payments or PII | 8-12 | Not without audit | OWASP security audit, human code review of auth/payment flows, penetration testing |
| Regulated industry (HIPAA / PCI / SOC 2) | 10+ | No | Compliance-grade security review, auditability of design decisions, legal counsel on AI-generated code liability |

The pattern is consistent: higher stakes require coverage infrastructure, not necessarily a rewrite. Most vibe-coded apps can reach production readiness without abandoning the codebase. They need the guardrails that vibe coding by default skips.

What Vibe Coding Skips (And Why It Matters)

Understanding why vibe-coded apps have predictable production gaps makes the framework above more intuitive. This is not a critique of the tools. It is a description of what the tools optimize for.

AI code generation optimizes for the happy path. Given a prompt like "build a user authentication system," the model produces code that handles the common case correctly: a user enters valid credentials and gets a session token. The edge cases (invalid input formats, expired tokens, concurrent session conflicts, brute force patterns) are not in the prompt, so they are not in the output either. This is not a defect in the tool. It is a consequence of how the generation process works. The model gives you what you asked for.
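To make the difference concrete, here is a minimal sketch of the same session lookup written twice: once for the happy path only, and once with two of the edge cases named above (malformed input and expired tokens) handled explicitly. The function names, the in-memory `sessions` dictionary, and the one-hour lifetime are all assumptions made for illustration, not a description of any particular generated codebase.

```python
import time
from typing import Dict, Optional, Tuple

SESSION_TTL_SECONDS = 3600  # assumed session lifetime for this sketch

# token -> (user_id, issued_at as a Unix timestamp)
Sessions = Dict[str, Tuple[str, float]]


def happy_path_lookup(token: str, sessions: Sessions) -> str:
    """Works for a valid token; raises KeyError otherwise and never expires."""
    user_id, _issued_at = sessions[token]
    return user_id


def validate_session(
    token: object, sessions: Sessions, now: Optional[float] = None
) -> Optional[str]:
    """Return the user id for a valid, unexpired token, else None."""
    # Edge case: missing, non-string, or blank token.
    if not isinstance(token, str) or not token.strip():
        return None
    record = sessions.get(token)
    # Edge case: well-formed token that was never issued (or was revoked).
    if record is None:
        return None
    user_id, issued_at = record
    current = time.time() if now is None else now
    # Edge case: token exists but has outlived its lifetime.
    if current - issued_at > SESSION_TTL_SECONDS:
        return None
    return user_id
```

Both functions return the same result for a valid token, which is why the gap is invisible in a demo.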

Research on vibe coding security risks consistently finds that over half of AI-generated code contains at least one security vulnerability that passes initial review. The vulnerabilities are not random. They cluster in authentication edge cases, input validation, and authorization logic, exactly the code that is never explicitly described in a natural language prompt.

The vibe coding testing gap compounds this. If code that was generated without explicit consideration of edge cases is also never tested against them, those gaps stay invisible until a user hits them. In a typical vibe-coded codebase, that risk is present on every deploy.

There is a second factor that teams discover only after shipping: debugging AI-generated code is harder than debugging code you wrote yourself. When a production bug surfaces in a vibe-coded app, the person investigating often did not write the code and may not understand its internal logic. The code works, but nobody on the team can explain why it works the way it does. The problems stack: the code was generated without edge case consideration, it ships without test coverage, and when it breaks, debugging takes longer because the team maintaining the codebase does not fully understand it. Vibe coding reliability in production depends not just on what the AI generates, but on whether the team can maintain and fix it under pressure.

The production readiness problem is not that vibe-coded code is bad. It is that vibe-coded code is untested, and test coverage does not get generated automatically alongside the features.

What "AI-Generated Code in Production" Actually Looks Like

A useful way to calibrate the framework is to look at what actually breaks. The vibe coding failures documented in real production apps follow patterns that map directly to the framework questions above.

Payments and billing: A checkout flow that worked in testing fails for a small percentage of real transactions because the AI-generated payment integration did not handle a specific card type error response. The happy path was tested. The exception handling was not.
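A sketch of what explicit exception handling for this case might look like follows. The response shape (`status`, `error_code`) and every error code string are hypothetical and do not correspond to any real payment processor's API; the point is only that each non-success response is classified deliberately, and anything unrecognized is routed to review instead of being dropped.

```python
from enum import Enum


class Outcome(Enum):
    PAID = "paid"
    DECLINED = "declined"          # tell the customer, do not retry
    RETRY = "retry"                # transient failure, safe to retry later
    NEEDS_REVIEW = "needs_review"  # unrecognized response, alert a human


# Hypothetical error codes, invented for this sketch.
DECLINE_CODES = {"card_declined", "insufficient_funds", "expired_card"}
RETRYABLE_CODES = {"processor_timeout", "rate_limited"}


def classify_charge(response: dict) -> Outcome:
    """Map a processor response to an explicit outcome."""
    if response.get("status") == "succeeded":
        return Outcome.PAID
    code = response.get("error_code")
    if code in DECLINE_CODES:
        return Outcome.DECLINED
    if code in RETRYABLE_CODES:
        return Outcome.RETRY
    # The case the happy path never anticipated: an error response
    # the code has no rule for. Surface it instead of ignoring it.
    return Outcome.NEEDS_REVIEW
```

An E2E test suite for checkout would then assert on each outcome, not only on `Outcome.PAID`.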

Authentication under load: A session management system that worked for ten concurrent users starts issuing invalid tokens under a few hundred concurrent users because the AI-generated token store had a race condition that only manifests at scale.
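The article does not say what the race condition was, so the following sketch assumes one common shape: a check-then-act sequence (look up the user's old token, revoke it, store the new one) that is safe for one request at a time but can leave stale tokens valid when requests interleave. The `TokenStore` class and its methods are hypothetical; the fix shown is simply holding a lock across the whole read-modify-write.

```python
import secrets
import threading
from typing import Dict, Optional


class TokenStore:
    """In-memory store that allows one active token per user."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._token_by_user: Dict[str, str] = {}
        self._user_by_token: Dict[str, str] = {}

    def issue(self, user_id: str) -> str:
        token = secrets.token_urlsafe(32)
        # The lock makes revoke-old-then-store-new a single atomic step.
        # Without it, two concurrent calls could both read the same old
        # token and each leave its own new token registered.
        with self._lock:
            old = self._token_by_user.get(user_id)
            if old is not None:
                self._user_by_token.pop(old, None)
            self._token_by_user[user_id] = token
            self._user_by_token[token] = user_id
        return token

    def lookup(self, token: str) -> Optional[str]:
        with self._lock:
            return self._user_by_token.get(token)

    def active_token_count(self) -> int:
        with self._lock:
            return len(self._user_by_token)
```

A test that only issues tokens sequentially would pass with or without the lock, which is why this class of bug tends to surface first under real concurrency.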

Data handling at the edge: A form that stores user data correctly in 99% of cases silently drops data for inputs that contain certain Unicode characters because the AI-generated sanitization function was not tested against edge-case inputs.
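One way this failure can arise, shown here as an assumption and not as the documented cause, is a sanitizer that coerces text to ASCII and silently discards whatever does not fit. The sketch below contrasts that with a version that normalizes the text and removes only control characters. Both function names are hypothetical.

```python
import unicodedata


def lossy_sanitize(text: str) -> str:
    """Drops every non-ASCII character without warning."""
    return text.encode("ascii", errors="ignore").decode("ascii")


def sanitize(text: str) -> str:
    """Normalize to NFC and strip control characters, keeping other text."""
    normalized = unicodedata.normalize("NFC", text)
    return "".join(
        ch
        for ch in normalized
        # "Cc" is the Unicode category for control characters such as NUL.
        # Newlines and tabs are control characters too, so keep them explicitly.
        if unicodedata.category(ch) != "Cc" or ch in "\n\t"
    )
```

Testing the sanitizer with a handful of non-ASCII names and addresses is usually enough to expose the lossy behavior before a user does.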

These are not catastrophic failures. They are the quiet, hard-to-reproduce bugs that erode trust with real users over time. The broader sustainability problem is that vibe coding democratized building but did not democratize the quality infrastructure that makes software reliable at scale.

Closing the Gap: Vibe Coding Production-Readiness Checklist

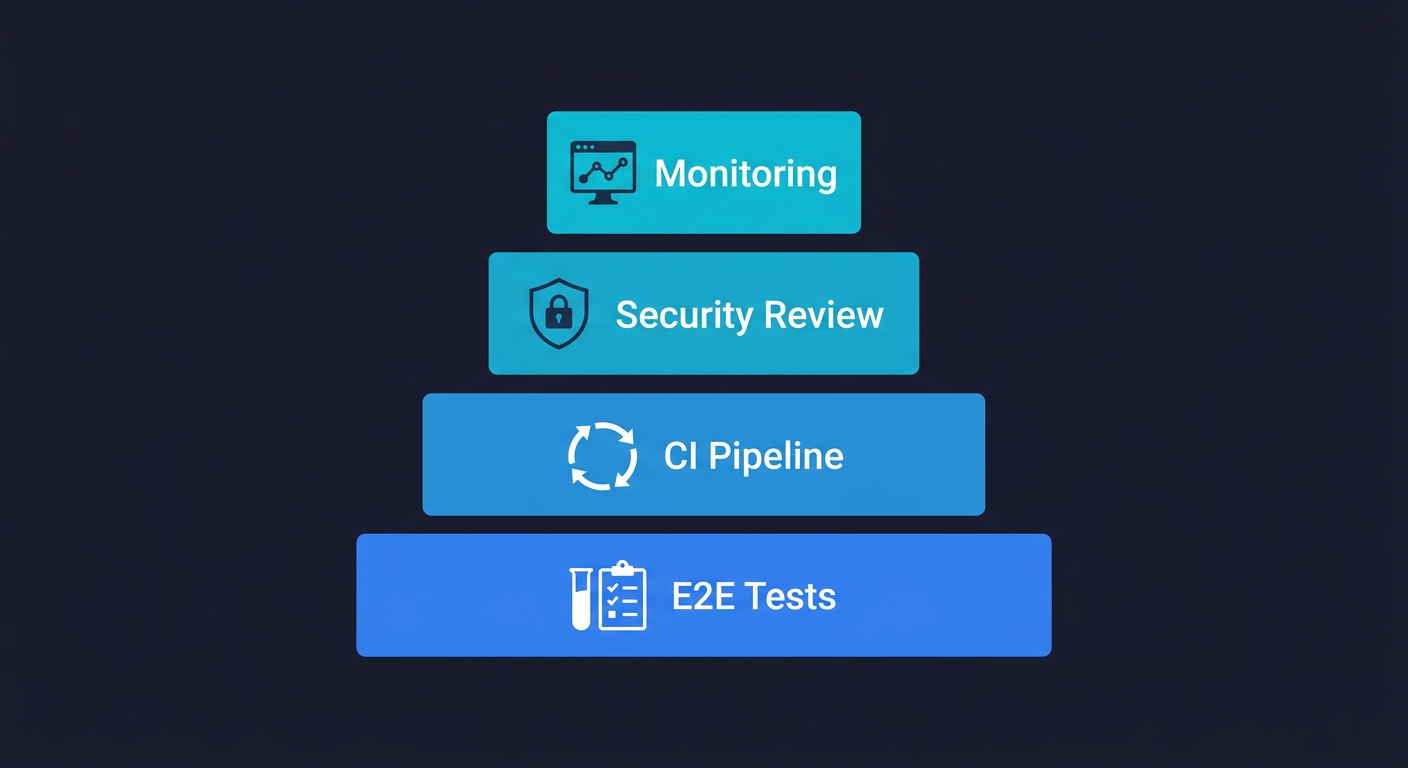

The framework identifies gaps. This is what closing them actually looks like, in order of impact. For enterprise teams evaluating vibe coding reliability at scale, this is the coverage checklist that separates apps that work from apps that are production ready.

E2E tests on your critical flows come first. Not unit tests, not integration tests: E2E. Your checkout, your signup, your core activation path. These catch the behavioral failures that matter most and test the full stack the way a real user does. A broken checkout that sits undetected over a weekend can cost a small team an estimated $8,000 to $25,000 once you account for lost revenue, engineering time, and support load. The specific challenge for vibe-coded apps is that E2E tests written against fast-moving AI-generated code break constantly as the code changes. How to test a vibe-coded app covers the practical approaches for teams without QA engineers.

CI is the multiplier. Tests that run manually are tests that do not run reliably. Every deploy should trigger your critical tests automatically. Not as a hard gate at first, just as a signal that something broke before a user tells you.
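The "signal first, gate later" idea can be sketched as a small wrapper that a deploy pipeline calls. This is an illustration under assumptions: the function name and the `gate` flag are hypothetical, and the wrapper works with whatever test command your project already uses.

```python
import subprocess
import sys
from typing import Sequence


def run_critical_tests(command: Sequence[str], gate: bool = False) -> int:
    """Run the critical test command and report the result.

    With gate=False a failure is reported but the returned exit code is 0,
    so the deploy continues. With gate=True the failing exit code is
    returned so the pipeline can stop.
    """
    result = subprocess.run(command, capture_output=True, text=True)
    if result.returncode == 0:
        print("critical tests passed")
        return 0
    print("critical tests FAILED", file=sys.stderr)
    # Show the tail of the output so the failure is visible in deploy logs.
    print(result.stdout[-2000:], file=sys.stderr)
    return result.returncode if gate else 0
```

A deploy script might call `sys.exit(run_critical_tests(["pytest", "tests/e2e", "-q"]))`, where `tests/e2e` is a placeholder path, and switch to `gate=True` once the suite is stable enough to block releases.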

Security review on your auth and payment code specifically. These are the highest-consequence modules in any app, and they are the modules where AI-generated code has the most consistent gaps. A targeted review of authentication logic, session management, and any code that touches payment instruments is a few hours of an engineer's time against a large asymmetric downside.

Production monitoring fills the gaps tests cannot cover. Tests tell you what you expected to break. Monitoring tells you what you did not expect. Error rates, latency spikes, unexpected status codes on your critical endpoints. This is the signal that catches the bugs your test suite missed.
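In practice a hosted monitoring tool does this for you, but the underlying signal is simple enough to sketch. The class below tracks the server-error rate over the most recent requests to one endpoint and says when it crosses a threshold. The class name, the window size, and the 5% default are assumptions chosen for illustration.

```python
from collections import deque


class ErrorRateMonitor:
    """Sliding-window error rate for a single critical endpoint."""

    def __init__(self, window_size: int = 100, threshold: float = 0.05) -> None:
        # deque(maxlen=...) discards the oldest outcome automatically.
        self._outcomes: deque = deque(maxlen=window_size)
        self._threshold = threshold

    def record(self, status_code: int) -> None:
        """Record one response; 5xx status codes count as errors."""
        self._outcomes.append(status_code >= 500)

    def error_rate(self) -> float:
        if not self._outcomes:
            return 0.0
        return sum(self._outcomes) / len(self._outcomes)

    def should_alert(self) -> bool:
        """True when the windowed error rate exceeds the threshold."""
        return self.error_rate() > self._threshold
```

The same pattern extends to latency or unexpected status codes by changing what `record` counts as a bad outcome.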

This is where Autonoma fits specifically into vibe-coded production apps. The problem with writing E2E tests for AI-generated code is that the tests need to keep up with code that changes fast. Our approach: connect your codebase, and agents read your routes, components, and user flows to generate and maintain tests automatically. When your vibe-coded UI changes, the tests adapt. You get the coverage the framework requires without inheriting a test maintenance burden on top of the code maintenance burden you already have.

The QA function itself is evolving in response to vibe coding. The role of quality engineering is no longer writing scripts against known behavior. It is building the infrastructure that catches unknown behavior, which is exactly the category of bugs that vibe coding produces.

A Note on Regulated Environments

If your app is subject to HIPAA, PCI DSS, SOC 2, or GDPR, the framework above is necessary but not sufficient. These compliance frameworks require auditability: the ability to explain, document, and demonstrate why specific decisions were made in the code. AI-generated code has no auditable reasoning. The model produced output. It cannot explain why it chose one implementation over another.

This does not mean you cannot use vibe coding in regulated environments at all. It means you cannot use it as the sole author of code that needs to be explained to an auditor. Every security-critical module in a regulated app, authentication, authorization, data handling, encryption, needs human review and documented design decisions. The startup-focused breakdown covers the regulated environment decision in more depth.

The legal question of liability for AI-generated code in regulated industries is still developing. In 2025 and 2026, the safest posture is: AI can generate the draft, a qualified engineer reviews and owns it.

Is Vibe Coding Production Ready? The Verdict

Is vibe coding production ready? Yes, for the right applications, with the right coverage stack in place. No, for high-consequence applications without that infrastructure.

The framework gives you a score. The matrix maps your use case. The coverage stack tells you what to build. None of it requires abandoning vibe coding or rewriting your codebase from scratch.

The teams that ship vibe-coded software to production successfully are not the ones who wrote better prompts. They are the ones who built the quality infrastructure that compensates for what AI-generated code does not produce automatically: E2E coverage on critical flows, CI, monitoring, and targeted security review on the high-consequence modules.

That infrastructure is not optional at scale. It is the thing that makes the difference between a vibe-coded app that works and a vibe-coded app that is production ready.

Frequently Asked Questions

Is vibe coding production ready?

It depends on the application. Vibe coding is production ready for internal tools, low-stakes apps, and MVPs with appropriate guardrails. It is not production ready for customer-facing apps handling payments or PII without E2E test coverage, CI, monitoring, and a security review on auth and payment flows. The production-readiness assessment framework in this article gives you a scored evaluation for your specific app.

Why does vibe-coded software fail in production?

AI-generated code optimizes for the happy path. Edge cases in authentication, input validation, error handling, and authorization logic are not in the prompt and typically not in the output. Security research consistently finds that over half of AI-generated code contains vulnerabilities that pass initial review. Combined with the absence of automated testing in most vibe-coded codebases, these gaps stay invisible until a real user hits them.

Can a vibe-coded app handle payments?

With significant guardrails, yes. Payment flows in vibe-coded apps require a targeted security review of the payment integration code, E2E tests that cover both success and failure paths (declined cards, network errors, duplicate charges), and monitoring on transaction error rates. The AI-generated payment code is likely correct for the common case and wrong for specific error responses from the payment processor. Audit those edge cases specifically.

What is the minimum coverage a vibe-coded app needs before shipping?

E2E tests on your three most critical user flows (signup, core activation, checkout if applicable) running in CI on every deploy. That is the floor. Above the floor: error monitoring in production, a security review of authentication and authorization code, and documented incident response for when tests fail. Tools like Autonoma connect to your codebase and use agents to generate and maintain those E2E tests automatically, which is particularly useful for fast-moving vibe-coded apps where manually written tests break constantly.

Can vibe coding be used in regulated industries?

Not as the sole author of compliance-critical code. Regulated environments require auditability: the ability to explain and document why specific security decisions were made. AI-generated code has no auditable reasoning. Every security-critical module in a regulated app needs human review and documented design decisions. Vibe coding can generate the initial implementation, but a qualified engineer must review, own, and document it before it ships in a regulated environment.

What tools help make a vibe-coded app production ready?

For E2E coverage on fast-moving codebases, [Autonoma](https://getautonoma.com) connects to your codebase, and its agents generate and maintain tests automatically as code changes. For production monitoring, Sentry covers error tracking and Datadog or similar covers infrastructure metrics. For security review, tools like Snyk or Semgrep catch common vulnerability patterns in AI-generated code. The full testing approach for non-engineers is covered in the guide on how to test a vibe-coded app.