Why Your Best Engineers Should Write E2E Tests (And Why They Won't)

E2E testing (end-to-end testing) verifies that your application works correctly from the user's perspective, simulating real flows like signup, checkout, or core activation across the full stack. Shift left testing means moving those checks earlier in the development cycle, so bugs surface in a pull request rather than in production. The friction isn't the concept. It's the implementation: writing and maintaining test scripts is tedious, time-consuming work that smart engineers rationally deprioritize. The solution isn't more discipline. It's removing the friction entirely.

Every CTO we talk to has some version of the same story. They know E2E testing matters. They've seen what happens when a regression slips into production on a Friday. They've done the math on incident response cost versus prevention cost. They've had the all-hands where they committed to better test coverage.

Six months later, the test suite is half-stale, someone is quietly bypassing it, and the engineers who were supposed to own it are apologetic but clearly relieved to be back on feature work.

This isn't a failure of discipline. It's a rational response to a broken incentive structure. And until you understand what actually makes engineers avoid E2E tests, you'll keep having the same conversation.

The Honest Reason Engineers Skip E2E Tests

Ask an engineer why they didn't write tests for their last PR and they'll give you a time answer. "Didn't have time." "Sprint was tight." "I'll add them next sprint." These are all true and all beside the point.

The real answer is that writing E2E tests in Playwright or Cypress is genuinely unpleasant work. Not because engineers are lazy. Because the work itself has a terrible effort-to-value ratio compared to everything else on their plate.

Think about what writing a test actually involves. You pick a flow. You write selectors for elements that may or may not have stable IDs. You sequence the steps. You account for async behavior and loading states. You handle the test database state. You run it, find it flaky, debug it, make it less flaky. Then you merge it. Three weeks later a designer renames a class and your test breaks, and now someone spends 45 minutes tracking down a selector that no longer exists.

Your best engineers see all of this clearly. They're not avoiding E2E testing because they don't value quality. They're avoiding it because they've done the math: the engineering hours required to write and maintain test scripts are hours not spent shipping the features that move the product forward. Stripe's 2018 Developer Coefficient report found that developers spend over 40% of their time on maintenance and technical debt, not new features. For a five-person team in a competitive market, adding test maintenance on top of that makes the trade-off live and painful every sprint.

Why Your Best Engineers Are the Worst Test Writers

Here is the counterintuitive part: the engineers most qualified to write good E2E tests are the ones most likely to resist writing them.

Junior engineers will write tests. They'll follow the process, document the selectors, commit the scripts. The tests will be brittle and miss the important cases, but they'll exist.

Senior engineers know too much. They know which flows are actually critical and which ones rarely break. They know which elements are stable and which ones change every sprint. They know the test suite from six months ago that everyone quietly stopped running because the maintenance cost was too high. They've seen this movie: the brittleness, the selector rot, the three-hour Friday debugging sessions for a test failure that turned out to be a flaky assertion.

Senior engineers write fewer tests not because they care less but because they understand the full cost of writing them badly, which means they set a higher bar for when it's worth doing, which means they do it less often.

This is rational. It's also a significant organizational risk. The engineers with the best judgment about what should be tested are opting out of the process.

What Shift Left Testing Actually Requires

Shift left testing is the right idea. Move testing earlier in the development cycle. Find bugs in a pull request instead of in production. The cost differential is real: according to figures widely attributed to the IBM Systems Sciences Institute, a bug caught in requirements costs 1x to fix, but the same bug caught in production costs 15x to 100x more. In day-to-day terms, a bug caught in a PR costs an hour. The same bug caught in production costs a day or more, plus incident response, plus customer impact.

You may have heard the advice to minimize E2E tests entirely. Google's influential "Just Say No to More End-to-End Tests" post advocates a 70/20/10 split: 70% unit tests, 20% integration, 10% E2E. That framework makes sense when you have a large QA organization and hundreds of engineers writing unit tests every day. For a startup with 5 to 15 engineers and no QA team, the pyramid inverts in practice. Nobody is writing enough unit tests to catch integration-level bugs, and the 10% E2E coverage the pyramid recommends is the only thing standing between your users and a broken checkout flow.

The challenge is that most implementations of shift left testing treat it as a discipline problem. "Engineers need to write tests before merging." "QA needs to review earlier in the sprint." These are policy solutions to a structural problem.

The structural problem is that writing tests by hand creates exactly the friction that makes shift left testing fail in practice. You can mandate that engineers write E2E tests before merging. What you can't mandate is that they write good ones under sprint pressure. Under pressure, you get tests that pass because they're not testing anything meaningful. You get tests written around the implementation rather than the intended behavior. You get tests that will be a maintenance liability within two sprints.
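
The gap between a test that passes without testing anything and one that checks intended behavior can be shown with a small sketch. The cart type, the `orderTotalCents` function, and its quantity bug are all hypothetical, invented for illustration. It is a unit-sized analogy rather than a real E2E test, but the same contrast applies to E2E assertions, where checking that a confirmation page rendered is weaker than checking what the order actually contains.

```typescript
// Hypothetical cart model, invented for this illustration.
type CartItem = { name: string; priceCents: number; quantity: number };

// Hypothetical implementation with a deliberate bug: it ignores quantity.
function orderTotalCents(items: CartItem[]): number {
  return items.reduce((sum, item) => sum + item.priceCents, 0);
}

const cart: CartItem[] = [
  { name: "T-shirt", priceCents: 1500, quantity: 2 },
  { name: "Sticker", priceCents: 500, quantity: 1 },
];

// Weak check: only confirms that *something* came back.
// It passes even though the total is wrong.
const weakCheckPasses = orderTotalCents(cart) > 0;

// Behavior check: states what the user should be charged (2 x 1500 + 1 x 500).
const expectedTotalCents = 2 * 1500 + 1 * 500;
const behaviorCheckPasses = orderTotalCents(cart) === expectedTotalCents;

console.log({ weakCheckPasses, behaviorCheckPasses });
// prints { weakCheckPasses: true, behaviorCheckPasses: false }
```

The weak check is the kind that gets written under sprint pressure: it turns the suite green while the bug ships. The behavior check fails, which is exactly what you want from it.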

Shift left works when the feedback loop is fast, low-friction, and structurally integrated into how engineers work. Not as an extra step they're required to complete, but as something that happens automatically as part of the normal commit-review-merge cycle.

For most small teams, that means the question isn't "how do we get engineers to write more tests?" It's "how do we get test coverage without requiring engineers to write and maintain scripts?"

Why Test Maintenance Is Where It All Falls Apart

The creation cost of E2E tests is high. The maintenance cost is what kills most testing practices.

A test script is a snapshot of how your application worked on the day it was written. Your application is not a snapshot. It evolves every sprint. New components replace old ones. Flows get redesigned. The user journey changes. Every one of those changes potentially breaks tests that were working fine.

For a lean team shipping weekly, the maintenance cost accumulates fast. Test maintenance can consume a significant share of total engineering time by the second year (we've written about the compounding cost of test maintenance in detail). Not testing, not writing new tests: fixing old tests that broke because the product improved. At a blended engineering rate of $150/hour, that's $60,000 to $120,000 per year for a 10-person team spent maintaining tests, not writing them.
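
To make the arithmetic behind that range explicit, here is a back-of-the-envelope sketch. Only the $150/hour rate, the 10-person team, and the $60,000 to $120,000 range come from the paragraph above; the `impliedHours` helper and the per-engineer breakdown are assumptions added for illustration.

```typescript
// Figures taken from the paragraph above.
const BLENDED_RATE_USD_PER_HOUR = 150;
const TEAM_SIZE = 10;

// Hypothetical helper: converts an annual dollar cost into the hours it implies.
function impliedHours(annualCostUsd: number, rateUsdPerHour: number): number {
  return annualCostUsd / rateUsdPerHour;
}

const lowHours = impliedHours(60000, BLENDED_RATE_USD_PER_HOUR);   // 400
const highHours = impliedHours(120000, BLENDED_RATE_USD_PER_HOUR); // 800

// Spread evenly across the team, per engineer per year.
const lowPerEngineer = lowHours / TEAM_SIZE;   // 40
const highPerEngineer = highHours / TEAM_SIZE; // 80

console.log(`${lowHours}-${highHours} team hours per year`);
console.log(`${lowPerEngineer}-${highPerEngineer} hours per engineer per year`);
```

Read that way, the quoted range corresponds to roughly one to two working weeks per engineer per year spent repairing tests. Substituting your own tracked hours and rate gives a baseline for your team.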

The engineers maintaining those tests are aware of the irony. They're spending engineering time on work created by engineering progress. Tests that exist to accelerate development are slowing development down.

This is the moment when a reasonable engineering lead makes the call to deprioritize the test suite. And honestly, it's the right call given the options available. The mistake is treating it as a permanent trade-off rather than a solvable problem.

How AI-Generated Tests Change This Equation

The friction that makes E2E testing unsustainable for lean teams comes from two sources: writing the tests in the first place, and maintaining them as the product evolves. Both of those are now solvable with AI-powered testing.

We built Autonoma specifically because we kept seeing this pattern: small teams with good engineers who understood why testing mattered but couldn't sustain it, because the implementation required too much ongoing human labor.

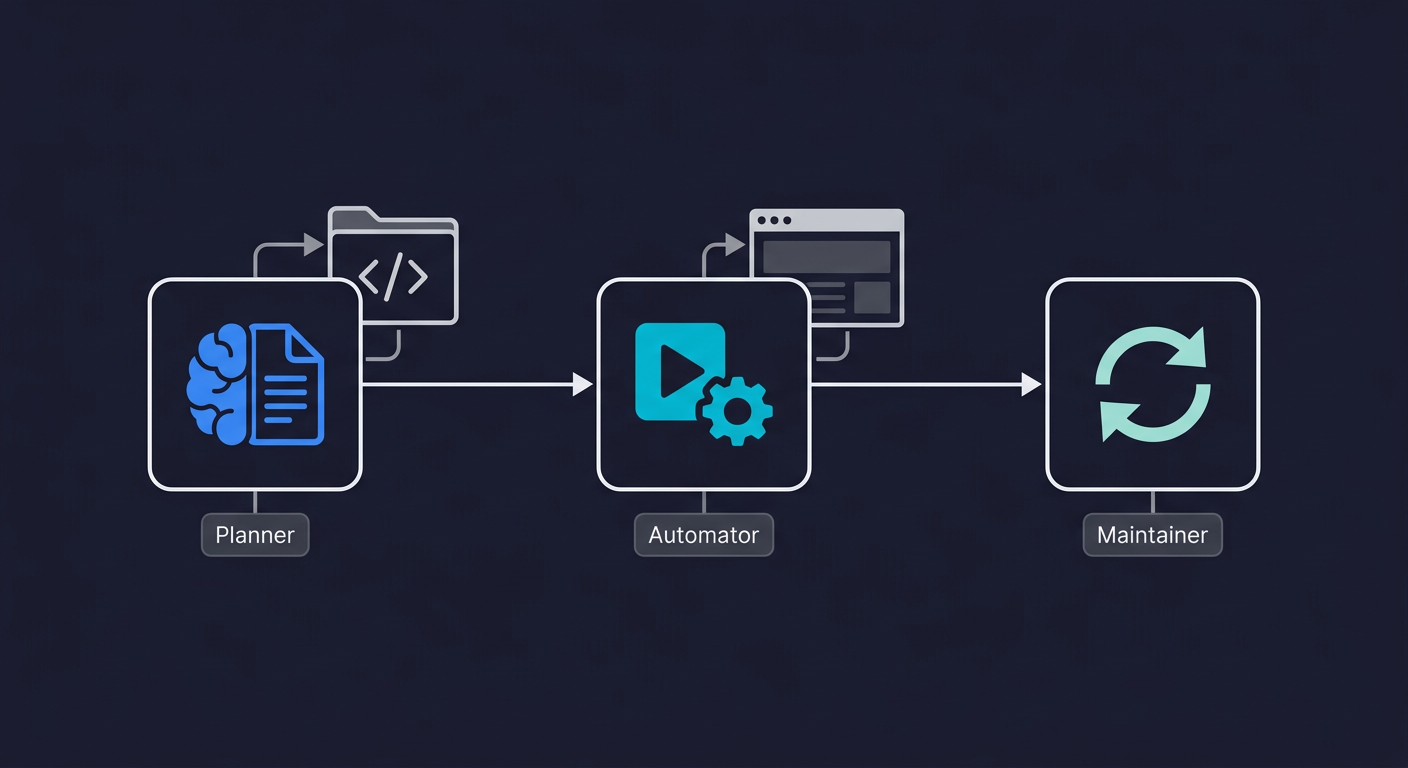

The approach is different from scripted test automation. You connect your codebase, and agents generate tests from the code itself, execute them against your running application, and update them automatically when the application evolves. For a deeper look at the architecture, see "What Is Agentic Testing?"

The critical difference from traditional scripted testing is where the test definition lives. Scripted tests encode what the UI looked like on the day they were written. Tests generated from your codebase are derived from what the code actually does, not from a surface-level recording of UI interactions. When your application changes, the Maintainer agent re-reads the codebase and updates the tests accordingly. The tests don't go stale because they're tied to the code, not to brittle selectors.

To see what that difference looks like in practice, compare a typical scripted checkout test with what an AI agent generates from the same codebase:

```typescript
// Traditional Playwright test: hand-written and manually maintained
import { test, expect } from '@playwright/test';

test('user can complete checkout', async ({ page }) => {
  await page.goto('/products');
  await page.click('[data-testid="product-card-1"] .add-to-cart');
  await page.click('[data-testid="cart-icon"]');
  await page.waitForSelector('.cart-drawer');
  await page.click('[data-testid="checkout-btn"]');
  await page.fill('#email', 'test@example.com');
  await page.fill('#card-number', '4242424242424242');
  await page.fill('#card-expiry', '12/28');
  await page.fill('#card-cvc', '123');
  await page.click('[data-testid="pay-now-btn"]');
  await page.waitForURL('/order-confirmation');
  await expect(page.locator('.order-success')).toBeVisible();
});

// When .cart-drawer becomes .cart-sidebar or
// #card-number moves inside a Stripe iframe: test breaks.
// Someone spends 45 minutes finding and fixing the selector.
```

With AI-generated testing, the agent reads your route definitions, component tree, and API schemas, then generates and executes the equivalent flow without brittle selectors. When your team renames a component or restructures a page, the Maintainer agent re-reads the updated code and regenerates the affected test steps. No one opens a test file.

| | Scripted E2E Tests | AI-Generated E2E Tests |
|---|---|---|
| Initial creation time | 3-4 hours per flow | Minutes (auto-generated from codebase) |
| Maintenance cost per sprint | 2-5 hours fixing broken selectors | Near zero (agent adapts to changes) |
| Coverage breadth | Limited by engineer bandwidth | Scales with codebase, not headcount |
| Resilience to UI changes | Low (selector-dependent) | High (intent-based, code-aware) |
| Requires coding expertise | Yes (Playwright/Cypress fluency) | No scripting required |
| CI/CD integration | Manual pipeline setup | Built-in, runs on every PR |

For your best engineers, this changes the calculation entirely. They no longer have to choose between writing features and maintaining tests. The coverage expands as the product grows, automatically.

Keeping Ownership Without the Test Automation Tax

There's a version of this conversation where the argument for AI-generated tests sounds like "take engineers out of the loop entirely." That's not the right framing, and it's not what happens in practice.

Engineers still need to understand what's being tested. They still need to review test results and triage failures. They still need to think about which flows are critical and what the test coverage actually means for their release confidence. What changes is the labor: writing selectors, sequencing steps, updating scripts after refactors, debugging flaky assertions.

The ownership stays with the engineering team. The grunt work moves to the agents.

This is actually the outcome your best engineers want. They care about quality. They want confidence in their releases. They're not opting out of testing because they think it's unimportant. They're opting out of a specific implementation that doesn't respect their time.

When the friction drops, the behavior changes. Engineers who never looked at the test suite start reviewing test coverage as part of PR review. CTOs who had quietly deprioritized testing start treating it as a standard part of the development workflow, because it finally feels like it costs less than it protects.

What to Do Monday Morning

If this article resonated, here's how to start changing the dynamic on your team without a multi-sprint initiative:

Audit your critical flows. List the 3 to 5 user journeys that absolutely cannot break: signup, core activation, checkout, and any API your customers depend on. If you don't have E2E coverage on these today, everything else is a distraction.

Measure your current testing cost. Track how many engineering hours per sprint go to writing new tests, fixing broken ones, and debugging flaky failures. Most teams are surprised by the number. This is the baseline you're trying to improve.

Evaluate AI-generated testing. Tools like Autonoma can generate and maintain E2E coverage from your codebase without scripts. Run a pilot on one critical flow and compare: how long did it take to get coverage versus writing it by hand? How does it hold up after a sprint of product changes?

Make tests a merge gate, not a suggestion. Once you trust the coverage, wire it into CI so tests run on every pull request. Shift left testing only works when the feedback loop is mandatory and fast.

Review coverage monthly, not daily. The goal is a testing practice that runs in the background, not one that requires constant attention. If your team is spending more than an hour per sprint thinking about tests, the friction hasn't been removed yet.
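
For teams that keep a hand-written Playwright suite, the merge-gate step might start from a configuration like the sketch below. The option names (`testDir`, `grep`, `forbidOnly`, `retries`, `reporter`, `use.baseURL`, `use.trace`) are standard Playwright Test config fields, but the `buildConfig` helper, the `./e2e` directory, the `@critical` tag, and the localhost URL are assumptions for illustration. Blocking the merge itself is done by your CI provider's required-checks setting, not by this file.

```typescript
// Hypothetical helper that returns a plain Playwright Test config object.
// In playwright.config.ts you would end with:
//   export default buildConfig(Boolean(process.env.CI));
function buildConfig(isCI: boolean) {
  return {
    testDir: "./e2e",      // assumed location of the test files
    grep: /@critical/,     // run only tests whose title carries the (assumed) @critical tag
    forbidOnly: isCI,      // fail the CI run if a stray test.only was committed
    retries: isCI ? 2 : 0, // retry in CI to absorb transient flakiness
    reporter: isCI ? "github" : "list",
    use: {
      baseURL: "http://localhost:3000", // assumed address of the app under test
      trace: "on-first-retry",          // record a trace only when a test had to be retried
    },
  };
}

const ciConfig = buildConfig(true);
const localConfig = buildConfig(false);
console.log(ciConfig.retries, localConfig.retries); // prints 2 0
```

Filtering on a tag keeps the gated suite limited to the 3 to 5 critical flows from the first step, so the check stays fast enough to run on every pull request.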

The Real Shift Left Is Removing the Friction

Shift left testing as a concept is right. Move quality checks earlier. Surface bugs in development, not production. The implementation most teams choose, handwritten scripts that require ongoing maintenance, is what causes shift left to fail in practice.

The teams that have made shift left work sustainably are the ones that solved the friction problem first. Not by demanding more discipline from engineers, but by changing what the work actually requires.

If your best engineers are avoiding E2E tests, they're not wrong. They're responding rationally to a broken trade-off. Fix the trade-off: remove the maintenance burden, keep the ownership, and let the agents do the work that was always going to be tedious. Your engineers will start caring about test coverage again, not because you mandated it, but because it finally stopped costing them more than it was worth.

Frequently Asked Questions

What is E2E testing, and why does it matter for small teams?

E2E testing (end-to-end testing) verifies that your application works correctly from the user's perspective across the full stack, simulating real flows like signup, checkout, or core activation. For small teams, it matters because production bugs are the most expensive kind: they require incident response, root cause analysis, a hotfix deploy, and often customer communication. E2E tests running in CI catch these regressions before they reach users, compressing what would be a day-long incident into a 10-minute PR fix.

Why do senior engineers avoid writing E2E tests?

Senior engineers understand the full cost of writing tests badly. They've seen brittle test suites from past jobs: selector rot, flaky assertions, three-hour debugging sessions for tests that weren't testing anything real. They set a higher bar for when test-writing is worth doing, which means they do it less often under sprint pressure. This is rational self-preservation, not laziness. The solution is reducing the cost of writing good tests, not adding more process pressure.

What is shift left testing, and how does it differ from traditional QA?

Shift left testing moves quality checks earlier in the development cycle, so bugs are found in pull requests rather than in production or staging. Traditional QA happens at the end: a QA engineer reviews the build before release. Shift left testing happens continuously, with automated checks running on every commit. The cost difference is significant: a bug found in a PR takes an hour to fix, while the same bug in production can take a full day plus incident overhead. For teams without dedicated QA, shift left is achieved through automated E2E tests running in CI on every PR.

Why does E2E test maintenance become so expensive?

Test scripts are snapshots of how your application worked when they were written. Every product change potentially breaks those snapshots. A designer renames a CSS class, a component gets refactored, a flow is redesigned, and the tests that were working fine start failing. For a team shipping weekly, this maintenance accumulates fast. We've seen teams where test maintenance consumed 15-20% of engineering time by year two. At that point, a reasonable engineering lead deprioritizes the suite, and the testing practice collapses.

What are the best tools for E2E testing without a QA team?

The best tools for E2E testing without a QA team are those that minimize maintenance burden. [Autonoma](https://getautonoma.com) generates and maintains tests automatically from your codebase, requiring no scripts and no ongoing maintenance. Playwright and Cypress are strong choices if you have engineers willing to write and maintain scripts. The key is getting tests running in CI as a merge gate on your 3-5 most critical user flows, and then keeping that maintenance cost low enough that it doesn't get deprioritized under sprint pressure.

What is AI-generated E2E testing?

AI-generated E2E testing uses agents that read your codebase (routes, components, user flows) and generate test cases from the code itself rather than from manual recordings or natural language descriptions. The agents execute those tests and automatically update them when the application evolves. The key difference from traditional automation is that tests are tied to the codebase, not to brittle UI selectors, so they don't go stale when the product changes.

Do engineers still own testing when the tests are AI-generated?

Yes. AI-generated tests change the labor involved, not the ownership. Engineers still review test results, triage failures, and understand what's covered. What changes is the grunt work: writing selectors, sequencing steps, updating scripts after refactors. Ownership staying with the engineering team is what makes test coverage meaningful. The goal is removing the maintenance tax that causes engineers to check out of the testing process, not removing engineers from the loop.

How many E2E tests should a small team start with?

Start with your 3-5 most critical user flows: signup and login, your core activation flow, checkout or billing if applicable, and any API endpoint external customers depend on. That typically means 10-30 tests. A small suite that runs reliably and gates merges is worth more than a large suite with frequent false positives. Expand coverage only after your initial suite has been stable and trusted for at least a month.