Vibe coding limitations fall into four categories: code generation quality (edge cases, complex algorithms, domain logic), codebase understanding (large repos, cross-file dependencies, legacy code), tooling gaps (testing, debugging, performance optimization), and maintenance friction (refactoring AI output, debugging AI logic, scaling AI-built systems). This article breaks down each limitation, explains why it exists in the current AI architecture, and gives the practical workaround that actually works in production teams. This is not an argument against vibe coding. It is a map of where the guardrails need to go.

Four distinct failure categories. Dozens of production teams hitting the same walls. The pattern is consistent enough that it is no longer a matter of model quality or prompt skill. These AI coding limitations are structural boundaries baked into how the tools work, and understanding those boundaries is now a baseline skill for any team using them seriously.

This post maps all four categories of vibe coding limitations, explains the architectural reason each one exists, and gives you the workaround that production teams are actually using. Not the workaround that works in a demo. The one that holds up at 3am when something breaks. If you are evaluating whether vibe-coded apps are production ready, this is the prerequisite read.

| Limitation Category | Core Problem | Root Cause | Production Workaround |
|---|---|---|---|
| Code Generation | Misses edge cases, domain rules, complex algorithms, security patterns | Statistical token prediction favors happy paths | Explicit edge case prompts; domain expert review |
| Codebase Understanding | Cannot reason beyond context window | Transformer architecture limits | Strong indexing tools; manual file references; full test suite |
| Tooling | Cannot verify its own output | Same model generates code and tests | External verification (separate agent testing) |
| Maintenance | Inconsistent patterns; no architectural memory | Stateless generation across prompts | Convention files; architecture-first design |

Code Generation Limitations

Edge Cases and Boundary Conditions

AI IDEs are trained on code that mostly works. The happy path is well-represented in training data. The edge cases are not. When you ask Cursor or Windsurf to generate a payment flow, it will produce code that processes a valid card correctly almost every time. What it will miss: the zero-amount transaction edge case, the currency mismatch between frontend and backend, the timeout scenario where the charge succeeds but the response never returns.

This is not a random failure. It is structural. An analysis of 470 open-source GitHub pull requests found that AI-co-authored code contains 1.7x more issues than human-written code, with the gap widening specifically on edge cases and boundary conditions. The model learns to produce code that passes the obvious checks because that is what most of the code in its training set does. Edge cases, by definition, are underrepresented.

Why it exists: Language models generate the statistically likely next token. A developer writing a payment handler in training data was probably not writing down every edge case they were accounting for mentally. The model sees the output, not the reasoning. So it learns to produce code that looks like production code without having learned all the cases that production code needs to handle.

The workaround that actually works: Be explicit about edges in your prompt. Do not ask "write a payment handler." Ask "write a payment handler that handles network timeouts, zero-amount transactions, duplicate charge attempts, and currency mismatches, returning distinct error codes for each." Specificity forces coverage. You can also ask the model directly: "What edge cases in this function am I not handling?" It is better at auditing than generating from scratch because auditing is closer to the pattern-matching task it was trained on.
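To make the explicit prompt concrete, here is a minimal sketch of the shape of handler it is asking for. Everything in it is an assumption made for illustration: `PaymentHandler`, `ChargeError`, the `gateway` callable, and the in-memory idempotency set are hypothetical and do not correspond to any real payment SDK.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum
from typing import Optional


class ChargeError(Enum):
    """One distinct error code per edge case named in the prompt."""
    ZERO_OR_NEGATIVE_AMOUNT = "zero_or_negative_amount"
    CURRENCY_MISMATCH = "currency_mismatch"
    DUPLICATE_CHARGE = "duplicate_charge"
    GATEWAY_TIMEOUT = "gateway_timeout"


@dataclass
class ChargeResult:
    ok: bool
    error: Optional[ChargeError] = None


class PaymentHandler:
    def __init__(self, gateway, account_currency: str):
        # Hypothetical gateway: a callable (amount, currency) that may raise TimeoutError.
        self._gateway = gateway
        self._account_currency = account_currency
        # In-memory only for this sketch; a real system would persist these keys.
        self._seen_keys = set()

    def charge(self, amount: Decimal, currency: str, idempotency_key: str) -> ChargeResult:
        if amount <= 0:
            return ChargeResult(False, ChargeError.ZERO_OR_NEGATIVE_AMOUNT)
        if currency != self._account_currency:
            return ChargeResult(False, ChargeError.CURRENCY_MISMATCH)
        if idempotency_key in self._seen_keys:
            return ChargeResult(False, ChargeError.DUPLICATE_CHARGE)
        # Record the key before calling out: if the charge succeeds but the
        # response never returns, a blind retry is rejected as a duplicate.
        self._seen_keys.add(idempotency_key)
        try:
            self._gateway(amount, currency)
        except TimeoutError:
            return ChargeResult(False, ChargeError.GATEWAY_TIMEOUT)
        return ChargeResult(True)
```

The point is not this particular design. Each edge case named in the prompt becomes a visible branch with its own error code, which makes a missing case easy to spot in review.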

Complex Algorithmic Problems

Ask Claude Code to scaffold a REST API. It does it in minutes. Ask it to implement a custom scheduling algorithm with specific constraints on resource allocation and priority ordering. It will produce something that looks correct and usually is not.

The distinction is familiar pattern versus novel composition. CRUD operations, authentication flows, database queries, component rendering logic: these exist in millions of forms in training data. A bin-packing variant with three custom constraints does not. The model is not deriving the algorithm from first principles. It is pattern-matching against the closest thing it has seen, and the closest thing may be subtly wrong.

Why it exists: Generalization in neural networks is imperfect. The model can interpolate well within its training distribution. Novel algorithmic problems that fall outside that distribution produce outputs that are locally coherent but globally incorrect.

The workaround that actually works: Provide pseudocode or a formal description of the algorithm before asking for implementation. Break the problem into verifiable steps and ask the model to implement and test each step before moving to the next. Use the AI as a syntax accelerator, not as the algorithm designer.
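One way to make a step verifiable is to pair the implementation with an invariant check that knows nothing about how the implementation works. The sketch below uses plain first-fit decreasing bin packing as a stand-in for a custom algorithm. The function names are hypothetical, and first-fit decreasing is a well-known heuristic, not an optimal solver.

```python
def first_fit_decreasing(sizes, capacity):
    """Place each item, largest first, into the first bin with room for it."""
    bins = []
    for size in sorted(sizes, reverse=True):
        if size > capacity:
            raise ValueError("item larger than bin capacity")
        for b in bins:
            if sum(b) + size <= capacity:
                b.append(size)
                break
        else:
            # No existing bin had room, so open a new one.
            bins.append([size])
    return bins


def check_packing(sizes, capacity, bins):
    """Validate a packing from the problem statement alone, not from the code."""
    placed = sorted(size for b in bins for size in b)
    if placed != sorted(sizes):
        raise ValueError("every item must be placed exactly once")
    if any(sum(b) > capacity for b in bins):
        raise ValueError("a bin exceeds capacity")
    return True


bins = first_fit_decreasing([4, 8, 1, 4, 2, 1], capacity=10)
check_packing([4, 8, 1, 4, 2, 1], 10, bins)
```

The checker only proves the result is a valid packing, not that it is a good one. For a custom variant, each added constraint would get its own check written from the formal description before the AI implements that step.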

Domain-Specific Logic

Regulatory compliance logic in fintech. Clinical data validation rules in healthtech. Actuarial calculations in insurance. The AI will write syntactically valid code. Whether it correctly encodes the domain rule is a different question.

The model has seen some domain-specific code, but domain rules change, vary by jurisdiction, and often exist primarily in regulatory documents rather than public codebases. An AI IDE confidently generating a KYC validation function may be generating something that would fail a compliance audit. It will not flag this uncertainty unprompted.

Why it exists: Domain knowledge that lives in PDFs, legal documents, and internal policy manuals is absent from the training distribution. The model fills the gap with plausible-sounding but potentially incorrect logic.

The workaround that actually works: Treat all domain-critical logic as requiring expert review regardless of how confident the AI output appears. Paste the relevant regulatory text or business rule directly into the prompt context. Have a domain expert sign off on the generated logic before it touches production. For the patterns that matter here, see the vibe coding failures that actually happened in production for several real examples of this exact failure mode.

Security Blind Spots

AI-generated code compiles and runs. Whether it is secure is a separate question that the model rarely surfaces on its own. Research consistently shows that 40 to 60 percent of AI-generated code contains at least one security vulnerability. The most common: SQL injection through string concatenation instead of parameterized queries, hardcoded API keys and credentials, missing input validation on user-facing endpoints, and cross-site scripting (XSS) vulnerabilities in rendered output.

The pattern is predictable. The model produces code that handles the functional requirement. The secure alternative (parameterized queries, environment variables, sanitized inputs) exists in training data, but the insecure version is statistically more common in the code the model learned from. When both options satisfy the prompt, the model has no strong signal to prefer the secure one.

Why it exists: Secure coding patterns are a subset of functional coding patterns. The model optimizes for "code that works," not "code that is safe." Security constraints are implicit. Unless the prompt specifies them, the model treats them as optional. This is compounded by the fact that AI models can hallucinate package names that do not exist, and attackers have begun registering malicious packages under those hallucinated names.

The workaround that actually works: Never trust AI output for authentication, payment processing, or user input handling without a dedicated security review. Add static analysis security testing (SAST) to your CI/CD pipeline so every AI-generated commit gets scanned automatically. Be explicit in prompts: "use parameterized queries, not string concatenation" and "store credentials in environment variables, not in code." For a deeper look at the vibe coding problems that scanners miss entirely, the security gaps in vibe-coded applications covers the logic-level vulnerabilities that static analysis cannot catch.
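The difference between string concatenation and parameterized queries is easy to see in a small sketch. This one uses Python's standard-library `sqlite3` module with an in-memory database. The `users` table and both function names are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",)],
)


def find_user_unsafe(conn, email):
    # Vulnerable: user input is spliced directly into the SQL text.
    query = "SELECT id, email FROM users WHERE email = '" + email + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, email):
    # Parameterized: the driver passes the value separately from the SQL text.
    return conn.execute(
        "SELECT id, email FROM users WHERE email = ?", (email,)
    ).fetchall()


payload = "' OR '1'='1"
leaked = find_user_unsafe(conn, payload)   # matches every row in the table
blocked = find_user_safe(conn, payload)    # matches nothing
```

Both functions satisfy a prompt like "look up a user by email," which is exactly why the constraint has to be stated explicitly.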

Codebase Understanding Limitations

Large Codebases and Context Windows

AI IDEs operate within context windows. Cursor's context window is large, but a 200,000-line codebase is larger. When the model cannot see the entire codebase simultaneously, it makes assumptions. Some of those assumptions are wrong.

You ask the AI to add a new API endpoint. It generates the endpoint correctly. It does not know that a middleware function registered 47 files away transforms all request bodies before they reach route handlers. The new endpoint skips that transformation. The bug is invisible until something downstream fails.

Why it exists: Context window constraints are a hard architectural limit of current transformer models. The model can only reason about what it can see. What it cannot see, it guesses or ignores.

The workaround that actually works: Use AI IDEs with the best indexing and retrieval capabilities (Cursor's codebase indexing is meaningfully better than raw context window pasting). Provide explicit file references when generating code that touches shared infrastructure. For changes that affect cross-cutting concerns like middleware, auth, or shared utilities, manually point the AI to every file the change touches. Do not assume it found them on its own.

Cross-File Dependencies

This is related to context limits, but distinct. Even when files are within context range, implicit dependencies between modules are often missed. For example, a TypeScript type defined in one file and used in five others may be changed by the AI in a way that technically compiles but breaks runtime behavior downstream.

This shows up constantly in refactoring tasks. You ask the AI to rename a function. It finds the call sites it can see. The call site in the test file three directories away is not in the context. The renamed function passes its own tests. The test file starts failing.

Why it exists: Static analysis of dependencies requires understanding the entire dependency graph. Language models approximate this through pattern matching, not through actual graph traversal. They miss what they cannot see.

The workaround that actually works: Run your full test suite after every AI-generated change before you accept it into the branch. Do not use the AI's confidence in its own output as a substitute for verification. For refactors specifically, use your IDE's built-in refactoring tools (which do traverse the actual dependency graph) before asking the AI to do anything on top of the change.

Legacy Code and Undocumented Systems

The AI is excellent at greenfield. It struggles with brownfield. Legacy codebases often contain logic that was written under constraints that no longer exist, with dependencies on systems that are no longer documented, implementing behavior that is not reflected in the code's naming or structure.

Ask an AI to extend a legacy billing system and it will produce code that handles the cases it can infer from the existing code. It cannot infer the cases that exist because of a business decision made seven years ago by someone who is no longer at the company. The TCO framework for vibe coding covers how these invisible constraints compound into real cost.

Why it exists: The model cannot recover context that does not exist in the codebase. Institutional knowledge lives in engineers' heads. When that knowledge is not encoded in comments, documentation, or test cases, the AI has no way to access it.

The workaround that actually works: Before touching legacy code with an AI, spend time encoding the relevant context: comments explaining why unusual code exists, documentation of the dependencies, test cases that cover the edge cases the code silently handles. This is work that pays dividends beyond AI assistance, but it is particularly important when you are about to give a context-limited model the steering wheel.
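As a rough sketch of what "encoding the context" can look like, the example below pairs a comment that records why an odd branch exists with tests that pin the behavior. The billing rule, the tier code, and the function name are all invented for illustration and are not taken from any real system.

```python
HANDLING_FEE_CENTS = 250


def legacy_invoice_total(subtotal_cents: int, customer_tier: str) -> int:
    """Hypothetical legacy billing function, invented for this example."""
    # WHY (hypothetical): tier "G" accounts predate the handling fee and are
    # exempt from it. Nothing in the naming reveals this, so the reason is
    # written down here and the behavior is pinned by the tests below.
    if customer_tier == "G":
        return subtotal_cents
    return subtotal_cents + HANDLING_FEE_CENTS


def test_grandfathered_tier_pays_no_handling_fee():
    assert legacy_invoice_total(10_000, "G") == 10_000


def test_standard_tier_pays_handling_fee():
    assert legacy_invoice_total(10_000, "S") == 10_250
```

With the comment and tests in place, the constraint is something the AI can read in context, and a generated change that drops the exemption fails a test instead of shipping.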

Tooling Limitations

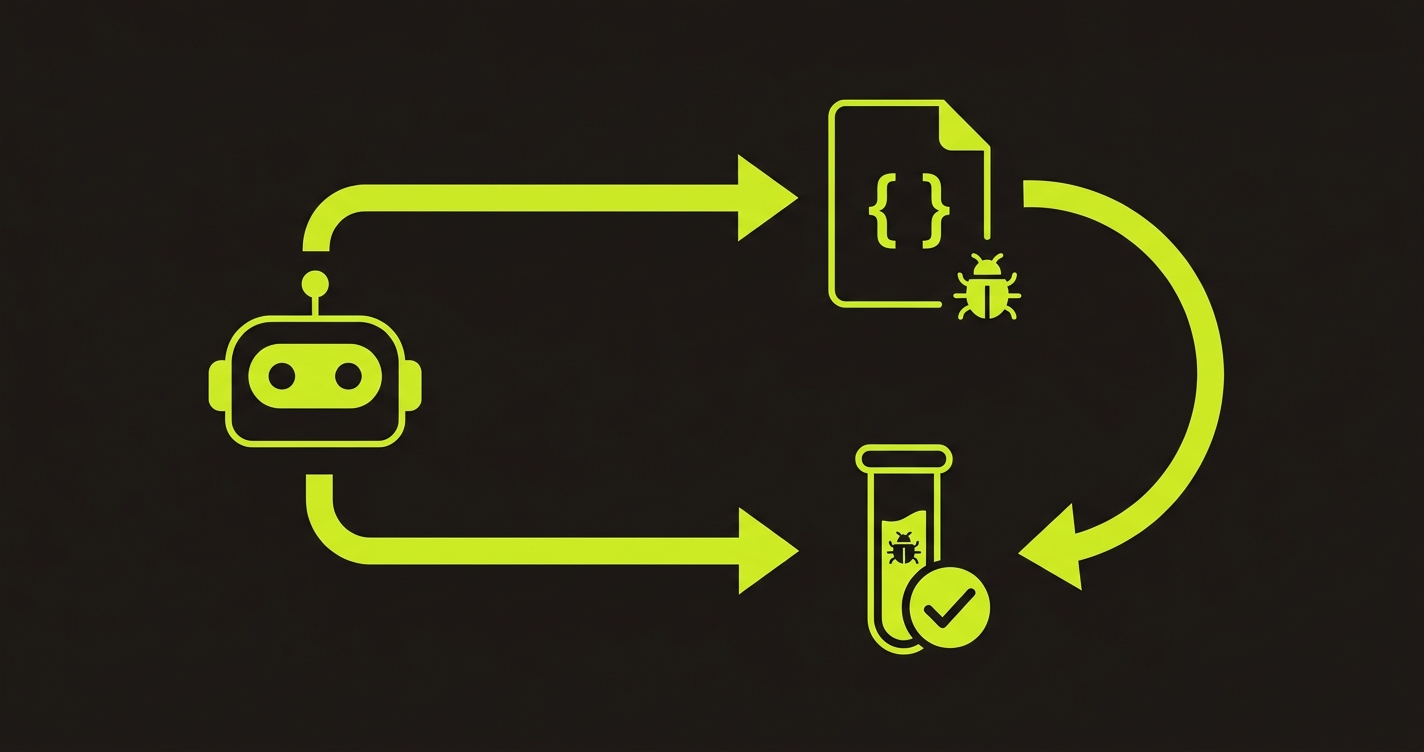

Testing AI-Generated Code

This is the sharpest limitation of the current AI coding landscape and the one that compounds everything else. Developers report spending up to 63% more time debugging AI-generated code than code they wrote themselves. AI IDEs generate code quickly. They do not reliably generate tests for that code, and when they do, those tests tend to test the implementation rather than the behavior. The implications for traditional test suites are severe enough that some teams are rethinking whether conventional test suites survive vibe coding at all.

An AI writes a user registration function. You ask it to write tests. It writes tests that call the function with valid inputs and assert that the function returns what it returns. If the function has a bug, the tests will faithfully replicate that bug in the assertion. The tests pass. The bug ships.

Why it exists: The model cannot step outside its own generation to evaluate correctness independently. It is pattern-completing the test file the same way it pattern-completed the implementation file. Both outputs are locally coherent within the model's understanding. Whether they are jointly correct relative to actual requirements is a different question.

The workaround that actually works: External verification. Tests generated by the same AI that wrote the code have a known bias toward the implementation. Tests generated by a separate agent that reads the codebase and derives expected behavior from routes, components, and user flows are structurally independent. We built Autonoma as an open-source agentic testing tool to solve exactly this: AI agents read your code, derive what the application should do, and generate tests that reflect intent rather than implementation. If the AI code is wrong, the externally derived tests catch it. This is also why vibe coding best practices emphasize external testing as non-negotiable, not a nice-to-have.
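The bias is easier to see side by side. In the sketch below, a hypothetical `Registry` class contains a deliberate bug, and two checks are run against it: one that mirrors what the code does, and one derived from an assumed requirement that email addresses are case-insensitive. The class, the requirement, and the function names are all illustrative assumptions.

```python
class Registry:
    def __init__(self):
        self._emails = set()

    def register(self, email: str) -> bool:
        """Return True if the account was created, False if the email is taken."""
        key = email.strip()  # Deliberate bug: the key should also be lowercased.
        if key in self._emails:
            return False
        self._emails.add(key)
        return True


def implementation_mirroring_test() -> bool:
    # Asserts whatever the code currently does, so the bug is baked into the test.
    r = Registry()
    return r.register("Ada@Example.com") is True and r.register("ada@example.com") is True


def behavior_test() -> bool:
    # Derived from the assumed requirement: the same email in a different
    # case must be rejected as already taken.
    r = Registry()
    r.register("Ada@Example.com")
    return r.register("ada@example.com") is False


mirroring_passes = implementation_mirroring_test()  # True: test passes, bug ships
behavior_passes = behavior_test()                    # False: test fails, bug caught
```

The second test could only be written by something that started from the requirement rather than from the implementation, which is the structural independence described above.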

Debugging Complex Failures

When AI-generated code fails in ways that are not obvious, asking the AI to debug it creates a specific kind of problem: the model confidently suggests fixes that address the symptom while missing the root cause. It generated the code with a certain mental model of how it works. It debugs with that same mental model. If the mental model is the source of the bug, debugging within it will not find the fix.

You show the AI a stack trace. It suggests adding a null check. The null check changes the error message. The underlying race condition that caused the null is still there.

Why it exists: Debugging requires reasoning about runtime behavior, state across time, and interactions between components. Language models reason about static code. They cannot simulate execution or track state across function calls the way a debugger with actual runtime information can.

The workaround that actually works: Start debugging with actual runtime tools: logs, breakpoints, profilers, and reproduction steps in an isolated environment. Use the AI for a second opinion once you have isolated the failure to a specific component, not as the primary diagnostic tool for a system-level failure. The triage playbook for vibe coding quality issues covers the structured approach for when bugs spike post-deployment.

Performance Optimization

AI IDEs optimize for code that works, not code that works efficiently. The generated implementation processes a list with a nested loop when a hash map would give O(n) performance. It queries the database N+1 times in a loop because each iteration looks standalone. It re-renders entire component trees because the dependency array in a useEffect is wrong.

These are not exotic bugs. They are standard performance failure modes that experienced engineers recognize immediately and avoid. The AI has seen them in training data too. It just has no strong signal to prefer the efficient version when it can satisfy the prompt with either.

Why it exists: The model's objective is to produce code that satisfies the prompt, not code that performs optimally. Performance constraints are usually implicit. If you do not specify them, the model does not optimize for them.

The workaround that actually works: Profile before you optimize, even on AI-generated code. Do not assume the obvious bottleneck. Once you find it, specify the constraint explicitly in the prompt: "refactor this to use a single database query with a JOIN instead of N+1 queries" gets you a much better result than "make this faster." For SQL specifically, paste the query along with its EXPLAIN output and ask for an analysis of the plan first.
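For reference, this is roughly what the N+1 pattern and its single-query replacement look like. The sketch uses the standard-library `sqlite3` module. The `authors` and `posts` tables and both function names are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
INSERT INTO authors (id, name) VALUES (1, 'Ada'), (2, 'Linus');
INSERT INTO posts (author_id, title) VALUES (1, 'A1'), (1, 'A2'), (2, 'L1');
""")


def titles_n_plus_one(conn):
    # One query for the authors, then one more query per author.
    result = {}
    authors = conn.execute("SELECT id, name FROM authors ORDER BY id").fetchall()
    for author_id, name in authors:
        rows = conn.execute(
            "SELECT title FROM posts WHERE author_id = ? ORDER BY id", (author_id,)
        ).fetchall()
        result[name] = [title for (title,) in rows]
    return result


def titles_single_join(conn):
    # One query total. Note: an inner JOIN drops authors with no posts;
    # a LEFT JOIN would be needed if those authors must appear.
    result = {}
    rows = conn.execute(
        "SELECT a.name, p.title FROM authors a "
        "JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    )
    for name, title in rows:
        result.setdefault(name, []).append(title)
    return result
```

On this tiny dataset both functions return the same mapping. The difference only shows up in the query count as the number of authors grows, which is why profiling has to come before the prompt.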

Maintenance Limitations

Refactoring AI-Generated Code

AI-generated code has a specific character that makes it harder to refactor than human-written code. Analysis across thousands of repositories shows that code churn has roughly doubled since widespread AI tool adoption, and much of that churn is rework on AI-generated code that was not structured for longevity. It tends toward verbosity (the model favors explicit over implicit), inconsistent abstractions (different prompts produce different patterns for similar problems), and shallow structure (it solves the immediate problem without building the abstraction that would make the third variation easy).

Six months after you vibed an application into existence, your codebase has three different patterns for handling API errors, four ways of structuring form components, and no clear line between business logic and presentation. Refactoring it requires understanding which of the inconsistent patterns was intentional and which was just what the model happened to produce on a given day.

Why it exists: Each AI generation is a stateless act. The model has no memory of how it solved similar problems earlier in the project. It does not enforce architectural consistency because it does not have an architectural model of your specific codebase. It has a general model of what codebases look like.

The workaround that actually works: Establish and enforce conventions in your prompts from day one. Use a system prompt or project rules file (Cursor's .cursorrules, Windsurf's .windsurfrules) that specifies your error handling pattern, your component structure, and your service layer conventions. The AI will follow these if they are present and specific. The teams that skip this step are the ones dealing with vibe coding technical debt six months later.

Scaling AI-Built Systems

A system built with an AI IDE that handles 100 users may not handle 10,000. Not because the AI made bad choices deliberately, but because it made choices that are invisible at small scale and critical at large scale. Synchronous processing where async is needed. In-memory state where a distributed cache belongs. Single-instance assumptions where horizontal scaling is required.

Why it exists: The AI has no model of your expected load. It produces code that handles the prompt's implied use case. "Build a job queue" produces something that works. Whether it works under your specific throughput requirements is a constraint you have to specify explicitly, and even then, the model's choices require scrutiny.

The workaround that actually works: Design your architecture before you prompt. Decide on your data layer, your async patterns, your caching strategy, and your service boundaries before you let the AI fill in the implementation. Give the AI a specific, constrained design space rather than the open canvas it defaults to. For a fuller view of which tools handle these vibe coding challenges better than others, the best vibe coding tools comparison evaluates how each IDE addresses the scaling gap.

The Bigger Picture

The limitation that ties everything else together is verification. Code generation, codebase understanding, tooling, and maintenance are all categories where AI IDEs are improving rapidly. Context windows are growing. Retrieval is getting better. Agentic workflows are catching more edge cases than single-shot generation. Google's DORA research found a 7.2% decrease in delivery stability correlated with a 25% increase in AI tool usage, suggesting that faster generation without stronger verification is a net negative.

Testing is different. The structural problem, that the same model verifying its own output will share its failure modes, does not go away with larger context windows. It requires external verification. It is no coincidence that only 28% of healthcare and 34% of financial services companies have adopted vibe coding. The industries with the highest cost of failure are the most cautious about these exact AI IDE limitations. The shift is already reshaping how QA teams operate across the industry.

The teams using AI IDEs most effectively are not the ones that have found a way to trust AI output. They are the ones that have built independent verification into their pipeline and use AI aggressively within that guardrail. Open-source agentic testing tools like Autonoma exist specifically because this verification gap is structural, not incidental. The boundaries documented here are not reasons to avoid vibe coding. They are the map you need to use it well.

The main code generation limitations are edge case coverage (AI models are trained on happy paths and miss boundary conditions), complex algorithmic problems that fall outside training distribution, and domain-specific logic where the rules exist in regulatory documents rather than public codebases. The practical fix for each is specificity in prompts: describing edge cases explicitly, providing pseudocode for complex algorithms, and pasting relevant domain rules directly into the prompt context.

AI IDEs are limited by context windows. Even with large context windows, a substantial production codebase exceeds what the model can reason about simultaneously. The model cannot see cross-file dependencies, shared middleware, or implicit contracts between modules outside its context. The workaround is to use IDEs with strong codebase indexing, explicitly reference relevant files when generating code that touches shared infrastructure, and run your full test suite after every AI-generated change.

AI IDEs cannot reliably test their own code. When the same AI model that generates code also generates the tests, both outputs share the same failure modes. A bug in the implementation will be reflected in the test's assertions, causing the tests to pass while the bug ships. The solution is external verification: tests generated by a separate agent that derives expected behavior from the codebase independently, rather than from the implementation it is testing. Autonoma is an open-source agentic testing tool built for this: independent AI agents read your codebase and generate behavioral tests that reflect what the application should do, not what the code says it does.

The biggest maintenance problems are architectural consistency and scalability assumptions. AI IDEs generate code that solves the immediate problem without enforcing patterns across generations or optimizing for load. Teams that skip establishing conventions in their prompt rules and architecture decisions end up with inconsistent patterns that are expensive to refactor. The fix is to decide on your architecture before prompting, not after, and to encode your conventions in project rules files from day one.

AI IDEs handle legacy code poorly compared to greenfield work. Legacy codebases contain institutional knowledge that is not encoded in the code: business rules from past decisions, constraints from dependencies that have changed, behavior that exists for reasons no longer documented. AI IDEs cannot recover this context. The workaround is to encode it before AI-assisted development: comments, tests covering edge cases, and documentation of non-obvious constraints.